Task:

URL: https://beijing.anjuke.com/sale/

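Using the BeautifulSoup library, scrape the listings on page 1: open each property's page, extract the community name, reference budget, posting date, and core selling points, and print them. (I've just started learning web scraping, so corrections are welcome.)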
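The task describes a two-step crawl: collect each listing's link from page 1, then fetch that listing's own page and read the four fields there. Below is a minimal sketch of the second step. It parses a detail page that has already been downloaded. Every CSS selector in `DETAIL_SELECTORS` is a hypothetical placeholder, not Anjuke's real markup, and would need replacing after inspecting an actual detail page in the browser's developer tools.

```python
from bs4 import BeautifulSoup

# Hypothetical selectors: placeholders only, NOT taken from Anjuke's real pages.
# Inspect an actual detail page and substitute the real ones.
DETAIL_SELECTORS = {
    "community": "div.community-name",
    "budget": "span.budget",
    "published": "span.publish-time",
    "selling_points": "div.selling-points",
}


def parse_detail(html):
    """Return the four task fields from one detail page's HTML.

    A field whose selector matches nothing comes back as an empty string
    instead of raising, so one unusual page does not stop the whole crawl.
    """
    soup = BeautifulSoup(html, "html.parser")
    result = {}
    for field, selector in DETAIL_SELECTORS.items():
        node = soup.select_one(selector)  # first match, or None
        result[field] = node.get_text(strip=True) if node else ""
    return result
```

Each detail-page URL would then be fetched with the same `requests.get(url, headers=headers)` call used for the listing page, and its `.text` passed to `parse_detail` before printing. A short `time.sleep()` between requests keeps the crawl polite.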

Code:

```python
import requests
from bs4 import BeautifulSoup

# Browser-like User-Agent so the site returns the normal listing page
headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/94.0.4606.81 Safari/537.36 Edg/94.0.992.50'
}

info_lists = []

# Download page 1 of the listings and stop early on an HTTP error
house = requests.get("https://beijing.anjuke.com/sale/", headers=headers)
house.raise_for_status()
soup = BeautifulSoup(house.text, "lxml")

# One list per field; all fields are read from the listing page itself
names = soup.select("h3")
positions = soup.select("p.property-content-info-comm-name")
moneys = soup.select("div.property-price > p.property-price-total > span.property-price-total-num")
years = soup.select("div.property-content > div.property-content-detail > section > div:nth-of-type(1) > p:nth-of-type(5)")
points = soup.select("div.property-content > div.property-content-detail > section > div:nth-of-type(3)")

# zip() pairs the lists by position and stops at the shortest one
for name, position, money, year, point in zip(names, positions, moneys, years, points):
    info = {
        'name': name.get_text().strip(),
        'position': position.get_text().strip(),
        'money': money.get_text().strip(),
        'year': year.get_text().strip(),
        'point': point.get_text().strip(),
    }
    info_lists.append(info)

# Open the file once and write UTF-8: the default encoding on Chinese Windows (GBK)
# cannot encode some characters, which raised UnicodeEncodeError and silently
# skipped records (and left the file unclosed) in the original version
with open(r'C:\Users\23993\Desktop\house_info.txt', 'a', encoding='utf-8') as f:
    for info in info_lists:
        line = ' '.join([info['name'], info['position'], info['money'] + '万', info['year'], info['point']])
        print(line)  # the task asks for the results to be printed
        f.write(line + '\n')
```
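Because `zip()` pairs the five lists purely by position and stops at the shortest one, a single listing that lacks, say, a price shifts every later field onto the wrong house. A more robust pattern is to select each listing card first and then look up its fields inside that card. In the sketch below, the card selector `div.property` and the assumption that a card's first link points to its detail page are hypothetical, not confirmed against Anjuke's markup; the field selectors are the ones from the code above.

```python
from bs4 import BeautifulSoup

# Hypothetical: assumes each listing is wrapped in <div class="property">.
CARD_SELECTOR = "div.property"


def text_or_empty(card, selector):
    """Text of the first match inside this card, or '' if the card lacks it."""
    node = card.select_one(selector)
    return node.get_text(strip=True) if node else ""


def parse_listing_page(html):
    """Return one dict per listing card, with every field scoped to that card."""
    soup = BeautifulSoup(html, "html.parser")
    houses = []
    for card in soup.select(CARD_SELECTOR):
        # Hypothetical: the card's first <a href> is taken as the detail-page link
        link = card.select_one("a[href]")
        houses.append({
            "name": text_or_empty(card, "h3"),
            "position": text_or_empty(card, "p.property-content-info-comm-name"),
            "money": text_or_empty(card, "span.property-price-total-num"),
            "url": link["href"] if link else "",
        })
    return houses
```

With this structure a missing price shows up as an empty string on the right house instead of misaligning the rest of the page, and the `url` field provides the link needed to visit each listing's own page, as the task requires.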

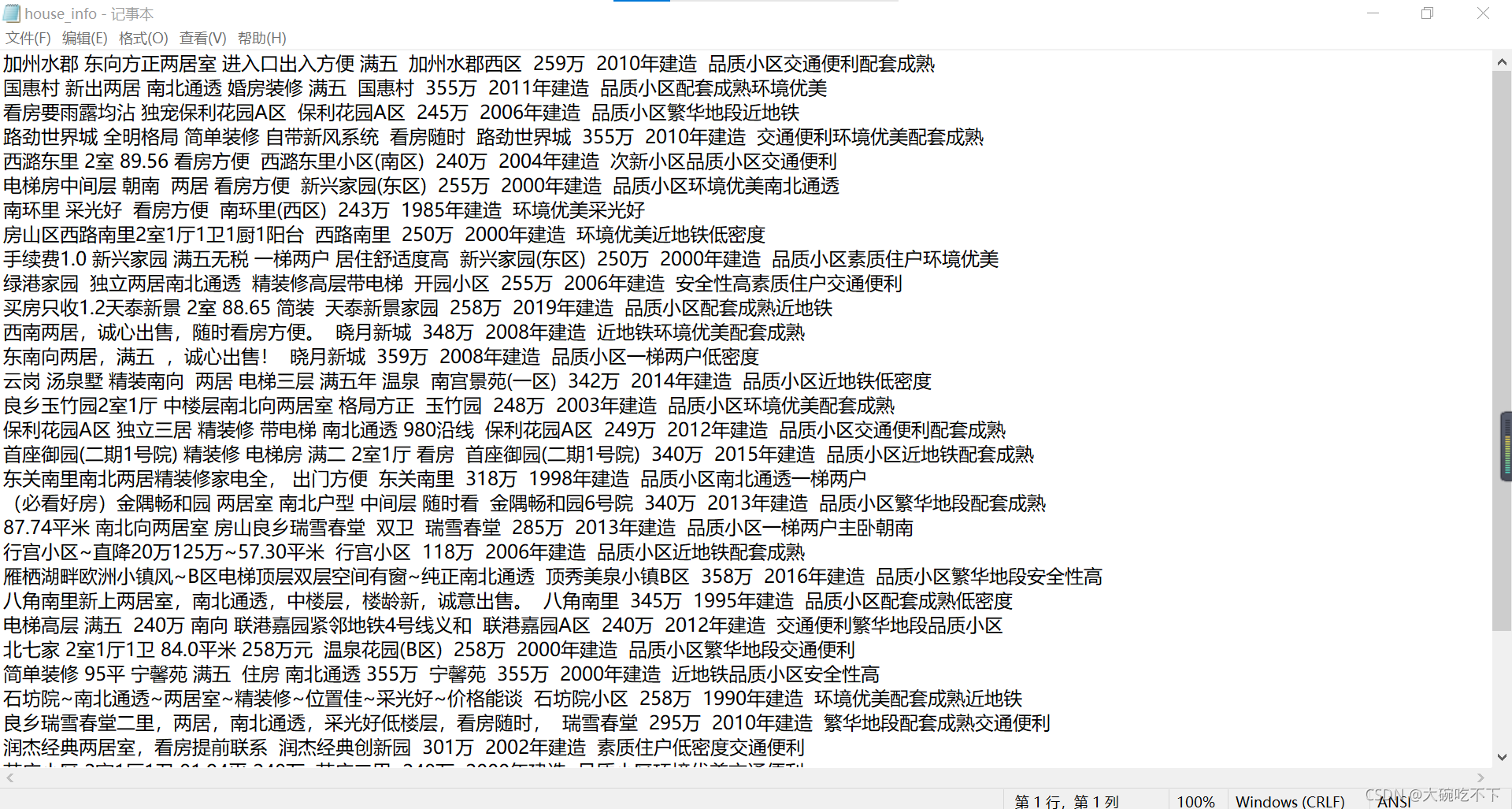

Screenshot of partial results: