数据集链接:https://pan.baidu.com/s/1bIKasCIDAaT-_EwB6hcAMQ

提取码:4fij

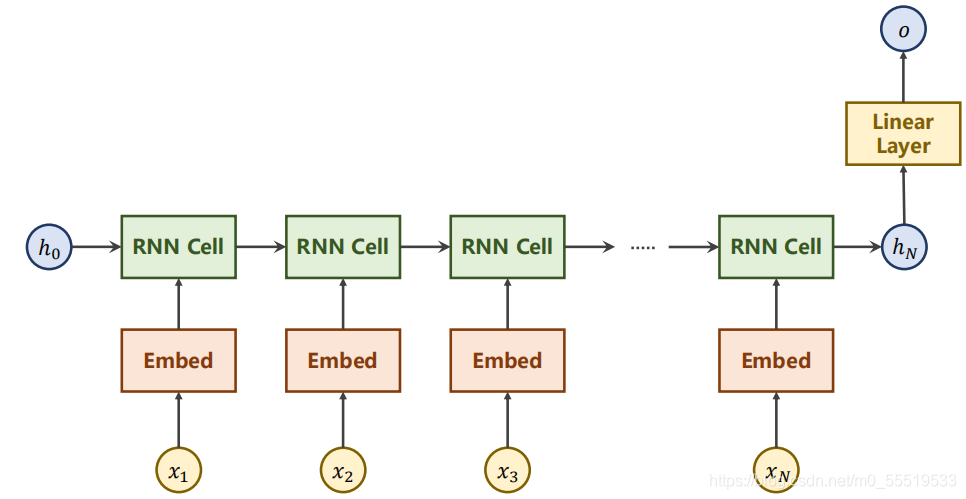

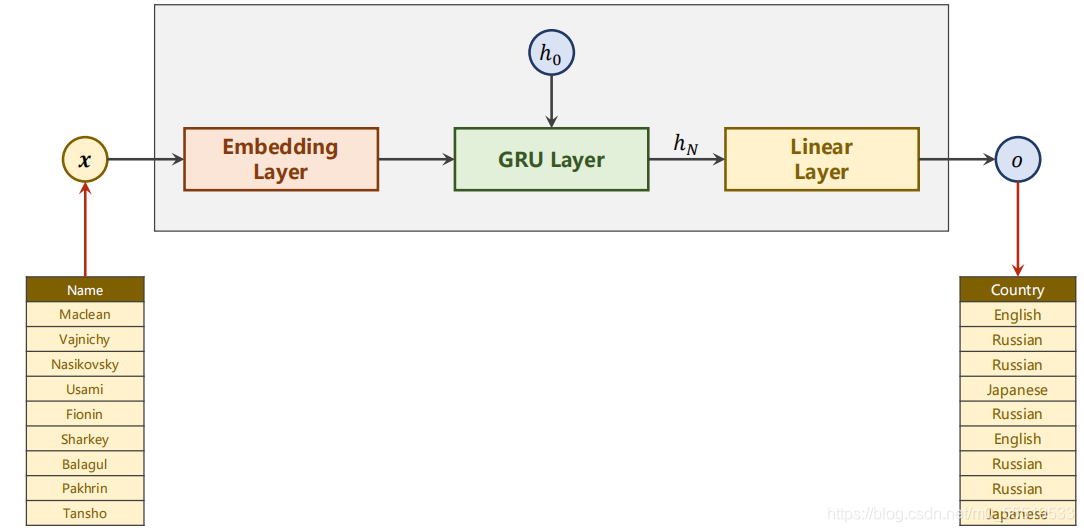

任务:使用RNN通过训练name数据集来预测name属于哪个country.

RNN,LSTM,GRU都是循环神经网络。

网络模型:

?

?最后只需要一个Linear Layer来得出整个name序列的预测结果。

?数据准备

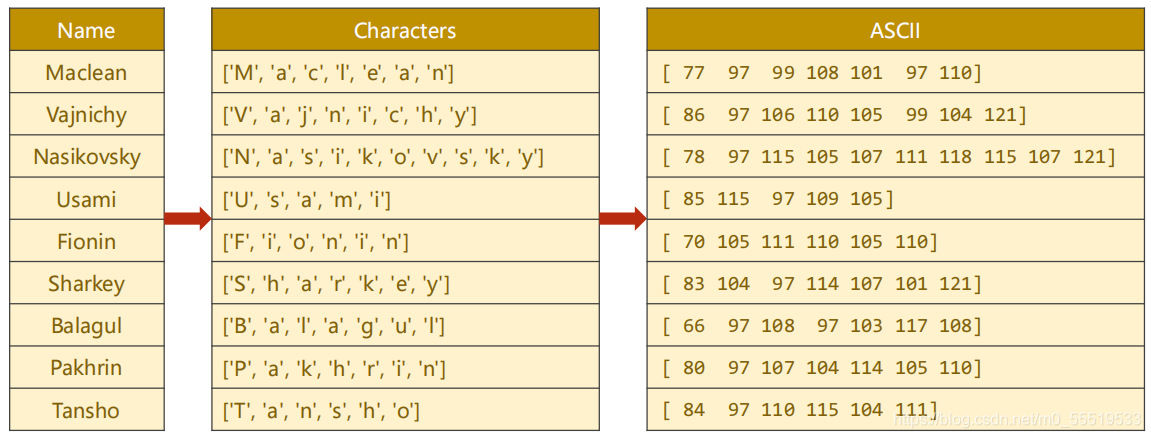

Name序列处理步骤:

1.Name转成序列List,即Maclean→['M', 'a', 'c', 'l', 'e', 'a', 'n']

2.用ASCⅡ码表示List中的元素,即[77 97 99 108 101 97 110],里面不是代表数值,而是one-hot向量,比如77是指共128维的向量,只有Idex77是1,其他都是0

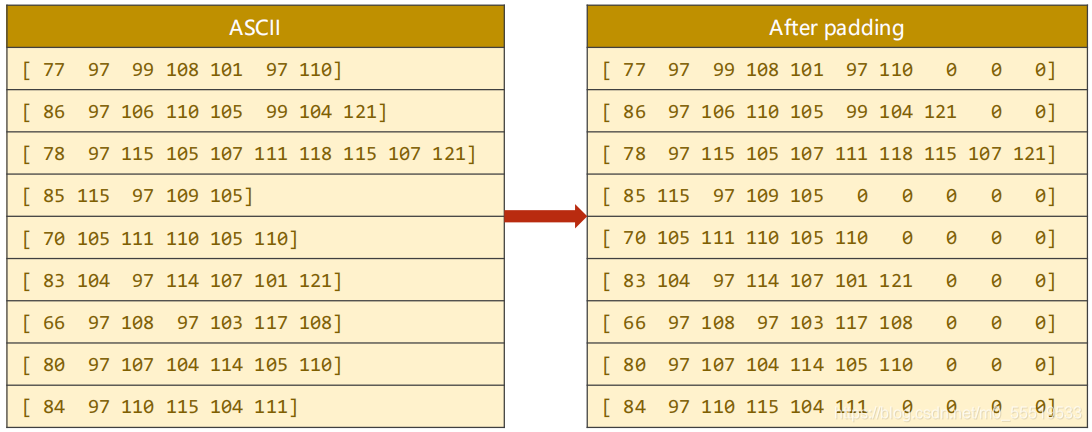

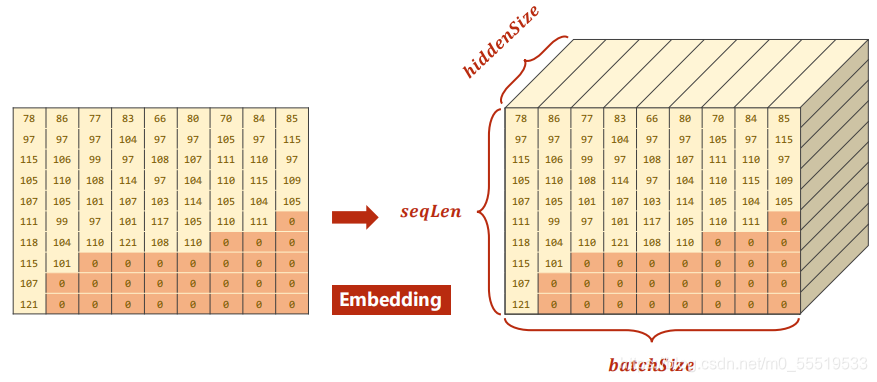

3.Padding将一个batch_size的序列填充成长度相同,即[77 97 99 108 101 97 110 0 0 0]

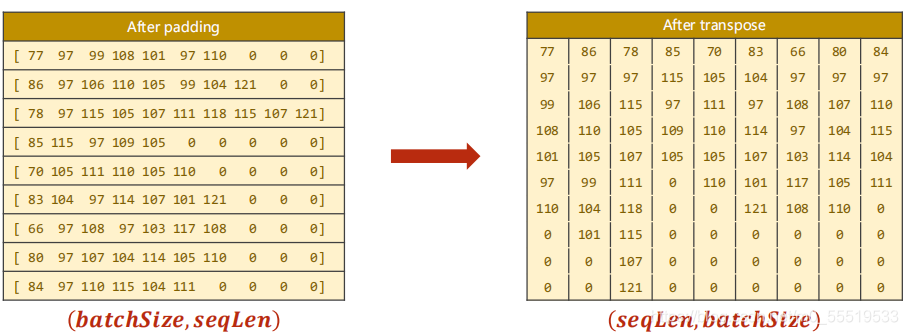

4.将Padding后的矩阵转置

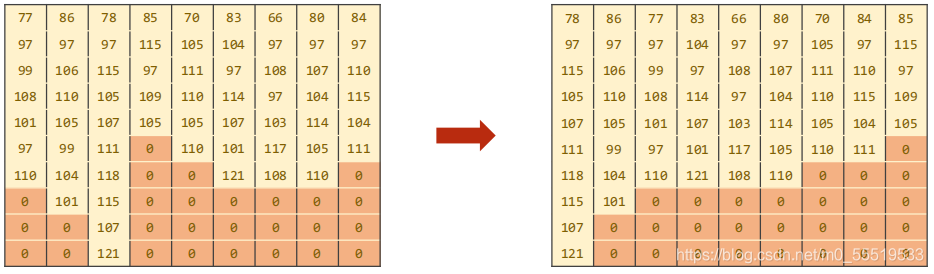

?5.按照序列长度降序排列

?同时要获得对应顺序的序列长度列表[10, 8, 7, 7, 7, 7,6, 6, 5]

?Country的处理步骤:

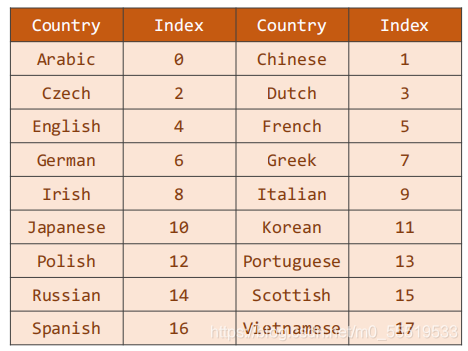

?给每个Country一个索引,构成字典{‘country’: idx},在name排序之后,也要按照相同的排序将name对应的Country标签排序。

就是说,在拿到一个name后,就能根据name在batch_size中的位置找到country,再进一步找到分类标签idx。

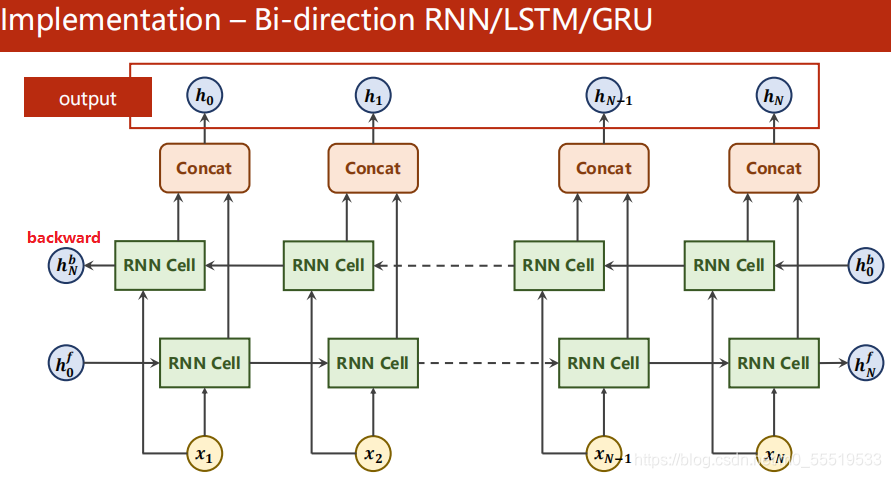

双向RNN

?从

算一遍

,再从

算一遍

,将相应的

和

拼接起来,最终得到的hidden=[

,

]。

是否双向在代码中通过bidirectional = 1或2 体现。

?torch.nn.GRU()

1.维度

input:(seqlen, batch_size, hidden_size)

output:(seqlen, batch_size, hidden_size * nDirections)

hidden:(nLayers * nDirections, batch_size, hidden_size)

2.参数设置

?torch.nn.GRU(hidden_size, hidden_size, num_layers, bidirectional=_)

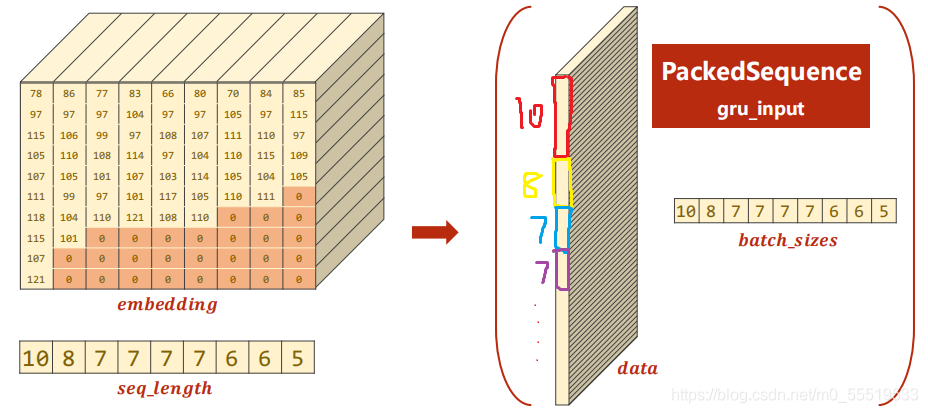

?torch.nn.utils.rnn.pack_padded_sequence()

1.作用:提升GRU的运行效率

2.用法:经过embadding之后的维度(seqlen, baych_size, hidden_size)

?随后通过rnn.pack_padded_sequence(embadding, seq_lengths)对非零的值进行打包

示例代码:

import torch

import gzip

import csv

from torch.utils.data import DataLoader

import numpy as np

from torch.nn.utils import rnn

from torch.utils.data import Dataset

import time

import matplotlib.pyplot as plt

import math

#参数初始化

Hidden_size = 100

Batch_size = 256

Num_layers = 2

Num_epoch = 10

Num_chars = 128

use_gpu = False

class NameDataset(Dataset):

'构造数据集'

def __init__(self, is_train_set=True):

super(NameDataset, self).__init__()

filename = 'E:/image-dataset/names_sets/names_train.csv.gz' if is_train_set else 'E:/image-dataset/names_sets/names_test.csv.gz'

with gzip.open(filename, 'rt') as f:

reader = csv.reader(f)

rows = list(reader) #rows=[(name,country),(name,country),...]

self.names = [row[0] for row in rows]

self.len = len(self.names)

self.countries = [row[1] for row in rows]

self.country_list = list(sorted(set(self.countries))) #经过去重、排序后得到country列表

self.country_dict = self.getCountryDict() #用此函数将list转成对应的dict,['country1':idx分类号,'country2':idx分类号,...]

self.country_num = len(self.country_list)

def __getitem__(self, index):

'为了数据集能提供索引访问,返回:索引对应的name字符串,索引对应的country的分类号'

return self.names[index], self.country_dict[self.countries[index]]

def __len__(self):

return self.len

def getCountryDict(self):

country_dict = dict()

for idx, country_name in enumerate(self.country_list, 0):

country_dict[country_name] = idx

return country_dict

def idx2country(self,index):

return self.country_list[index]

def getCountryNum(self):

return self.country_num

#数据准备

train_sets = NameDataset(is_train_set=True)

train_loader = DataLoader(train_sets, batch_size = Batch_size, shuffle=True)

test_sets = NameDataset(is_train_set=False)

test_loader = DataLoader(test_sets, batch_size=Batch_size, shuffle=False)

Num_country = train_sets.getCountryNum() #模型最终的分类类别数

def create_tensor(tensor):

if use_gpu:

device = torch.device("cuda:0")

tensor = tensor.to(device)

return tensor

def name2list(name):

"读取名字的每个字符 对应的 的ASC码值,将名字list变成由ASC表示的列表.输出元组:名字的ASC表示 和 名字长度"

arr = [ord(c) for c in name]

return arr, len(arr)

def make_tensors(names, countries):

sequences_and_lengths = [name2list(name) for name in names]

name_sequences = [sl[0] for sl in sequences_and_lengths]

seq_lengths = torch.LongTensor([sl[1] for sl in sequences_and_lengths])

countries = countries.long()

# make tensor of name, Batchsize*seqlen

seq_tensor = torch.zeros(len(name_sequences), seq_lengths.max()).long()

for idx, (seq, seq_len) in enumerate(zip(name_sequences, seq_lengths), 0):

seq_tensor[idx, :seq_len] = torch.LongTensor(seq) #idx对应的位置中,从0到name长度将name填进去

#按照name长度进行排序

seq_lengths, perm_idx = seq_lengths.sort(dim=0, descending=True)

seq_tensor = seq_tensor[perm_idx] #将这两个也按照相同的idx排序

countries = countries[perm_idx]

return create_tensor(seq_tensor),\

create_tensor(seq_lengths),\

create_tensor(countries)

class RNNClassifier(torch.nn.Module):

'构造RNN分类器模型'

def __init__(self, input_size, hidden_size, output_size, n_layers=1,bidirectional=True):

super(RNNClassifier, self).__init__()

self.hidden_size = hidden_size

self.n_layers = n_layers

self.directions = 2 if bidirectional else 1

self.embadding = torch.nn.Embedding(input_size, hidden_size)

self.gru = torch.nn.GRU(hidden_size, hidden_size, n_layers, bidirectional=bidirectional)

self.fc = torch.nn.Linear(hidden_size * self.directions, output_size)

def _init_hidden(self,batch_size):

hidden = torch.zeros(self.n_layers * self.directions, batch_size, self.hidden_size)

return create_tensor(hidden)

def forward(self,input, seq_lengths):

'input:所有序列,seq_lengths:每个序列的长度'

input = input.t() #将input做转置,由batch×seq_len到seq_len×batch

batch_size = input.size(1) #用它来构造最初的隐层H0

hidden = self._init_hidden(batch_size)

embadding = self.embadding(input) #shape:(seq_len,batchsize,hiddensize)

# 将序列按照长度降序打包

gru_input = rnn.pack_padded_sequence(embadding, seq_lengths)

output, hidden = self.gru(gru_input, hidden)

if self.directions==2:

hidden_cat = torch.cat([hidden[-1], hidden[-2]], dim=1)

else:

hidden_cat = hidden[-1]

fc_output = self.fc(hidden_cat)

return fc_output

def time_since(since):

s = time.time()-since

m = math.floor(s/60)

s -= m * 60

return '%dm %ds' % (m, s)

def trainModel():

total_loss = 0

for i, (names,countries) in enumerate(train_loader,1):

inputs, seq_lengths, targets = make_tensors(names,countries)

output = classifier(inputs, seq_lengths)

loss = criterion(output, targets)

optimizer.zero_grad()

loss.backward()

optimizer.step()

total_loss += loss.item()

if i % 10 == 0:

print(f'[{time_since(start)}] Epoch {epoch}', end='')

print(f'[{i * len(inputs)}/{len(train_sets)}]',end='')

print(f'loss={total_loss / (i * len(inputs))}')

return total_loss

def testModel():

correct = 0

total = len(test_sets)

print('evaluating trained model...')

with torch.no_grad():

for i, (names, countries) in enumerate(test_loader, 1):

inputs, seq_lengths, targets = make_tensors(names, countries)

output = classifier(inputs, seq_lengths)

pred = output.max(dim=1, keepdim=True)[1]

correct += pred.eq(targets.view_as(pred)).sum().item()

percent = '%.2f' % (100 * correct / total)

print(f'Test set:Accuracy {correct} / {total} {percent}%')

return correct / total

#参数初始化

if __name__=="__main__":

classifier = RNNClassifier(Num_chars, Hidden_size, Num_country, Num_layers)

if use_gpu:

device = torch.device('cuda:0')

classifier.to(device)

criterion = torch.nn.CrossEntropyLoss()

optimizer = torch.optim.Adam(classifier.parameters(), lr=0.001)

start = time.time()

print('Training for %d epochs...' % Num_epoch)

acc_list = []

for epoch in range(1,Num_epoch+1):

trainModel()

acc = testModel()

acc_list.append(acc)

epoch = np.arange(1, len(acc_list) + 1, 1)

acc_list = np.array(acc_list)

plt.plot(epoch, acc_list)

plt.xlabel('Epoch')

plt.ylabel('Accuracy')

plt.grid()

plt.show()说明:m.sort(dim = _, descending = _),dim=0或1,0是按列排序,1是按行排序;descending=True是由大到小,false是由小到大。最后返回:1.排序后的序列;2.排序后对应的原来的idx。