论文链接:https://arxiv.org/abs/1706.03762

文章目录

Transformer为许多 NLP 任务提供了一种新的架构,其完全基于注意机制,完全舍弃循环卷积结构,使得其并行计算能力十分强大,而且刷新了许多NLP任务的SOTA,不得不说是一个非常先进的模型,因此在此学习记录下心得,主要参考的是哈佛的NLP团队实现的一个基于PyTorch的版本:http://nlp.seas.harvard.edu/2018/04/03/attention.html

原理讲解有一篇也很棒:《细讲|Attention Is All You Need》.

一、 背景

在这之前,RNN,LSTM等模型被公认是sequence modeling和transduction problems的最先进方法,其通常沿着输入和输出序列的符号位置计算,并将位置与时间步骤对齐,以此生成一系列隐藏状态,这种计算方式使模型没有办法并行运行,效率低,且面临对齐问题。Attention允许对序列符号依赖关系进行建模,而不用考虑它们在输入或输出序列中的距离,然而Attention却只结合上述的Recurrent network来使用,无法解决RN的天生问题。因此提出Transformer,完全抛弃了传统的encoder-decoder模型必须结合CNN或者RNN的固有模式,只用Attention。主要目的是减少计算量和提高并行效率,同时不损害精度。

二、模型架构

我用的:pytorch==1.9.0

import numpy as np

import torch

import torch.nn as nn

import torch.nn.functional as F

import math, copy, time

from torch.autograd import Variable

import matplotlib.pyplot as plt

import seaborn

seaborn.set_context(context="talk")

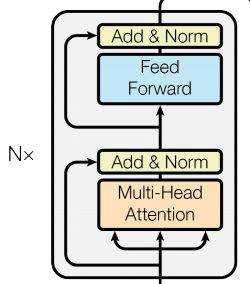

1.整体框架

大多数神经序列转换模型都有encoder-decoder结构。encoder用于将符号表示的输入序列 (x1, …, xn)编码映射到一个连续表示的序列z = (z1, …, zn)。给定z,decoder一次生成一个元素符号的输出序列(y1,…,ym)。Transformer也遵循这种架构,由encoder-decoder结构构成,其结构如下图所示:

class EncoderDecoder(nn.Module):

"""

标准的encoder-decoder架构

@输入参数:

encoder:编码器

decoder:解码器

src_embed:输入词向量

tgt_embed:目标词向量

generator:生成器,对应上图的linear + softmax

"""

def __init__(self, encoder, decoder, src_embed, tgt_embed, generator):

super(EncoderDecoder, self).__init__()

self.encoder = encoder

self.decoder = decoder

self.src_embed = src_embed

self.tgt_embed = tgt_embed

self.generator = generator

def forward(self, src, tgt, src_mask, tgt_mask):

"喂入和处理masked src与目标序列."

return self.decode(self.encode(src, src_mask), src_mask, tgt, tgt_mask)

def encode(self, src, src_mask):

return self.encoder(self.src_embed(src), src_mask)

def decode(self, memory, src_mask, tgt, tgt_mask):

return self.decoder(self.tgt_embed(tgt), memory, src_mask, tgt_mask)

class Generator(nn.Module):

" 定义标准的linear + softmax生成器."

def __init__(self, d_model, vocab):

super(Generator, self).__init__()

self.proj = nn.Linear(d_model, vocab)

def forward(self, x):

return F.log_softmax(self.proj(x), dim=-1)

2.编码器

# 编码器由一堆相同的EncoderLayer堆砌而成,N=6

def clones(module, N):

"生成N个相同的层."

return nn.ModuleList([copy.deepcopy(module) for _ in range(N)])

# 总的编码器

class Encoder(nn.Module):

"由N个相同的层(如上图)组成"

def __init__(self, layer, N):

super(Encoder, self).__init__()

self.layers = clones(layer, N)

self.norm = LayerNorm(layer.size)

def forward(self, x, mask):

"轮流给每层喂入."

for layer in self.layers:

x = layer(x, mask)

return self.norm(x)

# 归一化,即每个子层的输出为LayerNorm(x+Sublayer(x)),(x+Sublayer(x)是子层自己实现的功能。

# 将 dropout 应用于每个子层的输出,然后再将其添加到子层输入中并进行归一化。

# 为了促进这些残差连接,模型中的所有子层以及嵌入层产生维度输出为512

class LayerNorm(nn.Module):

"层归一化"

def __init__(self, features, eps=1e-6):

super(LayerNorm, self).__init__()

self.a_2 = nn.Parameter(torch.ones(features))

self.b_2 = nn.Parameter(torch.zeros(features))

self.eps = eps

def forward(self, x):

mean = x.mean(-1, keepdim=True)

std = x.std(-1, keepdim=True)

return self.a_2 * (x - mean) / (std + self.eps) + self.b_2

# Add&Norm

class SublayerConnection(nn.Module):

"""

残差连接,连的是归一化的层.

"""

def __init__(self, size, dropout):

super(SublayerConnection, self).__init__()

self.norm = LayerNorm(size)

self.dropout = nn.Dropout(dropout)

def forward(self, x, sublayer):

return x + self.dropout(sublayer(self.norm(x)))

# 定义编码层,每层有两个子层。第一个是多头自注意力机制,第二个是简单的、位置明确的全连接前馈网络。

class EncoderLayer(nn.Module):

" EncoderLayer由self_attn和feed_forward组成(后面再定义)"

def __init__(self, size, self_attn, feed_forward, dropout):

super(EncoderLayer, self).__init__()

self.self_attn = self_attn

self.feed_forward = feed_forward

self.sublayer = clones(SublayerConnection(size, dropout), 2)

self.size = size

def forward(self, x, mask):

x = self.sublayer[0](x, lambda x: self.self_attn(x, x, x, mask))

return self.sublayer[1](x, self.feed_forward)

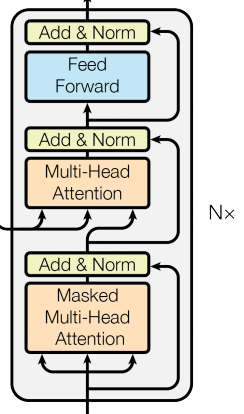

3.解码器

除了每个编码器层中的两个子层之外,解码器层还插入了第三个子层,该层对编码器层的输出执行多头注意。

# 解码器和编码器一样,由N个相同decoderLayer堆砌而成

class Decoder(nn.Module):

def __init__(self, layer, N):

super(Decoder, self).__init__()

self.layers = clones(layer, N)

self.norm = LayerNorm(layer.size)

def forward(self, x, memory, src_mask, tgt_mask):

for layer in self.layers:

x = layer(x, memory, src_mask, tgt_mask)

return self.norm(x)

# 除了每个编码器层中的两个子层之外,解码器还插入了第三个子层,该层对编码器的输出执行多头注意。

# 与编码器类似,在每个子层周围使用残差连接,然后进行层归一化。

class DecoderLayer(nn.Module):

"DecoderLayer由self-attn, src-attn和 feed forward 组成(后面定义)"

def __init__(self, size, self_attn, src_attn, feed_forward, dropout):

super(DecoderLayer, self).__init__()

self.size = size

self.self_attn = self_attn

self.src_attn = src_attn

self.feed_forward = feed_forward

self.sublayer = clones(SublayerConnection(size, dropout), 3)

def forward(self, x, memory, src_mask, tgt_mask):

m = memory

# masked Multi-Head Attention

x = self.sublayer[0](x, lambda x: self.self_attn(x, x, x, tgt_mask))

# Multi-Head Attention

x = self.sublayer[1](x, lambda x: self.src_attn(x, m, m, src_mask))

# feed forward

return self.sublayer[2](x, self.feed_forward)

# 修改了解码器堆栈中的自注意力子层,以防止位置关注后续位置。

# 这种掩蔽与输出嵌入偏移一个位置的事实相结合,确保了位置i的预测只能依赖小于位置i的已知输出

def subsequent_mask(size):

attn_shape = (1, size, size)

subsequent_mask = np.triu(np.ones(attn_shape), k=1).astype('uint8')

return torch.from_numpy(subsequent_mask) == 0

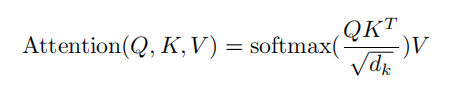

4.注意力层

注意力函数可以描述为将一个query和一组keys对映射到一个输出,其中query、keys、values和输出都是向量。输出计算为values的加权总和,其中分配给每个值的权重由query与相应key的兼容性函数计算。

Scaled Dot-Product Attention

首先给一个输入X, 先通过3个线性转换把X转换为Q(query),K(key),V(value)。Scaled Dot-Product Attention的输入就由维度为dk的Q,K以及维度为dv的V组??成,使用所有key计算query的点积,将每个键除以√dk,并应用 softmax 函数来获得值的权重。在实践中,同时计算一组query的注意力函数,打包成一个矩阵Q。如下图:

计算公式如下:

两个最常用的注意力函数是加法注意力和点积(乘法)注意力,两者复杂度相似,但点积注意力的运算速度更快,因此论文用的是点积注意力,相对于常规的点积注意力,论文多加了个缩放因子1/√dk,之所以加个缩放因子,是为了防止点积后的结果过大,导致softmax函数落在一个梯度很小的地方。

def attention(query, key, value, mask=None, dropout=None):

"计算'Scaled Dot Product Attention'"

d_k = query.size(-1)

# attention得分计算,key要转置一下

scores = torch.matmul(query, key.transpose(-2, -1)) / math.sqrt(d_k)

if mask is not None:

scores = scores.masked_fill(mask == 0, -1e9)

p_attn = F.softmax(scores, dim=-1)

if dropout is not None:

p_attn = dropout(p_attn)

return torch.matmul(p_attn, value), p_attn

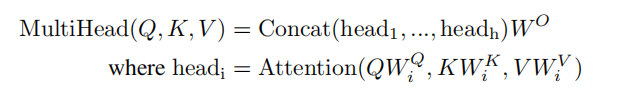

Multi-Head Attention

Multi-Head Attention就是把Scaled Dot-Product Attention的过程做h次,然后把输出Z合起来。怎么组合呢?论文公式如下:

就是先拼接,然后乘以一个矩阵W0,使得输出与输入结构对称。

注意encoder里面是叫self-attention,decoder里面是叫masked self-attention。

这里的masked就是要在做language modelling(或翻译)的时候,不给模型看到未来的信息。

# 多头注意力允许模型共同关注来自不同位置的不同表示子空间的信息。

class MultiHeadedAttention(nn.Module):

def __init__(self, h, d_model, dropout=0.1):

super(MultiHeadedAttention, self).__init__()

assert d_model % h == 0

# 假设 d_v 总是等于 d_k

self.d_k = d_model // h

self.h = h

self.linears = clones(nn.Linear(d_model, d_model), 4)

self.attn = None

self.dropout = nn.Dropout(p=dropout)

def forward(self, query, key, value, mask=None):

"复现上图"

if mask is not None:

# 给所有h个heads应用相同的mask.

mask = mask.unsqueeze(1)

nbatches = query.size(0)

# 1) 对每个batch进行线性投影得到相应向量

query, key, value = \

[l(x).view(nbatches, -1, self.h, self.d_k).transpose(1, 2)

for l, x in zip(self.linears, (query, key, value))]

# 2) 每个batch使用注意力

x, self.attn = attention(query, key, value, mask=mask, dropout=self.dropout)

# 3) 拼接所有head然后线性变换

x = x.transpose(1, 2).contiguous().view(nbatches, -1, self.h * self.d_k)

return self.linears[-1](x)

Applications of Attention in our Model

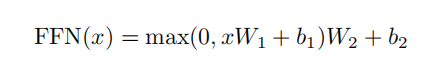

5.位置前馈网络

除了注意力子层之外,encoder和decoder中的每一层都包含一个全连接前馈网络,该网络分别应用于每个位置。这由两个线性变换组成,中间有一个 ReLU 激活。

lass PositionwiseFeedForward(nn.Module):

"实现FFN"

def __init__(self, d_model, d_ff, dropout=0.1):

super(PositionwiseFeedForward, self).__init__()

self.w_1 = nn.Linear(d_model, d_ff)

self.w_2 = nn.Linear(d_ff, d_model)

self.dropout = nn.Dropout(dropout)

def forward(self, x):

return self.w_2(self.dropout(F.relu(self.w_1(x))))

6.Embeddings 和 Softmax

与其他序列转换模型类似,利用训练好的embeddings将输入token和输出token转换为维度向量。另外还使用线性变换和 softmax 函数将decoder输出转换为预测的下一个token概率。在transformer中,在两个embedding层之间共享相同权重矩阵和pre-softmax线性变换。在嵌入层中,将这些权重乘以一个系数sqrt(模型的维度)。

class Embeddings(nn.Module):

def __init__(self, d_model, vocab):

super(Embeddings, self).__init__()

self.lut = nn.Embedding(vocab, d_model)

self.d_model = d_model

def forward(self, x):

return self.lut(x) * math.sqrt(self.d_model)

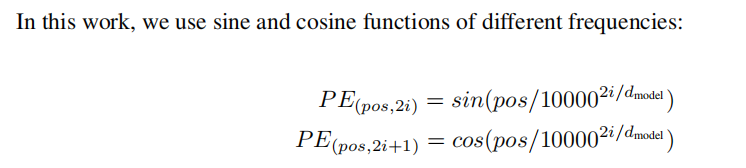

7.位置编码

由于Transformer不包含递归和卷积,为了让模型利用序列的顺序,必须注入一些关于token在序列中的相对或绝对位置的信息。为此,在encoder和decoder底部的输入嵌入中添加了“位置编码”,与输入embeddings相加作为最后encoder和decoder的输入。位置编码有许多不同的选择,论文使用sine和cosine函数实现:

之所以选择上诉函数是因为三角函数的周期性,允许模型很容易地学习相对位置信息,即位置p+k的向量可以表示成位置p的向量的线性变换。

class PositionalEncoding(nn.Module):

"Implement the PE function."

def __init__(self, d_model, dropout, max_len=5000):

super(PositionalEncoding, self).__init__()

self.dropout = nn.Dropout(p=dropout)

# Compute the positional encodings once in log space.

pe = torch.zeros(max_len, d_model)

position = torch.arange(0, max_len).unsqueeze(1)

div_term = torch.exp(torch.arange(0, d_model, 2) *

-(math.log(10000.0) / d_model))

pe[:, 0::2] = torch.sin(position * div_term)

pe[:, 1::2] = torch.cos(position * div_term)

pe = pe.unsqueeze(0)

self.register_buffer('pe', pe)

def forward(self, x):

x = x + Variable(self.pe[:, :x.size(1)],

requires_grad=False)

return self.dropout(x)

8.整体模型

def make_model(src_vocab, tgt_vocab, N=6, d_model=512, d_ff=2048, h=8, dropout=0.1):

c = copy.deepcopy

attn = MultiHeadedAttention(h, d_model)

ff = PositionwiseFeedForward(d_model, d_ff, dropout)

position = PositionalEncoding(d_model, dropout)

model = EncoderDecoder(

Encoder(EncoderLayer(d_model, c(attn), c(ff), dropout), N),

Decoder(DecoderLayer(d_model, c(attn), c(attn),c(ff), dropout), N),

nn.Sequential(Embeddings(d_model, src_vocab), c(position)),

nn.Sequential(Embeddings(d_model, tgt_vocab), c(position)),

Generator(d_model, tgt_vocab))

# This was important from their code.

# Initialize parameters with Glorot / fan_avg.

for p in model.parameters():

if p.dim() > 1:

nn.init.xavier_uniform(p)

return model

三、模型训练

- 定义一个批处理对象,其中包含用于训练的源句子和目标句子,以及构建掩码。

class Batch:

"用于在训练使用mask保存一批数据."

def __init__(self, src, trg=None, pad=0):

self.src = src

self.src_mask = (src != pad).unsqueeze(-2)

if trg is not None:

self.trg = trg[:, :-1]

self.trg_y = trg[:, 1:]

self.trg_mask = self.make_std_mask(self.trg, pad)

self.ntokens = (self.trg_y != pad).data.sum()

@staticmethod

def make_std_mask(tgt, pad):

"生成一个mask隐藏填充和将来的单词."

tgt_mask = (tgt != pad).unsqueeze(-2)

tgt_mask = tgt_mask & Variable(

subsequent_mask(tgt.size(-1)).type_as(tgt_mask.data))

return tgt_mask

- 创建一个通用的训练和评分函数。

def run_epoch(data_iter, model, loss_compute):

start = time.time()

total_tokens = 0

total_loss = 0

tokens = 0

for i, batch in enumerate(data_iter):

out = model.forward(batch.src, batch.trg,

batch.src_mask, batch.trg_mask)

loss = loss_compute(out, batch.trg_y, batch.ntokens)

total_loss += loss

total_tokens += batch.ntokens

tokens += batch.ntokens

if i % 50 == 1:

elapsed = time.time() - start

print("Epoch Step: %d Loss: %f Tokens per Sec: %f" %

(i, loss / batch.ntokens, tokens / elapsed))

start = time.time()

tokens = 0

return total_loss / total_tokens

- 数据训练和批处理

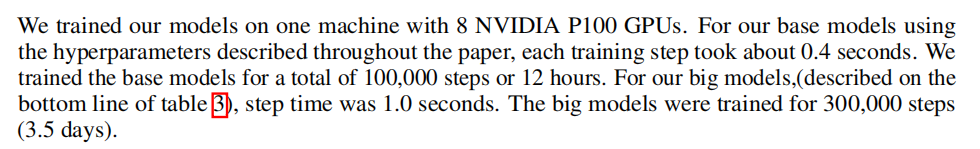

论文在包含约 450 万个句子对的标准 WMT 2014 英德数据集上进行了训练。句子使用 byte-pair编码进行编码,有大约 37000 个token的源-目标词汇表。对于英语-法语,使用更大的 WMT 2014 英语-法语数据集,该数据集由 3600 万个句子组成,并将token拆分为 32000 个单词词表。句子对按近似序列长度分批在一起。每个训练批次包含一组句子对,其中包含大约 25000 个源token和 25000 个目标token。

global max_src_in_batch, max_tgt_in_batch

def batch_size_fn(new, count, sofar):

global max_src_in_batch, max_tgt_in_batch

if count == 1:

max_src_in_batch = 0

max_tgt_in_batch = 0

max_src_in_batch = max(max_src_in_batch, len(new.src))

max_tgt_in_batch = max(max_tgt_in_batch, len(new.trg) + 2)

src_elements = count * max_src_in_batch

tgt_elements = count * max_tgt_in_batch

return max(src_elements, tgt_elements)

- 硬件设备

和谷歌没法比的,用自己的破笔记:RTX3060,也不怎么考虑多GPU并行运行了。 - 优化器:adam

主要是根据论文的公式动态调整学习率

class NoamOpt:

def __init__(self, model_size, factor, warmup, optimizer):

self.optimizer = optimizer

self._step = 0

self.warmup = warmup

self.factor = factor

self.model_size = model_size

self._rate = 0

def step(self):

"更新参数和学习率"

self._step += 1

rate = self.rate()

for p in self.optimizer.param_groups:

p['lr'] = rate

self._rate = rate

self.optimizer.step()

def rate(self, step=None):

"执行上面的学习率"

if step is None:

step = self._step

return self.factor * (self.model_size ** (-0.5) * min(step ** (-0.5), step * self.warmup ** (-1.5)))

# 调用例子

def get_std_opt(model):

return NoamOpt(model.src_embed[0].d_model, 2, 4000,

torch.optim.Adam(model.parameters(), lr=0, betas=(0.9, 0.98), eps=1e-9))

- 正则化

一个是dropout,另一个是标签平滑。

在训练期间,使用values的标签平滑,使用 KL div 损失实现标签平滑。目的是防止模型在训练时过于自信地预测标签,改善泛化能力差的问题。

class LabelSmoothing(nn.Module):

def __init__(self, size, padding_idx, smoothing=0.0):

super(LabelSmoothing, self).__init__()

self.criterion = nn.KLDivLoss(size_average=False)

self.padding_idx = padding_idx

self.confidence = 1.0 - smoothing

self.smoothing = smoothing

self.size = size

self.true_dist = None

def forward(self, x, target):

assert x.size(1) == self.size

true_dist = x.data.clone()

true_dist.fill_(self.smoothing / (self.size - 2))

# 注意scatter中tensor类型要是long

true_dist.scatter_(1, target.data.unsqueeze(1).long(), self.confidence)

true_dist[:, self.padding_idx] = 0

mask = torch.nonzero(target.data == self.padding_idx)

if mask.dim() > 0:

true_dist.index_fill_(0, mask.squeeze(), 0.0)

self.true_dist = true_dist

return self.criterion(x, Variable(true_dist, requires_grad=False))

四、实战

翻译任务要下数据集啥的,比较麻烦,先来个简单的任务:给定来自小词汇表的一组随机输入符号,目标是生成与输入相同的符号,称之为src-tgt copy task。

- 先造个数据集

def data_gen(V, batch, nbatches):

"为src-tgt copy task随机生成数据."

for i in range(nbatches):

data = torch.from_numpy(np.random.randint(1, V, size=(batch, 10)))

data[:, 0] = 1

src = Variable(data, requires_grad=False)

tgt = Variable(data, requires_grad=False)

yield Batch(src, tgt, 0)

- 计算损失

class SimpleLossCompute:

def __init__(self, generator, criterion, opt=None):

self.generator = generator

self.criterion = criterion

self.opt = opt

def __call__(self, x, y, norm):

x = self.generator(x)

loss = self.criterion(x.contiguous().view(-1, x.size(-1)), y.contiguous().view(-1)) / norm

loss.backward()

if self.opt is not None:

self.opt.step()

self.opt.optimizer.zero_grad()

# return loss.data.[0] * norm

return loss.data.item() * norm

- 解码用贪心策略

def greedy_decode(model, src, src_mask, max_len, start_symbol):

memory = model.encode(src, src_mask)

ys = torch.ones(1, 1).fill_(start_symbol).type_as(src.data)

for i in range(max_len - 1):

out = model.decode(memory, src_mask,

Variable(ys),

Variable(subsequent_mask(ys.size(1)).type_as(src.data)))

prob = model.generator(out[:, -1])

_, next_word = torch.max(prob, dim=1)

next_word = next_word.data[0]

ys = torch.cat([ys, torch.ones(1, 1).type_as(src.data).fill_(next_word)], dim=1)

return ys

- 模型训练

V = 11

criterion = LabelSmoothing(size=V, padding_idx=0, smoothing=0.0)

model = make_model(V, V, N=2)

model_opt = NoamOpt(model.src_embed[0].d_model, 1, 400,

torch.optim.Adam(model.parameters(), lr=0, betas=(0.9, 0.98), eps=1e-9))

for epoch in range(10):

model.train()

run_epoch(data_gen(V, 30, 20), model, SimpleLossCompute(model.generator, criterion, model_opt))

model.eval()

print(run_epoch(data_gen(V, 30, 5), model, SimpleLossCompute(model.generator, criterion, None)))

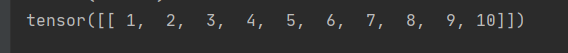

- 模型测试

model.eval()

src = Variable(torch.LongTensor([[1, 2, 3, 4, 5, 6, 7, 8, 9, 10]]))

src_mask = Variable(torch.ones(1, 1, 10))

print(greedy_decode(model, src, src_mask, max_len=10, start_symbol=1))

输入:[1, 2, 3, 4, 5, 6, 7, 8, 9, 10]

看看输出: