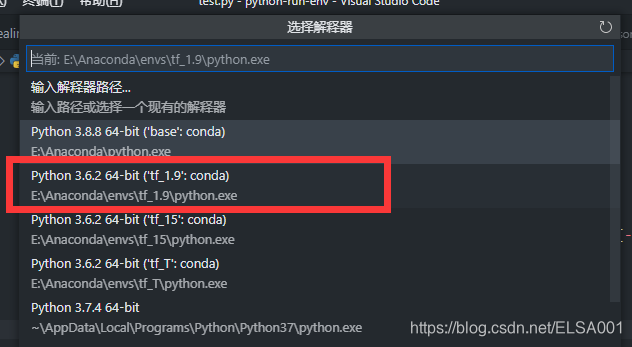

一、环境配置

Anaconda:4.10.3

Python:3.6.2

TensorFlow:1.9.0

二、图片准备

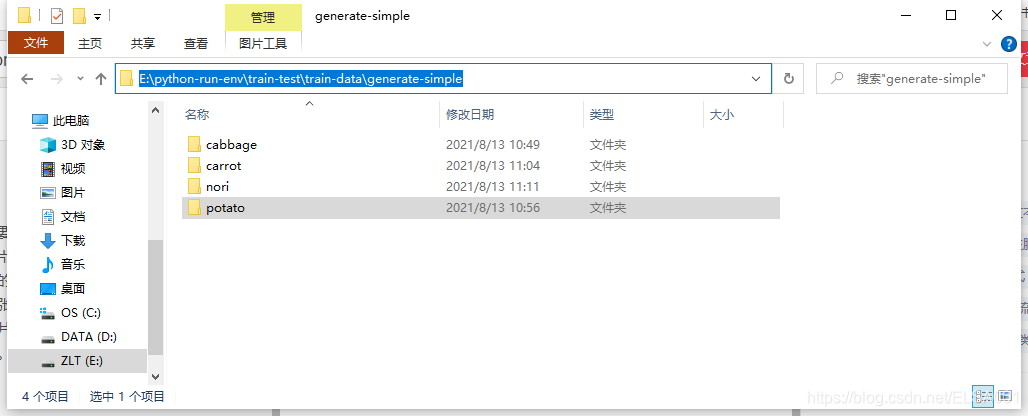

在这个小项目中,我们首先需要自己在网上收集四类图片(每类图片30张,一共120张),这些图片的格式最好是统一的JPG格式,对于分辨率来说没有特定的要求,我们的项目在预处理中可以进行分辨率统一化的预处理(也就是把每一张图片变成一样的分辨率64*64)。

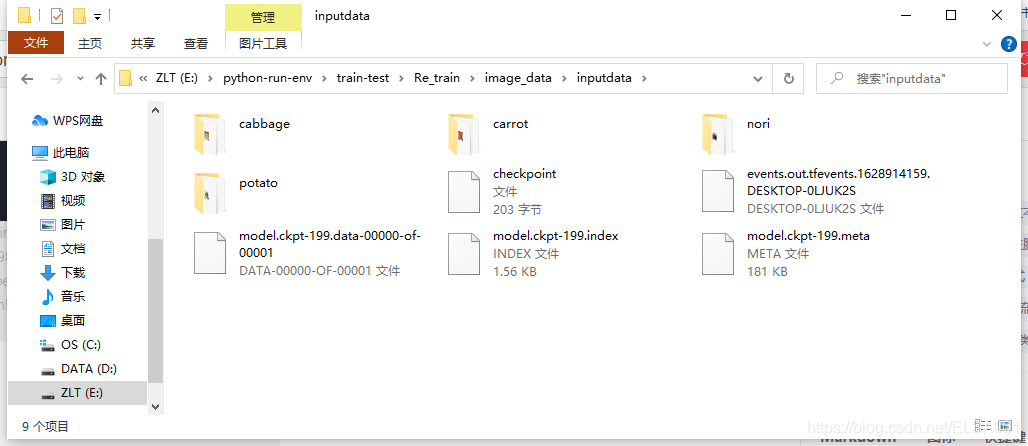

不过要根据你自己的目录把图片放在上面,不然代码可是找不到的。我把图片放在了如图这个地方。

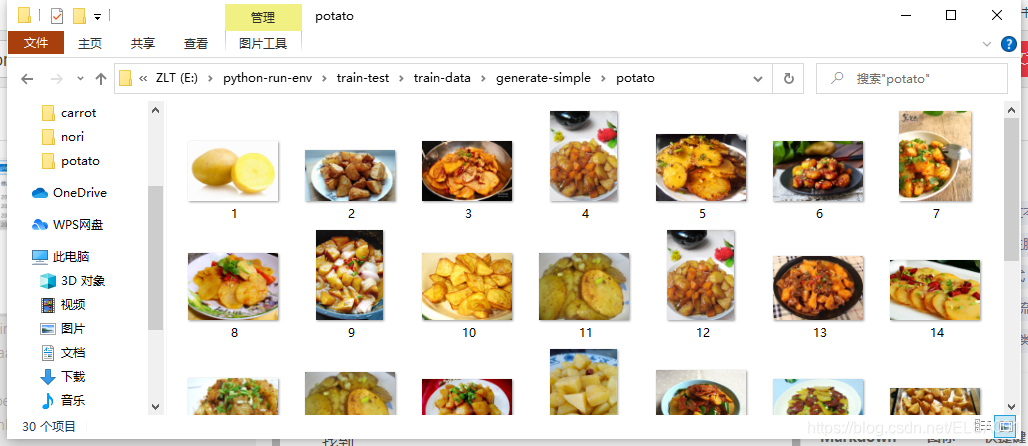

每一张图片都需要整理分类到每一个文件夹中,程序才可以正常找到。比如我把土豆放在这个potato文件夹下。

三、效果展示

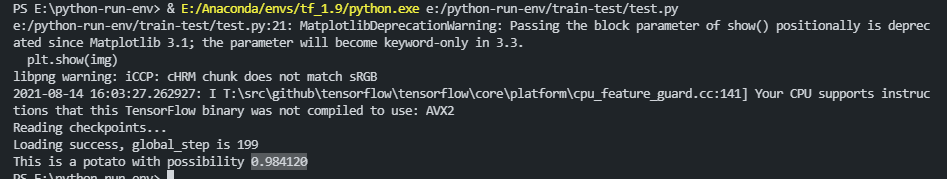

在测试代码中点击运行:

便会出现要预测的图片(图片显示不清是因为这个图片的像素只有64*64).

接着,把图片关闭,即可显示出预测是potato(土豆)的可能性是0.984120。

四、源代码

(1)preprocessing.py(图片预处理)

# 将原始图片转换成需要的大小,并将其保存

import os

import tensorflow as tf

from PIL import Image

# 原始图片的存储位置 E:/python-run-env/train-test/train-data/generate-simple/

orig_picture = 'E:/python-run-env/train-test/train-data/generate-simple/'

# 生成图片的存储位置 E:/python-run-env/train-test/Re_train/image_data/inputdata/

gen_picture = 'E:/python-run-env/train-test/Re_train/image_data/inputdata/'

# 需要的识别类型

classes = {'cabbage','carrot','nori','potato'}

# 样本总数

num_samples = 120

# 制作TFRecords数据

def create_record():

writer = tf.python_io.TFRecordWriter("dishes_train.tfrecords")

for index, name in enumerate(classes):

class_path = orig_picture +"/"+ name+"/"

# os.listdir() 方法用于返回指定的文件夹包含的文件或文件夹的名字的列表。

for img_name in os.listdir(class_path):

img_path = class_path + img_name

img = Image.open(img_path)

img = img.resize((64, 64)) # 设置需要转换的图片大小

img_raw = img.tobytes() # 将图片转化为原生bytes

print (index,img_raw)

example = tf.train.Example(

features=tf.train.Features(feature={

"label": tf.train.Feature(int64_list=tf.train.Int64List(value=[index])),

'img_raw': tf.train.Feature(bytes_list=tf.train.BytesList(value=[img_raw]))

}))

writer.write(example.SerializeToString())

writer.close()

def read_and_decode(filename):

# 创建文件队列,不限读取的数量

filename_queue = tf.train.string_input_producer([filename])

# create a reader from file queue

reader = tf.TFRecordReader()

# reader从文件队列中读入一个序列化的样本

_, serialized_example = reader.read(filename_queue)

# get feature from serialized example

# 解析符号化的样本

features = tf.parse_single_example(

serialized_example,

features={

'label': tf.FixedLenFeature([], tf.int64),

'img_raw': tf.FixedLenFeature([], tf.string)

})

label = features['label']

img = features['img_raw']

img = tf.decode_raw(img, tf.uint8)

img = tf.reshape(img, [64, 64, 3])

# img = tf.cast(img, tf.float32) * (1. / 255) - 0.5

label = tf.cast(label, tf.int32)

return img, label

if __name__ == '__main__':

create_record()

batch = read_and_decode('dishes_train.tfrecords')

init_op = tf.group(tf.global_variables_initializer(), tf.local_variables_initializer())

with tf.Session() as sess: # 开始一个会话

sess.run(init_op)

coord=tf.train.Coordinator()

threads= tf.train.start_queue_runners(coord=coord)

for i in range(num_samples):

example, lab = sess.run(batch) # 在会话中取出image和label

img=Image.fromarray(example, 'RGB') # 这里Image是之前提到的

img.save(gen_picture+'/'+str(i)+'samples'+str(lab)+'.jpg')#存下图片;注意cwd后边加上‘/’

print(example, lab)

coord.request_stop()

coord.join(threads)

sess.close()

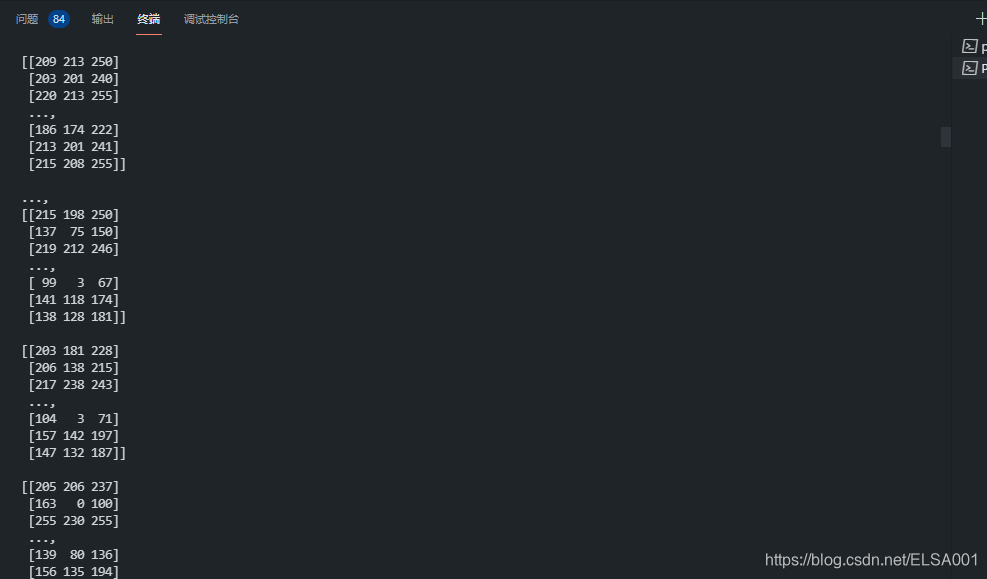

点击运行后,可以在终端看到很多输出

然后在这里可以看到很多图片,要把这些图片进行分类,然后装到这些文件夹里面:

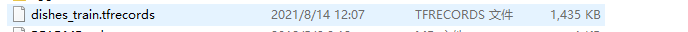

除此之外,还会产生一个TFrecord的二进制文件:

(2)batchdealing.py(输入图片处理)

import os

import math

import numpy as np

import tensorflow as tf

import matplotlib.pyplot as plt

# -----------------生成图片路径和标签的List------------------------------------

# 生成图片的存储位置 E:/python-run-env/train-test/Re_train/image_data/inputdata/

train_dir = 'E:/python-run-env/train-test/Re_train/image_data/inputdata/'

cabbage = []

label_cabbage = []

carrot = []

label_carrot = []

nori = []

label_nori = []

potato = []

label_potato = []

# step1:获取'E:/Re_train/image_data/training_image'下所有的图片路径名,存放到

# 对应的列表中,同时贴上标签,存放到label列表中。

# ratio是测试集的比例

def get_files(file_dir, ratio):

for file in os.listdir(file_dir+'/cabbage'):

cabbage.append(file_dir +'/cabbage'+'/'+ file)

label_cabbage.append(0)

for file in os.listdir(file_dir+'/carrot'):

carrot.append(file_dir +'/carrot'+'/'+file)

label_carrot.append(1)

for file in os.listdir(file_dir+'/nori'):

nori.append(file_dir +'/nori'+'/'+ file)

label_nori.append(2)

for file in os.listdir(file_dir+'/potato'):

potato.append(file_dir +'/potato'+'/'+file)

label_potato.append(3)

# step2:对生成的图片路径和标签List做打乱处理把所有的数据合起来组成一个list(img和lab)

# np.hstack水平(按列)按顺序堆叠数组。

# >>> a = np.array((1,2,3))

# >>> b = np.array((2,3,4))

# >>> np.hstack((a,b))

# array([1, 2, 3, 2, 3, 4])

image_list = np.hstack((cabbage, carrot, nori, potato))

label_list = np.hstack((label_cabbage, label_carrot, label_nori, label_potato))

# 利用shuffle打乱顺序

temp = np.array([image_list, label_list])

temp = temp.transpose()

np.random.shuffle(temp)

# 从打乱的temp中再取出list(img和lab)

# image_list = list(temp[:, 0])

# label_list = list(temp[:, 1])

# label_list = [int(i) for i in label_list]

# return image_list, label_list

# 将所有的img和lab转换成list

all_image_list = list(temp[:, 0])

all_label_list = list(temp[:, 1])

# 将所得List分为两部分,一部分用来训练tra,一部分用来测试val

# ratio是测试集的比例

# n_sample全部样本数

n_sample = len(all_label_list)

n_val = int(math.ceil(n_sample*ratio)) # 测试样本数

n_train = n_sample - n_val # 训练样本数

# 训练的图片和标签

tra_images = all_image_list[0:n_train]

tra_labels = all_label_list[0:n_train]

tra_labels = [int(float(i)) for i in tra_labels]

# 测试图片和标签

val_images = all_image_list[n_train:-1]

val_labels = all_label_list[n_train:-1]

val_labels = [int(float(i)) for i in val_labels]

return tra_images, tra_labels, val_images, val_labels

# --------------------生成Batch----------------------------------------------

# step1:将上面生成的List传入get_batch() ,转换类型,产生一个输入队列queue,因为img和lab

# 是分开的,所以使用tf.train.slice_input_producer(),然后用tf.read_file()从队列中读取图像

# image_W, image_H, :设置好固定的图像高度和宽度

# 设置batch_size:每个batch要放多少张图片

# capacity:一个队列最大多少

def get_batch(image, label, image_W, image_H, batch_size, capacity):

# 转换类型

image = tf.cast(image, tf.string)

label = tf.cast(label, tf.int32)

# make an input queue

# tf.train.slice_input_producer是一个tensor生成器,作用是按照设定,

# 每次从一个tensor列表中按顺序或者随机抽取出一个tensor放入文件名队列。

input_queue = tf.train.slice_input_producer([image, label])

label = input_queue[1]

image_contents = tf.read_file(input_queue[0]) # read img from a queue

# step2:将图像解码,不同类型的图像不能混在一起,要么只用jpeg,要么只用png等。

image = tf.image.decode_jpeg(image_contents, channels=3)

# step3:数据预处理,对图像进行旋转、缩放、裁剪、归一化等操作,让计算出的模型更健壮。

image = tf.image.resize_image_with_crop_or_pad(image, image_W, image_H)

image = tf.image.per_image_standardization(image)

# step4:生成batch

# image_batch: 4D tensor [batch_size, width, height, 3],dtype=tf.float32

# label_batch: 1D tensor [batch_size], dtype=tf.int32

image_batch, label_batch = tf.train.batch([image, label],

batch_size= batch_size,

num_threads= 32,

capacity = capacity)

# 重新排列label,行数为[batch_size]

label_batch = tf.reshape(label_batch, [batch_size])

image_batch = tf.cast(image_batch, tf.float32)

return image_batch, label_batch

(3)forward.py

# 建立神经网络

import tensorflow as tf

# 网络结构定义

# 输入参数:images,image batch、4D tensor、tf.float32、[batch_size, width, height, channels]

# 返回参数:logits, float、 [batch_size, n_classes]

def inference(images, batch_size, n_classes):

# 构建一个简单的卷积神经网络,其中(卷积+池化层)x2,全连接层x2,最后一个softmax层做分类。

# 卷积层1

# 64个3x3的卷积核(3通道),padding=’SAME’,表示padding后卷积的图与原图尺寸一致,激活函数relu()

# tf.variable_scope 可以让变量有相同的命名,包括tf.get_variable得到的变量,还有tf.Variable变量

# 它返回的是一个用于定义创建variable(层)的op的上下文管理器。

with tf.variable_scope('conv1') as scope:

# tf.truncated_normal截断的产生正态分布的随机数,即随机数与均值的差值若大于两倍的标准差,则重新生成。

# shape,生成张量的维度

# mean,均值

# stddev,标准差

weights = tf.Variable(tf.truncated_normal(shape=[3,3,3,64], stddev = 1.0, dtype = tf.float32),

name = 'weights', dtype = tf.float32)

# 偏置biases计算

# shape表示生成张量的维度

# 生成初始值为0.1的偏执biases

biases = tf.Variable(tf.constant(value = 0.1, dtype = tf.float32, shape = [64]),

name = 'biases', dtype = tf.float32)

# 卷积层计算

# 输入图片x和所用卷积核w

# x是对输入的描述,是一个4阶张量:

# 比如:[batch,5,5,3]

# 第一阶给出一次喂入多少张图片也就是batch

# 第二阶给出图片的行分辨率

# 第三阶给出图片的列分辨率

# 第四阶给出输入的通道数

# w是对卷积核的描述,也是一个4阶张量:

# 比如:[3,3,3,16]

# 第一阶第二阶分别给出卷积行列分辨率

# 第三阶是通道数

# 第四阶是有多少个卷积核

# strides卷积核滑动步长:[1,1,1,1]

# 第二阶第三阶表示横向纵向滑动的步长

# 第一和第四阶固定为1

# 使用0填充,所以padding值为SAME

conv = tf.nn.conv2d(images, weights, strides=[1,1,1,1], padding='SAME')

# 非线性激活

# 对卷积后的conv1添加偏执,通过relu激活函数

pre_activation = tf.nn.bias_add(conv, biases)

conv1 = tf.nn.relu(pre_activation, name= scope.name)

# 池化层1

# 3x3最大池化,步长strides为2,池化后执行lrn()操作,局部响应归一化,对训练有利。

# 最大池化层计算

# x是对输入的描述,是一个四阶张量:

# 比如:[batch,28,28,6]

# 第一阶给出一次喂入多少张图片batch

# 第二阶给出图片的行分辨率

# 第三阶给出图片的列分辨率

# 第四阶输入通道数

# 池化核大小2*2的

# 行列步长都是2

# 使用SAME的padding

with tf.variable_scope('pooling1_lrn') as scope:

pool1 = tf.nn.max_pool(conv1, ksize=[1,3,3,1],strides=[1,2,2,1],padding='SAME', name='pooling1')

# 局部响应归一化函数tf.nn.lrn

norm1 = tf.nn.lrn(pool1, depth_radius=4, bias=1.0, alpha=0.001/9.0, beta=0.75, name='norm1')

# 卷积层2

# 16个3x3的卷积核(16通道),padding=’SAME’,表示padding后卷积的图与原图尺寸一致,激活函数relu()

with tf.variable_scope('conv2') as scope:

weights = tf.Variable(tf.truncated_normal(shape=[3,3,64,16], stddev = 0.1, dtype = tf.float32),

name = 'weights', dtype = tf.float32)

biases = tf.Variable(tf.constant(value = 0.1, dtype = tf.float32, shape = [16]),

name = 'biases', dtype = tf.float32)

conv = tf.nn.conv2d(norm1, weights, strides = [1,1,1,1],padding='SAME')

pre_activation = tf.nn.bias_add(conv, biases)

conv2 = tf.nn.relu(pre_activation, name='conv2')

# 池化层2

# 3x3最大池化,步长strides为2,池化后执行lrn()操作,

# pool2 and norm2

with tf.variable_scope('pooling2_lrn') as scope:

norm2 = tf.nn.lrn(conv2, depth_radius=4, bias=1.0, alpha=0.001/9.0,beta=0.75,name='norm2')

pool2 = tf.nn.max_pool(norm2, ksize=[1,3,3,1], strides=[1,1,1,1],padding='SAME',name='pooling2')

# 全连接层3

# 128个神经元,将之前pool层的输出reshape成一行,激活函数relu()

with tf.variable_scope('local3') as scope:

# 函数的作用是将tensor变换为参数shape的形式。 其中shape为一个列表形式,特殊的一点是列表中可以存在-1。

# -1代表的含义是不用我们自己指定这一维的大小,函数会自动计算,但列表中只能存在一个-1。

reshape = tf.reshape(pool2, shape=[batch_size, -1])

# get_shape返回的是一个元组

dim = reshape.get_shape()[1].value

weights = tf.Variable(tf.truncated_normal(shape=[dim,128], stddev = 0.005, dtype = tf.float32),

name = 'weights', dtype = tf.float32)

biases = tf.Variable(tf.constant(value = 0.1, dtype = tf.float32, shape = [128]),

name = 'biases', dtype=tf.float32)

local3 = tf.nn.relu(tf.matmul(reshape, weights) + biases, name=scope.name)

# 全连接层4

# 128个神经元,激活函数relu()

with tf.variable_scope('local4') as scope:

weights = tf.Variable(tf.truncated_normal(shape=[128,128], stddev = 0.005, dtype = tf.float32),

name = 'weights',dtype = tf.float32)

biases = tf.Variable(tf.constant(value = 0.1, dtype = tf.float32, shape = [128]),

name = 'biases', dtype = tf.float32)

local4 = tf.nn.relu(tf.matmul(local3, weights) + biases, name='local4')

# dropout层

# with tf.variable_scope('dropout') as scope:

# drop_out = tf.nn.dropout(local4, 0.8)

# Softmax回归层

# 将前面的FC层输出,做一个线性回归,计算出每一类的得分,在这里是2类,所以这个层输出的是两个得分。

with tf.variable_scope('softmax_linear') as scope:

weights = tf.Variable(tf.truncated_normal(shape=[128, n_classes], stddev = 0.005, dtype = tf.float32),

name = 'softmax_linear', dtype = tf.float32)

biases = tf.Variable(tf.constant(value = 0.1, dtype = tf.float32, shape = [n_classes]),

name = 'biases', dtype = tf.float32)

softmax_linear = tf.add(tf.matmul(local4, weights), biases, name='softmax_linear')

return softmax_linear

# loss计算

# 传入参数:logits,网络计算输出值。labels,真实值,在这里是0或者1

# 返回参数:loss,损失值

def losses(logits, labels):

with tf.variable_scope('loss') as scope:

# 传入的logits为神经网络输出层的输出,shape为[batch_size,num_classes],

# 传入的label为一个一维的vector,长度等于batch_size,

# 每一个值的取值区间必须是[0,num_classes),其实每一个值就是代表了batch中对应样本的类别

cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=logits, labels=labels, name='xentropy_per_example')

# tf.reduce_mean 函数用于计算张量tensor沿着指定的数轴(tensor的某一维度)上的的平均值,

# 主要用作降维或者计算tensor(图像)的平均值。

loss = tf.reduce_mean(cross_entropy, name='loss')

# tf.summary.scalar用来显示标量信息

# 一般在画loss,accuary时会用到这个函数。

tf.summary.scalar(scope.name+'/loss', loss)

return loss

# loss损失值优化

# 输入参数:loss。learning_rate,学习速率。

# 返回参数:train_op,训练op,这个参数要输入sess.run中让模型去训练。

def trainning(loss, learning_rate):

with tf.name_scope('optimizer'):

# tf.train.AdamOptimizer()函数是Adam优化算法:是一个寻找全局最优点的优化算法,引入了二次方梯度校正。

# learning_rate:张量或浮点值。学习速率

optimizer = tf.train.AdamOptimizer(learning_rate= learning_rate)

global_step = tf.Variable(0, name='global_step', trainable=False)

# minimize() 实际上包含了两个步骤,即 compute_gradients 和 apply_gradients,

# 前者用于计算梯度,后者用于使用计算得到的梯度来更新对应的variable

train_op = optimizer.minimize(loss, global_step= global_step)

return train_op

# 评价/准确率计算

# 输入参数:logits,网络计算值。labels,标签,也就是真实值,在这里是0或者1。

# 返回参数:accuracy,当前step的平均准确率,也就是在这些batch中多少张图片被正确分类了。

def evaluation(logits, labels):

with tf.variable_scope('accuracy') as scope:

# tf.nn.in_top_k用于计算预测的结果和实际结果的是否相等,并返回一个bool类型的张量

correct = tf.nn.in_top_k(logits, labels, 1)

# tf.cast()函数的作用是执行 tensorflow 中张量数据类型转换

correct = tf.cast(correct, tf.float16)

# tf.reduce_mean计算张量的各个维度的元素的量

accuracy = tf.reduce_mean(correct)

# tf.summary.scalar用来显示标量信息

tf.summary.scalar(scope.name+'/accuracy', accuracy)

return accuracy

(4)backward.py(训练模型)

# 导入文件

import os

import numpy as np

import tensorflow as tf

import batchdealing

import forward

# 变量声明

N_CLASSES = 4 # 4类 分别是:'cabbage','carrot','nori','potato'

IMG_W = 64 # resize图像,太大的话训练时间久

IMG_H = 64

BATCH_SIZE =20 # 一次喂入多少

CAPACITY = 200 # 容量

MAX_STEP = 200 # 一般大于10K

learning_rate = 0.0001 # 一般小于0.0001

# 获取批次batch E:/python-run-env/train-test/Re_train/image_data/inputdata/

train_dir = 'E:/python-run-env/train-test/Re_train/image_data/inputdata/' # 训练样本的读入路径

logs_train_dir = 'E:/python-run-env/train-test/Re_train/image_data/inputdata/' # logs存储路径

# logs_test_dir = 'E:/Re_train/image_data/test' # logs存储路径

# train, train_label = batchdealing.get_files(train_dir)

train, train_label, val, val_label = batchdealing.get_files(train_dir, 0.3)

# 训练数据及标签

train_batch,train_label_batch = batchdealing.get_batch(train, train_label, IMG_W, IMG_H, BATCH_SIZE, CAPACITY)

# 测试数据及标签

val_batch, val_label_batch = batchdealing.get_batch(val, val_label, IMG_W, IMG_H, BATCH_SIZE, CAPACITY)

# 训练操作定义

train_logits = forward.inference(train_batch, BATCH_SIZE, N_CLASSES)

train_loss = forward.losses(train_logits, train_label_batch)

train_op = forward.trainning(train_loss, learning_rate)

train_acc = forward.evaluation(train_logits, train_label_batch)

# 测试操作定义

test_logits = forward.inference(val_batch, BATCH_SIZE, N_CLASSES)

test_loss = forward.losses(test_logits, val_label_batch)

test_acc = forward.evaluation(test_logits, val_label_batch)

# 这个是log汇总记录

summary_op = tf.summary.merge_all()

# 产生一个会话

sess = tf.Session()

# 产生一个writer来写log文件

train_writer = tf.summary.FileWriter(logs_train_dir, sess.graph)

# val_writer = tf.summary.FileWriter(logs_test_dir, sess.graph)

# 产生一个saver来存储训练好的模型

saver = tf.train.Saver()

# 所有节点初始化

sess.run(tf.global_variables_initializer())

# 队列监控

coord = tf.train.Coordinator()

threads = tf.train.start_queue_runners(sess=sess, coord=coord)

# 进行batch的训练

try:

# 执行MAX_STEP步的训练,一步一个batch

for step in np.arange(MAX_STEP):

if coord.should_stop():

break

# 启动以下操作节点

_, tra_loss, tra_acc = sess.run([train_op, train_loss, train_acc])

# 每隔50步打印一次当前的loss以及acc,同时记录log,写入writer

if step % 10 == 0:

print('Step %d, train loss = %.2f, train accuracy = %.2f%%' %(step, tra_loss, tra_acc*100.0))

summary_str = sess.run(summary_op)

train_writer.add_summary(summary_str, step)

# 每隔100步,保存一次训练好的模型

if (step + 1) == MAX_STEP:

checkpoint_path = os.path.join(logs_train_dir, 'model.ckpt')

saver.save(sess, checkpoint_path, global_step=step)

except tf.errors.OutOfRangeError:

print('Done training -- epoch limit reached')

finally:

coord.request_stop()

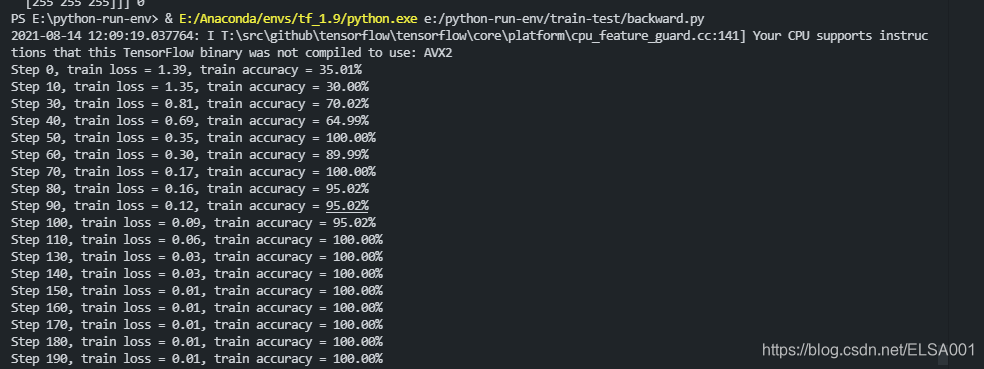

训练结束后,在终端显示:

(5)test.py

from PIL import Image

import numpy as np

import tensorflow as tf

import matplotlib.pyplot as plt

import forward

from batchdealing import get_files

# 获取一张图片

def get_one_image(train):

# 输入参数:train,训练图片的路径

# 返回参数:image,从训练图片中随机抽取一张图片

n = len(train)

ind = np.random.randint(0, n)

img_dir = train[ind] # 随机选择测试的图片

img = Image.open(img_dir)

# 显示图片,在jupyter notebook下当然也可以不用plt.show()

plt.imshow(img)

plt.show(img)

imag = img.resize([64, 64]) # 由于图片在预处理阶段以及resize,因此该命令可略

image = np.array(imag)

return image

# 测试图片

def evaluate_one_image(image_array):

with tf.Graph().as_default():

BATCH_SIZE = 1

N_CLASSES = 4

image = tf.cast(image_array, tf.float32)

# 线性缩放图像以具有零均值和单位范数。

image = tf.image.per_image_standardization(image)

image = tf.reshape(image, [1, 64, 64, 3])

# 构建卷积神经网络

logit = forward.inference(image, BATCH_SIZE, N_CLASSES)

# softmax函数的作用就是归一化

# 输入: 全连接层(往往是模型的最后一层)的值,一般代码中叫做logits。

# 输出: 归一化的值,含义是属于该位置的概率,一般代码叫做probs。

logit = tf.nn.softmax(logit)

x = tf.placeholder(tf.float32, shape=[64, 64, 3])

# you need to change the directories to yours. E:/python-run-env/train-test/Re_train/image_data/inputdata/

logs_train_dir = 'E:/python-run-env/train-test/Re_train/image_data/inputdata/'

# tf.train.Saver() 保存和加载模型

saver = tf.train.Saver()

with tf.Session() as sess:

print("Reading checkpoints...")

ckpt = tf.train.get_checkpoint_state(logs_train_dir)

if ckpt and ckpt.model_checkpoint_path:

global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]

saver.restore(sess, ckpt.model_checkpoint_path)

print('Loading success, global_step is %s' % global_step)

else:

print('No checkpoint file found')

# feed_dict的作用是给使用placeholder创建出来的tensor赋值。

# 其实,他的作用更加广泛:feed使用一个值临时替换一个op的输出结果。

# 你可以提供feed数据作为run()调用的参数。

# feed只在调用它的方法内有效,方法结束,feed就会消失。

# 当我们构建完图后,需要在一个会话中启动图,启动的第一步是创建一个Session对象。

# 为了取回(Fetch)操作的输出内容,可以在使用Session对象的run()调用执行图时,

# 传入一些tensor,这些tensor会帮助你取回结果。

prediction = sess.run(logit, feed_dict={x: image_array})

# 取出prediction中元素最大值所对应的索引,也就是最大的可能

max_index = np.argmax(prediction)

if max_index==0:

print('This is a cabbage with possibility %.6f' %prediction[:, 0])

elif max_index==1:

print('This is a carrot with possibility %.6f' %prediction[:, 1])

elif max_index==2:

print('This is a nori with possibility %.6f' %prediction[:, 2])

else:

print('This is a potato with possibility %.6f' %prediction[:, 3])

if __name__ == '__main__':

train_dir = 'E:/python-run-env/train-test/Re_train/image_data/inputdata/'

train, train_label, val, val_label = get_files(train_dir, 0.3)

img = get_one_image(val) # 通过改变参数train or val,进而验证训练集或测试集

evaluate_one_image(img)

点击测试之后,就可以显示如三效果展示那样的效果了,每一次都是随机选取我们自己的图片来测试的。