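This series of articles explains image processing with Python and OpenCV. The early posts cover image basics and fundamental OpenCV usage, the middle posts cover image processing algorithms such as sharpening operators, image enhancement, and image segmentation, and the later posts combine deep learning to study image recognition and image classification. I hope the articles are helpful; if anything falls short, please bear with me.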

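The previous article covered the ACE dehazing algorithm, the dark channel prior dehazing algorithm, and a fog generation algorithm, with reference to the papers of two leading computer vision researchers (Rizzi and Kaiming He). This article uses Keras deep learning to build a CNN model that recognizes images of handwritten Arabic letters, a classic image classification task. It draws on the blog post credited in the code header below, which I recommend reading. I hope you enjoy it.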

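The second part of the series covers image recognition with Python. It focuses on object detection, image recognition, and deep-learning-based image classification, and about 50 articles are planned. Thank you for your continued support.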


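The material in this part is the author's own summary written after consulting references, and it is also published as a paid column. Readers who cannot afford it are welcome to message the author privately; the aim is simply to help everyone learn from this series. Code download address (if you like it, remember to star the repository):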


I. Dataset Description

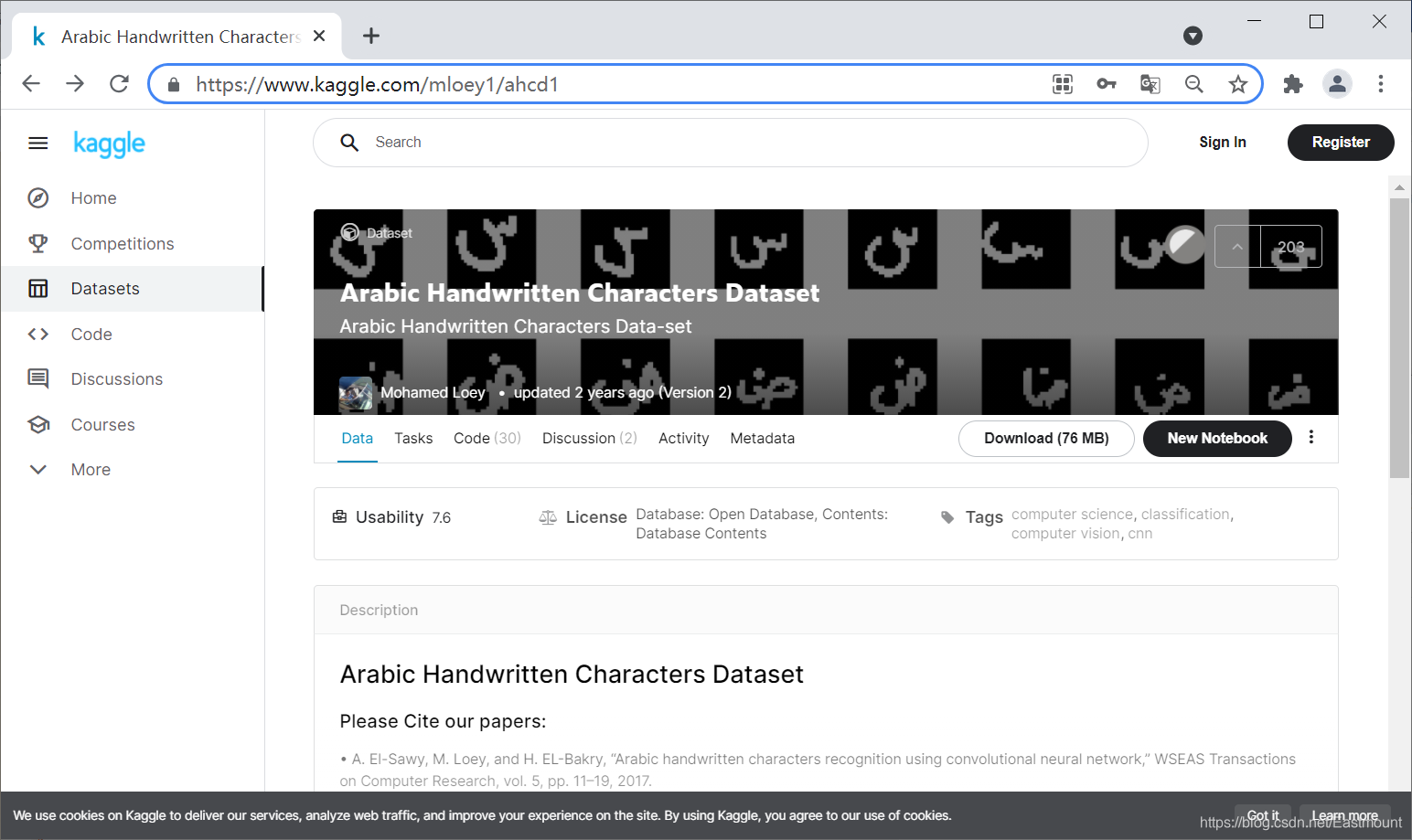

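The Arabic alphabet has 28 letters, as shown in the figure below:

Handwriting recognition is one of the classic image classification tasks (MNIST is the best-known dataset). This article uses a dataset from Kaggle that consists of 16,800 characters written by 60 participants.

- Dataset URL: https://www.kaggle.com/mloey1/ahcd1

Each participant wrote every character (from "alef" to "yeh") ten times on two forms, which gives 60 x 28 x 10 = 16,800 characters. The dataset files are shown in the figure below:

After decompression they look like this:

The training set contains 13,440 character images in 28 classes, each 32x32 pixels, as shown in the figure below.

- Train Images 13440x32x32

The test set contains 3,360 character images in 28 classes, each 32x32 pixels, as shown in the figure below.

- Test Images 3360x32x32

The dataset also includes CSV files that hold the pixel values of the images. For example, the features of the test set are shown in the figure below.

- csvTestImages 3360x1024.csv

The corresponding class labels are shown in the figure below. They run from 1 to 28 and should be converted to indices 0 to 27 during data processing.

- csvTrainLabel 13440x1.csv

Note that image datasets are often stored as CSV files, from which the images can be restored, as long as each row of CSV data corresponds one-to-one with its label. Now let's start the experiment!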
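To make the CSV storage idea concrete, here is a minimal sketch of the round trip between a 32x32 image and one CSV row of 1,024 values. It uses only the standard library; the helper names `image_to_csv_row` and `csv_row_to_image` and the diagonal test image are illustrative assumptions, not part of the dataset or of the code in this article. It also assumes plain row-major order, whereas the real files need an extra flip and rotation, as discussed later.

```python
import csv
import io

SIDE = 32  # image side length used by the dataset (32x32 pixels)

def image_to_csv_row(image):
    """Flatten a SIDE x SIDE nested list of pixel values into one CSV line."""
    flat = [pixel for row in image for pixel in row]
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerow(flat)
    return buffer.getvalue()

def csv_row_to_image(line):
    """Parse one CSV line of SIDE*SIDE integers back into a nested list."""
    values = [int(v) for v in next(csv.reader(io.StringIO(line)))]
    if len(values) != SIDE * SIDE:
        raise ValueError("expected %d values, got %d" % (SIDE * SIDE, len(values)))
    return [values[r * SIDE:(r + 1) * SIDE] for r in range(SIDE)]

# Hypothetical test image: a white diagonal stroke on a black background.
original = [[255 if r == c else 0 for c in range(SIDE)] for r in range(SIDE)]
line = image_to_csv_row(original)
restored = csv_row_to_image(line)
print(len(line.strip().split(",")), restored == original)
```

The label file works the same way, with one value per row, so row i of the image CSV and row i of the label CSV describe the same sample.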

II. Reading the Data

# -*- coding: utf-8 -*-
"""
Created on Wed Jul 7 18:54:36 2021
@author: xiuzhang CSDN
Reference: the blog post below (recommended reading)
https://maoli.blog.csdn.net/article/details/117688738
"""
import numpy as np
import pandas as pd
from IPython.display import display
import csv
from PIL import Image
from scipy.ndimage import rotate

#----------------------------------------------------------------
# Step 1: read the data
#----------------------------------------------------------------
# Training images and labels
letters_training_images_file_path = "dataset/csvTrainImages 13440x1024.csv"
letters_training_labels_file_path = "dataset/csvTrainLabel 13440x1.csv"

# Test images and labels
letters_testing_images_file_path = "dataset/csvTestImages 3360x1024.csv"
letters_testing_labels_file_path = "dataset/csvTestLabel 3360x1.csv"

# Load the data (the CSV files have no header row)
training_letters_images = pd.read_csv(letters_training_images_file_path, header=None)
training_letters_labels = pd.read_csv(letters_training_labels_file_path, header=None)
testing_letters_images = pd.read_csv(letters_testing_images_file_path, header=None)
testing_letters_labels = pd.read_csv(letters_testing_labels_file_path, header=None)

print("%d training Arabic letter images of 32x32 pixels" % training_letters_images.shape[0])
print("%d test Arabic letter images of 32x32 pixels" % testing_letters_images.shape[0])
print(training_letters_images.head())
print(np.unique(training_letters_labels))

The output is shown in the figure below:

There are 28 class labels in total.

- [ 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28]

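III. Data Preprocessing

This part mainly covers the following operations: converting the numeric data into images and preparing the image data for the network.

1. Converting Numeric Data to Images

Next we try to convert the numeric data in the CSV file into an image of 32x32 pixels. Note that the original dataset is stored mirrored, so we flip the image with np.flip and then turn it with rotate to get a properly oriented image.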

#----------------------------------------------------------------
# Step 2: convert numeric values into image features
#----------------------------------------------------------------
# The original dataset is mirrored: flip it with np.flip,
# then turn it with rotate to get a properly oriented image
def convert_values_to_image(image_values, display=False):
    # Reshape to 32x32
    image_array = np.asarray(image_values)
    image_array = image_array.reshape(32,32).astype('uint8')
    # Flip + rotate
    image_array = np.flip(image_array, 0)
    image_array = rotate(image_array, -90)
    # Show the image
    new_image = Image.fromarray(image_array)
    if display:
        new_image.show()
    return new_image

convert_values_to_image(training_letters_images.loc[0], True)

The output is a single letter, an "f".
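Why does a flip followed by a rotation fix the orientation? The sketch below, in pure Python on a small 3x3 matrix, shows that a vertical flip followed by a clockwise quarter turn is the same as a transpose. It assumes that `rotate(image_array, -90)` acts as a clockwise quarter turn on the displayed image; the helper names are hypothetical and are not part of NumPy or SciPy.

```python
def flip_vertical(matrix):
    """Reverse the row order (what np.flip(a, 0) does for a 2-D array)."""
    return [list(row) for row in matrix[::-1]]

def rotate_clockwise(matrix):
    """Rotate a matrix by a quarter turn clockwise."""
    return [list(row) for row in zip(*matrix[::-1])]

def transpose(matrix):
    """Swap rows and columns."""
    return [list(row) for row in zip(*matrix)]

sample = [[1, 2, 3],
          [4, 5, 6],
          [7, 8, 9]]

result = rotate_clockwise(flip_vertical(sample))
print(result)
print(result == transpose(sample))
```

Under that assumption, the two calls in `convert_values_to_image` amount to transposing the image, which is what you would expect if the pixel values were written out column by column.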

2. Image Data Scaling

Scaling divides every pixel of the image by 255, which rescales the values and limits them to the range [0, 1]. The data types are converted accordingly.

#----------------------------------------------------------------
# Step 3: image data scaling
#----------------------------------------------------------------

training_letters_images_scaled = training_letters_images.values.astype('float32')/255

training_letters_labels = training_letters_labels.values.astype('int32')

testing_letters_images_scaled = testing_letters_images.values.astype('float32')/255

testing_letters_labels = testing_letters_labels.values.astype('int32')

print("Training images of letters after scaling")

print(training_letters_images_scaled.shape)

print(training_letters_images_scaled[0:5])

The output is as follows:

Training images of letters after scaling

(13440, 1024)

[[0. 0. 0. ... 0. 0. 0.]

[0. 0. 0. ... 0. 0. 0.]

[0. 0. 0. ... 0. 0. 0.]

[0. 0. 0. ... 0. 0. 0.]

[0. 0. 0. ... 0. 0. 0.]]
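The printed rows are all zeros simply because the image borders are black. As a minimal sketch of what the division does to non-zero pixels, here is the same scaling in pure Python; `scale_pixels` and the sample values are hypothetical and only serve as an illustration.

```python
def scale_pixels(row, max_value=255):
    """Divide every pixel by max_value so the result lies in [0, 1]."""
    return [pixel / max_value for pixel in row]

raw_row = [0, 51, 128, 255]   # hypothetical 8-bit pixel values
scaled_row = scale_pixels(raw_row)

print(scaled_row)
print(min(scaled_row) >= 0.0 and max(scaled_row) <= 1.0)
```

Keeping the inputs in a small, fixed range generally makes gradient-based training better behaved than feeding raw 0 to 255 values.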

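3. One-hot Encoding of the Class Labels

Because this is a multi-class problem with 28 Arabic letters, the class labels (0 to 27) need to be one-hot encoded. Here we call a helper from the Keras package.

- from keras.utils import to_categorical

to_categorical converts a class vector into a binary matrix (containing only 0 and 1), in other words it turns the original class vector into one-hot encoded form. A simple code example follows: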

from keras.utils import to_categorical

# A class vector
b = [0,1,2,3,4,5,6,7,8]

# Use to_categorical to convert b into 9 one-hot encoded classes
b = to_categorical(b, 9)
print(b)

"""
Result:
[[1. 0. 0. 0. 0. 0. 0. 0. 0.]
 [0. 1. 0. 0. 0. 0. 0. 0. 0.]
 [0. 0. 1. 0. 0. 0. 0. 0. 0.]
 [0. 0. 0. 1. 0. 0. 0. 0. 0.]
 [0. 0. 0. 0. 1. 0. 0. 0. 0.]
 [0. 0. 0. 0. 0. 1. 0. 0. 0.]
 [0. 0. 0. 0. 0. 0. 1. 0. 0.]
 [0. 0. 0. 0. 0. 0. 0. 1. 0.]
 [0. 0. 0. 0. 0. 0. 0. 0. 1.]]
"""

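As the output shows, every value in the original class vector is converted into one row vector of the matrix, covering the classes 0 to 8 from left to right. For example, 2 is represented as [0. 0. 1. 0. 0. 0. 0. 0. 0.]: only the third position is 1 (the active bit) and all others are 0. One-hot encoding is also called one-bit-effective encoding. The code above therefore converts a class vector into its one-hot representation as follows: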

0 1 2 3 4 5 6 7 8

0=> [1. 0. 0. 0. 0. 0. 0. 0. 0.]

1=> [0. 1. 0. 0. 0. 0. 0. 0. 0.]

2=> [0. 0. 1. 0. 0. 0. 0. 0. 0.]

3=> [0. 0. 0. 1. 0. 0. 0. 0. 0.]

4=> [0. 0. 0. 0. 1. 0. 0. 0. 0.]

5=> [0. 0. 0. 0. 0. 1. 0. 0. 0.]

6=> [0. 0. 0. 0. 0. 0. 1. 0. 0.]

7=> [0. 0. 0. 0. 0. 0. 0. 1. 0.]

8=> [0. 0. 0. 0. 0. 0. 0. 0. 1.]
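For this dataset the labels run from 1 to 28, so they have to be shifted to 0 to 27 before encoding. The following pure Python sketch does the shift and the encoding in one place; `one_hot` and its `first_label` parameter are hypothetical names used for illustration and are not the Keras implementation.

```python
def one_hot(labels, num_classes, first_label=1):
    """Encode labels as one-hot rows after shifting them to start at 0."""
    encoded = []
    for label in labels:
        index = label - first_label
        if not 0 <= index < num_classes:
            raise ValueError("label %r is outside the expected range" % label)
        row = [0.0] * num_classes
        row[index] = 1.0          # exactly one active position per row
        encoded.append(row)
    return encoded

# Labels as stored in the CSV file (1-based): first, second and last class.
encoded = one_hot([1, 2, 28], num_classes=28)

print([row.index(1.0) for row in encoded])
print([len(row) for row in encoded])
```

Without the shift, label 28 would index position 28 of a 28-element row, which does not exist, so subtracting 1 first is required rather than cosmetic.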

The preprocessing code for this part is as follows:

#----------------------------------------------------------------
# Step 4: one-hot encoding of the class labels
#----------------------------------------------------------------
import keras
from keras.utils import to_categorical

number_of_classes = 28
# Labels are stored as 1..28, so subtract 1 to get indices 0..27
training_letters_labels_encoded = to_categorical(training_letters_labels-1,
                                                 num_classes=number_of_classes)
testing_letters_labels_encoded = to_categorical(testing_letters_labels-1,
                                                num_classes=number_of_classes)
print(training_letters_labels)
print(training_letters_labels_encoded)
print(training_letters_images_scaled.shape)
# (13440, 1024)

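The output is shown in the figure below:

4. Reshaping

The shape of the input matters a great deal in deep learning. This article reshapes each input image to 32x32x1. Keras expects a four-dimensional array as input, with the following dimensions:

- number of image samples

- height (rows)

- width (columns)

- channels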

#----------------------------------------------------------------
# Step 5: reshaping
#----------------------------------------------------------------
# Reshape each sample to 32x32x1
training_letters_images_scaled = training_letters_images_scaled.reshape([-1, 32, 32, 1])
testing_letters_images_scaled = testing_letters_images_scaled.reshape([-1, 32, 32, 1])
print(training_letters_images_scaled.shape,
      training_letters_labels_encoded.shape,
      testing_letters_images_scaled.shape,
      testing_letters_labels_encoded.shape)

The input images are 32x32 grayscale images, and the output is as follows:

- (13440, 32, 32, 1) (13440, 28) (3360, 32, 32, 1) (3360, 28)
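To see what the reshape does to the indices, here is a minimal pure Python sketch that turns flat rows of 1,024 values into nested lists with the layout (samples, height, width, channels). The helpers `reshape_to_nhwc` and `shape_of` are hypothetical and only mimic the row-major behaviour of the NumPy reshape used above.

```python
def reshape_to_nhwc(flat_rows, height=32, width=32):
    """Turn flat rows of height*width values into samples x H x W x 1."""
    images = []
    for flat in flat_rows:
        if len(flat) != height * width:
            raise ValueError("each row must hold %d values" % (height * width))
        # Row-major order: flat index = row * width + column; one channel.
        image = [[[flat[r * width + c]] for c in range(width)]
                 for r in range(height)]
        images.append(image)
    return images

def shape_of(nested):
    """Return the shape of a regular nested list as a tuple."""
    shape = []
    while isinstance(nested, list):
        shape.append(len(nested))
        nested = nested[0]
    return tuple(shape)

# Two hypothetical samples whose values equal their flat index.
batch = reshape_to_nhwc([list(range(1024)), list(range(1024))])

print(shape_of(batch))
print(batch[0][1][2][0])   # row 1, column 2 -> flat index 1 * 32 + 2
```

The trailing dimension of size 1 is the single grayscale channel; an RGB image would have 3 there.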

IV. Building the CNN Model

1. The Concept of Convolutional Neural Networks

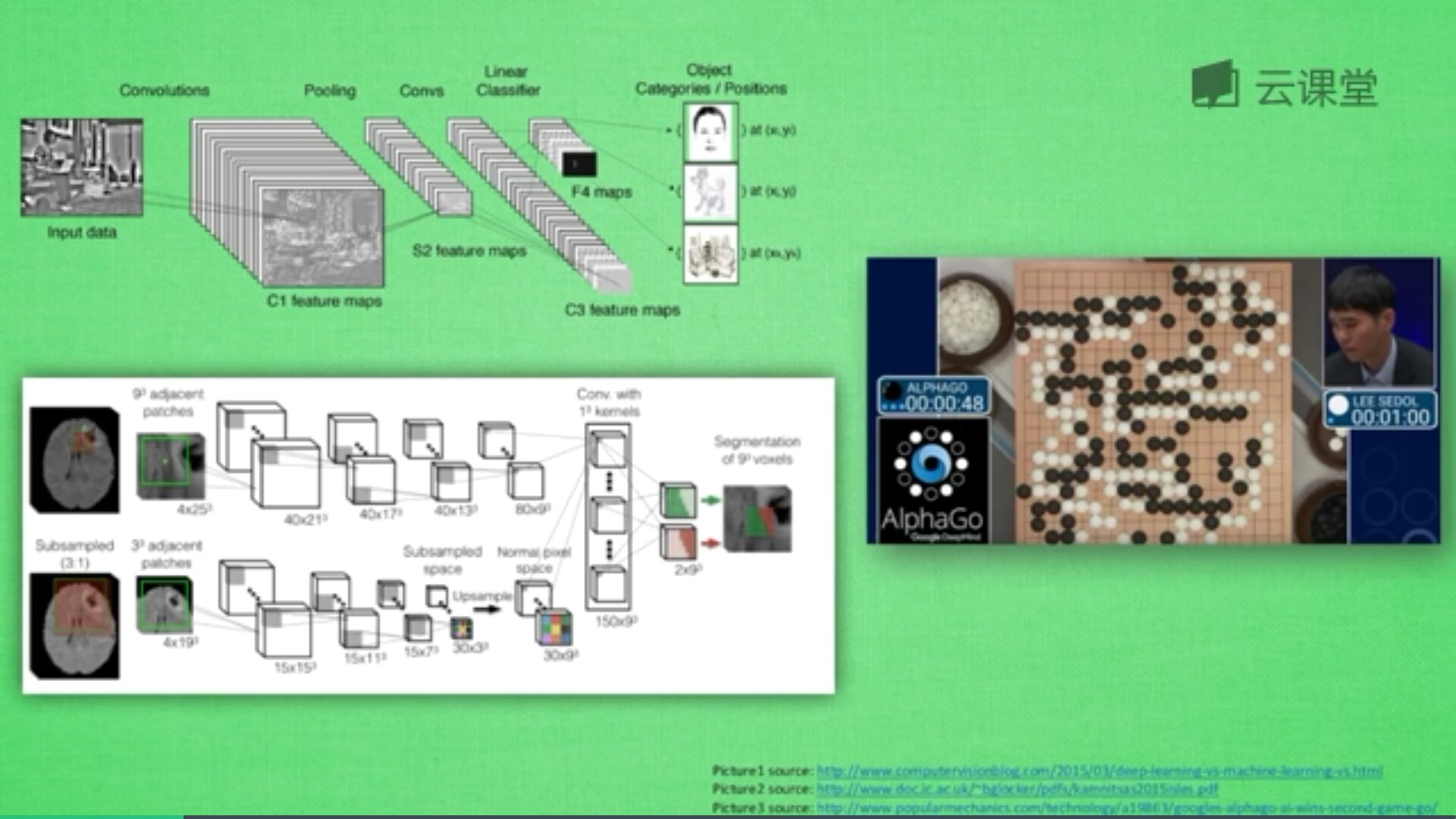

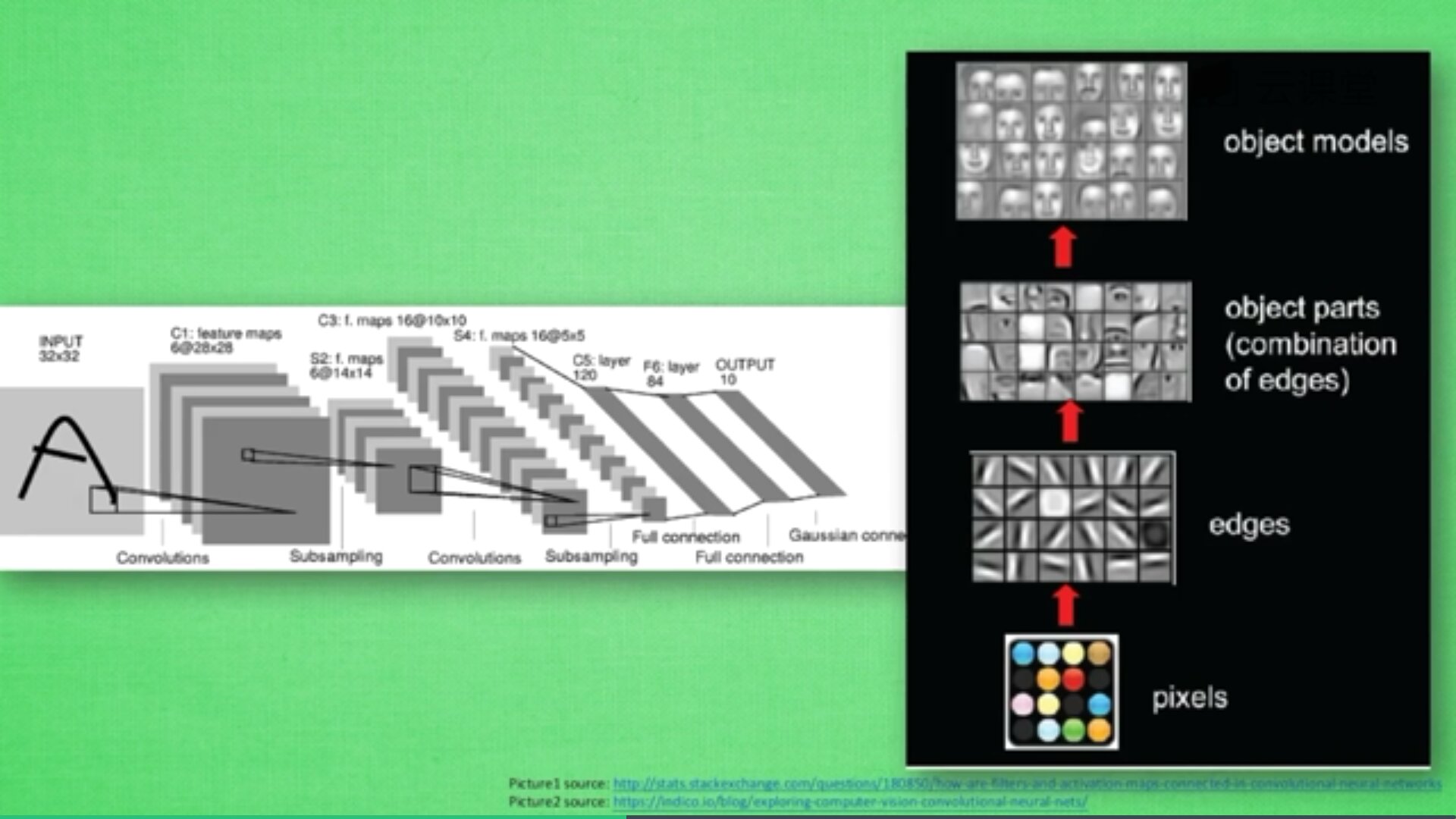

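CNN stands for Convolutional Neural Network. CNNs are typically applied in areas such as image recognition and speech recognition, where they give better results, and they can also be used for video analysis, machine translation, natural language processing, drug discovery, and more. The famous AlphaGo relies on convolutional neural networks to let a computer read a Go board.

A neural network is made up of many layers, and each layer contains many neurons. These neurons are the key to recognizing things. When the input is a picture, it is really just a pile of numbers.

First, what does convolution mean?

Convolution means that the network no longer processes each pixel individually but processes regions of the picture instead. This strengthens the continuity of the picture: the network sees a shape rather than a single point, which deepens its understanding of the image.

A convolutional neural network uses a batch of filters that keep sliding over the picture and collecting information. Each step looks at a small patch, and organizing these patches yields edge information. For example, a first pass may find outlines such as eyes and a nose, and another pass of filtering summarizes the face. This information is then fed into fully connected layers for training, and repeated scanning finally produces the classification result. As shown in the figure below, a photo of a cat has to be converted into mathematical form, stored here as length, width, and height, where the height is 1 for a black-and-white photo and 3 for a color photo (RGB).

As the filters collect this information, they produce a smaller picture. After further squeezing and thickening, the information is passed to ordinary neural layers, which finally give the classification result. This process is convolution. A convnet is a neural network that shares parameters across space. As shown in the figure below, it squeezes and thickens an RGB picture into a long, deep result.

Convolutional layers are the building blocks of a deep network: we stack several convolutions instead of several plain matrix multiplications. As shown in the figure below, the layers form a pyramid. The base of the pyramid is a very large but shallow picture that only holds red, green, and blue. Convolution gradually squeezes the spatial dimensions while steadily increasing the depth, so that the depth information can express complex semantics. A classifier can then be placed at the top of the pyramid. All spatial information has been compressed into one representation, and only the information that maps the picture to its class is kept. This is the overall idea of a CNN.

The process in the figure above is as follows: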

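- First, there is a color picture with the three RGB components. Its length and width are 256*256, and its three layers correspond to the red (R), green (G), and blue (B) planes, which can also be seen as the thickness of each pixel.

- Second, the CNN compresses the length and width of the picture into a 128x128x16 block. The compression shrinks the length and width and increases the thickness.

- Third, the compression continues to 64x64x64 and then to 32x32x256, at which point the picture has become a thick, narrow block that we call the classifier here. The classifier predicts the classification result. For the MNIST handwritten digit dataset the prediction covers 10 digits, for example [0,0,0,1,0,0,0,0,0,0] means the predicted digit is 3, and the classifier corresponds to this sequence of 10 values.

- Finally, by repeatedly shrinking the length and width of the picture and increasing the thickness, the CNN ends up with a very thick classifier that performs the classification.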
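The idea of a filter sliding over regions of the picture can be sketched in a few lines of pure Python. The function below is an illustrative assumption rather than the Keras implementation: it computes the sliding-window sum that deep learning libraries call convolution (strictly speaking a cross-correlation), without padding or stride, and applies a hand-written vertical edge kernel to a hypothetical 5x5 image.

```python
def conv2d_valid(image, kernel):
    """Slide the kernel over the image and sum the element-wise products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output

# Hypothetical image: dark on the left, bright on the right.
image = [[0, 0, 0, 1, 1] for _ in range(5)]

# A simple vertical edge detector: right column minus left column.
kernel = [[-1, 0, 1],
          [-1, 0, 1],
          [-1, 0, 1]]

feature_map = conv2d_valid(image, kernel)
for row in feature_map:
    print(row)
```

The feature map is zero where the patch is uniform and non-zero where the patch straddles the dark-to-bright boundary, which is the edge information the text refers to. In a CNN the kernel values are learned instead of written by hand.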

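Neural networks have developed rapidly in recent years, and one important reason is the introduction of convolutional neural networks, which brought a leap forward in computer vision. Google has also published an excellent video tutorial on CNNs in TensorFlow, which I recommend.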

2. Model Design

This part uses Keras to build a CNN with four convolutional blocks and is the core of this article. Thanks again to the author of the referenced post.

#----------------------------------------------------------------
# Step 6: CNN model design
#----------------------------------------------------------------
from keras.models import Sequential
from keras.layers import Conv2D, MaxPooling2D, GlobalAveragePooling2D, BatchNormalization, Dropout, Dense

# Define the model
def create_model(optimizer='adam', kernel_initializer='he_normal', activation='relu'):
    # First convolutional block
    model = Sequential()
    model.add(Conv2D(filters=16, kernel_size=3, padding='same', input_shape=(32, 32, 1), kernel_initializer=kernel_initializer, activation=activation))
    model.add(BatchNormalization())
    model.add(MaxPooling2D(pool_size=2))
    model.add(Dropout(0.2))

    # Second convolutional block
    model.add(Conv2D(filters=32, kernel_size=3, padding='same', kernel_initializer=kernel_initializer, activation=activation))
    model.add(BatchNormalization())
    model.add(MaxPooling2D(pool_size=2))
    model.add(Dropout(0.2))

    # Third convolutional block
    model.add(Conv2D(filters=64, kernel_size=3, padding='same', kernel_initializer=kernel_initializer, activation=activation))
    model.add(BatchNormalization())
    model.add(MaxPooling2D(pool_size=2))
    model.add(Dropout(0.2))

    # Fourth convolutional block
    model.add(Conv2D(filters=128, kernel_size=3, padding='same', kernel_initializer=kernel_initializer, activation=activation))
    model.add(BatchNormalization())
    model.add(MaxPooling2D(pool_size=2))
    model.add(Dropout(0.2))
    model.add(GlobalAveragePooling2D())

    # Fully connected output layer with 28 classes
    model.add(Dense(28, activation='softmax'))

    # Loss function, metric and optimizer
    model.compile(loss='categorical_crossentropy', metrics=['accuracy'], optimizer=optimizer)
    return model

# Create the model
model = create_model(optimizer='Adam', kernel_initializer='uniform', activation='relu')
model.summary()

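The model contains four convolutional blocks, followed by a fully connected layer that performs the classification. Each block is set up as follows:

- A convolutional layer with 3x3 kernels and a relu activation; the first one produces 16 feature maps;

- A batch normalization layer, which mainly addresses changes in the feature distribution between training and test data. BN is usually described as being added before the activation function so that the activation's input is normalized and the effect of shifted or inflated data is reduced; note that in the code above it is applied to the output of the already activated Conv2D layer;

- A pooling layer, which downsamples the features and thereby reduces the training time needed to learn the parameters;

- Dropout with a rate of 0.2, which can be understood as randomly dropping 20% of the neurons in the layer to reduce overfitting.

In total there are hidden layers with 16, 32, 64, and 128 filters. The final output layer (28 classes) uses a softmax activation, and cross-entropy is used as the loss function. The structure of the final model is printed as follows: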

Model: "sequential_1"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

conv2d_1 (Conv2D) (None, 32, 32, 16) 160

_________________________________________________________________

batch_normalization_1 (Batch (None, 32, 32, 16) 64

_________________________________________________________________

max_pooling2d_1 (MaxPooling2 (None, 16, 16, 16) 0

_________________________________________________________________

dropout_1 (Dropout) (None, 16, 16, 16) 0

_________________________________________________________________

conv2d_2 (Conv2D) (None, 16, 16, 32) 4640

_________________________________________________________________

batch_normalization_2 (Batch (None, 16, 16, 32) 128

_________________________________________________________________

max_pooling2d_2 (MaxPooling2 (None, 8, 8, 32) 0

_________________________________________________________________

dropout_2 (Dropout) (None, 8, 8, 32) 0

_________________________________________________________________

conv2d_3 (Conv2D) (None, 8, 8, 64) 18496

_________________________________________________________________

batch_normalization_3 (Batch (None, 8, 8, 64) 256

_________________________________________________________________

max_pooling2d_3 (MaxPooling2 (None, 4, 4, 64) 0

_________________________________________________________________

dropout_3 (Dropout) (None, 4, 4, 64) 0

_________________________________________________________________

conv2d_4 (Conv2D) (None, 4, 4, 128) 73856

_________________________________________________________________

batch_normalization_4 (Batch (None, 4, 4, 128) 512

_________________________________________________________________

max_pooling2d_4 (MaxPooling2 (None, 2, 2, 128) 0

_________________________________________________________________

dropout_4 (Dropout) (None, 2, 2, 128) 0

_________________________________________________________________

global_average_pooling2d_1 ( (None, 128) 0

_________________________________________________________________

dense_1 (Dense) (None, 28) 3612

=================================================================

Total params: 101,724

Trainable params: 101,244

Non-trainable params: 480

_________________________________________________________________
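The numbers in the summary can be checked by hand. The sketch below recomputes the spatial size after each pooling step and the parameter counts, assuming the standard formulas: a 3x3 convolution has kernel_height x kernel_width x input_channels x filters weights plus one bias per filter, batch normalization keeps four values per channel (two trainable, two non-trainable), and a dense layer has inputs x units weights plus one bias per unit. The helper names are hypothetical.

```python
def conv2d_params(kernel_size, in_channels, filters):
    """Weights of a square kernel plus one bias per filter."""
    return kernel_size * kernel_size * in_channels * filters + filters

def batchnorm_params(channels):
    """gamma and beta (trainable) plus moving mean and variance (not trainable)."""
    return 4 * channels

def dense_params(inputs, units):
    """One weight per input-unit pair plus one bias per unit."""
    return inputs * units + units

filters_per_block = [16, 32, 64, 128]
in_channels, side = 1, 32          # grayscale 32x32 input
total = 0
non_trainable = 0

for filters in filters_per_block:
    total += conv2d_params(3, in_channels, filters)
    total += batchnorm_params(filters)
    non_trainable += 2 * filters   # moving mean and moving variance
    side //= 2                     # 2x2 max pooling halves height and width
    in_channels = filters

# Global average pooling leaves one value per channel for the dense layer.
total += dense_params(in_channels, 28)
trainable = total - non_trainable

print(side, total, trainable, non_trainable)
```

The results agree with the summary: a final 2x2 feature map, 101,724 parameters in total, 101,244 trainable and 480 non-trainable.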

3. Plotting the Model

In Keras we can use the keras.utils.vis_utils module to plot the model. The module provides drawing support based on graphviz.

#----------------------------------------------------------------
# Step 7: plot the model
#----------------------------------------------------------------

from keras.utils.vis_utils import plot_model

from IPython.display import Image as IPythonImage

plot_model(model, to_file="model.png", show_shapes=True)

display(IPythonImage('model.png'))

The output is shown in the figure below:

Fixing the graphviz error

AssertionError: "dot" with args ['-Tps', 'C:\Users\...\AppData\Local\Temp\tmp8tx_96_r'] returned code: 1. According to the notes collected at the time, this happens because pydot was no longer being developed and did not work with Python 3.5 and Python 3.6. The fix:

- Step 1: in the Anaconda environment, first uninstall the pydot package: pip uninstall pydot

- Step 2: install the pydotplus package: pip install pydotplus. If that does not work, install it again directly into the environment's site-packages directory:

pip install --target="c:\software\program software\anaconda3\envs\tensorflow\lib\site-packages" pydotplus

- Step 3: find utils/vis_utils.py in the keras package and replace every use of pydot with pydotplus:

import pydotplus as pydot

- Graphviz download: https://graphviz.gitlab.io/_pages/Download/windows/graphviz-2.38.msi

- pydot usage: https://blog.csdn.net/lizzy05/article/details/88529483

- Error fix reference: https://blog.csdn.net/qq_27825451/article/details/89338222
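Before editing any library files, it can help to check which pieces are actually missing. The following sketch uses only the standard library to report whether the Graphviz `dot` executable is on the PATH and whether the `pydot` or `pydotplus` packages can be imported. The function name `check_plotting_dependencies` is a hypothetical helper; this is a diagnostic under the assumption that the error comes from a missing executable or package, not a guaranteed fix.

```python
import importlib.util
import shutil

def check_plotting_dependencies():
    """Report which pieces needed for model plotting are available."""
    return {
        # The Graphviz command-line tool that renders the graph.
        "dot_executable": shutil.which("dot") is not None,
        # Python bindings that Keras may use to talk to Graphviz.
        "pydot": importlib.util.find_spec("pydot") is not None,
        "pydotplus": importlib.util.find_spec("pydotplus") is not None,
    }

status = check_plotting_dependencies()
for name, available in status.items():
    print("%-15s %s" % (name, "found" if available else "missing"))
```

If `dot_executable` is reported as missing, installing Graphviz and adding its bin directory to the PATH is the first thing to try, since no Python package can render the graph without it.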

V. Experimental Evaluation

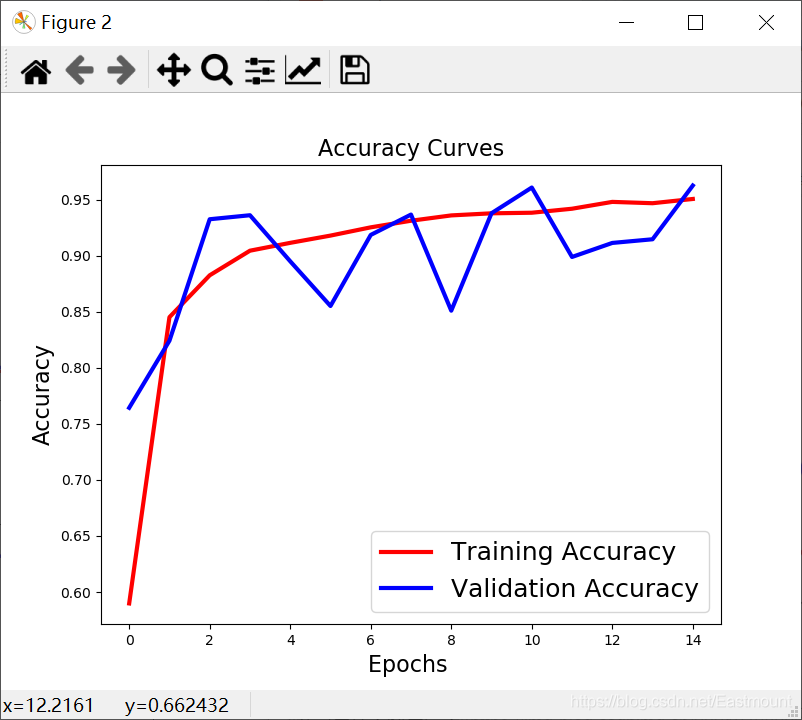

1. Model Training

Next comes model training. A checkpoint callback saves the model weights, the batch size is set to 20, and the number of epochs is set to 15.

#----------------------------------------------------------------
# Step 8: model training
#----------------------------------------------------------------
from keras.callbacks import ModelCheckpoint

# Save the weights only when the validation loss improves
checkpointer = ModelCheckpoint(filepath='weights.hdf5',
                               verbose=1,
                               save_best_only=True)

history = model.fit(training_letters_images_scaled,
                    training_letters_labels_encoded,
                    validation_data=(testing_letters_images_scaled,
                                     testing_letters_labels_encoded),
                    epochs=15,
                    batch_size=20,
                    verbose=1,
                    callbacks=[checkpointer])
print(history)

The training output is as follows:

Train on 13440 samples, validate on 3360 samples

Epoch 1/15

13440/13440 [==============================] - 21s 2ms/step - loss: 1.3694 - accuracy: 0.5759 - val_loss: 0.8664 - val_accuracy: 0.7271

Epoch 00001: val_loss improved from inf to 0.86641, saving model to weights.hdf5

Epoch 2/15

13440/13440 [==============================] - 20s 1ms/step - loss: 0.4939 - accuracy: 0.8394 - val_loss: 0.2832 - val_accuracy: 0.9125

Epoch 00002: val_loss improved from 0.86641 to 0.28324, saving model to weights.hdf5

Epoch 3/15

13440/13440 [==============================] - 20s 1ms/step - loss: 0.3559 - accuracy: 0.8861 - val_loss: 0.4726 - val_accuracy: 0.8438

Epoch 00003: val_loss did not improve from 0.28324

Epoch 4/15

13440/13440 [==============================] - 20s 1ms/step - loss: 0.2913 - accuracy: 0.9059 - val_loss: 0.2211 - val_accuracy: 0.9292

Epoch 00004: val_loss improved from 0.28324 to 0.22108, saving model to weights.hdf5

Epoch 5/15

13440/13440 [==============================] - 21s 2ms/step - loss: 0.2557 - accuracy: 0.9183 - val_loss: 0.1782 - val_accuracy: 0.9429

Epoch 00005: val_loss improved from 0.22108 to 0.17819, saving model to weights.hdf5

Epoch 6/15

13440/13440 [==============================] - 18s 1ms/step - loss: 0.2287 - accuracy: 0.9237 - val_loss: 0.1602 - val_accuracy: 0.9518

Epoch 00006: val_loss improved from 0.17819 to 0.16022, saving model to weights.hdf5

Epoch 7/15

13440/13440 [==============================] - 21s 2ms/step - loss: 0.2162 - accuracy: 0.9295 - val_loss: 0.1964 - val_accuracy: 0.9417

Epoch 00007: val_loss did not improve from 0.16022

Epoch 8/15

13440/13440 [==============================] - 20s 1ms/step - loss: 0.1913 - accuracy: 0.9365 - val_loss: 0.1751 - val_accuracy: 0.9491

Epoch 00008: val_loss did not improve from 0.16022

Epoch 9/15

13440/13440 [==============================] - 18s 1ms/step - loss: 0.1791 - accuracy: 0.9412 - val_loss: 0.1389 - val_accuracy: 0.9640

Epoch 00009: val_loss improved from 0.16022 to 0.13888, saving model to weights.hdf5

Epoch 10/15

13440/13440 [==============================] - 19s 1ms/step - loss: 0.1651 - accuracy: 0.9434 - val_loss: 0.1581 - val_accuracy: 0.9533

Epoch 00010: val_loss did not improve from 0.13888

Epoch 11/15

13440/13440 [==============================] - 19s 1ms/step - loss: 0.1705 - accuracy: 0.9426 - val_loss: 0.2523 - val_accuracy: 0.9140

Epoch 00011: val_loss did not improve from 0.13888

Epoch 12/15

13440/13440 [==============================] - 19s 1ms/step - loss: 0.1648 - accuracy: 0.9455 - val_loss: 0.3119 - val_accuracy: 0.9071

Epoch 00012: val_loss did not improve from 0.13888

Epoch 13/15

13440/13440 [==============================] - 21s 2ms/step - loss: 0.1517 - accuracy: 0.9503 - val_loss: 0.1698 - val_accuracy: 0.9497

Epoch 00013: val_loss did not improve from 0.13888

Epoch 14/15

13440/13440 [==============================] - 18s 1ms/step - loss: 0.1429 - accuracy: 0.9529 - val_loss: 0.1674 - val_accuracy: 0.9539

Epoch 00014: val_loss did not improve from 0.13888

Epoch 15/15

13440/13440 [==============================] - 19s 1ms/step - loss: 0.1363 - accuracy: 0.9527 - val_loss: 0.1502 - val_accuracy: 0.9598

Epoch 00015: val_loss did not improve from 0.13888

<keras.callbacks.callbacks.History object at 0x000001EF27F6DDA0>
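The log shows which epochs overwrite weights.hdf5. As a minimal sketch of the save_best_only rule, the pure Python below replays the val_loss values printed above (rounded to four decimals as in the log) and records every epoch that beats the best value so far. The function `epochs_that_save` is a hypothetical helper, not the Keras callback itself.

```python
# Validation losses copied from the training log above, epochs 1 to 15.
val_losses = [0.8664, 0.2832, 0.4726, 0.2211, 0.1782,
              0.1602, 0.1964, 0.1751, 0.1389, 0.1581,
              0.2523, 0.3119, 0.1698, 0.1674, 0.1502]

def epochs_that_save(losses):
    """Return the epochs where the loss improves on the best seen so far."""
    best = float("inf")
    saved = []
    for epoch, loss in enumerate(losses, start=1):
        if loss < best:            # strictly better than every earlier epoch
            best = loss
            saved.append(epoch)
    return saved, best

saved_epochs, best_loss = epochs_that_save(val_losses)
steps_per_epoch = 13440 // 20      # training samples divided by batch size

print(saved_epochs, best_loss, steps_per_epoch)
```

The weights on disk therefore come from epoch 9, not from the last epoch, which is why the evaluation later reports a test loss of about 0.1389.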

2. Plotting the Loss and Accuracy Curves

The core code for this part is as follows:

#----------------------------------------------------------------
# Step 9: plot the curves
#----------------------------------------------------------------
import matplotlib.pyplot as plt

def plot_loss_accuracy(history):
    # Loss
    plt.figure(figsize=[8,6])
    plt.plot(history.history['loss'],'r',linewidth=3.0)
    plt.plot(history.history['val_loss'],'b',linewidth=3.0)
    plt.legend(['Training loss', 'Validation Loss'],fontsize=18)
    plt.xlabel('Epochs ',fontsize=16)
    plt.ylabel('Loss',fontsize=16)
    plt.title('Loss Curves',fontsize=16)

    # Accuracy
    plt.figure(figsize=[8,6])
    plt.plot(history.history['accuracy'],'r',linewidth=3.0)
    plt.plot(history.history['val_accuracy'],'b',linewidth=3.0)
    plt.legend(['Training Accuracy', 'Validation Accuracy'],fontsize=18)
    plt.xlabel('Epochs ',fontsize=16)
    plt.ylabel('Accuracy',fontsize=16)
    plt.title('Accuracy Curves',fontsize=16)

plot_loss_accuracy(history)

The resulting curves are shown in the figure below.

3. Model Evaluation

Note that the model was already trained in the previous step, so a flag is added here to avoid training again and to load the weights.hdf5 model directly for prediction.

#----------------------------------------------------------------
# Step 8: model training + evaluation
#----------------------------------------------------------------
from keras.callbacks import ModelCheckpoint
from sklearn.metrics import classification_report
import matplotlib.pyplot as plt

# Plot the curves
def plot_loss_accuracy(history):
    # Loss
    plt.figure(figsize=[8,6])
    plt.plot(history.history['loss'],'r',linewidth=3.0)
    plt.plot(history.history['val_loss'],'b',linewidth=3.0)
    plt.legend(['Training loss', 'Validation Loss'],fontsize=18)
    plt.xlabel('Epochs ',fontsize=16)
    plt.ylabel('Loss',fontsize=16)
    plt.title('Loss Curves',fontsize=16)

    # Accuracy
    plt.figure(figsize=[8,6])
    plt.plot(history.history['accuracy'],'r',linewidth=3.0)
    plt.plot(history.history['val_accuracy'],'b',linewidth=3.0)
    plt.legend(['Training Accuracy', 'Validation Accuracy'],fontsize=18)
    plt.xlabel('Epochs ',fontsize=16)
    plt.ylabel('Accuracy',fontsize=16)
    plt.title('Accuracy Curves',fontsize=16)

# Predicted and true class indices from one-hot data
def get_predicted_classes(model, data, labels=None):
    image_predictions = model.predict(data)
    predicted_classes = np.argmax(image_predictions, axis=1)
    true_classes = np.argmax(labels, axis=1)
    return predicted_classes, true_classes, image_predictions

def get_classification_report(y_true, y_pred):
    print(classification_report(y_true, y_pred, digits=4))  # 4 decimal places

checkpointer = ModelCheckpoint(filepath='weights.hdf5',
                               verbose=1,
                               save_best_only=True)

flag = "test"
if flag=="train":
    history = model.fit(training_letters_images_scaled,
                        training_letters_labels_encoded,
                        validation_data=(testing_letters_images_scaled,
                                         testing_letters_labels_encoded),
                        epochs=15,
                        batch_size=20,
                        verbose=1,
                        callbacks=[checkpointer])
    print(history)
    plot_loss_accuracy(history)
else:
    # Load the weights with the best validation loss
    model.load_weights('weights.hdf5')
    metrics = model.evaluate(testing_letters_images_scaled,
                             testing_letters_labels_encoded,
                             verbose=1)
    print("Test Accuracy: {}".format(metrics[1]))
    print("Test Loss: {}".format(metrics[0]))

    y_pred, y_true, image_predictions = get_predicted_classes(model,
                                                              testing_letters_images_scaled,
                                                              testing_letters_labels_encoded)
    get_classification_report(y_true, y_pred)

The output is as follows:

3360/3360 [==============================] - 2s 466us/step

Test Accuracy: 0.9639880657196045

Test Loss: 0.13888128581900328

precision recall f1-score support

0 0.9756 1.0000 0.9877 120

1 0.9675 0.9917 0.9794 120

2 0.9187 0.9417 0.9300 120

3 0.9504 0.9583 0.9544 120

4 0.9600 1.0000 0.9796 120

5 0.9590 0.9750 0.9669 120

6 0.9587 0.9667 0.9627 120

7 0.9435 0.9750 0.9590 120

8 0.9134 0.9667 0.9393 120

9 0.9370 0.9917 0.9636 120

10 0.9817 0.8917 0.9345 120

11 0.9008 0.9833 0.9402 120

12 0.9832 0.9750 0.9791 120

13 0.9818 0.9000 0.9391 120

14 0.9912 0.9333 0.9614 120

15 0.9375 1.0000 0.9677 120

16 0.9912 0.9417 0.9658 120

17 0.9912 0.9417 0.9658 120

18 0.9914 0.9583 0.9746 120

19 0.9286 0.9750 0.9512 120

20 0.9739 0.9333 0.9532 120

21 0.9600 1.0000 0.9796 120

22 1.0000 1.0000 1.0000 120

23 0.9916 0.9833 0.9874 120

24 0.9737 0.9250 0.9487 120

25 0.9832 0.9750 0.9791 120

26 0.9741 0.9417 0.9576 120

27 1.0000 0.9667 0.9831 120

accuracy 0.9640 3360

macro avg 0.9650 0.9640 0.9640 3360

weighted avg 0.9650 0.9640 0.9640 3360
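To read the report, it helps to recompute one row by hand. The sketch below derives precision, recall, and F1 from raw counts in pure Python. The counts for class 0 are an inference, not something the report prints: a recall of 1.0000 with support 120 means 120 true positives and no false negatives, and a precision of 0.9756 is consistent with 3 false positives (120 / 123). The function name `precision_recall_f1` is hypothetical.

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall and F1 from confusion counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1

# Class 0: counts inferred from the report row (assumption, see above).
p, r, f = precision_recall_f1(tp=120, fp=3, fn=0)

print(round(p, 4), round(r, 4), round(f, 4))
```

Because every class has the same support of 120, the macro average and the weighted average coincide in this report.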

4. Displaying Predicted Images

#----------------------------------------------------------------
# Step 9: plot test images
#----------------------------------------------------------------
fig = plt.figure(0, figsize=(14,14))
# Pick 49 random test samples and predict on the test set
indices = np.random.randint(0, testing_letters_labels.shape[0], size=49)
y_pred = np.argmax(model.predict(testing_letters_images_scaled), axis=1)

for i, idx in enumerate(indices):
    plt.subplot(7,7,i+1)
    image_array = testing_letters_images_scaled[idx][:,:,0]
    image_array = np.flip(image_array, 0)
    image_array = rotate(image_array, -90)
    plt.imshow(image_array, cmap='gray')
    # Labels are stored as 1..28, predictions are 0..27
    plt.title("Pred: {} - Label: {}".format(y_pred[idx],
                                            testing_letters_labels[idx][0] - 1))
    plt.xticks([])
    plt.yticks([])

# Save before show(), otherwise the saved figure may be empty
plt.savefig("result.png")
plt.show()

The output is shown in the figure below:

VI. Complete Code

The final code is as follows:

# -*- coding: utf-8 -*-
"""
Created on Wed Jul 7 18:54:36 2021
@author: xiuzhang CSDN
Reference: the blog post below (recommended reading)
https://maoli.blog.csdn.net/article/details/117688738
"""
import numpy as np
import pandas as pd
from IPython.display import display
import csv
from PIL import Image
from scipy.ndimage import rotate

#----------------------------------------------------------------
# Step 1: read the data
#----------------------------------------------------------------
# Training images and labels
letters_training_images_file_path = "dataset/csvTrainImages 13440x1024.csv"
letters_training_labels_file_path = "dataset/csvTrainLabel 13440x1.csv"

# Test images and labels
letters_testing_images_file_path = "dataset/csvTestImages 3360x1024.csv"
letters_testing_labels_file_path = "dataset/csvTestLabel 3360x1.csv"

# Load the data (the CSV files have no header row)
training_letters_images = pd.read_csv(letters_training_images_file_path, header=None)
training_letters_labels = pd.read_csv(letters_training_labels_file_path, header=None)
testing_letters_images = pd.read_csv(letters_testing_images_file_path, header=None)
testing_letters_labels = pd.read_csv(letters_testing_labels_file_path, header=None)

print("%d training Arabic letter images of 32x32 pixels" % training_letters_images.shape[0])
print("%d test Arabic letter images of 32x32 pixels" % testing_letters_images.shape[0])
print(training_letters_images.head())
print(np.unique(training_letters_labels))

#----------------------------------------------------------------
# Step 2: convert numeric values into image features
#----------------------------------------------------------------
# The original dataset is mirrored: flip it with np.flip,
# then turn it with rotate to get a properly oriented image
def convert_values_to_image(image_values, display=False):
    # Reshape to 32x32
    image_array = np.asarray(image_values)
    image_array = image_array.reshape(32,32).astype('uint8')
    # Flip + rotate
    image_array = np.flip(image_array, 0)
    image_array = rotate(image_array, -90)
    # Show the image
    new_image = Image.fromarray(image_array)
    if display:
        new_image.show()
    return new_image

convert_values_to_image(training_letters_images.loc[0], True)

#----------------------------------------------------------------
# Step 3: image data scaling
#----------------------------------------------------------------
training_letters_images_scaled = training_letters_images.values.astype('float32')/255
training_letters_labels = training_letters_labels.values.astype('int32')
testing_letters_images_scaled = testing_letters_images.values.astype('float32')/255
testing_letters_labels = testing_letters_labels.values.astype('int32')

print("Training images of letters after scaling")
print(training_letters_images_scaled.shape)
print(training_letters_images_scaled[0:5])

#----------------------------------------------------------------
# Step 4: one-hot encoding of the class labels
#----------------------------------------------------------------
import keras
from keras.utils import to_categorical

number_of_classes = 28
# Labels are stored as 1..28, so subtract 1 to get indices 0..27
training_letters_labels_encoded = to_categorical(training_letters_labels-1,
                                                 num_classes=number_of_classes)
testing_letters_labels_encoded = to_categorical(testing_letters_labels-1,
                                                num_classes=number_of_classes)
print(training_letters_labels)
print(training_letters_labels_encoded)
print(training_letters_images_scaled.shape)
# (13440, 1024)

#----------------------------------------------------------------
# Step 5: reshaping
#----------------------------------------------------------------
# Reshape each sample to 32x32x1
training_letters_images_scaled = training_letters_images_scaled.reshape([-1, 32, 32, 1])
testing_letters_images_scaled = testing_letters_images_scaled.reshape([-1, 32, 32, 1])
print(training_letters_images_scaled.shape,
      training_letters_labels_encoded.shape,
      testing_letters_images_scaled.shape,
      testing_letters_labels_encoded.shape)
# (13440, 32, 32, 1) (13440, 28) (3360, 32, 32, 1) (3360, 28)

#----------------------------------------------------------------
# Step 6: CNN model design
#----------------------------------------------------------------
from keras.models import Sequential
from keras.layers import Conv2D, MaxPooling2D, GlobalAveragePooling2D, BatchNormalization, Dropout, Dense

# Define the model
def create_model(optimizer='adam', kernel_initializer='he_normal', activation='relu'):
    # First convolutional block
    model = Sequential()
    model.add(Conv2D(filters=16, kernel_size=3, padding='same', input_shape=(32, 32, 1),
                     kernel_initializer=kernel_initializer, activation=activation))
    model.add(BatchNormalization())
    model.add(MaxPooling2D(pool_size=2))
    model.add(Dropout(0.2))

    # Second convolutional block
    model.add(Conv2D(filters=32, kernel_size=3, padding='same',
                     kernel_initializer=kernel_initializer, activation=activation))
    model.add(BatchNormalization())
    model.add(MaxPooling2D(pool_size=2))
    model.add(Dropout(0.2))

    # Third convolutional block
    model.add(Conv2D(filters=64, kernel_size=3, padding='same',
                     kernel_initializer=kernel_initializer, activation=activation))
    model.add(BatchNormalization())
    model.add(MaxPooling2D(pool_size=2))
    model.add(Dropout(0.2))

    # Fourth convolutional block
    model.add(Conv2D(filters=128, kernel_size=3, padding='same',
                     kernel_initializer=kernel_initializer, activation=activation))
    model.add(BatchNormalization())
    model.add(MaxPooling2D(pool_size=2))
    model.add(Dropout(0.2))
    model.add(GlobalAveragePooling2D())

    # Fully connected layer: 28 output classes
    model.add(Dense(28, activation='softmax'))

    # Define the loss function
    model.compile(loss='categorical_crossentropy', metrics=['accuracy'], optimizer=optimizer)
    return model

# Create the model
model = create_model(optimizer='Adam', kernel_initializer='uniform', activation='relu')
model.summary()

#----------------------------------------------------------------
# Step 7: Plot the model
#----------------------------------------------------------------
from keras.utils.vis_utils import plot_model
from IPython.display import Image as IPythonImage, display

plot_model(model, to_file="model.png", show_shapes=True)
display(IPythonImage('model.png'))

#----------------------------------------------------------------
# Step 8: Model training + visualization
#----------------------------------------------------------------
from keras.callbacks import ModelCheckpoint
from sklearn.metrics import classification_report
import matplotlib.pyplot as plt

# Plot the loss and accuracy curves
def plot_loss_accuracy(history):
    # Loss
    plt.figure(figsize=[8,6])
    plt.plot(history.history['loss'],'r',linewidth=3.0)
    plt.plot(history.history['val_loss'],'b',linewidth=3.0)
    plt.legend(['Training loss', 'Validation Loss'],fontsize=18)
    plt.xlabel('Epochs ',fontsize=16)
    plt.ylabel('Loss',fontsize=16)
    plt.title('Loss Curves',fontsize=16)

    # Accuracy
    plt.figure(figsize=[8,6])
    plt.plot(history.history['accuracy'],'r',linewidth=3.0)
    plt.plot(history.history['val_accuracy'],'b',linewidth=3.0)
    plt.legend(['Training Accuracy', 'Validation Accuracy'],fontsize=18)
    plt.xlabel('Epochs ',fontsize=16)
    plt.ylabel('Accuracy',fontsize=16)
    plt.title('Accuracy Curves',fontsize=16)

# Get the predicted classes, the true classes, and the raw probabilities
def get_predicted_classes(model, data, labels=None):
    image_predictions = model.predict(data)
    predicted_classes = np.argmax(image_predictions, axis=1)
    # Labels are one-hot encoded; skip decoding when none are given
    true_classes = np.argmax(labels, axis=1) if labels is not None else None
    return predicted_classes, true_classes, image_predictions

def get_classification_report(y_true, y_pred):
    print(classification_report(y_true, y_pred, digits=4))  # 4 decimal places

# Keep only the checkpoint with the best validation loss
checkpointer = ModelCheckpoint(filepath='weights.hdf5',
                               verbose=1,
                               save_best_only=True)

flag = "test"
if flag=="train":
    history = model.fit(training_letters_images_scaled,
                        training_letters_labels_encoded,
                        validation_data=(testing_letters_images_scaled,
                                         testing_letters_labels_encoded),
                        epochs=15,
                        batch_size=20,
                        verbose=1,
                        callbacks=[checkpointer])
    print(history)
    plot_loss_accuracy(history)
else:
    # Load the weights with the best validation loss
    model.load_weights('weights.hdf5')
    metrics = model.evaluate(testing_letters_images_scaled,
                             testing_letters_labels_encoded,
                             verbose=1)
    print("Test Accuracy: {}".format(metrics[1]))
    print("Test Loss: {}".format(metrics[0]))
    y_pred, y_true, image_predictions = get_predicted_classes(model,
                                                              testing_letters_images_scaled,
                                                              testing_letters_labels_encoded)
    get_classification_report(y_true, y_pred)

#----------------------------------------------------------------
# Step 9: Plot test images
#----------------------------------------------------------------
fig = plt.figure(0, figsize=(14,14))
indices = np.random.randint(0, testing_letters_labels.shape[0], size=49)
# Predict on the test set so that images, predictions, and labels share the same indices
y_pred = np.argmax(model.predict(testing_letters_images_scaled), axis=1)
for i, idx in enumerate(indices):
    plt.subplot(7,7,i+1)
    image_array = testing_letters_images_scaled[idx][:,:,0]
    # Flip and rotate so the letter is displayed upright
    image_array = np.flip(image_array, 0)
    image_array = rotate(image_array, -90)
    plt.imshow(image_array, cmap='gray')
    plt.title("Pred: {} - Label: {}".format(y_pred[idx],
                                            (testing_letters_labels[idx] -1)))
    plt.xticks([])
    plt.yticks([])
# Save before show(); otherwise the saved figure may be blank
plt.savefig("result.png")
plt.show()
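As a rough cross-check of the model defined in the listing above, the sketch below traces the feature-map size and the parameter count of each convolutional block in plain Python. It assumes 3x3 kernels, `padding='same'`, 2x2 pooling, and bias terms enabled, as in `create_model`; the helper name `trace_cnn` is hypothetical and not part of the original code.

```python
# Sketch: trace output sizes and parameter counts for the four
# Conv2D -> BatchNormalization -> MaxPooling2D blocks, followed by
# global average pooling and a 28-way Dense layer.
# trace_cnn is a hypothetical helper, not a Keras function.

def trace_cnn(input_size=32, in_channels=1, filters=(16, 32, 64, 128),
              kernel_size=3, num_classes=28):
    rows = []
    size, channels, total = input_size, in_channels, 0
    for f in filters:
        # Each filter has kernel_size*kernel_size*channels weights plus one bias
        conv_params = (kernel_size * kernel_size * channels + 1) * f
        # BatchNormalization stores gamma, beta, moving mean, moving variance
        bn_params = 4 * f
        # A 'same' convolution keeps the spatial size; 2x2 pooling halves it
        size //= 2
        channels = f
        total += conv_params + bn_params
        rows.append((f, size, conv_params, bn_params))
    # Global average pooling leaves one value per channel for the Dense layer
    dense_params = (channels + 1) * num_classes
    total += dense_params
    return rows, dense_params, total

rows, dense_params, total = trace_cnn()
for f, size, conv_p, bn_p in rows:
    print(f"filters={f:3d} output={size}x{size}x{f} conv={conv_p} bn={bn_p}")
print("dense:", dense_params, "total:", total)
```

Under these assumptions the spatial size shrinks 32 → 16 → 8 → 4 → 2, and the total comes to 101724 parameters, which can be compared against the output of `model.summary()`.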

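VII. Summary

That wraps up this article, and I hope it helps you. Of course, heat maps and comparisons between different algorithms could still be added, and later posts will try to share more cutting-edge image algorithms.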

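- I. Dataset description
- II. Data loading
- III. Data preprocessing
  1. Converting numeric values to images
  2. Image scaling
  3. One-hot encoding of labels
  4. Reshaping
- IV. CNN model construction
  1. Convolutional neural networks
  2. Model design
  3. Model plotting
- V. Experimental evaluation
  1. Model training
  2. Loss and accuracy curves
  3. Model evaluation
  4. Plotting predicted images
- VI. Complete code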
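The preprocessing steps listed above (scaling, one-hot encoding, reshaping) can be sketched with NumPy alone. This is a minimal illustration on made-up data, not the article's pipeline: `one_hot` is a hypothetical stand-in for Keras's `to_categorical`, the toy arrays are invented, and dividing by 255 is an assumption about how the `*_scaled` arrays were produced.

```python
import numpy as np

def one_hot(labels, num_classes):
    """One-hot encode 0-based integer labels (hypothetical stand-in for to_categorical)."""
    labels = np.asarray(labels).ravel()
    encoded = np.zeros((labels.size, num_classes), dtype="float32")
    encoded[np.arange(labels.size), labels] = 1.0
    return encoded

# Made-up toy data: 3 flattened 32x32 images with labels in 1..28
images = np.random.randint(0, 256, size=(3, 1024)).astype("float32")
labels = np.array([[1], [14], [28]])

images_scaled = images / 255.0              # assumed scaling to [0, 1]
labels_encoded = one_hot(labels - 1, 28)    # shift 1..28 to 0..27 before encoding
images_reshaped = images_scaled.reshape([-1, 32, 32, 1])  # add the channel axis

print(images_reshaped.shape, labels_encoded.shape)
print(labels_encoded.argmax(axis=1))
```

The `labels - 1` shift matters because the dataset's labels start at 1, while one-hot encoding expects class indices starting at 0; taking `argmax` of the encoded rows recovers those 0-based indices.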

import seaborn as sns
from sklearn import metrics

# Class names 1..28, matching the original label values
Labname = list(range(1, 29))

# y_pre_test holds the predicted class indices (0-27) for the test set
print(y_pre_test)
y_pre_test = [num+1 for num in y_pre_test]  # shift back to labels 1..28
print(np.argmax(testing_letters_labels_encoded, axis=1))

# Rows are true labels, columns are predicted labels
confm = metrics.confusion_matrix(testing_letters_labels,
                                 y_pre_test)
plt.figure(figsize=(10,10))
# Transpose so that the x axis shows true labels and the y axis shows predictions
sns.heatmap(confm.T, square=True, annot=True,
            fmt='d', cbar=True, linewidths=.6,
            cmap="YlGnBu")
plt.xlabel('True label',size = 12)
plt.ylabel('Predicted label', size = 12)
plt.xticks(np.arange(28)+0.5, Labname, size = 10)
plt.yticks(np.arange(28)+0.5, Labname, size = 10)
plt.savefig('heatmap.png')
plt.show()
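To make the heat map above easier to read, here is a small NumPy sketch of how a confusion matrix is built and how per-class recall falls out of it. The function `confusion_matrix_np` and the toy labels are hypothetical and only mirror the row/column convention of `sklearn.metrics.confusion_matrix` (rows are true classes, columns are predictions).

```python
import numpy as np

def confusion_matrix_np(y_true, y_pred, num_classes):
    """Count (true, predicted) pairs; rows are true classes, columns are predictions."""
    cm = np.zeros((num_classes, num_classes), dtype=int)
    for t, p in zip(y_true, y_pred):
        cm[t, p] += 1
    return cm

# Made-up toy labels with 3 classes
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]

cm = confusion_matrix_np(y_true, y_pred, 3)
# Recall per class: correct predictions divided by the number of true samples
recall = cm.diagonal() / cm.sum(axis=1)

print(cm)
print(recall)
```

Off-diagonal cells show which letters are confused with each other, so a row with low recall points directly at the classes that need more training data or a closer look.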

Finally, I hope this article helps you. Keep going!

(By: Eastmount, 2021-10-03, written at night, http://blog.csdn.net/eastmount/ )