Tudui's PyTorch study notes

DAY 2 P15-P22 Layer structure of neural networks

1. The nn.Module skeleton of a neural network

import torch
from torch import nn


# Define a class that inherits from nn.Module
class Tudui(nn.Module):
    def __init__(self):
        # super() stands in for the parent class: super().method() calls Parent.method()
        # Initialize the parent class
        super().__init__()
        # Further members, mainly the layers of the network, can be defined after this

    # Define the forward pass
    # It takes one argument, input
    def forward(self, input):
        output = input + 1
        # Return the output
        return output


tudui = Tudui()
x = torch.tensor(1.0)
output = tudui(x)
print(output)
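The call `tudui(x)` above never names `forward` explicitly. The short sketch below shows why that works: calling a module goes through `nn.Module.__call__`, which in turn runs `forward`. The class name `AddOne` is hypothetical and only mirrors `Tudui`.

```python
import torch
from torch import nn


class AddOne(nn.Module):  # hypothetical name, same behavior as Tudui above
    def __init__(self):
        super().__init__()

    def forward(self, input):
        return input + 1


model = AddOne()
x = torch.tensor(1.0)

# Calling the module dispatches to forward() through nn.Module.__call__
via_call = model(x)
# Calling forward() directly gives the same value, but skips any registered hooks
via_forward = model.forward(x)

print(via_call)                            # tensor(2.)
print(torch.equal(via_call, via_forward))  # True
```

In normal use, prefer `model(x)` over `model.forward(x)`, since only the former runs hooks that other tools may have registered.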

2. Convolution: a concrete implementation

import torch
import torch.nn.functional as F

# A 2-D matrix is written with two levels of square brackets:
# each inner pair of brackets is one row, and there are 5 rows here
input = torch.tensor([[1, 2, 0, 3, 1],
                      [0, 1, 2, 3, 1],
                      [1, 2, 1, 0, 0],
                      [5, 2, 3, 1, 1],
                      [2, 1, 0, 1, 1]])

# kernel is the convolution kernel
kernel = torch.tensor([[1, 2, 1],
                       [0, 1, 0],
                       [2, 1, 0]])

# conv2d requires input of shape (minibatch, in_channels, iH, iW)
input = torch.reshape(input, (1, 1, 5, 5))
kernel = torch.reshape(kernel, (1, 1, 3, 3))

print(input.shape)
print(kernel.shape)

# conv2d performs a 2-D convolution
# Parameters:
# input: input tensor of shape (minibatch, in_channels, iH, iW)
# weight: filters of shape (out_channels, in_channels/groups, kH, kW), i.e. the weight matrix
# bias: optional bias tensor of shape (out_channels). Default: None
# stride: the stride of the convolving kernel. Can be a single number or a tuple (sH, sW). Default: 1.
#         This is how far the kernel moves at each step
# padding: implicit paddings on both sides of the input. Can be a string {'valid', 'same'},
#          a single number or a tuple (padH, padW). padding=1 adds a ring of zeros around the input

output = F.conv2d(input, kernel, stride=1)
print(output)

output2 = F.conv2d(input, kernel, stride=2)
print(output2)

output3 = F.conv2d(input, kernel, stride=1, padding=1)
print(output3)
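To make the role of `stride` and `padding` concrete, here is a minimal sketch that slides the kernel over the matrix by hand and compares the result with `F.conv2d`. The helper `conv2d_naive` is a hypothetical name introduced for illustration, and the tensors use `float32` rather than the integer tensors above, which is an assumption made to keep the comparison simple.

```python
import torch
import torch.nn.functional as F


def conv2d_naive(image, kernel, stride=1, padding=0):
    """Slide `kernel` over a 2-D `image`, summing element-wise products."""
    if padding > 0:
        # Add `padding` zeros on the left, right, top and bottom
        image = F.pad(image, (padding, padding, padding, padding))
    k_h, k_w = kernel.shape
    out_h = (image.shape[0] - k_h) // stride + 1
    out_w = (image.shape[1] - k_w) // stride + 1
    out = torch.zeros((out_h, out_w), dtype=image.dtype)
    for i in range(out_h):
        for j in range(out_w):
            top, left = i * stride, j * stride
            window = image[top:top + k_h, left:left + k_w]
            out[i, j] = (window * kernel).sum()
    return out


image = torch.tensor([[1, 2, 0, 3, 1],
                      [0, 1, 2, 3, 1],
                      [1, 2, 1, 0, 0],
                      [5, 2, 3, 1, 1],
                      [2, 1, 0, 1, 1]], dtype=torch.float32)
kernel = torch.tensor([[1, 2, 1],
                       [0, 1, 0],
                       [2, 1, 0]], dtype=torch.float32)

for stride, padding in [(1, 0), (2, 0), (1, 1)]:
    by_hand = conv2d_naive(image, kernel, stride=stride, padding=padding)
    reference = F.conv2d(image.reshape(1, 1, 5, 5), kernel.reshape(1, 1, 3, 3),
                         stride=stride, padding=padding)[0, 0]
    print(stride, padding, torch.allclose(by_hand, reference))

# stride=1, padding=0 gives [[10, 12, 12], [18, 16, 16], [13, 9, 3]]
print(conv2d_naive(image, kernel))
```

Note that the kernel is not flipped: like `F.conv2d`, the sketch computes a cross-correlation, which is what deep learning libraries usually call convolution.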

3. The convolution layer Conv2d provided by PyTorch

import torch
import torchvision
from torch import nn
from torch.nn import Conv2d
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

# Download and load the dataset
dataset = torchvision.datasets.CIFAR10("dataseset_CIFAR10", train=False,
                                       transform=torchvision.transforms.ToTensor(), download=True)
dataloader = DataLoader(dataset, batch_size=64)


class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        # 3 channels before the convolution, 6 channels after it
        self.conv1 = Conv2d(in_channels=3, out_channels=6, kernel_size=3, stride=1, padding=0)

    def forward(self, x):
        x = self.conv1(x)
        return x


tudui = Tudui()

# Visualize with TensorBoard
writer = SummaryWriter("logs")
step = 0
for data in dataloader:
    imgs, targets = data
    output = tudui(imgs)
    print(imgs.shape)
    print(output.shape)
    # imgs: torch.Size([64, 3, 32, 32])
    # Use writer.add_image() to show a single image
    # Use writer.add_images() to show a batch of images
    writer.add_images("input", imgs, step)
    # TensorBoard cannot display 6-channel images, so fold the extra channels into the batch dimension:
    # torch.Size([64, 6, 30, 30]) ---> torch.Size([128, 3, 30, 30])
    # When one dimension passed to reshape is unknown, set it to -1 and it is inferred
    output = torch.reshape(output, (-1, 3, 30, 30))
    writer.add_images("output", output, step)
    step = step + 1

writer.close()
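The shapes in the loop above can be checked without downloading CIFAR10. The sketch below uses a random tensor as a stand-in for one batch (an assumption for illustration) and shows that folding 6 channels into 3 doubles the batch dimension.

```python
import torch
from torch.nn import Conv2d

# Same layer configuration as self.conv1 above
conv = Conv2d(in_channels=3, out_channels=6, kernel_size=3, stride=1, padding=0)

# Random stand-in for one batch of 64 CIFAR10 images
imgs = torch.rand(64, 3, 32, 32)

output = conv(imgs)
print(output.shape)   # torch.Size([64, 6, 30, 30])

# -1 lets reshape infer the batch dimension: 64 * 6 / 3 = 128
folded = torch.reshape(output, (-1, 3, 30, 30))
print(folded.shape)   # torch.Size([128, 3, 30, 30])
```

The reshape only exists to make the feature maps viewable as images; it mixes channels from the same input image into separate "images" and is not something a training pipeline would do.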

Channels in detail

4. Activation layers

import torch
import torchvision
from torch import nn
from torch.nn import ReLU, Sigmoid
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

# Non-linear transforms introduce non-linearity into the network, so the trained model
# can fit many kinds of curves and features and generalizes better
input = torch.tensor([[1, -0.5],
                      [-1, 3]])

# In reshape, -1 is the batch size (inferred automatically), 1 is the number of channels,
# and 2, 2 is the size of the matrix
input = torch.reshape(input, (-1, 1, 2, 2))
print(input.shape)

dataset = torchvision.datasets.CIFAR10("dataseset_CIFAR10", train=False,
                                       transform=torchvision.transforms.ToTensor(), download=False)
dataloader = DataLoader(dataset, batch_size=64)


class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        # Define the activation layers
        self.relu1 = ReLU()
        self.sigmoid1 = Sigmoid()

    def forward(self, input):
        # Apply the activation layer
        output = self.sigmoid1(input)
        return output


tudui = Tudui()

writer = SummaryWriter("logs")
step = 0
for data in dataloader:
    imgs, targets = data
    output = tudui(imgs)
    writer.add_images("before", imgs, step)
    writer.add_images("Sigmoid", output, step)
    step = step + 1

writer.close()

Commonly used activations are Sigmoid and ReLU.
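As a small illustration of the two activations, the sketch below applies both to the 2x2 matrix defined at the top of this section. ReLU zeroes the negative entries, while Sigmoid squashes every entry into the open interval (0, 1).

```python
import torch
from torch.nn import ReLU, Sigmoid

x = torch.tensor([[1.0, -0.5],
                  [-1.0, 3.0]])

relu_out = ReLU()(x)        # max(0, x) element-wise
sigmoid_out = Sigmoid()(x)  # 1 / (1 + exp(-x)) element-wise

print(relu_out)     # negatives become 0: [[1, 0], [0, 3]]
print(sigmoid_out)  # roughly [[0.7311, 0.3775], [0.2689, 0.9526]]
```

On image tensors produced by `ToTensor()` all values are already in [0, 1], so ReLU leaves them unchanged; that is presumably why the TensorBoard example above uses Sigmoid to get a visible effect.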

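5. Pooling layers

A pooling layer discards less important pixels, which reduces the amount of computation needed for training.

Max pooling keeps the largest value inside each window covered by the pooling kernel.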

import torch
import torchvision
from torch import nn
from torch.nn import MaxPool2d
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

dataset = torchvision.datasets.CIFAR10("dataseset_CIFAR10", train=False,
                                       transform=torchvision.transforms.ToTensor(), download=True)
dataloader = DataLoader(dataset, batch_size=64)

# input = torch.tensor([[1, 2, 0, 3, 1],
#                       [0, 1, 2, 3, 1],
#                       [1, 2, 1, 0, 0],
#                       [5, 2, 3, 1, 1],
#                       [2, 1, 0, 1, 1]], dtype=torch.float32)
#
# input = torch.reshape(input, (-1, 1, 5, 5))
# print(input.shape)


class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        # ceil_mode=True keeps windows that only partly overlap the input
        self.maxpool1 = MaxPool2d(kernel_size=3, ceil_mode=True)

    def forward(self, input):
        output = self.maxpool1(input)
        return output


tudui = Tudui()
# output = tudui(input)
# print(output)

writer = SummaryWriter("logs")
step = 0
for data in dataloader:
    imgs, targets = data
    writer.add_images("input", imgs, step)
    output = tudui(imgs)
    writer.add_images("output", output, step)
    step = step + 1

writer.close()
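The commented-out 5x5 matrix in the code above makes a convenient worked example of max pooling. The sketch below runs it with `ceil_mode` both on and off. The default stride of `MaxPool2d` equals `kernel_size`, so a 3x3 window moves 3 pixels at a time, and `ceil_mode` decides whether the incomplete windows at the right and bottom edges are kept.

```python
import torch
from torch.nn import MaxPool2d

x = torch.tensor([[1, 2, 0, 3, 1],
                  [0, 1, 2, 3, 1],
                  [1, 2, 1, 0, 0],
                  [5, 2, 3, 1, 1],
                  [2, 1, 0, 1, 1]], dtype=torch.float32)
x = torch.reshape(x, (-1, 1, 5, 5))

# ceil_mode=True: partial windows at the edges are kept -> 2x2 output
out_ceil = MaxPool2d(kernel_size=3, ceil_mode=True)(x)
# ceil_mode=False: partial windows are dropped -> 1x1 output
out_floor = MaxPool2d(kernel_size=3, ceil_mode=False)(x)

print(out_ceil[0, 0])   # [[2, 3], [5, 1]]
print(out_floor[0, 0])  # [[2]]

# A random stand-in for a CIFAR10 batch shrinks from 32x32 to 11x11
batch = torch.rand(64, 3, 32, 32)
print(MaxPool2d(kernel_size=3, ceil_mode=True)(batch).shape)  # torch.Size([64, 3, 11, 11])
```

Pooling leaves the channel count untouched, which is why the pooled CIFAR10 batches can be written to TensorBoard without the reshape needed in the Conv2d section.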

6. Linear layers, also called fully connected layers

import torch
import torchvision
from torch import nn
from torch.nn import Linear
from torch.utils.data import DataLoader

dataset = torchvision.datasets.CIFAR10("dataseset_CIFAR10", train=False,
                                       transform=torchvision.transforms.ToTensor(), download=False)
# drop_last=True discards the final, smaller batch (16 images), which would
# otherwise flatten to 49152 values and not match the 196608 inputs of the Linear layer
dataloader = DataLoader(dataset, batch_size=64, drop_last=True)


class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        # 196608 input features (64 * 3 * 32 * 32) are mapped by the Linear layer to 10 output features
        self.linear1 = Linear(196608, 10)

    def forward(self, input):
        output = self.linear1(input)
        return output


tudui = Tudui()

for data in dataloader:
    imgs, targets = data
    print(imgs.shape)
    # input = torch.reshape(imgs, (1, 1, 1, -1))
    # torch.flatten() serves the same purpose as the commented-out line above: it spreads
    # all rows and columns out into a single row, here a 1-D tensor of shape [196608],
    # which is the kind of input a linear layer needs
    input = torch.flatten(imgs)
    print(input.shape)
    output = tudui(input)
    print(output.shape)
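The loop above flattens the whole batch into one vector, so the 64 images are treated as a single sample. As a hedged alternative sketch, not the approach used in the code above, `torch.flatten(imgs, start_dim=1)` or `nn.Flatten()` keeps the batch dimension and flattens each image separately. The random tensor stands in for one CIFAR10 batch.

```python
import torch
from torch import nn

imgs = torch.rand(64, 3, 32, 32)  # stand-in for one CIFAR10 batch

# Flatten everything, as in the loop above: one vector for the whole batch
flat_all = torch.flatten(imgs)
print(flat_all.shape)        # torch.Size([196608])

# Flatten each image on its own: the batch dimension is kept
flat_per_image = torch.flatten(imgs, start_dim=1)
print(flat_per_image.shape)  # torch.Size([64, 3072])

# nn.Flatten() is the layer form of the same per-image operation
print(torch.equal(nn.Flatten()(imgs), flat_per_image))  # True

# With per-image flattening, the linear layer needs 3 * 32 * 32 = 3072 inputs
linear = nn.Linear(3072, 10)
print(linear(flat_per_image).shape)  # torch.Size([64, 10])
```

With the per-image form the layer size no longer depends on the batch size, so a smaller final batch does not cause a shape mismatch.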

Fully connected layer diagram

flatten: flattening a tensor

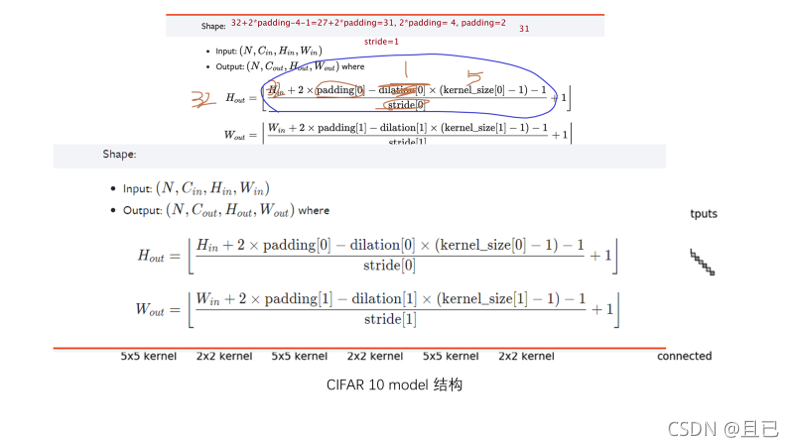

Computing padding, stride, and related values
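For reference, the output size of a convolution along one dimension, with dilation left at 1, is floor((size + 2 * padding - kernel_size) / stride) + 1. The sketch below wraps that formula in a helper and checks it against the layer settings used in these notes. The function name `conv_output_size` is hypothetical.

```python
def conv_output_size(size, kernel_size, stride=1, padding=0):
    """Output length along one dimension of a convolution (dilation = 1)."""
    return (size + 2 * padding - kernel_size) // stride + 1


# Conv2d(3, 6, kernel_size=3, stride=1, padding=0) on 32x32 images
print(conv_output_size(32, kernel_size=3))             # 30

# Conv2d(3, 32, 5, stride=1, padding=2) keeps the 32x32 size
print(conv_output_size(32, kernel_size=5, padding=2))  # 32

# The 5x5 matrix with a 3x3 kernel
print(conv_output_size(5, kernel_size=3, stride=1))             # 3
print(conv_output_size(5, kernel_size=3, stride=2))             # 2
print(conv_output_size(5, kernel_size=3, stride=1, padding=1))  # 5
```

Read in reverse, the same formula gives the padding needed to keep the size unchanged at stride 1: padding = (kernel_size - 1) / 2, which is 2 for a 5x5 kernel.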

7. Using Sequential and building a simple model

import torch
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Linear, Flatten, Sequential
from torch.utils.tensorboard import SummaryWriter


class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        # self.conv1 = Conv2d(3, 32, 5, stride=1, padding=2)
        # self.maxpool1 = MaxPool2d(2)
        # self.conv2 = Conv2d(32, 32, 5, padding=2)
        # self.maxpool2 = MaxPool2d(2)
        # self.conv3 = Conv2d(32, 64, 5, padding=2)
        # self.maxpool3 = MaxPool2d(2)
        # # Input layer to hidden layer
        # self.linear1 = Linear(1024, 64)
        # # Hidden layer to output layer
        # self.linear2 = Linear(64, 10)
        # self.flatten = Flatten()

        # Define a Sequential that holds all of the layers above as model1, for use in forward
        # It does the same job as the commented-out code above
        self.model1 = Sequential(
            Conv2d(3, 32, 5, stride=1, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),
            Linear(64, 10),
        )

    def forward(self, x):
        # x = self.conv1(x)
        # x = self.maxpool1(x)
        # x = self.conv2(x)
        # x = self.maxpool2(x)
        # x = self.conv3(x)
        # x = self.maxpool3(x)
        # x = self.flatten(x)
        # x = self.linear1(x)
        # x = self.linear2(x)

        # The single line below does the same job as the commented-out code above
        x = self.model1(x)
        return x


tudui = Tudui()
print(tudui)

input = torch.ones((64, 3, 32, 32))
output = tudui(input)
print(output.shape)

writer = SummaryWriter("logs")
writer.add_graph(tudui, input)
writer.close()
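To see where the 1024 in `Linear(1024, 64)` comes from, the sketch below pushes a dummy batch through the same stack one layer at a time and records the shape after each step. Iterating over a `Sequential` yields its layers in order. The variable names are illustrative only.

```python
import torch
from torch.nn import Conv2d, MaxPool2d, Linear, Flatten, Sequential

model = Sequential(
    Conv2d(3, 32, 5, stride=1, padding=2),
    MaxPool2d(2),
    Conv2d(32, 32, 5, padding=2),
    MaxPool2d(2),
    Conv2d(32, 64, 5, padding=2),
    MaxPool2d(2),
    Flatten(),
    Linear(1024, 64),
    Linear(64, 10),
)

x = torch.ones((64, 3, 32, 32))
shapes = []
for layer in model:
    x = layer(x)
    shapes.append((type(layer).__name__, tuple(x.shape)))
    print(f"{type(layer).__name__:<10} {tuple(x.shape)}")

# After the third pooling layer the tensor is (64, 64, 4, 4),
# so Flatten produces 64 * 4 * 4 = 1024 features per image
```

Each convolution keeps the spatial size because padding=2 matches the 5x5 kernel, and each `MaxPool2d(2)` halves it: 32, 16, 8, then 4.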