Train the model with different optimizers and plot the loss curve for each one.

Code using the SGD optimizer:

import torch
import matplotlib.pyplot as plt

# Prepare the dataset
x_data = torch.tensor([[1.0], [2.0], [3.0]])
y_data = torch.tensor([[2.0], [4.0], [6.0]])

# Design the model
class LinearModel(torch.nn.Module):
    def __init__(self):
        super(LinearModel, self).__init__()
        self.linear = torch.nn.Linear(1, 1)

    def forward(self, x):
        y_pred = self.linear(x)
        return y_pred

model = LinearModel()

# Construct the loss function and the optimizer
# reduction='sum' replaces the deprecated size_average=False
criterion = torch.nn.MSELoss(reduction='sum')
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

epoch_list = []
loss_list = []

# Training cycle (forward, backward, update)
for epoch in range(100):
    y_pred = model(x_data)
    loss = criterion(y_pred, y_data)
    print(epoch, loss.item())
    epoch_list.append(epoch)
    loss_list.append(loss.item())

    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

print('w=', model.linear.weight.item())
print('b=', model.linear.bias.item())

# Predict on an unseen input
x_test = torch.tensor([[4.0]])
y_test = model(x_test)
print('y_pred =', y_test.data)

# Plot the loss against the epoch
fig = plt.figure()
sub = fig.add_subplot(111)
sub.plot(epoch_list, loss_list)
sub.set_xlabel('epoch')
sub.set_ylabel('loss')
fig.suptitle('SGD')
plt.grid()
plt.show()

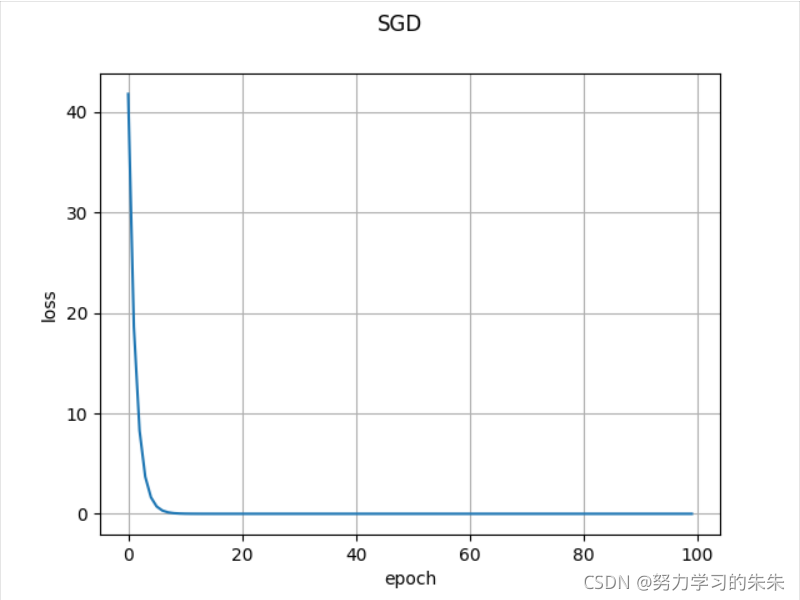

Loss curve with the SGD optimizer:

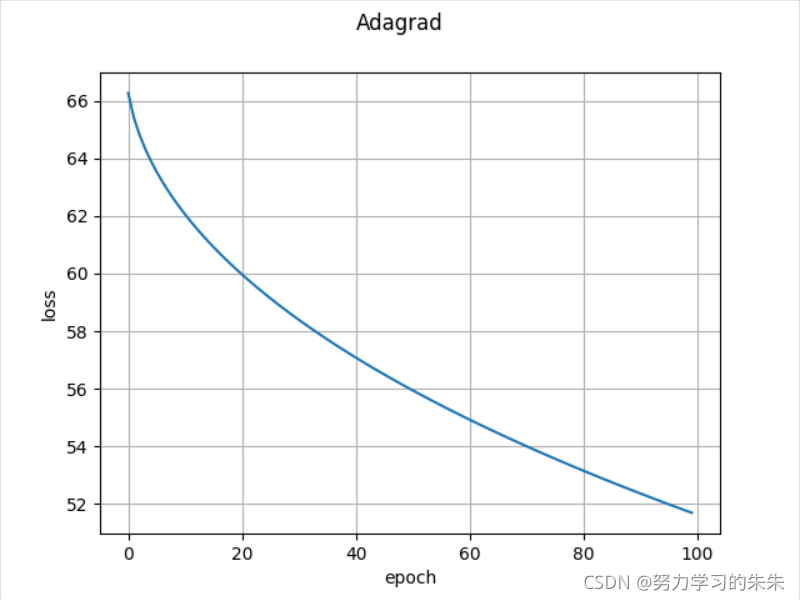

Loss curve with the Adagrad optimizer:

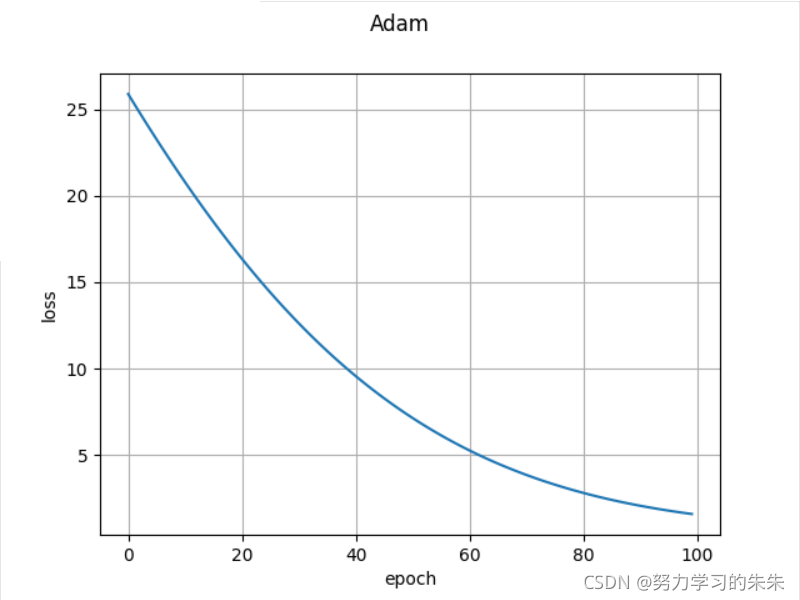

Loss curve with the Adam optimizer:

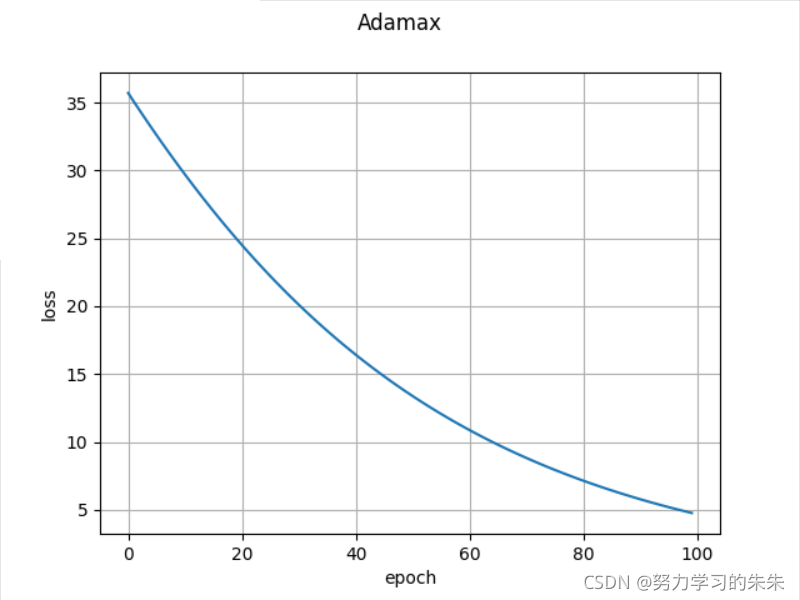

Loss curve with the Adamax optimizer:

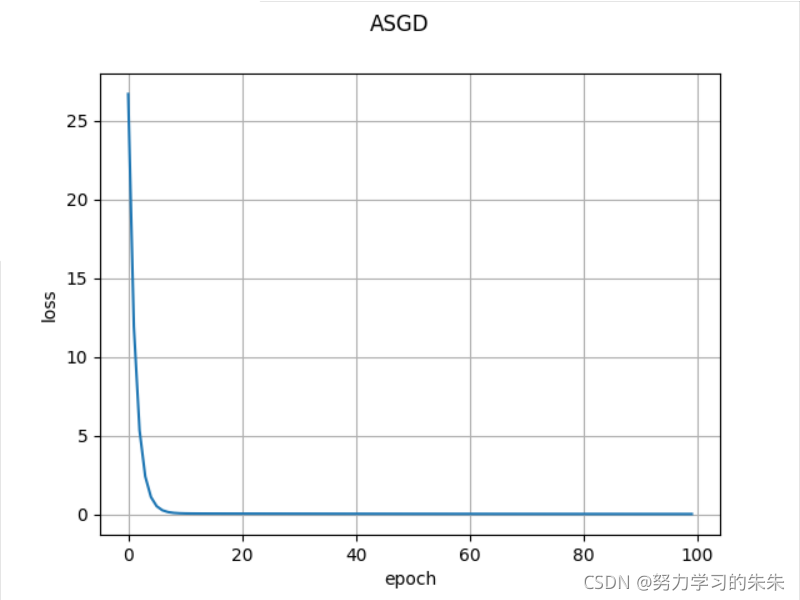

Loss curve with the ASGD optimizer:

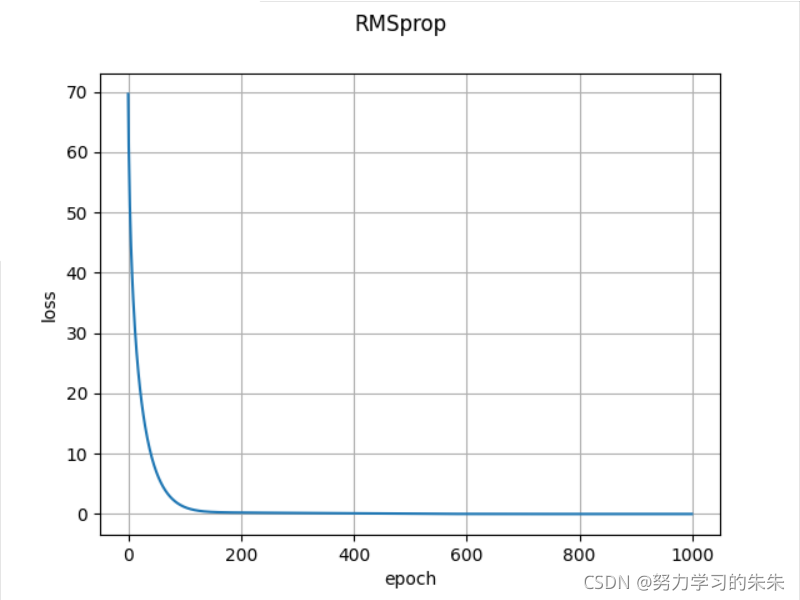

Loss curve with the RMSprop optimizer:

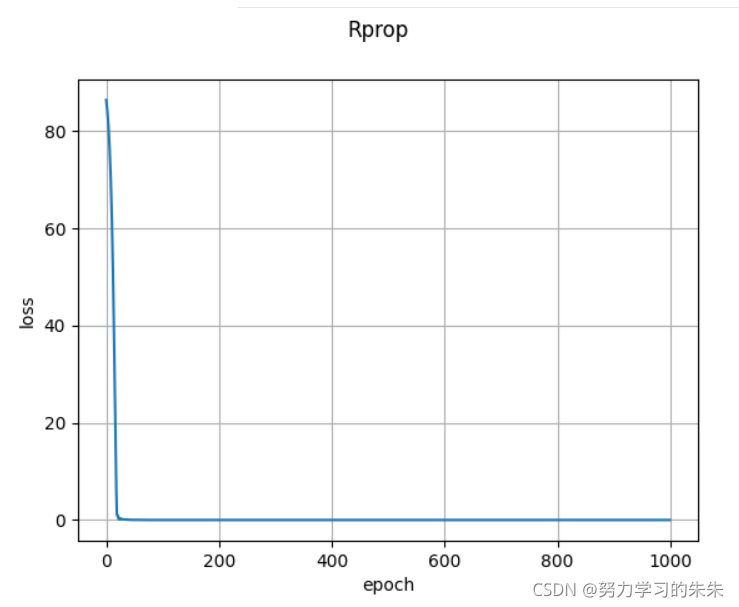

Loss curve with the Rprop optimizer:
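The curves for the optimizers listed so far can all be produced by changing only the optimizer line of the SGD code. The following is a minimal sketch of that idea, not the code used for the figures: the names `OPTIMIZERS`, `train`, `loss_curves`, and `plot_curves` are hypothetical, and the fixed seed and the shared `lr=0.01` for every optimizer are assumptions made so that the runs are comparable.

```python
import torch

# Same toy dataset as in the SGD example (y = 2x)
x_data = torch.tensor([[1.0], [2.0], [3.0]])
y_data = torch.tensor([[2.0], [4.0], [6.0]])

# Optimizers that use the plain optimizer.step() form
OPTIMIZERS = {
    'SGD': torch.optim.SGD,
    'Adagrad': torch.optim.Adagrad,
    'Adam': torch.optim.Adam,
    'Adamax': torch.optim.Adamax,
    'ASGD': torch.optim.ASGD,
    'RMSprop': torch.optim.RMSprop,
    'Rprop': torch.optim.Rprop,
}

def train(optimizer_cls, epochs=100, lr=0.01, seed=0):
    """Train a fresh 1-in/1-out linear model and return the loss of each epoch."""
    torch.manual_seed(seed)  # assumption: same initial weights for every optimizer
    model = torch.nn.Linear(1, 1)
    criterion = torch.nn.MSELoss(reduction='sum')
    optimizer = optimizer_cls(model.parameters(), lr=lr)
    losses = []
    for _ in range(epochs):
        loss = criterion(model(x_data), y_data)
        losses.append(loss.item())
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
    return losses

def plot_curves(curves):
    """Draw one loss curve per optimizer on a shared set of axes."""
    import matplotlib.pyplot as plt
    fig = plt.figure()
    sub = fig.add_subplot(111)
    for name, losses in curves.items():
        sub.plot(range(len(losses)), losses, label=name)
    sub.set_xlabel('epoch')
    sub.set_ylabel('loss')
    sub.legend()
    plt.grid()
    plt.show()

loss_curves = {name: train(cls) for name, cls in OPTIMIZERS.items()}
```

Calling `plot_curves(loss_curves)` overlays all seven curves in one figure; to get one figure per optimizer as in this post, plot each entry of `loss_curves` separately.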

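LBFGS is used somewhat differently from the optimizers above. LBFGS needs to re-evaluate the function several times, so you have to pass in a closure that allows it to recompute your model. The closure should clear the gradients, compute the loss, run the backward pass, and return the loss; it is passed as optimizer.step(closure). The other optimizers support the simplified form optimizer.step().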
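The repeated evaluation can be observed directly. The following is a minimal sketch, assuming the same toy dataset and a bare `torch.nn.Linear(1, 1)` model; the `calls` counter is a hypothetical name added only for illustration. It counts how often the closure runs during a single `optimizer.step(closure)` call.

```python
import torch

x_data = torch.tensor([[1.0], [2.0], [3.0]])
y_data = torch.tensor([[2.0], [4.0], [6.0]])

torch.manual_seed(0)  # assumption: fixed seed for a repeatable run
model = torch.nn.Linear(1, 1)
criterion = torch.nn.MSELoss(reduction='sum')
optimizer = torch.optim.LBFGS(model.parameters(), lr=0.01)

calls = {'n': 0}  # hypothetical counter of closure evaluations

def closure():
    calls['n'] += 1
    optimizer.zero_grad()
    loss = criterion(model(x_data), y_data)
    loss.backward()
    return loss

# One call to step() may invoke the closure many times
first_loss = optimizer.step(closure)
print('closure calls in one step:', calls['n'])
print('loss at the start of the step:', first_loss.item())
```

The number of evaluations depends on the `max_iter` and `max_eval` settings of `torch.optim.LBFGS` and on how quickly the problem converges, so the printed count will vary with the configuration.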

The templates for the two forms are as follows:

Template for optimizer.step(closure):

def closure():
    optimizer.zero_grad()
    y_pred = model(x_data)
    loss = criterion(y_pred, y_data)
    loss.backward()
    return loss

# Pass in the closure
optimizer.step(closure)

Template for optimizer.step():

optimizer.zero_grad()
y_pred = model(x_data)
loss = criterion(y_pred, y_data)
loss.backward()
optimizer.step()

The full code is as follows:

import torch
import matplotlib.pyplot as plt

# Prepare the dataset
x_data = torch.tensor([[1.0], [2.0], [3.0]])
y_data = torch.tensor([[2.0], [4.0], [6.0]])

# Design the model
class LinearModel(torch.nn.Module):
    def __init__(self):
        super(LinearModel, self).__init__()
        self.linear = torch.nn.Linear(1, 1)

    def forward(self, x):
        y_pred = self.linear(x)
        return y_pred

model = LinearModel()

# Construct the loss function and the optimizer
# reduction='sum' replaces the deprecated size_average=False
criterion = torch.nn.MSELoss(reduction='sum')
optimizer = torch.optim.LBFGS(model.parameters(), lr=0.01)

epoch_list = []
loss_list = []

# Training cycle (forward, backward, update)
for epoch in range(1000):
    # LBFGS may call the closure several times per step, so a single
    # epoch can add more than one entry to epoch_list and loss_list
    def closure():
        optimizer.zero_grad()
        y_pred = model(x_data)
        loss = criterion(y_pred, y_data)
        print(epoch, loss.item())
        epoch_list.append(epoch)
        loss_list.append(loss.item())
        loss.backward()
        return loss

    optimizer.step(closure)

print('w=', model.linear.weight.item())
print('b=', model.linear.bias.item())

# Predict on an unseen input
x_test = torch.tensor([[4.0]])
y_test = model(x_test)
print('y_pred =', y_test.data)

# Plot the loss against the epoch
fig = plt.figure()
sub = fig.add_subplot(111)
sub.plot(epoch_list, loss_list)
sub.set_xlabel('epoch')
sub.set_ylabel('loss')
fig.suptitle('LBFGS')
plt.grid()
plt.show()

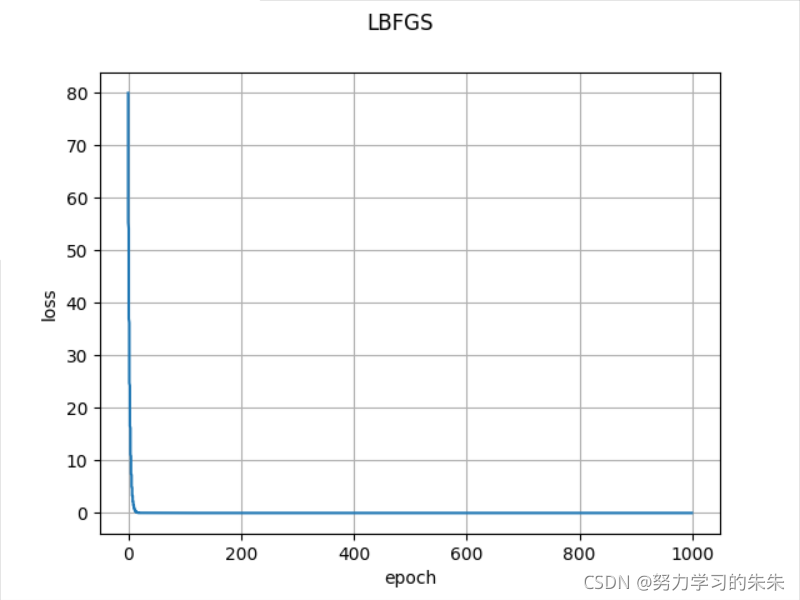

Loss curve with the LBFGS optimizer:
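In the LBFGS code above, the logging happens inside the closure, so one epoch can contribute several entries to `epoch_list` and `loss_list`. If exactly one value per epoch is wanted, one option is to record the value returned by `optimizer.step(closure)`, which for LBFGS is the loss from the first closure evaluation of that step. The following is a minimal sketch under that assumption; the bare `torch.nn.Linear(1, 1)` model, the fixed seed, and the shorter run of 100 epochs are choices made here for brevity, not part of the original code.

```python
import torch

x_data = torch.tensor([[1.0], [2.0], [3.0]])
y_data = torch.tensor([[2.0], [4.0], [6.0]])

torch.manual_seed(0)  # assumption: fixed seed for a repeatable run
model = torch.nn.Linear(1, 1)
criterion = torch.nn.MSELoss(reduction='sum')
optimizer = torch.optim.LBFGS(model.parameters(), lr=0.01)

def closure():
    # No logging here: the closure may run several times per step
    optimizer.zero_grad()
    loss = criterion(model(x_data), y_data)
    loss.backward()
    return loss

epoch_list = []
loss_list = []
for epoch in range(100):
    # step() returns the loss computed at the start of this step
    loss = optimizer.step(closure)
    epoch_list.append(epoch)
    loss_list.append(loss.item())

print('w=', model.weight.item())
print('b=', model.bias.item())
```

With this variant `epoch_list` and `loss_list` hold one entry per epoch, so the plotting code from the post can be reused unchanged.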