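Preface

These are my notes on the programming assignments from Andrew Ng's machine learning course, written up with my own understanding.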


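1. Warm-up: creating and initializing a two-layer neural network

The model structure is LINEAR -> RELU -> LINEAR -> SIGMOID.

Keep the matrix shapes straight. Take the five-layer network in the figure as an example (it is unrelated to this exercise). The number of rows of W always equals the number of units in the current layer, n[l]. From Z = Wa + b, the number of columns of W must equal the number of rows of a, which is the number of units in the previous layer, n[l-1]. The product Wa is added to b to give a result Z of shape (n[l], 1). Z is then passed through the activation function, a = g(Z), and a becomes the input to the next layer; this repeats layer by layer. Remember the parts circled in red in the figure.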
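The shape rules above can be checked mechanically. The following is a small sketch, not part of the assignment: the layer sizes are made up for illustration, and ReLU is used only as a stand-in for the activation g.

```python
import numpy as np

# Hypothetical layer sizes: 3 input features followed by five layers
# (the numbers are invented for this illustration).
layer_dims = [3, 4, 4, 3, 2, 1]

rng = np.random.default_rng(0)
a = rng.standard_normal((layer_dims[0], 1))  # one input example, shape (n[0], 1)

shapes = []
for l in range(1, len(layer_dims)):
    W = rng.standard_normal((layer_dims[l], layer_dims[l - 1]))  # (n[l], n[l-1])
    b = np.zeros((layer_dims[l], 1))                             # (n[l], 1)
    Z = W @ a + b                                                # (n[l], 1)
    a = np.maximum(0, Z)  # ReLU as a stand-in for g; a feeds the next layer
    shapes.append((W.shape, b.shape, Z.shape))
    print(f"layer {l}: W {W.shape}, b {b.shape}, Z {Z.shape}")
```

If any W had its rows and columns swapped, the matrix product would raise a shape error at that layer.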

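Initialize W1 and W2 randomly, so that the hidden units do not all start out computing the same function. b1 and b2 can be set to anything (they are usually set to zero).

parameters is the dictionary that stores the parameters needed for gradient descent.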

import numpy as np

# GRADED FUNCTION: initialize_parameters

def initialize_parameters(n_x, n_h, n_y):
    """
    Argument:
    n_x -- size of the input layer
    n_h -- size of the hidden layer
    n_y -- size of the output layer

    Returns:
    parameters -- python dictionary containing your parameters:
                    W1 -- weight matrix of shape (n_h, n_x)
                    b1 -- bias vector of shape (n_h, 1)
                    W2 -- weight matrix of shape (n_y, n_h)
                    b2 -- bias vector of shape (n_y, 1)
    """
    np.random.seed(1)

    ### START CODE HERE ### (≈ 4 lines of code)
    W1 = np.random.randn(n_h, n_x) * 0.01  # small random weights
    b1 = np.zeros((n_h, 1))
    W2 = np.random.randn(n_y, n_h) * 0.01
    b2 = np.zeros((n_y, 1))
    ### END CODE HERE ###

    assert(W1.shape == (n_h, n_x))
    assert(b1.shape == (n_h, 1))
    assert(W2.shape == (n_y, n_h))
    assert(b2.shape == (n_y, 1))

    parameters = {"W1": W1,
                  "b1": b1,
                  "W2": W2,
                  "b2": b2}

    return parameters

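2. Going further: an L-layer neural network

The model structure is [LINEAR -> RELU] × (L-1) -> LINEAR -> SIGMOID. In other words, the first L-1 layers use ReLU as the activation function, and the last layer uses sigmoid to produce the output.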

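The function call flow is shown in the figure below:

The four main functions we need to implement

Part 1: forward propagation

Summary of the functions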

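initialize_parameters_deep(layer_dims) initializes W and b for the network. It takes the number of units in each layer and returns the parameters dictionary containing every W and b.

linear_forward(A, W, b) computes Z = WA + b and returns Z together with a cache holding A, W, and b, which backpropagation uses later to compute the gradients.

linear_activation_forward(A_prev, W, b, activation) first calls linear_forward to get Z and linear_cache, then computes A = g(Z), where g is either sigmoid or relu. The activation function returns A and an activation_cache containing Z. The two caches are combined into one, cache = (linear_cache, activation_cache), which is returned along with A. In short, the returned cache holds just four values: A_prev, W, b, and Z.

L_model_forward(X, parameters) is the top-level forward propagation function. A for loop runs the [LINEAR -> RELU] step L-1 times by calling linear_activation_forward() with activation set to "relu", and appends each layer's cache to caches (backpropagation needs them later). It then calls linear_activation_forward() once with activation set to "sigmoid" to obtain the last layer's cache and AL (that is, y_hat).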

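(1) Initializing the parameters: initialize_parameters_deep

initialize_parameters_deep(layer_dims) initializes W and b for the network. It takes the number of units in each layer and returns the parameters dictionary containing every W and b.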

# GRADED FUNCTION: initialize_parameters_deep

def initialize_parameters_deep(layer_dims):
    """
    Arguments:
    layer_dims -- python array (list) containing the dimensions of each layer in our network

    Returns:
    parameters -- python dictionary containing your parameters "W1", "b1", ..., "WL", "bL":
                    Wl -- weight matrix of shape (layer_dims[l], layer_dims[l-1])
                    bl -- bias vector of shape (layer_dims[l], 1)
    """
    np.random.seed(3)
    parameters = {}
    L = len(layer_dims)  # number of layers in the network (including the input layer)

    for l in range(1, L):
        ### START CODE HERE ### (≈ 2 lines of code)
        # Scale the weights by 0.01, as in initialize_parameters above,
        # so the initial pre-activations stay small.
        parameters['W' + str(l)] = np.random.randn(layer_dims[l], layer_dims[l-1]) * 0.01
        parameters['b' + str(l)] = np.zeros((layer_dims[l], 1))
        ### END CODE HERE ###

        assert(parameters['W' + str(l)].shape == (layer_dims[l], layer_dims[l-1]))
        assert(parameters['b' + str(l)].shape == (layer_dims[l], 1))

    return parameters

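(2) Linear step: linear_forward

linear_forward(A, W, b) computes Z = WA + b and returns Z together with a cache holding A, W, and b, which backpropagation uses later to compute the gradients.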

# GRADED FUNCTION: linear_forward

def linear_forward(A, W, b):
    """
    Implement the linear part of a layer's forward propagation.

    Arguments:
    A -- activations from previous layer (or input data): (size of previous layer, number of examples)
    W -- weights matrix: numpy array of shape (size of current layer, size of previous layer)
    b -- bias vector, numpy array of shape (size of the current layer, 1)

    Returns:
    Z -- the input of the activation function, also called pre-activation parameter
    cache -- a python tuple containing "A", "W" and "b" ; stored for computing the backward pass efficiently
    """
    ### START CODE HERE ### (≈ 1 line of code)
    Z = np.dot(W, A) + b
    ### END CODE HERE ###

    assert(Z.shape == (W.shape[0], A.shape[1]))
    cache = (A, W, b)

    return Z, cache
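One detail worth seeing once: A holds one example per column, while b has a single column, so np.dot(W, A) + b relies on NumPy broadcasting to add b to every column. A small sketch with made-up numbers (none of these values come from the assignment):

```python
import numpy as np

W = np.array([[1.0, 2.0],
              [3.0, 4.0],
              [5.0, 6.0]])               # (3, 2): 3 units here, 2 in the previous layer
A = np.array([[1.0, 0.0, -1.0, 2.0],
              [0.5, 1.0,  1.0, 0.0]])    # (2, 4): 4 examples, one per column
b = np.array([[0.1], [0.2], [0.3]])      # (3, 1)

Z = np.dot(W, A) + b                     # b is broadcast across the 4 columns

# The same computation with the broadcast written out explicitly
Z_explicit = np.dot(W, A) + np.tile(b, (1, A.shape[1]))

print(Z.shape)                       # (3, 4)
print(np.allclose(Z, Z_explicit))    # True
```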

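(3) Linear-activation forward step: linear_activation_forward

linear_activation_forward(A_prev, W, b, activation) first calls linear_forward to get Z and linear_cache, then computes A = g(Z), where g is either sigmoid or relu. The activation function returns A and an activation_cache containing Z. The two caches are combined into one, cache = (linear_cache, activation_cache), which is returned along with A. In short, the returned cache holds just four values: A_prev, W, b, and Z.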

# GRADED FUNCTION: linear_activation_forward

def linear_activation_forward(A_prev, W, b, activation):
    """
    Implement the forward propagation for the LINEAR->ACTIVATION layer

    Arguments:
    A_prev -- activations from previous layer (or input data): (size of previous layer, number of examples)
    W -- weights matrix: numpy array of shape (size of current layer, size of previous layer)
    b -- bias vector, numpy array of shape (size of the current layer, 1)
    activation -- the activation to be used in this layer, stored as a text string: "sigmoid" or "relu"

    Returns:
    A -- the output of the activation function, also called the post-activation value
    cache -- a python tuple containing "linear_cache" and "activation_cache";
             stored for computing the backward pass efficiently
    """
    if activation == "sigmoid":
        # Inputs: "A_prev, W, b". Outputs: "A, activation_cache".
        ### START CODE HERE ### (≈ 2 lines of code)
        Z, linear_cache = linear_forward(A_prev, W, b)
        A, activation_cache = sigmoid(Z)
        ### END CODE HERE ###

    elif activation == "relu":
        # Inputs: "A_prev, W, b". Outputs: "A, activation_cache".
        ### START CODE HERE ### (≈ 2 lines of code)
        Z, linear_cache = linear_forward(A_prev, W, b)
        A, activation_cache = relu(Z)
        ### END CODE HERE ###

    assert (A.shape == (W.shape[0], A_prev.shape[1]))
    cache = (linear_cache, activation_cache)

    return A, cache
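The sigmoid and relu helpers called above are not defined in these notes; the course supplies them. As a minimal sketch of what they might look like, assuming (as the calls above imply) that each returns the activation together with Z as its cache:

```python
import numpy as np

def sigmoid(Z):
    """Sketch of the helper: return A = 1 / (1 + exp(-Z)) and Z as the cache."""
    A = 1 / (1 + np.exp(-Z))
    cache = Z
    return A, cache

def relu(Z):
    """Sketch of the helper: return A = max(0, Z) elementwise and Z as the cache."""
    A = np.maximum(0, Z)
    cache = Z
    return A, cache

Z = np.array([[-1.0, 0.0, 2.0]])
A_sig, cache_sig = sigmoid(Z)
A_relu, cache_relu = relu(Z)
print(A_relu)  # negative entries are clipped to 0
```

Caching Z is what lets the backward pass evaluate g'(Z) later without recomputing the linear step.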

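(4) Forward propagation: L_model_forward(X, parameters)

L_model_forward(X, parameters) is the top-level forward propagation function. A for loop runs the [LINEAR -> RELU] step L-1 times by calling linear_activation_forward() with activation set to "relu", and appends each layer's cache to caches (backpropagation needs them later). It then calls linear_activation_forward() once with activation set to "sigmoid" to obtain the last layer's cache and AL (that is, y_hat).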

# GRADED FUNCTION: L_model_forward

def L_model_forward(X, parameters):
    """
    Implement forward propagation for the [LINEAR->RELU]*(L-1)->LINEAR->SIGMOID computation

    Arguments:
    X -- data, numpy array of shape (input size, number of examples)
    parameters -- output of initialize_parameters_deep()

    Returns:
    AL -- last post-activation value
    caches -- list of caches containing:
                every cache of linear_relu_forward() (there are L-1 of them, indexed from 0 to L-2)
                the cache of linear_sigmoid_forward() (there is one, indexed L-1)
    """
    caches = []
    A = X
    L = len(parameters) // 2  # number of layers in the neural network

    # Implement [LINEAR -> RELU]*(L-1). Add "cache" to the "caches" list.
    for l in range(1, L):
        A_prev = A
        ### START CODE HERE ### (≈ 2 lines of code)
        A, cache = linear_activation_forward(A_prev, parameters['W' + str(l)], parameters['b' + str(l)], activation="relu")
        caches.append(cache)
        ### END CODE HERE ###

    # Implement LINEAR -> SIGMOID. Add "cache" to the "caches" list.
    ### START CODE HERE ### (≈ 2 lines of code)
    AL, cache = linear_activation_forward(A, parameters['W' + str(L)], parameters['b' + str(L)], activation="sigmoid")
    caches.append(cache)
    ### END CODE HERE ###

    assert(AL.shape == (1, X.shape[1]))

    return AL, caches

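Part 2: computing the cross-entropy cost

Computing the cross-entropy cost: compute_cost(AL, Y)

compute_cost(AL, Y) computes the cross-entropy cost. AL and Y have the same shape, (1, m), where m is the number of examples. Y is multiplied elementwise with log(AL) and (1 - Y) with log(1 - AL); the two products are added, summed over all examples, and scaled by -1/m.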

# GRADED FUNCTION: compute_cost

def compute_cost(AL, Y):
    """
    Implement the cost function defined by equation (7).

    Arguments:
    AL -- probability vector corresponding to your label predictions, shape (1, number of examples)
    Y -- true "label" vector (for example: containing 0 if non-cat, 1 if cat), shape (1, number of examples)

    Returns:
    cost -- cross-entropy cost
    """
    m = Y.shape[1]

    # Compute loss from aL and y.
    ### START CODE HERE ### (≈ 1 lines of code)
    cost = -1 / m * np.sum(Y * np.log(AL) + (1 - Y) * np.log(1 - AL), axis=1, keepdims=True)
    ### END CODE HERE ###

    cost = np.squeeze(cost)  # To make sure your cost's shape is what we expect (e.g. this turns [[17]] into 17).
    assert(cost.shape == ())

    return cost
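To make the formula concrete, here is a tiny worked example with three made-up predictions. It uses the same expression as compute_cost and compares it with the value worked out by hand; the numbers are illustrative only.

```python
import numpy as np

AL = np.array([[0.8, 0.9, 0.4]])   # predicted probabilities, shape (1, m)
Y = np.array([[1, 1, 0]])          # true labels, shape (1, m)
m = Y.shape[1]

# Same expression as in compute_cost
cost = -1 / m * np.sum(Y * np.log(AL) + (1 - Y) * np.log(1 - AL))

# By hand: for Y = 1 only log(AL) survives, for Y = 0 only log(1 - AL)
by_hand = -(np.log(0.8) + np.log(0.9) + np.log(0.6)) / 3

print(cost)  # approximately 0.2798
```

If an entry of AL is exactly 0 or 1, the logarithm is infinite. One common guard, which is not part of the code above, is to clip AL with np.clip to a range such as [1e-12, 1 - 1e-12] before taking logs.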

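Part 3: backpropagation (gradient descent)

This part involves a bit more math. The functions mirror those of forward propagation, except that they compute gradients.

The derivation is as follows: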

(C1W3L010 slides)

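Final result

Overall approach

Start from the last layer and propagate backward. The last layer is handled first, as follows:

First compute dAL, the derivative of the loss with respect to the activation output of the last layer (y_hat):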

dAL = - (np.divide(Y, AL) - np.divide(1 - Y, 1 - AL))

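With dAL in hand, we call linear_activation_backward (with sigmoid for the first call and relu for the remaining L-1 calls). It computes dZ and then calls linear_backward internally to obtain dA_prev, dW, and db for that layer. dA_prev is then fed back into linear_activation_backward as the new input, and this repeats (in a for loop) until the first layer is reached.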
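For reference, the per-layer formulas that the functions in this part implement can be written out as follows. They are read off the code in this part rather than copied from the slides; m is the number of examples and * denotes elementwise multiplication.

```latex
\begin{aligned}
dA^{[L]} &= -\left(\frac{Y}{A^{[L]}} - \frac{1 - Y}{1 - A^{[L]}}\right) \\
dZ^{[l]} &= dA^{[l]} * g^{[l]\prime}\!\left(Z^{[l]}\right) \\
dW^{[l]} &= \frac{1}{m}\, dZ^{[l]} \left(A^{[l-1]}\right)^{T} \\
db^{[l]} &= \frac{1}{m} \sum_{i=1}^{m} dZ^{[l](i)} \\
dA^{[l-1]} &= \left(W^{[l]}\right)^{T} dZ^{[l]}
\end{aligned}
```

The first line is used once, for the output layer; the remaining four are applied at every layer, from l = L down to l = 1.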

(1) Linear backward step: linear_backward(dZ, cache)

Computes dW and db, along with dA_prev for the previous layer.

# GRADED FUNCTION: linear_backward

def linear_backward(dZ, cache):
    """
    Implement the linear portion of backward propagation for a single layer (layer l)

    Arguments:
    dZ -- Gradient of the cost with respect to the linear output (of current layer l)
    cache -- tuple of values (A_prev, W, b) coming from the forward propagation in the current layer

    Returns:
    dA_prev -- Gradient of the cost with respect to the activation (of the previous layer l-1), same shape as A_prev
    dW -- Gradient of the cost with respect to W (current layer l), same shape as W
    db -- Gradient of the cost with respect to b (current layer l), same shape as b
    """
    A_prev, W, b = cache
    m = A_prev.shape[1]

    ### START CODE HERE ### (≈ 3 lines of code)
    dW = 1 / m * np.dot(dZ, A_prev.T)
    db = 1 / m * np.sum(dZ, axis=1, keepdims=True)
    dA_prev = np.dot(W.T, dZ)
    ### END CODE HERE ###

    assert (dA_prev.shape == A_prev.shape)
    assert (dW.shape == W.shape)
    assert (db.shape == b.shape)

    return dA_prev, dW, db
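A quick way to gain confidence in these three formulas is a numerical gradient check. The sketch below is not part of the assignment: it restates linear_backward so the block runs on its own, and it uses a made-up toy cost J = (1/m) * sum(G * Z), chosen so that the dZ passed in is simply the fixed matrix G.

```python
import numpy as np

def linear_backward(dZ, cache):
    # Same three formulas as in the function above
    A_prev, W, b = cache
    m = A_prev.shape[1]
    dW = 1 / m * np.dot(dZ, A_prev.T)
    db = 1 / m * np.sum(dZ, axis=1, keepdims=True)
    dA_prev = np.dot(W.T, dZ)
    return dA_prev, dW, db

rng = np.random.default_rng(0)
A_prev = rng.standard_normal((3, 5))   # 3 units in the previous layer, 5 examples
W = rng.standard_normal((2, 3))
b = rng.standard_normal((2, 1))
G = rng.standard_normal((2, 5))        # fixed weights that define the toy cost

def toy_cost(W, b):
    # J = (1/m) * sum(G * Z) with Z = W A_prev + b
    Z = np.dot(W, A_prev) + b
    return np.sum(G * Z) / A_prev.shape[1]

_, dW, db = linear_backward(G, (A_prev, W, b))

# Central finite differences for every entry of W
eps = 1e-6
dW_num = np.zeros_like(W)
for i in range(W.shape[0]):
    for j in range(W.shape[1]):
        W_plus, W_minus = W.copy(), W.copy()
        W_plus[i, j] += eps
        W_minus[i, j] -= eps
        dW_num[i, j] = (toy_cost(W_plus, b) - toy_cost(W_minus, b)) / (2 * eps)

max_diff = np.max(np.abs(dW - dW_num))
print(max_diff)  # should be tiny if the analytic dW is right
```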

(2) Linear-activation backward step: linear_activation_backward(dA, cache, activation)

# GRADED FUNCTION: linear_activation_backward

def linear_activation_backward(dA, cache, activation):
    """
    Implement the backward propagation for the LINEAR->ACTIVATION layer.

    Arguments:
    dA -- post-activation gradient for current layer l
    cache -- tuple of values (linear_cache, activation_cache) we store for computing backward propagation efficiently
    activation -- the activation to be used in this layer, stored as a text string: "sigmoid" or "relu"

    Returns:
    dA_prev -- Gradient of the cost with respect to the activation (of the previous layer l-1), same shape as A_prev
    dW -- Gradient of the cost with respect to W (current layer l), same shape as W
    db -- Gradient of the cost with respect to b (current layer l), same shape as b
    """
    linear_cache, activation_cache = cache

    if activation == "relu":
        ### START CODE HERE ### (≈ 2 lines of code)
        dZ = relu_backward(dA, activation_cache)
        dA_prev, dW, db = linear_backward(dZ, linear_cache)
        ### END CODE HERE ###

    elif activation == "sigmoid":
        ### START CODE HERE ### (≈ 2 lines of code)
        dZ = sigmoid_backward(dA, activation_cache)
        dA_prev, dW, db = linear_backward(dZ, linear_cache)
        ### END CODE HERE ###

    return dA_prev, dW, db
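relu_backward and sigmoid_backward are likewise course-provided helpers that these notes do not show. A minimal sketch of what they might look like, assuming the activation cache is just Z and that each returns dZ = dA * g'(Z):

```python
import numpy as np

def relu_backward(dA, cache):
    """Sketch of the helper: dZ = dA where Z > 0, and 0 elsewhere."""
    Z = cache
    dZ = np.array(dA, copy=True)   # copy so the caller's dA is not modified
    dZ[Z <= 0] = 0
    return dZ

def sigmoid_backward(dA, cache):
    """Sketch of the helper: dZ = dA * s * (1 - s) with s = sigmoid(Z)."""
    Z = cache
    s = 1 / (1 + np.exp(-Z))
    return dA * s * (1 - s)

Z = np.array([[-2.0, 0.0, 3.0]])
dA = np.array([[1.0, 1.0, 1.0]])
dZ_relu = relu_backward(dA, Z)     # the gradient passes only where Z > 0
dZ_sig = sigmoid_backward(dA, Z)   # at Z = 0 the sigmoid slope is 0.25
```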

(3) Backpropagation: L_model_backward(AL, Y, caches)

# GRADED FUNCTION: L_model_backward

def L_model_backward(AL, Y, caches):
    """
    Implement the backward propagation for the [LINEAR->RELU] * (L-1) -> LINEAR -> SIGMOID group

    Arguments:
    AL -- probability vector, output of the forward propagation (L_model_forward())
    Y -- true "label" vector (containing 0 if non-cat, 1 if cat)
    caches -- list of caches containing:
                every cache of linear_activation_forward() with "relu" (it's caches[l], for l in range(L-1) i.e l = 0...L-2)
                the cache of linear_activation_forward() with "sigmoid" (it's caches[L-1])

    Returns:
    grads -- A dictionary with the gradients
             grads["dA" + str(l)] = ...
             grads["dW" + str(l)] = ...
             grads["db" + str(l)] = ...
    """
    grads = {}
    L = len(caches)  # the number of layers
    m = AL.shape[1]
    Y = Y.reshape(AL.shape)  # after this line, Y is the same shape as AL

    # Initializing the backpropagation
    ### START CODE HERE ### (1 line of code)
    dAL = - (np.divide(Y, AL) - np.divide(1 - Y, 1 - AL))
    ### END CODE HERE ###

    # Lth layer (SIGMOID -> LINEAR) gradients. Inputs: "AL, Y, caches". Outputs: "grads["dAL"], grads["dWL"], grads["dbL"]
    ### START CODE HERE ### (approx. 2 lines)
    current_cache = caches[L-1]
    grads["dA" + str(L)], grads["dW" + str(L)], grads["db" + str(L)] = linear_activation_backward(dAL, current_cache, activation="sigmoid")
    ### END CODE HERE ###

    for l in reversed(range(L-1)):
        # lth layer: (RELU -> LINEAR) gradients.
        # Inputs: "grads["dA" + str(l + 2)], caches". Outputs: "grads["dA" + str(l + 1)] , grads["dW" + str(l + 1)] , grads["db" + str(l + 1)]
        ### START CODE HERE ### (approx. 5 lines)
        current_cache = caches[l]
        dA_prev_temp, dW_temp, db_temp = linear_activation_backward(grads["dA" + str(l+2)], current_cache, activation="relu")
        grads["dA" + str(l + 1)] = dA_prev_temp
        grads["dW" + str(l + 1)] = dW_temp
        grads["db" + str(l + 1)] = db_temp
        ### END CODE HERE ###

    return grads
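A side observation, not part of the original notes: for a sigmoid output with cross-entropy loss, the two-step computation for the last layer (dAL first, then the sigmoid derivative) collapses algebraically to dZ = AL - Y. The sketch below checks this numerically on made-up data.

```python
import numpy as np

rng = np.random.default_rng(1)
Z = rng.standard_normal((1, 6))        # made-up pre-activations of the last layer
AL = 1 / (1 + np.exp(-Z))              # sigmoid output
Y = np.array([[1, 0, 1, 1, 0, 0]])     # made-up labels

# Two steps, as in L_model_backward followed by the sigmoid derivative
dAL = -(np.divide(Y, AL) - np.divide(1 - Y, 1 - AL))
dZ_two_steps = dAL * AL * (1 - AL)

# One step: the simplified expression
dZ_shortcut = AL - Y

print(np.allclose(dZ_two_steps, dZ_shortcut))  # True
```

The shortcut also avoids dividing by AL or 1 - AL, which can be very close to zero when the network is confident.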

(4) Updating the parameters: update_parameters(parameters, grads, learning_rate)

# GRADED FUNCTION: update_parameters

def update_parameters(parameters, grads, learning_rate):
    """
    Update parameters using gradient descent

    Arguments:
    parameters -- python dictionary containing your parameters
    grads -- python dictionary containing your gradients, output of L_model_backward

    Returns:
    parameters -- python dictionary containing your updated parameters
                  parameters["W" + str(l)] = ...
                  parameters["b" + str(l)] = ...
    """
    L = len(parameters) // 2  # number of layers in the neural network

    # Update rule for each parameter. Use a for loop.
    ### START CODE HERE ### (≈ 3 lines of code)
    for l in range(L):
        parameters["W" + str(l+1)] = parameters["W" + str(l+1)] - learning_rate * grads["dW" + str(l + 1)]
        parameters["b" + str(l+1)] = parameters["b" + str(l+1)] - learning_rate * grads["db" + str(l + 1)]
    ### END CODE HERE ###

    return parameters
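As a sanity check on the update rule, here is one gradient descent step on a single-layer toy example. The parameter and gradient values are invented for illustration; the loop body is the same rule as in update_parameters.

```python
import numpy as np

# Made-up parameters and gradients for a one-layer network
parameters = {"W1": np.array([[1.0, 2.0]]), "b1": np.array([[0.5]])}
grads = {"dW1": np.array([[0.5, -1.0]]), "db1": np.array([[0.2]])}
learning_rate = 0.1

L = len(parameters) // 2  # each layer contributes one W and one b
for l in range(1, L + 1):
    parameters["W" + str(l)] = parameters["W" + str(l)] - learning_rate * grads["dW" + str(l)]
    parameters["b" + str(l)] = parameters["b" + str(l)] - learning_rate * grads["db" + str(l)]

print(parameters["W1"])  # [[0.95 2.1 ]]
print(parameters["b1"])  # [[0.48]]
```

Each parameter moves against its gradient: a positive gradient lowers the value and a negative gradient raises it.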

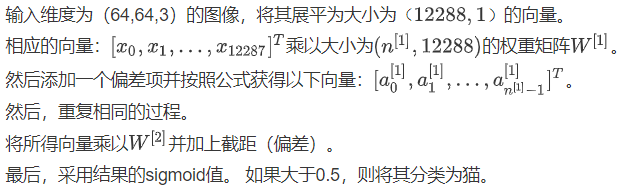

3. Applying the deep neural network: image classification

(1) Calling the functions

import matplotlib.pyplot as plt

# GRADED FUNCTION: L_layer_model

def L_layer_model(X, Y, layers_dims, learning_rate = 0.0075, num_iterations = 3000, print_cost=False):  # lr was 0.009
    """
    Implements a L-layer neural network: [LINEAR->RELU]*(L-1)->LINEAR->SIGMOID.

    Arguments:
    X -- data, numpy array of shape (num_px * num_px * 3, number of examples)
    Y -- true "label" vector (containing 1 if cat, 0 if non-cat), of shape (1, number of examples)
    layers_dims -- list containing the input size and each layer size, of length (number of layers + 1).
    learning_rate -- learning rate of the gradient descent update rule
    num_iterations -- number of iterations of the optimization loop
    print_cost -- if True, it prints the cost every 100 steps

    Returns:
    parameters -- parameters learnt by the model. They can then be used to predict.
    """
    np.random.seed(1)
    costs = []  # keep track of cost

    # Parameters initialization.
    ### START CODE HERE ###
    parameters = initialize_parameters_deep(layers_dims)
    ### END CODE HERE ###

    # Loop (gradient descent)
    for i in range(0, num_iterations):

        # Forward propagation: [LINEAR -> RELU]*(L-1) -> LINEAR -> SIGMOID.
        ### START CODE HERE ### (≈ 1 line of code)
        AL, caches = L_model_forward(X, parameters)
        ### END CODE HERE ###

        # Compute cost.
        ### START CODE HERE ### (≈ 1 line of code)
        cost = compute_cost(AL, Y)
        ### END CODE HERE ###

        # Backward propagation.
        ### START CODE HERE ### (≈ 1 line of code)
        grads = L_model_backward(AL, Y, caches)
        ### END CODE HERE ###

        # Update parameters.
        ### START CODE HERE ### (≈ 1 line of code)
        parameters = update_parameters(parameters, grads, learning_rate)
        ### END CODE HERE ###

        # Print the cost every 100 iterations
        if print_cost and i % 100 == 0:
            print("Cost after iteration %i: %f" % (i, cost))
        if print_cost and i % 100 == 0:
            costs.append(cost)

    # plot the cost (one point was recorded every 100 iterations)
    plt.plot(np.squeeze(costs))
    plt.ylabel('cost')
    plt.xlabel('iterations (per hundreds)')
    plt.title("Learning rate =" + str(learning_rate))
    plt.show()

    return parameters

Training a five-layer network gives the following result:

Prediction accuracy: 80%
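The notes do not show how the accuracy is computed. A plausible sketch, assuming the usual rule of thresholding the sigmoid output AL at 0.5; the function name accuracy_from_probabilities and the data below are hypothetical and have nothing to do with the 80% figure above.

```python
import numpy as np

def accuracy_from_probabilities(AL, Y, threshold=0.5):
    """Hypothetical helper: fraction of examples whose thresholded prediction matches Y."""
    predictions = (AL > threshold).astype(int)   # 1 = cat, 0 = non-cat
    return float(np.mean(predictions == Y))

# Made-up probabilities and labels for four examples
AL = np.array([[0.9, 0.2, 0.7, 0.4]])
Y = np.array([[1, 0, 1, 1]])

acc = accuracy_from_probabilities(AL, Y)
print(acc)  # 0.75: the last example is a cat predicted as non-cat
```

In the full pipeline, AL would come from L_model_forward(X, parameters) evaluated with the trained parameters.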

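(2) Analyzing the results

First, let's look at some of the images that the L-layer model labeled incorrectly.

The kinds of images the model tends to do poorly on include:

The cat's body is in an unusual position

The cat's color is similar to the background

Unusual cat breed or color

Camera angle

Brightness of the picture

Scale variation (the cat is very large or very small in the image)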

4. Reference

deeplearning.ai by Andrew Ng on Coursera