The full code is at the end.

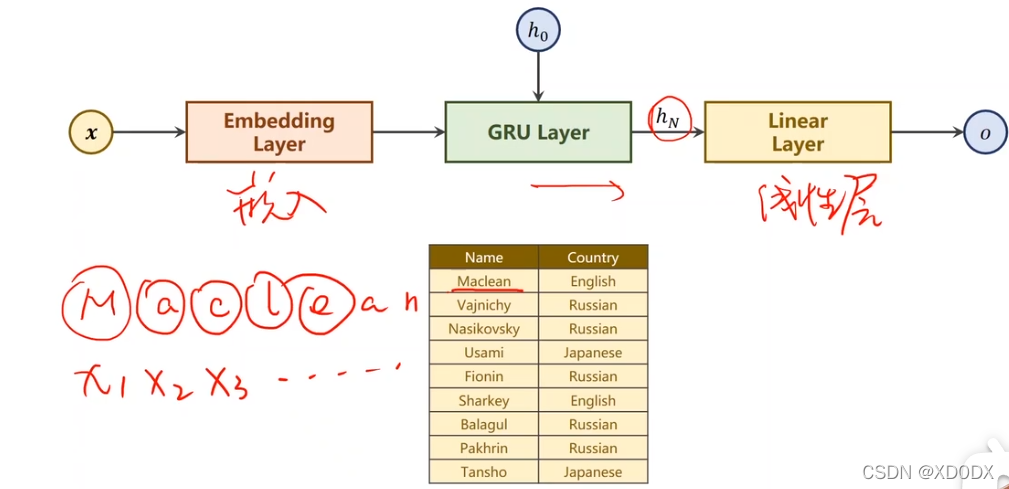

Classification task: identify the language a name comes from.

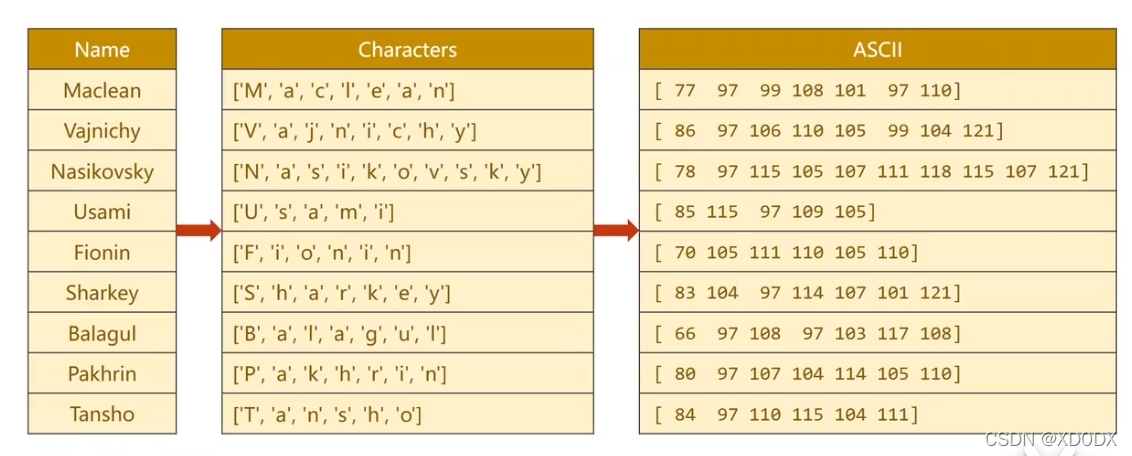

Each name is actually a sequence of characters, and the sequences differ in length.

For example, Maclean -> M a c l e a n == x1, x2, x3, x4, x5, x6, x7

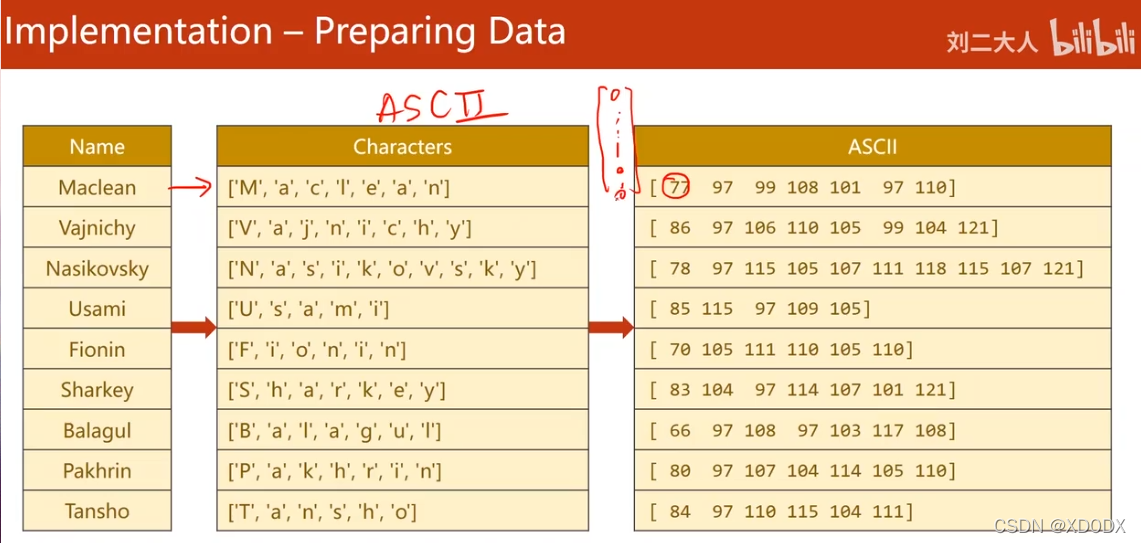

① Prepare the data

Represent each character by its ASCII code.
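As a small illustration of the ASCII representation, the sketch below converts the example name into a list of character codes. The helper name `name_to_codes` is made up for this example; the full code at the end uses its own `name2list` function.

```python
# Hypothetical helper for illustration: one ASCII code per character.
def name_to_codes(name):
    """Return the list of ASCII codes for the characters of `name`."""
    return [ord(ch) for ch in name]


codes = name_to_codes("Maclean")
print(codes)       # [77, 97, 99, 108, 101, 97, 110]
print(len(codes))  # 7, one entry per character (x1 ... x7)
```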

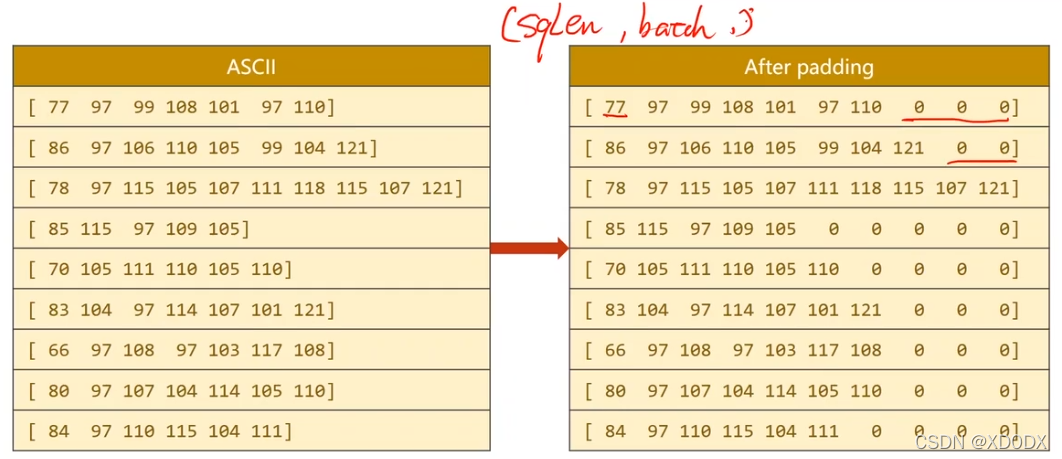

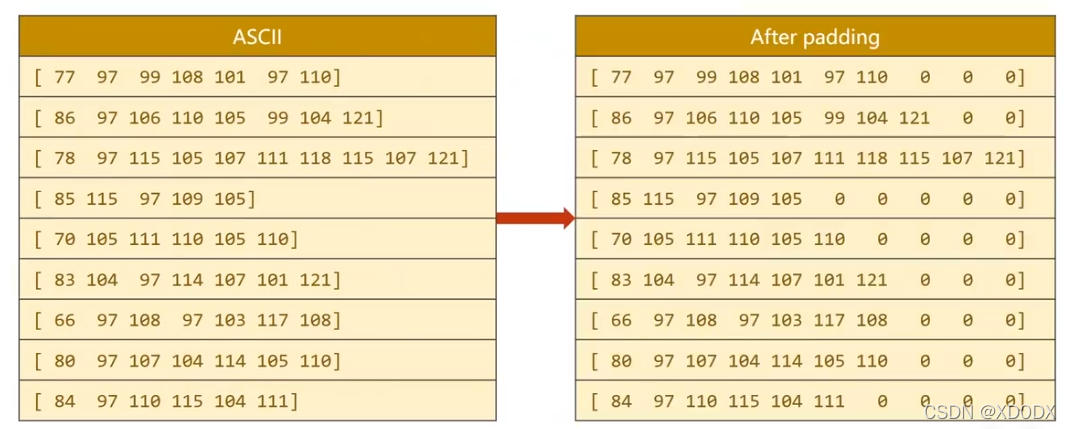

The sequences differ in length, so they are padded.

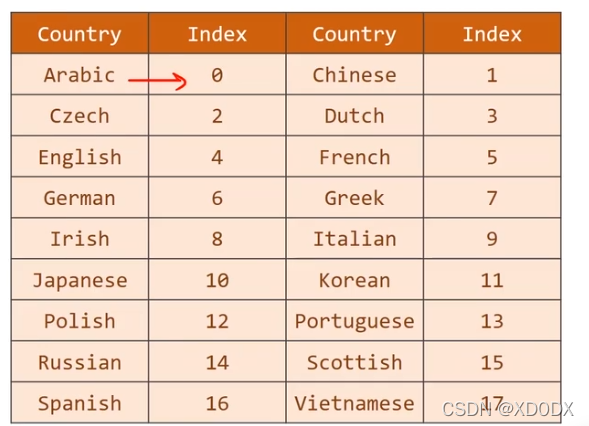

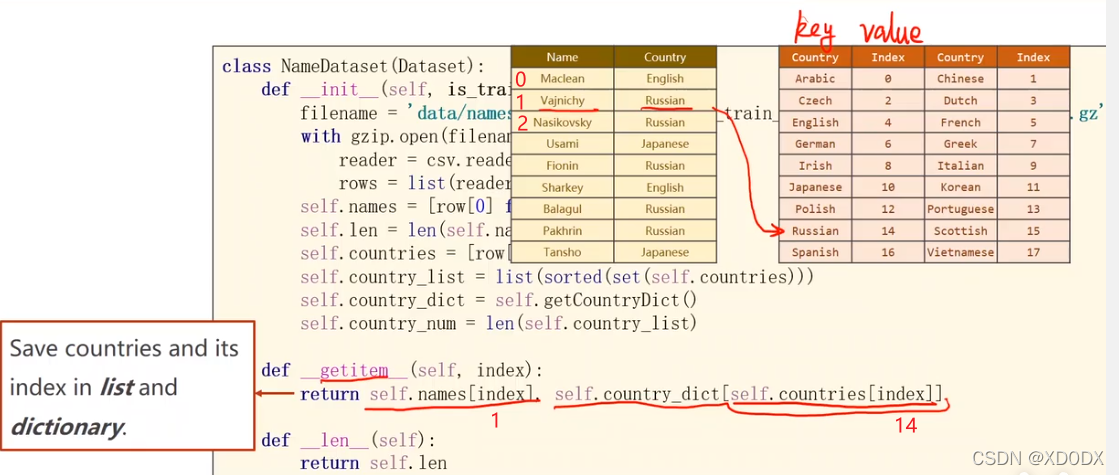

The labels Y are also turned into a dictionary, so that each label's index can be looked up in it later.

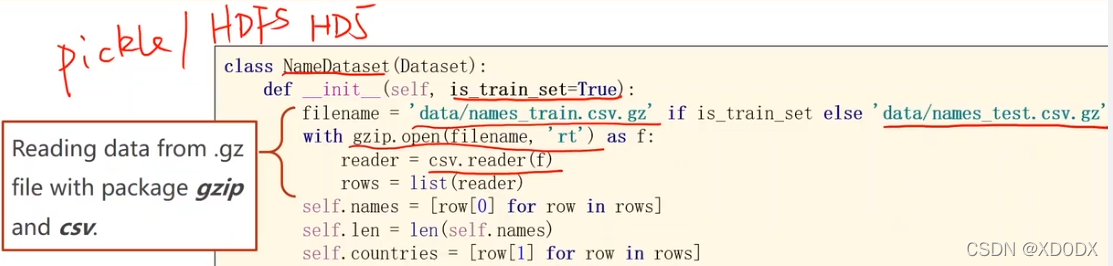

Reading the data (.gz files):

Different file formats need different packages to read them!
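A minimal sketch of reading a gzip-compressed CSV with the standard library. To stay self-contained it first writes a tiny sample file; the file name `names_sample.csv.gz` and the two rows are made up for illustration and are not the real dataset.

```python
import csv
import gzip
import os
import tempfile

# Made-up sample rows in the (name, language) layout used in this post.
sample_rows = [["Maclean", "Scottish"], ["Vajnichy", "Russian"]]
sample_path = os.path.join(tempfile.mkdtemp(), "names_sample.csv.gz")

# Write the sample as a gzip-compressed CSV ('wt' = write, text mode).
with gzip.open(sample_path, "wt", newline="") as f:
    csv.writer(f).writerows(sample_rows)

# 'rt' opens the compressed file in text mode, so csv.reader receives strings.
with gzip.open(sample_path, "rt", newline="") as f:
    rows = list(csv.reader(f))

names = [row[0] for row in rows]
countries = [row[1] for row in rows]
print(names)
print(countries)
```

Other formats would need other readers (for example `pickle` for pickled objects), which is the point of the remark above.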

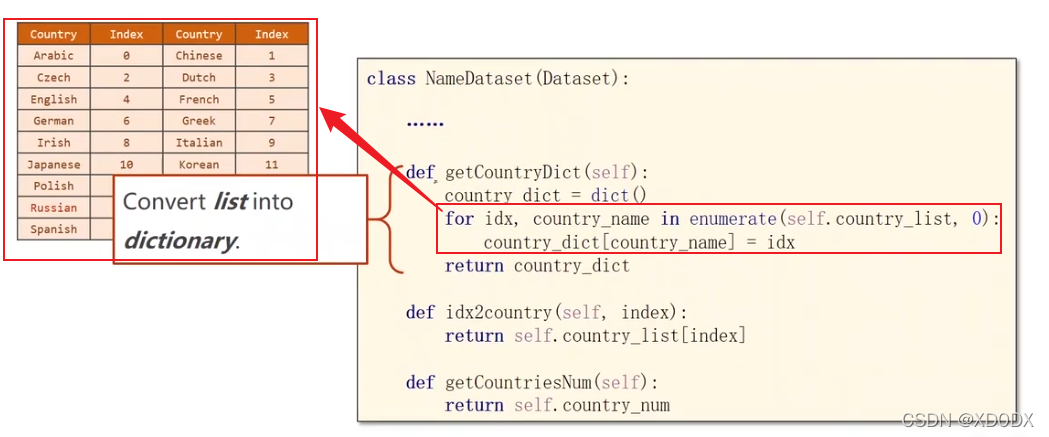

Building the key-value table (country name -> index):

code:

    def getCountryDict(self):
        country_dict = dict()
        for idx, country_name in enumerate(self.country_list, 0):
            country_dict[country_name] = idx
        return country_dict

Returning the country string for a given index:

code:

    def idx2country(self, index):
        return self.country_list[index]
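The two lookups can be tried outside the class with a toy label list. This is only a sketch: the label values below are invented, and a dict comprehension stands in for the explicit loop.

```python
# Toy labels, invented for illustration.
countries = ["Russian", "English", "Russian", "Chinese", "English"]

# Deduplicate and sort so that each label gets a stable index.
country_list = sorted(set(countries))
country_dict = {name: idx for idx, name in enumerate(country_list)}

print(country_list)             # ['Chinese', 'English', 'Russian']
print(country_dict["Russian"])  # 2  (name -> index)
print(country_list[2])          # Russian  (index -> name)
```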

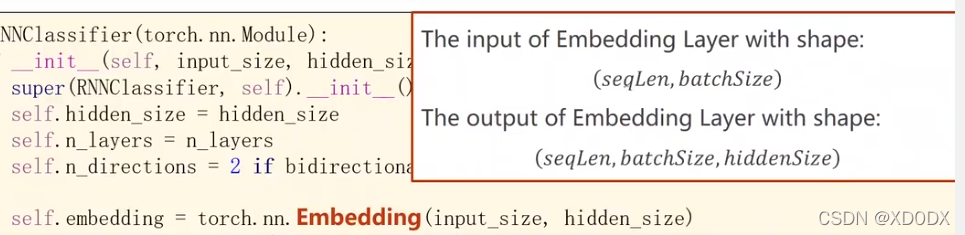

Input and output dimensions of the embedding layer:

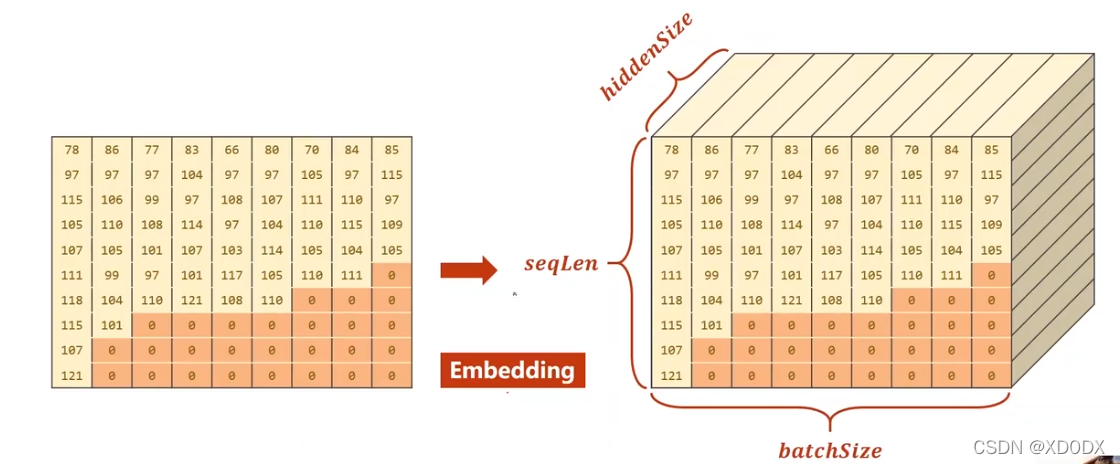

Illustration of the embedding:

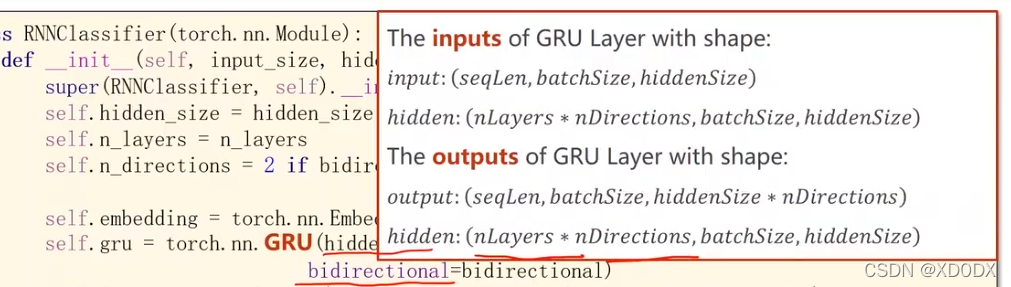
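The embedding dimensions can be checked with a short shape experiment. The sizes mirror the constants used in the full code (`N_CHARS = 128`, `HIDDEN_SIZE = 100`); the sequence length and batch size are arbitrary values chosen for the example.

```python
import torch

N_CHARS, HIDDEN_SIZE = 128, 100   # same values as in the full code
seq_len, batch_size = 7, 3        # arbitrary sizes for this example

embedding = torch.nn.Embedding(N_CHARS, HIDDEN_SIZE)

# Input: integer character indices with layout (seqLen, batchSize).
indices = torch.randint(0, N_CHARS, (seq_len, batch_size))

# Output: one HIDDEN_SIZE-dimensional vector per index.
embedded = embedding(indices)
print(indices.shape)   # torch.Size([7, 3])
print(embedded.shape)  # torch.Size([7, 3, 100])
```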

Input and output dimensions of the GRU:

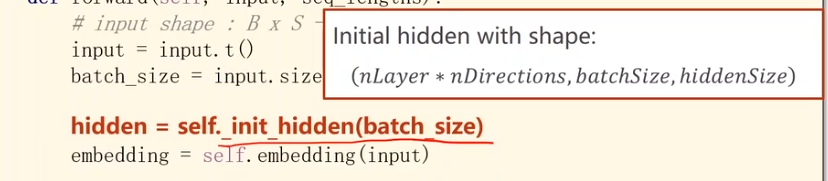

Dimensions of the initial hidden state:

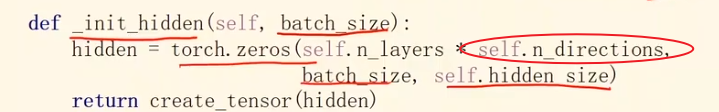

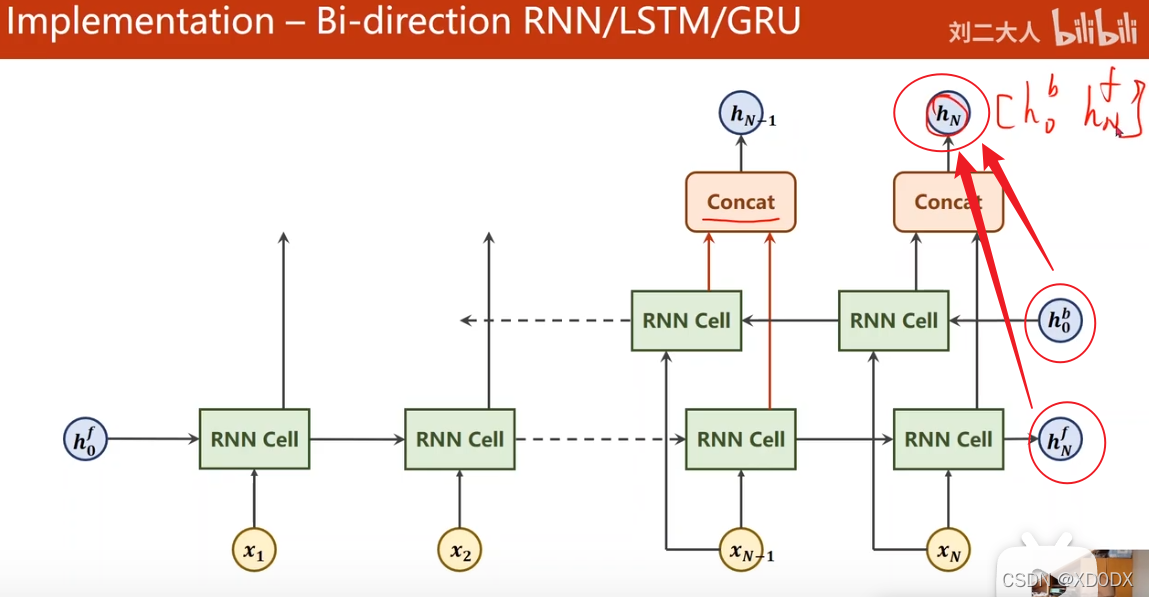

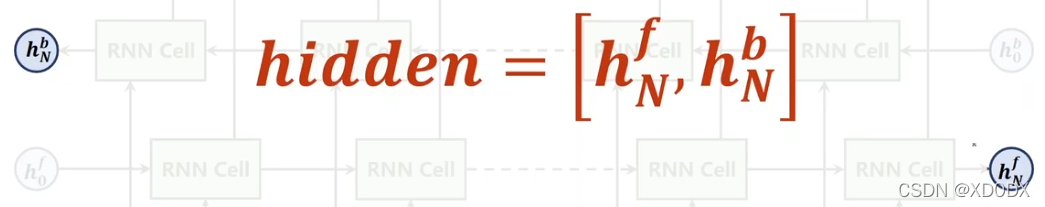

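Bidirectional RNN/LSTM/GRU:

Question: where does h0b come from? Presumably it is also initialized to all zeros, as in the code shown in the figure below:

The output:

The final hidden state: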

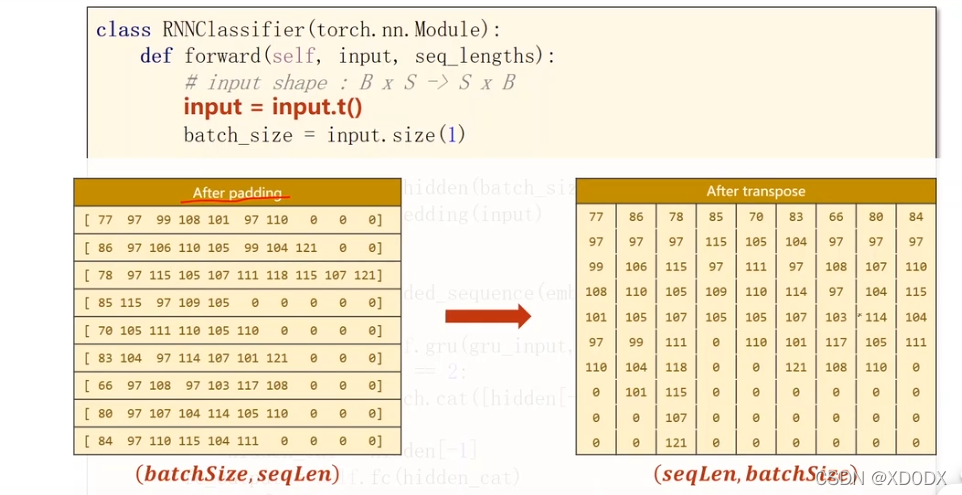

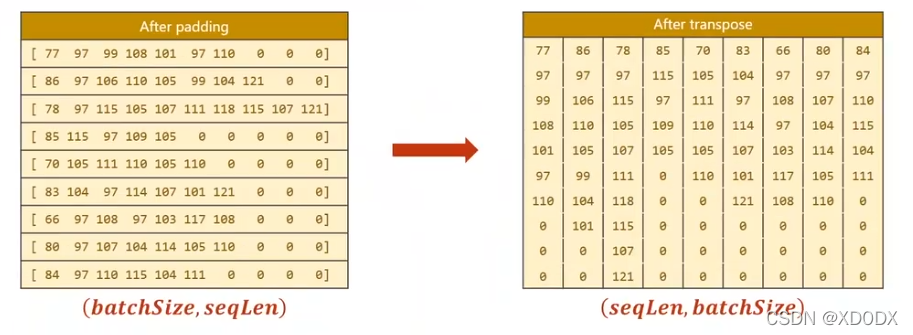
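A shape experiment for the bidirectional GRU may help here. It is a sketch with arbitrary sizes. It also bears on the h0b question: the single `h0` tensor holds the initial states of both directions, and `torch.nn.GRU` uses zeros when no initial state is passed.

```python
import torch

seq_len, batch_size, hidden_size, n_layers = 7, 3, 100, 2
n_directions = 2  # bidirectional

gru = torch.nn.GRU(hidden_size, hidden_size, n_layers, bidirectional=True)
x = torch.randn(seq_len, batch_size, hidden_size)

# One tensor holds the initial states of every layer and both directions.
h0 = torch.zeros(n_layers * n_directions, batch_size, hidden_size)
output, hidden = gru(x, h0)

print(output.shape)  # torch.Size([7, 3, 200]): forward and backward outputs concatenated
print(hidden.shape)  # torch.Size([4, 3, 100]): n_layers * n_directions final states

# Omitting h0 gives the same result, because the default initial state is zeros.
output_default, hidden_default = gru(x)

# Final states of the last layer (both directions), concatenated for the linear layer.
hidden_cat = torch.cat([hidden[-1], hidden[-2]], dim=1)
print(hidden_cat.shape)  # torch.Size([3, 200])
```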

Transforming the input dimensions:

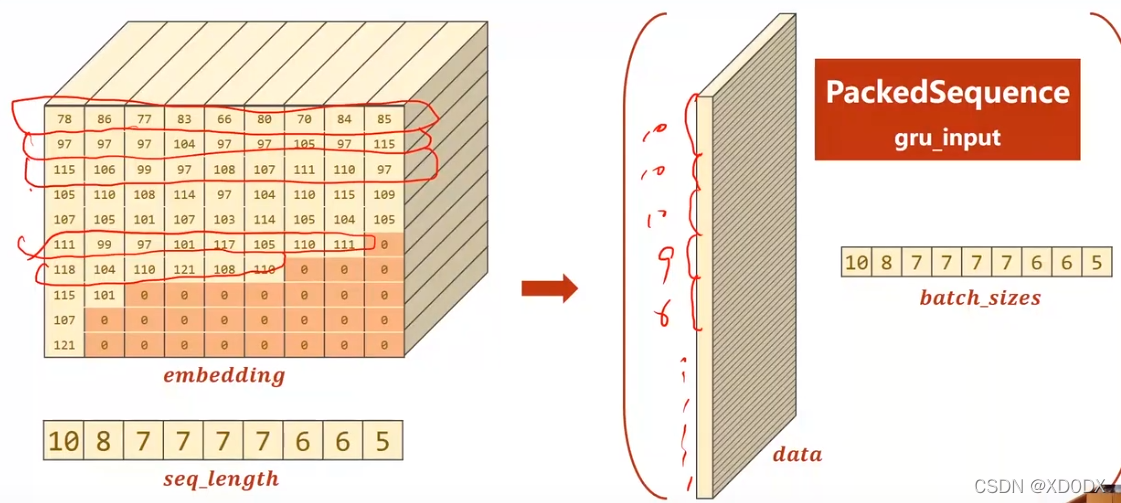

PackedSequence

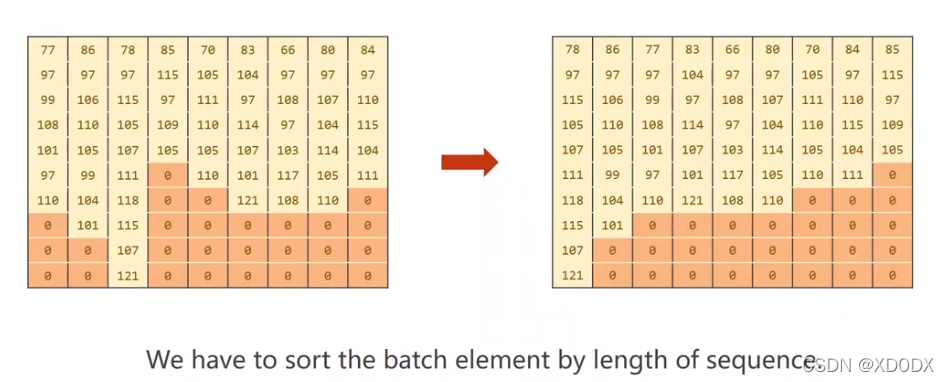

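The batch must be sorted by length first! (Packing then "removes" the zero padding.)

Purpose: it lets the GRU skip the padded positions during computation.

Illustration: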

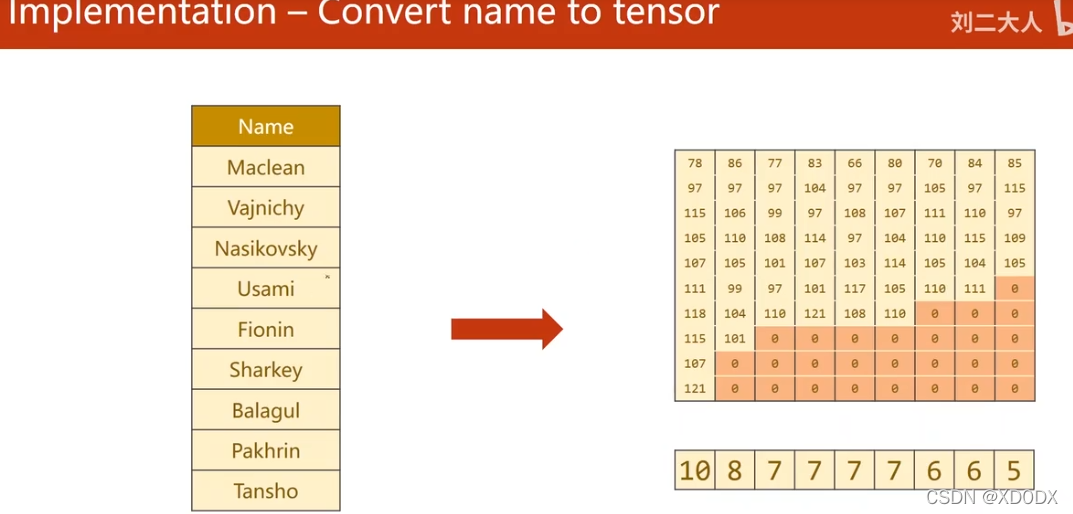
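To see what packing does, the sketch below packs a tiny padded batch of integers. Integers are used only to keep the output readable; in the model the packed tensor is the embedding output, which has one more dimension.

```python
import torch
from torch.nn.utils.rnn import pack_padded_sequence

# Padded batch with layout (seqLen, batchSize). Each column is one sequence,
# already sorted by length: [1, 2, 3], [4, 5], [6]. Zeros are padding.
padded = torch.tensor([[1, 4, 6],
                       [2, 5, 0],
                       [3, 0, 0]])
lengths = torch.tensor([3, 2, 1])

packed = pack_padded_sequence(padded, lengths)

# The padding is gone; the values are stored time step by time step.
print(packed.data)         # tensor([1, 4, 6, 2, 5, 3])
# Number of sequences still active at each time step.
print(packed.batch_sizes)  # tensor([3, 2, 1])
```

With the default `enforce_sorted=True`, the lengths must be in descending order, which is why the batch is sorted beforehand.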

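Converting names to tensors:

① Split each name into characters and map each character to its ASCII code;

② Pad the sequences to a common length, which handles the differing lengths;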

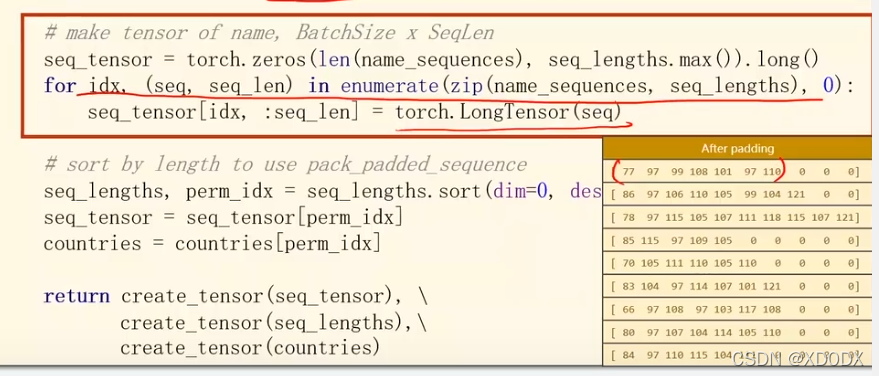

Padding implementation:

In practice, first create an all-zero tensor, then copy each sample's values into it one by one.

code:

    # Padding: make tensor of names, BatchSize x SeqLen
    # First create an all-zero tensor
    seq_tensor = torch.zeros(len(name_sequences), seq_lengths.max()).long()
    # Copy each sequence into the leading positions of its row
    for idx, (seq, seq_len) in enumerate(zip(name_sequences, seq_lengths), 0):
        seq_tensor[idx, :seq_len] = torch.LongTensor(seq)
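As an aside, PyTorch also ships `torch.nn.utils.rnn.pad_sequence`, which builds the same kind of zero-padded tensor. The sketch below is an alternative to the manual copy loop, not the approach used in this post, and the three code lists are made-up inputs.

```python
import torch
from torch.nn.utils.rnn import pad_sequence

# Made-up ASCII-code sequences of different lengths.
name_sequences = [[77, 97, 99], [65, 98], [90]]

# batch_first=True gives the BatchSize x SeqLen layout; padding value defaults to 0.
seq_tensor = pad_sequence(
    [torch.LongTensor(seq) for seq in name_sequences],
    batch_first=True,
)
print(seq_tensor)
# tensor([[77, 97, 99],
#         [65, 98,  0],
#         [90,  0,  0]])
```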

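③ Transpose, so that after the embedding layer the tensor has the (seqLen, batchSize, hiddenSize) layout that the GRU expects;

④ Sort the batch.

Sorting by sequence length:

code:

    # sort by length to use pack_padded_sequence
    # sort() returns seq_lengths (the sorted lengths) and perm_idx (the original indices in sorted order)
    seq_lengths, perm_idx = seq_lengths.sort(dim=0, descending=True)
    seq_tensor = seq_tensor[perm_idx]
    countries = countries[perm_idx]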
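A toy run of the sorting step, with invented values, shows how `perm_idx` keeps names and labels aligned after the reordering.

```python
import torch

# Three padded rows whose true lengths are 3, 7 and 5 (toy values).
seq_lengths = torch.LongTensor([3, 7, 5])
seq_tensor = torch.LongTensor([[1, 1, 1, 0, 0, 0, 0],
                               [2, 2, 2, 2, 2, 2, 2],
                               [3, 3, 3, 3, 3, 0, 0]])
countries = torch.LongTensor([10, 20, 30])

# Sorted lengths plus the permutation that produces that order.
seq_lengths, perm_idx = seq_lengths.sort(dim=0, descending=True)
print(seq_lengths)  # tensor([7, 5, 3])
print(perm_idx)     # tensor([1, 2, 0])

# Apply the same permutation to the inputs and the labels.
seq_tensor = seq_tensor[perm_idx]
countries = countries[perm_idx]
print(countries)    # tensor([20, 30, 10])
```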

The full code:

    import gzip
    import csv
    import math
    import time

    import torch
    from torch.utils.data import Dataset
    from torch.utils.data import DataLoader
    from torch.nn.utils.rnn import pack_padded_sequence

    HIDDEN_SIZE = 100
    BATCH_SIZE = 256
    N_LAYER = 2
    N_EPOCHS = 100
    N_CHARS = 128
    USE_GPU = False

    class NameDataset(Dataset):
        # Choose whether to read from the training set or the test set
        def __init__(self, is_train_set=True):
            filename = 'data/names_train.csv.gz' if is_train_set else 'data/names_test.csv.gz'
            with gzip.open(filename, 'rt') as f:
                reader = csv.reader(f)
                # Read all rows; each row is (name, language)
                rows = list(reader)
            # Collect element 0 of every row, i.e. the name (row[0]), into one list
            self.names = [row[0] for row in rows]
            self.len = len(self.names)
            # Same for countries; these become the labels
            self.countries = [row[1] for row in rows]
            # set() removes duplicates so each language appears once; sorted() then orders them
            self.country_list = list(sorted(set(self.countries)))
            # Turn this list into a dictionary
            self.country_dict = self.getCountryDict()
            self.country_num = len(self.country_list)

        # Indexed access; returns two items: the name and its label index
        def __getitem__(self, index):
            return self.names[index], self.country_dict[self.countries[index]]

        def __len__(self):
            return self.len

        def getCountryDict(self):
            country_dict = dict()
            for idx, country_name in enumerate(self.country_list, 0):
                country_dict[country_name] = idx
            return country_dict

        # Return the country string for a given index
        def idx2country(self, index):
            return self.country_list[index]

        # Return how many countries there are in total
        def getCountriesNum(self):
            return self.country_num

    trainset = NameDataset(is_train_set=True)
    trainloader = DataLoader(trainset, batch_size=BATCH_SIZE, shuffle=True)
    testset = NameDataset(is_train_set=False)
    testloader = DataLoader(testset, batch_size=BATCH_SIZE, shuffle=False)

    # N_COUNTRY determines the output dimension of the model
    N_COUNTRY = trainset.getCountriesNum()

    def create_tensor(tensor):
        if USE_GPU:
            device = torch.device("cuda:0")
            tensor = tensor.to(device)
        return tensor

    class RNNClassifier(torch.nn.Module):
        def __init__(self, input_size, hidden_size, output_size, n_layers=1, bidirectional=True):
            super(RNNClassifier, self).__init__()
            # hidden_size and n_layers are needed by the GRU
            self.hidden_size = hidden_size
            self.n_layers = n_layers
            self.n_directions = 2 if bidirectional else 1

            self.embedding = torch.nn.Embedding(input_size, hidden_size)
            self.gru = torch.nn.GRU(hidden_size, hidden_size, n_layers,
                                    bidirectional=bidirectional)
            # With n_directions == 2 there are two final hidden states (forward and backward);
            # they are concatenated before the linear layer, so its input size is
            # hidden_size * n_directions.
            self.fc = torch.nn.Linear(hidden_size * self.n_directions, output_size)

        def _init_hidden(self, batch_size):
            hidden = torch.zeros(self.n_layers * self.n_directions,
                                 batch_size, self.hidden_size)
            return create_tensor(hidden)

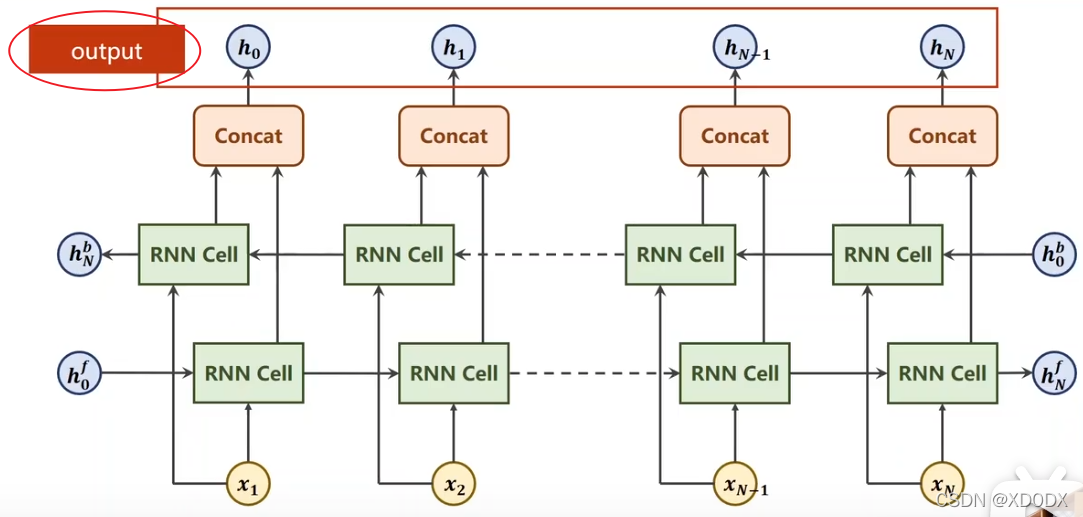

        def forward(self, input, seq_lengths):
            # input shape: B x S -> S x B. Transpose so that the embedded tensor has the
            # (seqLen, batchSize, hiddenSize) layout that the GRU expects.
            input = input.t()
            # Read off batch_size at the same time
            batch_size = input.size(1)

            # Build the initial hidden state h from the batch_size just obtained
            hidden = self._init_hidden(batch_size)
            embedding = self.embedding(input)

            # pack them up (seq_lengths must be a CPU tensor; see the GPU tip at the end)
            gru_input = pack_padded_sequence(embedding, seq_lengths)

            output, hidden = self.gru(gru_input, hidden)
            if self.n_directions == 2:
                hidden_cat = torch.cat([hidden[-1], hidden[-2]], dim=1)
            else:
                hidden_cat = hidden[-1]
            fc_output = self.fc(hidden_cat)
            return fc_output

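    # name2list returns a tuple: (list of ASCII codes, length)
    def name2list(name):
        # Turn the name into a list with a list comprehension:
        # each character becomes its ASCII code
        arr = [ord(c) for c in name]
        # Return the list itself and its length
        return arr, len(arr)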

    def make_tensors(names, countries):
        sequences_and_lengths = [name2list(name) for name in names]
        # Extract the code lists and their lengths
        name_sequences = [sl[0] for sl in sequences_and_lengths]
        seq_lengths = torch.LongTensor([sl[1] for sl in sequences_and_lengths])
        countries = countries.long()

        # Padding: make tensor of names, BatchSize x SeqLen
        # First create an all-zero tensor
        seq_tensor = torch.zeros(len(name_sequences), seq_lengths.max()).long()
        # Copy each sequence into the leading positions of its row
        for idx, (seq, seq_len) in enumerate(zip(name_sequences, seq_lengths), 0):
            seq_tensor[idx, :seq_len] = torch.LongTensor(seq)

        # sort by length to use pack_padded_sequence
        # sort() returns seq_lengths (the sorted lengths) and perm_idx (the original indices in sorted order)
        seq_lengths, perm_idx = seq_lengths.sort(dim=0, descending=True)
        seq_tensor = seq_tensor[perm_idx]
        countries = countries[perm_idx]

        return create_tensor(seq_tensor), create_tensor(seq_lengths), create_tensor(countries)

    def trainModel():
        total_loss = 0
        for i, (names, countries) in enumerate(trainloader, 1):
            inputs, seq_lengths, target = make_tensors(names, countries)
            output = classifier(inputs, seq_lengths)
            loss = criterion(output, target)
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

            total_loss += loss.item()
            if i % 10 == 0:
                print(f'[{time_since(start)}] Epoch {epoch} ', end='')
                print(f'[{i * len(inputs)}/{len(trainset)}] ', end='')
                print(f'loss={total_loss / (i * len(inputs))}')
        return total_loss

    def testModel():
        correct = 0
        total = len(testset)
        print("evaluating trained model ...")
        with torch.no_grad():
            for i, (names, countries) in enumerate(testloader, 1):
                inputs, seq_lengths, target = make_tensors(names, countries)
                output = classifier(inputs, seq_lengths)
                # Index of the largest score in each row is the predicted class
                pred = output.max(dim=1, keepdim=True)[1]
                correct += pred.eq(target.view_as(pred)).sum().item()

            percent = '%.2f' % (100 * correct / total)
            print(f'Test set: Accuracy {correct}/{total} {percent}%')
        return correct / total

    def time_since(since):
        # Current time minus start time
        s = time.time() - since
        m = math.floor(s / 60)
        s -= m * 60
        return '%dm %ds' % (m, s)

    if __name__ == '__main__':
        classifier = RNNClassifier(N_CHARS, HIDDEN_SIZE, N_COUNTRY, N_LAYER)
        if USE_GPU:
            device = torch.device("cuda:0")
            classifier.to(device)

        criterion = torch.nn.CrossEntropyLoss()
        optimizer = torch.optim.Adam(classifier.parameters(), lr=0.001)

        # Measure how long training takes
        start = time.time()
        print("Training for %d epochs..." % N_EPOCHS)
        acc_list = []
        for epoch in range(1, N_EPOCHS + 1):
            trainModel()
            acc = testModel()
            acc_list.append(acc)

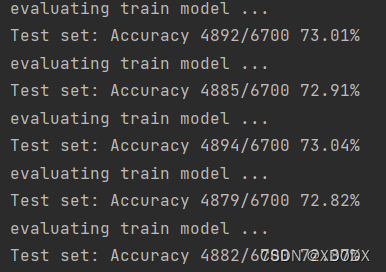

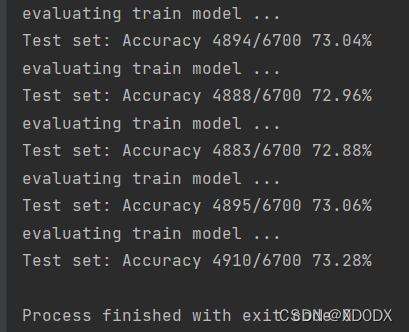

Results (CPU):

Results (GPU):

Tip: when using the GPU, change the packing line in forward by adding .cpu() to seq_lengths:

    # pack them up
    gru_input = pack_padded_sequence(embedding, seq_lengths.cpu())
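A minimal sketch of the same idea in isolation, assuming random data in place of the real embedding output. It selects the GPU only if one is available, so it also runs on a CPU-only machine, where `.cpu()` simply returns the tensor unchanged.

```python
import torch
from torch.nn.utils.rnn import pack_padded_sequence

# Fall back to the CPU when no GPU is present.
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Stand-in for the embedding output: (seqLen=4, batchSize=2, hiddenSize=8).
embedding = torch.randn(4, 2, 8, device=device)
seq_lengths = torch.tensor([4, 2], device=device)

# The data may live on the GPU, but the lengths are passed as a CPU tensor.
gru_input = pack_padded_sequence(embedding, seq_lengths.cpu())

print(gru_input.data.shape)  # torch.Size([6, 8]): 4 + 2 non-padded time steps
print(gru_input.data.device)
```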