Traceback (most recent call last):

File "D:\my_codeworkspace\bishe_new\jiaoben\train_KINN_NonFEM_based_swin_freezebone.py", line 264, in <module>

trainOneParameter(params)

File "D:\my_codeworkspace\bishe_new\jiaoben\train_KINN_NonFEM_based_swin_freezebone.py", line 234, in trainOneParameter

train(model_two=model_two_output,optimizer_model_two=optimizer,train_loader=train_loader)

File "D:\my_codeworkspace\bishe_new\jiaoben\train_KINN_NonFEM_based_swin_freezebone.py", line 130, in train

P_I_loss.backward()

File "C:\Users\asus\.conda\envs\pytorch\lib\site-packages\torch\_tensor.py", line 307, in backward

torch.autograd.backward(self, gradient, retain_graph, create_graph, inputs=inputs)

File "C:\Users\asus\.conda\envs\pytorch\lib\site-packages\torch\autograd\__init__.py", line 154, in backward

Variable._execution_engine.run_backward(

RuntimeError: one of the variables needed for gradient computation has been modified by an inplace operation: [torch.cuda.DoubleTensor [18, 3]], which is output 0 of AsStridedBackward0, is at version 1; expected version 0 instead. Hint: enable anomaly detection to find the operation that failed to compute its gradient, with torch.autograd.set_detect_anomaly(True).
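The failure can be reproduced without the original model. The following is a minimal sketch, assuming a small hypothetical two-layer network (`net`) and two made-up losses (`loss_a`, `loss_b`) that share a single forward pass; none of these names come from the training script. Calling `optimizer.step()` between the two `backward()` calls rewrites the weights in place, so the second `backward()` finds a saved tensor at a newer version than the one recorded in the graph.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical stand-in for the real model: two linear layers.
net = nn.Sequential(nn.Linear(3, 4), nn.Tanh(), nn.Linear(4, 2))
optimizer = torch.optim.SGD(net.parameters(), lr=0.1)

x = torch.randn(5, 3)
out = net(x)                    # one forward pass, one graph
loss_a = out.pow(2).mean()      # made-up first loss
loss_b = out.abs().mean()       # made-up second loss on the same graph

loss_a.backward(retain_graph=True)
optimizer.step()                # updates the weights in place
optimizer.zero_grad()

error_message = None
try:
    loss_b.backward()           # the graph still refers to the old weights
except RuntimeError as err:
    error_message = str(err)

print(error_message)
```

The sketch runs on the CPU, so the tensor type in the message differs from the one in the log above, but the wording about an inplace operation is the same.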

The relevant code, before any fix:

def train(model_two, optimizer_model_two, train_loader):
    model_two.train()  # train mode enables Dropout and BN
    # model_stress.train()
    for i, data in enumerate(train_loader):
        optimizer_model_two.zero_grad()  # use the optimizer to zero the gradients of the model parameters
        # optimizer_model_stress.zero_grad()
        data = tuple(i.cuda() for i in data)  # loop over the batch and move each tensor to the GPU
        strain, stress = model_two(
            (data[0], data[3][:, 0:2, :, :]))  # call the model's forward function to get the predictions
        # stress = model_stress((data[0], data[3][:, 0:2, :, :]))
        # print(data[8].shape)
        # print(displacement.shape)
        trival_loss = model_two.forward_loss(strain_target=data[2], strain=strain, stress_target=data[1], stress=stress)
        # loss2 = model_stress.forward_loss(stress_target=data[1], stress=stress)
        # print(displacement)
        # print('**********')
        # print(loss)
        P_I_loss = calculate_P_Iloss(stress_12=stress, strain_12=strain)
        # loss = loss1+loss2 + P_I_loss
        print('PI {}'.format(P_I_loss))
        print('trival {}'.format(trival_loss))
        # print(loss)
        # loss = trival_loss
        trival_loss.backward(retain_graph=True)  # tensor(item, grad_fn=<NllLossBackward>)
        # print(model_two.blocks.grad)
        # print(model_two.transform.grad)
        # print_module_grad(model_two.blocks)
        # print_module_grad(model_two.transform)
        # # P_I_loss.backward()
        # activate_module(model_two.blocks)
        # activate_module(model_two.transform)

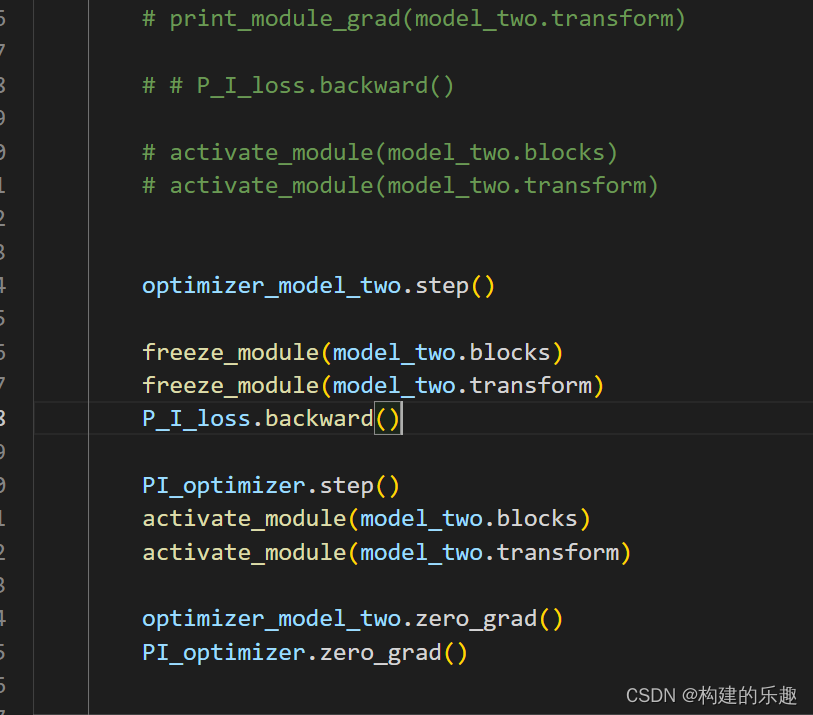

        optimizer_model_two.step()
        optimizer_model_two.zero_grad()
        freeze_module(model_two.blocks)
        freeze_module(model_two.transform)
        P_I_loss.backward()
        optimizer_model_two.step()
        optimizer_model_two.zero_grad()

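I assumed that once the requires_grad attribute of some modules' parameters was set to False, the optimizer would stop updating the parameters of the parts I did not want to train. It did not work out that way: my code failed at the P_I_loss.backward() call.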
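The script's `freeze_module` helper is not shown. Below is a minimal sketch of what such a helper might look like, assuming it only switches off `requires_grad` on the module's parameters (the body is a guess, not the author's implementation). The layers `backbone` and `head` are hypothetical. The check at the end confirms that, in this simple setting with plain SGD, a layer frozen before the forward pass is left untouched by the optimizer step.

```python
import torch
from torch import nn


def freeze_module(module: nn.Module) -> None:
    """Assumed behaviour: stop autograd from computing gradients for this module."""
    for p in module.parameters():
        p.requires_grad_(False)


torch.manual_seed(0)
backbone = nn.Linear(3, 4)   # hypothetical part to freeze
head = nn.Linear(4, 2)       # hypothetical part to keep training
params = list(backbone.parameters()) + list(head.parameters())
optimizer = torch.optim.SGD(params, lr=0.1)

freeze_module(backbone)      # frozen before the forward pass
backbone_before = backbone.weight.detach().clone()
head_before = head.weight.detach().clone()

loss = head(torch.tanh(backbone(torch.randn(5, 3)))).pow(2).mean()
loss.backward()
optimizer.step()

backbone_unchanged = torch.equal(backbone.weight, backbone_before)
head_changed = not torch.equal(head.weight, head_before)
print(backbone_unchanged, head_changed)
```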

My guess at the cause of the error:

The error log mentions the keyword in-place. Knowing that in-place operations can break the computation graph, I then noticed this:

optimizer_model_two.zero_grad()

freeze_module(model_two.blocks)

freeze_module(model_two.transform)

P_I_loss.backward()

optimizer_model_two.step()

optimizer_model_two.zero_grad()

zero_grad is an in-place operation!

So after every zero_grad, the forward pass has to be run again to rebuild the computation graph before backward can safely be used for backpropagation (in the freeze case).
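The point above can be sketched as follows: run a fresh forward pass after the first optimizer step, so that the second loss is built on the updated weights. This is a minimal sketch under stated assumptions; `net`, `first_loss` and `second_loss` are hypothetical stand-ins for `model_two`, `trival_loss` and `P_I_loss`, and the loss formulas are made up.

```python
import torch
from torch import nn

torch.manual_seed(0)
net = nn.Sequential(nn.Linear(3, 4), nn.Tanh(), nn.Linear(4, 2))
optimizer = torch.optim.SGD(net.parameters(), lr=0.1)
x = torch.randn(5, 3)

# Step 1: first loss, full update.
first_loss = net(x).pow(2).mean()
first_loss.backward()           # no retain_graph needed, the graph is used once
optimizer.step()
optimizer.zero_grad()

# Step 2: rebuild the graph with the updated weights, then use the second loss.
second_loss = net(x).abs().mean()
second_loss.backward()          # succeeds because this graph is fresh
second_backward_ok = all(p.grad is not None for p in net.parameters())
optimizer.step()
optimizer.zero_grad()

print(second_backward_ok)
```

The cost is one extra forward pass per batch. If some modules should be frozen for the second loss, switching off their `requires_grad` before the second forward pass means autograd never records them in the new graph.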

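This is where I got stuck. If, after the optimizer update for trival_loss, I skip zero_grad and then backpropagate P_I_loss, the gradient stored at some parameters is actually the sum of the gradients from the two backward passes (trival_loss and P_I_loss). The next optimizer step, meant for P_I_loss, then applies that sum, so the P_I_loss update is not what was intended: the trival_loss gradient gets applied twice.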
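The accumulation described above is easy to see on a single tensor. A small sketch with a hypothetical parameter `w` and two made-up losses: without a `zero_grad` in between, the second `backward()` adds its gradient to what is already stored in `w.grad`.

```python
import torch

w = torch.tensor([1.0, 2.0], requires_grad=True)

(w ** 2).sum().backward()        # gradient is 2 * w = [2, 4]
grad_after_first = w.grad.clone()

(3 * w).sum().backward()         # gradient [3, 3] is added to w.grad
grad_after_second = w.grad.clone()

print(grad_after_first)          # tensor([2., 4.])
print(grad_after_second)         # tensor([5., 7.])
```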

So what I need to work out now is whether requires_grad blocks the gradient at the backward stage or at the optimizer stage. My guess is the optimizer stage.
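One way to check this empirically is to look at `.grad` right after `backward()`, before any optimizer is involved. In the sketch below (hypothetical layers `frozen` and `trainable`, made-up loss), the parameters whose `requires_grad` was switched off before the forward pass still have `.grad` equal to `None` after `backward()`, which suggests the gradient is already withheld at the backward stage; the optimizer then has nothing to apply for them.

```python
import torch
from torch import nn

torch.manual_seed(0)
frozen = nn.Linear(3, 4)
trainable = nn.Linear(4, 2)
for p in frozen.parameters():
    p.requires_grad_(False)      # frozen before the forward pass

loss = trainable(torch.tanh(frozen(torch.randn(5, 3)))).pow(2).mean()
loss.backward()                  # no optimizer has been created at all

frozen_grads = [p.grad for p in frozen.parameters()]
trainable_grads = [p.grad for p in trainable.parameters()]

print(all(g is None for g in frozen_grads))          # True
print(all(g is not None for g in trainable_grads))   # True
```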

Update:

Today I found a deeper issue, which the following approach resolves:

To be precise, zero_grad is an in-place operation, and the optimizer step is an in-place operation as well, and the more dangerous of the two.
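The difference between the two calls can be observed through the tensor version counter that the error message refers to ("is at version 1; expected version 0"). The sketch below reads `Tensor._version`, an internal attribute, on a hypothetical layer: `optimizer.step()` writes new values into the weight and bumps its counter, while `optimizer.zero_grad()` only touches `.grad` and leaves the weight's counter alone.

```python
import torch
from torch import nn

torch.manual_seed(0)
layer = nn.Linear(3, 2)          # hypothetical layer, not from the script
optimizer = torch.optim.SGD(layer.parameters(), lr=0.1)

layer(torch.randn(4, 3)).pow(2).mean().backward()

version_start = layer.weight._version
optimizer.step()                 # writes new values into the weight itself
version_after_step = layer.weight._version
optimizer.zero_grad()            # clears .grad, the weight is not modified
version_after_zero_grad = layer.weight._version

print(version_start, version_after_step, version_after_zero_grad)
```

Under this reading, it is the step, not the zero_grad, that invalidates a graph built before it.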