import torch

from torchvision import datasets, transforms

import time

import numpy as np

import matplotlib.pyplot as plt

from PIL import Image

transform = transforms.Compose([transforms.ToTensor(),
                                transforms.Normalize((0.5,), (0.5,))])

trainset = datasets.FashionMNIST('~/.pytorch/F_MNIST_data/', download=True, train=True, transform=transform)

trainloader = torch.utils.data.DataLoader(trainset, batch_size=64, shuffle=True)

testset = datasets.FashionMNIST('~/.pytorch/F_MNIST_data/', download=True, train=False, transform=transform)

testloader = torch.utils.data.DataLoader(testset, batch_size=64, shuffle=True)
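The `transform` above first scales pixels to the range [0, 1] (`ToTensor`) and then applies `Normalize((0.5,), (0.5,))`, which computes `(x - mean) / std` per channel. A minimal sketch of that arithmetic in plain Python, where `normalize_pixel` is an illustrative helper and not part of torchvision:

```python
def normalize_pixel(pixel, mean=0.5, std=0.5):
    """Apply the same per-pixel formula as Normalize: (x - mean) / std."""
    return (pixel - mean) / std

# Darkest, middle, and brightest values after ToTensor scaling.
normalized = [normalize_pixel(p) for p in (0.0, 0.5, 1.0)]
print(normalized)  # [-1.0, 0.0, 1.0]
```

With mean 0.5 and std 0.5, the inputs fed to the network therefore lie in [-1, 1].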

from torch import nn, optim

import torch.nn.functional as F

from sklearn.metrics import accuracy_score

class Net(nn.Module):

    def __init__(self):
        super(Net, self).__init__()
        # Two 5x5 convolutions (stride 1, no padding), then two linear layers.
        self.conv1 = nn.Conv2d(1, 20, 5, 1)
        self.conv2 = nn.Conv2d(20, 50, 5, 1)
        self.fc1 = nn.Linear(4 * 4 * 50, 500)
        self.fc2 = nn.Linear(500, 10)

    def forward(self, x):
        x = F.relu(self.conv1(x))      # 1x28x28  -> 20x24x24
        x = F.max_pool2d(x, 2, 2)      # 20x24x24 -> 20x12x12
        x = F.relu(self.conv2(x))      # 20x12x12 -> 50x8x8
        x = F.max_pool2d(x, 2, 2)      # 50x8x8   -> 50x4x4
        x = x.view(-1, 4 * 4 * 50)     # flatten to (batch, 800)
        x = F.relu(self.fc1(x))
        x = self.fc2(x)
        # Log-probabilities, to pair with nn.NLLLoss.
        return F.log_softmax(x, dim=1)

    def predict(self, x):
        # Predicted class indices as a 1-D NumPy array.
        pred = self.forward(x).argmax(dim=1, keepdim=True).cpu().detach().numpy().reshape(1,-1)[0]
        return pred
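The `4 * 4 * 50` input size of `fc1` follows from the layer shapes. A minimal sketch of that arithmetic, assuming 28x28 inputs and no padding; the helpers `conv_out` and `pool_out` are illustrative names, not PyTorch functions:

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a square convolution (no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1

def pool_out(size, kernel, stride):
    """Output side length of a square max-pooling window."""
    return (size - kernel) // stride + 1

side = 28                       # Fashion-MNIST images are 28x28
side = conv_out(side, 5)        # conv1: 28 -> 24
side = pool_out(side, 2, 2)     # pool:  24 -> 12
side = conv_out(side, 5)        # conv2: 12 -> 8
side = pool_out(side, 2, 2)     # pool:  8 -> 4
flat_features = side * side * 50    # conv2 produces 50 channels
print(side, flat_features)      # 4 800
```

The result matches `in_features=800` reported for `fc1` when the model is printed.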

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

criterion = nn.NLLLoss()

model = Net().to(device)

optimizer = optim.SGD(model.parameters(),lr=0.003)


class EarlyStopping:
    '''Stops training when the validation loss has not improved for `patience` epochs.'''

    def __init__(self, patience=7, verbose=False, delta=0):
        self.patience = patience    # epochs to wait after the last improvement
        self.verbose = verbose      # print a message on every improvement
        self.counter = 0
        self.best_score = None
        self.early_stop = False
        self.val_loss_min = np.inf  # np.Inf was removed in NumPy 2.0
        self.delta = delta          # minimum change that counts as an improvement

    def __call__(self, val_loss, model):
        # Higher score is better, so negate the loss.
        score = -val_loss

        if self.best_score is None:
            self.best_score = score
            self.save_checkpoint(val_loss, model)
        elif score < self.best_score + self.delta:
            # No improvement this epoch.
            self.counter += 1
            print(f'EarlyStopping counter: {self.counter} out of {self.patience}')
            if self.counter >= self.patience:
                self.early_stop = True
        else:
            self.best_score = score
            self.save_checkpoint(val_loss, model)
            self.counter = 0

    def save_checkpoint(self, val_loss, model):
        '''Saves the model when the validation loss decreases.'''
        if self.verbose:
            print(f'Validation loss decreased ({self.val_loss_min:.6f} --> {val_loss:.6f}). Saving model ...')
        torch.save(model.state_dict(), 'checkpoint.pt')
        self.val_loss_min = val_loss
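The patience logic of `EarlyStopping` can be checked without training a model. The sketch below mirrors only the counter bookkeeping (no checkpointing) on a made-up list of validation losses; `epochs_until_stop` and `demo_losses` are hypothetical names introduced for illustration.

```python
def epochs_until_stop(val_losses, patience=3, delta=0.0):
    """Return the 1-based epoch at which training would stop, or None."""
    best_score = None
    counter = 0
    for epoch, val_loss in enumerate(val_losses, start=1):
        score = -val_loss
        if best_score is None or score >= best_score + delta:
            # Improvement (or first epoch): remember it and reset the counter.
            best_score = score
            counter = 0
        else:
            counter += 1
            if counter >= patience:
                return epoch
    return None

# Hypothetical losses: improvement until epoch 3, a new best at epoch 6,
# then three epochs in a row without improvement.
demo_losses = [0.90, 0.70, 0.65, 0.66, 0.67, 0.64, 0.65, 0.66, 0.68]
stop_epoch = epochs_until_stop(demo_losses, patience=3)
print(stop_epoch)  # 9
```

The new best at epoch 6 resets the counter, so the stop only triggers after epochs 7, 8, and 9 all fail to improve on it.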

epochs = 200
losses = []
losses_test = []

for e in range(epochs):
    since = time.time()
    print("epoch:",e+1)
    running_loss = 0
    acc = 0

    for images, labels in trainloader:
        images = images.to(device)
        labels = labels.to(device)

        log_ps = model(images)
        loss = criterion(log_ps, labels)

        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        acc = acc + accuracy_score(model(images).argmax(dim=1, keepdim=True).cpu().detach().numpy().reshape(1,-1)[0],labels.cpu().detach().numpy().reshape(1,-1)[0])
        running_loss += loss.item()

    print("Average trainning loss:{:.4f}".format(running_loss/len(trainloader)))
    losses.append(running_loss/len(trainloader))
    print("Average Accuracy:{:.4f}".format(acc/len(trainloader)))

    test_loss = 0
    correct = 0
    with torch.no_grad():
        for data, target in testloader:
            data, target = data.to(device), target.to(device)
            output = model(data)
            test_loss += F.nll_loss(output, target, reduction='sum').item()
            pred = output.argmax(dim=1, keepdim=True)
            correct += pred.eq(target.view_as(pred)).sum().item()

    test_loss /= len(testloader.dataset)
    losses_test.append(test_loss)
    print('Test set: Average loss: {:.4f}\nAccuracy: {}/{} ({:.0f}%)'.format(
        test_loss, correct, len(testloader.dataset),
        100. * correct / len(testloader.dataset)))

    print("total acc:{:.4f}".format((correct / len(testloader.dataset)+acc/len(trainloader))/2))
    time_elapsed = time.time() - since
    print('Training complete in {:.0f}m {:.0f}s\n'.format(
        time_elapsed // 60, time_elapsed % 60))

epoch: 1

Average trainning loss:1.8171

Average Accuracy:0.4848

Test set: Average loss: 1.0191

Accuracy: 6506/10000 (65%)

total acc:0.5677

Training complete in 0m 17s

epoch: 2

Average trainning loss:0.8103

Average Accuracy:0.7237

Test set: Average loss: 0.7470

Accuracy: 7318/10000 (73%)

total acc:0.7277

Training complete in 0m 17s

...

epoch: 198

Average trainning loss:0.0796

Average Accuracy:0.9936

Test set: Average loss: 0.3552

Accuracy: 9007/10000 (90%)

total acc:0.9471

Training complete in 0m 18s

epoch: 199

Average trainning loss:0.0789

Average Accuracy:0.9940

Test set: Average loss: 0.3161

Accuracy: 9079/10000 (91%)

total acc:0.9509

Training complete in 0m 18s

epoch: 200

Average trainning loss:0.0761

Average Accuracy:0.9946

Test set: Average loss: 0.3057

Accuracy: 9109/10000 (91%)

total acc:0.9527

Training complete in 0m 18s

fig = plt.figure(figsize=(14,8))

plt.plot(range(len(losses)),losses,label="train_loss")

plt.plot(range(len(losses)),losses_test,label="losses_test")

plt.legend()


device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
criterion = nn.NLLLoss()
model = Net().to(device)
optimizer = optim.SGD(model.parameters(),lr=0.003)

epochs = 200
patience = 10
early_stopping = EarlyStopping(patience, verbose=True)
losses = []
losses_test = []

for e in range(epochs):
    since = time.time()
    print("epoch:",e+1)
    running_loss = 0
    acc = 0

    for images, labels in trainloader:
        images = images.to(device)
        labels = labels.to(device)

        log_ps = model(images)
        loss = criterion(log_ps, labels)

        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        acc = acc + accuracy_score(model(images).argmax(dim=1, keepdim=True).cpu().detach().numpy().reshape(1,-1)[0],labels.cpu().detach().numpy().reshape(1,-1)[0])
        running_loss += loss.item()

    print("Average trainning loss:{:.4f}".format(running_loss/len(trainloader)))
    losses.append(running_loss/len(trainloader))
    print("Average Accuracy:{:.4f}".format(acc/len(trainloader)))

    test_loss = 0
    correct = 0
    with torch.no_grad():
        for data, target in testloader:
            data, target = data.to(device), target.to(device)
            output = model(data)
            test_loss += F.nll_loss(output, target, reduction='sum').item()
            pred = output.argmax(dim=1, keepdim=True)
            correct += pred.eq(target.view_as(pred)).sum().item()

    test_loss /= len(testloader.dataset)
    losses_test.append(test_loss)
    print('Test set: Average loss: {:.4f}\nAccuracy: {}/{} ({:.0f}%)'.format(
        test_loss, correct, len(testloader.dataset),
        100. * correct / len(testloader.dataset)))

    # Track the test loss; stop once it has not improved for `patience` epochs.
    early_stopping(test_loss, model)
    if early_stopping.early_stop:
        print("Early stopping")
        print("total acc:{:.4f}\n".format((correct / len(testloader.dataset)+acc/len(trainloader))/2))
        time_elapsed = time.time() - since
        print('Training complete in {:.0f}m {:.0f}s'.format(
            time_elapsed // 60, time_elapsed % 60))
        break

    print("total acc:{:.4f}".format((correct / len(testloader.dataset)+acc/len(trainloader))/2))
    time_elapsed = time.time() - since
    print('Training complete in {:.0f}m {:.0f}s\n'.format(
        time_elapsed // 60, time_elapsed % 60))

epoch: 1

Average trainning loss:1.5747

Average Accuracy:0.5249

Test set: Average loss: 0.8811

Accuracy: 6810/10000 (68%)

Validation loss decreased (inf --> 0.881058). Saving model ...

total acc:0.6029

Training complete in 0m 18s

epoch: 2

Average trainning loss:0.7524

Average Accuracy:0.7442

Test set: Average loss: 0.7077

Accuracy: 7396/10000 (74%)

Validation loss decreased (0.881058 --> 0.707704). Saving model ...

total acc:0.7419

Training complete in 0m 18s

...

epoch: 57

Average trainning loss:0.2447

Average Accuracy:0.9316

Test set: Average loss: 0.3118

Accuracy: 8885/10000 (89%)

EarlyStopping counter: 9 out of 10

total acc:0.9100

Training complete in 0m 19s

epoch: 58

Average trainning loss:0.2426

Average Accuracy:0.9325

Test set: Average loss: 0.3106

Accuracy: 8875/10000 (89%)

EarlyStopping counter: 10 out of 10

Early stopping

total acc:0.9100

Training complete in 0m 19s

fig = plt.figure(figsize=(14,8))

plt.plot(range(len(losses)),losses,label="train_loss")

plt.plot(range(len(losses)),losses_test,label="losses_test")

plt.legend()


print(model)

Net(
  (conv1): Conv2d(1, 20, kernel_size=(5, 5), stride=(1, 1))
  (conv2): Conv2d(20, 50, kernel_size=(5, 5), stride=(1, 1))
  (fc1): Linear(in_features=800, out_features=500, bias=True)
  (fc2): Linear(in_features=500, out_features=10, bias=True)
)

text_labels = ['t-shirt', 'trouser', 'pullover', 'dress', 'coat', 'sandal', 'shirt', 'sneaker', 'bag', 'ankle boot']

# Count how many training samples belong to each class.
dic = {}
for i in text_labels:
    dic[i] = 0

trainloader_total = torch.utils.data.DataLoader(trainset, batch_size=1, shuffle=True)
for images, labels in trainloader_total:
    dic[text_labels[labels[0]]] = dic[text_labels[labels[0]]] + 1

num = []
for i in dic:
    num.append(dic[i])

fig = plt.figure(figsize=(14,8))
plt.bar(range(len(num)),num)
plt.xticks(range(len(num)),text_labels)
plt.ylabel("Number of samples")


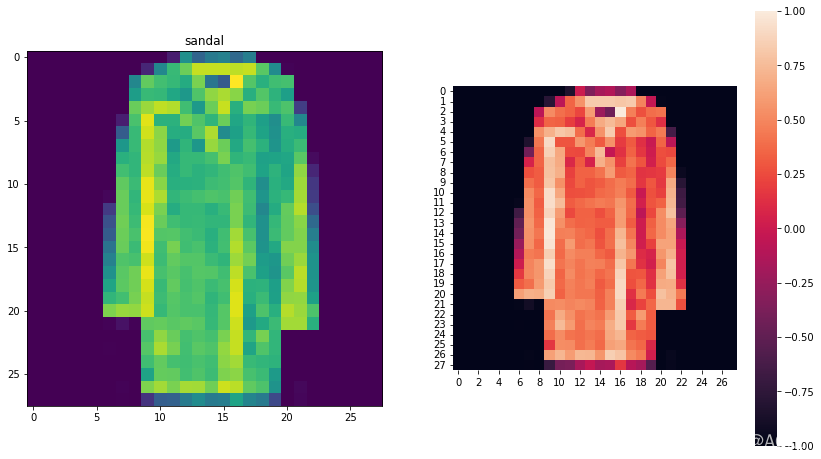

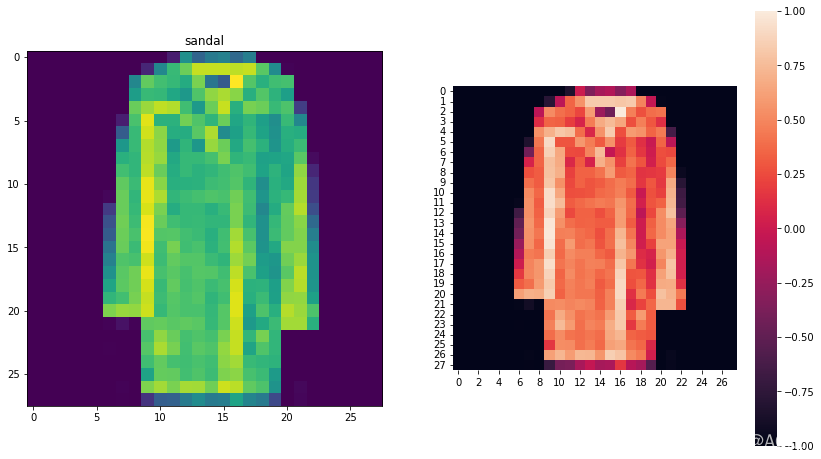
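The per-class counts for the bar chart are built by looping over a `DataLoader` one sample at a time. A lighter alternative, sketched here under assumptions, is to count the integer labels directly with `collections.Counter`. The list `sample_labels` below is made-up data for illustration; with the real dataset the labels could presumably be taken from `trainset.targets` instead.

```python
from collections import Counter

text_labels = ['t-shirt', 'trouser', 'pullover', 'dress', 'coat',
               'sandal', 'shirt', 'sneaker', 'bag', 'ankle boot']

# Hypothetical integer labels standing in for the dataset's targets.
sample_labels = [9, 0, 0, 3, 0, 2, 7, 2, 5, 5]

# Map each integer label to its name and count occurrences.
counts = Counter(text_labels[i] for i in sample_labels)

# Same order as text_labels, with 0 for classes that never occur.
num = [counts.get(name, 0) for name in text_labels]
print(num)  # [3, 0, 2, 1, 0, 2, 0, 1, 0, 1]
```

The resulting `num` list has the same layout as the one passed to `plt.bar`, so the plotting code would not need to change.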

# Load the whole training set as a single batch and keep the first image.
trainloader_tota2 = torch.utils.data.DataLoader(trainset, batch_size=60000, shuffle=True)
for images, labels in trainloader_tota2:
    img = images[0].reshape((28, 28)).numpy()
    break


import seaborn as sns

fig = plt.figure(figsize=(14,8))

fig = plt.subplot(121)

plt.imshow(img)

plt.title(text_labels[labels[0]])

fig = plt.subplot(122)

sns.heatmap(img,square=True)
