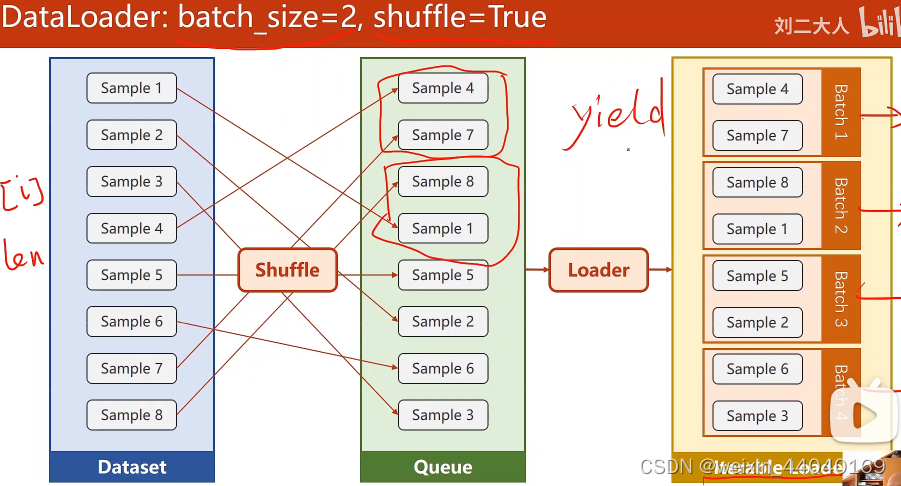

Dataset and DataLoader

Loading data

Dataset: builds the dataset (supports indexing)

DataLoader: serves mini-batches from it
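A minimal sketch of the two roles, using PyTorch's built-in TensorDataset on made-up data. The tensor sizes and the batch size of 4 are assumptions chosen for illustration; they are not the diabetes data used later in these notes.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# Made-up data: 10 samples with 8 features each, plus one label per sample.
features = torch.arange(80, dtype=torch.float32).reshape(10, 8)
labels = torch.zeros(10, 1)

dataset = TensorDataset(features, labels)

# A Dataset supports len() and indexing; each item is an (x, y) pair.
n_samples = len(dataset)
x0, y0 = dataset[0]

# A DataLoader groups the items into mini-batches.
loader = DataLoader(dataset, batch_size=4, shuffle=False)
batch_shapes = [tuple(x.shape) for x, _ in loader]
print(n_samples, tuple(x0.shape), batch_shapes)
```

Because 10 is not divisible by 4, the last mini-batch is smaller than the others; passing drop_last=True to DataLoader would discard it instead.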

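Full batch: faster computation, because the whole dataset is processed in one vectorized pass

Single sample: the randomness helps the optimizer get past saddle points (drawback: training takes longer)

Mini-batch: a compromise that balances the two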

Example: 10,000 samples

batch size: 1,000

iterations per epoch (one pass over all samples): 10,000 / 1,000 = 10

The data is shuffled first, then split into mini-batches (see the figure)
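The shuffle-then-split step can be sketched at the level of sample indices. This is only a conceptual illustration with the numbers from the example above; DataLoader handles this internally through its samplers, and the variable names here are made up.

```python
import torch

n_samples = 10_000
batch_size = 1_000

# Step 1: shuffle the sample indices.
perm = torch.randperm(n_samples)

# Step 2: cut the shuffled indices into consecutive chunks of batch_size.
batches = perm.split(batch_size)

# One epoch visits every chunk once, so this is the iteration count per epoch.
iterations_per_epoch = len(batches)
print(iterations_per_epoch)
```

Every index appears in exactly one mini-batch, so each sample is used once per epoch, just in a different order each time the indices are reshuffled.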

    import torch
    import numpy as np
    from torch.utils.data import Dataset     # abstract class: cannot be instantiated directly, must be subclassed
    from torch.utils.data import DataLoader  # concrete class: can be instantiated directly

    # Dataset implementation
    class DiabetesDataset(Dataset):
        def __init__(self, filepath):
            xy = np.loadtxt(filepath, delimiter=',', dtype=np.float32)
            self.len = xy.shape[0]  # shape is (rows, columns) = (n, 9); rows = number of samples
            self.x_data = torch.from_numpy(xy[:, :-1])   # all columns except the last: features
            self.y_data = torch.from_numpy(xy[:, [-1]])  # last column kept 2-D, shape (n, 1): labels

        def __getitem__(self, index):  # magic method: enables dataset[index]
            return self.x_data[index], self.y_data[index]  # return the sample at that index

        def __len__(self):  # magic method: enables len(dataset)
            return self.len

    dataset = DiabetesDataset('diabetes.csv')  # instantiate the dataset
    # shuffle=True shuffles the samples each epoch;
    # num_workers=2 loads batches in 2 parallel worker processes (not threads)
    train_loader = DataLoader(dataset=dataset, batch_size=32, shuffle=True, num_workers=2)

    # Model
    class Model(torch.nn.Module):
        def __init__(self):
            super(Model, self).__init__()
            self.linear1 = torch.nn.Linear(8, 6)
            self.linear2 = torch.nn.Linear(6, 4)
            self.linear3 = torch.nn.Linear(4, 1)
            self.sigmoid = torch.nn.Sigmoid()

        def forward(self, x):
            x = self.sigmoid(self.linear1(x))
            x = self.sigmoid(self.linear2(x))
            x = self.sigmoid(self.linear3(x))
            return x

    model = Model()

    # Construct loss and optimizer
    criterion = torch.nn.BCELoss(reduction='mean')
    optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

    # Training cycle: forward, backward, update
    if __name__ == '__main__':
        for epoch in range(100):
            # The loader shuffles first, then splits the data into mini-batches;
            # enumerate gives the index i of the current mini-batch.
            for i, data in enumerate(train_loader, 0):
                # Each batch is the (x, y) pairs returned by __getitem__,
                # automatically stacked into tensors.
                inputs, labels = data
                y_pred = model(inputs)  # forward pass on the whole mini-batch
                loss = criterion(y_pred, labels)
                print(epoch, i, loss.item())
                optimizer.zero_grad()
                loss.backward()         # backward
                optimizer.step()        # update

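With multiple worker processes (num_workers > 0) you may run into a problem:

Fix: put the code that iterates over the loader under an if guard (optionally wrapped in a function that is called from the guard):

    if __name__ == '__main__':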
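A minimal sketch of that pattern, assuming synthetic stand-in data rather than diabetes.csv. The function names count_batches and main are hypothetical and only serve the illustration.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset


def count_batches(loader):
    """Iterate over the loader once and return the number of mini-batches."""
    n_batches = 0
    for inputs, labels in loader:
        n_batches += 1
    return n_batches


def main(num_workers=0):
    # Synthetic stand-in data (assumption): 64 samples, 8 features, 1 binary label.
    dataset = TensorDataset(torch.randn(64, 8), torch.rand(64, 1).round())
    loader = DataLoader(dataset, batch_size=32, shuffle=True, num_workers=num_workers)
    return count_batches(loader)


if __name__ == '__main__':
    # Worker processes are only started from inside this guard, so a worker
    # that re-imports this file does not start iterating the loader again.
    print(main(num_workers=2))
```

Keeping the loop inside a function also makes it easy to fall back to num_workers=0, which loads data in the main process and avoids the issue entirely.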