Outsourced project | Facial expression classification on the CK/jaffe/fer2013 datasets with LBP/HOG/CNN


1. Data

Download from CSDN

If the download doesn't work, leave your email address in the comments below~

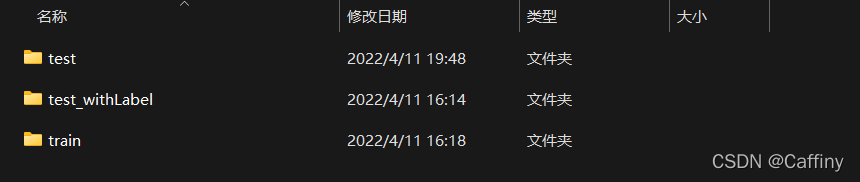

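All three datasets are already preprocessed: images are resized to (48, 48) and split into training and test sets. Help yourself if you need them.

The datasets and the code must be placed in the same directory.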

2. Code

2-1. LBP and HOG

a. Reading the dataset

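    import os
    import cv2

    def readData(dataName, label2id):
        """Load the grayscale training images of one dataset, scaled to [0, 1]."""
        X, Y = [], []
        path = f'./{dataName}/train'
        # Each sub-folder of train/ is named after its class label
        for label in os.listdir(path):
            for image in os.listdir(os.path.join(path, label)):
                img = cv2.imread(os.path.join(path, label, image), cv2.IMREAD_GRAYSCALE)
                img = img / 255.0
                X.append(img)
                Y.append(label2id[label])
        return X, Y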
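`readData` expects a `label2id` dictionary that the post does not show. Below is a minimal sketch of one way to build it, assuming the class labels are simply the sub-folder names under `train/`. The helper name `buildLabel2id` is hypothetical and not part of the original code.

```python
import os

def buildLabel2id(trainPath):
    """Map each class sub-folder name to an integer id (hypothetical helper)."""
    # Sort the folder names so the mapping is identical on every run
    labels = sorted(
        d for d in os.listdir(trainPath)
        if os.path.isdir(os.path.join(trainPath, d))
    )
    return {label: idx for idx, label in enumerate(labels)}
```

With the dataset layout described above, it could be used as `label2id = buildLabel2id('./CK/train')` followed by `X, Y = readData('CK', label2id)`.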

b. LBP

The LBP algorithm mainly follows a write-up on Zhihu; the code was rewritten as follows:

    import numpy as np

    def lbpSingle(img):
        h, w = img.shape
        # Output matrix of the same size as the image; border pixels stay 0
        lbp = np.zeros(img.shape)
        for i in range(1, h - 1):
            for j in range(1, w - 1):
                # 1 where a pixel of the 3x3 window is greater than the center pixel
                window = (img[i - 1:i + 2, j - 1:j + 2] > img[i][j]).astype(np.int8)
                # Flatten, then read the 8 neighbors clockwise from the top-left,
                # skipping the center (index 4)
                bits = window.reshape(9)[[0, 1, 2, 5, 8, 7, 6, 3]]
                # Convert the 0/1 binary string to a decimal code
                lbp[i][j] = int(''.join(str(b) for b in bits), 2)
        # The LBP feature of this image
        return [lbp.flatten()]

    def lbpBatch(imgs):
        return [lbpSingle(img)[0] for img in imgs]
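The double loop over pixels is easy to follow but slow on larger datasets. Here is a minimal vectorized sketch of the same 3x3 LBP idea, assuming the eight neighbors are read clockwise starting at the top-left and the first neighbor is the most significant bit. The name `lbpVectorized` and the `OFFSETS` table are illustrative, not from the original code.

```python
import numpy as np

# (row, col) offsets of the 8 neighbors, clockwise from the top-left
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]

def lbpVectorized(img):
    """Compute 3x3 LBP codes for all interior pixels at once."""
    img = np.asarray(img, dtype=np.float64)
    h, w = img.shape
    center = img[1:h - 1, 1:w - 1]
    codes = np.zeros(center.shape, dtype=np.int64)
    for bit, (dy, dx) in enumerate(OFFSETS):
        # Shifted view holding, for every interior pixel, one of its neighbors
        neighbor = img[1 + dy:h - 1 + dy, 1 + dx:w - 1 + dx]
        # Set this neighbor's bit where it is greater than the center
        codes += (neighbor > center) * (1 << (7 - bit))
    out = np.zeros((h, w), dtype=np.int64)  # border pixels stay 0
    out[1:-1, 1:-1] = codes
    return out

patch = np.array([[5, 9, 1],
                  [4, 6, 7],
                  [2, 3, 8]])
# Neighbors greater than the center 6, clockwise: 0 1 0 1 1 0 0 0 -> 0b01011000
print(lbpVectorized(patch)[1, 1])  # 88
```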

c. HOG

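HOG is computed by calling the library's `hog` function directly (it comes from scikit-image, `skimage.feature`, rather than sklearn):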

    from skimage.feature import hog

    def hogSingle(img):
        # Only the feature vector is needed, so visualize stays at its default (False)
        feature = hog(img, orientations=9, pixels_per_cell=(6, 6), cells_per_block=(6, 6),
                      block_norm='L2-Hys')
        return [feature]

    def hogBatch(imgs):
        return [hogSingle(img)[0] for img in imgs]
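To see how large the HOG vector is for these settings, the length can be worked out by hand. This sketch assumes the usual sliding-block layout, where blocks move one cell at a time; the helper `hogFeatureLength` is hypothetical and only does the arithmetic.

```python
def hogFeatureLength(imgShape, orientations, pixelsPerCell, cellsPerBlock):
    """Length of the flattened HOG vector (hypothetical helper, arithmetic only)."""
    # Number of whole cells that fit along each axis
    cellsY = imgShape[0] // pixelsPerCell[0]
    cellsX = imgShape[1] // pixelsPerCell[1]
    # Blocks slide one cell at a time across the cell grid
    blocksY = cellsY - cellsPerBlock[0] + 1
    blocksX = cellsX - cellsPerBlock[1] + 1
    # Every block contributes one histogram per cell it contains
    return blocksY * blocksX * cellsPerBlock[0] * cellsPerBlock[1] * orientations

# 48x48 image, 6x6-pixel cells -> 8x8 cells; 6x6-cell blocks -> 3x3 blocks
print(hogFeatureLength((48, 48), 9, (6, 6), (6, 6)))  # 2916
```

So each 48x48 image becomes a 2916-dimensional vector, slightly more than the 2304 values of the flattened LBP map.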

d. Classification models

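    from sklearn.svm import SVC
    from sklearn.neighbors import KNeighborsClassifier
    from sklearn.tree import DecisionTreeClassifier
    from sklearn.naive_bayes import GaussianNB
    from sklearn.linear_model import LogisticRegression
    from sklearn.ensemble import RandomForestClassifier

    def prepareModel(name):
        if name == 'svm':
            m = SVC(C=2, kernel='rbf', gamma=10, decision_function_shape='ovr')  # acc=0.9534
        elif name == 'knn':
            m = KNeighborsClassifier(n_neighbors=1)
        elif name == 'dt':
            m = DecisionTreeClassifier()
        elif name == 'nb':
            m = GaussianNB()
        elif name == 'lg':
            m = LogisticRegression()
        else:
            # Any other name falls back to a random forest
            m = RandomForestClassifier(n_estimators=180, random_state=0)
        return m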
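The post stops at building the classifier, so here is a minimal sketch of how a model like this might be trained and scored. It uses synthetic feature vectors in place of real LBP/HOG features, and the hold-out split and variable names are assumptions rather than the original training script.

```python
import numpy as np
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

rng = np.random.default_rng(0)
# Synthetic stand-in for extracted features: 2 classes, 40 samples each, 16 dimensions
X = np.vstack([rng.normal(0.0, 0.1, (40, 16)), rng.normal(1.0, 0.1, (40, 16))])
Y = np.array([0] * 40 + [1] * 40)

# Hold out a quarter of the samples for evaluation, keeping the class balance
xTrain, xTest, yTrain, yTest = train_test_split(
    X, Y, test_size=0.25, random_state=0, stratify=Y
)

model = KNeighborsClassifier(n_neighbors=1)  # same settings as the 'knn' branch
model.fit(xTrain, yTrain)
acc = accuracy_score(yTest, model.predict(xTest))
print(f'accuracy: {acc:.4f}')
```

With real data, `X` would be the output of `lbpBatch` or `hogBatch` and `Y` the labels returned by `readData`.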


2-2. CNN

a. Preparing the dataset

    from tensorflow.keras.preprocessing.image import ImageDataGenerator

    # Prepare the dataset
    whichDataSet = 'CK'  # which dataset to use: CK / fer2013 / jaffe
    trainDir = f'./{whichDataSet}/train/'  # path of the training set
    trainingDataGenerator = ImageDataGenerator(
        rescale=1. / 255,  # rescaling factor; every pixel value is multiplied by it
        rotation_range=40,  # integer; range in degrees for random rotations
        width_shift_range=0.2,  # float; random horizontal shift as a fraction of the image width
        height_shift_range=0.2,  # float; random vertical shift as a fraction of the image height
        shear_range=0.2,  # float; shear intensity (shear angle, counter-clockwise)
        zoom_range=0.2,  # range for random zoom
        validation_split=0.25,  # fraction of the training images held out for validation
        horizontal_flip=True,  # boolean; random horizontal flips, suitable when a flip does not change the image's meaning
        fill_mode='nearest'  # one of 'constant', 'nearest', 'reflect', 'wrap'; how points outside the boundaries are filled
    )
    trainGenerator = trainingDataGenerator.flow_from_directory(
        trainDir, subset='training', target_size=(48, 48), class_mode='categorical'
    )
    validGenerator = trainingDataGenerator.flow_from_directory(
        trainDir, subset='validation', target_size=(48, 48), class_mode='categorical'
    )

b. Building the model

A CNN with three convolutional layers is used here:

    import tensorflow as tf
    from tensorflow.keras.layers import Conv2D, MaxPooling2D, Flatten, Dense, Dropout

    # Build the model
    model = tf.keras.models.Sequential([
        # 1
        Conv2D(16, (5, 5), activation='relu', input_shape=(48, 48, 3), padding='same'),
        MaxPooling2D(2, 2),
        # 2
        Conv2D(32, (5, 5), activation='relu', padding='same'),
        MaxPooling2D(2, 2),
        # 3
        Conv2D(32, (5, 5), activation='relu', padding='same'),
        MaxPooling2D(2, 2),
        # 4
        Flatten(),
        Dense(128, activation='relu'),
        Dropout(0.5),
        Dense(7, activation='softmax')
    ])
    model.summary()
    model.compile(loss='categorical_crossentropy', optimizer='Adam', metrics=['accuracy'])
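As a sanity check on what `model.summary()` should report, the layer sizes and parameter counts can be worked out by hand. The sketch below is plain arithmetic under the settings shown above ('same' padding, 2x2 pooling with stride 2); the helper names are illustrative.

```python
def convParams(kernel, inChannels, filters):
    # kernel * kernel * inChannels weights per filter, plus one bias per filter
    return kernel * kernel * inChannels * filters + filters

def denseParams(inUnits, outUnits):
    # One weight per input/output pair, plus one bias per output unit
    return inUnits * outUnits + outUnits

size = 48
for _ in range(3):
    # 'same' convolutions keep the size; each 2x2 pooling halves it: 48 -> 24 -> 12 -> 6
    size //= 2
flatUnits = size * size * 32  # output of Flatten()

total = (convParams(5, 3, 16) + convParams(5, 16, 32) + convParams(5, 32, 32)
         + denseParams(flatUnits, 128) + denseParams(128, 7))
print(flatUnits, total)  # 1152 188167
```

Most of the parameters (147,584 of 188,167) sit in the first dense layer, which is where the `Dropout(0.5)` is applied.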

c. Testing

Read the unlabeled images in the test folder, pass them to the trained model as a batch, and write the predictions to a csv file.

    import os
    import pickle
    import numpy as np
    import pandas as pd
    from tensorflow.keras.models import load_model
    from tensorflow.keras.preprocessing import image

    # Test the model
    # Load the trained model and the label mapping
    model = load_model(f'./{whichDataSet}/{whichDataSet}.h5')
    with open(f'./{whichDataSet}/class2id.pkl', 'rb') as f:
        class2id = pickle.load(f)  # looked up below by predicted class index

    # Prepare the test set
    fileList = os.listdir(f'./{whichDataSet}/test/')
    testSet = []
    for file in fileList:
        img = image.load_img(f'./{whichDataSet}/test/{file}', target_size=(48, 48))
        testSet.append(image.img_to_array(img))
    # Apply the same 1/255 rescaling that the training generator uses
    testSet = np.array(testSet) / 255.0

    # Predict
    modelOutput = model.predict(testSet, batch_size=10)

    # Save the results, one row per test file
    getLabel = np.argmax(modelOutput, axis=1).flatten()
    df = pd.DataFrame({'file': fileList, 'label': [class2id[i] for i in getLabel]})
    df.to_csv(f'./{whichDataSet}/result.csv')
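The test script loads `class2id.pkl` and looks entries up by predicted class index, but the post does not show how that file is written. Here is a minimal sketch, assuming it is the inverse of the `class_indices` dictionary that `flow_from_directory` exposes on the generator. The example dictionary and the temporary path are made up for illustration.

```python
import os
import pickle
import tempfile

# Stand-in for trainGenerator.class_indices (class name -> index); values are illustrative
classIndices = {'anger': 0, 'happy': 1, 'sadness': 2}

# Invert it so that a predicted index can be turned back into a class name
id2class = {idx: name for name, idx in classIndices.items()}

# Written to a temporary folder here; the test script expects ./{whichDataSet}/class2id.pkl
path = os.path.join(tempfile.mkdtemp(), 'class2id.pkl')
with open(path, 'wb') as f:
    pickle.dump(id2class, f)

with open(path, 'rb') as f:
    restored = pickle.load(f)
print(restored[1])  # happy
```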


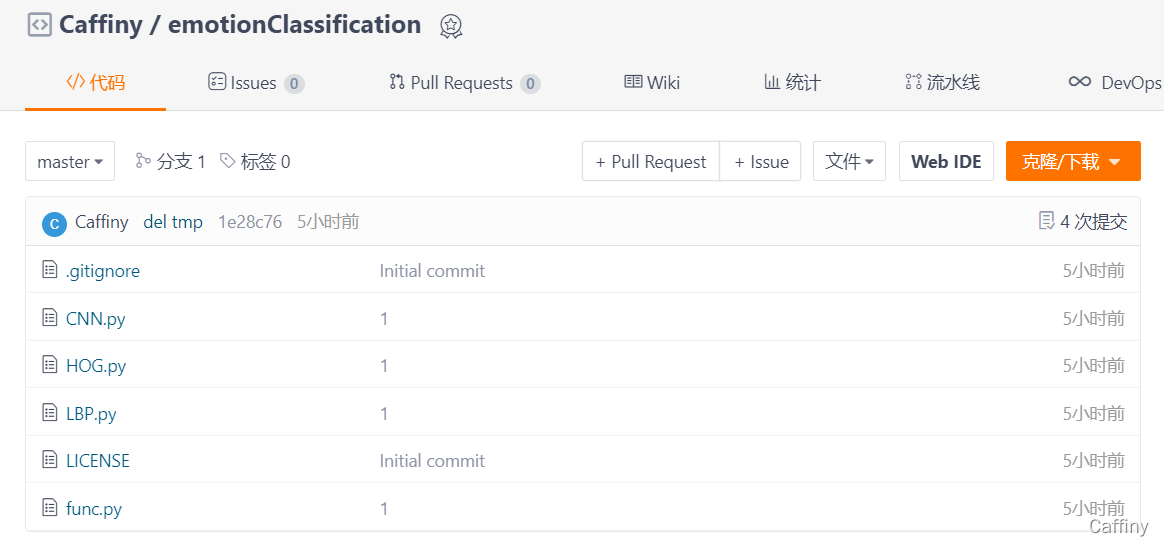

Full code

The full code is on gitee. Help yourself if you need it, and stars are welcome!

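This code was written as a small graduation project for someone else, so I did not study the HOG and LBP algorithms in depth. This blog post is just a record, and a way to share the datasets and code~

If you can't download the datasets, leave your email address in the comments below and I'll send them to you by email once I see it.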