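1. Environment setup (installing the rknn environment on a PC running Ubuntu 16.04)

(1) Install Anaconda (recommended, since it makes your environments easier to manage; search online for the installation steps. I installed Anaconda3-2019-Linux-x86_64.sh)

(2) Download the rknn installation package

A note on versions: install the latest release published by Rockchip. I previously used 1.6 and ran into unexplained errors during model conversion.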

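Download link: https://github.com/rockchip-linux/rknn-toolkit

Links for the version I installed (1.7.1):

1) Source code: https://pan.baidu.com/s/1r7zg8MPWIKagUkAguYTyhA (extraction code: ajbk)

2) Integrated development environment: https://pan.baidu.com/s/1JH6HFfM9VLIynV_pqw-jRw (extraction code: 7fks)

Note: the red box marks the rknn SDK development environment that needs to be installed; the green box marks the official source code provided by Rockchip.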

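(3) Install the rknn environment

1) Create and activate a virtual environment

conda create -n rk_env_1.7 python=3.6

conda activate rk_env_1.7

2) Install dependencies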

pip install tensorflow-gpu==1.14.0

pip install torch==1.5.1

pip install torchvision==0.4.0

pip install mxnet-cu101==1.5.0

pip3 install opencv-python

pip3 install gluoncv

3) Install the rknn package

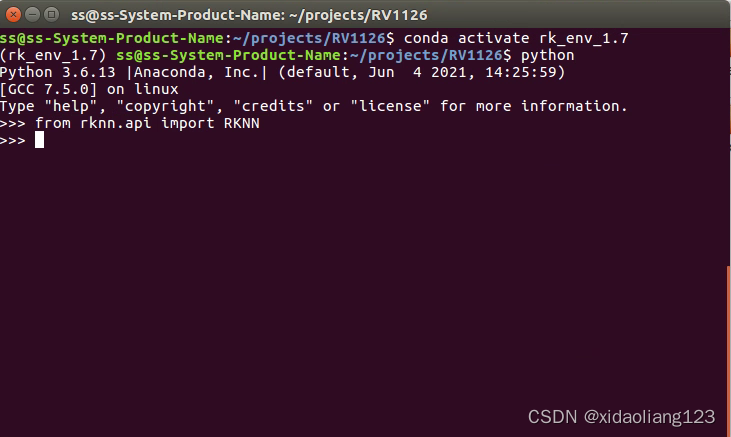

pip install rknn_toolkit-1.7.1-cp36-cp36m-linux_x86_64.whl

4) Test whether the installation succeeded
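One simple check is to confirm that `rknn.api`, the module imported by the scripts later in this post, can be imported in the active environment. This is only a minimal sketch; the helper name `module_available` is hypothetical and not part of rknn-toolkit:

```python
import importlib


def module_available(name):
    """Return True if the module `name` can be imported in this environment."""
    try:
        importlib.import_module(name)
    except ImportError:
        return False
    return True


if __name__ == '__main__':
    # 'rknn.api' is the module used by the conversion and inference scripts.
    if module_available('rknn.api'):
        print('rknn-toolkit import OK')
    else:
        print('rknn.api not found; check that the rk_env_1.7 environment is active')
```

If the import fails, the most likely causes are that the conda environment is not activated or that the wheel's Python tag (cp36) does not match the interpreter.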

2. Model conversion

(1) Converting yolov5s.pt to yolov5s.onnx

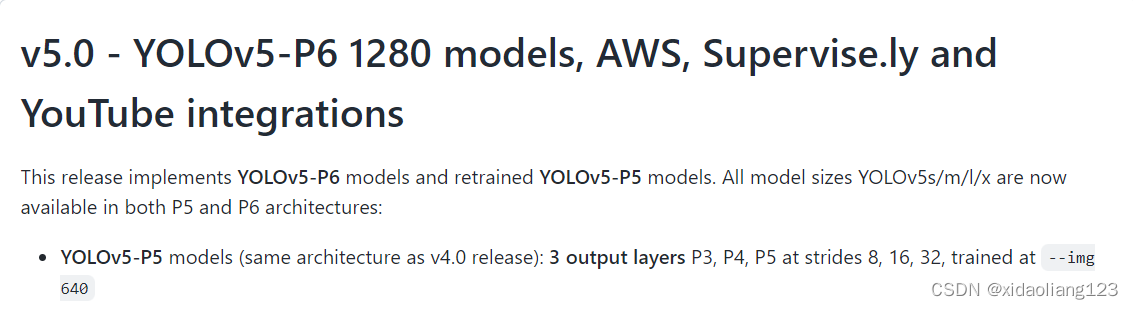

YOLOv5 is updated frequently; Rockchip uses YOLOv5 v5.0.

Project source and download link: https://github.com/ultralytics/yolov5/releases

Baidu Cloud link: (to be added)

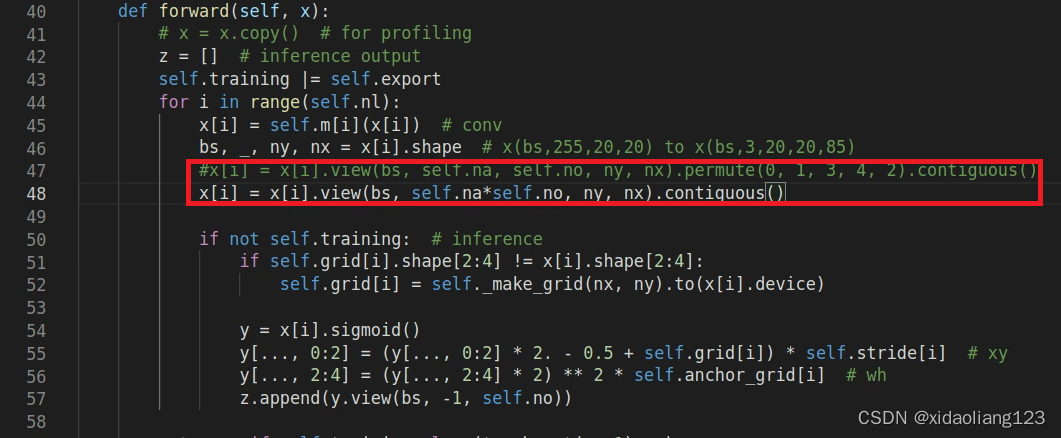

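Note: yolo.py has to be modified before converting to ONNX. The modification is as follows:

(2) Command for converting to ONNX

export PYTHONPATH="$PWD" && python models/export.py --weights ./weights/yolov5s.pt --img 640 --batch 1

(3) Converting ONNX to RKNN

Command:

python yolov5_rknn.py

Source code: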

import os
import sys

import numpy as np
from rknn.api import RKNN

ONNX_MODEL = 'yolov5s.onnx'
RKNN_MODEL = 'yolov5s.rknn'

if __name__ == '__main__':
    # Create RKNN object
    rknn = RKNN(verbose=True)

    # Pre-process config: mean 0 and std 255 scale the input pixels to [0, 1]
    print('--> config model')
    rknn.config(mean_values=[[0, 0, 0]], std_values=[[255, 255, 255]],
                reorder_channel='0 1 2', target_platform='rv1126',
                quantized_dtype='asymmetric_affine-u8', optimization_level=3,
                output_optimize=1)
    print('done')

    # Load the ONNX model
    print('--> Loading model')
    ret = rknn.load_onnx(model=ONNX_MODEL)
    if ret != 0:
        print('Load model failed!')
        exit(ret)
    print('done')

    # Build model (quantized using the images listed in dataset.txt)
    print('--> Building model')
    ret = rknn.build(do_quantization=True, dataset='./dataset.txt')  # , pre_compile=True
    if ret != 0:
        print('Build yolov5s failed!')
        exit(ret)
    print('done')

    # Export rknn model
    print('--> Export RKNN model')
    ret = rknn.export_rknn(RKNN_MODEL)
    if ret != 0:
        print('Export yolov5s.rknn failed!')
        exit(ret)
    print('done')

    rknn.release()
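The `rknn.build` call above reads its quantization calibration images from `./dataset.txt`, which this post does not show. Assuming the usual rknn-toolkit layout of one image path per line, such a file could be generated roughly as follows. The helper name `write_dataset_file` and the `./calib_images` directory are hypothetical:

```python
import os


def write_dataset_file(image_dir, out_path='dataset.txt',
                       exts=('.jpg', '.jpeg', '.png')):
    """Write one image path per line to out_path and return the paths written."""
    paths = sorted(
        os.path.join(image_dir, name)
        for name in os.listdir(image_dir)
        if name.lower().endswith(exts)
    )
    with open(out_path, 'w') as f:
        for p in paths:
            f.write(p + '\n')
    return paths


if __name__ == '__main__':
    # './calib_images' is a placeholder directory of calibration images.
    if os.path.isdir('./calib_images'):
        print(len(write_dataset_file('./calib_images')), 'paths written')
```

Quantization accuracy generally depends on how well these images represent the data the model will see after deployment, so it is worth sampling them from the real use case.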

3. Visual inference test of the rknn model

import os
import urllib
import traceback
import time
import sys

import numpy as np
import cv2
from rknn.api import RKNN

RKNN_MODEL = 'yolov5s.rknn'
IMG_PATH = 'dog.jpg'
QUANTIZE_ON = True

BOX_THRESH = 0.5
NMS_THRESH = 0.6
IMG_SIZE = 640

CLASSES = ("person", "bicycle", "car", "motorbike", "aeroplane", "bus", "train", "truck", "boat", "traffic light",
           "fire hydrant", "stop sign", "parking meter", "bench", "bird", "cat", "dog", "horse", "sheep", "cow", "elephant",
           "bear", "zebra", "giraffe", "backpack", "umbrella", "handbag", "tie", "suitcase", "frisbee", "skis", "snowboard", "sports ball", "kite",
           "baseball bat", "baseball glove", "skateboard", "surfboard", "tennis racket", "bottle", "wine glass", "cup", "fork", "knife",
           "spoon", "bowl", "banana", "apple", "sandwich", "orange", "broccoli", "carrot", "hot dog", "pizza", "donut", "cake", "chair", "sofa",
           "pottedplant", "bed", "diningtable", "toilet", "tvmonitor", "laptop", "mouse", "remote", "keyboard", "cell phone", "microwave",
           "oven", "toaster", "sink", "refrigerator", "book", "clock", "vase", "scissors", "teddy bear", "hair drier", "toothbrush")

def sigmoid(x):
    return 1 / (1 + np.exp(-x))


def xywh2xyxy(x):
    # Convert [x, y, w, h] (center format) to [x1, y1, x2, y2]
    y = np.copy(x)
    y[:, 0] = x[:, 0] - x[:, 2] / 2  # top left x
    y[:, 1] = x[:, 1] - x[:, 3] / 2  # top left y
    y[:, 2] = x[:, 0] + x[:, 2] / 2  # bottom right x
    y[:, 3] = x[:, 1] + x[:, 3] / 2  # bottom right y
    return y


def resize_postprocess(x, scale_x, scale_y):
    # Rescale [x1, y1, x2, y2] from the resized model input back to the original image.
    # scale_x and scale_y are IMG_SIZE / original width and IMG_SIZE / original height.
    y = np.copy(x)
    y[:, 0] = x[:, 0] / scale_x  # top left x
    y[:, 1] = x[:, 1] / scale_y  # top left y
    y[:, 2] = x[:, 2] / scale_x  # bottom right x
    y[:, 3] = x[:, 3] / scale_y  # bottom right y
    return y

def process(pred, mask, anchors):
    # pred has shape (grid_h, grid_w, 3, 5 + num_classes)
    anchors = [anchors[i] for i in mask]
    grid_h, grid_w = map(int, pred.shape[0:2])

    box_confidence = sigmoid(pred[..., 4])
    box_confidence = np.expand_dims(box_confidence, axis=-1)

    box_class_probs = sigmoid(pred[..., 5:])

    box_xy = sigmoid(pred[..., :2]) * 2 - 0.5

    # Build the per-cell (column, row) offsets; col[i, j] = j and row[i, j] = i
    col = np.tile(np.arange(0, grid_w), grid_h).reshape(grid_h, grid_w)
    row = np.tile(np.arange(0, grid_h).reshape(-1, 1), grid_w)
    col = col.reshape(grid_h, grid_w, 1, 1).repeat(3, axis=-2)
    row = row.reshape(grid_h, grid_w, 1, 1).repeat(3, axis=-2)
    grid = np.concatenate((col, row), axis=-1)
    box_xy += grid
    box_xy *= int(IMG_SIZE / grid_h)  # stride of this output layer

    box_wh = pow(sigmoid(pred[..., 2:4]) * 2, 2)
    box_wh = box_wh * anchors

    box = np.concatenate((box_xy, box_wh), axis=-1)

    return box, box_confidence, box_class_probs

def filter_boxes(boxes, box_confidences, box_class_probs):
    """Filter boxes with the box threshold. This differs slightly from the original yolov5 post-processing!

    # Arguments
        boxes: ndarray, boxes of objects.
        box_confidences: ndarray, confidences of objects.
        box_class_probs: ndarray, class_probs of objects.

    # Returns
        boxes: ndarray, filtered boxes.
        classes: ndarray, classes for boxes.
        scores: ndarray, scores for boxes.
    """
    box_classes = np.argmax(box_class_probs, axis=-1)
    box_class_scores = np.max(box_class_probs, axis=-1)
    pos = np.where(box_confidences[..., 0] >= BOX_THRESH)

    boxes = boxes[pos]
    classes = box_classes[pos]
    scores = box_class_scores[pos]

    return boxes, classes, scores

def nms_boxes(boxes, scores):
    """Suppress non-maximal boxes.

    # Arguments
        boxes: ndarray, boxes of objects as [x1, y1, x2, y2].
        scores: ndarray, scores of objects.

    # Returns
        keep: ndarray, index of effective boxes.
    """
    x = boxes[:, 0]
    y = boxes[:, 1]
    w = boxes[:, 2] - boxes[:, 0]
    h = boxes[:, 3] - boxes[:, 1]

    areas = w * h
    order = scores.argsort()[::-1]  # indices sorted by descending score

    keep = []
    while order.size > 0:
        i = order[0]
        keep.append(i)

        # Intersection of the best box with all remaining boxes
        xx1 = np.maximum(x[i], x[order[1:]])
        yy1 = np.maximum(y[i], y[order[1:]])
        xx2 = np.minimum(x[i] + w[i], x[order[1:]] + w[order[1:]])
        yy2 = np.minimum(y[i] + h[i], y[order[1:]] + h[order[1:]])

        w1 = np.maximum(0.0, xx2 - xx1 + 0.00001)
        h1 = np.maximum(0.0, yy2 - yy1 + 0.00001)
        inter = w1 * h1

        # IoU; boxes overlapping the best box by more than NMS_THRESH are dropped
        ovr = inter / (areas[i] + areas[order[1:]] - inter)
        inds = np.where(ovr <= NMS_THRESH)[0]
        order = order[inds + 1]
    keep = np.array(keep)
    return keep

def yolov5_post_process(input_data):
    masks = [[0, 1, 2], [3, 4, 5], [6, 7, 8]]
    anchors = [[10, 13], [16, 30], [33, 23], [30, 61], [62, 45],
               [59, 119], [116, 90], [156, 198], [373, 326]]

    # Decode and threshold each of the three output layers
    boxes, classes, scores = [], [], []
    for pred, mask in zip(input_data, masks):
        b, conf, probs = process(pred, mask, anchors)
        b, c, s = filter_boxes(b, conf, probs)
        boxes.append(b)
        classes.append(c)
        scores.append(s)

    boxes = np.concatenate(boxes)
    boxes = xywh2xyxy(boxes)
    classes = np.concatenate(classes)
    scores = np.concatenate(scores)

    # Run NMS separately for each class
    nboxes, nclasses, nscores = [], [], []
    for c in set(classes):
        inds = np.where(classes == c)
        b = boxes[inds]
        cls = classes[inds]
        s = scores[inds]

        keep = nms_boxes(b, s)

        nboxes.append(b[keep])
        nclasses.append(cls[keep])
        nscores.append(s[keep])

    if not nclasses and not nscores:
        return None, None, None

    boxes = np.concatenate(nboxes)
    classes = np.concatenate(nclasses)
    scores = np.concatenate(nscores)

    return boxes, classes, scores

def draw(image, boxes, scores, classes):
    """Draw the boxes on the image.

    # Arguments
        image: original image.
        boxes: ndarray, boxes of objects as [x1, y1, x2, y2].
        scores: ndarray, scores of objects.
        classes: ndarray, classes of objects.
    """
    for box, score, cl in zip(boxes, scores, classes):
        # Boxes are [x1, y1, x2, y2], i.e. left, top, right, bottom
        x1, y1, x2, y2 = box
        print('class: {}, score: {}'.format(CLASSES[cl], score))
        print('box coordinate left,top,right,down: [{}, {}, {}, {}]'.format(x1, y1, x2, y2))
        x1, y1, x2, y2 = int(x1), int(y1), int(x2), int(y2)

        cv2.rectangle(image, (x1, y1), (x2, y2), (255, 0, 0), 2)
        cv2.putText(image, '{0} {1:.2f}'.format(CLASSES[cl], score),
                    (x1, y1 - 6),
                    cv2.FONT_HERSHEY_SIMPLEX,
                    0.6, (0, 0, 255), 2)

def letterbox(im, new_shape=(640, 640), color=(0, 0, 0)):
    # Resize while keeping the aspect ratio, then pad to new_shape
    shape = im.shape[:2]  # current shape [height, width]
    if isinstance(new_shape, int):
        new_shape = (new_shape, new_shape)

    # Scale ratio (new / old)
    r = min(new_shape[0] / shape[0], new_shape[1] / shape[1])

    # Compute padding
    ratio = r, r  # width, height ratios
    new_unpad = int(round(shape[1] * r)), int(round(shape[0] * r))
    dw, dh = new_shape[1] - new_unpad[0], new_shape[0] - new_unpad[1]  # wh padding

    dw /= 2  # divide padding into 2 sides
    dh /= 2

    if shape[::-1] != new_unpad:  # resize
        im = cv2.resize(im, new_unpad, interpolation=cv2.INTER_LINEAR)
    top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))
    left, right = int(round(dw - 0.1)), int(round(dw + 0.1))
    im = cv2.copyMakeBorder(im, top, bottom, left, right, cv2.BORDER_CONSTANT, value=color)  # add border
    return im, ratio, (dw, dh)


def letter_box_postprocess(x, scalingfactor, xy_correction):
    # Undo the letterbox: subtract the padding, then divide by the scale ratio
    y = np.copy(x)
    y[:, 0] = (x[:, 0] - xy_correction[0]) / scalingfactor  # top left x
    y[:, 1] = (x[:, 1] - xy_correction[1]) / scalingfactor  # top left y
    y[:, 2] = (x[:, 2] - xy_correction[0]) / scalingfactor  # bottom right x
    y[:, 3] = (x[:, 3] - xy_correction[1]) / scalingfactor  # bottom right y
    return y


def get_file(filepath):
    # Read a text file and return its lines (one image path per line)
    templist = []
    with open(filepath, "r") as f:
        for item in f.readlines():
            templist.append(item.strip())
    return templist

if __name__ == '__main__':
    # Create RKNN object
    rknn = RKNN()

    image_process_mode = "letter_box"  # "resize" or "letter_box"
    print("image_process_mode = ", image_process_mode)

    if not os.path.exists(RKNN_MODEL):
        print('model not exist')
        exit(-1)

    # Load RKNN model
    print('--> Loading model')
    ret = rknn.load_rknn(RKNN_MODEL)
    if ret != 0:
        print('Load rknn model failed!')
        exit(ret)
    print('done')

    # Init runtime environment
    print('--> Init runtime environment')
    ret = rknn.init_runtime()
    # ret = rknn.init_runtime('rk180_8', device_id='1808')
    if ret != 0:
        print('Init runtime environment failed')
        exit(ret)
    print('done')

    image_list = get_file("test_image.txt")
    for image_path in image_list:
        # Set inputs
        image = cv2.imread(image_path)
        if image is None:
            print('Failed to read image:', image_path)
            continue
        image_name = os.path.basename(image_path)
        img_height, img_width = image.shape[:2]

        img = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
        if image_process_mode == "resize":
            img = cv2.resize(img, (IMG_SIZE, IMG_SIZE))
        elif image_process_mode == "letter_box":
            img, scale_factor, correction = letterbox(img)

        # Inference
        print('--> Running model')
        outputs = rknn.inference(inputs=[img])

        # Post process: reshape each output to (3, 5 + num_classes, grid_h, grid_w),
        # then transpose to (grid_h, grid_w, 3, 5 + num_classes)
        input_data = []
        for output in outputs[:3]:
            output = output.reshape([3, -1] + list(output.shape[-2:]))
            input_data.append(np.transpose(output, (2, 3, 0, 1)))

        boxes, classes, scores = yolov5_post_process(input_data)

        # Only rescale and draw when something was detected (boxes is None otherwise)
        if boxes is not None:
            if image_process_mode == "resize":
                scale_h = IMG_SIZE / img_height
                scale_w = IMG_SIZE / img_width
                boxes = resize_postprocess(boxes, scale_w, scale_h)
            elif image_process_mode == "letter_box":
                boxes = letter_box_postprocess(boxes, scale_factor[0], correction)
            draw(image, boxes, scores, classes)

        cv2.imwrite("./" + image_name, image)

    rknn.release()
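To make the decoding step in `process` more concrete, the sketch below applies the same formulas to a single grid cell: the box center is `(sigmoid(t_xy) * 2 - 0.5 + cell) * stride` and the box size is `(sigmoid(t_wh) * 2) ** 2 * anchor`. The function name `decode_cell` and the sample numbers are only for illustration:

```python
import numpy as np


def sigmoid(x):
    return 1 / (1 + np.exp(-x))


def decode_cell(t, cell_xy, anchor_wh, stride):
    """Decode one raw prediction (tx, ty, tw, th) into [cx, cy, w, h] in input pixels."""
    t = np.asarray(t, dtype=np.float64)
    xy = (sigmoid(t[:2]) * 2 - 0.5 + np.asarray(cell_xy)) * stride
    wh = (sigmoid(t[2:4]) * 2) ** 2 * np.asarray(anchor_wh)
    return np.concatenate((xy, wh))


if __name__ == '__main__':
    # With all-zero logits, sigmoid(0) = 0.5, so the center falls in the middle
    # of the cell and the box has exactly the anchor size.
    # Cell (10, 20) on the stride-16 layer (40x40 grid) with anchor (30, 61):
    print(decode_cell([0, 0, 0, 0], cell_xy=(10, 20), anchor_wh=(30, 61), stride=16))
    # center (168, 328), size (30, 61)
```

Because the sigmoid output lies in (0, 1), the center offset is limited to (-0.5, 1.5) cells and the size to less than 4 times the anchor.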

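Note: I modified the rknn test demo provided by Rockchip. The official demo only preprocesses the image with resize, and its post-processing does not map the boxes back to the original image size. I added both resize and letterbox preprocessing, and each preprocessing mode has its own matching post-processing.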
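The letterbox mapping can be checked with plain arithmetic, without running the model. The sketch below mirrors the scale and padding computed in `letterbox` and the inverse mapping in `letter_box_postprocess`; the helper names `letterbox_params`, `to_model` and `to_original` are hypothetical:

```python
import numpy as np


def letterbox_params(orig_hw, new_hw=(640, 640)):
    """Return (scale, (pad_x, pad_y)) the same way the letterbox function computes them."""
    h, w = orig_hw
    r = min(new_hw[0] / h, new_hw[1] / w)
    new_w, new_h = int(round(w * r)), int(round(h * r))
    return r, ((new_hw[1] - new_w) / 2, (new_hw[0] - new_h) / 2)


def to_model(boxes, r, pad):
    """Map [x1, y1, x2, y2] boxes from original-image to letterboxed coordinates."""
    boxes = np.asarray(boxes, dtype=np.float64)
    return boxes * r + np.array([pad[0], pad[1], pad[0], pad[1]])


def to_original(boxes, r, pad):
    """Inverse mapping, equivalent to letter_box_postprocess."""
    boxes = np.asarray(boxes, dtype=np.float64)
    return (boxes - np.array([pad[0], pad[1], pad[0], pad[1]])) / r


if __name__ == '__main__':
    r, pad = letterbox_params((720, 1280))  # a 1280x720 image
    print(r, pad)  # 0.5 (0.0, 140.0)
    box = [[100, 200, 300, 400]]
    print(to_model(box, r, pad))  # box as seen by the model
    print(to_original(to_model(box, r, pad), r, pad))  # back to the original box
```

In resize mode there is no padding, and the x and y axes are scaled independently, which is why `resize_postprocess` divides by two separate factors instead of one ratio.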

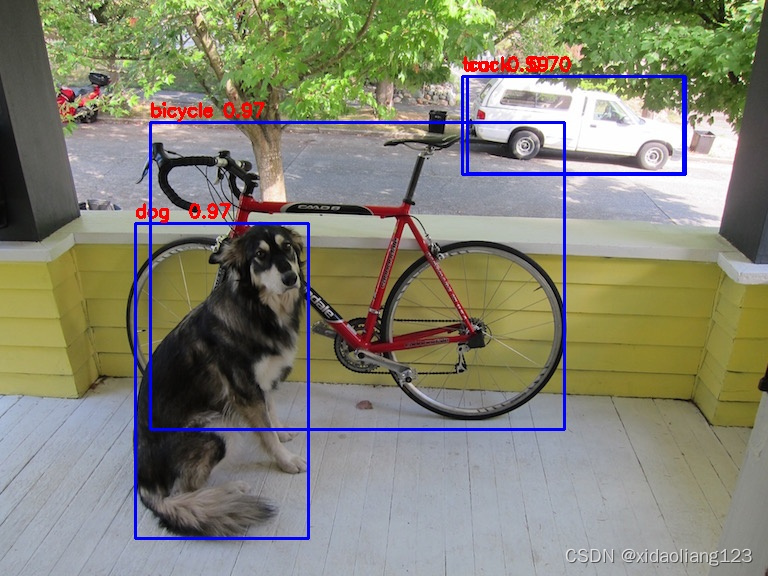

Visualization test results: