MobileNet Networks

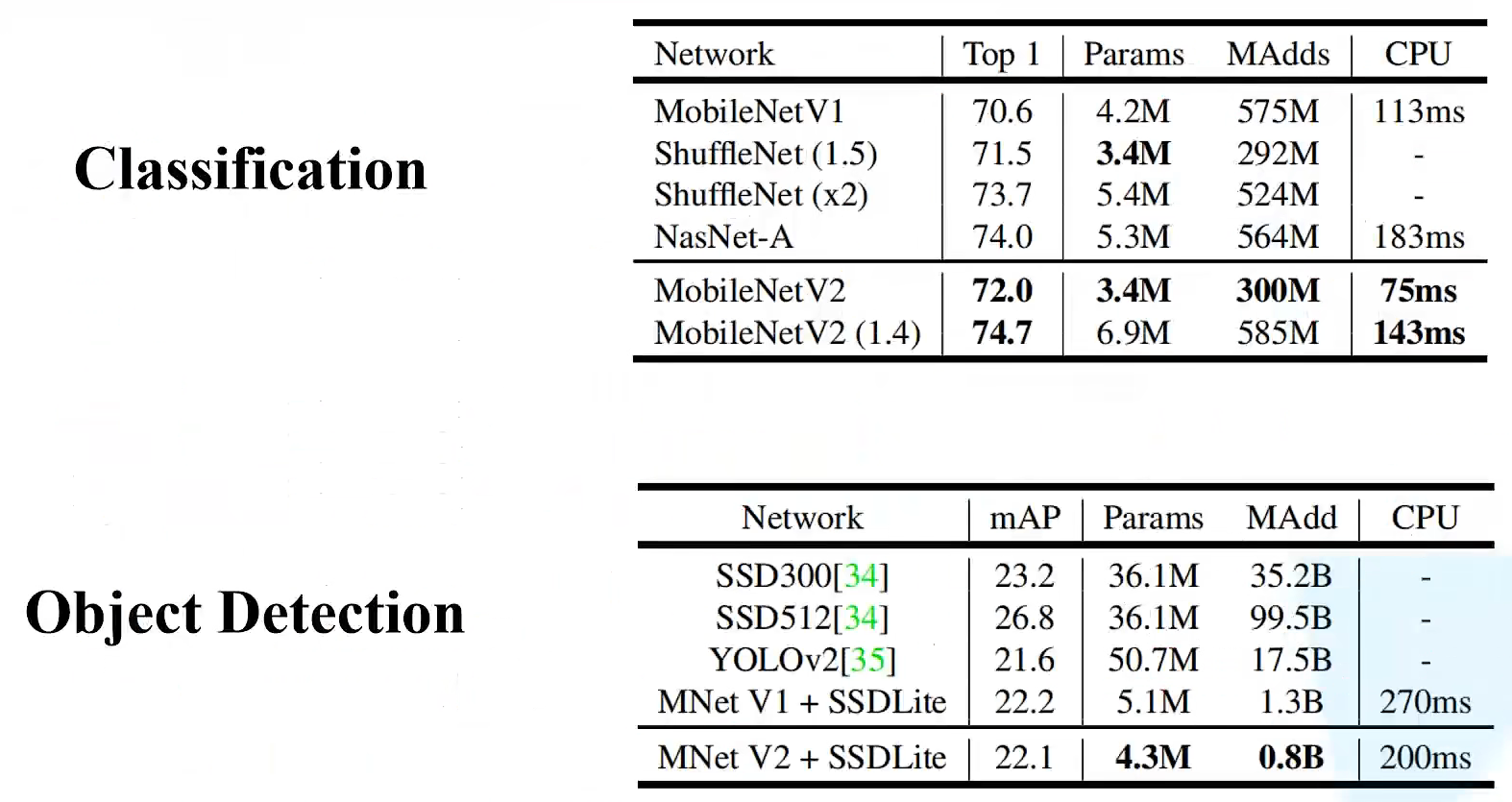

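MobileNet was proposed by a Google team in 2017. It is a lightweight CNN designed for mobile and embedded devices. Compared with conventional convolutional neural networks, it greatly reduces the number of model parameters and the amount of computation at the cost of a small drop in accuracy. Its accuracy is 0.9% lower than VGG16's, while its parameter count is only 1/32 of VGG's.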

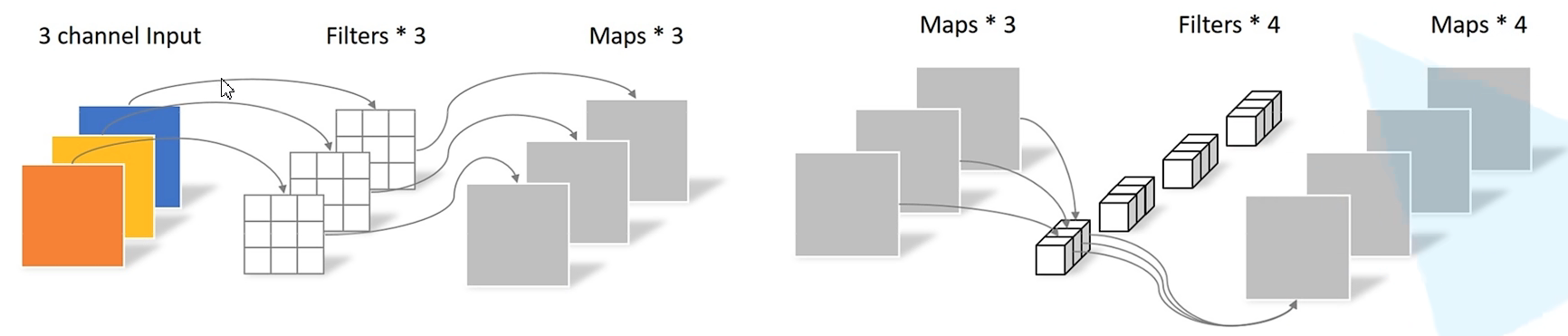

DW Convolution

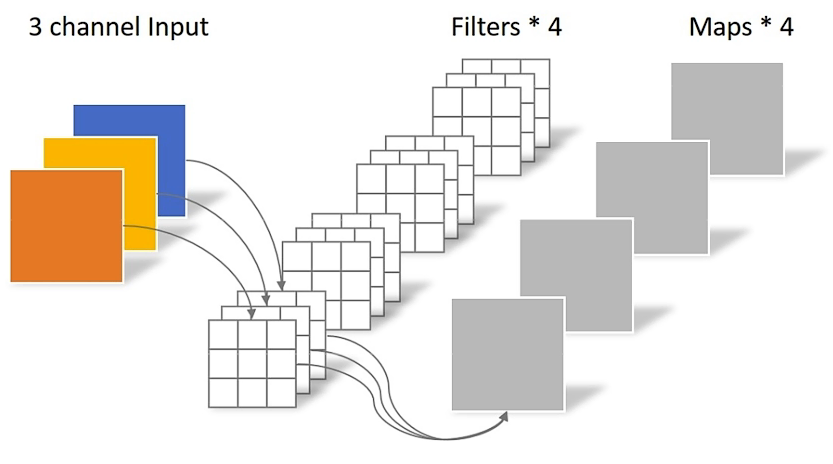

Traditional convolution

- Kernel channels = channels of the input feature matrix

- Channels of the output feature matrix = number of kernels

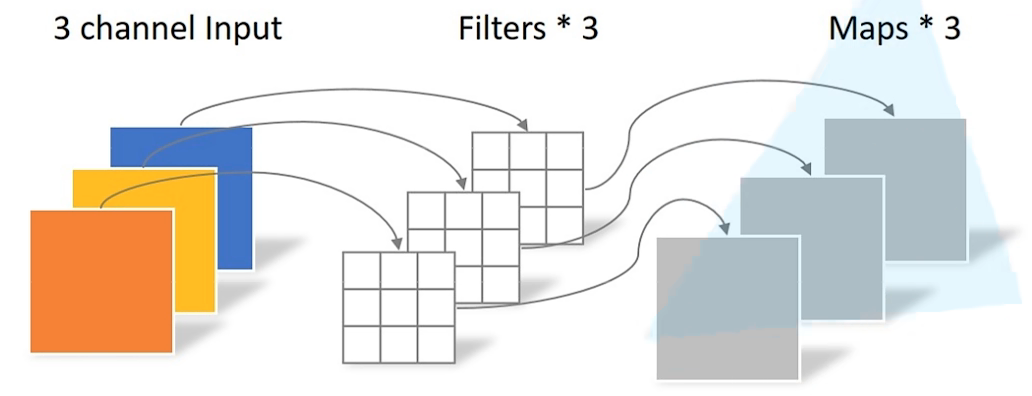

DW (Depthwise Conv) convolution

- Kernel channels = 1

- Channels of the input feature matrix = number of kernels = channels of the output feature matrix

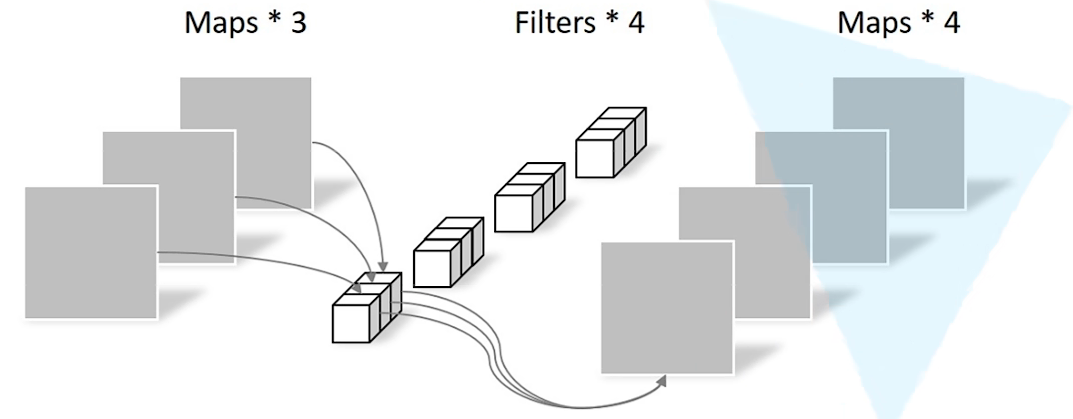

PW (Pointwise Conv) convolution

A traditional convolution with kernel size 1 (a 1×1 convolution).
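The two steps can be sketched in NumPy to show how the shapes change. This is a naive, loop-based illustration that assumes stride 1 and no padding. The function names `depthwise_conv` and `pointwise_conv` are hypothetical and do not come from any library. In PyTorch, a depthwise convolution is usually written as `nn.Conv2d` with `groups` equal to the number of input channels.

```python
import numpy as np

def depthwise_conv(x, kernels):
    """Depthwise conv, stride 1, no padding.
    x: (M, H, W); kernels: (M, K, K), one single-channel kernel per input channel."""
    m, h, w = x.shape
    k = kernels.shape[-1]
    out = np.zeros((m, h - k + 1, w - k + 1))
    for c in range(m):  # channel c is filtered only by its own kernel
        for i in range(h - k + 1):
            for j in range(w - k + 1):
                out[c, i, j] = np.sum(x[c, i:i + k, j:j + k] * kernels[c])
    return out

def pointwise_conv(x, weights):
    """Pointwise (1x1) conv. x: (M, H, W); weights: (N, M). Mixes channels at every pixel."""
    return np.einsum('nm,mhw->nhw', weights, x)

rng = np.random.default_rng(0)
x = rng.standard_normal((4, 8, 8))      # M = 4 input channels, 8x8 feature map
k_dw = rng.standard_normal((4, 3, 3))   # 4 depthwise kernels, each with 1 channel
w_pw = rng.standard_normal((16, 4))     # N = 16 pointwise kernels of size 1x1x4
dw = depthwise_conv(x, k_dw)
pw = pointwise_conv(dw, w_pw)
print(dw.shape, pw.shape)  # (4, 6, 6) (16, 6, 6)
```

The depthwise step keeps the channel count at M. Only the pointwise step changes it to N.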

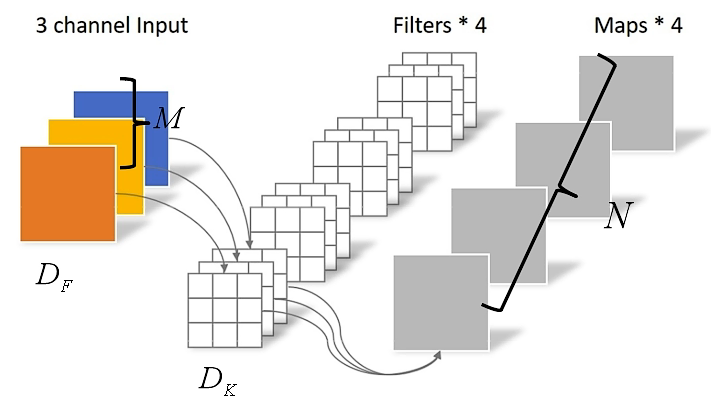

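Computational cost of a standard convolution. Here $D_K$ is the kernel size, $M$ the number of input channels, $N$ the number of output channels, and $D_F$ the spatial width and height of the (square) feature map:

$$D_K \cdot D_K \cdot M \cdot N \cdot D_F \cdot D_F$$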

Computational cost of DW + PW (depthwise term plus pointwise term):

$$D_K \cdot D_K \cdot M \cdot D_F \cdot D_F + M \cdot N \cdot D_F \cdot D_F$$

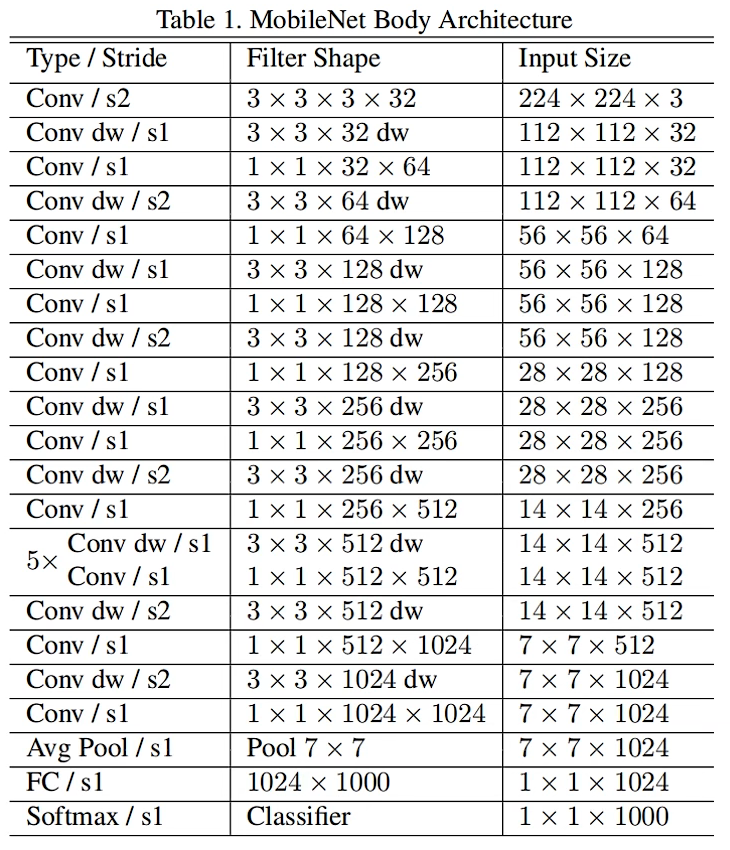

In theory, with $3 \times 3$ kernels a standard convolution costs 8 to 9 times as much as DW + PW:

$$\frac{DW+PW}{\text{standard conv}} = \frac{1}{N} + \frac{1}{D_{K}^2}$$
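Here is a quick numeric check of the ratio. The layer sizes are chosen only for illustration ($D_K = 3$, $M = N = 512$, $D_F = 14$). The helper functions are hypothetical and simply evaluate the two cost formulas.

```python
def standard_conv_cost(dk, m, n, df):
    # D_K * D_K * M * N * D_F * D_F multiply-adds
    return dk * dk * m * n * df * df

def separable_conv_cost(dk, m, n, df):
    depthwise = dk * dk * m * df * df   # one D_K x D_K kernel per input channel
    pointwise = m * n * df * df         # 1x1 conv mixing M channels into N
    return depthwise + pointwise

dk, m, n, df = 3, 512, 512, 14  # illustrative sizes
ratio = separable_conv_cost(dk, m, n, df) / standard_conv_cost(dk, m, n, df)
print(round(ratio, 4), round(1 / n + 1 / dk**2, 4))  # 0.1131 0.1131
print(round(1 / ratio, 2))                            # 8.84
```

When $N$ is large, the $1/N$ term is small, so the ratio approaches $1/D_K^2 = 1/9$.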

MobileNetV1

Highlights of the network:

- Depthwise Convolution (greatly reduces computation and the number of parameters)

- Two extra hyperparameters, α and β

α controls the number of convolution kernels (channels), and β controls the resolution of the input image.
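The sketch below shows how the two multipliers scale the cost of one depthwise separable layer. It assumes α multiplies the channel counts $M$ and $N$, and β multiplies the feature-map size $D_F$. The MobileNet paper calls the resolution multiplier ρ. Both factors enter the dominant pointwise term squared, so the cost falls roughly as $\alpha^2 \beta^2$. The function name and layer sizes are illustrative.

```python
def mobilenet_layer_cost(dk, m, n, df, alpha=1.0, beta=1.0):
    m_a, n_a = int(alpha * m), int(alpha * n)   # alpha thins the channels
    df_b = int(beta * df)                        # beta shrinks the feature map
    depthwise = dk * dk * m_a * df_b * df_b
    pointwise = m_a * n_a * df_b * df_b
    return depthwise + pointwise

base = mobilenet_layer_cost(3, 512, 512, 14)
slim = mobilenet_layer_cost(3, 512, 512, 14, alpha=0.5, beta=0.5)
print(base, slim, slim / base)  # ratio is close to 0.5**2 * 0.5**2 = 0.0625
```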

The depthwise kernels tend to become useless during training: many of their parameters end up as zero.

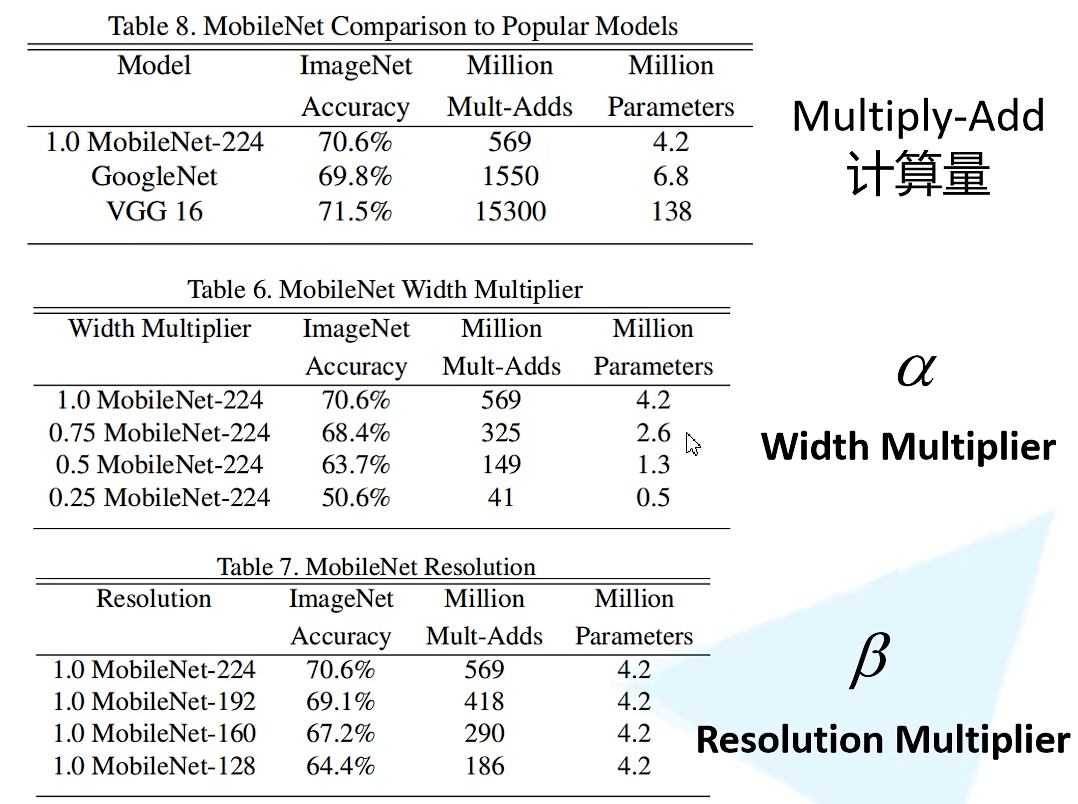

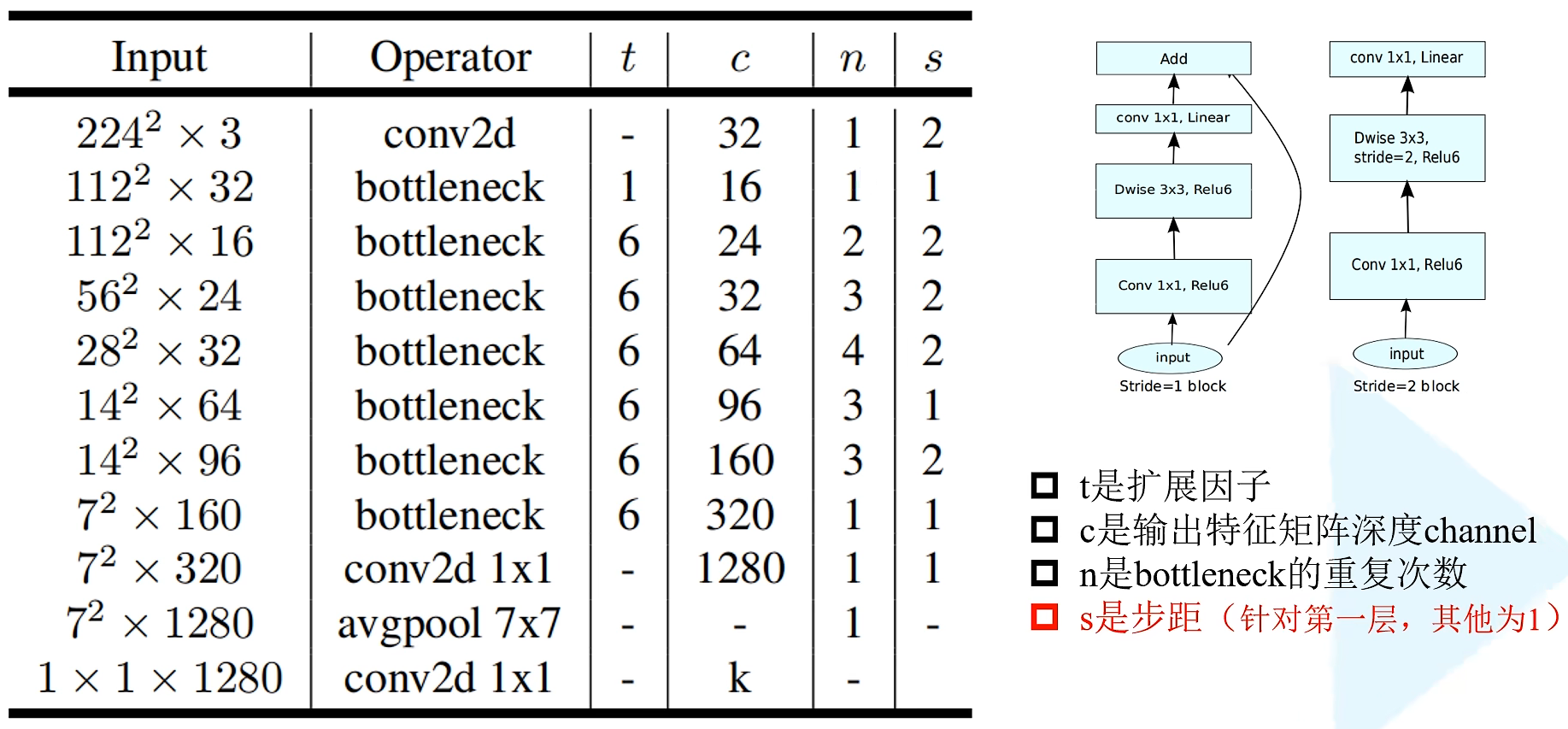

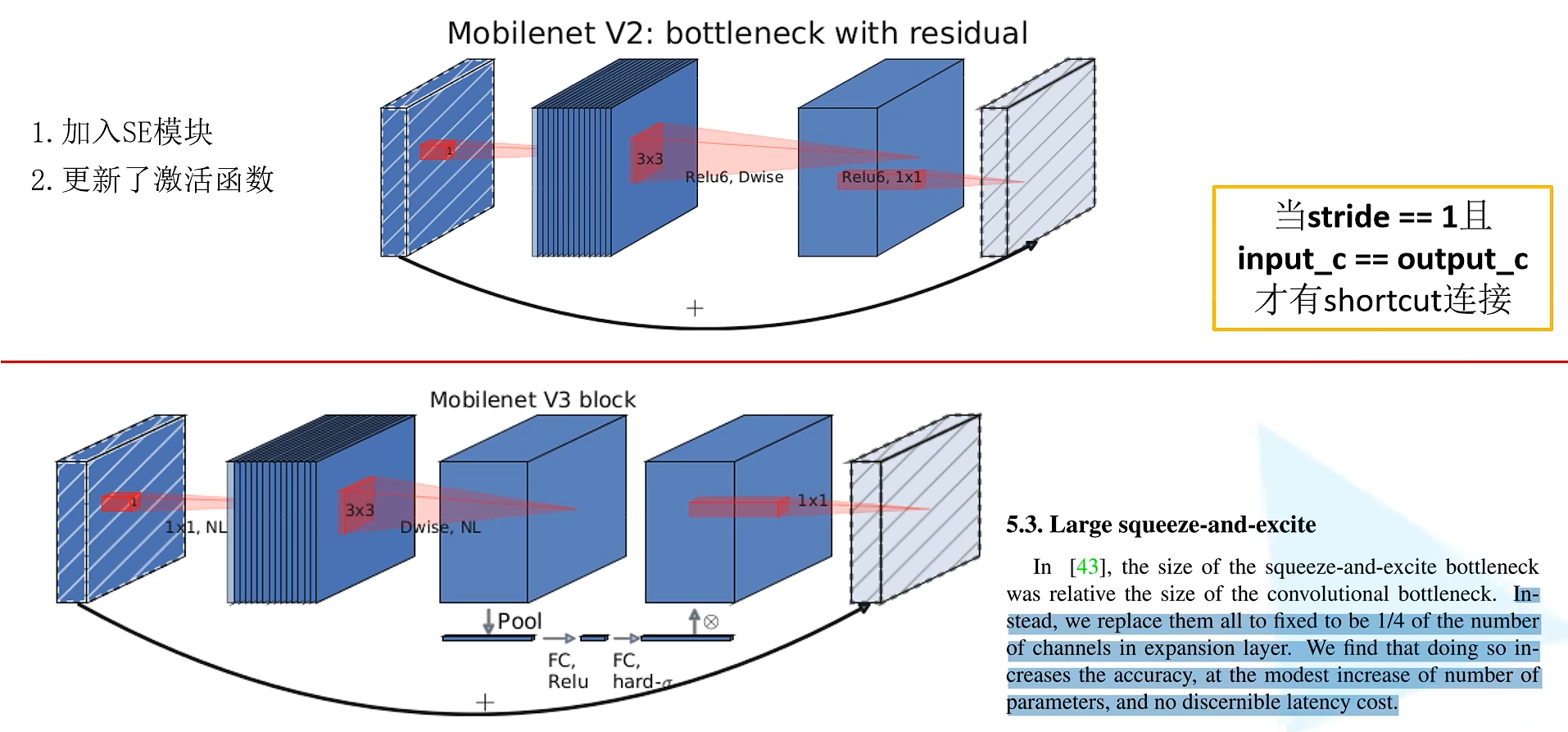

MobileNetV2

MobileNet v2 was proposed by a Google team in 2018. Compared with MobileNet V1, it is more accurate and the model is smaller.

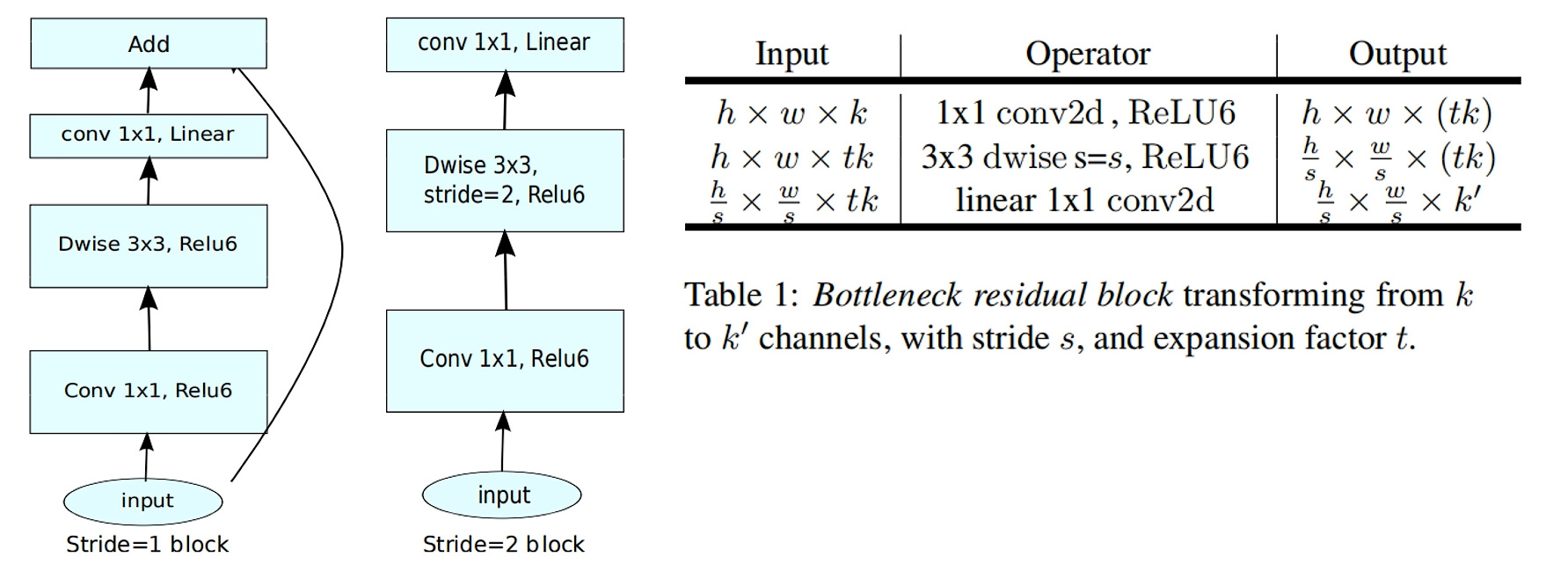

Highlights of the network:

- Inverted Residuals (inverted residual structure)

- Linear Bottlenecks

Inverted Residuals

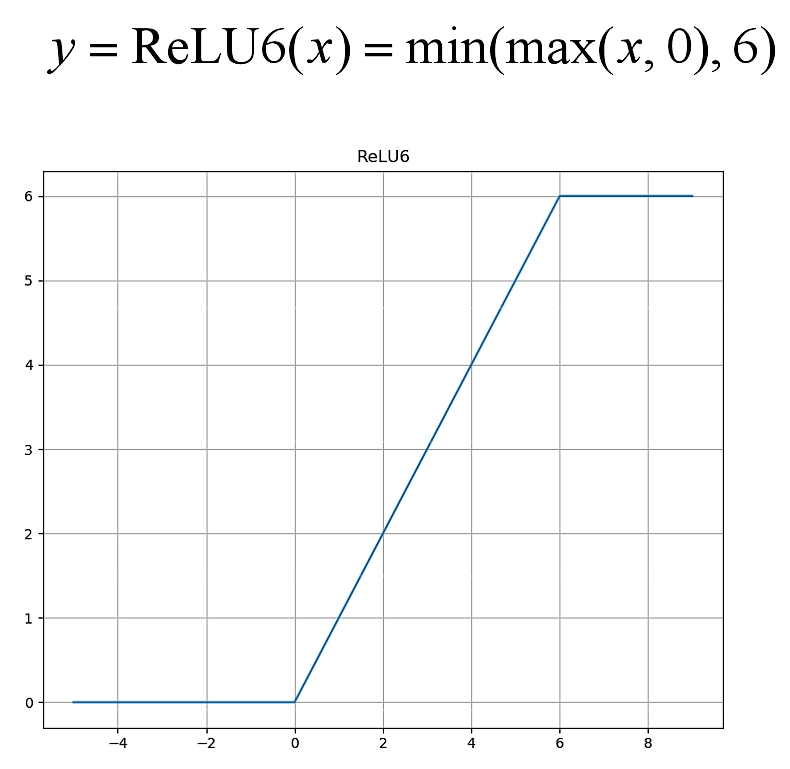

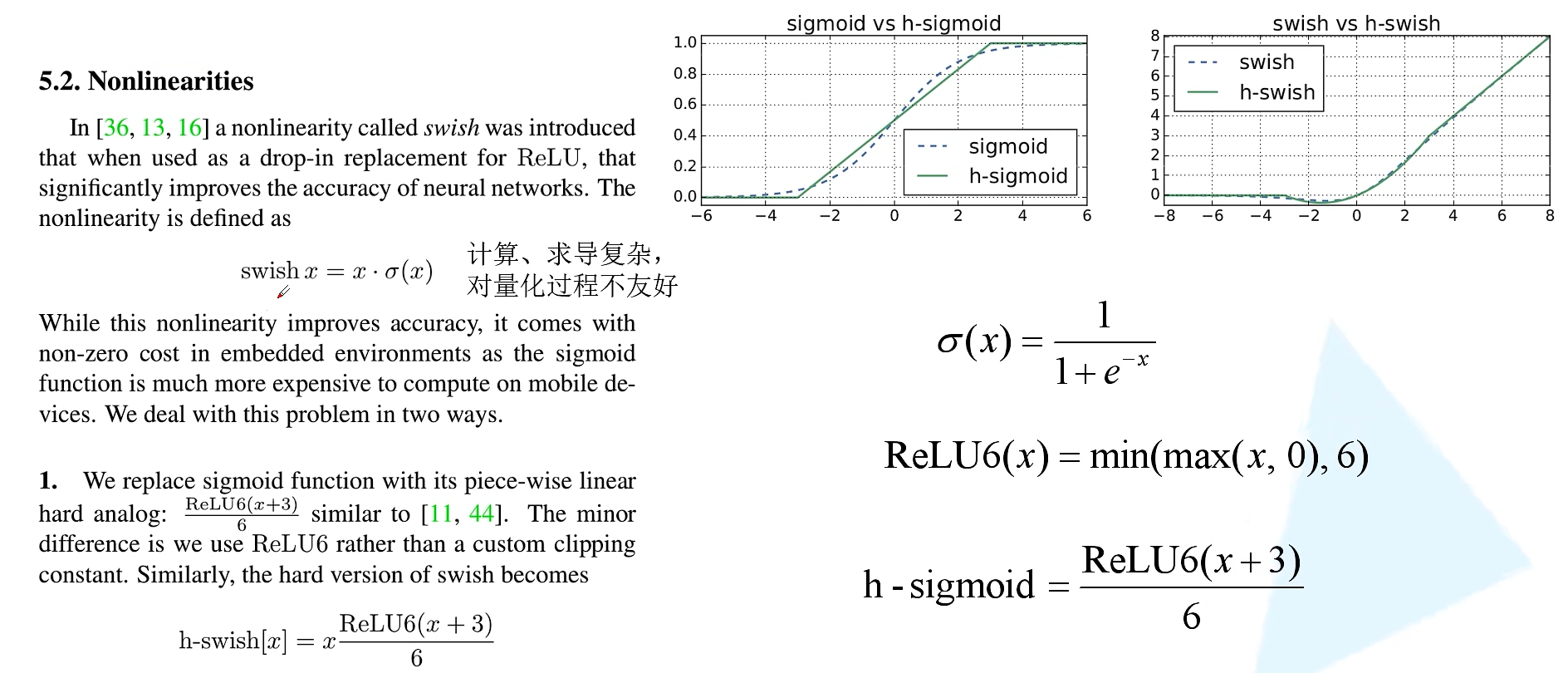

Uses the ReLU6 activation function
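ReLU6 is an ordinary ReLU whose output is clipped at 6. Here is a one-line sketch in plain Python; PyTorch provides it as `nn.ReLU6`.

```python
def relu6(x):
    """ReLU6(x) = min(max(x, 0), 6): a ReLU with its output capped at 6."""
    return min(max(x, 0.0), 6.0)

print([relu6(v) for v in (-3.0, 0.5, 6.0, 10.0)])  # [0.0, 0.5, 6.0, 6.0]
```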

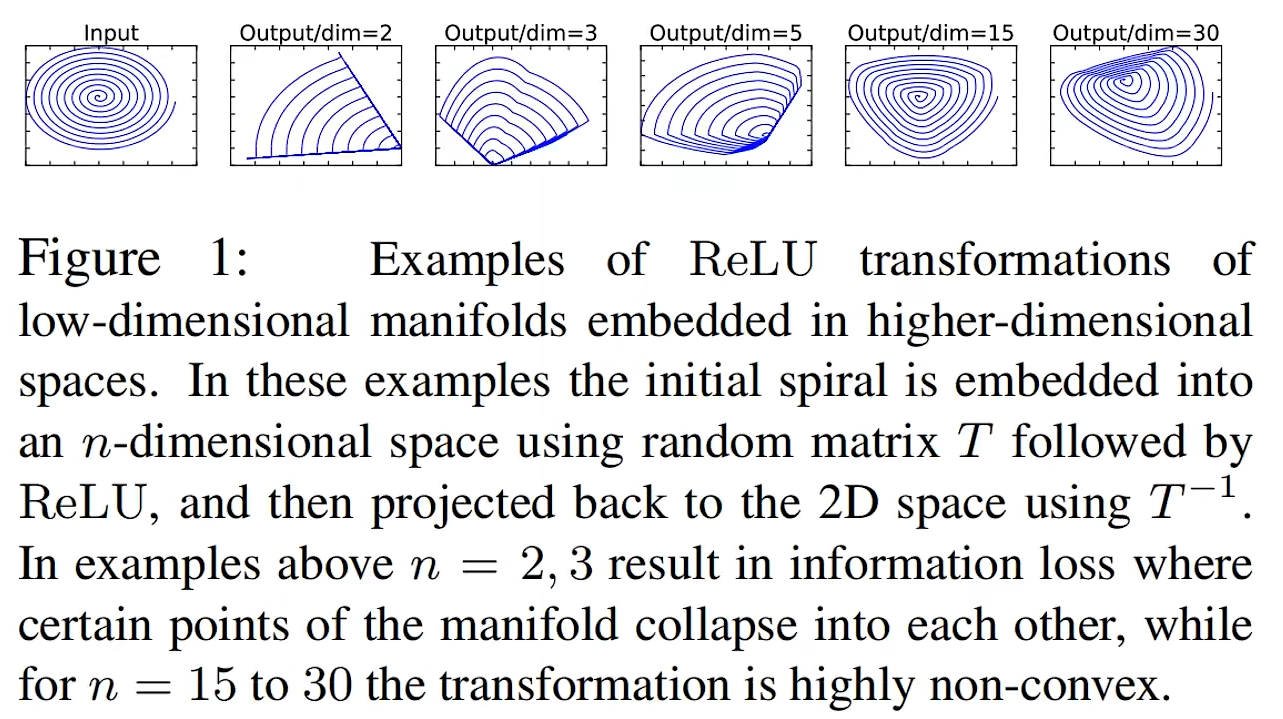

Linear Bottlenecks

The last $1 \times 1$ convolution of the inverted residual block uses a linear activation instead of ReLU. ReLU causes a large loss of information for low-dimensional features.

A shortcut connection is used only when stride = 1 and the input and output feature matrices have the same shape.
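The sketch below tracks only the shapes through one inverted residual block. It assumes the common layout: a 1×1 expansion by factor t, a 3×3 depthwise convolution with padding 1 and stride s, then a linear 1×1 projection. The function name is hypothetical. It follows (C, H, W) and applies the shortcut rule stated above.

```python
def inverted_residual_shapes(h, w, c_in, c_out, t, stride):
    """(C, H, W) after each layer of an inverted residual block, plus whether a shortcut applies."""
    hidden = c_in * t
    expand = (hidden, h, w)                        # 1x1 conv + ReLU6: widen channels by t
    h2, w2 = (h - 1) // stride + 1, (w - 1) // stride + 1
    depthwise = (hidden, h2, w2)                   # 3x3 depthwise (padding 1) + ReLU6
    project = (c_out, h2, w2)                      # 1x1 conv with a linear activation
    use_shortcut = stride == 1 and c_in == c_out   # output shape must equal input shape
    return [expand, depthwise, project], use_shortcut

print(inverted_residual_shapes(56, 56, 24, 24, t=6, stride=1))
# ([(144, 56, 56), (144, 56, 56), (24, 56, 56)], True)
```

The block widens and then narrows the channels, the reverse of a classic ResNet bottleneck. That reversal is where the name "inverted residual" comes from.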

Network architecture

When a stage contains several bottlenecks ($n > 1$), only the first bottleneck uses stride $s$. All remaining bottlenecks use stride 1.
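The stride rule can be sketched by expanding a table of (t, c, n, s) rows into individual blocks. The rows below are assumed to be the bottleneck rows of the architecture table in the MobileNetV2 paper, with 32 input channels coming from the initial convolution. `expand_blocks` is a hypothetical helper.

```python
# (t, c, n, s): expansion factor, output channels, repeats, stride of the first repeat
CONFIG = [(1, 16, 1, 1), (6, 24, 2, 2), (6, 32, 3, 2), (6, 64, 4, 2),
          (6, 96, 3, 1), (6, 160, 3, 2), (6, 320, 1, 1)]

def expand_blocks(config, c_in=32):
    """Return one (in_channels, out_channels, t, stride) tuple per bottleneck."""
    blocks = []
    for t, c, n, s in config:
        for i in range(n):
            stride = s if i == 0 else 1  # only the first block of a stage uses stride s
            blocks.append((c_in, c, t, stride))
            c_in = c
    return blocks

blocks = expand_blocks(CONFIG)
print(len(blocks))  # 17
print(blocks[:3])   # [(32, 16, 1, 1), (16, 24, 6, 2), (24, 24, 6, 1)]
```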

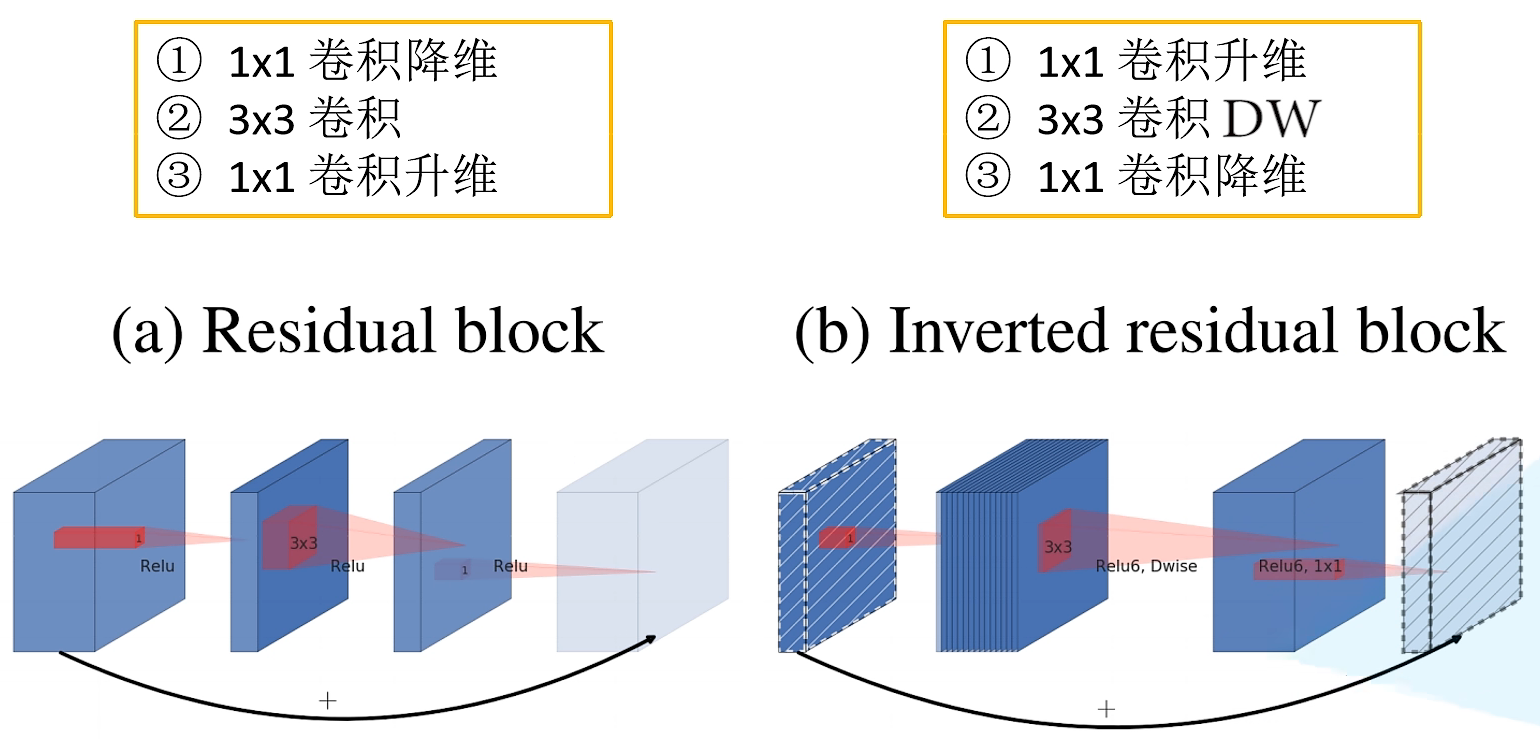

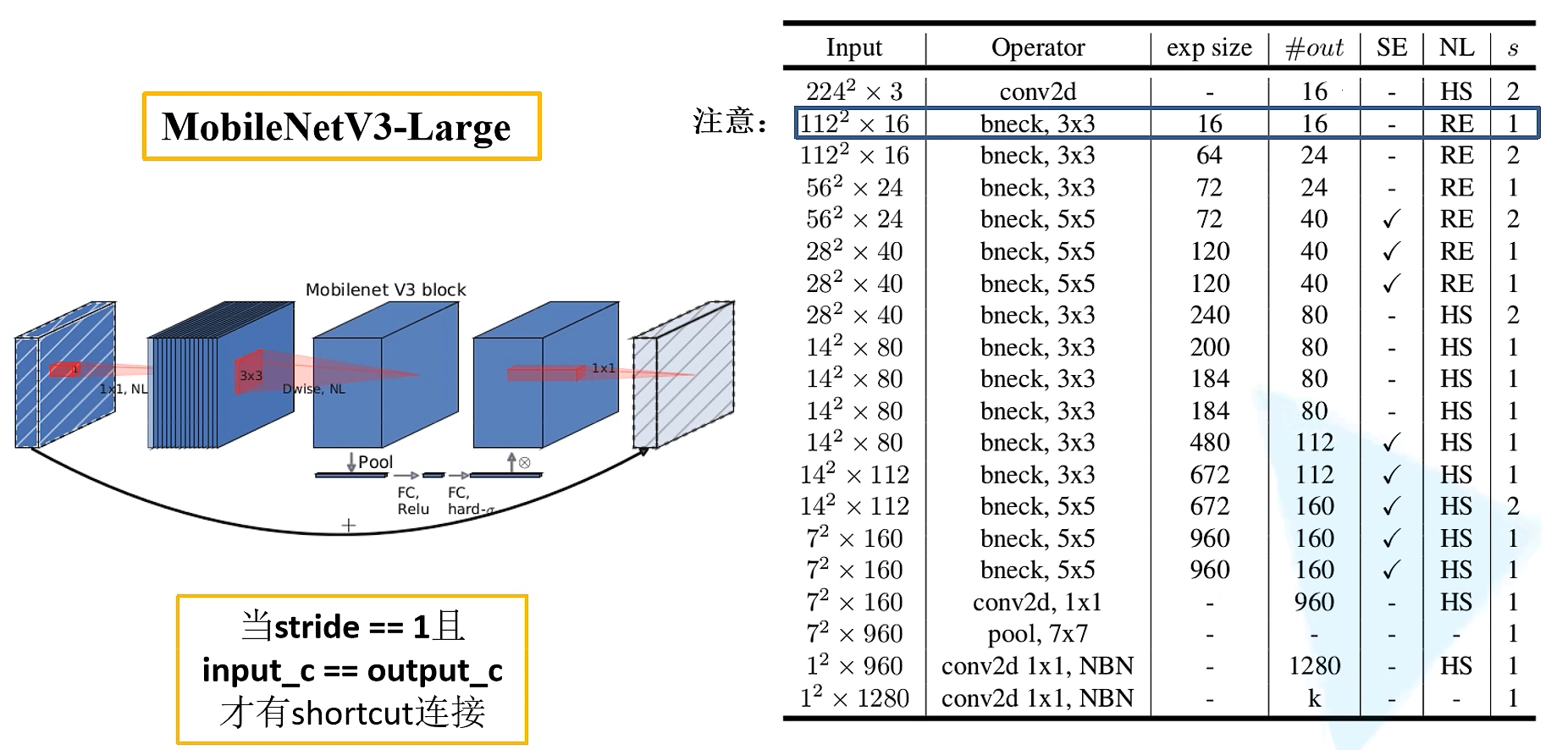

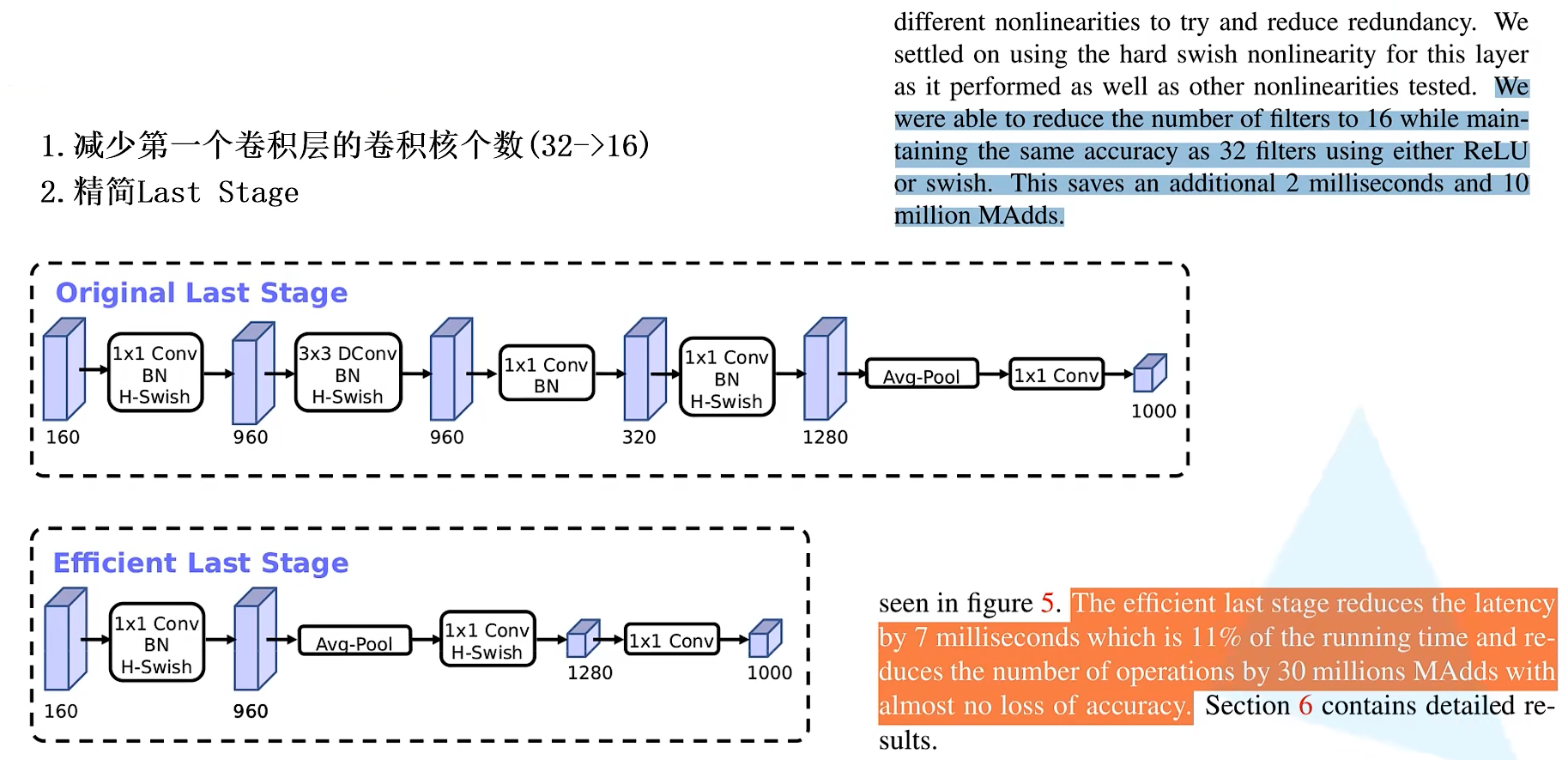

MobileNetV3

- Updated block (bneck)

- Parameters searched with NAS (Neural Architecture Search)

- Redesigned time-consuming layer structures

Updated block

Redesigned time-consuming layer structures

Redesigned activation function

SE-Net

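The convolution kernel is the core of a convolutional neural network. It is usually viewed as an aggregator that combines spatial information and channel-wise information within a local receptive field. A convolutional neural network is built from a series of convolutional layers, nonlinear layers and downsampling layers. This lets it capture image features from a global receptive field to describe the image.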

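Many works have improved network performance along the spatial dimension. Squeeze-and-Excitation Networks (SENet) instead improve performance by modeling the relationships between feature channels. The goal is to model the interdependencies between channels explicitly. Rather than adding a new spatial dimension to fuse channels, SENet uses a "feature recalibration" strategy. It learns how important each feature channel is, then uses that importance to enhance useful features and suppress features that matter little for the current task.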

![SENet figure](https://img-blog.csdnimg.cn/1619cd31cd544411a907682ecac1a8aa.png)

Squeeze operation

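Compresses features along the spatial dimensions, turning each two-dimensional feature channel into a single real number. This number has, to some extent, a global receptive field, and the output dimension matches the number of input channels. It describes the global distribution of responses over the channels. It also gives even layers close to the input a global receptive field, which is very useful in many tasks.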

Excitation operation

Generates a weight for each feature channel through parameters that are learned to model the correlations between channels explicitly.

Reweight operation

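The weights output by Excitation are treated as the importance of each feature channel after feature selection. Each channel of the preceding features is then multiplied by its weight. This recalibrates the original features along the channel dimension.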

![SENet module figure](https://img-blog.csdnimg.cn/a648d0c25bda49848922bc98532df804.png)

First, global average pooling is used as the Squeeze operation.

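Next, two Fully Connected layers form a bottleneck that models the correlations between channels. The bottleneck outputs one weight per input channel. The first layer reduces the feature dimension to 1/16 of the input. After a ReLU, a second Fully Connected layer restores the original dimension. A single Fully Connected layer would not give two advantages this design has:

1. More nonlinearity, which fits the complex correlations between channels better.
2. Far fewer parameters and less computation.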
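To make the parameter saving concrete, here is a count for an illustrative layer with C = 256 channels and reduction ratio 16. It compares one C→C fully connected layer with the C→C/16→C bottleneck, counting weights plus biases.

```python
def fc_params(c_in, c_out):
    return c_in * c_out + c_out  # weights + biases

c, r = 256, 16  # illustrative channel count; r is the reduction ratio
single_fc = fc_params(c, c)
bottleneck = fc_params(c, c // r) + fc_params(c // r, c)
print(single_fc, bottleneck)  # 65792 8464
```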

A Sigmoid gate then produces normalized weights between 0 and 1. Finally, a Scale operation multiplies each channel's features by its normalized weight.
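The three steps (squeeze, excitation, scale) can be sketched in NumPy for one feature map of shape (C, H, W). The weight matrices are random placeholders standing in for learned parameters, and `se_block` is a hypothetical name.

```python
import numpy as np

def se_block(x, w1, b1, w2, b2):
    """SE reweighting of x with shape (C, H, W).
    w1: (C // r, C) and w2: (C, C // r) play the role of the two fully connected layers."""
    z = x.mean(axis=(1, 2))                    # Squeeze: global average pooling -> (C,)
    s = np.maximum(w1 @ z + b1, 0.0)           # FC down to C/r, then ReLU
    s = 1.0 / (1.0 + np.exp(-(w2 @ s + b2)))   # FC back up to C, then sigmoid -> (0, 1)
    return x * s[:, None, None], s             # Scale: multiply each channel by its weight

rng = np.random.default_rng(0)
C, r = 32, 16
x = rng.standard_normal((C, 5, 5))
w1, b1 = rng.standard_normal((C // r, C)), np.zeros(C // r)
w2, b2 = rng.standard_normal((C, C // r)), np.zeros(C)
y, s = se_block(x, w1, b1, w2, b2)
print(y.shape, s.shape)  # (32, 5, 5) (32,)
```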

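Embedding SE into a ResNet module works much like SE-Inception, except that the recalibration is applied to the residual-branch features before the Addition. Suppose the main-path features were recalibrated after the Addition instead. The 0~1 scaling on the trunk would then make gradients prone to vanishing near the input layers during backpropagation in deep networks, and the model would be hard to optimize.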

SENet is very simple to build and easy to deploy, and it needs no new functions or layers. It also adds little to model size and computational complexity.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F
import torchvision
import torchvision.transforms as transforms
import matplotlib.pyplot as plt
import numpy as np
import torch.optim as optim

class BasicBlock(nn.Module):
    def __init__(self, in_channels, out_channels, stride=1):
        super(BasicBlock, self).__init__()
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(out_channels)
        self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=1, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(out_channels)

        # When the shortcut's dimensions differ from the output's, match them with a 1x1 convolution
        self.shortcut = nn.Sequential()
        if stride != 1 or in_channels != out_channels:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(out_channels))

        # The two fully connected layers of the excitation step, implemented as 1x1 convolutions
        self.fc1 = nn.Conv2d(out_channels, out_channels // 16, kernel_size=1)
        self.fc2 = nn.Conv2d(out_channels // 16, out_channels, kernel_size=1)

    # Forward pass
    def forward(self, x):
        # Two convolutions on the input feature map
        out = F.relu(self.bn1(self.conv1(x)))
        out = self.bn2(self.conv2(out))

        # Squeeze: global average pooling (assumes a square feature map)
        w = F.avg_pool2d(out, out.size(2))
        # Excitation: fc (reduce to 1/16) -> ReLU -> fc (restore the dimension) -> Sigmoid (outputs in (0, 1))
        w = F.relu(self.fc1(w))
        w = torch.sigmoid(self.fc2(w))
        # Reweight: multiply the convolved feature map by w, channel by channel
        out = out * w

        # Add the shortcut (shallower) feature map
        out += self.shortcut(x)
        # ReLU
        out = F.relu(out)
        return out
```

Hybrid Spectral Network

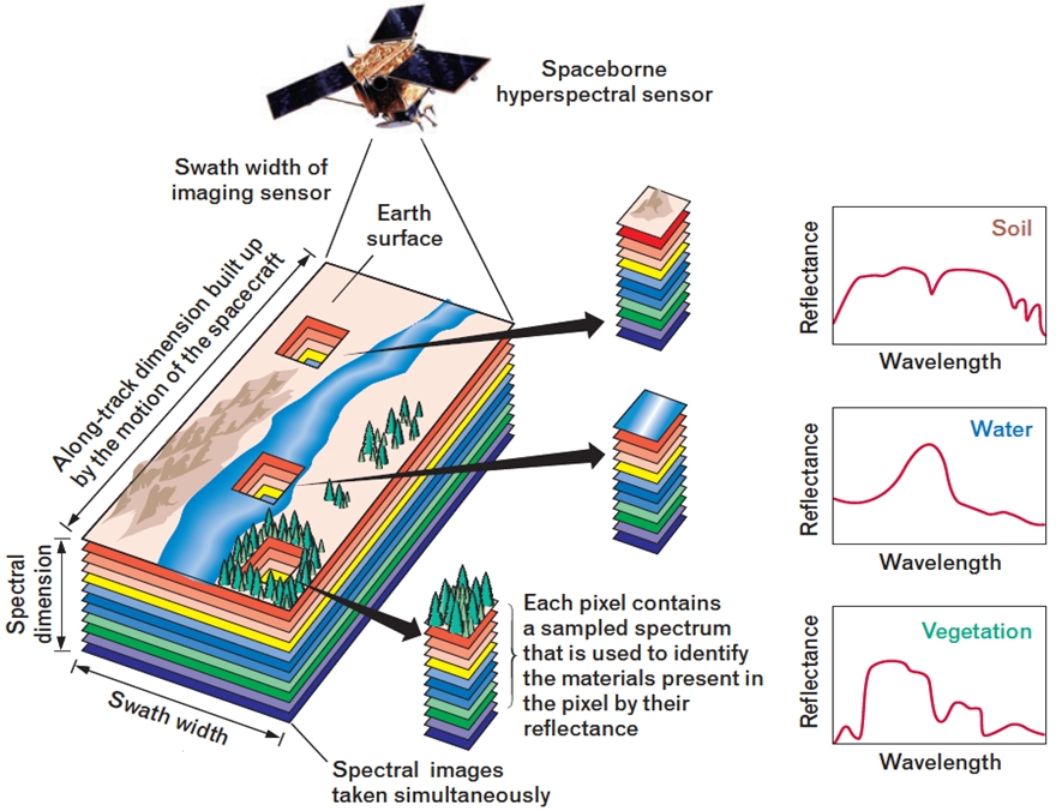

Hyperspectral images

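A hyperspectral image is finely divided along the spectral dimension. It goes beyond the traditional distinction of black and white, or R, G and B, and has N channels along the spectral dimension. For example, the range 400 nm to 1000 nm can be divided into 300 channels. A hyperspectral device therefore captures a data cube. The cube holds the image and is also spread out along the spectral dimension. From it you can obtain the spectrum of every pixel and the image in any single band.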

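Hyperspectral imaging captures image data in a very large number of narrow bands. It combines imaging with spectroscopy. It records both the two-dimensional spatial layout and the one-dimensional spectrum of a target, giving continuous, narrow-band image data with high spectral resolution.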

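Different materials respond differently in different spectral bands. Each response can be plotted as a curve of spectral value against band, and the differences between these curves let us classify the materials in a hyperspectral image.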

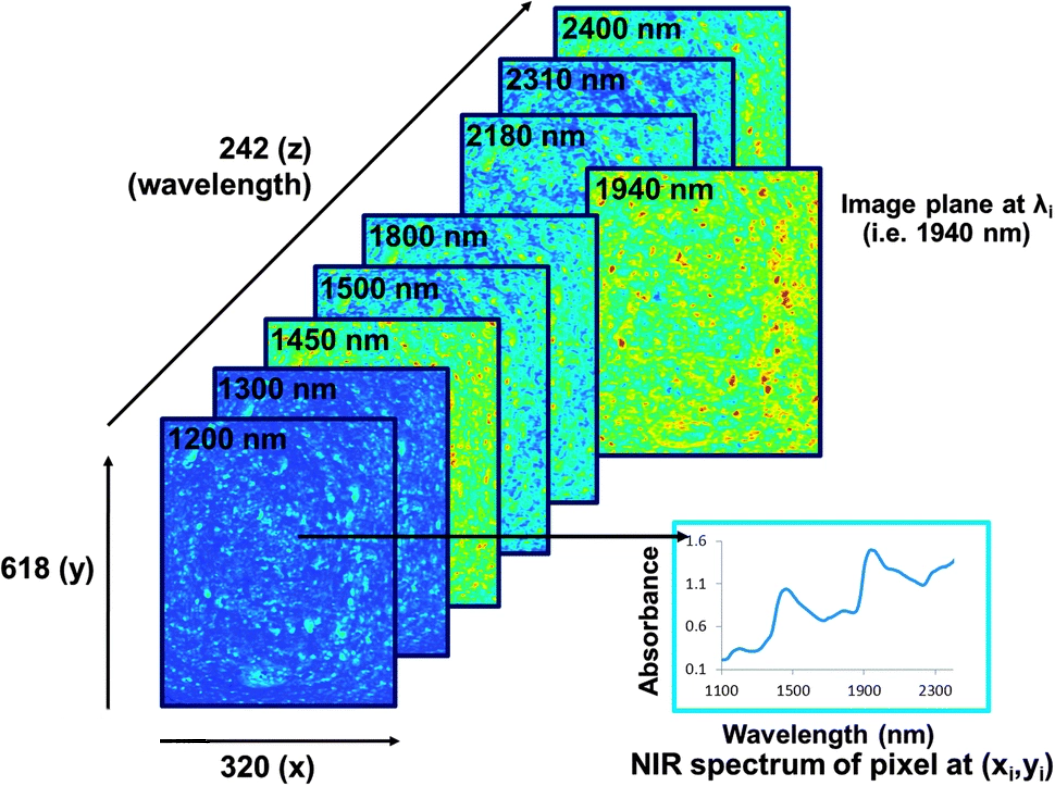

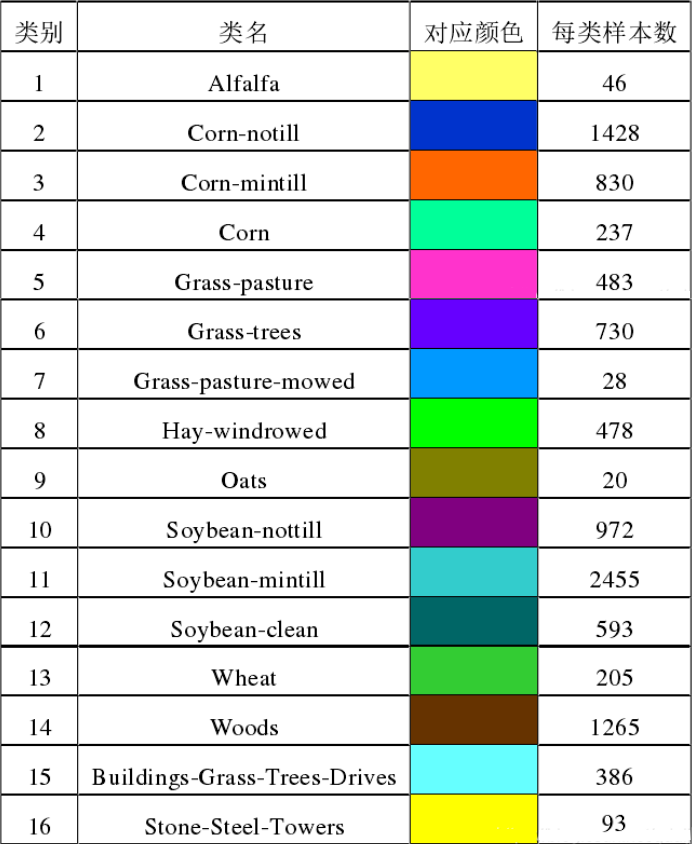

The hyperspectral dataset used here is Indian Pines, one of the earliest benchmark datasets for hyperspectral image classification.

The dataset has 21025 pixels in total, but only 10249 of them are land-cover pixels. The remaining 10776 are background pixels, which must be excluded in practice. The 10249 labeled pixels are classified into 16 classes.

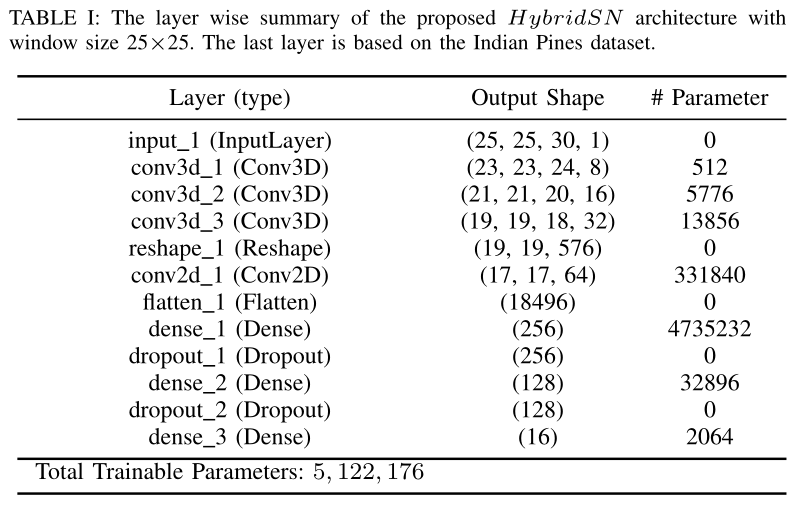

HybridSN

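Using a 2-D-CNN or a 3-D-CNN alone has drawbacks. The 2-D-CNN misses the relationships between spectral channels, and the 3-D-CNN yields a very complex model. These drawbacks have kept such methods from reaching higher accuracy on HSI. The main reason is that HSI data are volumetric: besides the two spatial dimensions, they also have a spectral dimension.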

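A 2-D-CNN alone cannot extract good discriminative features along the spectral dimension. A deep 3-D-CNN, on the other hand, is computationally more expensive. The authors therefore proposed the HybridSN model to overcome these shortcomings of earlier models. HybridSN combines 3-D-CNN and 2-D-CNN layers to make full use of both spectral and spatial feature maps and to maximize accuracy.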

![HybridSN model diagram](https://img-blog.csdnimg.cn/e8edc190b863483189f341942df5bf17.png)

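To remove spectral redundancy, conventional principal component analysis (PCA) is first applied to the original HSI data along the spectral bands. PCA reduces the number of spectral bands from D to B while keeping the spatial dimensions unchanged. Here M is the width, N is the height, and B is the number of spectral bands after PCA.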
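Here is a NumPy sketch of PCA along the spectral axis, assuming the cube is stored as (M, N, D). Each pixel's spectrum is treated as one sample, so the spatial size stays M × N while D drops to B. The `applyPCA` function used later calls scikit-learn `PCA` with `whiten=True`; this sketch does not whiten the components. `pca_spectral` is a hypothetical name.

```python
import numpy as np

def pca_spectral(cube, b):
    """cube: (M, N, D) hyperspectral image -> (M, N, B), keeping the top-B principal components."""
    m, n, d = cube.shape
    flat = cube.reshape(-1, d)               # each pixel is one D-dimensional spectrum
    centered = flat - flat.mean(axis=0)
    # The right singular vectors of the centered data are the principal axes
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    reduced = centered @ vt[:b].T            # project onto the top-B axes
    return reduced.reshape(m, n, b)          # spatial size M x N is unchanged

rng = np.random.default_rng(0)
cube = rng.standard_normal((10, 12, 40))
print(pca_spectral(cube, 5).shape)  # (10, 12, 5)
```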

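The second box from the left in the model diagram corresponds to this step in the paper. To apply image classification techniques, the HSI data cube is divided into small, overlapping 3-D patches. Each patch takes the ground-truth label of its center pixel. The resulting 3-D neighboring patches are $P \in \mathbb{R}^{S \times S \times B}$.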

The third and fourth boxes in the model diagram are 3D convolutions:

- conv1: (1, 30, 25, 25), 8 kernels of size 7x3x3 ==> (8, 24, 23, 23)

- conv2: (8, 24, 23, 23), 16 kernels of size 5x3x3 ==> (16, 20, 21, 21)

- conv3: (16, 20, 21, 21), 32 kernels of size 3x3x3 ==> (32, 18, 19, 19)

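The fifth box is a 2D convolution. Going from 3D to 2D requires a reshape that merges two dimensions: the 32 channels and 18 spectral bands of (32, 18, 19, 19) become 576 channels of size 19×19.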
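The shape arithmetic above can be checked with a few lines of Python. The check assumes every convolution uses stride 1 and no padding, so output = input - kernel + 1. It also shows where the 576 input channels of the 2D convolution and the 18496 inputs of the first fully connected layer in the code below come from, assuming the 2D layer has 64 kernels of size 3×3.

```python
def conv_out(size, k):
    return size - k + 1  # valid convolution, stride 1, no padding

shape = (1, 30, 25, 25)  # (channels, bands, height, width) after PCA + patching
for out_ch, (kd, kh, kw) in [(8, (7, 3, 3)), (16, (5, 3, 3)), (32, (3, 3, 3))]:
    _, d, h, w = shape
    shape = (out_ch, conv_out(d, kd), conv_out(h, kh), conv_out(w, kw))
    print(shape)
# (8, 24, 23, 23)
# (16, 20, 21, 21)
# (32, 18, 19, 19)

# Merge channels and bands into 2D channels, then apply a 3x3 2D conv with 64 kernels
c2d = shape[0] * shape[1]
h2, w2 = conv_out(shape[2], 3), conv_out(shape[3], 3)
print(c2d, 64 * h2 * w2)  # 576 18496
```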

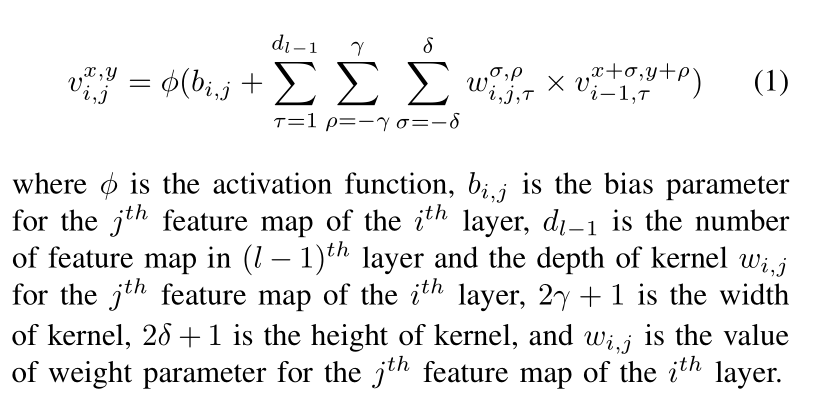

The paper gives the activation value of a 2D convolution as follows. Consider spatial position $(x, y)$ in the $j$-th feature map of the $i$-th layer; its activation value, denoted $v^{x,y}_{i,j}$, is given by:

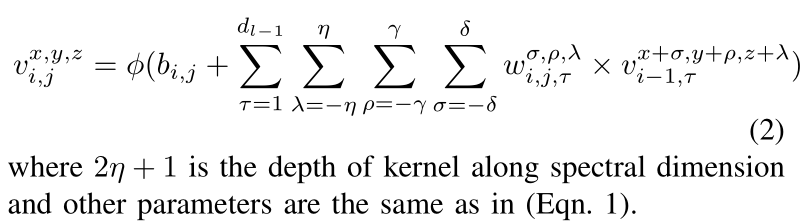

In a 3D convolution, the activation value at spatial position $(x, y, z)$ in the $j$-th feature map of the $i$-th layer is denoted $v^{x,y,z}_{i,j}$.
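The formula image for the 3D case is not reproduced here. Written by analogy with the 2D formula, and following the form given in the HybridSN paper as best understood, it reads as below. The symbols are:

- $\phi$: the activation function
- $b_{i,j}$: the bias
- $d_{i-1}$: the number of feature maps in layer $i-1$
- $w$: the kernel weights

The kernel spans $2\delta+1$ positions along $x$, $2\gamma+1$ along $y$, and $2\eta+1$ along the spectral axis $z$. Treat the exact index notation as an assumption.

```latex
v_{i,j}^{x,y,z} = \phi\left( b_{i,j} + \sum_{\tau=1}^{d_{i-1}} \sum_{\lambda=-\eta}^{\eta} \sum_{\rho=-\gamma}^{\gamma} \sum_{\sigma=-\delta}^{\delta} w_{i,j,\tau}^{\sigma,\rho,\lambda} \, v_{i-1,\tau}^{x+\sigma,\, y+\rho,\, z+\lambda} \right)
```

Compared with the 2D case, the only change is the extra sum over $\lambda$. That sum lets the kernel slide along the spectral axis as well.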

Overall network architecture:

HybridSN

First, download the data and import the required libraries.

```python
! wget http://www.ehu.eus/ccwintco/uploads/6/67/Indian_pines_corrected.mat
! wget http://www.ehu.eus/ccwintco/uploads/c/c4/Indian_pines_gt.mat
! pip install spectral

import numpy as np
import matplotlib.pyplot as plt
import scipy.io as sio
from sklearn.decomposition import PCA
from sklearn.model_selection import train_test_split
from sklearn.metrics import confusion_matrix, accuracy_score, classification_report, cohen_kappa_score
import spectral
import torch
import torchvision
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
```

Define the HybridSN class

```python
from torch.nn.modules.activation import ReLU

class_num = 16

class HybridSN(nn.Module):
    def __init__(self):
        super(HybridSN, self).__init__()
        # Three 3D convolutions over (bands, height, width)
        self.conv1 = nn.Sequential(
            nn.Conv3d(in_channels=1, out_channels=8, kernel_size=(7, 3, 3)),
            nn.BatchNorm3d(8),
            nn.ReLU(inplace=True)
        )
        self.conv2 = nn.Sequential(
            nn.Conv3d(in_channels=8, out_channels=16, kernel_size=(5, 3, 3)),
            nn.BatchNorm3d(16),
            nn.ReLU(inplace=True)
        )
        self.conv3 = nn.Sequential(
            nn.Conv3d(in_channels=16, out_channels=32, kernel_size=(3, 3, 3)),
            nn.BatchNorm3d(32),
            nn.ReLU(inplace=True)
        )
        # 2D convolution on the reshaped maps: 32 channels x 18 bands = 576 input channels
        self.conv4 = nn.Sequential(
            nn.Conv2d(in_channels=576, out_channels=64, kernel_size=(3, 3)),
            nn.BatchNorm2d(64),
            nn.ReLU(inplace=True)
        )
        # 64 x 17 x 17 = 18496 flattened features
        self.fc1 = nn.Linear(in_features=18496, out_features=256)
        self.fc2 = nn.Linear(in_features=256, out_features=128)
        self.fc3 = nn.Linear(in_features=128, out_features=class_num)
        self.drop = nn.Dropout(p=0.4)

    def forward(self, x):
        out = self.conv1(x)
        out = self.conv2(out)
        out = self.conv3(out)
        # (B, 32, 18, 19, 19) -> (B, 576, 19, 19)
        out = out.reshape(out.shape[0], -1, 19, 19)
        out = self.conv4(out)
        out = out.reshape(out.shape[0], -1)
        out = F.relu(self.drop(self.fc1(out)))
        out = F.relu(self.drop(self.fc2(out)))
        out = self.fc3(out)
        return out

# Random input to check that the network runs end to end
# x = torch.randn(1, 1, 30, 25, 25)
# net = HybridSN()
# y = net(x)
# print(y.shape)
```

Create the dataset

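First apply PCA to reduce the dimensionality of the hyperspectral data. Then arrange the data in a format convenient for Keras. Finally, randomly select 10% of the data as the training set and keep the rest as the test set.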
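The 10% / 90% split can be checked by hand. The check assumes scikit-learn's rule for a float `test_size`: the test count is rounded up, and the training set gets the rest. The 10249 labeled pixels then split as follows, which matches the shapes printed later.

```python
import math

n_labeled = 10249   # labeled (non-background) pixels in Indian Pines
test_ratio = 0.90
n_test = math.ceil(test_ratio * n_labeled)  # test share rounded up
n_train = n_labeled - n_test
print(n_train, n_test)  # 1024 9225
```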

First, define the basic functions:

```python
# Apply PCA to the hyperspectral data X
def applyPCA(X, numComponents):
    newX = np.reshape(X, (-1, X.shape[2]))
    pca = PCA(n_components=numComponents, whiten=True)
    newX = pca.fit_transform(newX)
    newX = np.reshape(newX, (X.shape[0], X.shape[1], numComponents))
    return newX

# Patches around edge pixels would fall outside the image, so zero-pad the border first
def padWithZeros(X, margin=2):
    newX = np.zeros((X.shape[0] + 2 * margin, X.shape[1] + 2 * margin, X.shape[2]))
    x_offset = margin
    y_offset = margin
    newX[x_offset:X.shape[0] + x_offset, y_offset:X.shape[1] + y_offset, :] = X
    return newX

# Extract a patch around every pixel and arrange the patches in the format Keras expects
def createImageCubes(X, y, windowSize=5, removeZeroLabels=True):
    # Zero-pad X
    margin = int((windowSize - 1) / 2)
    zeroPaddedX = padWithZeros(X, margin=margin)
    # split patches
    patchesData = np.zeros((X.shape[0] * X.shape[1], windowSize, windowSize, X.shape[2]))
    patchesLabels = np.zeros((X.shape[0] * X.shape[1]))
    patchIndex = 0
    for r in range(margin, zeroPaddedX.shape[0] - margin):
        for c in range(margin, zeroPaddedX.shape[1] - margin):
            patch = zeroPaddedX[r - margin:r + margin + 1, c - margin:c + margin + 1]
            patchesData[patchIndex, :, :, :] = patch
            patchesLabels[patchIndex] = y[r - margin, c - margin]
            patchIndex = patchIndex + 1
    if removeZeroLabels:
        # Drop background pixels (label 0) and shift the labels to 0..15
        patchesData = patchesData[patchesLabels > 0, :, :, :]
        patchesLabels = patchesLabels[patchesLabels > 0]
        patchesLabels -= 1
    return patchesData, patchesLabels

def splitTrainTestSet(X, y, testRatio, randomState=345):
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=testRatio, random_state=randomState, stratify=y)
    return X_train, X_test, y_train, y_test
```

Next, read the data and create the dataset:

```python
# Number of land-cover classes
class_num = 16
X = sio.loadmat('Indian_pines_corrected.mat')['indian_pines_corrected']
y = sio.loadmat('Indian_pines_gt.mat')['indian_pines_gt']

# Fraction of samples used for testing
test_ratio = 0.90
# Size of the patch extracted around each pixel
patch_size = 25
# Number of principal components kept after PCA
pca_components = 30

print('Hyperspectral data shape: ', X.shape)
print('Label shape: ', y.shape)

print('\n... ... PCA tranformation ... ...')
X_pca = applyPCA(X, numComponents=pca_components)
print('Data shape after PCA: ', X_pca.shape)

print('\n... ... create data cubes ... ...')
X_pca, y = createImageCubes(X_pca, y, windowSize=patch_size)
print('Data cube X shape: ', X_pca.shape)
print('Data cube y shape: ', y.shape)

print('\n... ... create train & test data ... ...')
Xtrain, Xtest, ytrain, ytest = splitTrainTestSet(X_pca, y, test_ratio)
print('Xtrain shape: ', Xtrain.shape)
print('Xtest shape: ', Xtest.shape)

# Reshape Xtrain and Xtest into the layout Keras expects
Xtrain = Xtrain.reshape(-1, patch_size, patch_size, pca_components, 1)
Xtest = Xtest.reshape(-1, patch_size, patch_size, pca_components, 1)
print('before transpose: Xtrain shape: ', Xtrain.shape)
print('before transpose: Xtest shape: ', Xtest.shape)

# Transpose into PyTorch's (N, C, D, H, W) layout
Xtrain = Xtrain.transpose(0, 4, 3, 1, 2)
Xtest = Xtest.transpose(0, 4, 3, 1, 2)
print('after transpose: Xtrain shape: ', Xtrain.shape)
print('after transpose: Xtest shape: ', Xtest.shape)

""" Training dataset"""
class TrainDS(torch.utils.data.Dataset):
    def __init__(self):
        self.len = Xtrain.shape[0]
        self.x_data = torch.FloatTensor(Xtrain)
        self.y_data = torch.LongTensor(ytrain)

    def __getitem__(self, index):
        # Return the sample and its label for the given index
        return self.x_data[index], self.y_data[index]

    def __len__(self):
        # Return the number of samples
        return self.len

""" Testing dataset"""
class TestDS(torch.utils.data.Dataset):
    def __init__(self):
        self.len = Xtest.shape[0]
        self.x_data = torch.FloatTensor(Xtest)
        self.y_data = torch.LongTensor(ytest)

    def __getitem__(self, index):
        # Return the sample and its label for the given index
        return self.x_data[index], self.y_data[index]

    def __len__(self):
        # Return the number of samples
        return self.len

# Create train_loader and test_loader
trainset = TrainDS()
testset = TestDS()
train_loader = torch.utils.data.DataLoader(dataset=trainset, batch_size=128, shuffle=True, num_workers=2)
test_loader = torch.utils.data.DataLoader(dataset=testset, batch_size=128, shuffle=False, num_workers=2)
```

```
Hyperspectral data shape: (145, 145, 200)
Label shape: (145, 145)

... ... PCA tranformation ... ...
Data shape after PCA: (145, 145, 30)

... ... create data cubes ... ...
Data cube X shape: (10249, 25, 25, 30)
Data cube y shape: (10249,)

... ... create train & test data ... ...
Xtrain shape: (1024, 25, 25, 30)
Xtest shape: (9225, 25, 25, 30)
before transpose: Xtrain shape: (1024, 25, 25, 30, 1)
before transpose: Xtest shape: (9225, 25, 25, 30, 1)
after transpose: Xtrain shape: (1024, 1, 30, 25, 25)
after transpose: Xtest shape: (9225, 1, 30, 25, 25)
```

Start training

```python
# Train on the GPU if available (in Colab, enable it via "Runtime" -> "Change runtime type")
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Move the network to the GPU
net = HybridSN().to(device)

criterion = nn.CrossEntropyLoss()
optimizer = optim.Adam(net.parameters(), lr=0.001)

# Start training
total_loss = 0
for epoch in range(100):
    for i, (inputs, labels) in enumerate(train_loader):
        inputs = inputs.to(device)
        labels = labels.to(device)
        # Reset the optimizer's gradients
        optimizer.zero_grad()
        # Forward pass + backward pass + optimization step
        outputs = net(inputs)
        loss = criterion(outputs, labels)
        loss.backward()
        optimizer.step()
        total_loss += loss.item()
    # "loss avg" = cumulative sum of batch losses divided by the number of epochs so far
    print('[Epoch: %d] [loss avg: %.4f] [current loss: %.4f]' % (epoch + 1, total_loss / (epoch + 1), loss.item()))

print('Finished Training')
```

```
[Epoch: 1] [loss avg: 19.5844] [current loss: 2.2236]
[Epoch: 2] [loss avg: 16.0000] [current loss: 1.4301]
[Epoch: 3] [loss avg: 13.5599] [current loss: 0.9643]
[Epoch: 4] [loss avg: 11.6337] [current loss: 0.7105]
[Epoch: 5] [loss avg: 10.0389] [current loss: 0.4395]
[Epoch: 6] [loss avg: 8.8303] [current loss: 0.2407]
[Epoch: 7] [loss avg: 7.8381] [current loss: 0.1715]
[Epoch: 8] [loss avg: 7.0392] [current loss: 0.1103]
[Epoch: 9] [loss avg: 6.3954] [current loss: 0.0850]
[Epoch: 10] [loss avg: 5.8631] [current loss: 0.0484]
[Epoch: 11] [loss avg: 5.4049] [current loss: 0.1184]
[Epoch: 12] [loss avg: 5.0116] [current loss: 0.1239]
[Epoch: 13] [loss avg: 4.6704] [current loss: 0.0740]
[Epoch: 14] [loss avg: 4.3656] [current loss: 0.0205]
[Epoch: 15] [loss avg: 4.1010] [current loss: 0.0327]
[Epoch: 16] [loss avg: 3.8714] [current loss: 0.0280]
[Epoch: 17] [loss avg: 3.6668] [current loss: 0.0512]
[Epoch: 18] [loss avg: 3.4766] [current loss: 0.0080]
[Epoch: 19] [loss avg: 3.3089] [current loss: 0.0375]
[Epoch: 20] [loss avg: 3.1551] [current loss: 0.0063]
[Epoch: 21] [loss avg: 3.0158] [current loss: 0.0049]
[Epoch: 22] [loss avg: 2.8960] [current loss: 0.0428]
[Epoch: 23] [loss avg: 2.7826] [current loss: 0.0779]
[Epoch: 24] [loss avg: 2.6782] [current loss: 0.0567]
[Epoch: 25] [loss avg: 2.5847] [current loss: 0.0071]
[Epoch: 26] [loss avg: 2.4922] [current loss: 0.0516]
[Epoch: 27] [loss avg: 2.4073] [current loss: 0.0138]
[Epoch: 28] [loss avg: 2.3343] [current loss: 0.0248]
[Epoch: 29] [loss avg: 2.2654] [current loss: 0.0944]
[Epoch: 30] [loss avg: 2.1967] [current loss: 0.0047]
[Epoch: 31] [loss avg: 2.1312] [current loss: 0.0048]
[Epoch: 32] [loss avg: 2.0680] [current loss: 0.0246]
[Epoch: 33] [loss avg: 2.0120] [current loss: 0.0565]
[Epoch: 34] [loss avg: 1.9580] [current loss: 0.0040]
[Epoch: 35] [loss avg: 1.9072] [current loss: 0.0296]
[Epoch: 36] [loss avg: 1.8595] [current loss: 0.0075]
[Epoch: 37] [loss avg: 1.8127] [current loss: 0.0049]
[Epoch: 38] [loss avg: 1.7683] [current loss: 0.0023]
[Epoch: 39] [loss avg: 1.7287] [current loss: 0.0472]
[Epoch: 40] [loss avg: 1.6908] [current loss: 0.0506]
[Epoch: 41] [loss avg: 1.6527] [current loss: 0.0130]
[Epoch: 42] [loss avg: 1.6156] [current loss: 0.0036]
[Epoch: 43] [loss avg: 1.5809] [current loss: 0.0260]
[Epoch: 44] [loss avg: 1.5468] [current loss: 0.0052]
[Epoch: 45] [loss avg: 1.5146] [current loss: 0.0217]
[Epoch: 46] [loss avg: 1.4828] [current loss: 0.0051]
[Epoch: 47] [loss avg: 1.4529] [current loss: 0.0176]
[Epoch: 48] [loss avg: 1.4231] [current loss: 0.0010]
[Epoch: 49] [loss avg: 1.3954] [current loss: 0.0353]
[Epoch: 50] [loss avg: 1.3698] [current loss: 0.0009]
[Epoch: 51] [loss avg: 1.3445] [current loss: 0.0053]
[Epoch: 52] [loss avg: 1.3196] [current loss: 0.0099]
[Epoch: 53] [loss avg: 1.2956] [current loss: 0.0164]
[Epoch: 54] [loss avg: 1.2722] [current loss: 0.0056]
[Epoch: 55] [loss avg: 1.2501] [current loss: 0.0006]
[Epoch: 56] [loss avg: 1.2284] [current loss: 0.0044]
[Epoch: 57] [loss avg: 1.2086] [current loss: 0.0006]
[Epoch: 58] [loss avg: 1.1886] [current loss: 0.0010]
[Epoch: 59] [loss avg: 1.1705] [current loss: 0.0097]
[Epoch: 60] [loss avg: 1.1521] [current loss: 0.0008]
[Epoch: 61] [loss avg: 1.1344] [current loss: 0.0001]
[Epoch: 62] [loss avg: 1.1172] [current loss: 0.0056]
[Epoch: 63] [loss avg: 1.1016] [current loss: 0.0064]
[Epoch: 64] [loss avg: 1.0868] [current loss: 0.0178]
[Epoch: 65] [loss avg: 1.0741] [current loss: 0.0257]
[Epoch: 66] [loss avg: 1.0674] [current loss: 0.0535]
[Epoch: 67] [loss avg: 1.0555] [current loss: 0.0115]
[Epoch: 68] [loss avg: 1.0449] [current loss: 0.0736]
[Epoch: 69] [loss avg: 1.0371] [current loss: 0.1449]
[Epoch: 70] [loss avg: 1.0269] [current loss: 0.0515]
[Epoch: 71] [loss avg: 1.0193] [current loss: 0.0283]
[Epoch: 72] [loss avg: 1.0093] [current loss: 0.0576]
[Epoch: 73] [loss avg: 0.9980] [current loss: 0.0195]
[Epoch: 74] [loss avg: 0.9864] [current loss: 0.0095]
[Epoch: 75] [loss avg: 0.9746] [current loss: 0.0385]
[Epoch: 76] [loss avg: 0.9645] [current loss: 0.0139]
[Epoch: 77] [loss avg: 0.9550] [current loss: 0.1231]
[Epoch: 78] [loss avg: 0.9449] [current loss: 0.0402]
[Epoch: 79] [loss avg: 0.9345] [current loss: 0.0021]
[Epoch: 80] [loss avg: 0.9244] [current loss: 0.0119]
[Epoch: 81] [loss avg: 0.9168] [current loss: 0.0020]
[Epoch: 82] [loss avg: 0.9088] [current loss: 0.0023]
[Epoch: 83] [loss avg: 0.9006] [current loss: 0.0344]
[Epoch: 84] [loss avg: 0.8927] [current loss: 0.0765]
[Epoch: 85] [loss avg: 0.8828] [current loss: 0.0108]
[Epoch: 86] [loss avg: 0.8736] [current loss: 0.0029]
[Epoch: 87] [loss avg: 0.8647] [current loss: 0.0084]
[Epoch: 88] [loss avg: 0.8561] [current loss: 0.0017]
[Epoch: 89] [loss avg: 0.8484] [current loss: 0.0444]
[Epoch: 90] [loss avg: 0.8408] [current loss: 0.0044]
[Epoch: 91] [loss avg: 0.8327] [current loss: 0.0009]
[Epoch: 92] [loss avg: 0.8249] [current loss: 0.0085]
[Epoch: 93] [loss avg: 0.8175] [current loss: 0.0292]
[Epoch: 94] [loss avg: 0.8094] [current loss: 0.0105]
[Epoch: 95] [loss avg: 0.8018] [current loss: 0.0015]
[Epoch: 96] [loss avg: 0.7946] [current loss: 0.0396]
[Epoch: 97] [loss avg: 0.7880] [current loss: 0.0086]
[Epoch: 98] [loss avg: 0.7815] [current loss: 0.0079]
[Epoch: 99] [loss avg: 0.7744] [current loss: 0.0035]
[Epoch: 100] [loss avg: 0.7674] [current loss: 0.0001]
Finished Training
```

Model testing

```python
count = 0
# Model testing
for inputs, _ in test_loader:
    inputs = inputs.to(device)
    outputs = net(inputs)
    outputs = np.argmax(outputs.detach().cpu().numpy(), axis=1)
    if count == 0:
        y_pred_test = outputs
        count = 1
    else:
        y_pred_test = np.concatenate((y_pred_test, outputs))

# Generate the classification report
classification = classification_report(ytest, y_pred_test, digits=4)
print(classification)
```

Classification results, first run:

```
              precision    recall  f1-score   support

         0.0     0.9070    0.9512    0.9286        41
         1.0     0.9892    0.9300    0.9587      1285
         2.0     0.9552    0.9987    0.9764       747
         3.0     0.9810    0.9718    0.9764       213
         4.0     0.9954    0.9931    0.9942       435
         5.0     0.9703    0.9939    0.9820       657
         6.0     0.9615    1.0000    0.9804        25
         7.0     0.9977    1.0000    0.9988       430
         8.0     0.9412    0.8889    0.9143        18
         9.0     0.9742    0.9909    0.9824       875
        10.0     0.9694    0.9878    0.9785      2210
        11.0     0.9660    0.9588    0.9624       534
        12.0     0.9714    0.9189    0.9444       185
        13.0     0.9973    0.9895    0.9934      1139
        14.0     0.9913    0.9885    0.9899       347
        15.0     0.9359    0.8690    0.9012        84

    accuracy                         0.9776      9225
   macro avg     0.9690    0.9644    0.9664      9225
weighted avg     0.9778    0.9776    0.9774      9225
```

Classification results, second run:

```
              precision    recall  f1-score   support

         0.0     0.9524    0.9756    0.9639        41
         1.0     0.9876    0.9331    0.9596      1285
         2.0     0.9562    0.9933    0.9744       747
         3.0     0.9589    0.9859    0.9722       213
         4.0     0.9795    0.9908    0.9851       435
         5.0     0.9746    0.9939    0.9842       657
         6.0     0.8276    0.9600    0.8889        25
         7.0     0.9977    0.9977    0.9977       430
         8.0     0.8889    0.8889    0.8889        18
         9.0     0.9729    0.9863    0.9796       875
        10.0     0.9711    0.9878    0.9794      2210
        11.0     0.9551    0.9551    0.9551       534
        12.0     0.9817    0.8703    0.9226       185
        13.0     0.9956    0.9921    0.9938      1139
        14.0     0.9856    0.9885    0.9871       347
        15.0     0.9155    0.7738    0.8387        84

    accuracy                         0.9755      9225
   macro avg     0.9563    0.9546    0.9544      9225
weighted avg     0.9757    0.9755    0.9753      9225
```

Questions

What is the difference between 3D and 2D convolution?

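The kernel of a 2D convolution has size $(c, h, w)$. It extracts good discriminative features in the two spatial dimensions. Because it sums over all input channels at once, it cannot extract good discriminative features along a third dimension such as the spectral axis.

The kernel of a 3D convolution has size $(c, d, h, w)$, where $d$ is the extra, fourth dimension. Depending on the application, it is the time axis in video or the spectral band axis in HSI. A 3D convolution extracts good discriminative features across all three dimensions, but its computation is more complex.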
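A rough cost comparison for HybridSN's first layer illustrates the trade-off. The 2D alternative is hypothetical: it treats the 30 PCA bands as input channels and uses eight 3×3 filters. Both helpers count weights plus biases and multiply-adds for stride 1 without padding.

```python
def conv3d_cost(in_ch, out_ch, kd, kh, kw, out_d, out_h, out_w):
    params = out_ch * in_ch * kd * kh * kw + out_ch
    macs = out_ch * out_d * out_h * out_w * in_ch * kd * kh * kw
    return params, macs

def conv2d_cost(in_ch, out_ch, kh, kw, out_h, out_w):
    params = out_ch * in_ch * kh * kw + out_ch
    macs = out_ch * out_h * out_w * in_ch * kh * kw
    return params, macs

# HybridSN conv1: 1 -> 8 channels, 7x3x3 kernel, (30, 25, 25) -> (24, 23, 23)
print(conv3d_cost(1, 8, 7, 3, 3, 24, 23, 23))  # (512, 6398784)
# Hypothetical 2D layer: 30 bands as channels, 8 filters of 3x3, (25, 25) -> (23, 23)
print(conv2d_cost(30, 8, 3, 3, 23, 23))        # (2168, 1142640)
```

The 3D layer needs fewer weights because one 7×3×3 kernel is shared across the spectral axis. Even so, it performs about 5.6 times as many multiply-adds, and its output keeps a spectral axis with 24 positions.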

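Why do the classification results differ between runs?

The model was not switched to evaluation mode with model.eval().

In train mode, a dropout layer zeroes each activation with probability p and rescales the survivors. In PyTorch's nn.Dropout, p is the drop probability, so each activation is kept with probability 1 - p. A batchnorm layer normalizes with the mean and var of the current batch and keeps updating its running estimates.

In eval mode, a dropout layer lets every activation pass through. A batchnorm layer stops computing and updating mean and var and uses the values learned during training.

In the author's experiments, the classification results no longer changed between runs once this mode was set.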
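The NumPy sketch below shows why train mode makes predictions random. Inverted dropout zeroes each element with probability p and rescales the rest by 1/(1 - p), while eval mode passes the input through unchanged. This mirrors the documented behavior of PyTorch's `nn.Dropout` but is not its implementation, and `dropout` here is a hypothetical helper. In the test loop above, calling `net.eval()` before the loop (and wrapping it in `torch.no_grad()`) would make the predictions deterministic.

```python
import numpy as np

def dropout(x, p, training, rng):
    """Inverted dropout: p is the probability of zeroing an element (PyTorch's convention)."""
    if not training:
        return x                          # eval mode: identity
    keep = rng.random(x.shape) >= p       # keep each element with probability 1 - p
    return x * keep / (1.0 - p)           # rescale so the expected value is unchanged

rng = np.random.default_rng()
x = np.ones(8)
print(dropout(x, 0.4, True, rng))   # random mask: differs from call to call
print(dropout(x, 0.4, True, rng))
print(dropout(x, 0.4, False, rng))  # eval mode: identical to x every time
```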

��θĽ��߹���ͼ��ķ�������?

����ע�������ƺͲв�ṹ,����������ȡ�