- 🍨 本文为🔗365天深度学习训练营 内部限免文章(版权归 K同学啊 所有)

- 🍦 参考文章地址: 🔗第四周:猴痘病识别 | 365天深度学习训练营

- 🍖 作者:K同学啊 | 接辅导、程序定制

文章目录

我的环境:

- 语言环境:Python3.6.8

- 编译器:jupyter notebook

- 深度学习环境:TensorFlow2.3

一、前期工作

1. 设置 GPU

import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:

gpu0 = gpus[0] #如果有多个GPU,仅使用第0个GPU

tf.config.experimental.set_memory_growth(gpu0, True) #设置GPU显存用量按需使用

tf.config.set_visible_devices([gpu0],"GPU")

gpus

[PhysicalDevice(name='/physical_device:GPU:0', device_type='GPU')]

2. 导入数据

import os,PIL,pathlib

import matplotlib.pyplot as plt

import numpy as np

from tensorflow import keras

from tensorflow.keras import layers,models

data_dir = "D:/jupyter notebook/DL-100-days/datasets/45-data/" # 图片存放目录

data_dir = pathlib.Path(data_dir) # 构造 pathlib 模块下的 Path 对象

3. 查看数据

数据集一共分为 Monkeypox、Others 两类,分别存放于 45-data 文件夹中以各自名字命名的子文件夹中

image_count = len(list(data_dir.glob('*/*.jpg'))) # 使用 Path 对象的 glob() 方法获取 45-data 目录下的两个文件夹所有图片

print("图片总数为:",image_count)

图片总数为: 2142

4. 可视化图片

# 返回图片路径

Monkeypox = list(data_dir.glob('Monkeypox/*.jpg')) # 使用 Path 对象的 glob() 方法获取 45-data/Monkeypox 目录下的所有图片对象

PIL.Image.open(str(Monkeypox[0])) # 读取第一张图片

二、数据预处理

1. 加载数据

使用 image_dataset_from_directory 方法将磁盘中的数据加载到 tf.data.Dataset 中

batch_size = 32 # 批量大小,一次训练 32 张图片

img_height = 224 # 图片高度,把图片进行统一处理,因为图片尺寸不一,需要我们自己定义图片高度

img_width = 224 # 图片宽度,把图片进行统一处理,因为图片尺寸不一,需要我们自己定义图片宽度

tf.keras.preprocessing.image_dataset_from_directory() 的参数:

- directory, # 存放目录

- labels=“inferred”, # 图片标签

- label_mode=“int”, # 图片模式

- class_names=None, # 分类

- color_mode=“rgb”, # 颜色模式

- batch_size=32, # 批量大小

- image_size=(256, 256), # 从磁盘读取数据后将其重新调整大小。

- shuffle=True, # 是否打乱

- seed=None, # 随机种子

- validation_split=None, # 0 和 1 之间的数,可保留一部分数据用于验证。如:0.2=20%

- subset=None, # “training” 或 “validation”。仅在设置 validation_split 时使用。

- interpolation=“bilinear”, # 插值方式:双线性插值

- follow_links=False, # 是否跟踪类子目录中的符号链接

#!pip install tf-nightly

train_ds = tf.keras.preprocessing.image_dataset_from_directory(

data_dir,

validation_split=0.2,

subset="training",

seed=123,

image_size=(img_height, img_width),

batch_size=batch_size)

输出:

Found 2142 files belonging to 2 classes.

Using 1714 files for training.

val_ds = tf.keras.preprocessing.image_dataset_from_directory(

data_dir,

validation_split=0.2,

subset="validation",

seed=123,

image_size=(img_height, img_width),

batch_size=batch_size)

输出:

Found 2142 files belonging to 2 classes.

Using 428 files for validation.

class_names = train_ds.class_names

print(class_names)

输出:

[‘Monkeypox’, ‘Others’]

2. 可视化数据

# 设置画板的宽高,单位为英寸

plt.figure(figsize=(20, 10))

# train_ds.take(1) 是指从训练集数据集中取出 1 个 batch 大小的数据,返回值 images 存放图像数据,labels 存放图像的标签。

for images, labels in train_ds.take(1):

for i in range(20):

# plt.subplot('行', '列','编号') 绘制画板的子区域 将整个图像窗口分为5行10列,当前位置为 i + 1

ax = plt.subplot(5, 10, i + 1)

# imshow() 其实就是将数组的值以图片的形式展示出来,数组的值对应着不同的颜色深浅,而数值的横纵坐标就是数组的索引

plt.imshow(images[i].numpy().astype("uint8"))

# labels[i] 的值为 0, 1, 2, 3,映射到 class_names 可以得到图片的类别

plt.title(class_names[labels[i]])

# 不显示轴线

plt.axis("off")

3. 再次检查数据

for image_batch, labels_batch in train_ds:

print(image_batch.shape)

print(labels_batch.shape)

break

输出

(32, 224, 224, 3)

(32,)

- image_batch : (32, 224, 224, 3) 第一个32是批次尺寸,224是我们修改后的宽高,3是RGB三个通道

- labels_batch : (32,) 一维,32个标签

4. 配置数据集

AUTOTUNE = tf.data.AUTOTUNE

train_ds = train_ds.cache().shuffle(1000).prefetch(buffer_size=AUTOTUNE)

val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)

- shuffle() : 数据乱序

- prefetch() : 预取数据加速运行

- cache() : 数据集缓存到内存中,加速

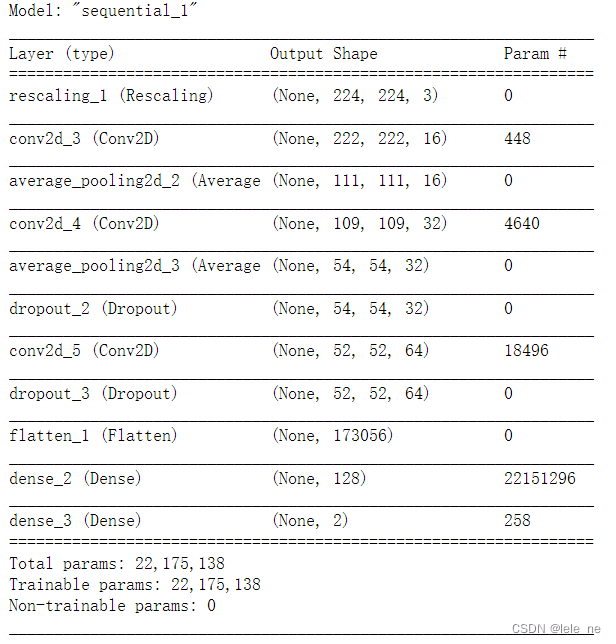

三、构建网络

num_classes = 2

"""

关于卷积核的计算不懂的可以参考文章:https://blog.csdn.net/qq_38251616/article/details/114278995

layers.Dropout(0.4) 作用是防止过拟合,提高模型的泛化能力。

在上一篇文章花朵识别中,训练准确率与验证准确率相差巨大就是由于模型过拟合导致的

关于Dropout层的更多介绍可以参考文章:https://mtyjkh.blog.csdn.net/article/details/115826689

"""

model = models.Sequential([

layers.experimental.preprocessing.Rescaling(1./255, input_shape=(img_height, img_width, 3)),

layers.Conv2D(16, (3, 3), activation='relu', input_shape=(img_height, img_width, 3)), # 卷积层1,卷积核3*3

layers.AveragePooling2D((2, 2)), # 池化层1,2*2采样

layers.Conv2D(32, (3, 3), activation='relu'), # 卷积层2,卷积核3*3

layers.AveragePooling2D((2, 2)), # 池化层2,2*2采样

layers.Dropout(0.3),

layers.Conv2D(64, (3, 3), activation='relu'), # 卷积层3,卷积核3*3

layers.Dropout(0.3),

layers.Flatten(), # Flatten层,连接卷积层与全连接层

layers.Dense(128, activation='relu'), # 全连接层,特征进一步提取

layers.Dense(num_classes) # 输出层,输出预期结果

])

model.summary() # 打印网络结构

四、编译

# 设置优化器

opt = tf.keras.optimizers.Adam(learning_rate=1e-4)

model.compile(optimizer=opt,

loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),

metrics=['accuracy'])

五、训练模型

from tensorflow.keras.callbacks import ModelCheckpoint

epochs = 50

checkpointer = ModelCheckpoint('best_model.h5',

monitor='val_accuracy',

verbose=1,

save_best_only=True,

save_weights_only=True)

history = model.fit(train_ds,

validation_data=val_ds,

epochs=epochs,

callbacks=[checkpointer])

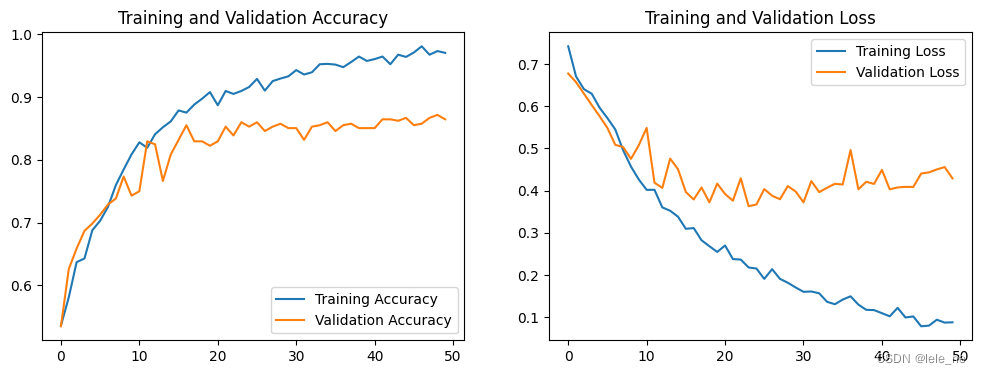

六、模型评估

1. Loss 与 Accuracy 图

acc = history.history['accuracy']

val_acc = history.history['val_accuracy']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, acc, label='Training Accuracy')

plt.plot(epochs_range, val_acc, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, loss, label='Training Loss')

plt.plot(epochs_range, val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

2. 指定图片进行预测

# 加载效果最好的模型权重

model.load_weights('best_model.h5')

from PIL import Image

import numpy as np

# img = Image.open("D:/jupyter notebook/DL-100-days/datasets/45-data/Monkeypox/M06_01_04.jpg") #这里选择你需要预测的图片

img = Image.open("D:/jupyter notebook/DL-100-days/datasets/45-data/Others/NM15_02_11.jpg") #这里选择你需要预测的图片

img = np.array(img)

image = tf.image.resize(img, [img_height, img_width])

img_array = tf.expand_dims(image, 0)

predictions = model.predict(img_array) # 这里选用你已经训练好的模型

print("预测结果为:",class_names[np.argmax(predictions)])

预测结果为: Others