$$\hat{\mu}_B=\frac{1}{|B|}\sum_{i\in B}x_i$$

$$\hat{\sigma}_B^2=\frac{1}{|B|}\sum_{i\in B}(x_i-\hat{\mu}_B)^2+\epsilon$$

where $\epsilon$ is a very small constant that keeps the divisor from being 0.

$$\mathrm{BN}(x_i)=\gamma\frac{x_i-\hat{\mu}_B}{\hat{\sigma}_B}+\beta$$

where $B$ is the mini-batch (mini_batch_data), $\gamma$ is the learnable scale, $\beta$ is the learnable shift, $\hat{\mu}_B$ is the mini-batch mean, and $\hat{\sigma}_B=\sqrt{\hat{\sigma}_B^2}$ is the mini-batch standard deviation.
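The three formulas can be traced by hand on one feature of a small mini-batch. This is a minimal pure-Python sketch using plain floats and no PyTorch. `batch_norm_1d` is a hypothetical helper for illustration only. It adds `eps` under the square root, as the PyTorch code does, rather than inside $\hat{\sigma}_B^2$, and the two placements give the same result.

```python
import math

def batch_norm_1d(xs, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature across a mini-batch, then scale and shift."""
    n = len(xs)
    mu = sum(xs) / n                          # mini-batch mean
    var = sum((x - mu) ** 2 for x in xs) / n  # variance, dividing by |B|
    sigma = math.sqrt(var + eps)              # eps keeps the divisor away from 0
    return [gamma * (x - mu) / sigma + beta for x in xs]

batch = [1.0, 2.0, 3.0, 4.0]
ys = batch_norm_1d(batch)                  # mean ~0, variance ~1
ys2 = batch_norm_1d(batch, gamma=2.0, beta=3.0)  # mean ~3, std ~2
print([round(y, 4) for y in ys])
```

With the default `gamma=1, beta=0`, the output has zero mean and (almost exactly, because of `eps`) unit variance. The learnable `gamma` and `beta` then move it to whatever scale and offset training prefers.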

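import torch
from torch import nn
from d2l import torch as d2l

def batch_norm(X, gamma, beta, moving_mean, moving_var, eps, momentum):
    '''
    mini_batch_norm

    :param X: input data
    :param gamma: learnable scale
    :param beta: learnable shift
    :param moving_mean: mean of the whole dataset, estimated from the means of the mini-batches; used at prediction time
    :param moving_var: variance of the whole dataset, estimated from the variances of the mini-batches; used at prediction time
    :param eps: small constant; the default differs between frameworks, typically 1e-5
    :param momentum: used to update moving_mean and moving_var; the default differs between frameworks, typically 0.9
    '''
    # Prediction mode:
    # gradient computation is not enabled
    if not torch.is_grad_enabled():
        # Use the global mean and variance here: at prediction time there may be
        # only a single image, so there is no notion of a batch
        X_hat = (X - moving_mean) / torch.sqrt(moving_var + eps)
    else:
        # X has either 2 or 4 dimensions:
        # 2 dims: fully connected layer
        # 4 dims: 2D convolutional layer
        assert len(X.shape) in (2, 4)
        if len(X.shape) == 2:
            # Fully connected layer: compute the mean and variance per feature.
            # Reducing over dim 0 (the batch) gives the mean of each feature
            # across samples, not the mean of each sample; features live in dim 1.
            # Without keepdim, dim 0 disappears: mean.shape = (n,),
            # which broadcasts against X like a 1 x n tensor
            mean = X.mean(dim=0)
            # Per-feature variance
            var = ((X - mean) ** 2).mean(dim=0)
        else:
            # 2D convolutional layer: compute the mean and variance per channel.
            # dim 0: batch size, dim 1: output channels of the previous layer,
            # dim 2: height, dim 3: width.
            # Reduce over dims 0, 2 and 3; channels live in dim 1.
            # keepdim=True keeps the 1 x n x 1 x 1 shape so it broadcasts against X
            mean = X.mean(dim=(0, 2, 3), keepdim=True)
            var = ((X - mean) ** 2).mean(dim=(0, 2, 3), keepdim=True)
        # mean and var are computed on the current mini_batch_data
        # X_hat has the same shape as X
        X_hat = (X - mean) / torch.sqrt(var + eps)
        # Update the running estimates with an exponential moving average
        moving_mean = momentum * moving_mean + (1.0 - momentum) * mean
        moving_var = momentum * moving_var + (1.0 - momentum) * var
    # Scale by gamma and shift by beta.
    # moving_mean and moving_var are only used for prediction; during training
    # the mini-batch statistics are used instead (the running ones are only updated).
    # gamma.shape = beta.shape = 1 x n (FC) or 1 x n x 1 x 1 (conv)
    Y = gamma * X_hat + beta
    return Y, moving_mean.data, moving_var.data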
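In the convolutional branch of `batch_norm`, `X.mean(dim=(0, 2, 3), keepdim=True)` averages over the batch, height and width, which leaves one mean per channel. The pure-Python sketch below performs that reduction on a tiny N x C x H x W input stored as nested lists. `channel_means` is a hypothetical helper for illustration. On real tensors, PyTorch does the same reduction in a single call.

```python
def channel_means(X):
    """Mean of each channel over batch (dim 0), height (dim 2) and width (dim 3).

    X is a nested list with shape N x C x H x W.
    """
    n, c = len(X), len(X[0])
    h, w = len(X[0][0]), len(X[0][0][0])
    means = []
    for ch in range(c):
        total = sum(X[i][ch][r][col]
                    for i in range(n) for r in range(h) for col in range(w))
        means.append(total / (n * h * w))
    return means  # one value per channel: the 1 x C x 1 x 1 result, flattened

# Two samples, two channels, 2 x 2 feature maps
X = [
    [[[1, 2], [3, 4]], [[10, 10], [10, 10]]],
    [[[5, 6], [7, 8]], [[20, 20], [20, 20]]],
]
print(channel_means(X))
```

Channel 0 pools all eight values 1..8 from both samples. Channel 1 pools the 10s and 20s. Each channel therefore gets a single statistic shared by every spatial position and every sample, which is why `gamma` and `beta` have shape 1 x C x 1 x 1 for convolutional layers.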

class BatchNorm(nn.Module):
    '''
    BatchNorm layer
    '''
    def __init__(self, num_features, num_dims):
        '''
        :param num_features: number of output features of a fully connected layer,
            or number of output channels of the preceding convolutional layer
        :param num_dims: 2 for a fully connected layer, 4 for a 2D convolutional layer
        '''
        super().__init__()
        if num_dims == 2:
            shape = (1, num_features)
        else:
            shape = (1, num_features, 1, 1)
        # Initialize the learnable scale to ones; with zeros, the multiplication
        # in the formula would wipe out X_hat entirely
        self.gamma = nn.Parameter(torch.ones(shape))
        # Initialize the learnable shift to zeros
        self.beta = nn.Parameter(torch.zeros(shape))
        # Initialize the running (whole-dataset) variance to ones
        self.moving_var = torch.ones(shape)
        # Initialize the running (whole-dataset) mean to zeros
        self.moving_mean = torch.zeros(shape)

    def forward(self, X):
        # The mini-batch X and the running statistics must be on the same device
        if self.moving_mean.device != X.device:
            self.moving_mean = self.moving_mean.to(X.device)
            self.moving_var = self.moving_var.to(X.device)
        Y, self.moving_mean, self.moving_var = batch_norm(
            X, self.gamma, self.beta, self.moving_mean,
            self.moving_var, eps=1e-5, momentum=0.9)
        return Y
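At prediction time, `batch_norm` normalizes with `moving_mean` and `moving_var` because a single image gives no usable batch statistics. With a batch of one, the batch mean equals the input and the batch variance is 0, so every feature would normalize to exactly 0 whatever the input. The pure-Python sketch below shows this for one feature. The running statistics are made-up values standing in for ones accumulated during training.

```python
import math

def normalize(x, mean, var, eps=1e-5):
    return (x - mean) / math.sqrt(var + eps)

single_input = 3.7

# Training-mode statistics of a batch containing only this input
batch_mean = single_input
batch_var = 0.0
print(normalize(single_input, batch_mean, batch_var))  # 0.0 for any input

# Prediction mode: use running estimates instead (hypothetical values)
moving_mean, moving_var = 2.0, 4.0
print(normalize(single_input, moving_mean, moving_var))
```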

Applying BatchNorm to the LeNet model

net = nn.Sequential(
    nn.Conv2d(1, 6, kernel_size=5),
    # 6 = output channels of the 2D conv layer; num_dims=4 means it normalizes a 2D conv output
    BatchNorm(6, num_dims=4),
    nn.Sigmoid(),
    nn.AvgPool2d(kernel_size=2, stride=2),
    nn.Conv2d(6, 16, kernel_size=5),
    # 16 = output channels of the 2D conv layer; num_dims=4 means it normalizes a 2D conv output
    BatchNorm(16, num_dims=4),
    nn.Sigmoid(),
    nn.AvgPool2d(kernel_size=2, stride=2),
    nn.Flatten(),
    # 120 = output features of the fully connected layer; num_dims=2 means it normalizes FC outputs
    nn.Linear(16*4*4, 120), BatchNorm(120, num_dims=2),
    nn.Sigmoid(),
    nn.Linear(120, 84),
    # 84 = output features of the fully connected layer; num_dims=2 means it normalizes FC outputs
    BatchNorm(84, num_dims=2),
    nn.Sigmoid(),
    nn.Linear(84, 10))
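`nn.Linear(16*4*4, 120)` expects 256 inputs because of how the 28 x 28 Fashion-MNIST images shrink as they pass through the layers. BatchNorm and Sigmoid leave the shape unchanged. The sketch below does the arithmetic with the usual output-size formula `(size + 2*padding - kernel) // stride + 1`. `out_size` is a hypothetical helper, and every layer in this network uses zero padding.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Spatial output size of a conv or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1

s = 28                               # Fashion-MNIST images are 28 x 28
s = out_size(s, kernel=5)            # Conv2d(1, 6, kernel_size=5)   -> 24
s = out_size(s, kernel=2, stride=2)  # AvgPool2d(2, stride=2)        -> 12
s = out_size(s, kernel=5)            # Conv2d(6, 16, kernel_size=5)  -> 8
s = out_size(s, kernel=2, stride=2)  # AvgPool2d(2, stride=2)        -> 4
print(16 * s * s)                    # flattened features fed to nn.Linear
```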

Training the network on the Fashion-MNIST dataset

lr, num_epochs, batch_size = 1.0, 10, 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)
d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

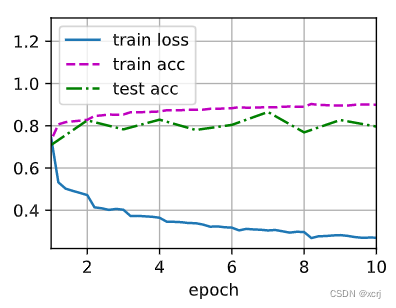

loss 0.270, train acc 0.900, test acc 0.796

25335.7 examples/sec on cuda:0

# Inspect the parameters of net[1] (the first BatchNorm layer): the learned scale gamma and the learned shift beta
net[1].gamma.reshape((-1,)), net[1].beta.reshape((-1,))

(tensor([2.4066, 2.6878, 3.8948, 0.4407, 2.3572, 4.0047], device='cuda:0',
        grad_fn=<ReshapeAliasBackward0>),
 tensor([ 0.6224, 0.5743, -3.8525, 0.8712, 2.7259, -2.4347], device='cuda:0',
        grad_fn=<ReshapeAliasBackward0>))

Concise implementation

net = nn.Sequential(
    nn.Conv2d(1, 6, kernel_size=5),
    # 6 output channels
    nn.BatchNorm2d(6),
    nn.Sigmoid(),
    nn.AvgPool2d(kernel_size=2, stride=2),
    nn.Conv2d(6, 16, kernel_size=5),
    # 16 output channels
    nn.BatchNorm2d(16),
    nn.Sigmoid(),
    nn.AvgPool2d(kernel_size=2, stride=2),
    nn.Flatten(),
    nn.Linear(256, 120),
    # 120 features
    nn.BatchNorm1d(120),
    nn.Sigmoid(),
    nn.Linear(120, 84),
    # 84 features
    nn.BatchNorm1d(84),
    nn.Sigmoid(),
    nn.Linear(84, 10))
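Keep one difference in mind when switching to `nn.BatchNorm2d`/`nn.BatchNorm1d`. PyTorch's `momentum` argument (default 0.1) weights the *new* batch statistic: `running = (1 - momentum) * running + momentum * batch`. The hand-written `batch_norm` uses `momentum=0.9` to weight the *old* running value instead. The two conventions agree when one momentum is 1 minus the other, which the pure-Python sketch below checks for the mean on some made-up batch means. This covers only the update rule. PyTorch's documentation also notes that its running variance is updated with the unbiased estimator, so `running_var` will not match the hand-written `moving_var` exactly.

```python
def update_handwritten(running, batch_stat, momentum=0.9):
    # Hand-written batch_norm: momentum weights the old running value
    return momentum * running + (1.0 - momentum) * batch_stat

def update_pytorch_style(running, batch_stat, momentum=0.1):
    # nn.BatchNorm convention: momentum weights the new batch statistic
    return (1.0 - momentum) * running + momentum * batch_stat

batch_means = [5.0, 4.0, 6.0, 5.5, 4.5]  # made-up mini-batch means
a = b = 0.0  # both start at zero, like moving_mean / running_mean
for m in batch_means:
    a = update_handwritten(a, m)
    b = update_pytorch_style(b, m)
print(a, b)  # equal up to floating-point rounding
```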

Training the model with the same hyperparameters

d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

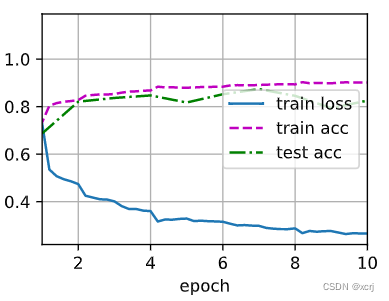

loss 0.266, train acc 0.902, test acc 0.826

48070.5 examples/sec on cuda:0

Summary

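- Role of batch normalization: when the parameters change during training, it keeps the resulting changes in each layer's outputs from becoming too large.

- Role of batch normalization: it keeps the data flowing through the intermediate layers stable.

- Batch normalization fixes the mean and variance within each mini-batch, then learns a suitable scale (gamma) and shift (beta).

- Fully connected layer → batch normalization (applied to the fully connected layer's output features) → activation function

- Convolutional layer → batch normalization (applied per output channel of the convolutional layer) → activation function

- Fully connected layer → batch normalization → activation function, with no need for dropout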
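Learning a scale and shift means batch normalization can even undo itself. If training drives γ to the mini-batch standard deviation and β to the mini-batch mean, the layer returns its input unchanged, so the normalization never removes representational power. The pure-Python sketch below checks this for one feature, using illustrative numbers and a hypothetical `bn` helper.

```python
import math

def bn(xs, gamma, beta, eps=1e-5):
    n = len(xs)
    mu = sum(xs) / n
    var = sum((x - mu) ** 2 for x in xs) / n
    return [gamma * (x - mu) / math.sqrt(var + eps) + beta for x in xs]

xs = [0.5, 1.5, -2.0, 4.0]
mu = sum(xs) / len(xs)
var = sum((x - mu) ** 2 for x in xs) / len(xs)

# gamma = sqrt(var + eps) and beta = mu reverse the normalization
ys = bn(xs, gamma=math.sqrt(var + 1e-5), beta=mu)
print(all(abs(x - y) < 1e-9 for x, y in zip(xs, ys)))
```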

query

Model stability in general: convergence vs. non-convergence

Numerical stability:

- Sensible weight initialization

- Sensible activation functions

- Normalization: batch normalization

- Network architecture: turn multiplications into additions

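!!!Numerical stability:

- A: Numerical stability is about preventing vanishing and exploding gradients, that is, keeping both each layer's outputs and the backward gradients stable.

- A: Sensible weight initialization gives numerical stability at the start of training. It keeps the outputs stable when training begins, but cannot guarantee stability throughout training.

- A: Activation functions close to y=x keep each layer's outputs and the backward gradients stable.

- A: Normalization gives numerical stability during training: it keeps the intermediate data stable throughout training.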
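Vanishing and exploding gradients come down to multiplying many per-layer factors during backpropagation. A rough numeric illustration follows, assuming a hypothetical 50-layer chain in which every layer scales the gradient by the same factor.

```python
depth = 50

# Each layer shrinks the gradient a little: the product vanishes
vanishing = 0.8 ** depth
# Each layer enlarges the gradient a little: the product explodes
exploding = 1.2 ** depth

print(f"0.8^{depth} = {vanishing:.2e}")
print(f"1.2^{depth} = {exploding:.2e}")
```

A factor only 20% away from 1 already changes the gradient by orders of magnitude at this depth. Sensible initialization, near-identity activations and normalization all aim to keep the factors close to 1.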

Batch normalization in an MLP

- A: For a shallow network the effect is small; the deeper the network, the more it helps.

batch normalization

- A: It is also a linear transformation, equivalent to a linear layer that applies a scale and a shift.
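The claim that batch normalization is a linear transformation is easiest to see at prediction time, when the statistics are fixed. Per feature, it reduces to `a * x + b` with `a = gamma / sqrt(moving_var + eps)` and `b = beta - a * moving_mean`. The pure-Python check below uses made-up parameter values.

```python
import math

gamma, beta = 1.5, -0.3
moving_mean, moving_var, eps = 2.0, 0.25, 1e-5

def bn_inference(x):
    # Prediction-mode batch normalization for one feature
    return gamma * (x - moving_mean) / math.sqrt(moving_var + eps) + beta

# Equivalent affine map: a single scale and shift
a = gamma / math.sqrt(moving_var + eps)
b = beta - a * moving_mean

for x in [-1.0, 0.0, 2.0, 3.5]:
    assert abs(bn_inference(x) - (a * x + b)) < 1e-9
print(a, b)
```

This is also why, for inference, a batch normalization layer is commonly folded into the weights and bias of the layer before it.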

Why batch normalization shortens convergence time

- A: It keeps the gradients stable, so training can use a larger learning rate and the weights are updated faster.

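Tuning num_epochs, batch_size, lr

- A: Hyperparameter tuning:

- A: num_epochs: a larger value does no harm, it only wastes some resources. Training can be stopped early, and next time num_epochs can be set directly to the suitable value.

- A: batch_size mainly depends on memory size. Increase batch_size until the number of samples processed per second stops changing, which gives the maximum; alternatively, set the maximum according to the available memory plus GPU memory.

- A: lr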
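Both tips can be sketched as small helpers. Early stopping becomes a patience rule over validation losses, and the batch_size search keeps growing the batch while samples per second still improves noticeably. `early_stopping_epoch`, `patience`, `pick_batch_size` and `min_gain` are hypothetical names, and all numbers are invented. In practice the throughput would come from timing a few training steps at each batch size, for example using the examples/sec figure that `d2l.train_ch6` prints. Neither helper is part of d2l.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Index of the best epoch, stopping once the validation loss has not
    improved for `patience` consecutive epochs."""
    best_loss, best_epoch, stale = float("inf"), 0, 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch, stale = loss, epoch, 0
        else:
            stale += 1
            if stale >= patience:
                break  # stop training here
    return best_epoch


def pick_batch_size(throughput, min_gain=0.05):
    """Largest batch size reached before samples/sec stops improving.

    throughput maps batch size -> samples per second, for sizes that fit in
    memory; the search stops at the first step whose relative gain < min_gain.
    """
    sizes = sorted(throughput)
    best = sizes[0]
    for prev, cur in zip(sizes, sizes[1:]):
        gain = (throughput[cur] - throughput[prev]) / throughput[prev]
        if gain < min_gain:
            break
        best = cur
    return best


# Invented numbers for illustration only
losses = [0.90, 0.60, 0.45, 0.40, 0.41, 0.42, 0.39]
measured = {64: 12000.0, 128: 20000.0, 256: 26000.0, 512: 26500.0, 1024: 26600.0}
print(early_stopping_epoch(losses))
print(pick_batch_size(measured))
```

With these inputs, training stops after two non-improving epochs and reports epoch 3 (0-based), so `num_epochs=4` would be a natural choice for the next run. The later 0.39 is never seen, which is the usual trade-off of a small `patience`. The batch size search settles on 256, because going to 512 adds under 2% throughput.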

xxx normalization

- A: There are good comparison diagrams showing where each kind of normalization is applied:

- ��:https://blog.csdn.net/u013289254/article/details/99690730