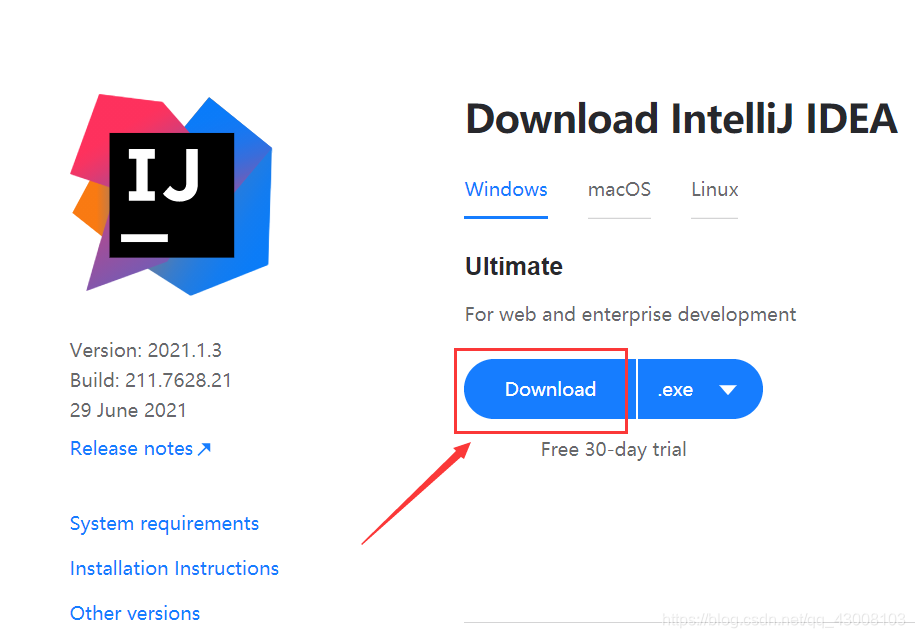

The first part is done on Windows.

Download IntelliJ IDEA from the official website: https://www.jetbrains.com/idea/download/#section=windows

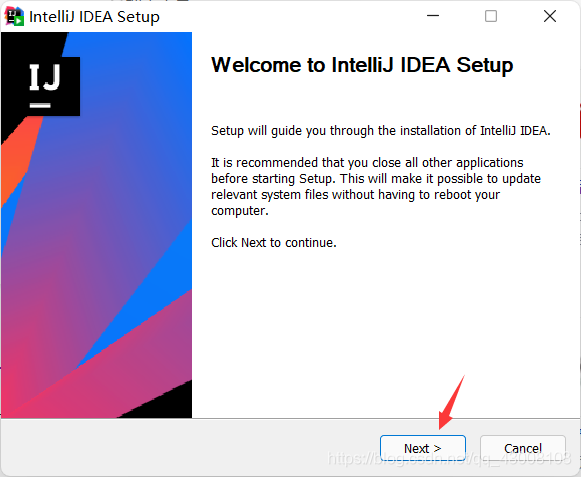

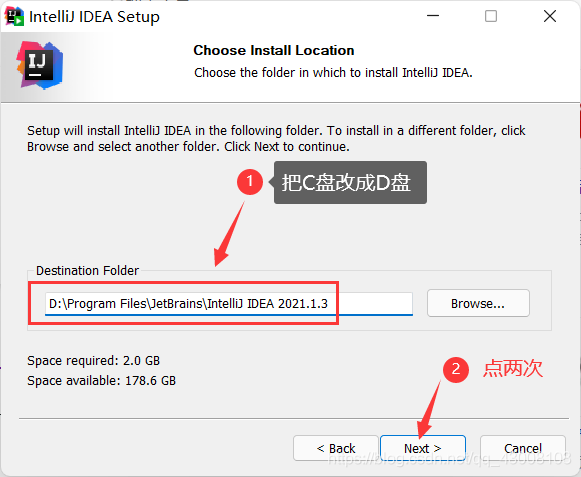

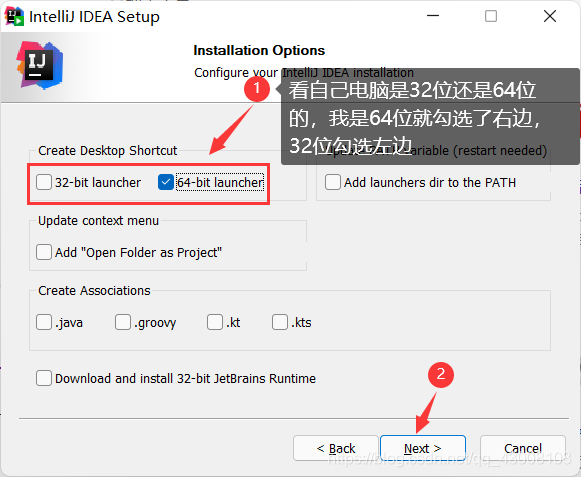

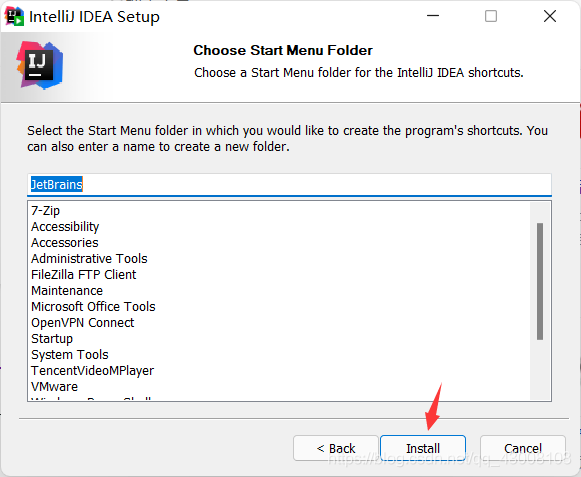

Once the installer has downloaded, double-click it to run it.

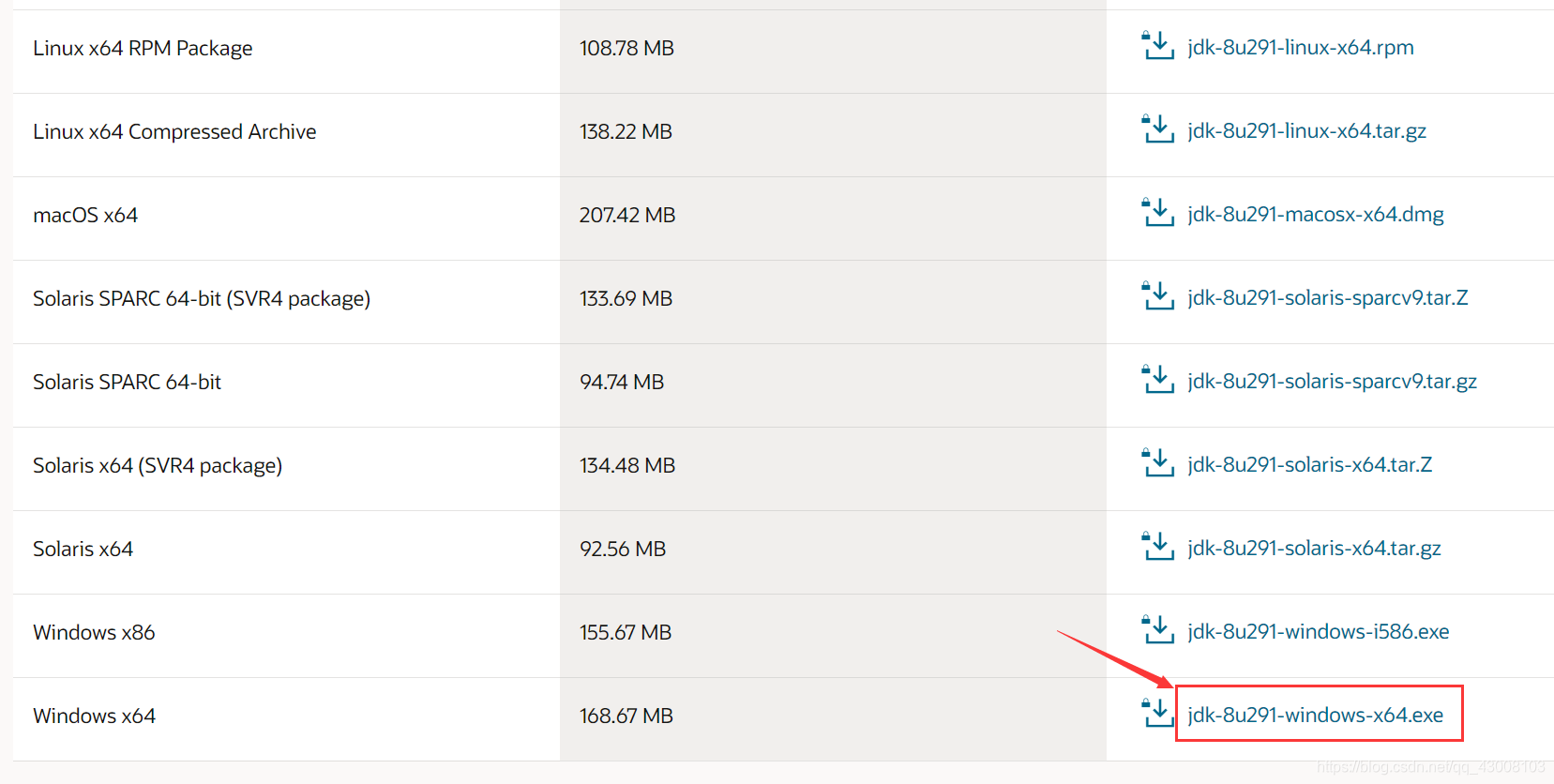

Download the JDK and Hadoop. JDK 8 is available here:

https://www.oracle.com/java/technologies/javase/javase-jdk8-downloads.html

If you're not sure how to download it, see my earlier post: https://blog.csdn.net/qq_43008103/article/details/118668959

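Double-click the downloaded JDK installer.

You don't need to change anything: keep the defaults and click Next through every step.

The installation is complete.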

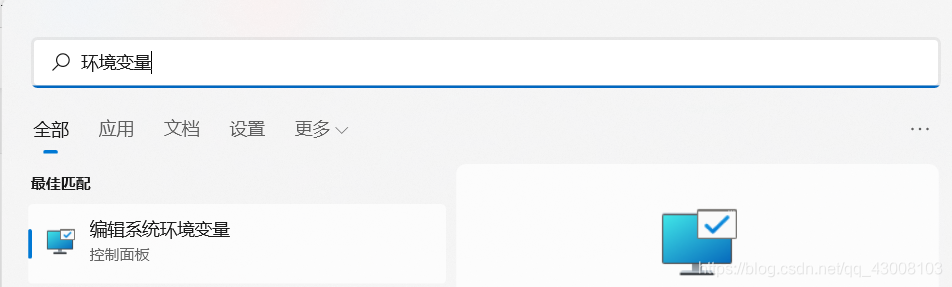

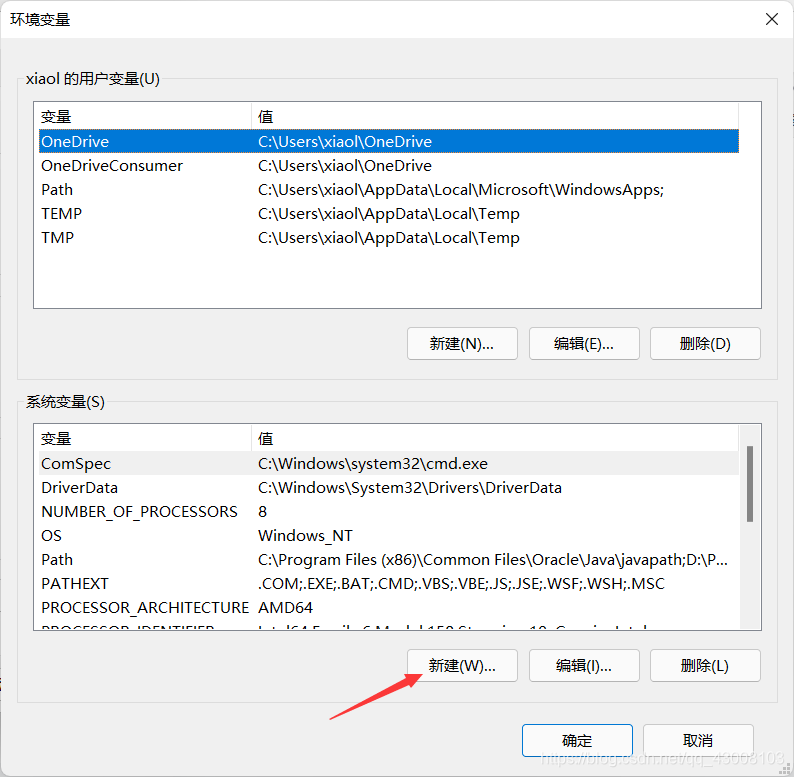

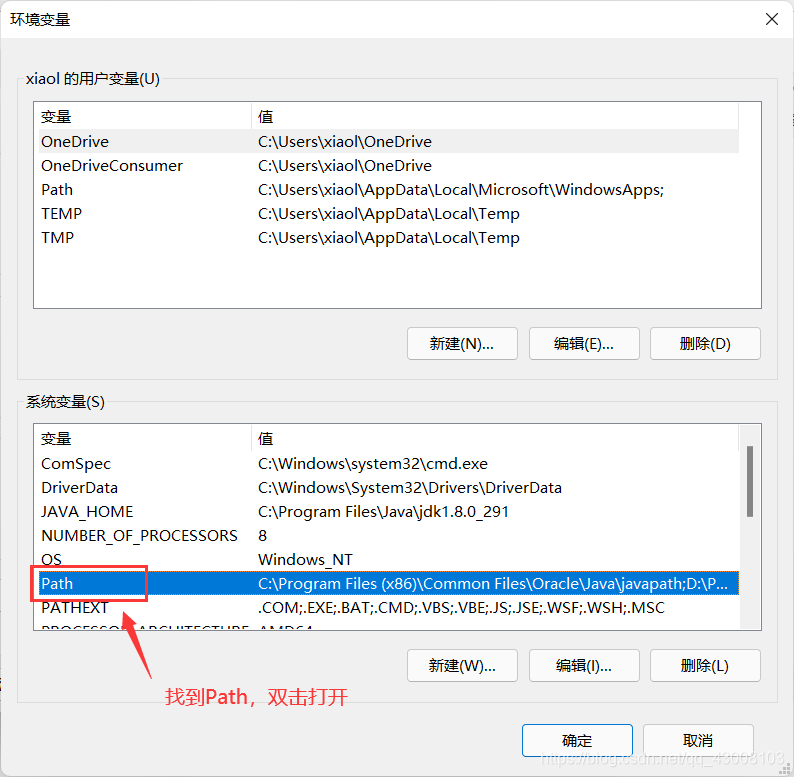

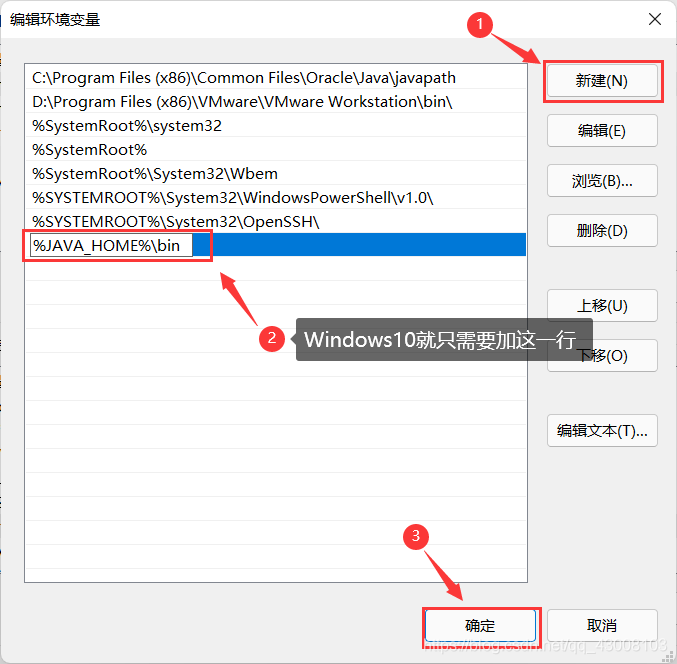

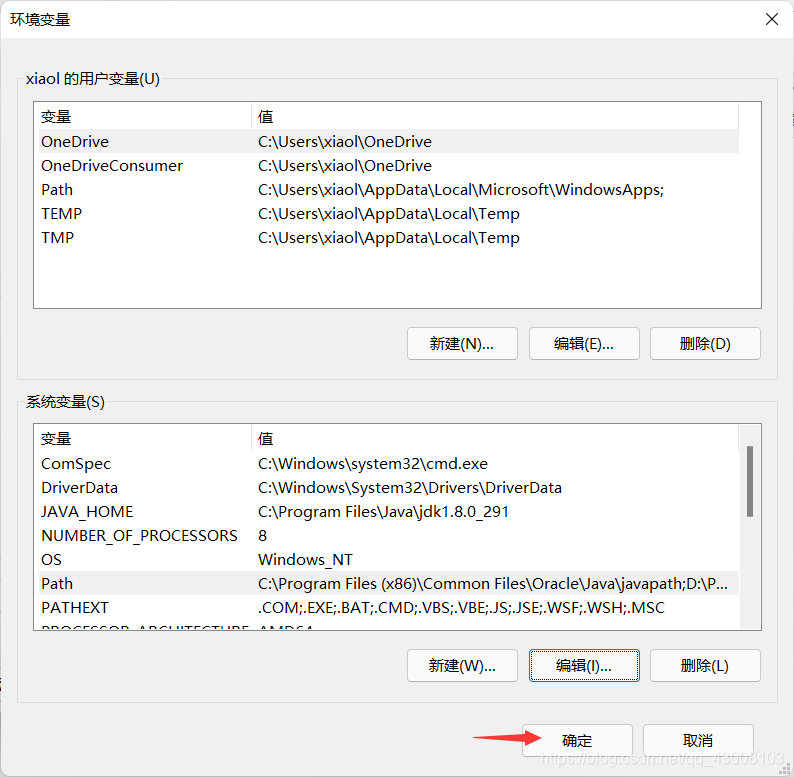

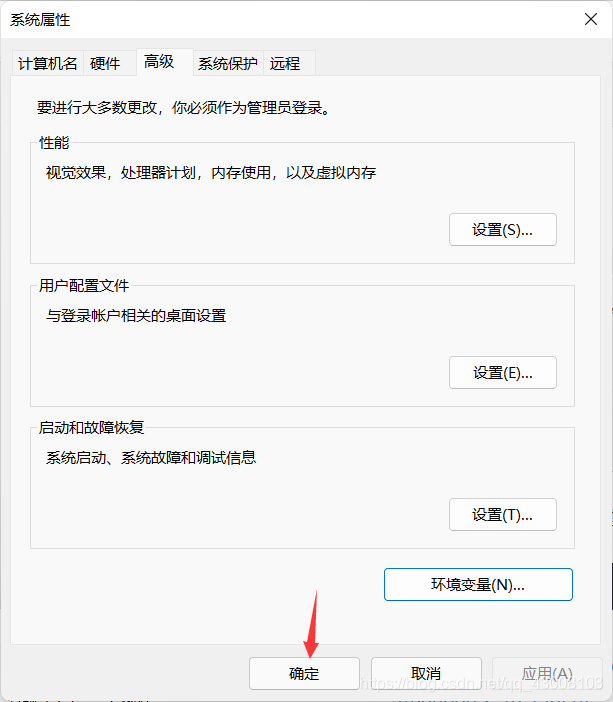

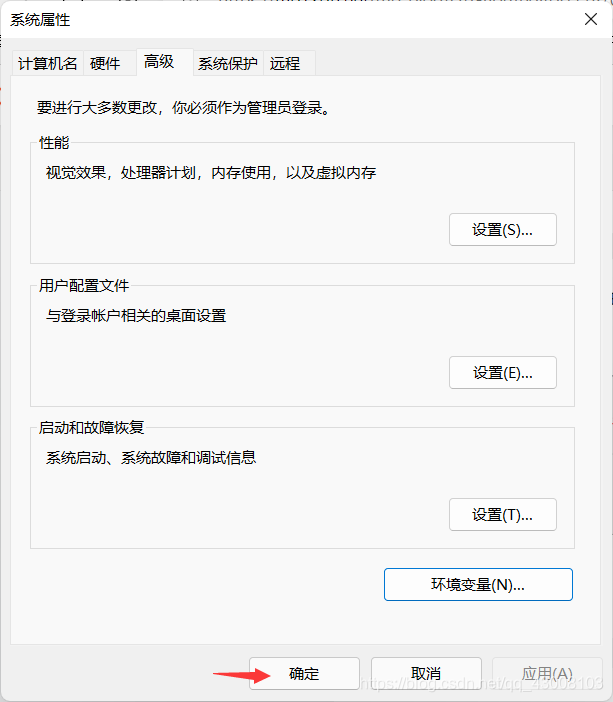

Configure the environment variables for the JDK

Click the magnifying-glass icon to the right of the Start menu in the lower-left corner of Windows and type "environment variables".

Click "Edit the system environment variables".

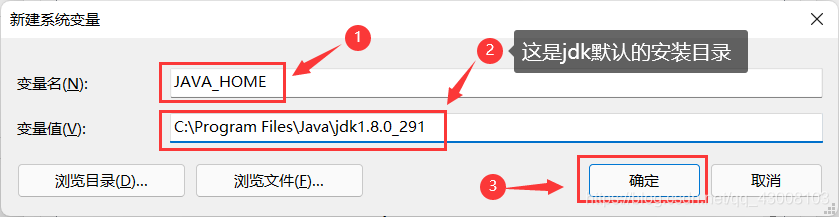

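Create a new system variable:

Variable name: `JAVA_HOME`

Variable value: `C:\Program Files\Java\jdk1.8.0_291` (use the folder of the JDK version you actually installed)

Then edit `Path` and add this entry:

`%JAVA_HOME%\bin`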
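You can also check these values from Java instead of by eye. Here is a minimal sketch. The class `JavaHomeCheck` and its `hasJavac` method are hypothetical names used for illustration. The check simply assumes that a JDK home contains `bin\javac.exe` (or `bin/javac` outside Windows). A process only sees environment variables that existed when it started, so run it from a freshly opened cmd window.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

public class JavaHomeCheck {

    // Heuristic (assumption): a directory looks like a JDK if it has bin/javac.exe or bin/javac.
    public static boolean hasJavac(Path jdkHome) {
        Path bin = jdkHome.resolve("bin");
        return Files.isRegularFile(bin.resolve("javac.exe"))
                || Files.isRegularFile(bin.resolve("javac"));
    }

    public static void main(String[] args) {
        // JAVA_HOME as seen by this process; processes started before the edit won't see the new value.
        String javaHome = System.getenv("JAVA_HOME");
        if (javaHome == null) {
            System.out.println("JAVA_HOME is not set");
            return;
        }
        System.out.println("JAVA_HOME = " + javaHome);
        System.out.println("javac found: " + hasJavac(Paths.get(javaHome)));
    }
}
```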

Then check whether the JDK environment variables were set up correctly.

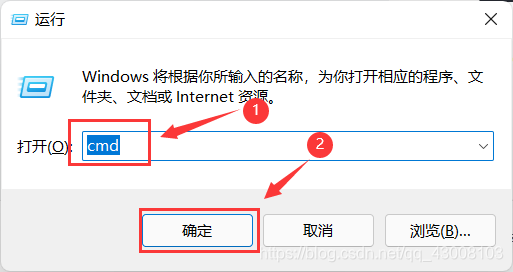

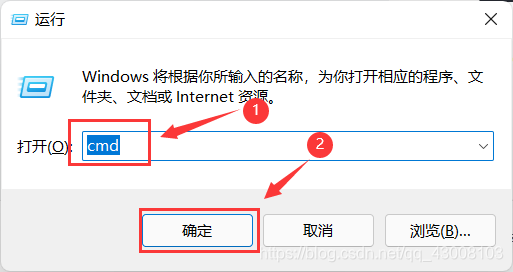

Press Win+R (Win is the Start-menu key at the lower left of the keyboard), then type `cmd` and press Enter.

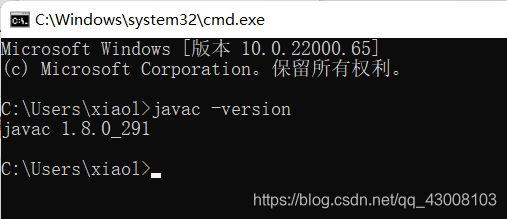

In the cmd window, run:

```
javac -version
```

If a version number appears, the setup worked and you can close the cmd window. Next, we'll do a simple Hadoop installation on Windows.

Hadoop 2.10.1 download link (an Apache mirror): https://mirrors.bfsu.edu.cn/apache/hadoop/common/hadoop-2.10.1/hadoop-2.10.1.tar.gz

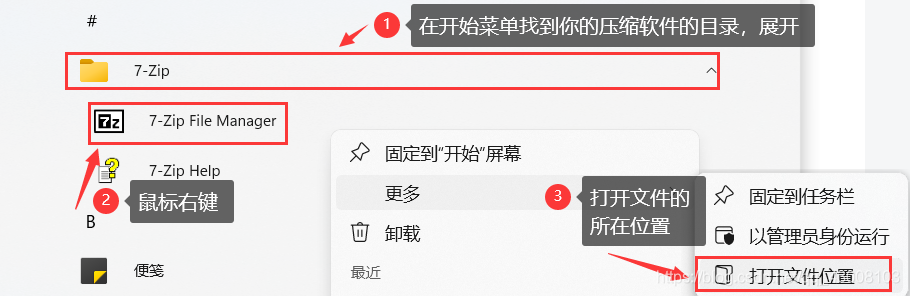

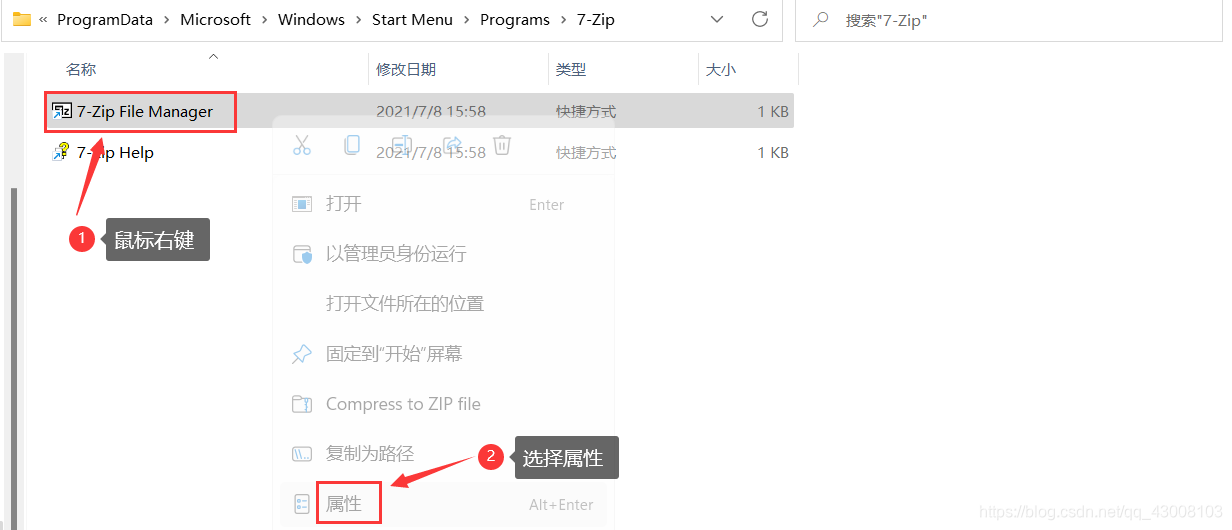

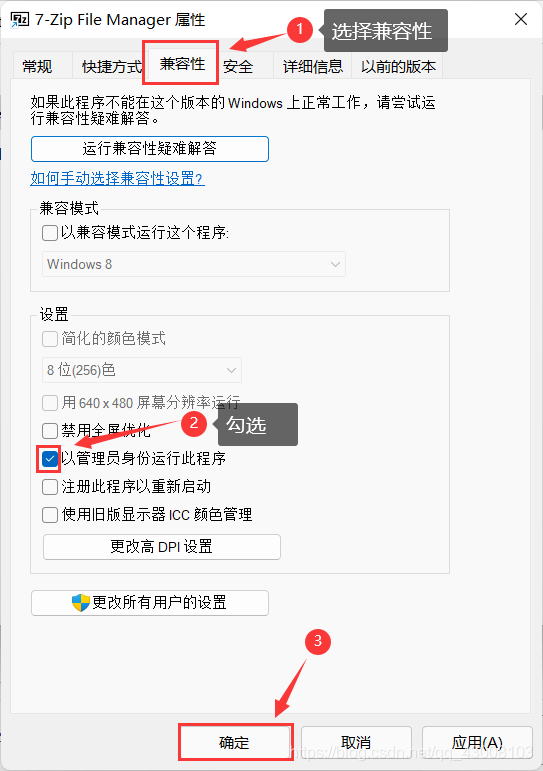

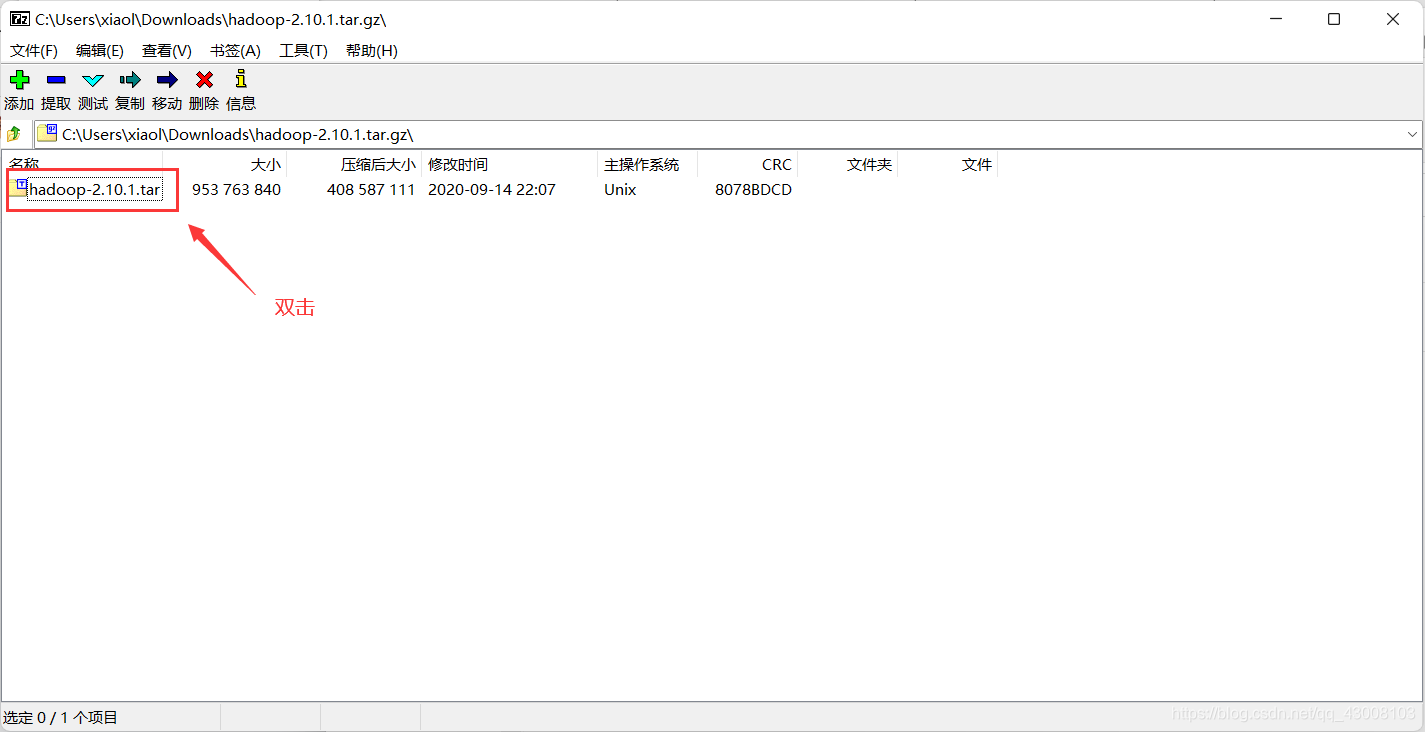

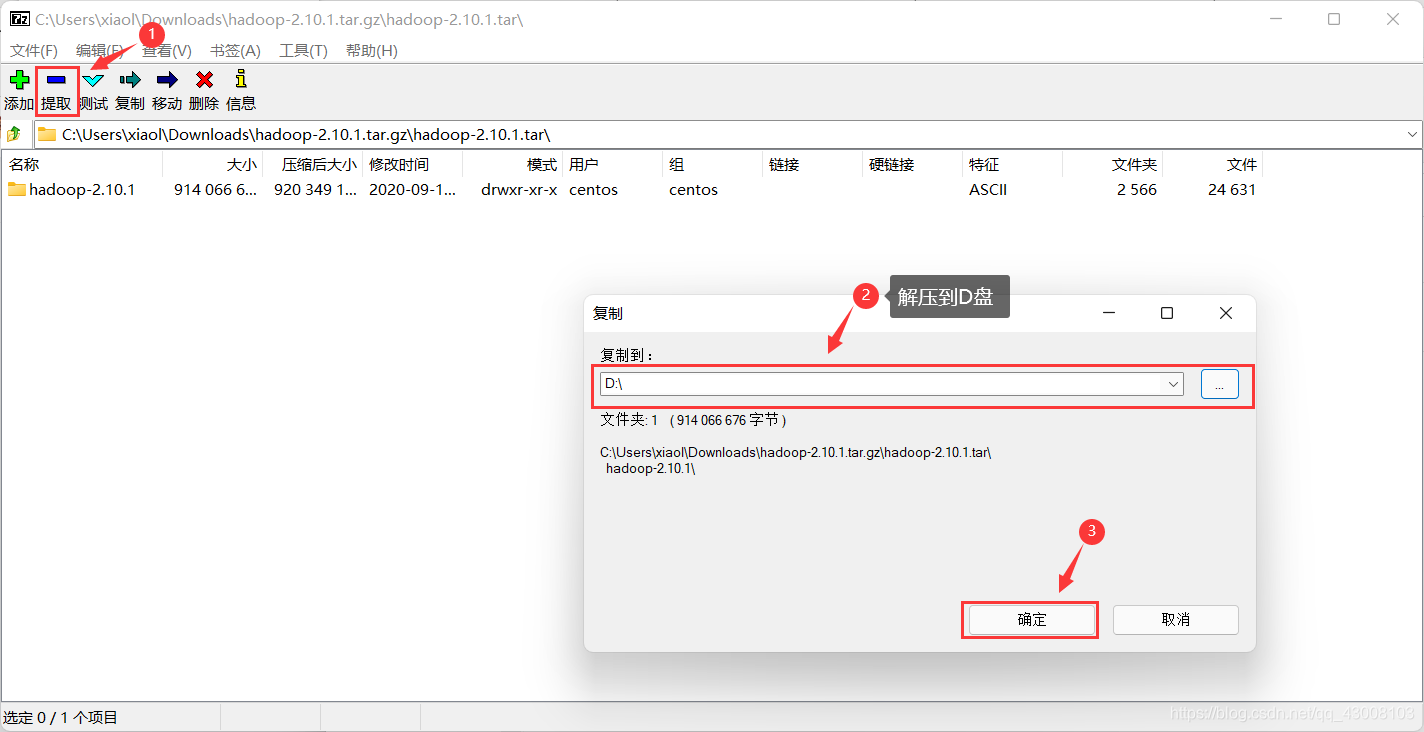

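Extracting the Hadoop archive requires administrator privileges. I recommend WinRAR, 7-Zip or Bandizip. I tried 2345 Haozip and 360 Zip, and neither could extract it. I'm using 7-Zip here.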

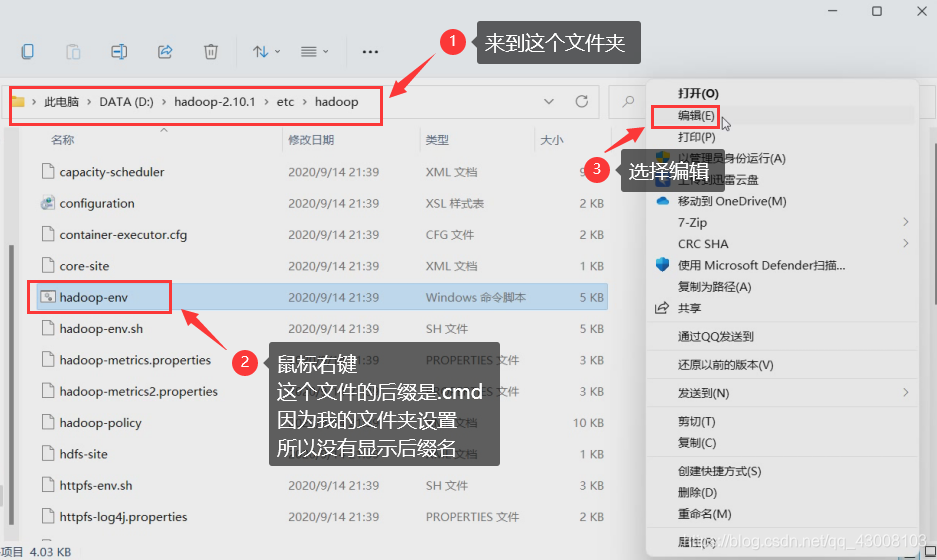

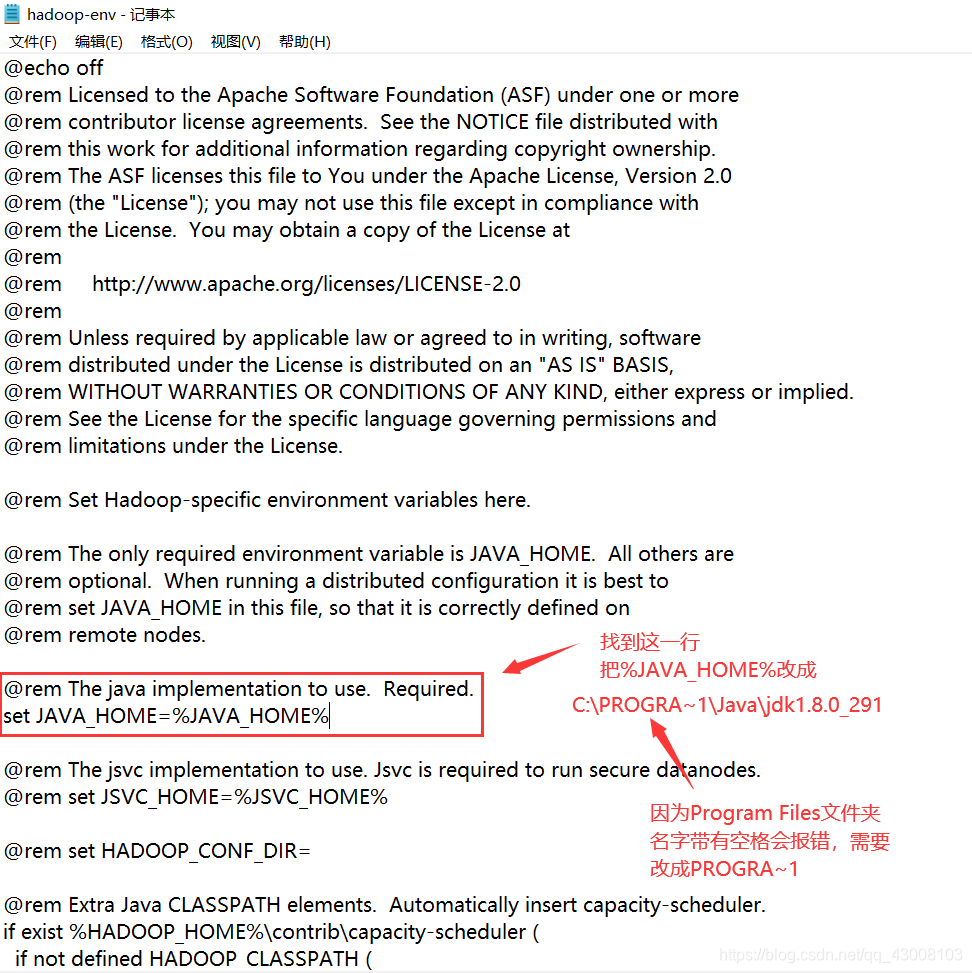

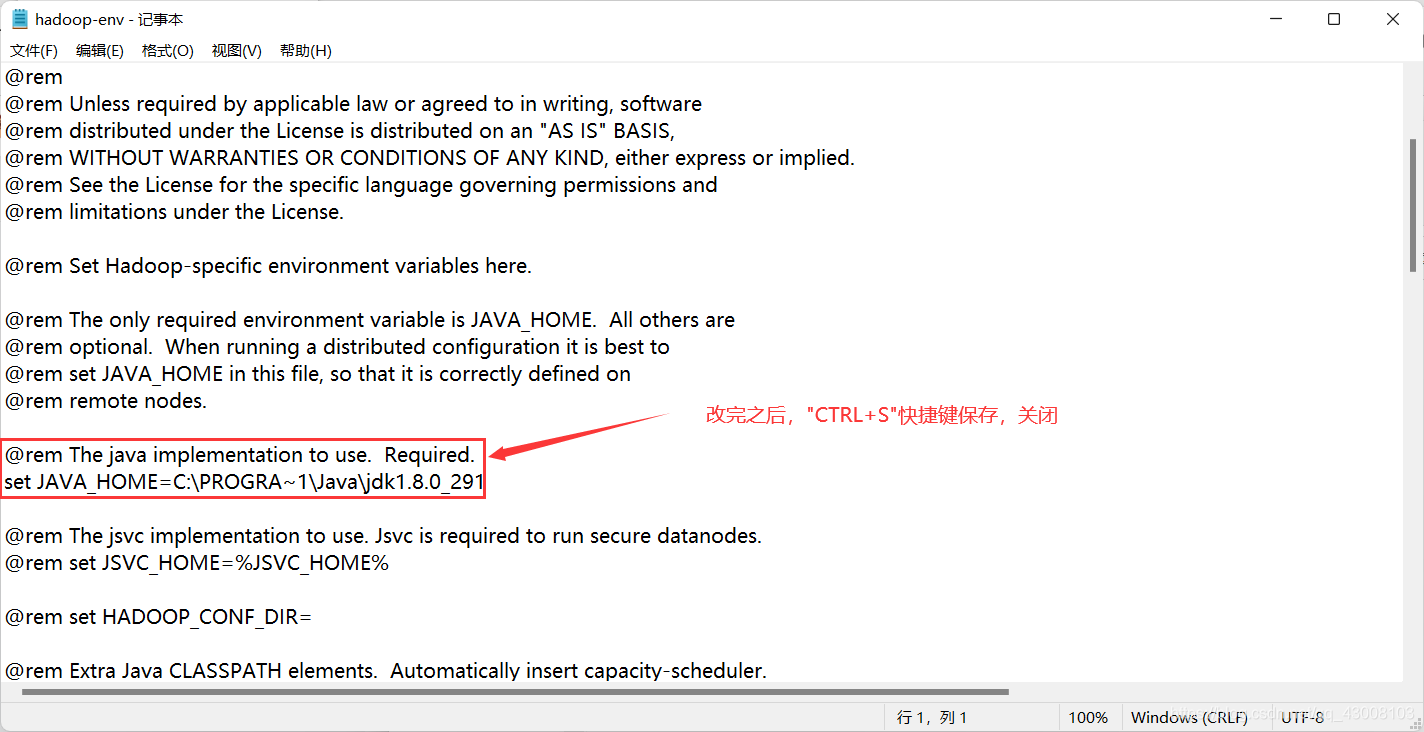

When extraction finishes, you can close the archiver's window. Next, configure the Hadoop environment variables.

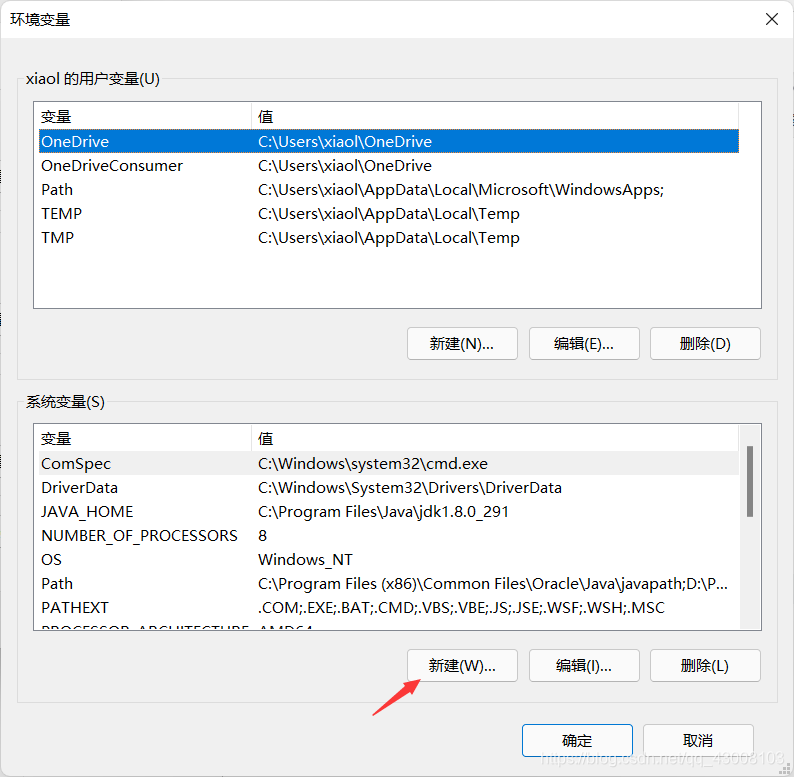

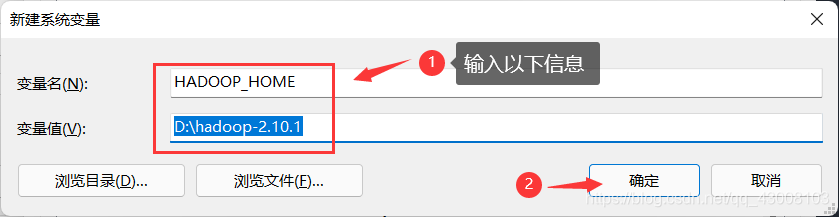

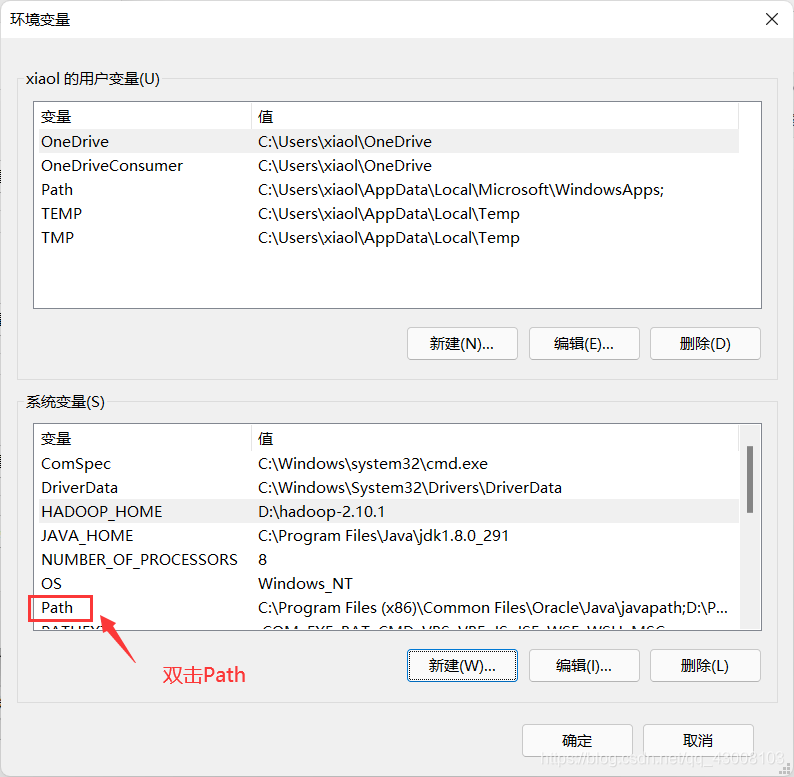

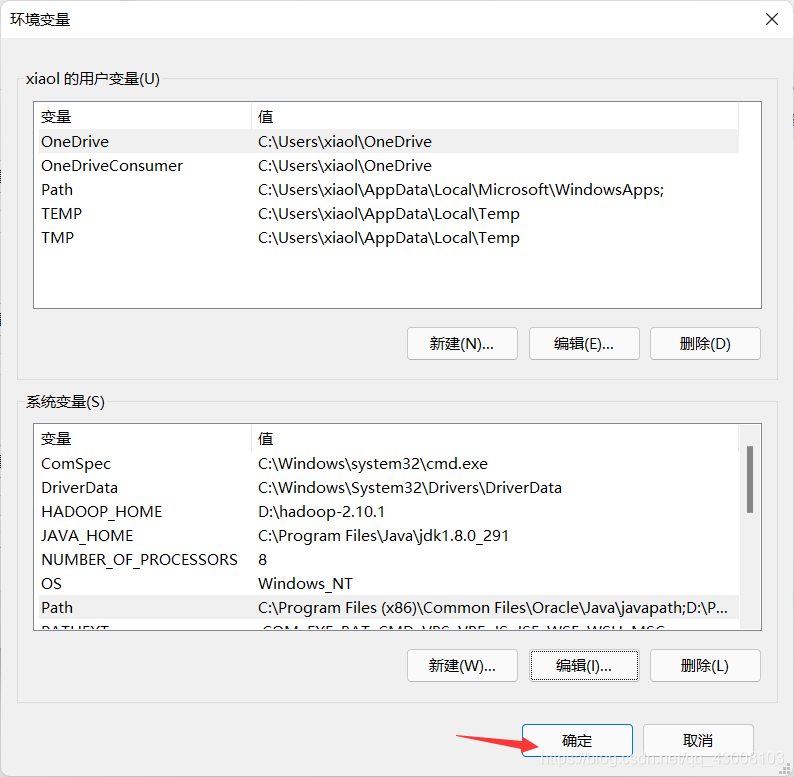

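Use the same method as before: type "environment variables" into the search box at the lower left, choose "Edit the system environment variables", then click "Environment Variables".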

Variable name: `HADOOP_HOME`

Variable value: `D:\hadoop-2.10.1` (the folder you extracted Hadoop into)

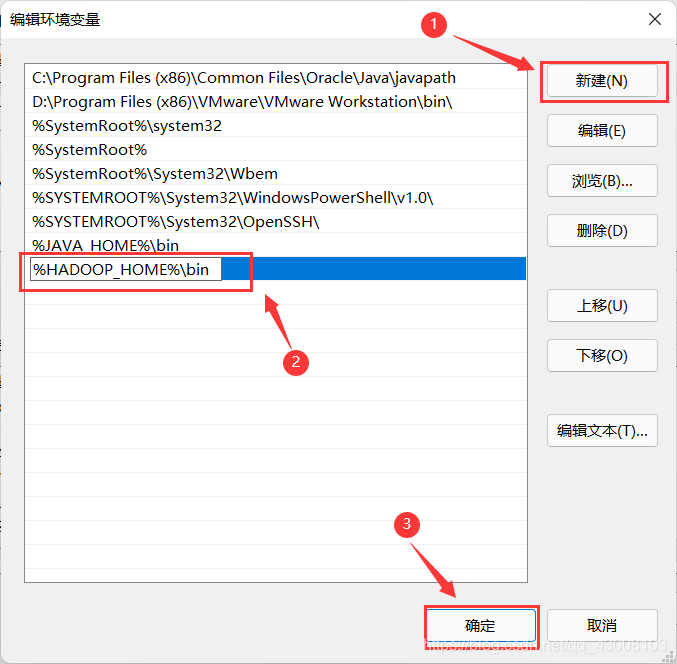

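Then add this entry to `Path`:

`%HADOOP_HOME%\bin`

Hadoop's Windows scripts can't cope with the space in `Program Files`. Where Hadoop needs the JDK path, write it in 8.3 short form:

`C:\PROGRA~1\Java\jdk1.8.0_291`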

Press Win+R to open the Run dialog and type `cmd`.

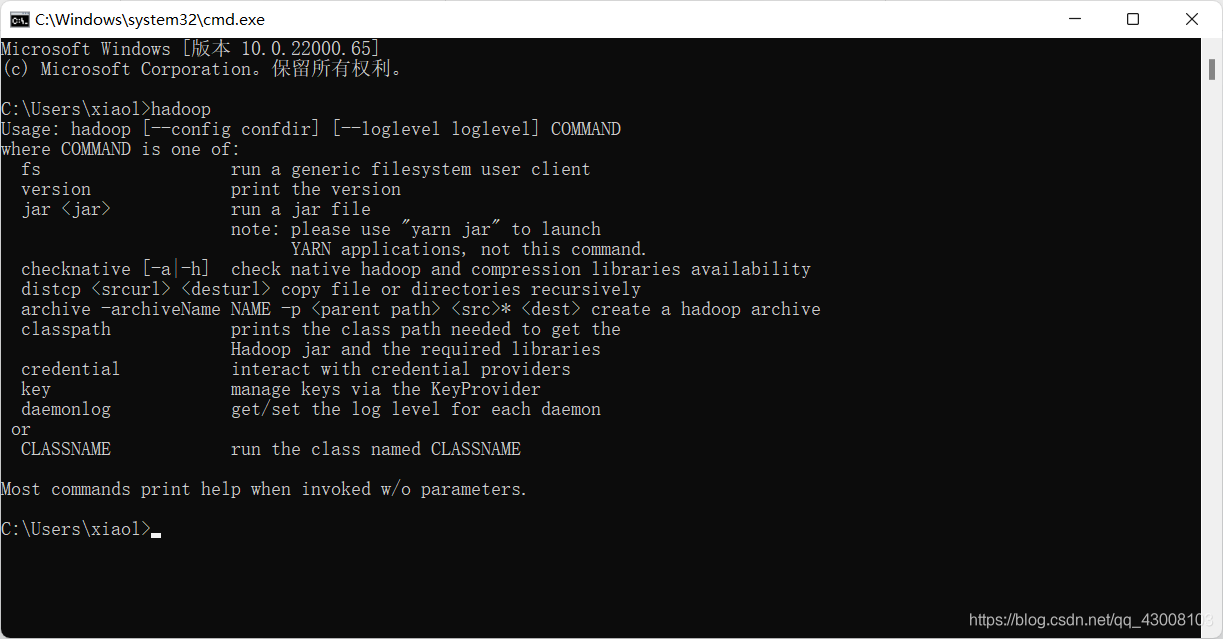

Type `hadoop` to check whether the installation worked:

```
hadoop
```

To use Hadoop on Windows, we also need the matching `hadoop.dll` and `winutils.exe`.

Link: https://wwr.lanzoui.com/b02c8tovc

Password: 9zta

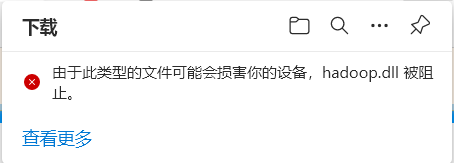

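The browser may warn that the file could be risky.

Choose "Keep".

If your download page looks like the one below instead, just click "Keep" there too.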

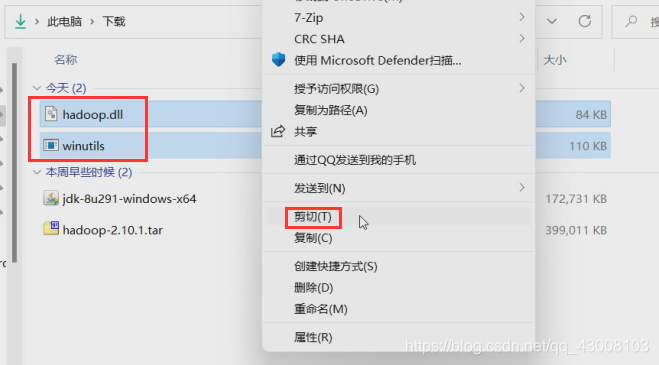

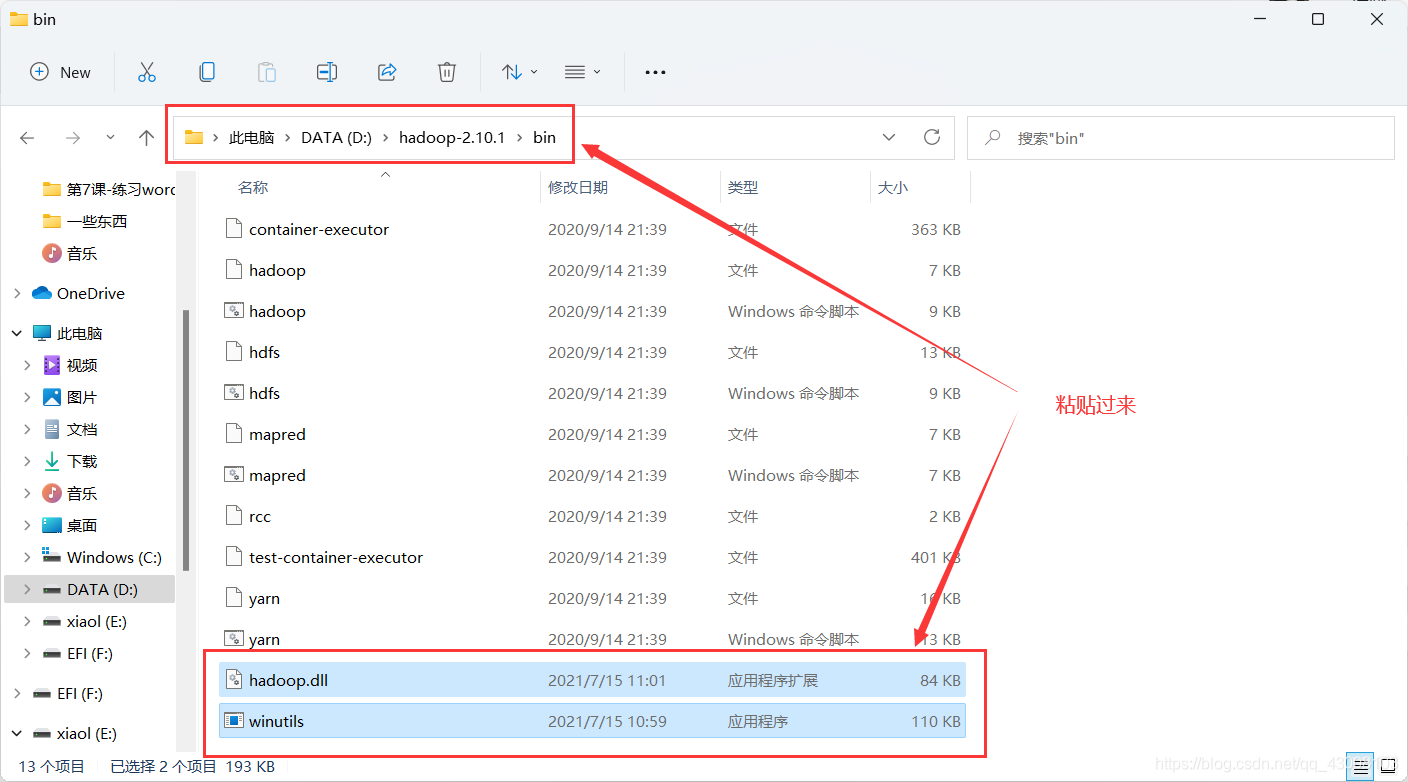

After downloading `hadoop.dll` and `winutils.exe`, move them into Hadoop's `bin` directory, `D:\hadoop-2.10.1\bin`.

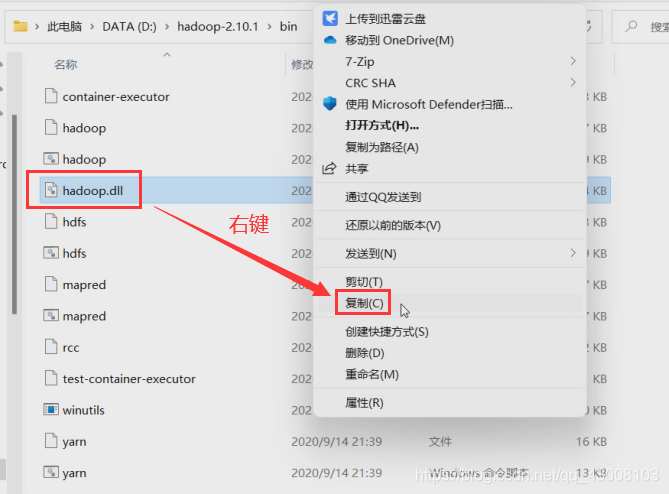

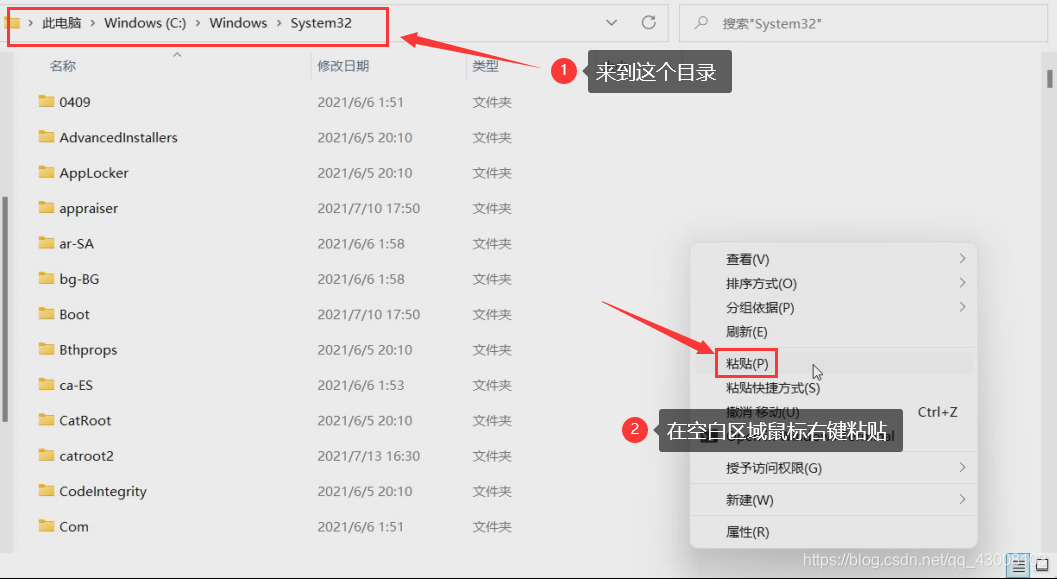

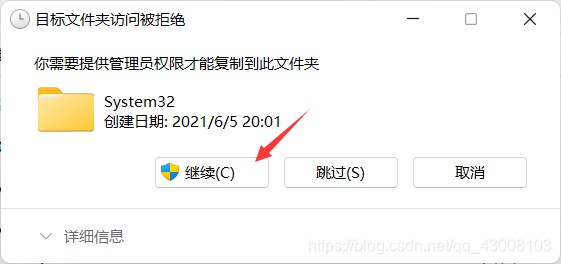

Then copy `hadoop.dll` into `C:\Windows\System32` as well.

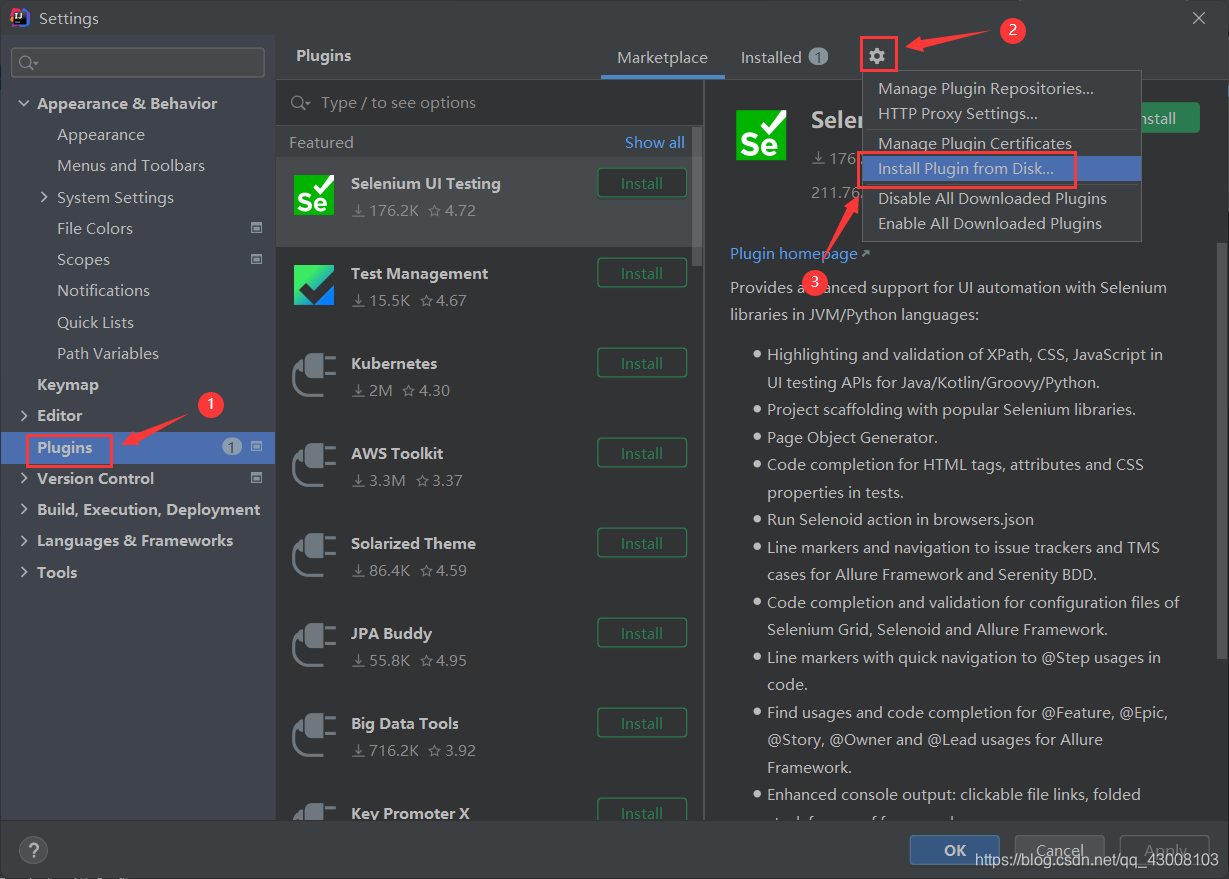

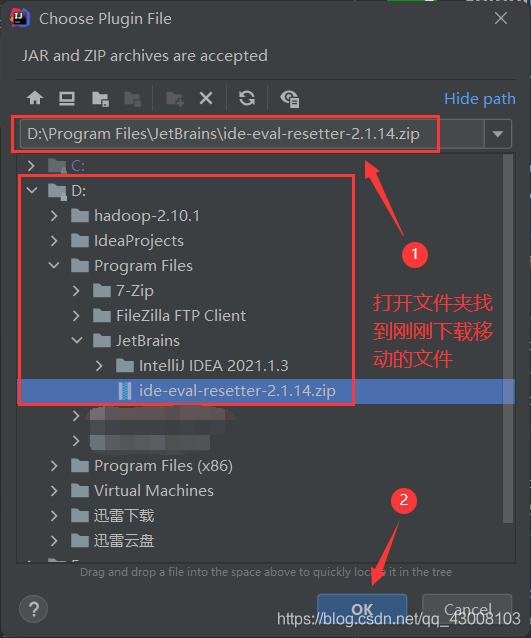

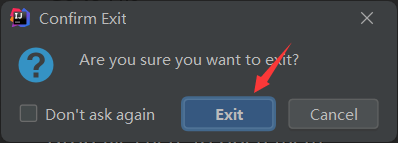

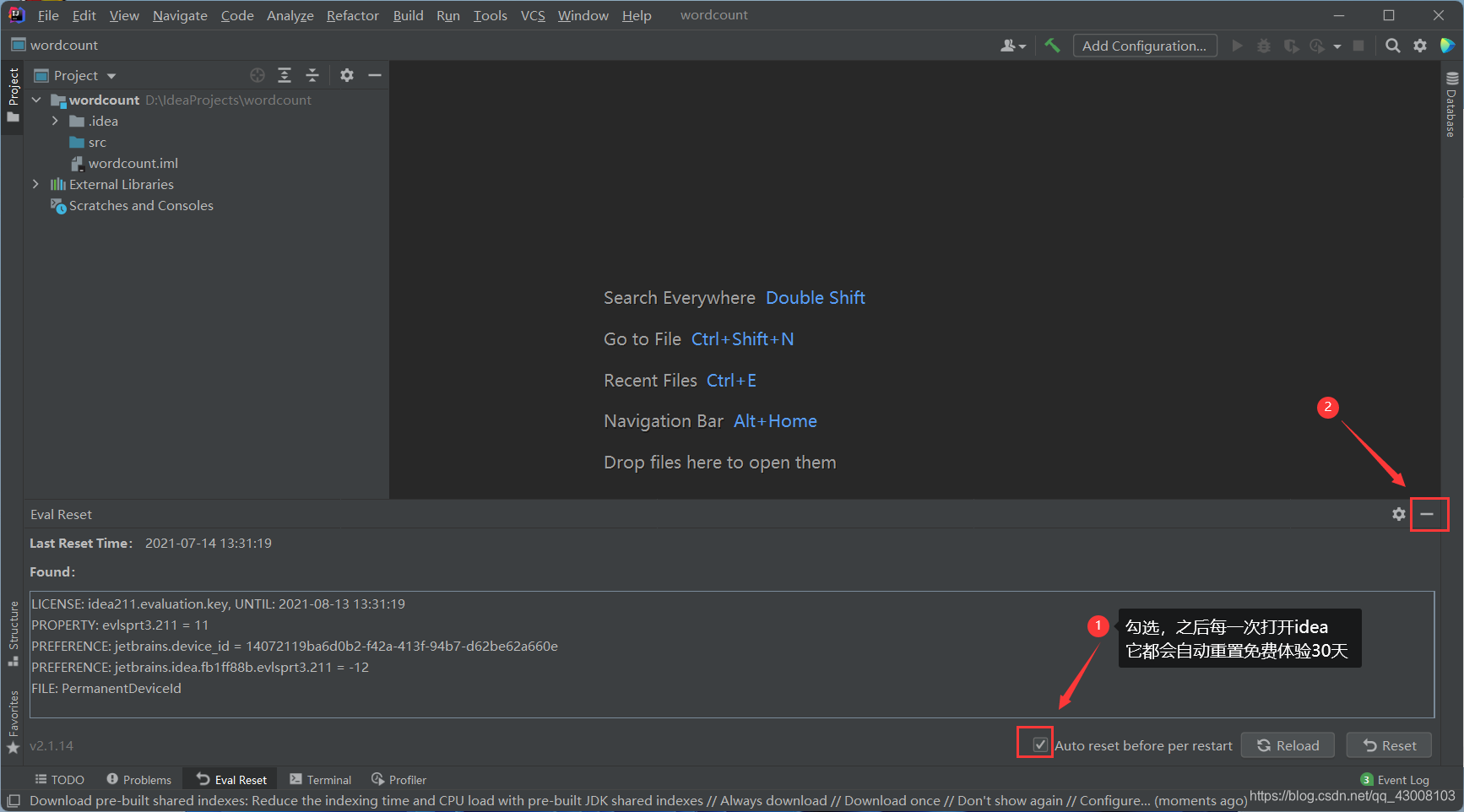
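You can confirm that both files landed in the right place with a small sketch like the following. The class `WinutilsCheck` is hypothetical. It reads `HADOOP_HOME` and lists which of `winutils.exe` and `hadoop.dll` are missing from its `bin` folder. It only checks that the files exist; it cannot tell whether their versions match Hadoop 2.10.1.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

public class WinutilsCheck {

    // The two files this tutorial places in %HADOOP_HOME%\bin.
    static final String[] REQUIRED = {"winutils.exe", "hadoop.dll"};

    // Returns the required files missing from hadoopHome/bin (an empty list means all are present).
    public static List<String> missingFiles(Path hadoopHome) {
        List<String> missing = new ArrayList<>();
        Path bin = hadoopHome.resolve("bin");
        for (String name : REQUIRED) {
            if (!Files.isRegularFile(bin.resolve(name))) {
                missing.add(name);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        String home = System.getenv("HADOOP_HOME");
        if (home == null) {
            System.out.println("HADOOP_HOME is not set");
            return;
        }
        List<String> missing = missingFiles(Paths.get(home));
        System.out.println(missing.isEmpty() ? "bin directory looks complete" : "missing: " + missing);
    }
}
```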

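Next, download the reset tool for IDEA's 30-day free trial.

Link: https://pan.baidu.com/s/129rlAeNMlNJQ0gbBhs5ZgQ

Extraction code: 4ppy

Put the downloaded `ide-eval-resetter-2.1.14.zip` in `D:\Program Files\JetBrains` so it doesn't get deleted by accident.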

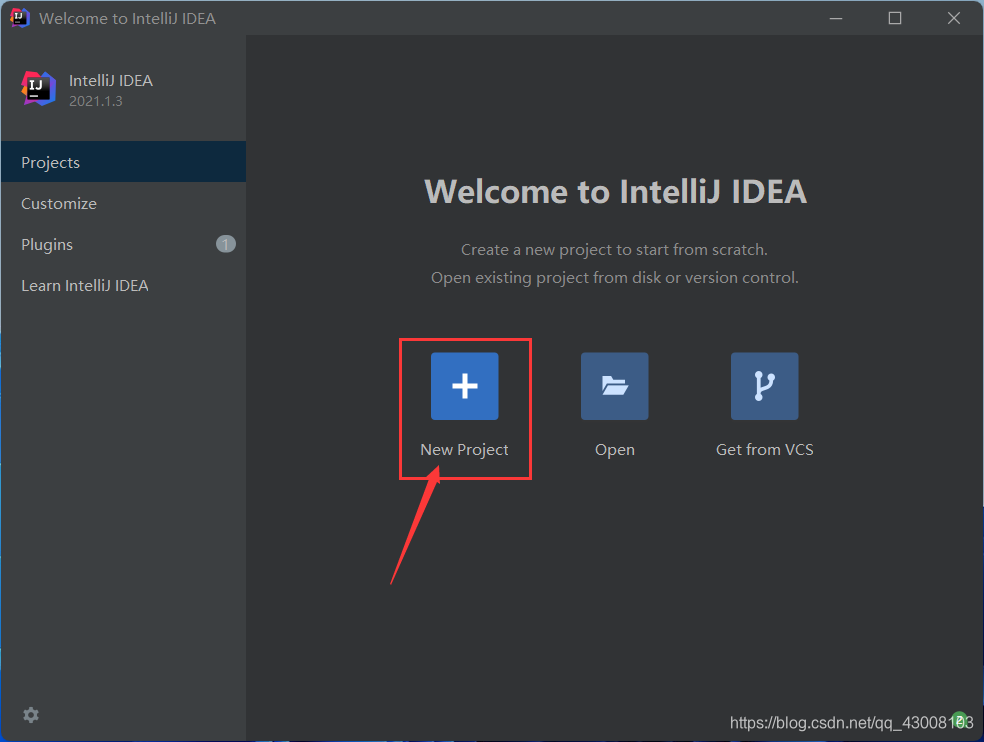

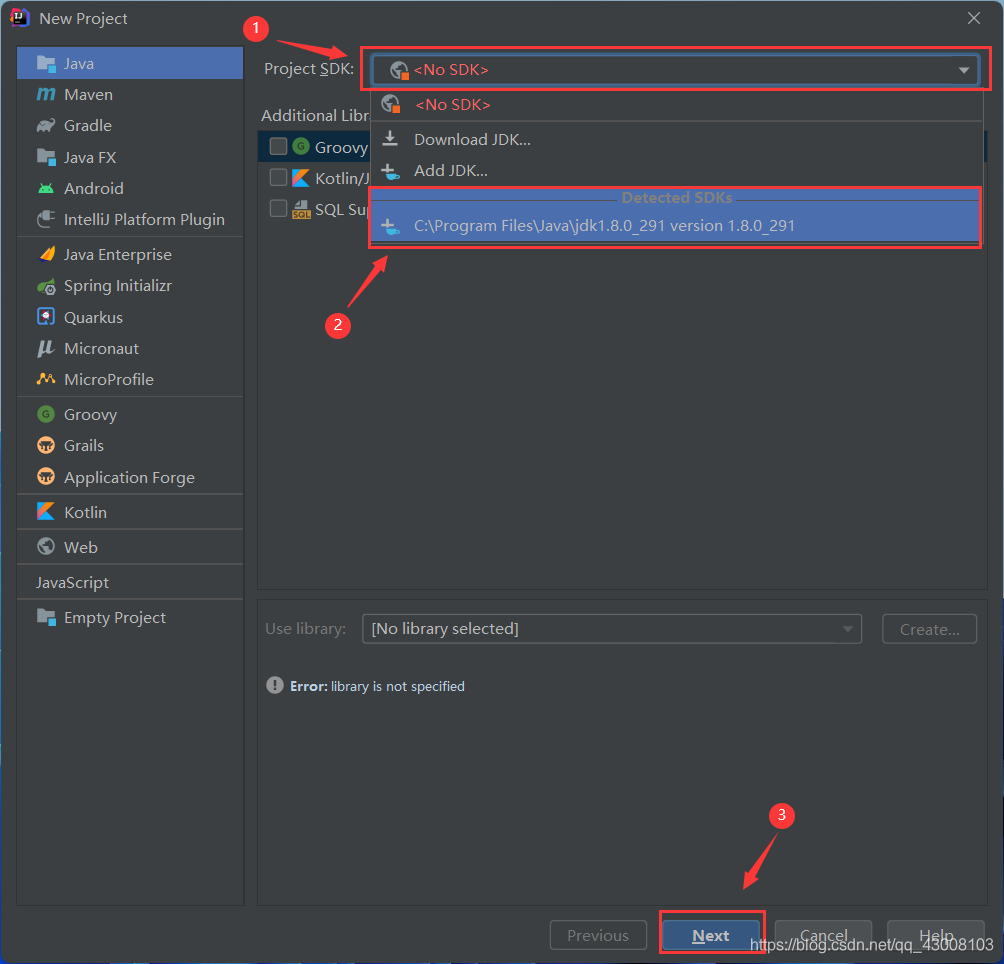

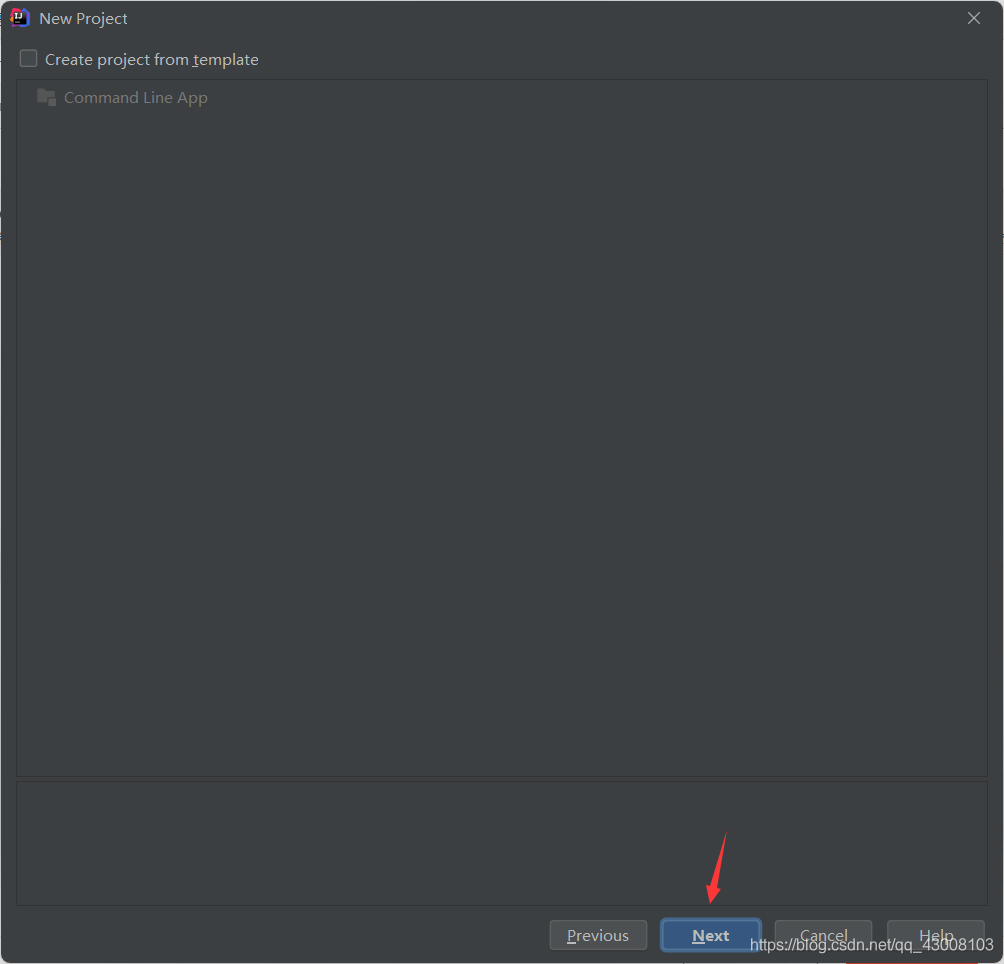

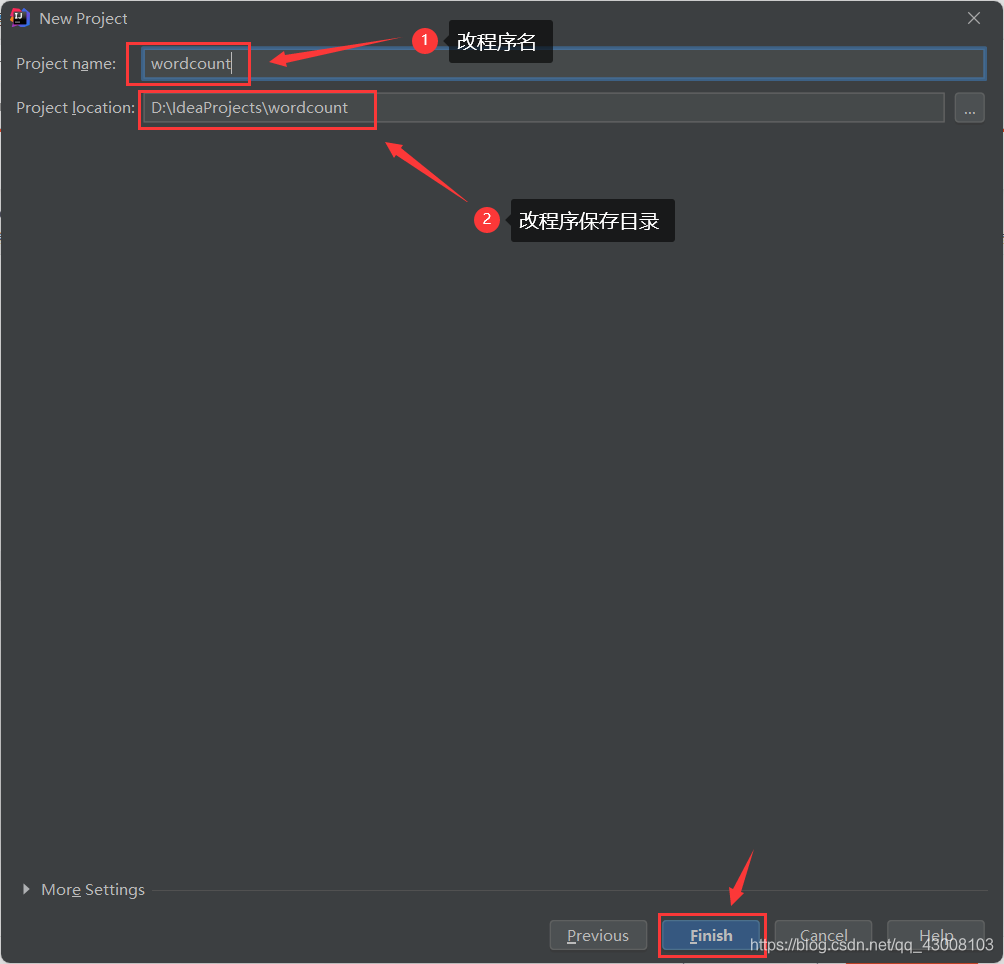

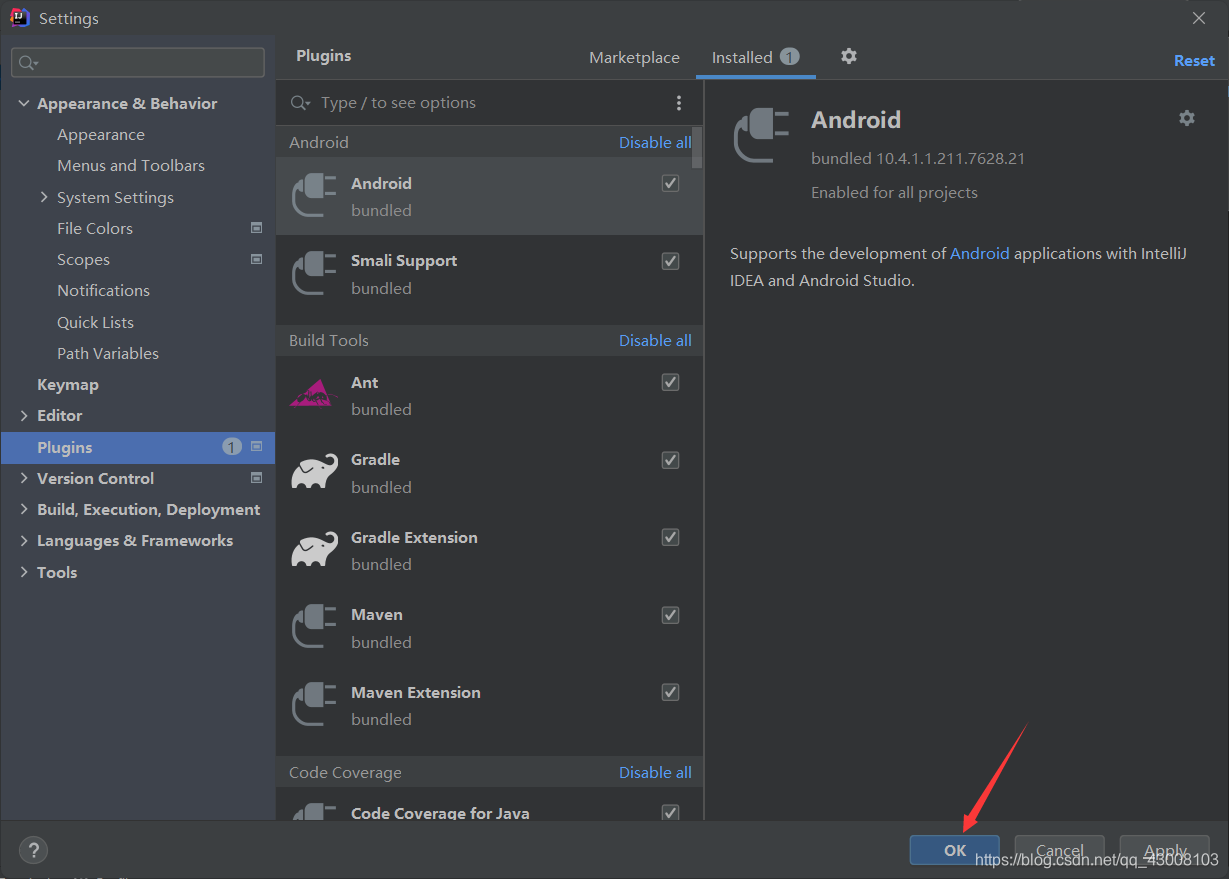

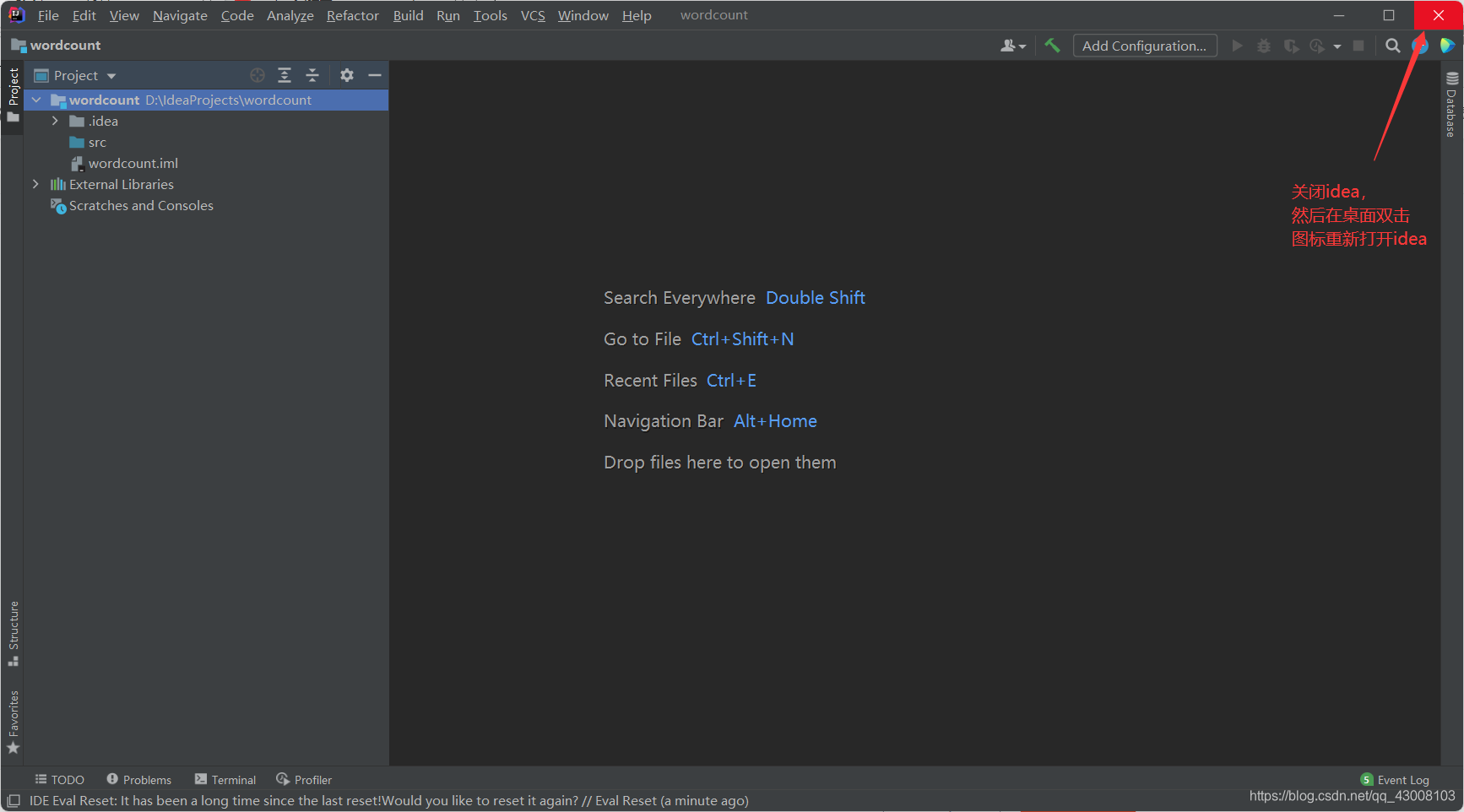

Double-click the desktop icon to open IDEA.

When IDEA is reopened, it automatically loads the last project you had open.

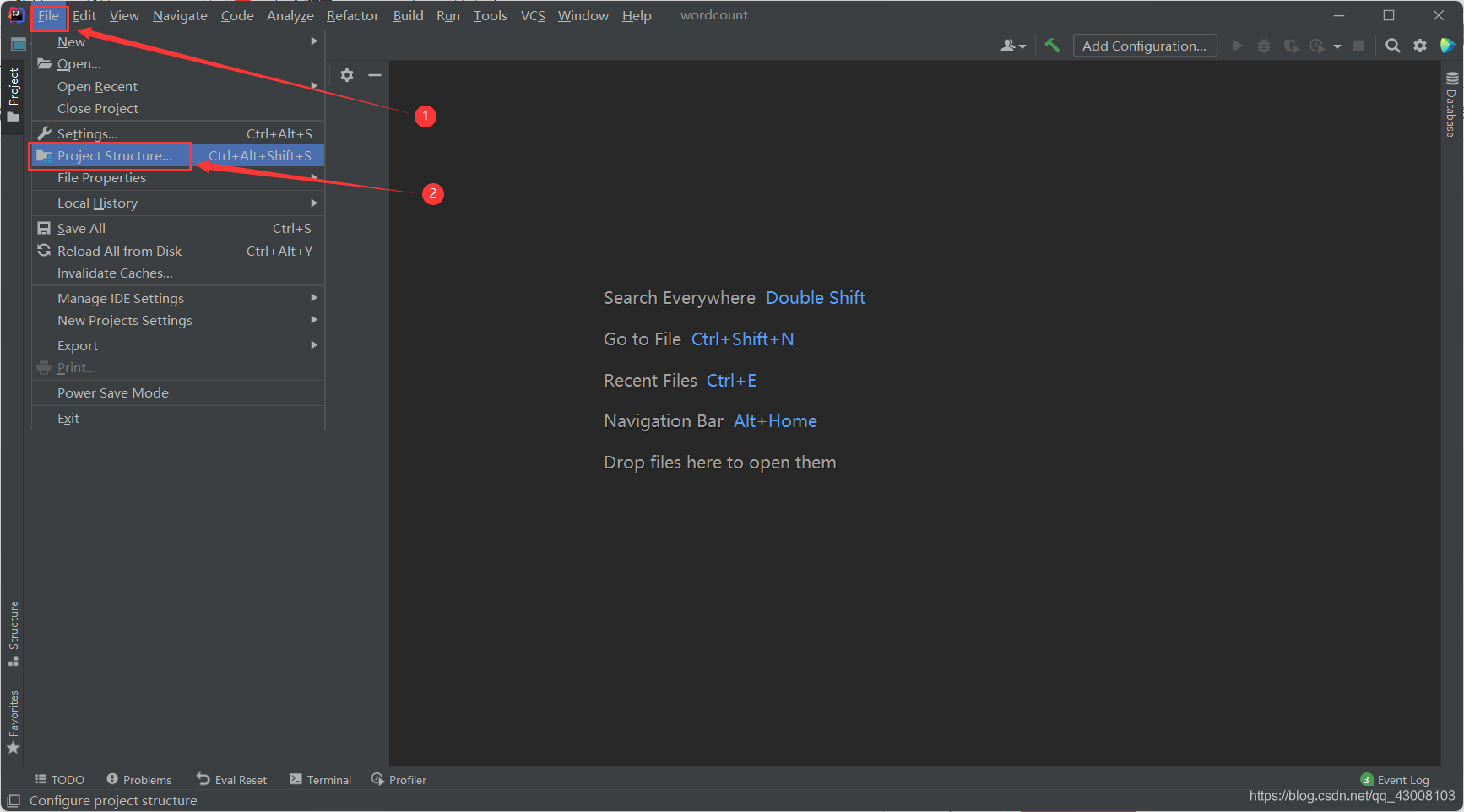

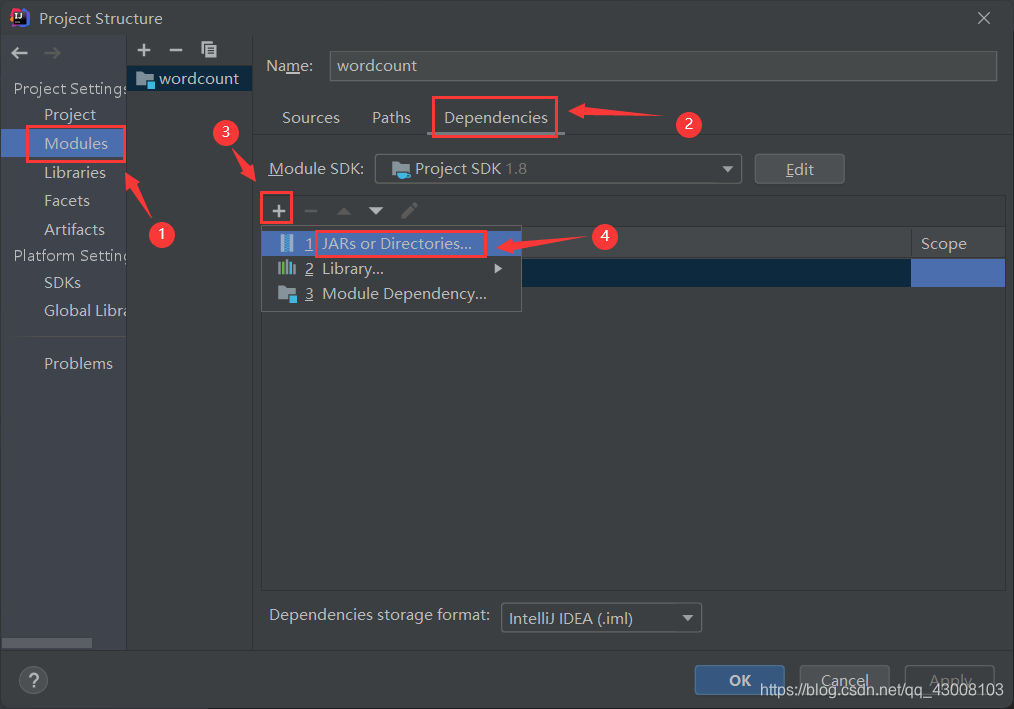

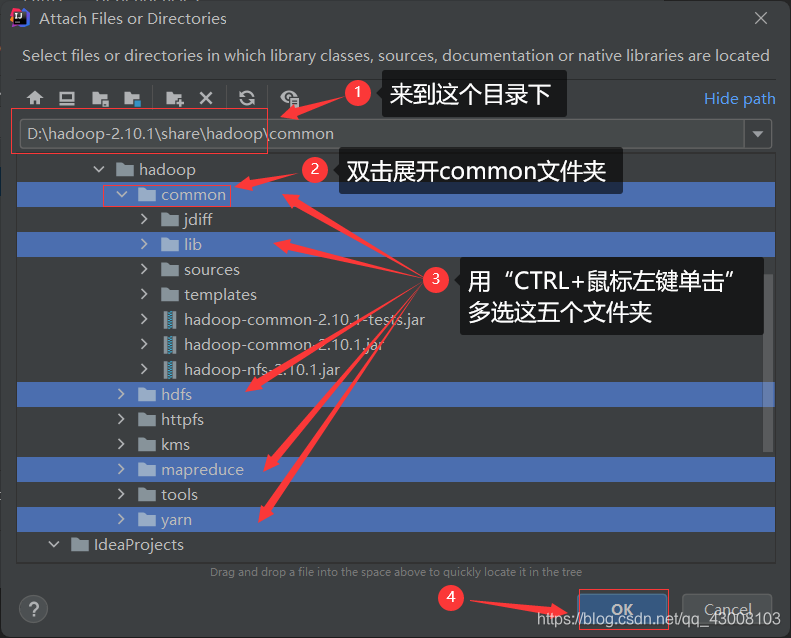

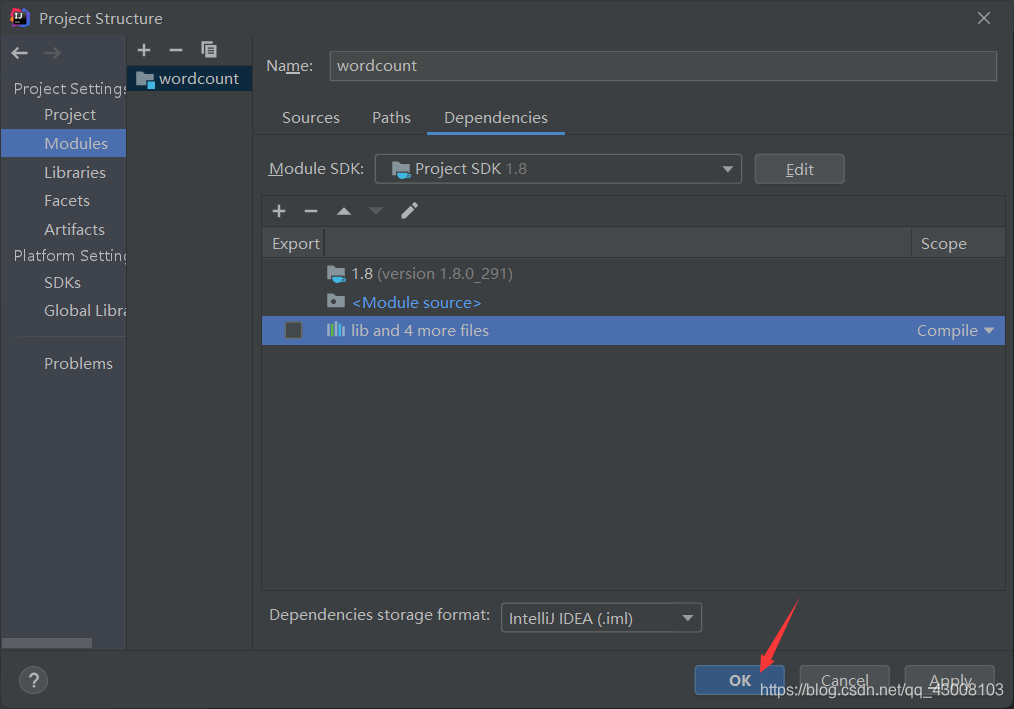

Next, import the Hadoop dependency JARs.

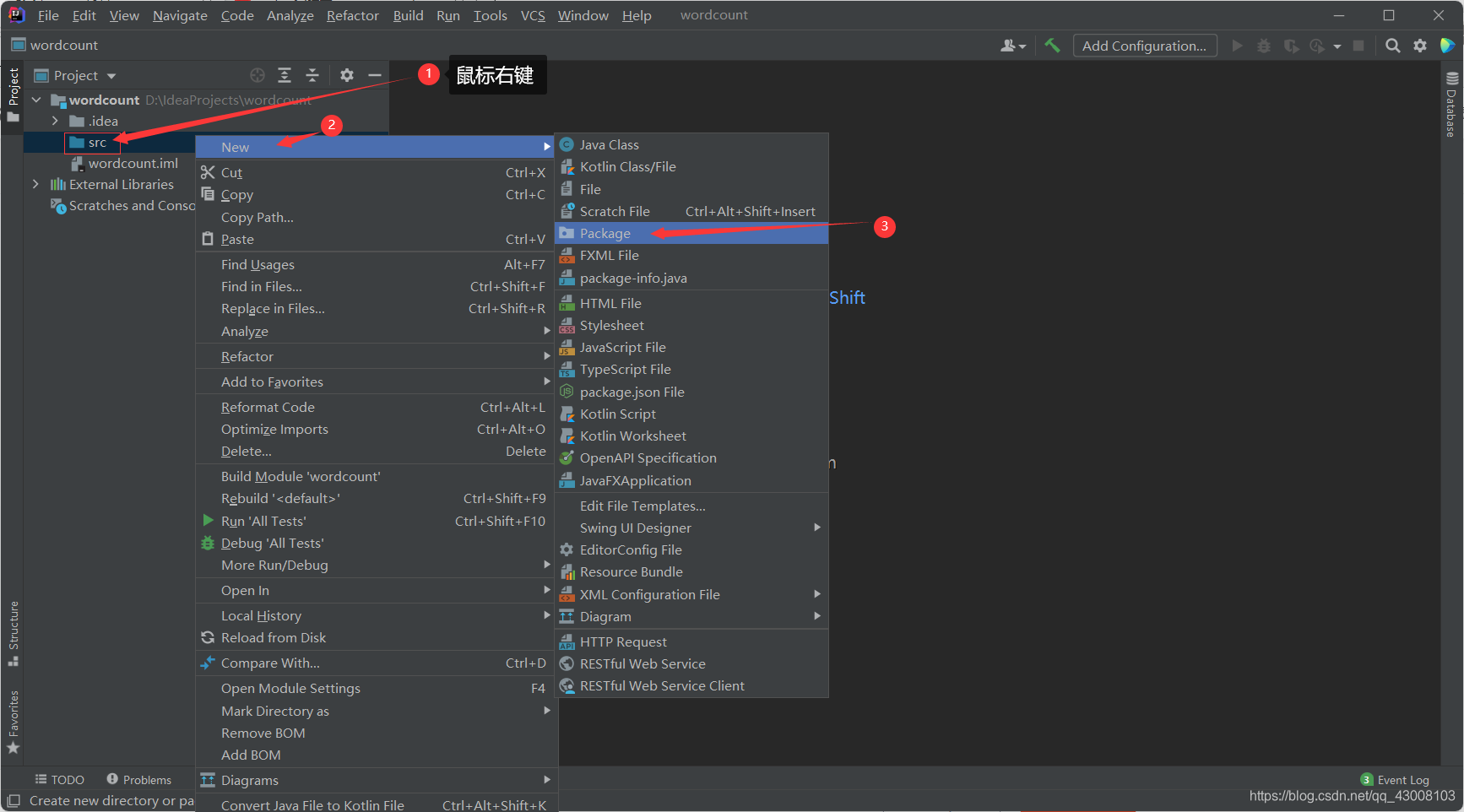

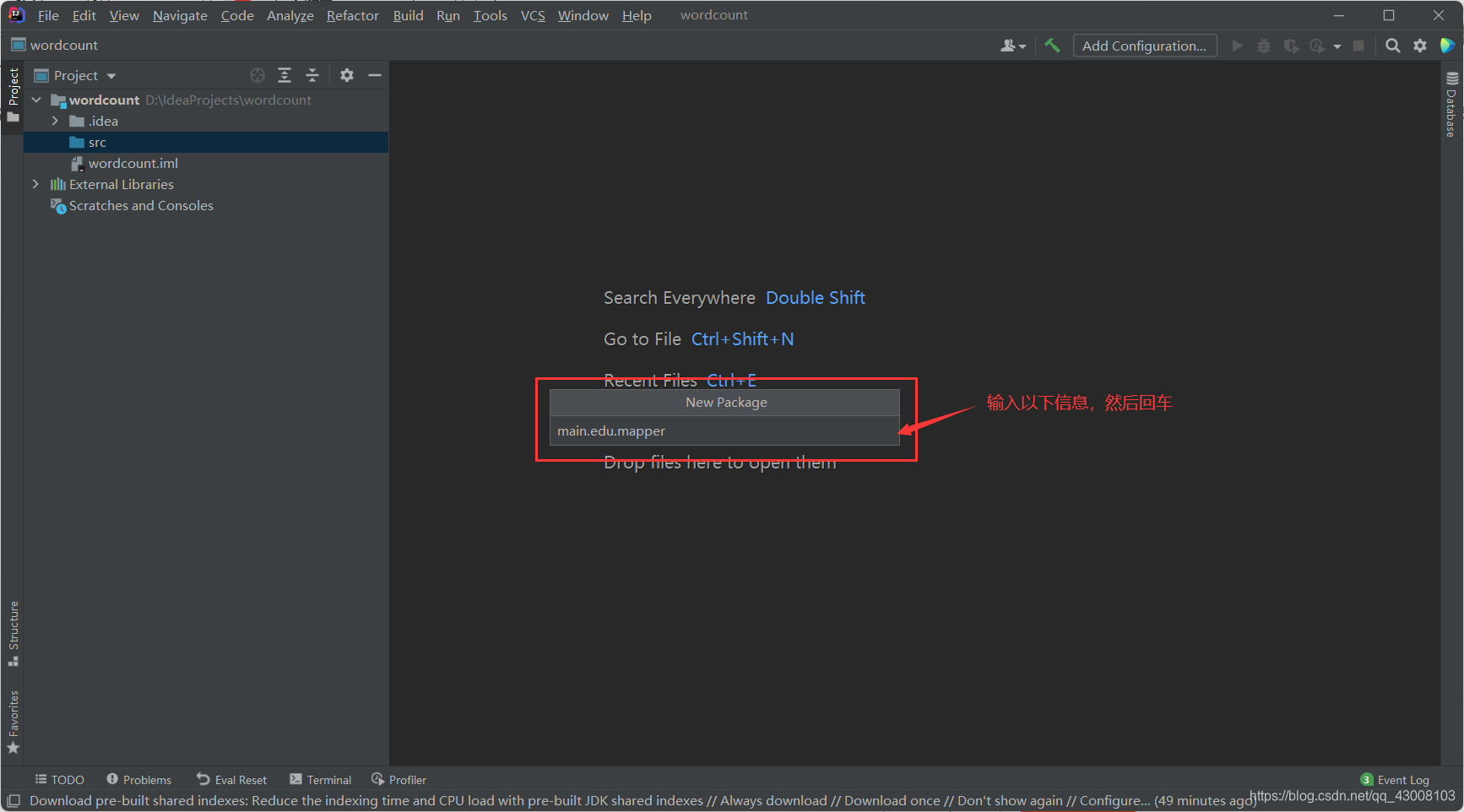

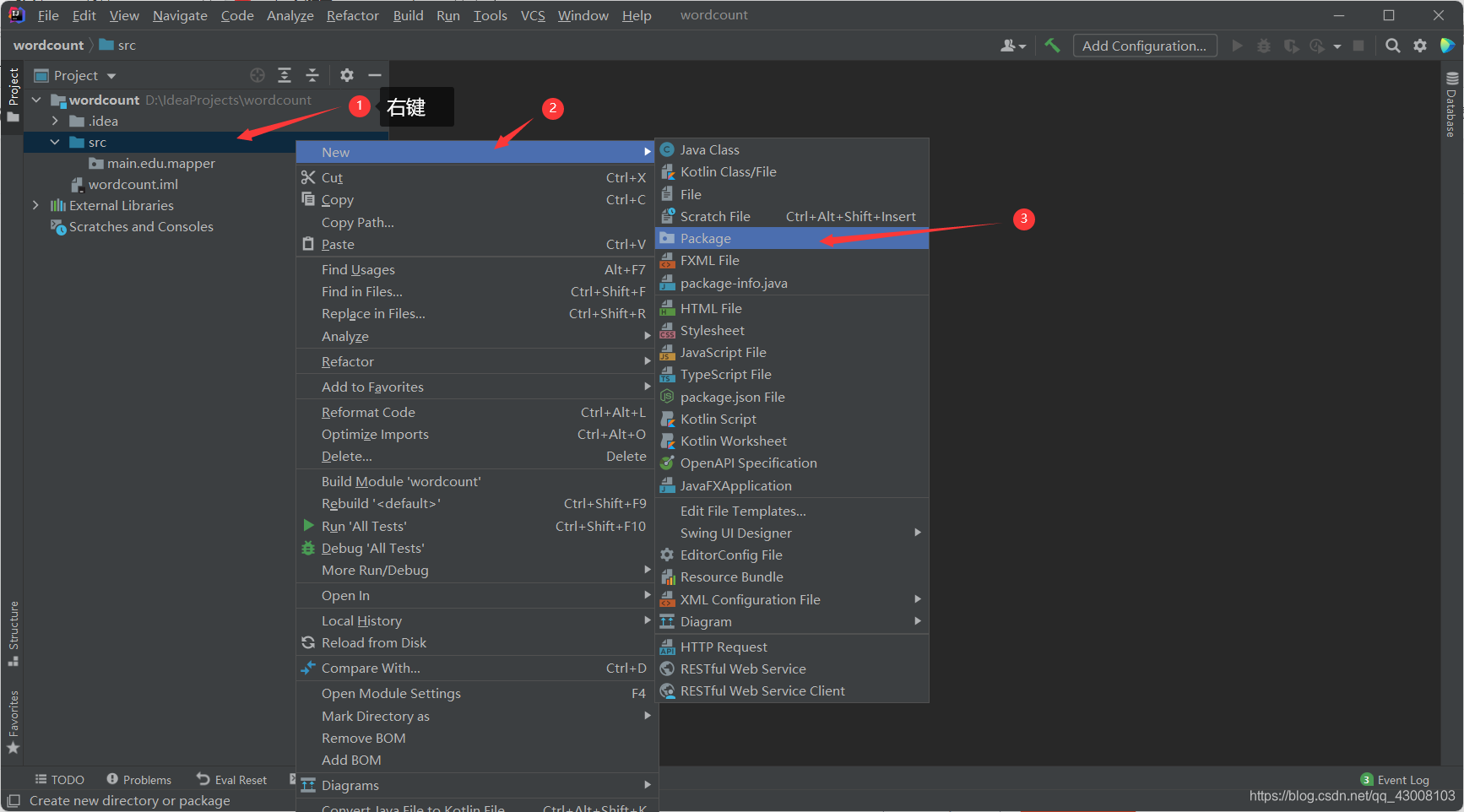

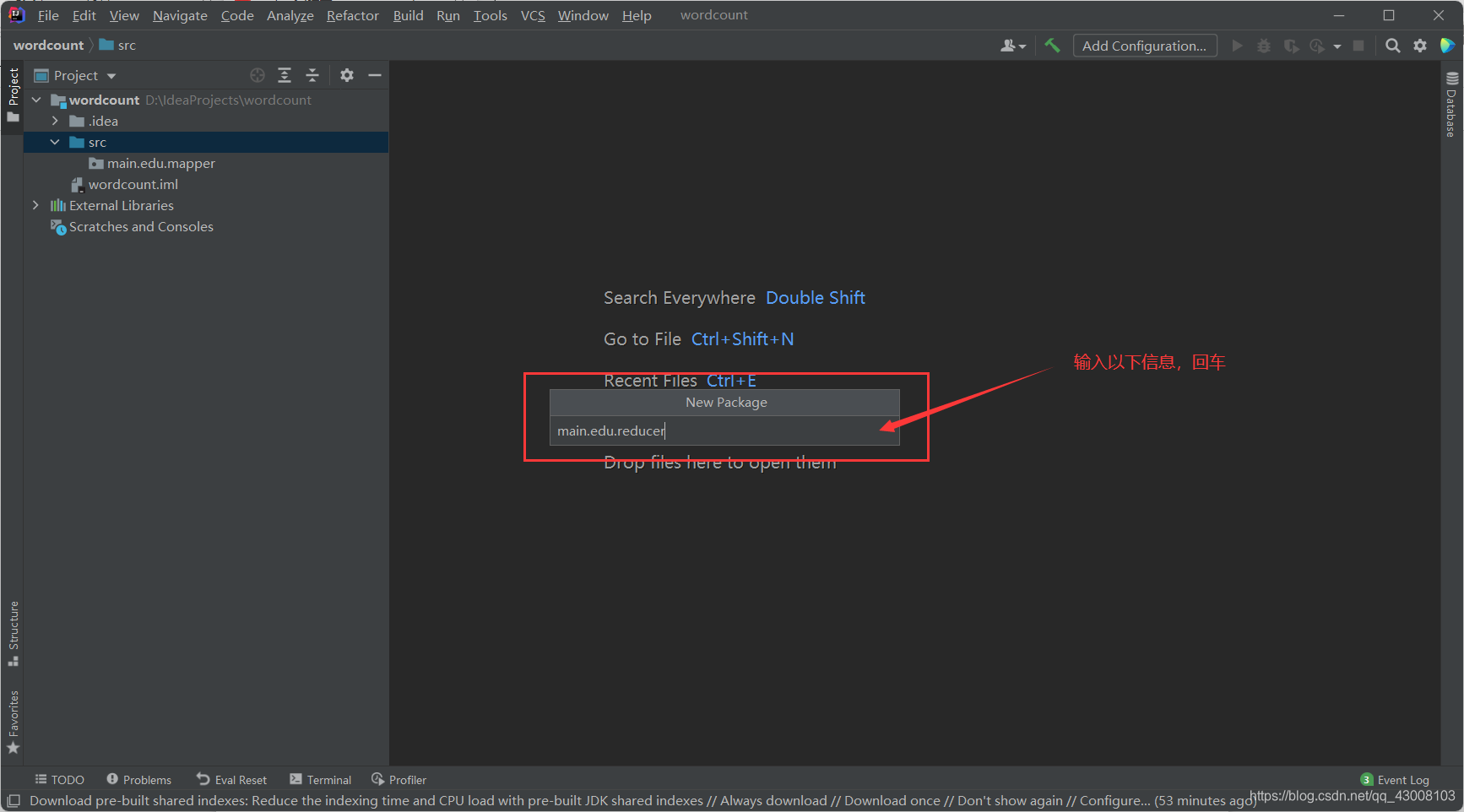

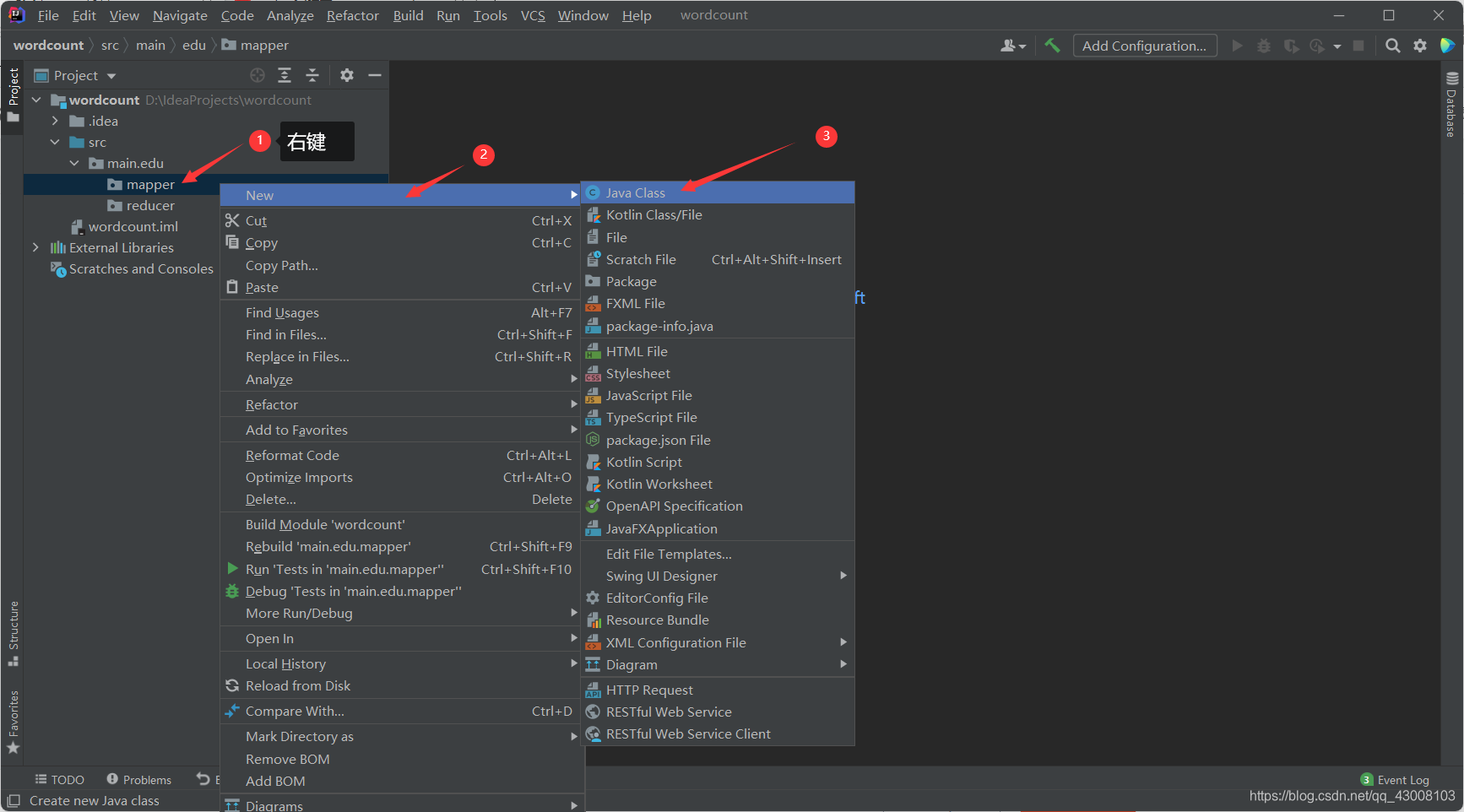

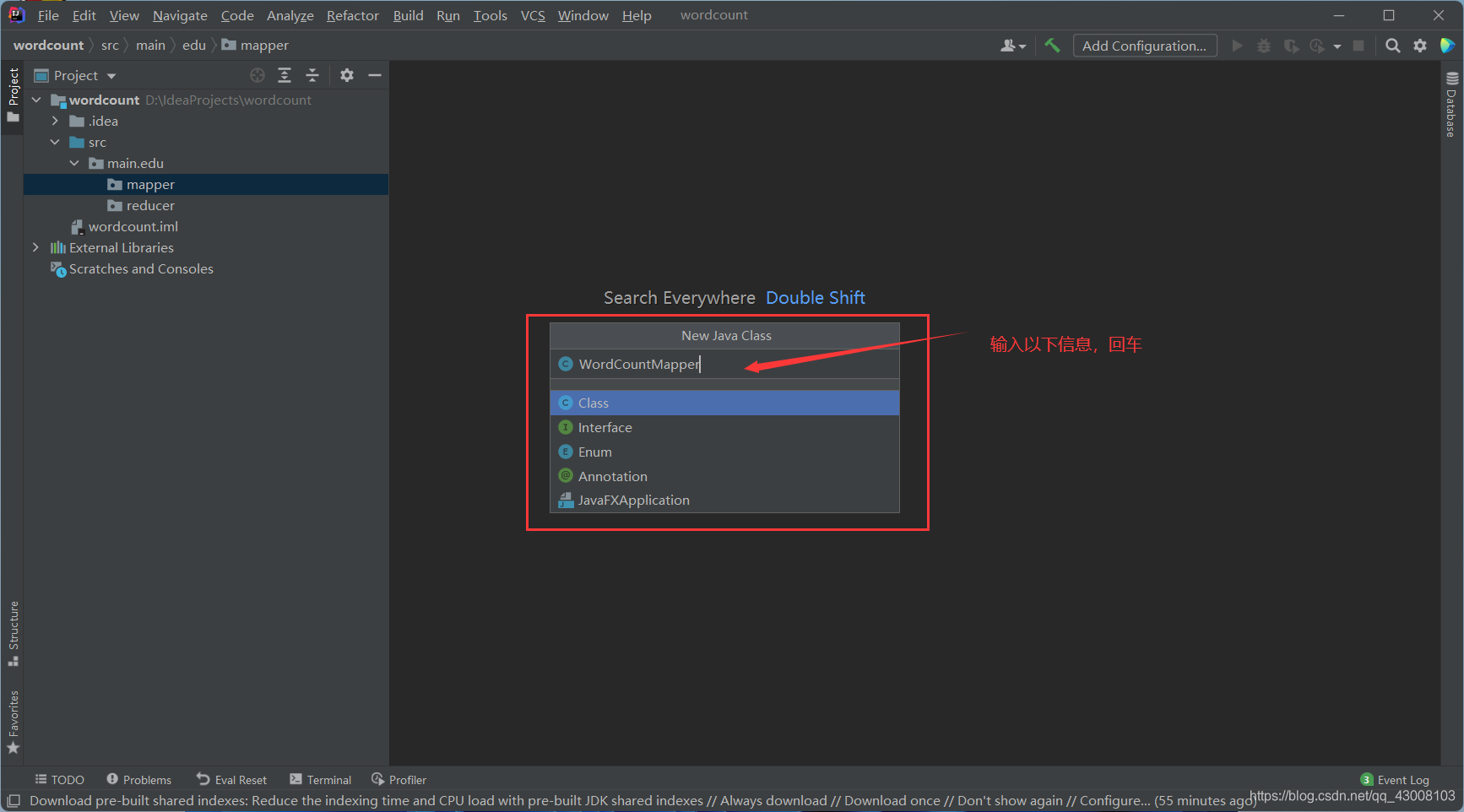

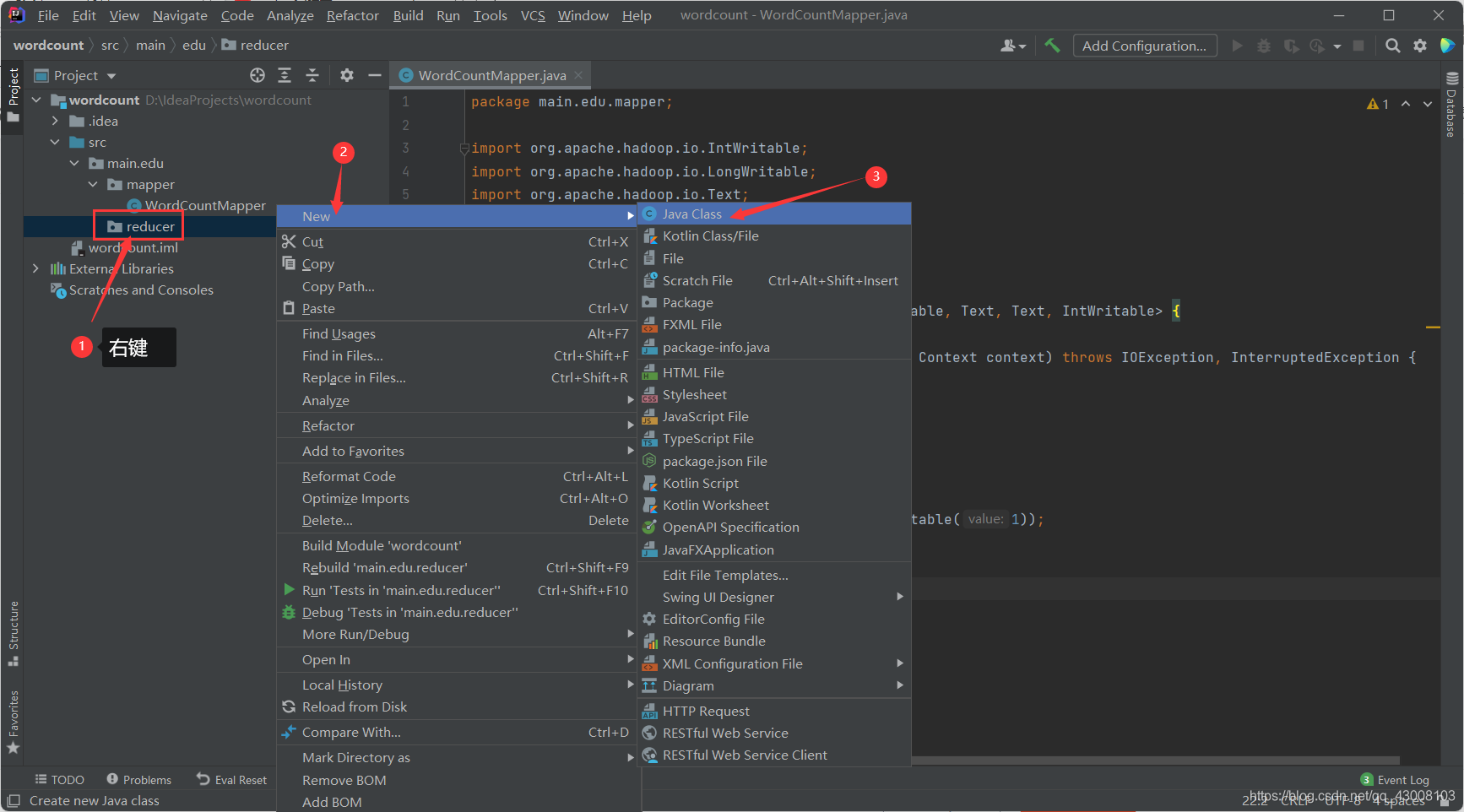

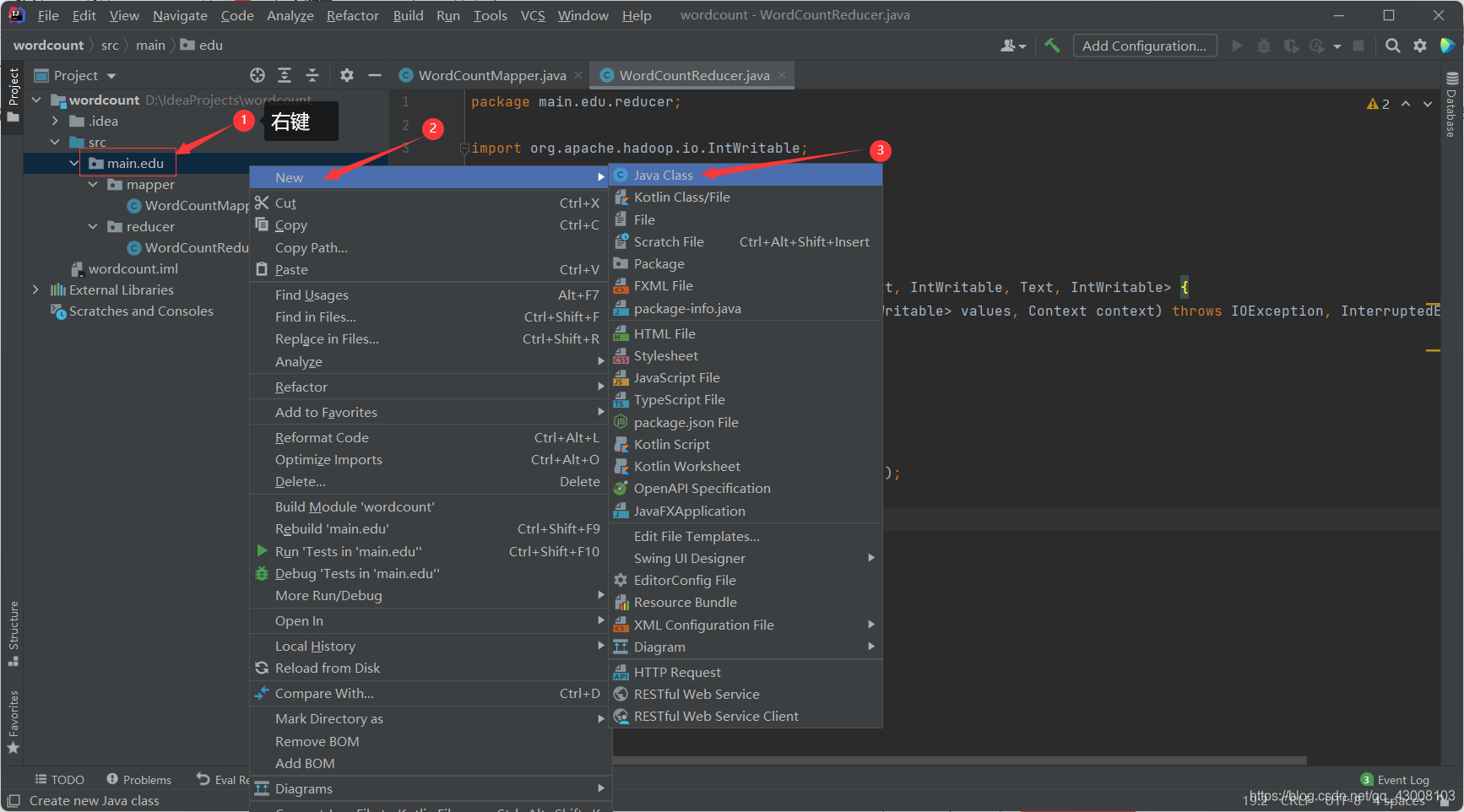

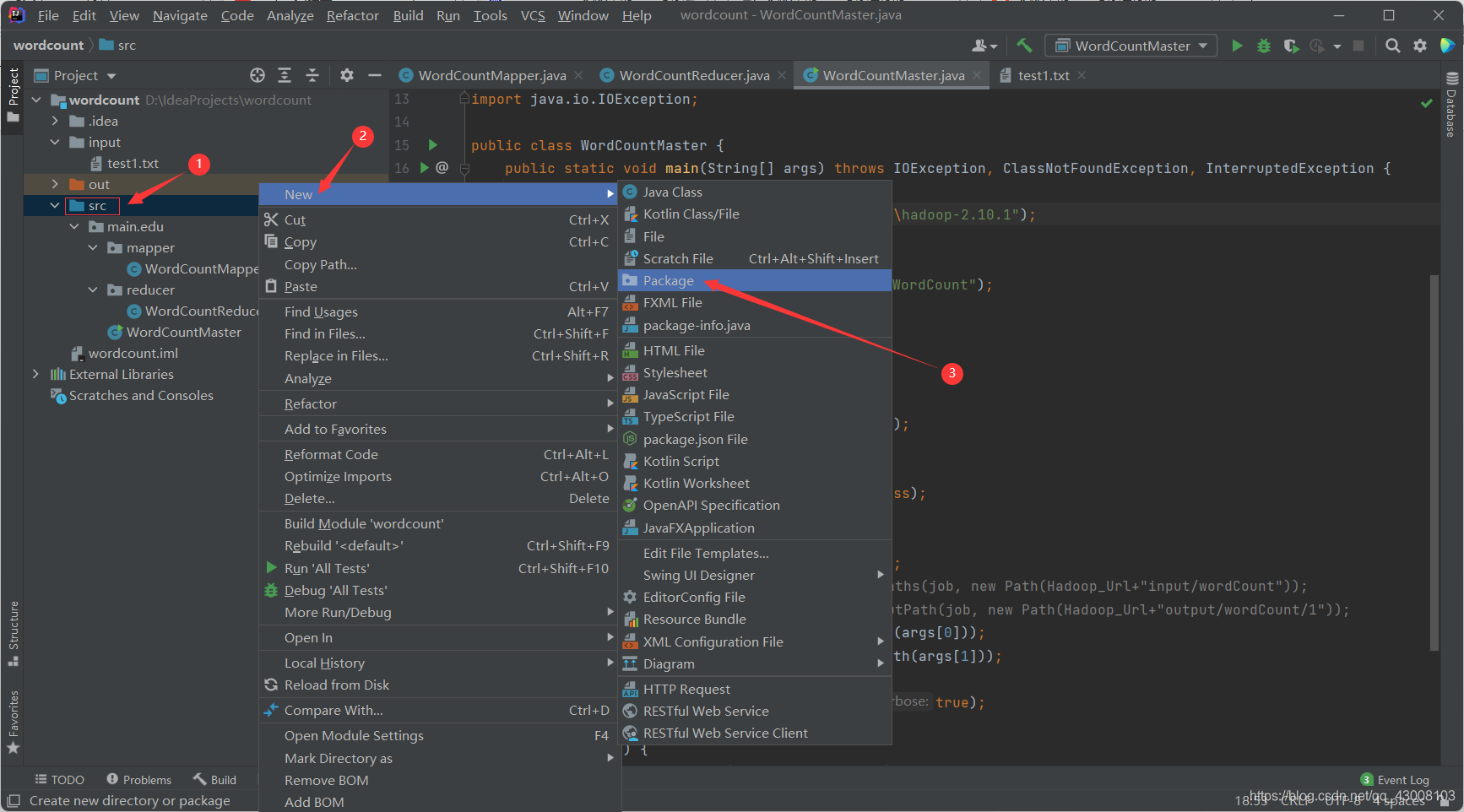

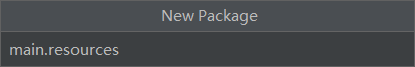

Organize the source tree however suits your habits. Mine is set up as follows.

`WordCountMapper.java`:

```java
package main.edu.mapper;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class WordCountMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

    // Output objects are reused; context.write serializes them, so reuse is safe
    private static final IntWritable ONE = new IntWritable(1);
    private final Text word = new Text();

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // Get the current line of input
        String line = value.toString();
        // Split on runs of whitespace
        String[] words = line.split("\\s+");
        // Emit (word, 1) for every non-empty token
        for (String w : words) {
            if (!w.isEmpty()) {
                word.set(w);
                context.write(word, ONE);
            }
        }
    }
}
```

Press Ctrl+S to save.
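The mapper's job is easier to see without Hadoop involved. Below is a plain-Java sketch of the map step. The class `MapStepSketch` is hypothetical, and `Map.Entry` pairs stand in for Hadoop's `Text`/`IntWritable`. Each line is split on whitespace, and every non-empty word becomes a `(word, 1)` pair. These pairs are what the mapper's `context.write` calls hand to the framework.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

public class MapStepSketch {

    // One (word, 1) pair per word, like each context.write call in the mapper.
    public static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> out = new ArrayList<>();
        for (String word : line.split("\\s+")) {
            // A line that starts with whitespace yields an empty first token; skip it.
            if (!word.isEmpty()) {
                out.add(new SimpleEntry<>(word, 1));
            }
        }
        return out;
    }

    public static void main(String[] args) {
        System.out.println(map("hello hadoop hello")); // prints [hello=1, hadoop=1, hello=1]
    }
}
```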

`WordCountReducer.java`:

```java
package main.edu.reducer;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class WordCountReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

    @Override
    protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
        int count = 0;
        for (IntWritable value : values) {
            // Add up all the counts in this key's group
            count += value.get();
        }
        // Emit the final (word, total) pair
        context.write(key, new IntWritable(count));
    }
}
```

Press Ctrl+S to save.
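Between the mapper and the reducer, Hadoop sorts the `(word, 1)` pairs and groups them by key. As a result, each `reduce` call receives one word together with all of its 1s. The sketch below imitates that shuffle, and the reducer's summing loop, in plain Java. `ShuffleReduceSketch` and its methods are hypothetical. The `TreeMap` is only a stand-in for Hadoop's distributed sort-and-merge.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class ShuffleReduceSketch {

    // Convenience factory for a (key, value) pair.
    public static Map.Entry<String, Integer> pair(String key, int value) {
        return new SimpleEntry<>(key, value);
    }

    // Shuffle: group the values of all pairs by key. TreeMap keeps keys sorted,
    // the way a reducer receives its keys in sorted order.
    public static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> groups = new TreeMap<>();
        for (Map.Entry<String, Integer> p : pairs) {
            groups.computeIfAbsent(p.getKey(), k -> new ArrayList<>()).add(p.getValue());
        }
        return groups;
    }

    // Reduce: sum each group, like count += value.get() in WordCountReducer.
    public static Map<String, Integer> reduce(Map<String, List<Integer>> groups) {
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> e : groups.entrySet()) {
            int sum = 0;
            for (int v : e.getValue()) {
                sum += v;
            }
            counts.put(e.getKey(), sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        List<Map.Entry<String, Integer>> pairs =
                Arrays.asList(pair("hello", 1), pair("hadoop", 1), pair("hello", 1));
        System.out.println(reduce(shuffle(pairs))); // prints {hadoop=1, hello=2}
    }
}
```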

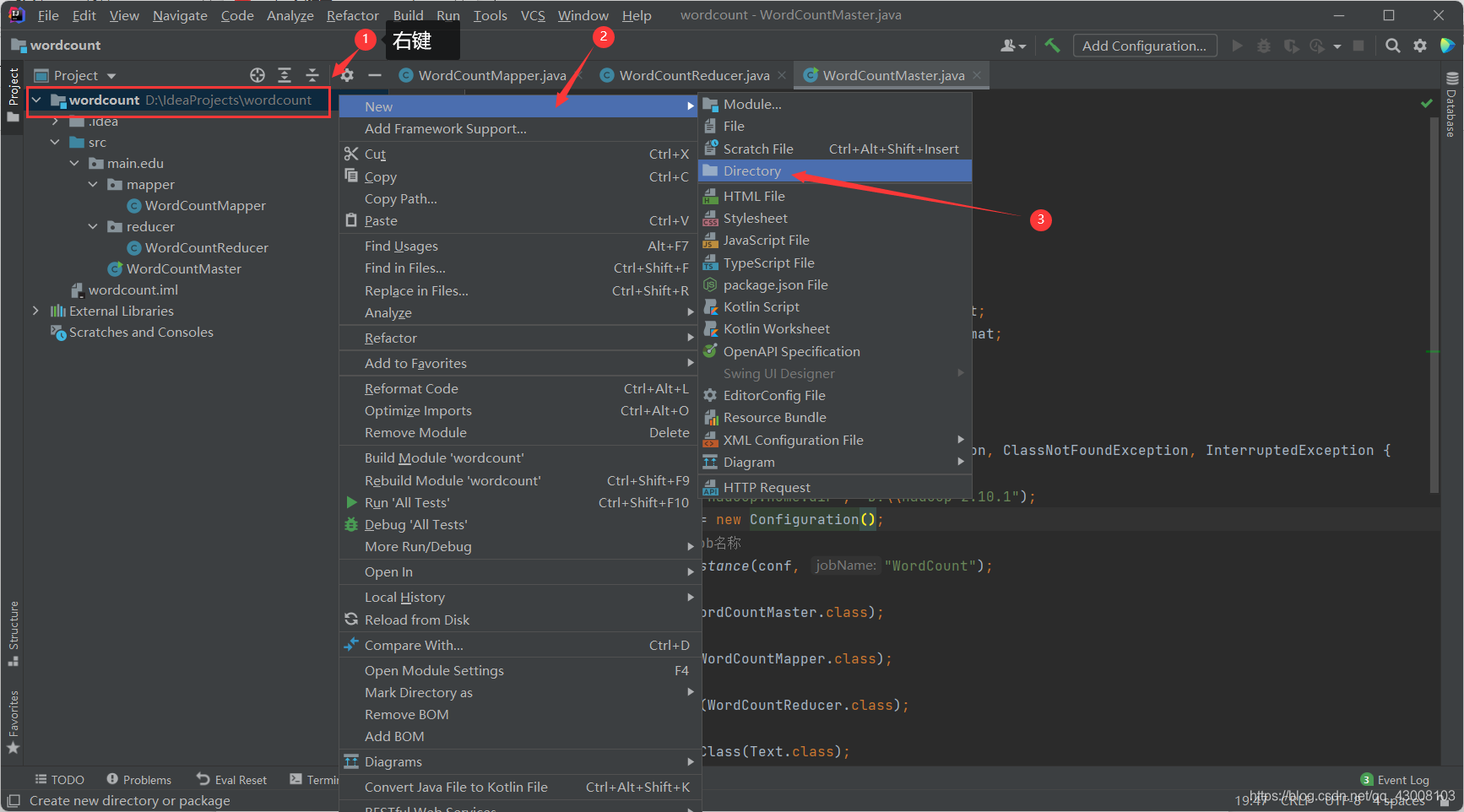

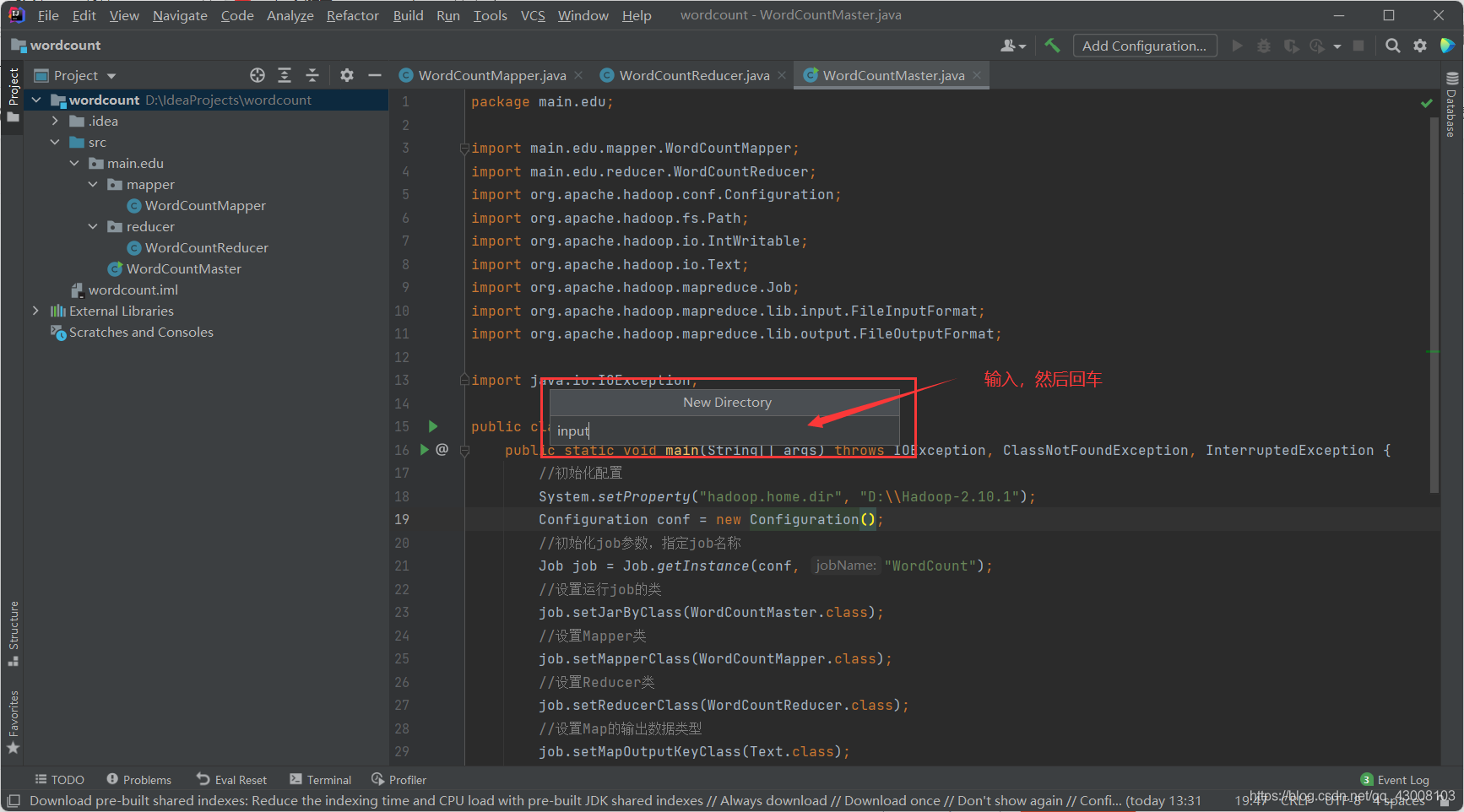

`WordCountMaster.java`:

```java
package main.edu;

import main.edu.mapper.WordCountMapper;
import main.edu.reducer.WordCountReducer;
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class WordCountMaster {

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        // The input and output paths are passed as program arguments
        if (args.length < 2) {
            System.err.println("Usage: WordCountMaster <input path> <output path>");
            System.exit(2);
        }
        // Initialize the configuration; hadoop.home.dir tells Hadoop where winutils.exe lives on Windows
        System.setProperty("hadoop.home.dir", "D:\\hadoop-2.10.1");
        Configuration conf = new Configuration();
        // Create the job and give it a name
        Job job = Job.getInstance(conf, "WordCount");
        // Class used to locate the JAR that contains the job
        job.setJarByClass(WordCountMaster.class);
        // Mapper and Reducer classes
        job.setMapperClass(WordCountMapper.class);
        job.setReducerClass(WordCountReducer.class);
        // Map output key/value types
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(IntWritable.class);
        // Reducer (final) output key/value types
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        // Input path (args[0]) and output path (args[1])
        FileInputFormat.addInputPath(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));
        // Submit the job and wait for it to finish (true = print progress)
        boolean result = job.waitForCompletion(true);
        // Follow-up after a successful run
        if (result) {
            System.out.println("Congratulations!");
        }
    }
}
```

Press Ctrl+S to save.

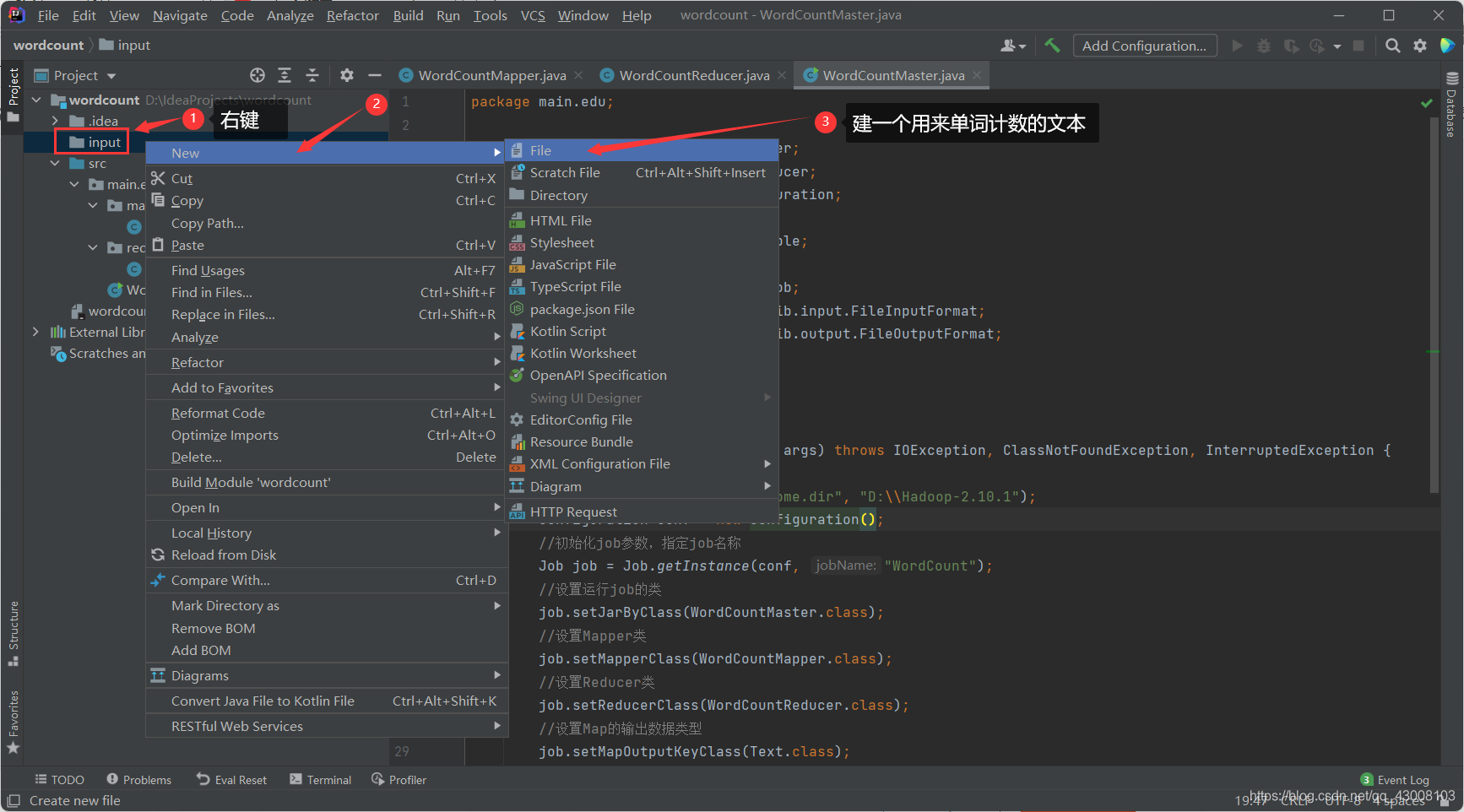

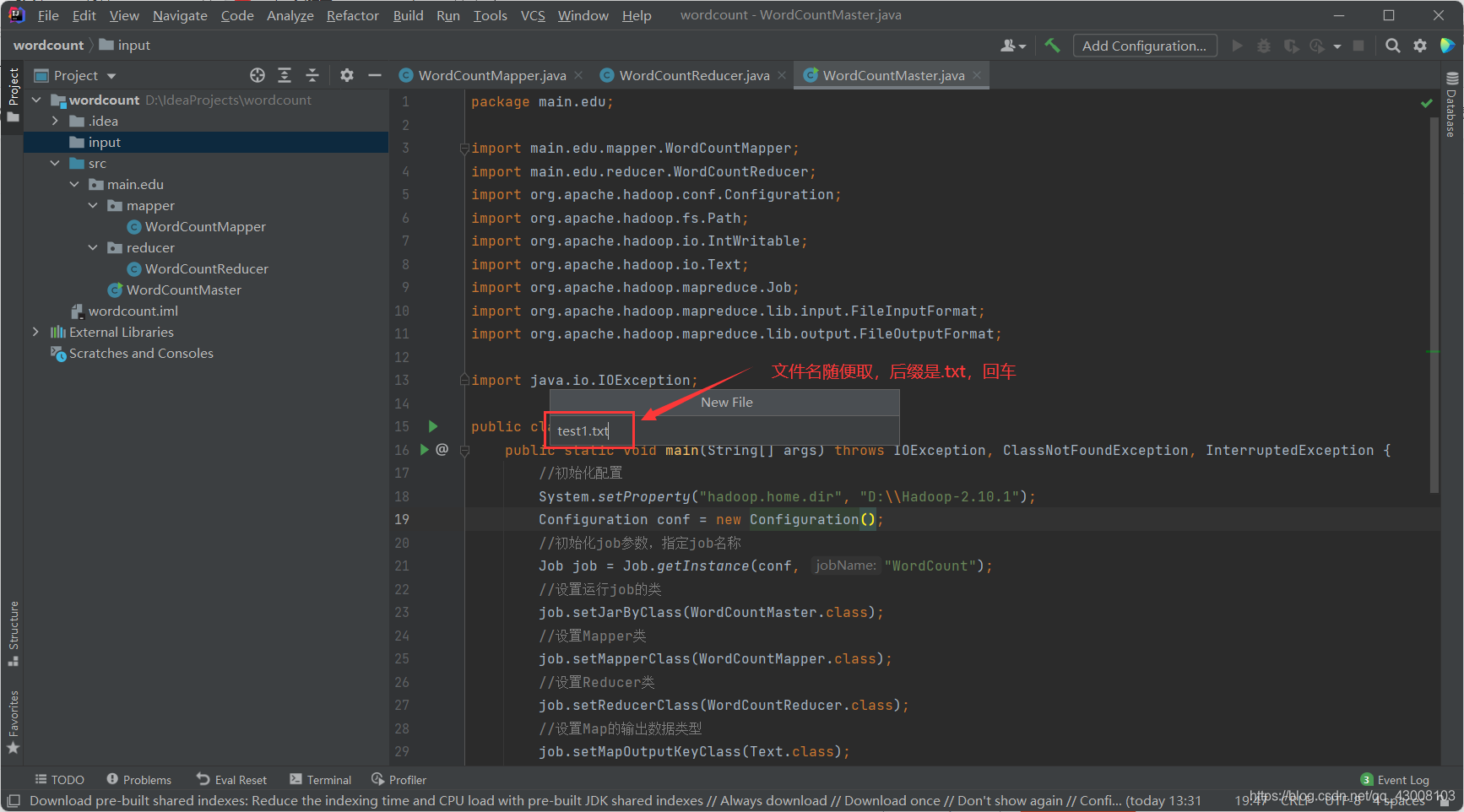

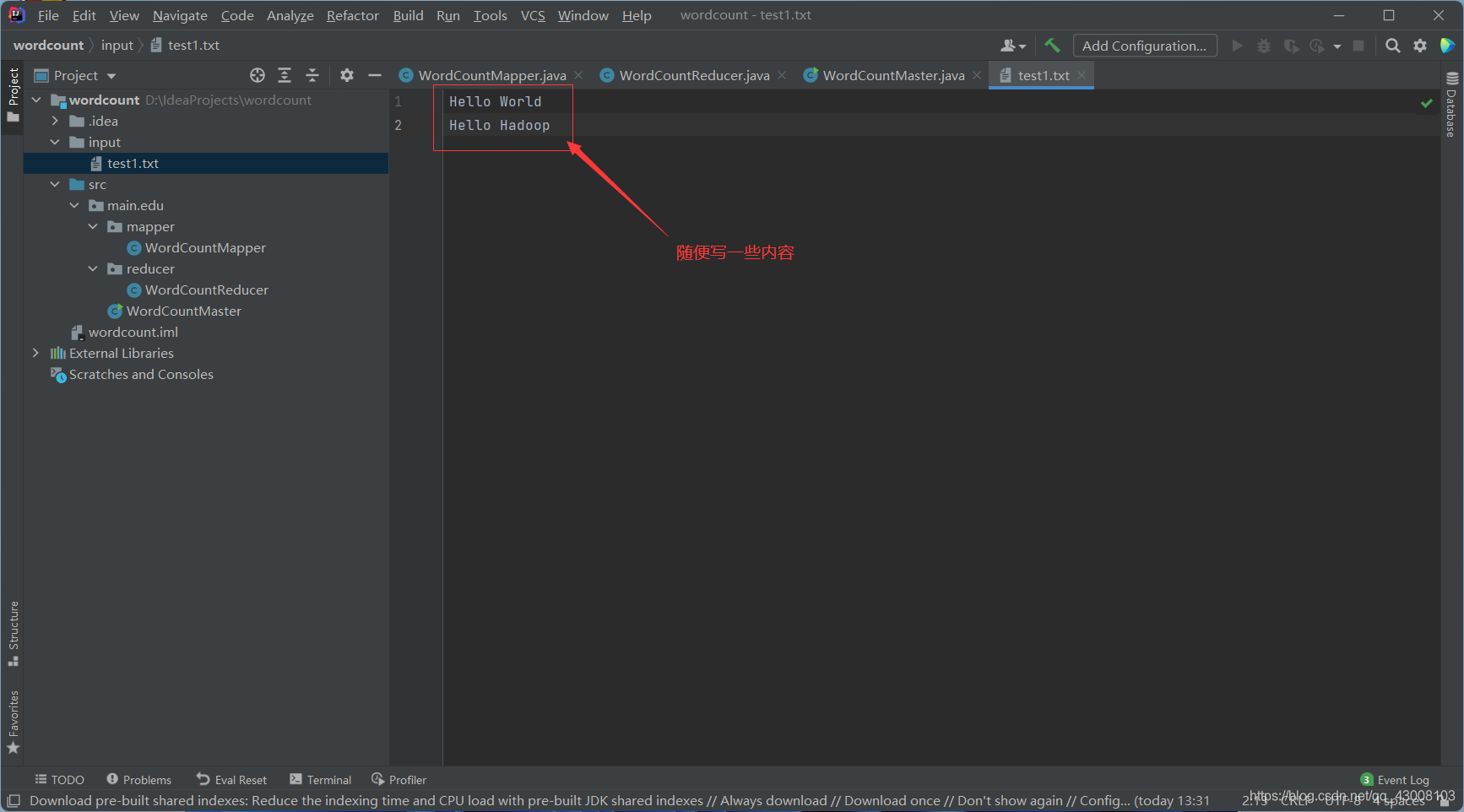

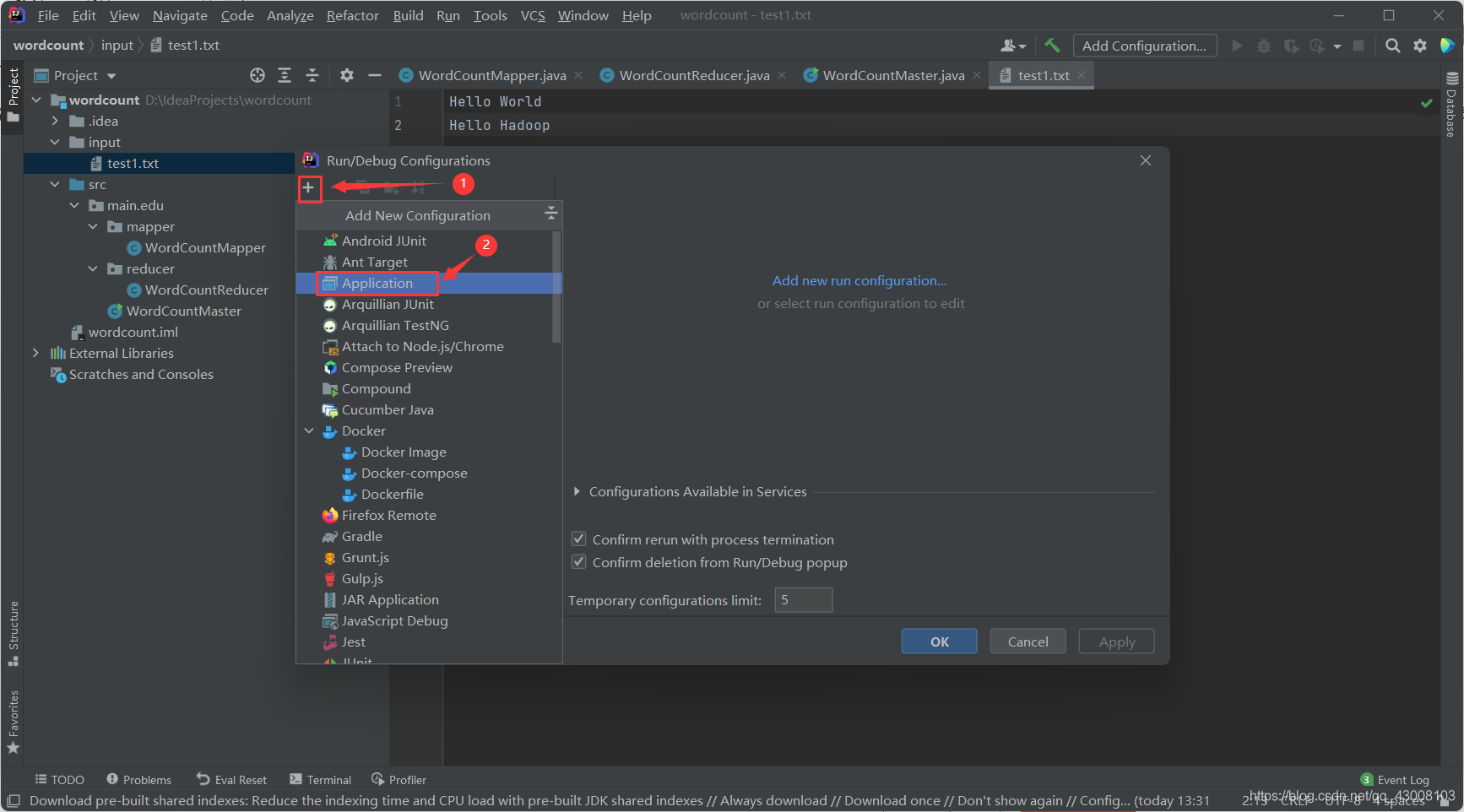

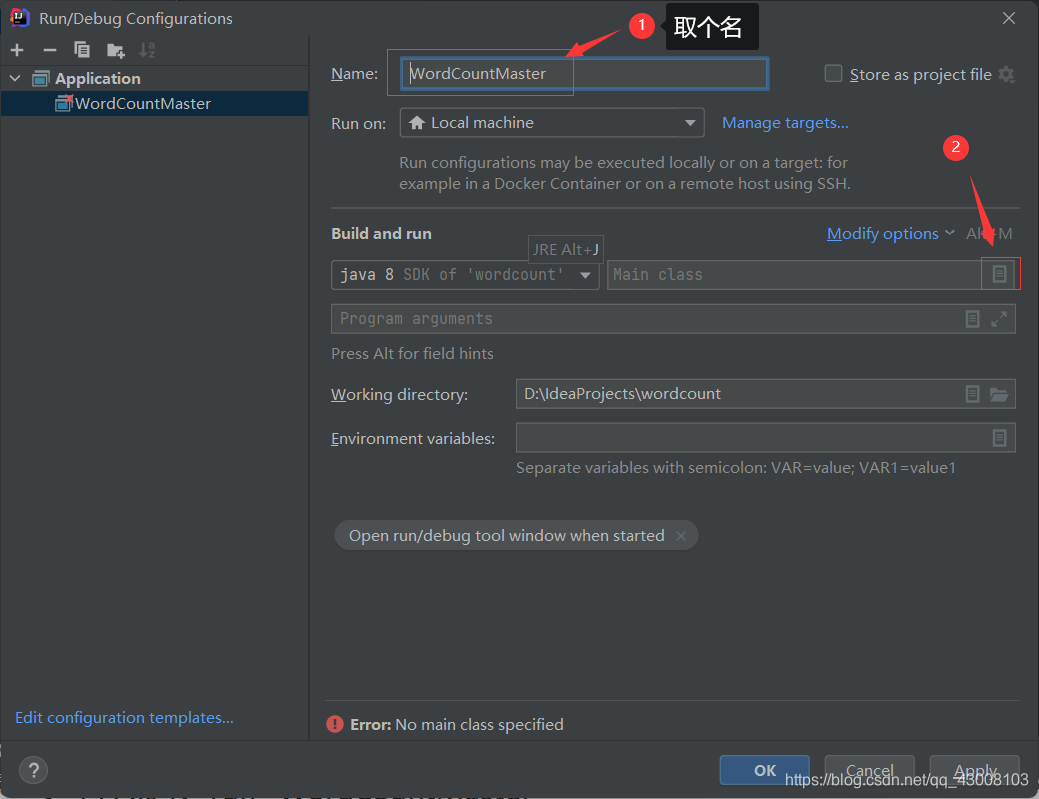

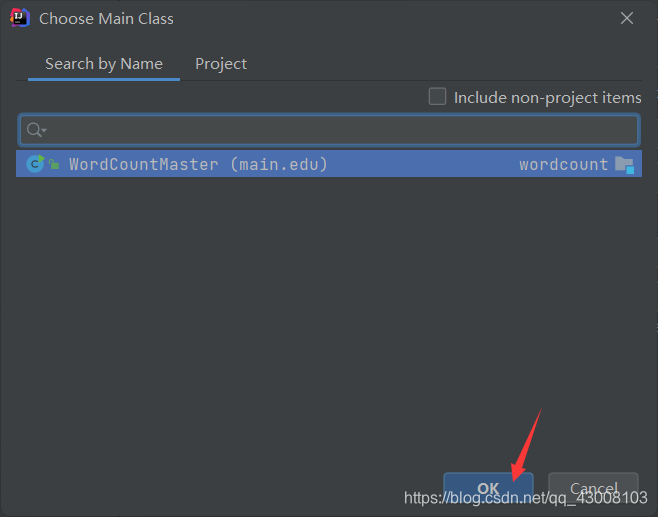

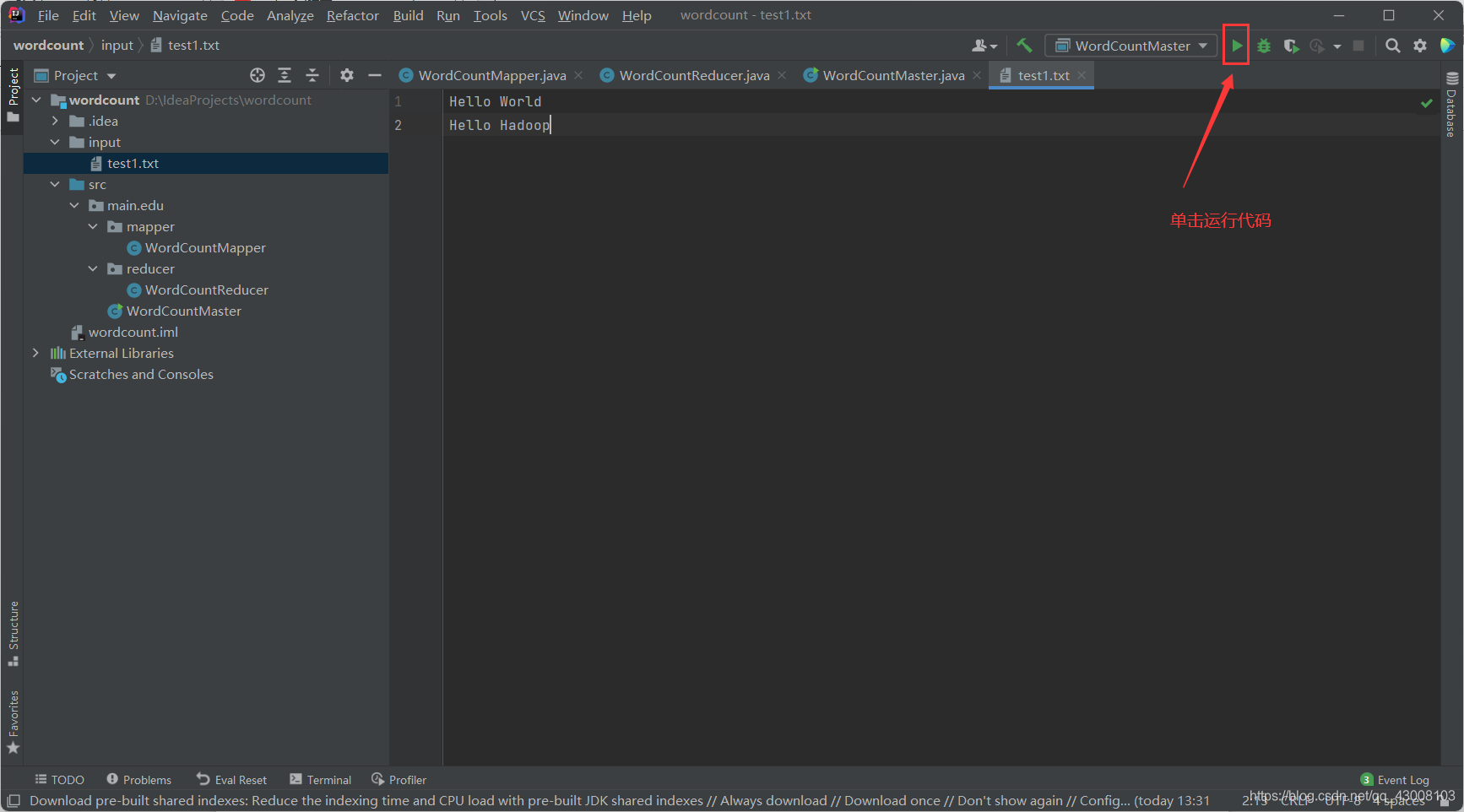

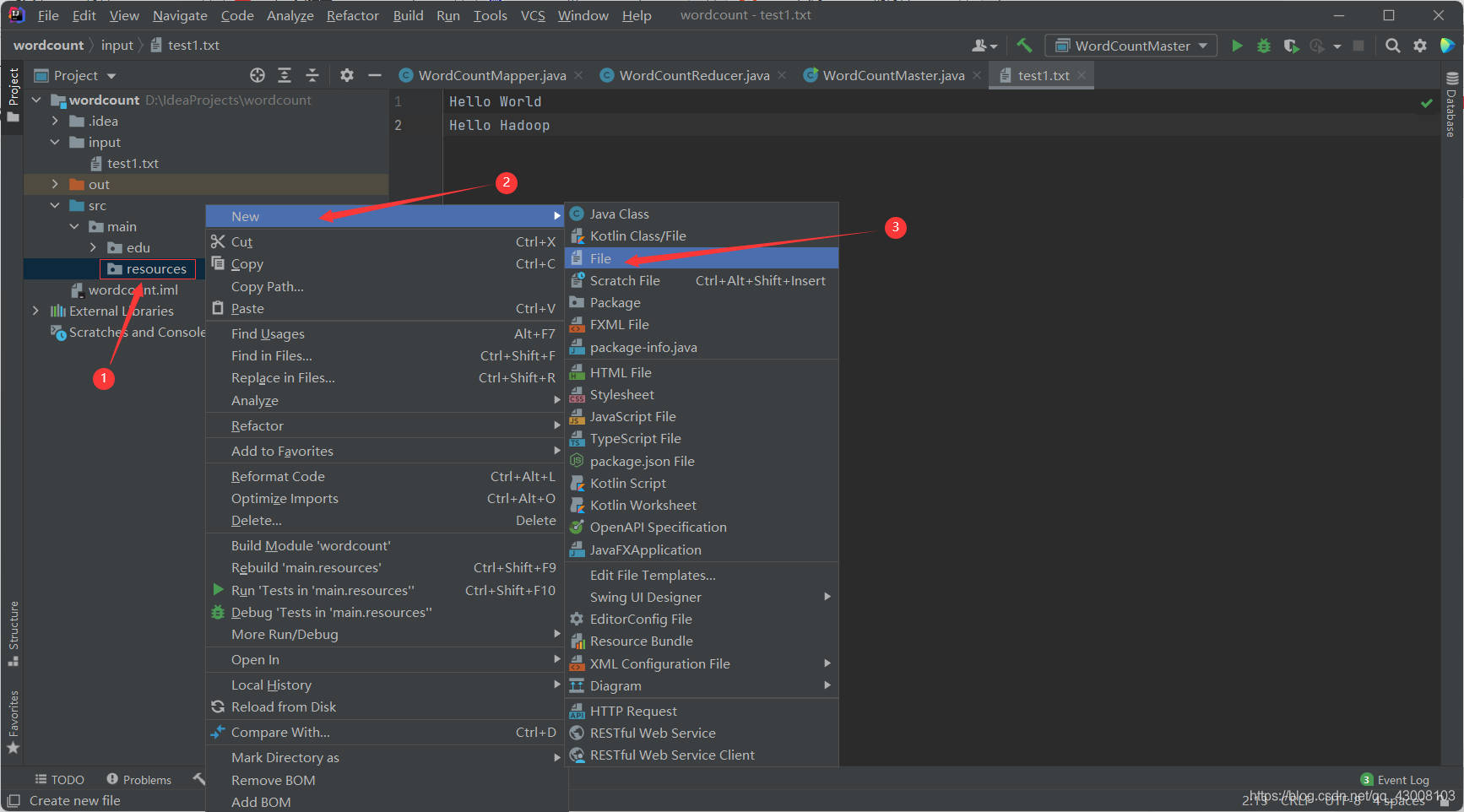

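Before running the code, some preparation is needed. The `/input` directory must exist, and the data files you want to count must be copied into it.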
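You can also prepare the input from code. The following sketch creates the input directory and writes a small sample file into it. The class `PrepareInput`, the directory name `input`, the file name `words.txt` and the sample text are all assumptions for illustration. The directory must match the input path you pass as the first program argument (`args[0]`).

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Arrays;

public class PrepareInput {

    // Creates inputDir (and any parents) if needed, then writes the given lines to inputDir/fileName.
    public static Path writeSample(Path inputDir, String fileName, String... lines) throws IOException {
        Files.createDirectories(inputDir); // no error if the directory already exists
        Path file = inputDir.resolve(fileName);
        Files.write(file, Arrays.asList(lines), StandardCharsets.UTF_8);
        return file;
    }

    public static void main(String[] args) throws IOException {
        // "input" relative to the working directory is an assumption; match it to args[0] of the job.
        Path file = writeSample(Paths.get("input"), "words.txt",
                "hello hadoop", "hello intellij");
        System.out.println("wrote " + file.toAbsolutePath());
    }
}
```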

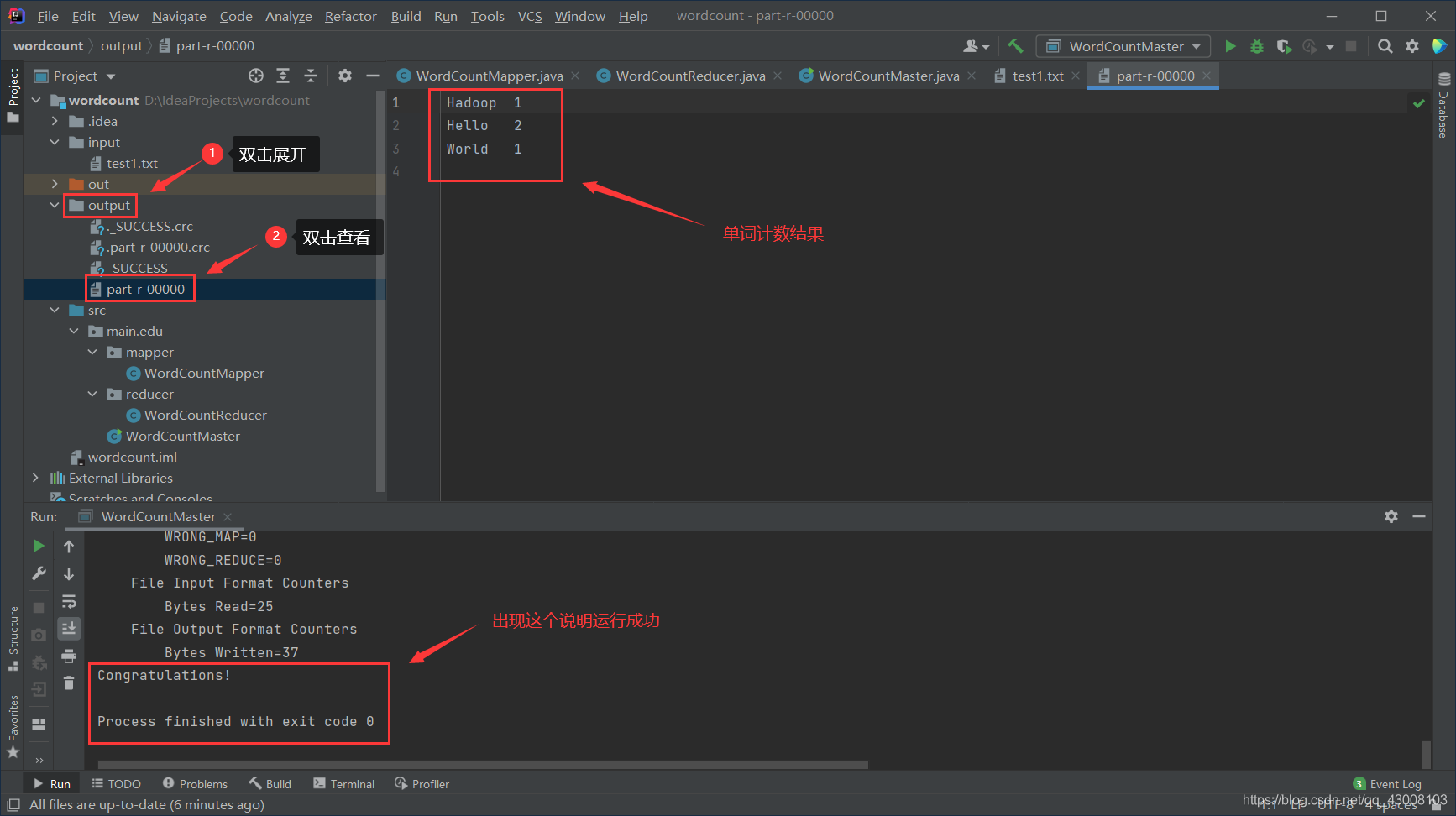

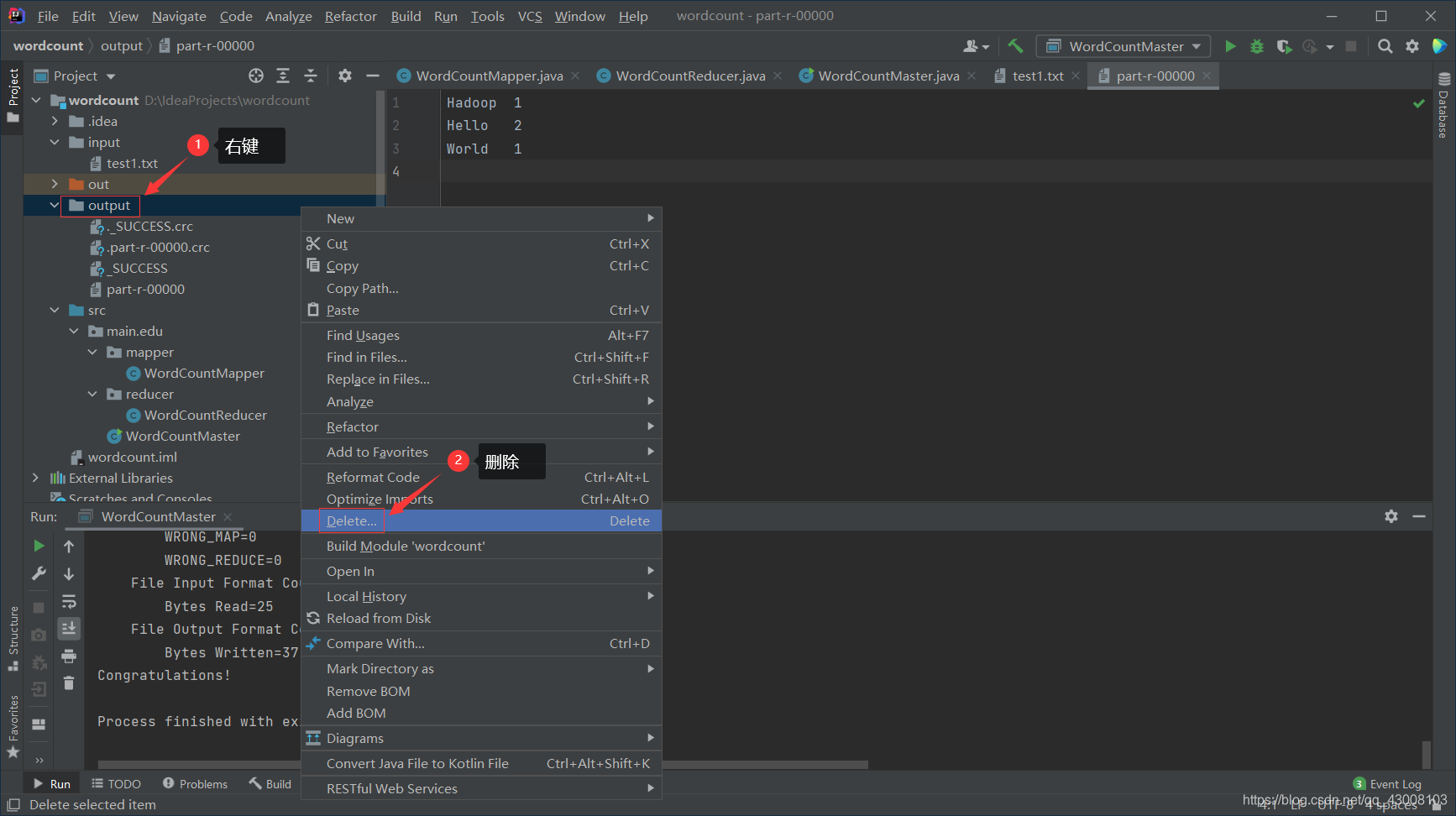

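When the run finishes, you'll find a new `output` folder in the project directory. Open the `part-r-00000` file inside it to see how many times each word in your input occurs.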
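Each line of `part-r-00000` holds a word, a tab and its count; this is the default layout of Hadoop's `TextOutputFormat`. As a minimal sketch, the hypothetical class `PartFileReader` reads the file back and lists the most frequent words. The default path `output\part-r-00000` assumes the local run described above.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class PartFileReader {

    // Parses "word<TAB>count" lines into an insertion-ordered map; lines without a tab are skipped.
    public static Map<String, Integer> parse(List<String> lines) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (String line : lines) {
            int tab = line.lastIndexOf('\t');
            if (tab < 0) {
                continue;
            }
            counts.put(line.substring(0, tab), Integer.parseInt(line.substring(tab + 1).trim()));
        }
        return counts;
    }

    // Returns up to n words ordered by descending count (the stable sort keeps file order for ties).
    public static List<String> topN(Map<String, Integer> counts, int n) {
        List<Map.Entry<String, Integer>> entries = new ArrayList<>(counts.entrySet());
        entries.sort((a, b) -> Integer.compare(b.getValue(), a.getValue()));
        List<String> top = new ArrayList<>();
        for (int i = 0; i < Math.min(n, entries.size()); i++) {
            top.add(entries.get(i).getKey());
        }
        return top;
    }

    public static void main(String[] args) throws IOException {
        // Default location assumes the local IntelliJ run wrote to ./output.
        Path part = args.length > 0 ? Paths.get(args[0]) : Paths.get("output", "part-r-00000");
        if (!Files.exists(part)) {
            System.out.println(part + " not found");
            return;
        }
        System.out.println("Top 10: " + topN(parse(Files.readAllLines(part)), 10));
    }
}
```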

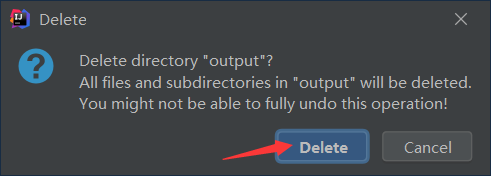

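If you run it a second time, you'll get an error. Hadoop won't write into an existing output directory, and it doesn't delete the directory for you. Delete the `output` directory manually, then run again.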
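Rather than deleting the folder by hand every time, you can remove it in code before the job is submitted. The HDFS version of `WordCountMaster` later in this post does the same thing with `fs.delete(outputPath, true)`. This stdlib-only sketch (hypothetical class `CleanOutput`) deletes a local directory tree, deepest entries first. Be careful: it deletes whatever path you give it without asking.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Comparator;
import java.util.stream.Stream;

public class CleanOutput {

    // Recursively deletes dir if it exists; returns true if something was deleted.
    public static boolean deleteRecursively(Path dir) throws IOException {
        if (!Files.exists(dir)) {
            return false;
        }
        // Reverse path order visits children before their parent, so each directory is empty when deleted.
        try (Stream<Path> paths = Files.walk(dir)) {
            paths.sorted(Comparator.reverseOrder()).forEach(CleanOutput::deleteUnchecked);
        } catch (UncheckedIOException e) {
            throw e.getCause(); // surface the original IOException to the caller
        }
        return true;
    }

    // Files.delete throws a checked exception, which a stream's forEach cannot propagate directly.
    private static void deleteUnchecked(Path p) {
        try {
            Files.delete(p);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) throws IOException {
        // "output" matches the local folder described above; pass another path as args[0] if needed.
        Path out = Paths.get(args.length > 0 ? args[0] : "output");
        System.out.println(deleteRecursively(out) ? "deleted " + out : out + " does not exist");
    }
}
```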

That's it: you can now develop and debug Hadoop programs in IntelliJ.

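The configuration is as follows (`fs.defaultFS` and `hadoop.tmp.dir` are properties of Hadoop's `core-site.xml`):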

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/usr/hadoop/hadoop-2.10.1/hadoop_tmp</value>

</property>

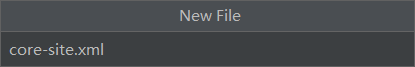

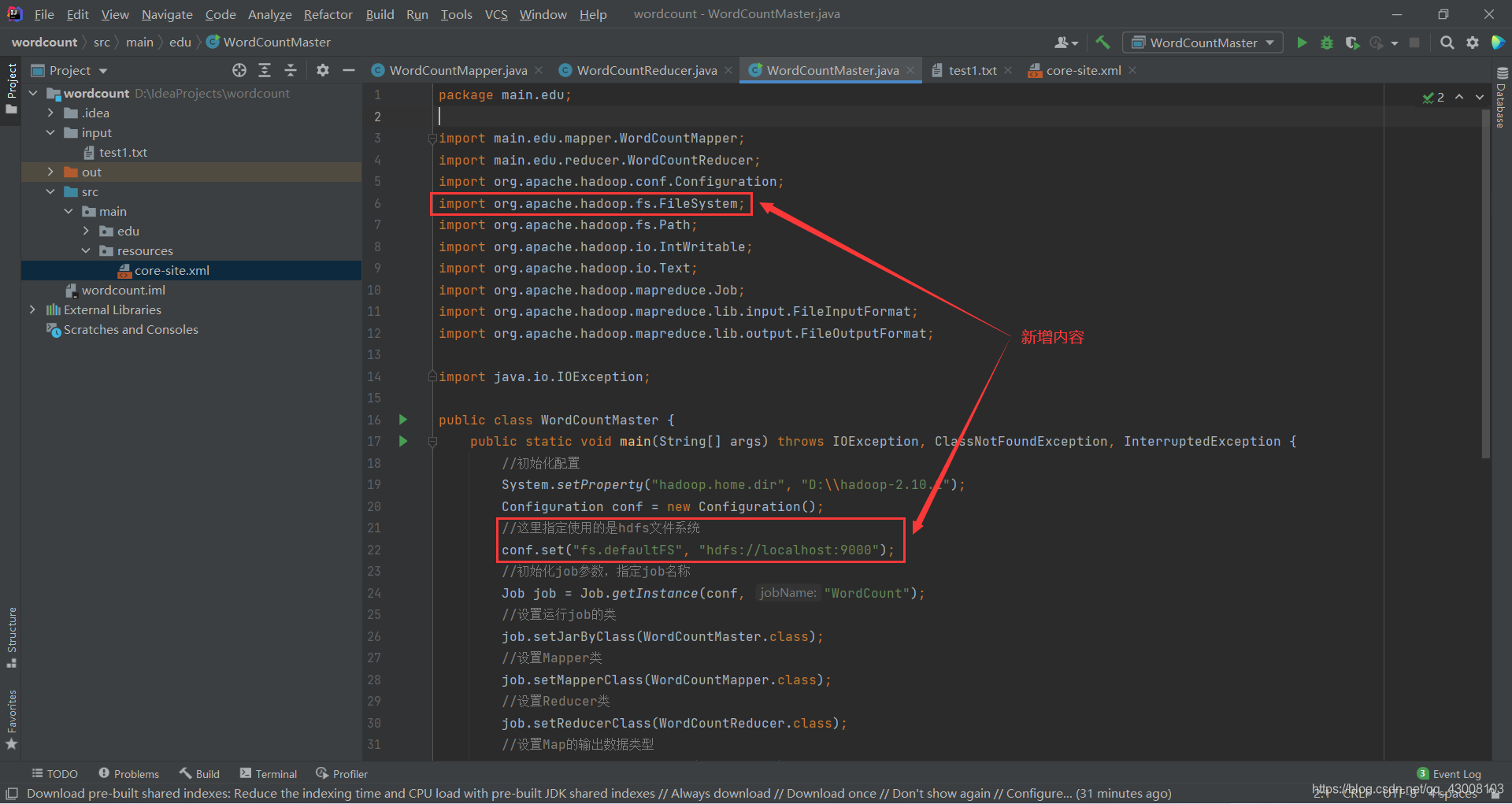

</configuration>修改WordCountMaster.java的代码
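Hadoop reads these files through its own `Configuration` class, so you don't need to parse them yourself. To show the file's structure (each `<property>` holds one `<name>`/`<value>` pair), here is a sketch that uses the JDK's built-in DOM parser. The class `CoreSiteReader` is hypothetical. The sketch also splits `fs.defaultFS` into scheme, host and port, which for an `hdfs://` URI is the NameNode address the client connects to.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.net.URI;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.parsers.ParserConfigurationException;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;
import org.xml.sax.SAXException;

public class CoreSiteReader {

    // Collects <property><name>..</name><value>..</value></property> pairs from a Hadoop-style config file.
    public static Map<String, String> readProperties(InputStream xml)
            throws ParserConfigurationException, SAXException, IOException {
        Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder().parse(xml);
        Map<String, String> props = new LinkedHashMap<>();
        NodeList properties = doc.getElementsByTagName("property");
        for (int i = 0; i < properties.getLength(); i++) {
            Element p = (Element) properties.item(i);
            NodeList names = p.getElementsByTagName("name");
            NodeList values = p.getElementsByTagName("value");
            if (names.getLength() == 0 || values.getLength() == 0) {
                continue; // skip malformed entries
            }
            props.put(names.item(0).getTextContent().trim(), values.item(0).getTextContent().trim());
        }
        return props;
    }

    public static void main(String[] args) throws Exception {
        String xml = "<configuration>"
                + "<property><name>fs.defaultFS</name><value>hdfs://localhost:9000</value></property>"
                + "</configuration>";
        Map<String, String> props = readProperties(
                new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
        URI fs = URI.create(props.get("fs.defaultFS"));
        System.out.println(fs.getScheme() + " " + fs.getHost() + " " + fs.getPort()); // prints hdfs localhost 9000
    }
}
```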

Next, modify `WordCountMaster.java`. After the change, the code is:

package main.edu;

import main.edu.mapper.WordCountMapper;

import main.edu.reducer.WordCountReducer;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class WordCountMaster {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

//初始化配置

System.setProperty("hadoop.home.dir", "D:\\hadoop-2.10.1");

Configuration conf = new Configuration();

//这里指定使用的是hdfs文件系统

conf.set("fs.defaultFS", "hdfs://localhost:9000");

//初始化job参数,指定job名称

Job job = Job.getInstance(conf, "WordCount");

//设置运行job的类

job.setJarByClass(WordCountMaster.class);

//设置Mapper类

job.setMapperClass(WordCountMapper.class);

//设置Reducer类

job.setReducerClass(WordCountReducer.class);

//设置Map的输出数据类型

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(IntWritable.class);

//设置Reducer的输出数据类型

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

// 指定该mapreduce程序数据的输入和输出路径

Path inputPath = new Path("/wordcount/input");

Path outputPath = new Path("/wordcount/output");

FileSystem fs = FileSystem.get(conf);

if (fs.exists(outputPath)) {

fs.delete(outputPath, true);

}

FileInputFormat.setInputPaths(job, inputPath);

FileOutputFormat.setOutputPath(job, outputPath);

//提交job

boolean result = job.waitForCompletion(true);

//执行成功后进行后续操作

if (result) {

System.out.println("Congratulations!");

}

}

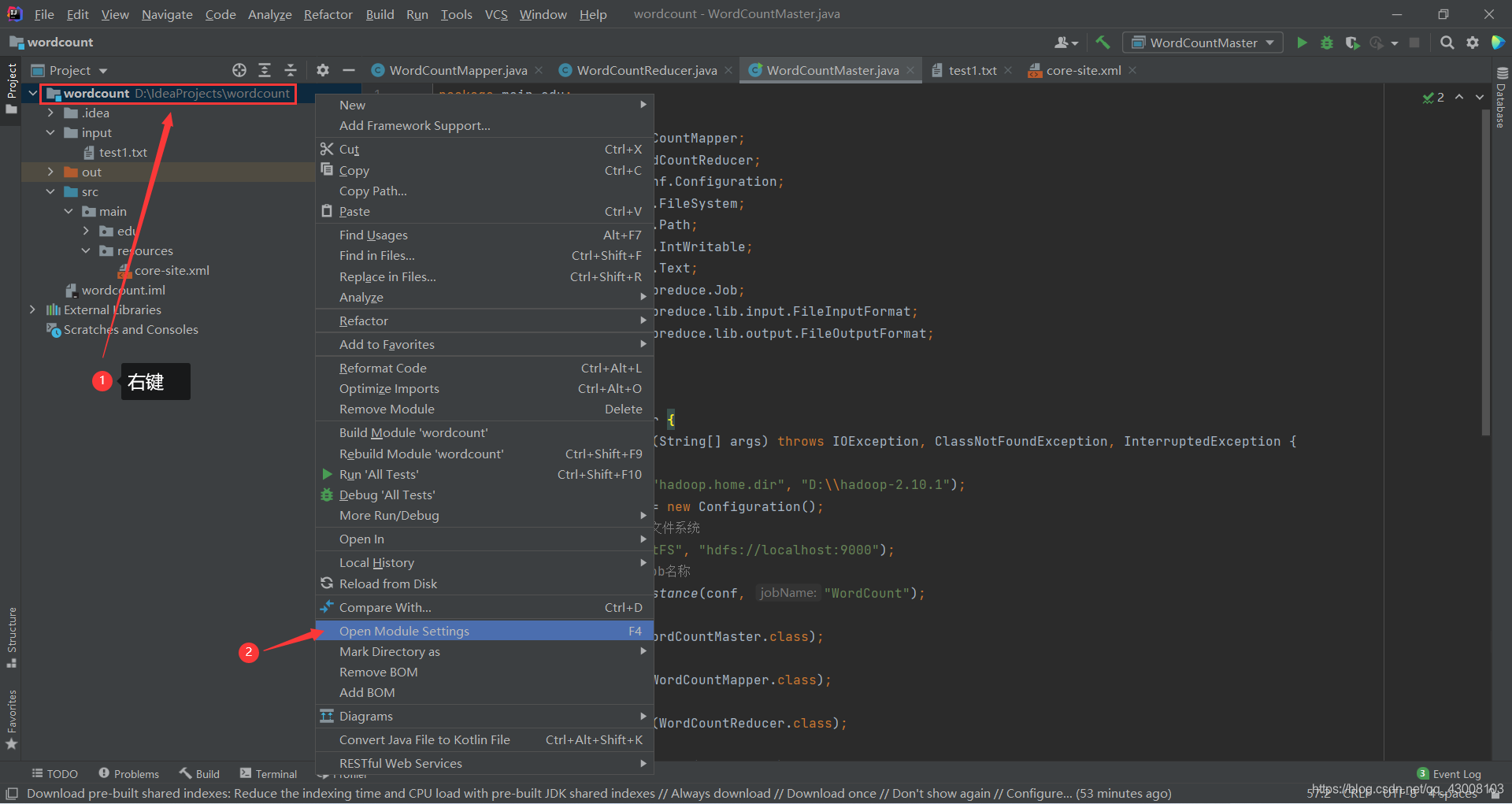

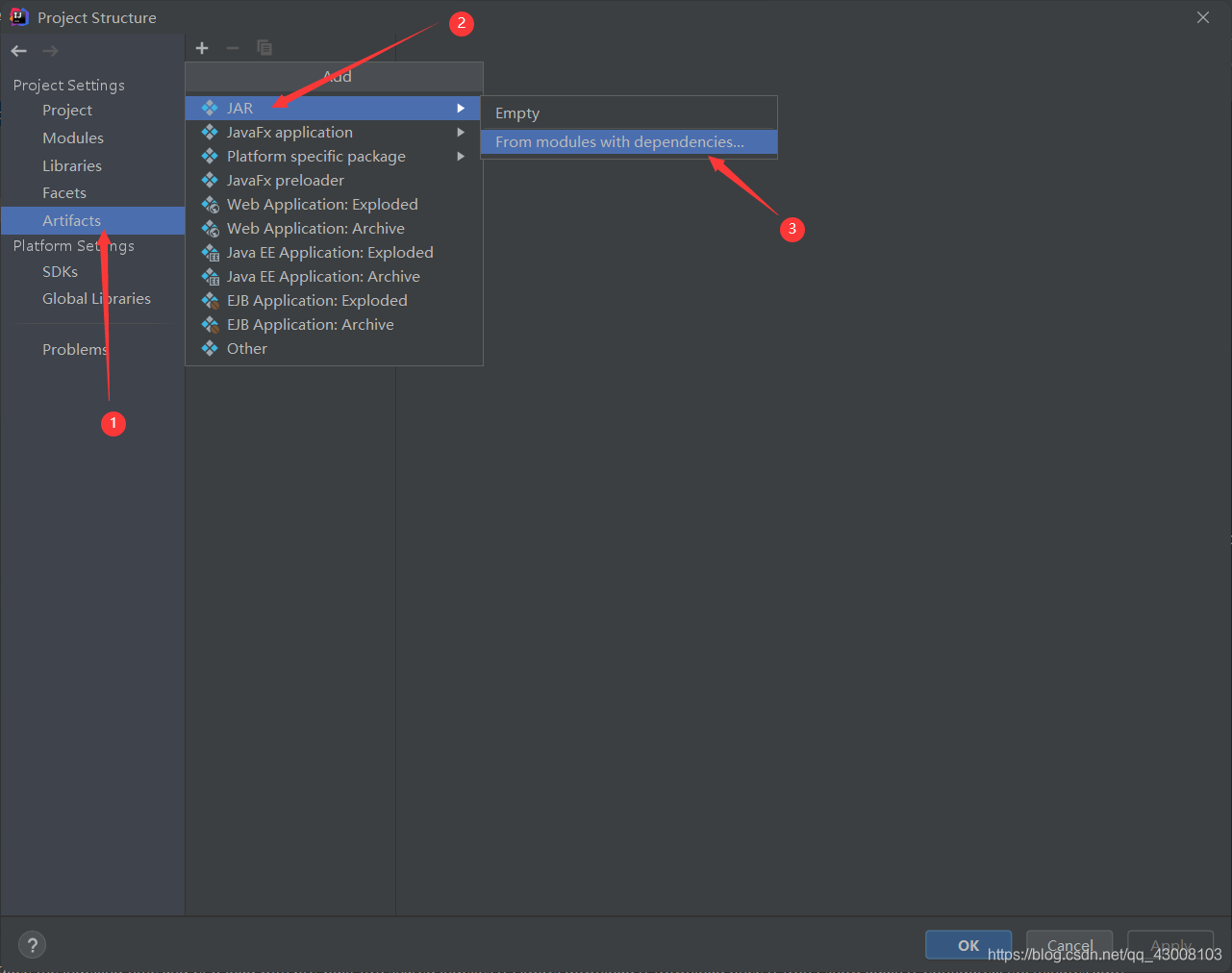

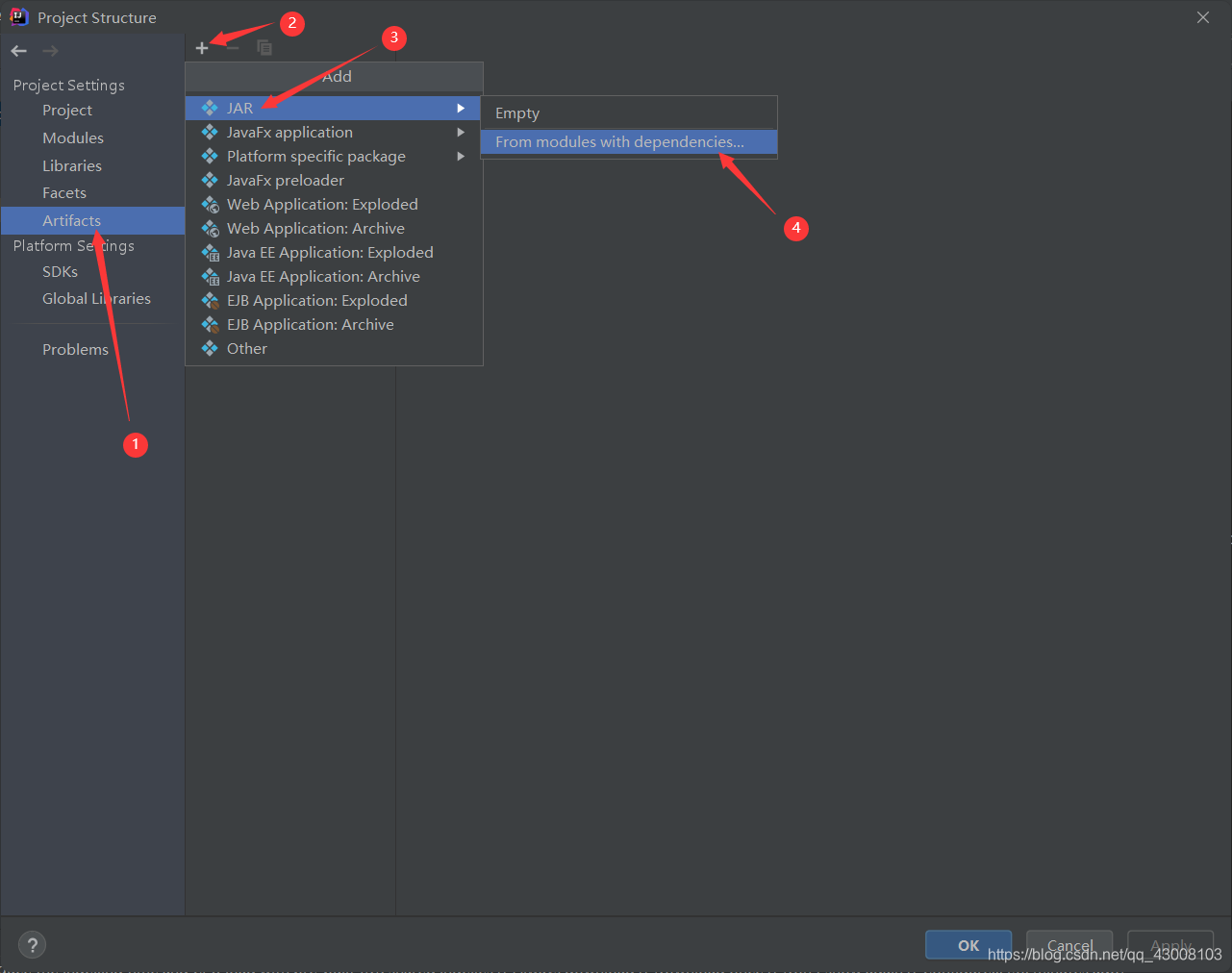

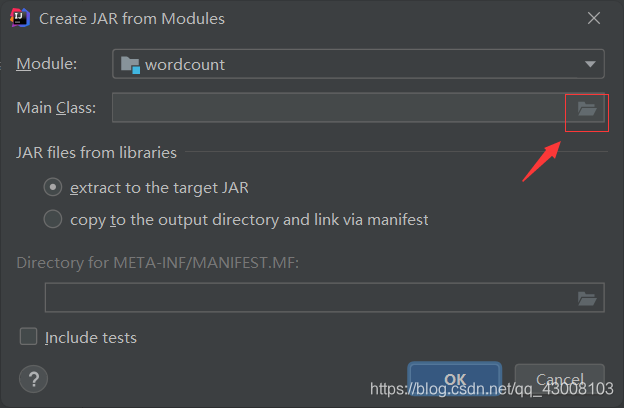

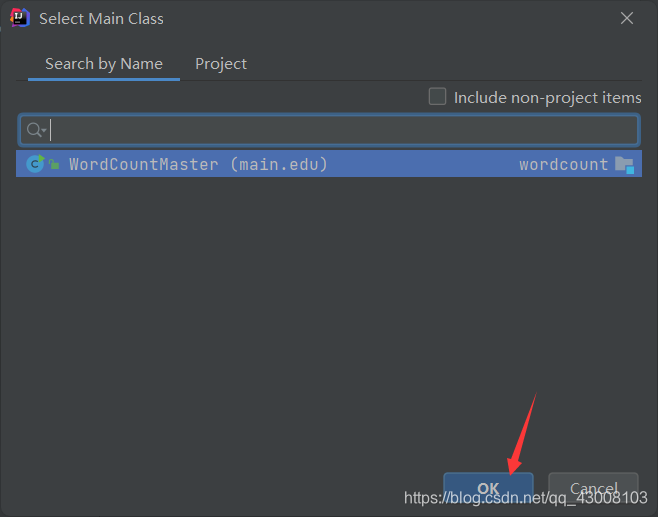

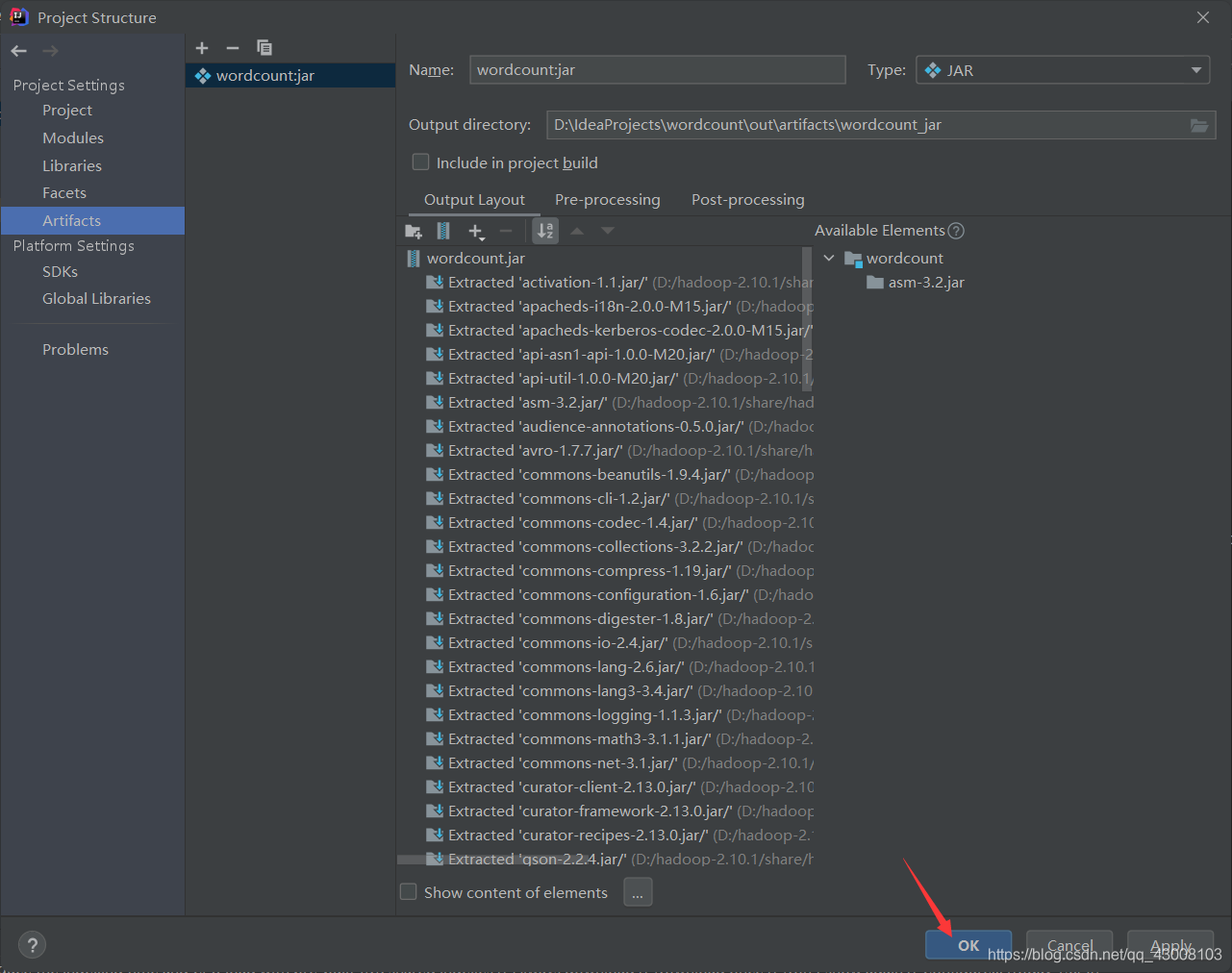

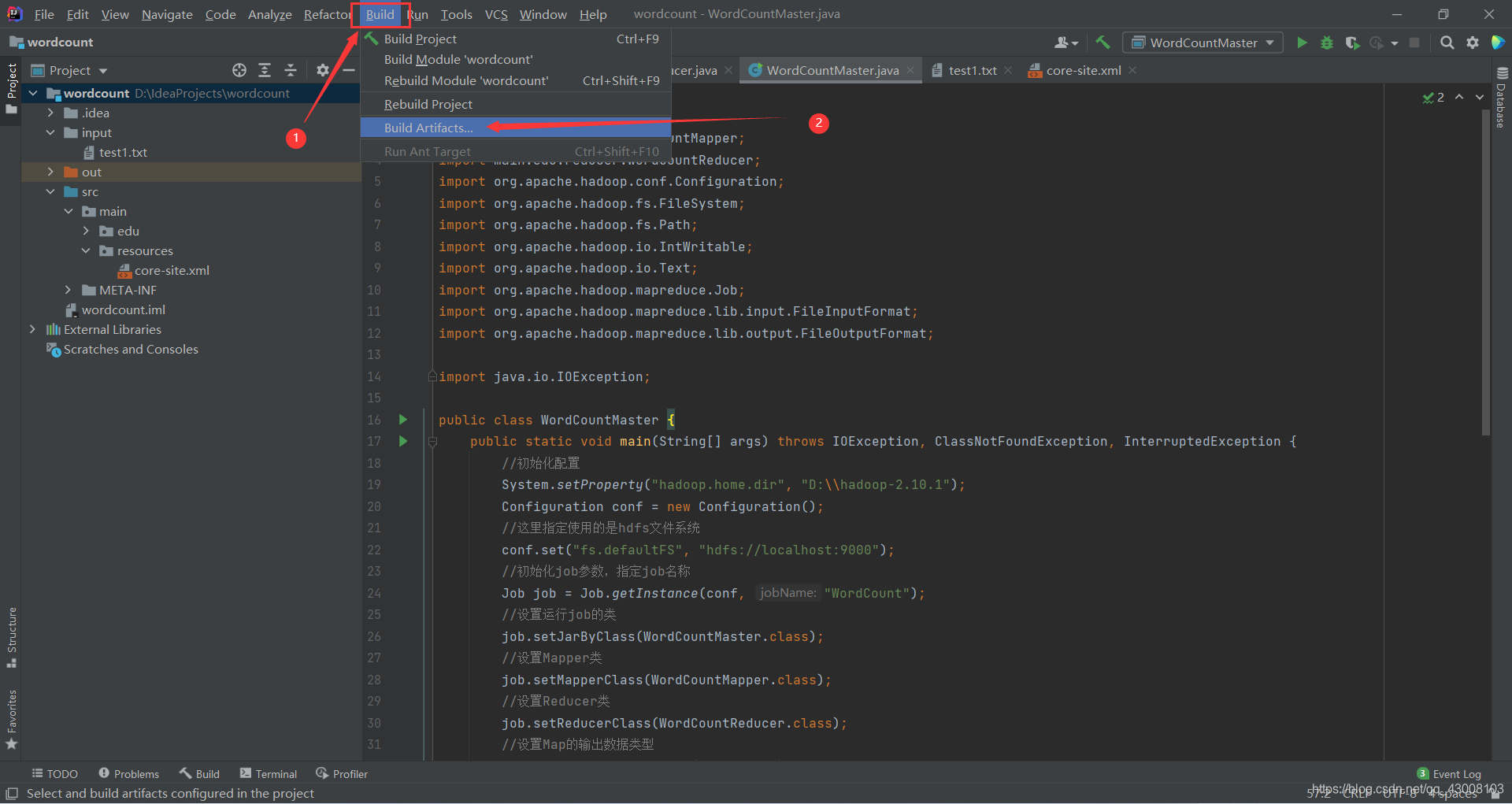

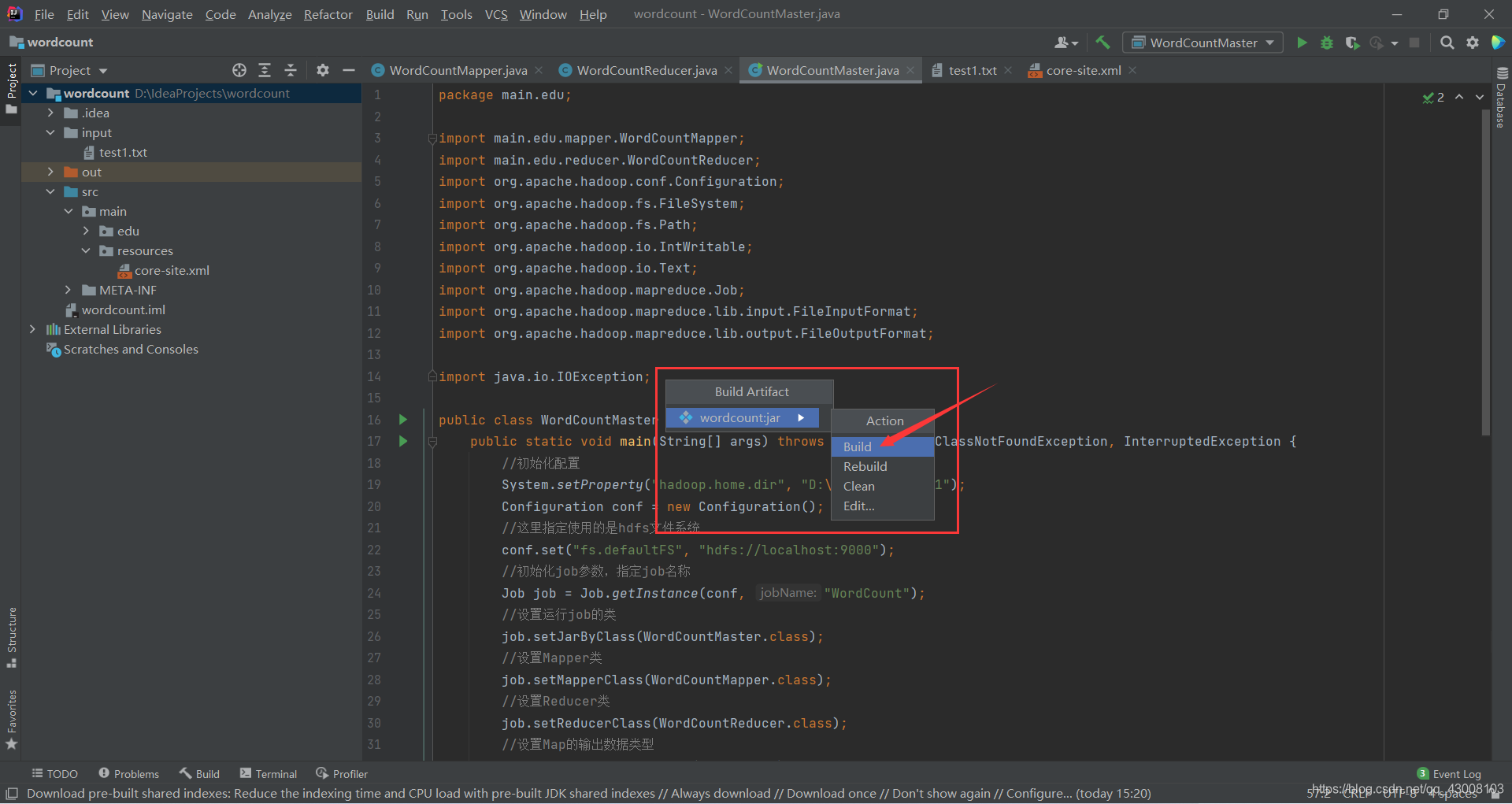

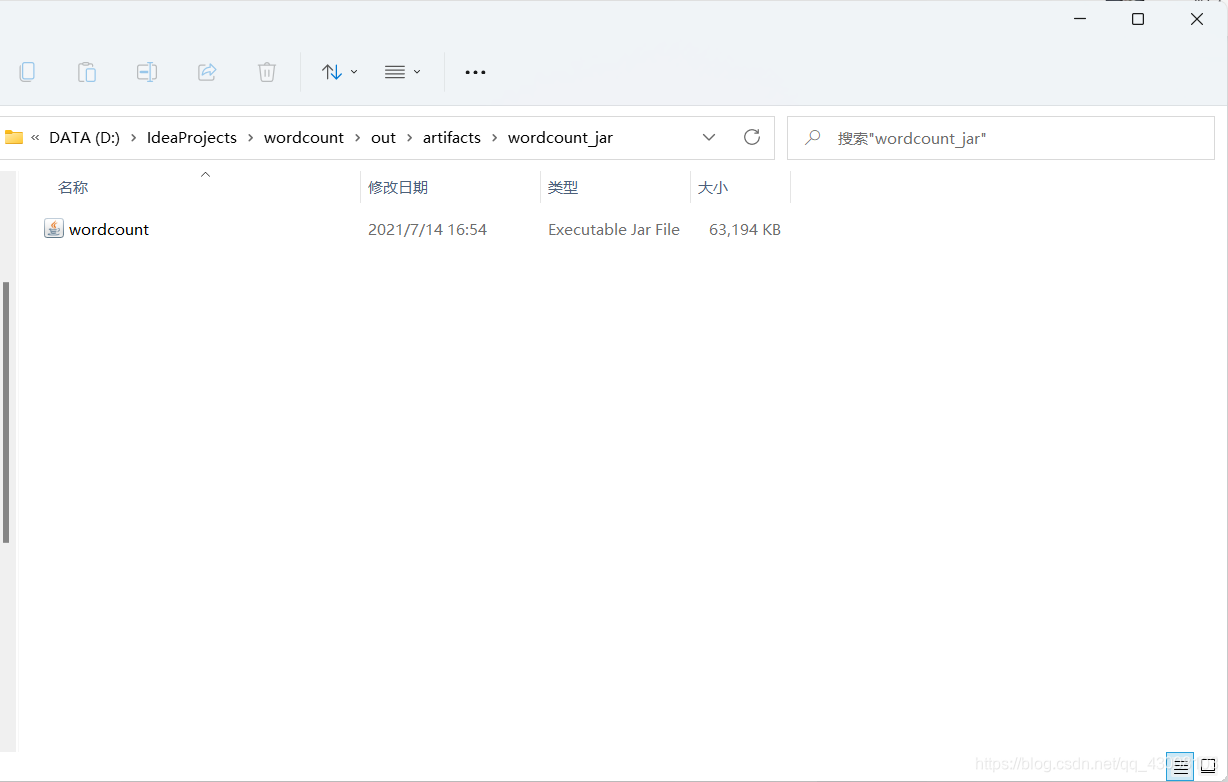

}打包jar

Packaging is complete.

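Use FileZilla to transfer the JAR file to `/usr/hadoop/hadoop-2.10.1` on the virtual machine.

If you don't know how, see my earlier tutorial on installing pseudo-distributed Hadoop. It covers transferring the JDK and Hadoop packages to the VM: https://blog.csdn.net/qq_43008103/article/details/118668959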

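Switch to the `hadoop` user and start the Hadoop services:

```
su hadoop
start-all.sh
mr-jobhistory-daemon.sh start historyserver
```

Run `jps` to check whether everything started:

```
jps
```

Create a `wordcount/input` directory in HDFS:

```
hadoop fs -mkdir /wordcount
hadoop fs -mkdir /wordcount/input
```

Upload the `README.txt` file from the Hadoop directory to HDFS; it will be the input for the word count:

```
cd /usr/hadoop/hadoop-2.10.1
hadoop fs -put README.txt /wordcount/input
```

Run the JAR: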

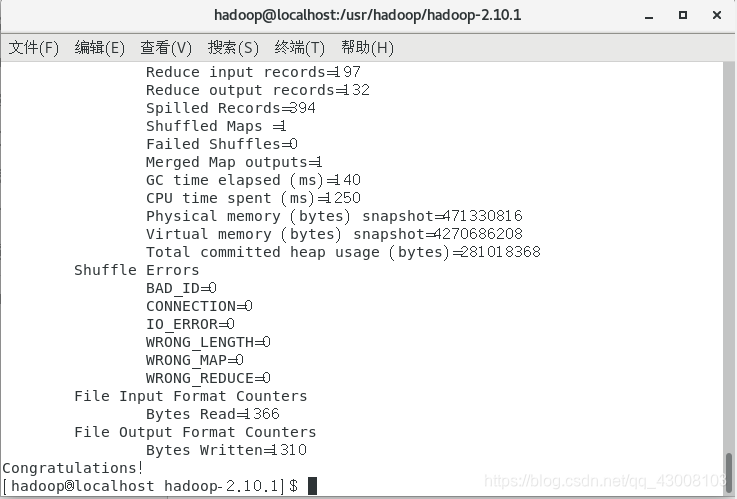

```
hadoop jar wordcount.jar wordcount
```

A successful run looks like this:

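List the `wordcount` directory in HDFS, and you'll see a new `output` directory:

```
hadoop fs -ls /wordcount
```

Look inside the `output` directory:

```
hadoop fs -ls /wordcount/output
```

The `part-r-00000` file in it holds the number of occurrences of each word in your input: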

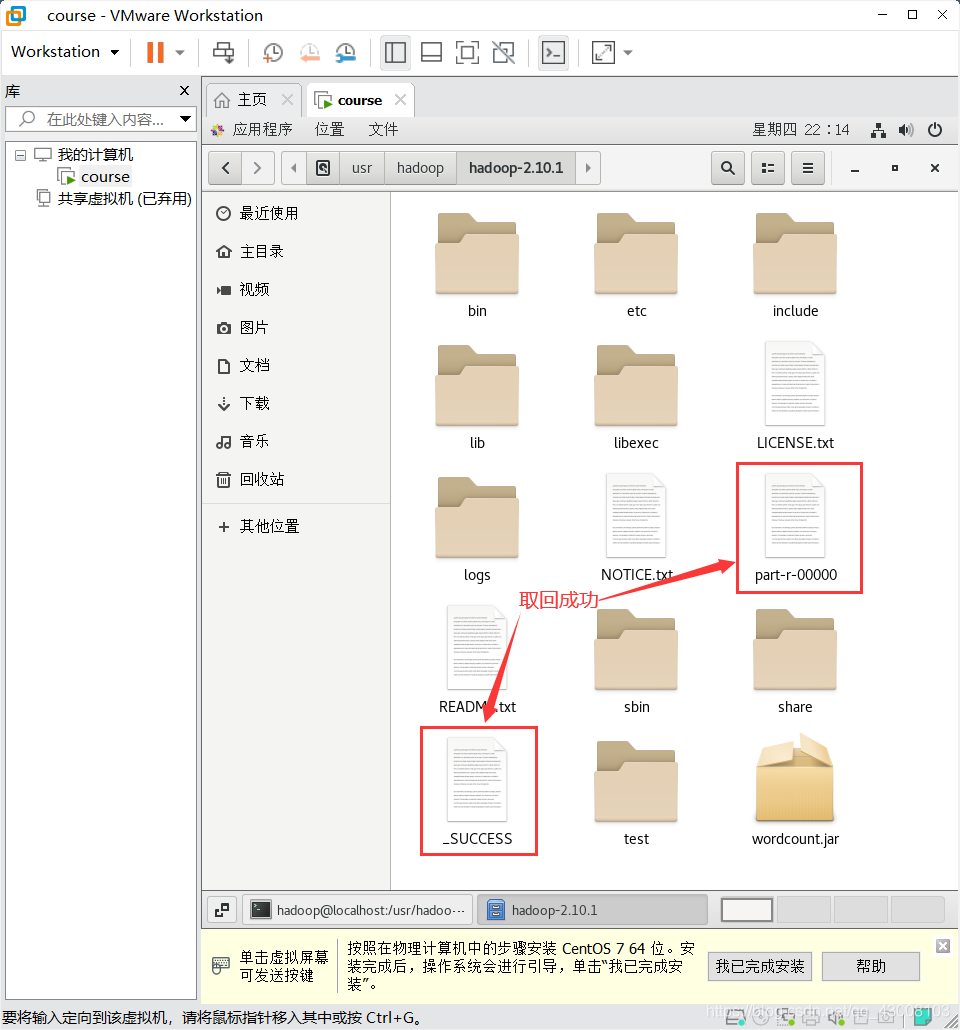

```
hadoop fs -cat /wordcount/output/part-r-00000
```

You can also copy the files in the `output` directory back to the local machine:

```
hadoop fs -get /wordcount/output/* .
```
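Once the files are local, you can post-process them with a sketch like this one (the class `MergeParts` is hypothetical). It reads every `part-r-*` file in a directory and merges their `word<TAB>count` lines. With a single reducer there is only `part-r-00000`. With several reducers, Hadoop's default hash partitioner sends each word to exactly one reducer, so each word appears in only one part file. The `_SUCCESS` marker file is ignored because it does not match the `part-r-*` pattern.

```java
import java.io.IOException;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Map;
import java.util.TreeMap;

public class MergeParts {

    // Reads every part-r-* file in dir and merges "word<TAB>count" lines into one sorted map.
    public static Map<String, Long> merge(Path dir) throws IOException {
        Map<String, Long> totals = new TreeMap<>();
        try (DirectoryStream<Path> parts = Files.newDirectoryStream(dir, "part-r-*")) {
            for (Path part : parts) {
                for (String line : Files.readAllLines(part)) {
                    int tab = line.lastIndexOf('\t');
                    if (tab < 0) {
                        continue; // not a key<TAB>value line
                    }
                    long n = Long.parseLong(line.substring(tab + 1).trim());
                    totals.merge(line.substring(0, tab), n, Long::sum);
                }
            }
        }
        return totals;
    }

    public static void main(String[] args) throws IOException {
        // The -get command above copies the files into the current directory, hence "." by default.
        Path dir = Paths.get(args.length > 0 ? args[0] : ".");
        Map<String, Long> totals = merge(dir);
        long sum = 0;
        for (long n : totals.values()) {
            sum += n;
        }
        System.out.println(totals.size() + " distinct words, " + sum + " words in total");
    }
}
```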
