Environment setup

- zookeeper-3.4.12.tar.gz

- kafka_2.12-2.1.1.tgz

- JDK 8 (install it yourself and configure the environment variables)

Download link

https://pan.baidu.com/s/12iHj1ya-8bu2r3NS1VoAcw

Extraction code: dw8j

Setup steps

1. Install ZooKeeper

- Extract the archive -> D:\java\zookeeper-3.4.12

- Create a data folder -> D:\java\zookeeper-3.4.12\conf\data

- Create the config file: copy conf\zoo_sample.cfg to conf\zoo.cfg

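- Open zoo.cfg and set dataDir. Use forward slashes: the file is parsed as a Java properties file, in which a backslash is an escape character.

dataDir=D:/java/zookeeper-3.4.12/conf/data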

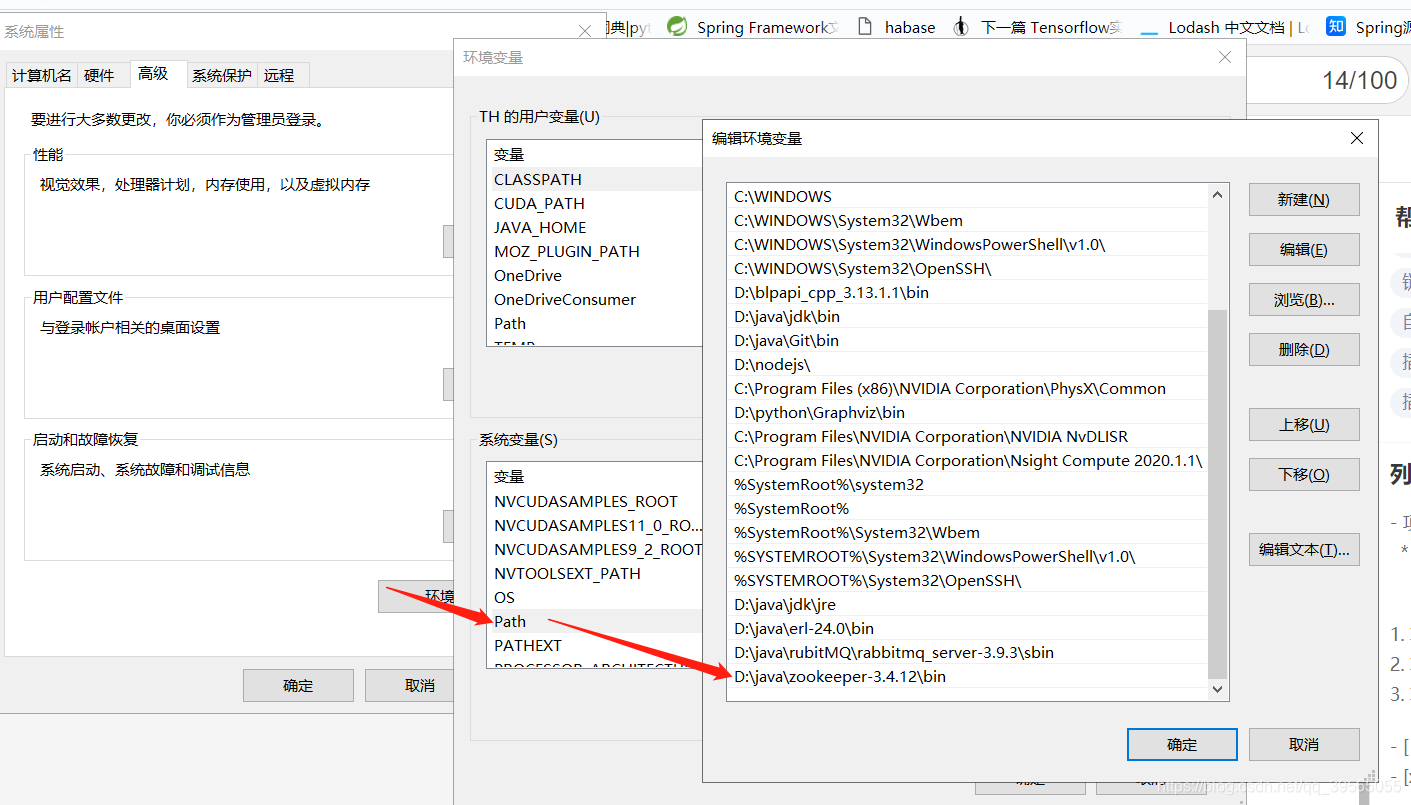

- Add D:\java\zookeeper-3.4.12\bin to the PATH environment variable

- Start ZooKeeper -> double-click D:\java\zookeeper-3.4.12\bin\zkServer.cmd
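Both zoo.cfg and Kafka's server.properties are loaded with `java.util.Properties`, which treats a backslash as an escape character. The sketch below shows what that does to a Windows path; `PropertiesPathDemo` and `parseValue` are hypothetical names used only for illustration, not part of ZooKeeper or Kafka.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Hypothetical helper for illustration; not part of ZooKeeper or Kafka.
final class PropertiesPathDemo {

    // Parses a single "key=value" line the way java.util.Properties does
    // and returns the value stored under the given key.
    static String parseValue(String line, String key) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(line));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props.getProperty(key);
    }

    public static void main(String[] args) {
        // Single backslashes in the file: the backslashes are dropped.
        System.out.println(parseValue(
                "dataDir=D:\\java\\zookeeper-3.4.12\\conf\\data", "dataDir"));
        // prints D:javazookeeper-3.4.12confdata

        // Forward slashes in the file: the path survives unchanged.
        System.out.println(parseValue(
                "dataDir=D:/java/zookeeper-3.4.12/conf/data", "dataDir"));
        // prints D:/java/zookeeper-3.4.12/conf/data
    }
}
```

Doubling each backslash in the file (`D:\\java\\...`) is the other common way to keep the path intact.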

2. Set up Kafka

- Extract the archive -> D:\java\kafka_2.12-2.1.1

- Create a logs folder -> D:\java\kafka_2.12-2.1.1\logs

- Open D:\java\kafka_2.12-2.1.1\config\server.properties and set log.dirs. Use forward slashes, because a backslash is an escape character in a properties file.

log.dirs=D:/java/kafka_2.12-2.1.1/logs

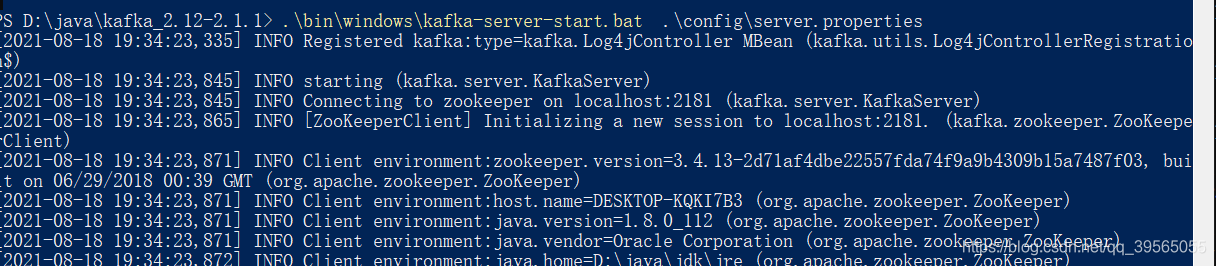

- Start Kafka (run the command from D:\java\kafka_2.12-2.1.1):

ZooKeeper must already be running before Kafka is started.

.\bin\windows\kafka-server-start.bat .\config\server.properties
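Since Kafka depends on ZooKeeper being up, a quick TCP check can confirm that each service is listening before moving on. This is only a minimal sketch: `PortCheck` is a hypothetical helper, and it assumes the default ports used elsewhere in this post (2181 for ZooKeeper, 9092 for Kafka). A successful connection shows that something is listening on the port, not that the service is healthy.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical helper for illustration; not part of ZooKeeper or Kafka.
final class PortCheck {

    // Returns true if a TCP connection to host:port succeeds within the timeout.
    static boolean isListening(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Connection refused or timed out: nothing is reachable on that port.
            return false;
        }
    }

    public static void main(String[] args) {
        // Assumed default ports; adjust if zoo.cfg or server.properties differ.
        System.out.println("ZooKeeper (2181) listening: " + isListening("localhost", 2181, 2000));
        System.out.println("Kafka (9092) listening: " + isListening("localhost", 9092, 2000));
    }
}
```

Run it once after starting ZooKeeper and again after starting Kafka; the second line should only report true once the broker has finished starting.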

3. Create a Maven project for testing:

- Dependencies

<!-- Kafka -->

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-clients</artifactId>

<version>0.8.2.1</version>

</dependency>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka_2.11</artifactId>

<version>0.8.2.1</version>

</dependency>

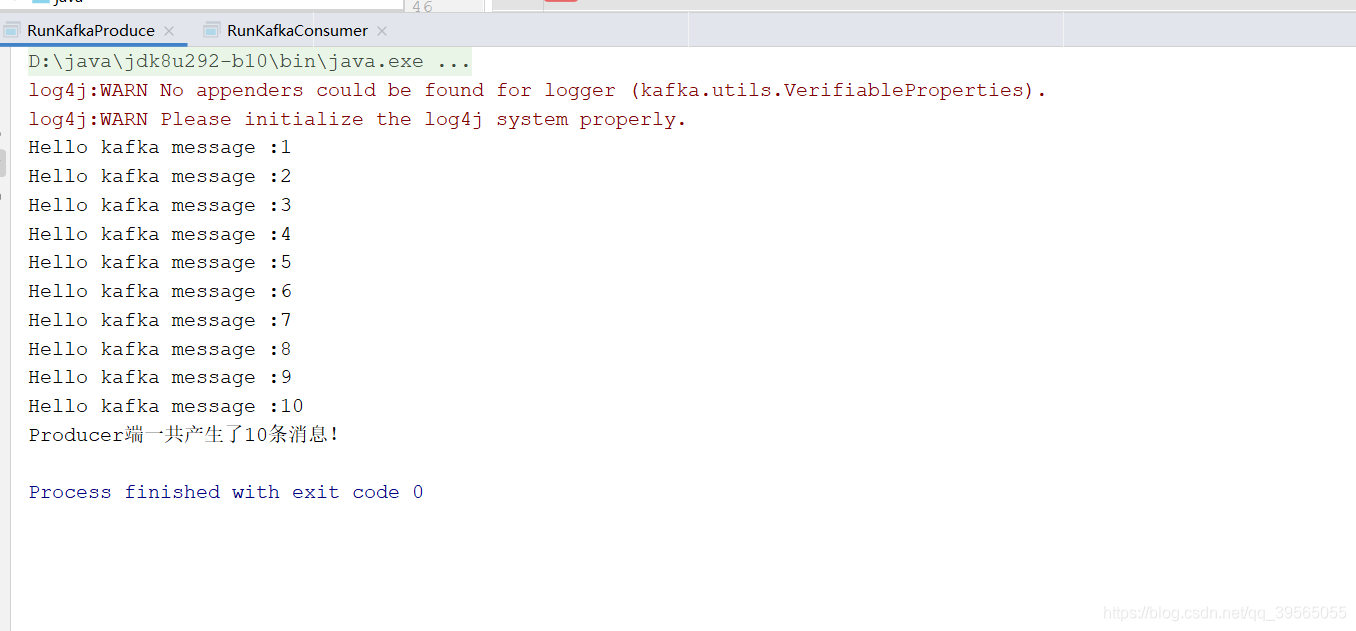

- Create the producer

import kafka.javaapi.producer.Producer;

import kafka.producer.KeyedMessage;

import kafka.producer.ProducerConfig;

import java.util.Properties;

public class RunKafkaProduce {

private final Producer<String, String> producer;

public final static String TOPIC = "logstest";

private RunKafkaProduce() {

Properties props = new Properties();

// Kafka broker list, as host:port

props.put("metadata.broker.list", "localhost:9092");

// Serializer class for message values

props.put("serializer.class", "kafka.serializer.StringEncoder");

// Serializer class for message keys

props.put("key.serializer.class", "kafka.serializer.StringEncoder");

//request.required.acks

//0, which means that the producer never waits for an acknowledgement from the broker (the same behavior as 0.7). This option provides the lowest latency but the weakest durability guarantees (some data will be lost when a server fails).

//1, which means that the producer gets an acknowledgement after the leader replica has received the data. This option provides better durability as the client waits until the server acknowledges the request as successful (only messages that were written to the now-dead leader but not yet replicated will be lost).

//-1, which means that the producer gets an acknowledgement after all in-sync replicas have received the data. This option provides the best durability, we guarantee that no messages will be lost as long as at least one in sync replica remains.

props.put("request.required.acks", "-1");

producer = new Producer<String, String>(new ProducerConfig(props));

}

void produce() {

int messageNo = 1;

final int COUNT = 11;

int messageCount = 0;

while (messageNo < COUNT) {

String key = String.valueOf(messageNo);

String data = "Hello kafka message :" + key;

producer.send(new KeyedMessage<String, String>(TOPIC, key, data));

System.out.println(data);

messageNo++;

messageCount++;

}

System.out.println("The producer sent " + messageCount + " messages in total!");

// Release the producer's connections once everything has been sent

producer.close();

}

public static void main(String[] args) {

new RunKafkaProduce().produce();

}

}

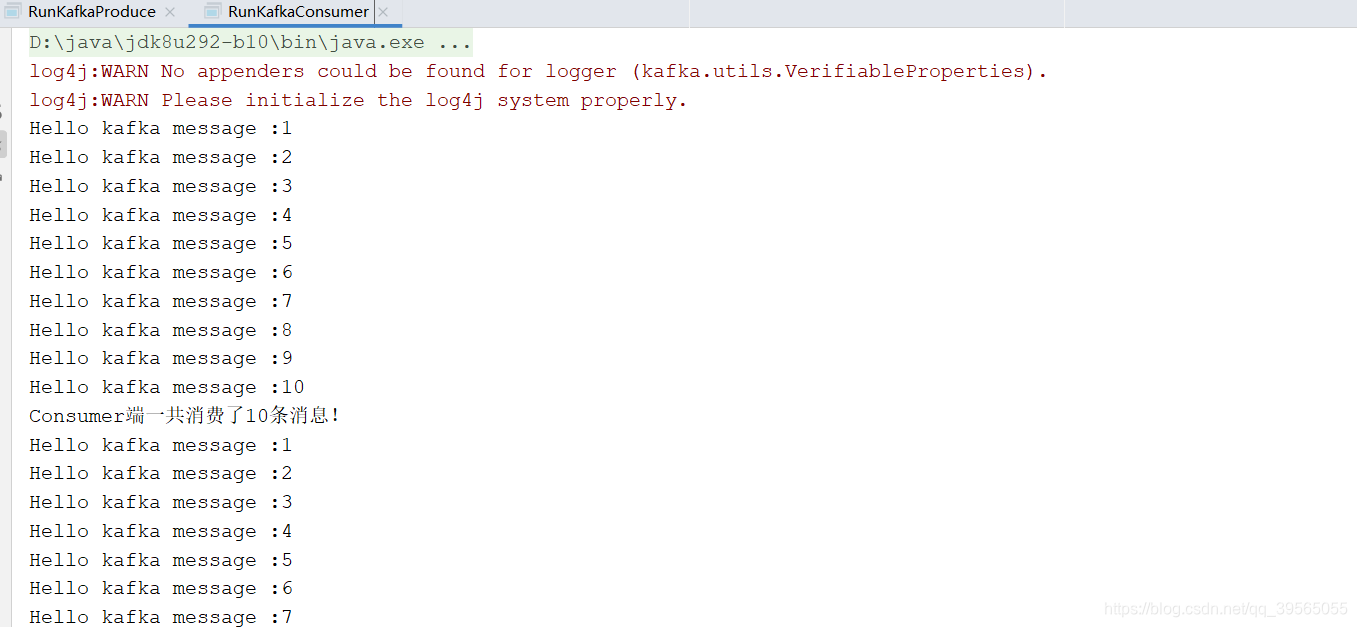
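The `request.required.acks` comment in the producer describes three durability levels. One way to make that choice explicit is to build the producer properties through a small helper. This is a sketch only: `ProducerSettings` and its `Acks` enum are hypothetical names, while the property keys and values are the ones used in the producer above.

```java
import java.util.Properties;

// Hypothetical helper for illustration; not part of the Kafka API.
final class ProducerSettings {

    // The three acknowledgement levels described in the producer's comments.
    enum Acks {
        NONE("0"),          // never wait for the broker: lowest latency, weakest durability
        LEADER("1"),        // wait until the leader replica has received the data
        ALL_IN_SYNC("-1");  // wait until all in-sync replicas have received the data

        final String value;

        Acks(String value) {
            this.value = value;
        }
    }

    // Builds the same properties as the RunKafkaProduce constructor,
    // with the broker list and acks level passed in.
    static Properties build(String brokerList, Acks acks) {
        Properties props = new Properties();
        props.put("metadata.broker.list", brokerList);
        props.put("serializer.class", "kafka.serializer.StringEncoder");
        props.put("key.serializer.class", "kafka.serializer.StringEncoder");
        props.put("request.required.acks", acks.value);
        return props;
    }

    public static void main(String[] args) {
        Properties props = build("localhost:9092", Acks.ALL_IN_SYNC);
        System.out.println(props.getProperty("request.required.acks")); // prints -1
    }
}
```

The resulting `Properties` object could be passed to `new ProducerConfig(props)` exactly as in the producer above.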

- Create the consumer

import kafka.consumer.ConsumerConfig;

import kafka.consumer.ConsumerIterator;

import kafka.consumer.KafkaStream;

import kafka.javaapi.consumer.ConsumerConnector;

import kafka.serializer.StringDecoder;

import kafka.utils.VerifiableProperties;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import java.util.Properties;

public class RunKafkaConsumer {

private final ConsumerConnector consumer;

private final static String TOPIC = "logstest";

private RunKafkaConsumer() {

Properties props = new Properties();

// ZooKeeper connection string

props.put("zookeeper.connect", "localhost:2181");

// Consumer group id

props.put("group.id", "logstest");

// ZooKeeper session timeout, in milliseconds

props.put("zookeeper.session.timeout.ms", "50000");

props.put("zookeeper.sync.time.ms", "200");

props.put("auto.commit.interval.ms", "1000");

props.put("auto.offset.reset", "smallest");

props.put("serializer.class", "kafka.serializer.StringEncoder");

ConsumerConfig config = new ConsumerConfig(props);

consumer = kafka.consumer.Consumer.createJavaConsumerConnector(config);

}

void consume() {

Map<String, Integer> topicCountMap = new HashMap<String, Integer>();

topicCountMap.put(TOPIC, 1);

StringDecoder keyDecoder = new StringDecoder(new VerifiableProperties());

StringDecoder valueDecoder = new StringDecoder(new VerifiableProperties());

Map<String, List<KafkaStream<String, String>>> consumerMap = consumer.createMessageStreams(topicCountMap, keyDecoder, valueDecoder);

KafkaStream<String, String> stream = consumerMap.get(TOPIC).get(0);

ConsumerIterator<String, String> it = stream.iterator();

int messageCount = 0;

while (it.hasNext()) {

System.out.println(it.next().message());

messageCount++;

if (messageCount == 10) {

System.out.println("The consumer received " + messageCount + " messages in total!");

}

}

}

public static void main(String[] args) {

new RunKafkaConsumer().consume();

}

}

- Output