
I. Mapping (field mappings)

- ①. A mapping plays the role that a table schema plays in a relational database. In Elasticsearch, a mapping defines a document: the fields it contains and, for each field, its type, analyzer, and other attributes.

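- ②. Mappings are either dynamic or static (explicit).

1. Dynamic mapping: a relational database requires you to create the database, then create a table in it, and only then insert rows. Elasticsearch does not require a mapping to be defined in advance: when a document is written, the type of each field is detected automatically from its value. This mechanism is called dynamic mapping.

2. Static mapping: you can also define the mapping in advance, listing each field of the document and its type. This is called static (explicit) mapping.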
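To make dynamic mapping concrete, here is a minimal Python sketch of the default type detection. It is a simplified model, not Elasticsearch code: it ignores date and numeric detection inside strings, it leaves out arrays, and the names `infer_field` and `infer_mapping` are made up for illustration. The rules it models (JSON integer to `long`, floating-point number to `float`, string to `text` with a `keyword` sub-field) agree with the `bank` mapping shown later in these notes.

```python
def infer_field(value):
    """Return roughly what default dynamic mapping would produce for one value."""
    if isinstance(value, bool):          # check bool first: bool is a subclass of int
        return {"type": "boolean"}
    if isinstance(value, int):
        return {"type": "long"}
    if isinstance(value, float):
        return {"type": "float"}
    if isinstance(value, str):
        return {
            "type": "text",
            "fields": {"keyword": {"type": "keyword", "ignore_above": 256}},
        }
    if isinstance(value, dict):          # inner object: map its fields recursively
        return {"properties": infer_mapping(value)}
    raise TypeError(f"value not covered by this sketch: {value!r}")


def infer_mapping(document):
    """Infer a mapping for every non-null field of a JSON-like document."""
    return {name: infer_field(v) for name, v in document.items() if v is not None}


doc = {"account_number": 1, "firstname": "Amber", "balance": 39225}
mapping = infer_mapping(doc)
print(mapping["account_number"])     # {'type': 'long'}
print(mapping["firstname"]["type"])  # text
```

A null value adds no field to the mapping, which is why `None` is skipped.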

II. Data types

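- ①. String types

| Type | Description |
|---|---|
| text | Use `text` for fields that need full-text search, such as the body of an email or a product description. The content of a `text` field is analyzed: before the inverted index is built, an analyzer splits the string into individual terms. `text` fields are not used for sorting and are rarely used for aggregations. |
| keyword | Use `keyword` for structured content such as email addresses, hostnames, status codes, and tags, that is, for fields you need to filter on (for example, finding the blog posts whose `status` is `published`), sort by, or aggregate on. A `keyword` field can only be found by its exact value. |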
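The difference between the two string types can be illustrated with a toy inverted index. This is only a sketch: `analyze` is a crude stand-in for a real analyzer (lowercase, split on non-alphanumerics), and the second address is made-up sample data.

```python
import re
from collections import defaultdict


def analyze(text):
    """Rough stand-in for an analyzer: lowercase, split on non-alphanumerics."""
    return [t for t in re.split(r"[^0-9a-z]+", text.lower()) if t]


def build_index(docs, as_text):
    """Map each indexed term to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, value in docs.items():
        # text: one term per word; keyword: the whole value is a single term
        terms = analyze(value) if as_text else [value]
        for term in terms:
            index[term].add(doc_id)
    return index


addresses = {1: "880 Holmes Lane", 2: "671 Bristol Street"}
text_index = build_index(addresses, as_text=True)
keyword_index = build_index(addresses, as_text=False)

print(text_index.get("holmes", set()))              # {1}
print(keyword_index.get("holmes", set()))           # set()
print(keyword_index.get("880 Holmes Lane", set()))  # {1}
```

A single word finds the document through the `text` index, while the `keyword` index only answers to the complete original value.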

- ②. Integer types

| Type | Range |
|---|---|
| byte | -128 to 127 |
| short | -32768 to 32767 |
| integer | -2^31 to 2^31 - 1 |
| long | -2^63 to 2^63 - 1 |
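The ranges in the table follow from the bit width of each type. A small Python sketch (the helper names are illustrative) derives them and picks the narrowest type that can hold a given range of values:

```python
INTEGER_TYPES = {"byte": 8, "short": 16, "integer": 32, "long": 64}  # width in bits


def value_range(bits):
    """Range of a signed two's-complement integer with the given width."""
    return -(2 ** (bits - 1)), 2 ** (bits - 1) - 1


def smallest_type(low, high):
    """Narrowest integer type whose range covers [low, high]."""
    for name, bits in INTEGER_TYPES.items():  # dicts keep insertion order
        lo, hi = value_range(bits)
        if lo <= low and high <= hi:
            return name
    raise ValueError("range does not fit in a 64-bit long")


print(value_range(8))           # (-128, 127)
print(smallest_type(0, 150))    # short
print(smallest_type(0, 39225))  # integer
```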

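- ③. Floating-point types

| Type | Description |
|---|---|
| double | 64-bit double-precision floating-point number |
| float | 32-bit single-precision floating-point number |
| half_float | 16-bit half-precision floating-point number |
| scaled_float | Floating-point number stored as a `long`, scaled by a fixed `scaling_factor` |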
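As a rough illustration of how `scaled_float` works: the value is multiplied by the field's `scaling_factor` and rounded to the nearest integer when it is indexed. The Python helpers below are a sketch of that arithmetic only; their names are made up.

```python
import math


def encode_scaled_float(value, scaling_factor):
    """Multiply by the factor and round to the nearest integer (halves round up)."""
    return math.floor(value * scaling_factor + 0.5)


def decode_scaled_float(stored, scaling_factor):
    """Recover the (possibly rounded) value from the stored integer."""
    return stored / scaling_factor


stored = encode_scaled_float(12.34, 100)
print(stored)                            # 1234
print(decode_scaled_float(stored, 100))  # 12.34
print(decode_scaled_float(encode_scaled_float(12.345678, 100), 100))  # 12.35
```

With a factor of 100 anything beyond two decimal places is lost, which is the trade-off for the compact integer storage.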

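- ④. date type. A date value can be given in any of the following forms:

- a formatted date string, such as "2018-01-13" or "2018-01-13 12:10:30"

- a long holding milliseconds since the epoch (1970-01-01)

- an integer holding seconds since the epoch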
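Whatever form is supplied, Elasticsearch stores a date internally as milliseconds since the epoch in UTC. The sketch below normalizes the three forms listed above in plain Python. Two assumptions: strings are interpreted as UTC, and the caller states whether a number is in seconds or milliseconds. In Elasticsearch itself, which string and numeric forms a field accepts is governed by the field's `format` setting.

```python
from datetime import datetime, timezone


def to_epoch_millis(value, unit="ms"):
    """Normalize a date string or epoch number to milliseconds since 1970-01-01 UTC."""
    if isinstance(value, str):
        for fmt in ("%Y-%m-%d %H:%M:%S", "%Y-%m-%d"):
            try:
                parsed = datetime.strptime(value, fmt).replace(tzinfo=timezone.utc)
            except ValueError:
                continue  # try the next pattern
            return int(parsed.timestamp()) * 1000
        raise ValueError(f"unrecognized date string: {value!r}")
    return value * 1000 if unit == "s" else value


print(to_epoch_millis("2018-01-13"))           # 1515801600000
print(to_epoch_millis("2018-01-13 12:10:30"))  # 1515845430000
print(to_epoch_millis(1515801600, unit="s"))   # 1515801600000
```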

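- ⑤. boolean type: a logical value; accepts true or false.

- ⑥. binary type: a binary field holds binary data, such as an image, encoded as a Base64 string. By default the value is kept with the document but not indexed, so it cannot be searched. In Elasticsearch 7.x the only mapping parameters it accepts are `doc_values` and `store`.

- ⑦. array type

- ⑧. object type: JSON objects are hierarchical, so a document can contain nested inner objects.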
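Inner objects are indexed as a flat list of key-value pairs whose names are the dotted paths to each leaf. The following Python sketch shows that flattening; the sample document and the function name `flatten` are illustrative.

```python
def flatten(obj, prefix=""):
    """Flatten nested dicts into {'outer.inner': value} pairs."""
    flat = {}
    for key, value in obj.items():
        path = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=path + "."))
        else:
            flat[path] = value
    return flat


doc = {"region": "US", "manager": {"age": 30, "name": {"first": "John", "last": "Smith"}}}
print(flatten(doc))
# {'region': 'US', 'manager.age': 30, 'manager.name.first': 'John', 'manager.name.last': 'Smith'}
```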

III. Viewing and creating mappings

- ①. View the mapping: GET bank/_mapping

{

"bank" : {

"mappings" : {

"properties" : {

"account_number" : {

"type" : "long" # long type

},

"address" : {

"type" : "text", # text type: analyzed (tokenized) for full-text search

"fields" : {

"keyword" : { # sub-field address.keyword

"type" : "keyword", # matched only by the exact, whole value

"ignore_above" : 256

}

}

},

"age" : {

"type" : "long"

},

"balance" : {

"type" : "long"

},

"city" : {

"type" : "text",

"fields" : {

"keyword" : {

"type" : "keyword",

"ignore_above" : 256

}

}

},

"email" : {

"type" : "text",

"fields" : {

"keyword" : {

"type" : "keyword",

"ignore_above" : 256

}

}

},

"employer" : {

"type" : "text",

"fields" : {

"keyword" : {

"type" : "keyword",

"ignore_above" : 256

}

}

},

"firstname" : {

"type" : "text",

"fields" : {

"keyword" : {

"type" : "keyword",

"ignore_above" : 256

}

}

},

"gender" : {

"type" : "text",

"fields" : {

"keyword" : {

"type" : "keyword",

"ignore_above" : 256

}

}

},

"lastname" : {

"type" : "text",

"fields" : {

"keyword" : {

"type" : "keyword",

"ignore_above" : 256

}

}

},

"state" : {

"type" : "text",

"fields" : {

"keyword" : {

"type" : "keyword",

"ignore_above" : 256

}

}

}

}

}

}

}

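- ②. Change in newer versions: Elasticsearch 7 removes the concept of types

- In a relational database two tables are independent: even if they have columns with the same name, neither affects the other. Elasticsearch does not work that way. It is built on Lucene, and fields that have the same name in different types of one index are handled as the same field by Lucene.

(1). Two `user_name` fields under two different types in the same index are in fact treated as one field, so you must define the same field mapping in both types. Otherwise the same-named fields conflict during processing and Lucene becomes less efficient.

(2). Types were removed to make Elasticsearch process data more efficiently.

- Elasticsearch 7.x: the type parameter in the URL is optional. For example, indexing a document no longer requires a document type.

- Elasticsearch 8.x: the type parameter in the URL is no longer supported.

- Solution:

Migrate each multi-type index to single-type indices, giving the documents of each type their own index.

Move all the typed data under the existing index to the target index. See the data migration section.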
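One way to picture the "one index per type" migration is as a series of `_reindex` requests, one per type. The Python sketch below only builds the request bodies. The index and type names are hypothetical, and naming a `type` in the reindex source is deprecated in 7.x, so treat this as an illustration of the idea rather than a recommended recipe.

```python
import json


def split_by_type(index, type_to_new_index):
    """Build one _reindex body per mapping type of a multi-type index."""
    return [
        {"source": {"index": index, "type": doc_type}, "dest": {"index": new_index}}
        for doc_type, new_index in type_to_new_index.items()
    ]


# Hypothetical multi-type index "twitter" with types "user" and "tweet".
for body in split_by_type("twitter", {"user": "users", "tweet": "tweets"}):
    print("POST _reindex")
    print(json.dumps(body, indent=2))
```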

- ③. Create a mapping: PUT /my_index

PUT /my_index

{

"mappings": {

"properties": {

"age": {

"type": "integer"

},

"email": {

"type": "keyword" # mapped as keyword (exact values only)

},

"name": {

"type": "text" # full-text field: analyzed when indexed, and queries are analyzed and matched against the terms

}

}

}

}

Output:

{

"acknowledged" : true,

"shards_acknowledged" : true,

"index" : "my_index"

}

View the mapping: GET /my_index

Output:

{

"my_index" : {

"aliases" : { },

"mappings" : {

"properties" : {

"age" : {

"type" : "integer"

},

"email" : {

"type" : "keyword"

},

"employee-id" : {

"type" : "keyword",

"index" : false

},

"name" : {

"type" : "text"

}

}

},

"settings" : {

"index" : {

"creation_date" : "1588410780774",

"number_of_shards" : "1",

"number_of_replicas" : "1",

"uuid" : "ua0lXhtkQCOmn7Kh3iUu0w",

"version" : {

"created" : "7060299"

},

"provided_name" : "my_index"

}

}

}

}

Add a new field mapping: PUT /my_index/_mapping

PUT /my_index/_mapping

{

"properties": {

"employee-id": {

"type": "keyword",

"index": false # the field is not indexed, so it cannot be searched

}

}

}

Here "index": false means the new field is not indexed and cannot be searched; it is only carried along as redundant data.

- ④. Mappings cannot be updated: the mapping of a field that already exists cannot be changed. To change it, create a new index with the correct mapping and migrate the data.
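Since an existing field cannot be remapped in place, a type change always comes down to two requests: create a new index with the corrected mapping, then reindex into it. The Python sketch below builds both request bodies. The helper name is made up, and the `bank`/`newbank` names follow the example used in the data migration section.

```python
import copy


def plan_field_type_change(old_index, new_index, old_properties, field, new_type):
    """Return (create_body, reindex_body) for remapping `field` to `new_type`."""
    properties = copy.deepcopy(old_properties)  # leave the caller's mapping untouched
    properties[field] = {"type": new_type}
    create_body = {"mappings": {"properties": properties}}   # body for PUT /<new_index>
    reindex_body = {"source": {"index": old_index}, "dest": {"index": new_index}}  # body for POST _reindex
    return create_body, reindex_body


old = {"age": {"type": "long"}, "email": {"type": "keyword"}}
create_body, reindex_body = plan_field_type_change("bank", "newbank", old, "age", "integer")
print(create_body["mappings"]["properties"]["age"])  # {'type': 'integer'}
print(reindex_body)  # {'source': {'index': 'bank'}, 'dest': {'index': 'newbank'}}
```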

IV. Data migration

- ①. First create new_twitter with the correct mapping, then migrate the data as follows.

Syntax for 6.0 and later:

POST _reindex

{

"source":{

"index":"twitter"

},

"dest":{

"index":"new_twitter"

}

}

Syntax for older versions (the source also names the type):

POST _reindex

{

"source":{

"index":"twitter",

"type":"twitter"

},

"dest":{

"index":"new_twitter"

}

}

- ②. Example: the documents originally have the type account; newer versions have no types, so we drop it.

GET /bank/_search

{

"took" : 0,

"timed_out" : false,

"_shards" : {

"total" : 1,

"successful" : 1,

"skipped" : 0,

"failed" : 0

},

"hits" : {

"total" : {

"value" : 1000,

"relation" : "eq"

},

"max_score" : 1.0,

"hits" : [

{

"_index" : "bank",

"_type" : "account", // the original type is account; newer versions have no types, so we drop it

"_id" : "1",

"_score" : 1.0,

"_source" : {

"account_number" : 1,

"balance" : 39225,

"firstname" : "Amber",

"lastname" : "Duke",

"age" : 32,

"gender" : "M",

"address" : "880 Holmes Lane",

"employer" : "Pyrami",

"email" : "amberduke@pyrami.com",

"city" : "Brogan",

"state" : "IL"

}

},

...

GET /bank/_mapping

Result:

"age":{"type":"long"}

- ③. To change age to integer, first create a new index

PUT /newbank

{

"mappings": {

"properties": {

"account_number": {

"type": "long"

},

"address": {

"type": "text"

},

"age": {

"type": "integer"

},

"balance": {

"type": "long"

},

"city": {

"type": "keyword"

},

"email": {

"type": "keyword"

},

"employer": {

"type": "keyword"

},

"firstname": {

"type": "text"

},

"gender": {

"type": "keyword"

},

"lastname": {

"type": "text",

"fields": {

"keyword": {

"type": "keyword",

"ignore_above": 256

}

}

},

"state": {

"type": "keyword"

}

}

}

}

View the mapping of newbank:

GET /newbank/_mapping

The mapping type of age is now integer:

"age":{"type":"integer"}

- ④. Migrate the data from bank to newbank

POST _reindex

{

"source": {

"index": "bank",

"type": "account"

},

"dest": {

"index": "newbank"

}

}

Output:

#! Deprecation: [types removal] Specifying types in reindex requests is deprecated.

{

"took" : 768,

"timed_out" : false,

"total" : 1000,

"updated" : 0,

"created" : 1000,

"deleted" : 0,

"batches" : 1,

"version_conflicts" : 0,

"noops" : 0,

"retries" : {

"bulk" : 0,

"search" : 0

},

"throttled_millis" : 0,

"requests_per_second" : -1.0,

"throttled_until_millis" : 0,

"failures" : [ ]

}
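A reindex response like the one above can be checked programmatically. The Python sketch below is a deliberately strict check under the assumption that every source document is expected to be written; the function name is illustrative, and the sample dictionary copies the relevant fields of the output shown above.

```python
def reindex_succeeded(response):
    """True when every source document was written and nothing failed."""
    written = response["created"] + response["updated"]
    return (
        not response["timed_out"]
        and not response["failures"]
        and response["version_conflicts"] == 0
        and written == response["total"]
    )


response = {
    "took": 768, "timed_out": False, "total": 1000, "updated": 0,
    "created": 1000, "deleted": 0, "version_conflicts": 0, "noops": 0,
    "failures": [],
}
print(reindex_succeeded(response))  # True
```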

- ⑤. View the data in newbank

GET /newbank/_search

Result:

"hits" : {

"total" : {

"value" : 1000,

"relation" : "eq"

},

"max_score" : 1.0,

"hits" : [

{

"_index" : "newbank",

"_type" : "_doc", # the custom type is gone

V. The ik_max_word and ik_smart analyzers

- ①. A tokenizer receives a stream of characters, splits it into individual tokens (usually single words), and outputs a stream of tokens.

For example, the whitespace tokenizer splits text whenever it meets a whitespace character. It splits the text "Quick brown fox!" into [Quick, brown, fox!].

The tokenizer is also responsible for recording the order or position of each term (used for phrase and word proximity queries) and the start and end character offsets of the original word that each term represents (used to highlight search matches).

Elasticsearch provides many built-in tokenizers, which can be used to build custom analyzers.
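The behaviour described above can be imitated in a few lines of Python. This is a sketch of the idea, not Elasticsearch's implementation; the output fields are named after the ones the `_analyze` API returns.

```python
import re


def whitespace_tokenize(text):
    """Split on whitespace, recording each token's offsets and position."""
    return [
        {"token": m.group(), "start_offset": m.start(), "end_offset": m.end(), "position": i}
        for i, m in enumerate(re.finditer(r"\S+", text))
    ]


for token in whitespace_tokenize("Quick brown fox!"):
    print(token)
# {'token': 'Quick', 'start_offset': 0, 'end_offset': 5, 'position': 0}
# {'token': 'brown', 'start_offset': 6, 'end_offset': 11, 'position': 1}
# {'token': 'fox!', 'start_offset': 12, 'end_offset': 16, 'position': 2}
```

The offsets are what make highlighting possible: slicing the original text with `start_offset` and `end_offset` returns exactly the matched word.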

About analyzers: https://www.elastic.co/guide/en/elasticsearch/reference/7.6/analysis.html

Note: for Chinese text we need to install an additional analyzer (set the vagrant VM memory to 4G).

POST _analyze

{

"analyzer": "standard",

"text": "The 2 Brown-Foxes bone."

}

Result:

{

"tokens" : [

{

"token" : "the",

"start_offset" : 0,

"end_offset" : 3,

"type" : "<ALPHANUM>",

"position" : 0

},

{

"token" : "2",

"start_offset" : 4,

"end_offset" : 5,

"type" : "<NUM>",

"position" : 1

},

{

"token" : "brown",

"start_offset" : 6,

"end_offset" : 11,

"type" : "<ALPHANUM>",

"position" : 2

},

{

"token" : "foxes",

"start_offset" : 12,

"end_offset" : 17,

"type" : "<ALPHANUM>",

"position" : 3

},

{

"token" : "bone",

"start_offset" : 18,

"end_offset" : 22,

"type" : "<ALPHANUM>",

"position" : 4

}

]

}

- ②. Install the ik analyzer

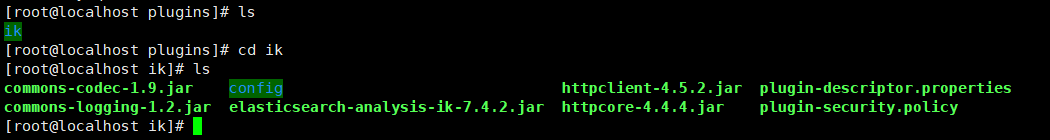

When we installed elasticsearch earlier, we mapped the container's "/usr/share/elasticsearch/plugins" directory to the host's "/mydata/elasticsearch/plugins" directory. The convenient approach is therefore to download "/elasticsearch-analysis-ik-7.4.2.zip" and unzip it into that folder. After installing, restart the elasticsearch container.

Note that the permissions need to be changed: chmod -R 777 plugins/ik

- ③. ik_max_word: splits the text at the finest granularity. For example, "中华人民共和国人民大会堂" is split into 中华人民共和国, 中华人民, 中华, 华人, 人民共和国, 人民, 共和国, 大会堂, 大会, 会堂, and so on (use ik_max_word when building the index).

{

"tokens" : [

{

"token" : "中华人民共和国",

"start_offset" : 0,

"end_offset" : 7,

"type" : "CN_WORD",

"position" : 0

},

{

"token" : "中华人民",

"start_offset" : 0,

"end_offset" : 4,

"type" : "CN_WORD",

"position" : 1

},

{

"token" : "中华",

"start_offset" : 0,

"end_offset" : 2,

"type" : "CN_WORD",

"position" : 2

},

{

"token" : "华人",

"start_offset" : 1,

"end_offset" : 3,

"type" : "CN_WORD",

"position" : 3

},

{

"token" : "人民共和国",

"start_offset" : 2,

"end_offset" : 7,

"type" : "CN_WORD",

"position" : 4

},

{

"token" : "人民",

"start_offset" : 2,

"end_offset" : 4,

"type" : "CN_WORD",

"position" : 5

},

{

"token" : "共和国",

"start_offset" : 4,

"end_offset" : 7,

"type" : "CN_WORD",

"position" : 6

},

{

"token" : "共和",

"start_offset" : 4,

"end_offset" : 6,

"type" : "CN_WORD",

"position" : 7

},

{

"token" : "国人",

"start_offset" : 6,

"end_offset" : 8,

"type" : "CN_WORD",

"position" : 8

},

{

"token" : "人民大会堂",

"start_offset" : 7,

"end_offset" : 12,

"type" : "CN_WORD",

"position" : 9

},

{

"token" : "人民大会",

"start_offset" : 7,

"end_offset" : 11,

"type" : "CN_WORD",

"position" : 10

},

{

"token" : "人民",

"start_offset" : 7,

"end_offset" : 9,

"type" : "CN_WORD",

"position" : 11

},

{

"token" : "大会堂",

"start_offset" : 9,

"end_offset" : 12,

"type" : "CN_WORD",

"position" : 12

},

{

"token" : "大会",

"start_offset" : 9,

"end_offset" : 11,

"type" : "CN_WORD",

"position" : 13

},

{

"token" : "会堂",

"start_offset" : 10,

"end_offset" : 12,

"type" : "CN_WORD",

"position" : 14

}

]

}

- ④. ik_smart: splits the text at the coarsest granularity. For example, "中华人民共和国人民大会堂" is split into 中华人民共和国 and 人民大会堂 (use ik_smart for user-facing search queries).

GET _analyze

{

"analyzer": "ik_smart",

"text":"中华人民共和国人民大会堂"

}

{

"tokens" : [

{

"token" : "中华人民共和国",

"start_offset" : 0,

"end_offset" : 7,

"type" : "CN_WORD",

"position" : 0

},

{

"token" : "人民大会堂",

"start_offset" : 7,

"end_offset" : 12,

"type" : "CN_WORD",

"position" : 1

}

]

}
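The contrast between the two modes can be imitated with a toy dictionary segmenter. This is not IK's actual algorithm, only a sketch: the dictionary is assumed to hold just the words that appear in the two outputs above, "fine-grained" is modelled as every dictionary word found in the text, and "coarse-grained" as greedy longest match.

```python
DICTIONARY = {
    "中华人民共和国", "中华人民", "中华", "华人", "人民共和国", "人民", "共和国",
    "共和", "国人", "人民大会堂", "人民大会", "大会堂", "大会", "会堂",
}


def all_matches(text):
    """Fine-grained: every dictionary word found anywhere in the text."""
    hits = [
        (start, end)
        for start in range(len(text))
        for end in range(start + 1, len(text) + 1)
        if text[start:end] in DICTIONARY
    ]
    hits.sort(key=lambda span: (span[0], -span[1]))  # by start, longest first
    return [text[s:e] for s, e in hits]


def longest_matches(text):
    """Coarse-grained: greedily take the longest dictionary word at each position."""
    tokens, start = [], 0
    while start < len(text):
        end = next(
            (e for e in range(len(text), start, -1) if text[start:e] in DICTIONARY),
            start + 1,  # no dictionary word here: emit a single character
        )
        tokens.append(text[start:end])
        start = end
    return tokens


text = "中华人民共和国人民大会堂"
print(longest_matches(text))   # ['中华人民共和国', '人民大会堂']
print(len(all_matches(text)))  # 15
```

With this dictionary the fine-grained list has the same 15 entries, in the same order, as the ik_max_word output, and the greedy pass gives the two ik_smart tokens.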

VI. Customizing the analyzer

- ①. Edit IKAnalyzer.cfg.xml in /usr/share/elasticsearch/plugins/ik/config

<?xml version="1.0" encoding="UTF-8"?>

<!DOCTYPE properties SYSTEM "http://java.sun.com/dtd/properties.dtd">

<properties>

<comment>IK Analyzer extension configuration</comment>

<!-- Configure your own extension dictionary here -->

<entry key="ext_dict"></entry>

<!-- Configure your own extension stopword dictionary here -->

<entry key="ext_stopwords"></entry>

<!-- Configure a remote extension dictionary here -->

<entry key="remote_ext_dict">http://192.168.56.10/es/fenci.txt</entry>

<!-- Configure a remote extension stopword dictionary here -->

<!-- <entry key="remote_ext_stopwords">words_location</entry> -->

</properties>
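Before restarting the container it can be useful to confirm that the edited file is well-formed and that `remote_ext_dict` holds the intended URL. The Python sketch below parses a copy of the configuration with the standard library; the helper name `read_entries` is illustrative, and in practice you would read the bytes from the real IKAnalyzer.cfg.xml instead of the inline constant.

```python
import xml.etree.ElementTree as ET

CONFIG = b"""<?xml version="1.0" encoding="UTF-8"?>
<!DOCTYPE properties SYSTEM "http://java.sun.com/dtd/properties.dtd">
<properties>
    <comment>IK Analyzer extension configuration</comment>
    <entry key="ext_dict"></entry>
    <entry key="ext_stopwords"></entry>
    <entry key="remote_ext_dict">http://192.168.56.10/es/fenci.txt</entry>
</properties>
"""


def read_entries(xml_bytes):
    """Return {key: value} for every <entry> element; empty entries map to ''."""
    root = ET.fromstring(xml_bytes)  # raises ParseError if the XML is malformed
    return {entry.get("key"): (entry.text or "").strip() for entry in root.iter("entry")}


entries = read_entries(CONFIG)
print(entries["remote_ext_dict"])  # http://192.168.56.10/es/fenci.txt
print(entries["ext_dict"] == "")   # True
```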

- ②. After editing, restart the elasticsearch container, otherwise the change does not take effect: docker restart elasticsearch

GET _analyze

{

"analyzer": "ik_smart",

"text":"���ǹ����̳�"

}

{

"tokens" : [

{

"token" : "���ǹ����̳�",

"start_offset" : 0,

"end_offset" : 6,

"type" : "CN_WORD",

"position" : 0

}

]

}

- ③. The steps in detail, as run in the shell

[root@localhost ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

95de12634192 elasticsearch:7.4.2 "/usr/local/bin/dock…" 4 seconds ago Up 3 seconds 0.0.0.0:9200->9200/tcp, :::9200->9200/tcp, 0.0.0.0:9300->9300/tcp, :::9300->9300/tcp elasticsearch

a197c1d2cf05 kibana:7.4.2 "/usr/local/bin/dumb…" 30 hours ago Up About a minute 0.0.0.0:5601->5601/tcp, :::5601->5601/tcp kibana

a18680bef63e redis "docker-entrypoint.s…" 5 weeks ago Up 2 minutes 0.0.0.0:6379->6379/tcp, :::6379->6379/tcp redis

91e02812975d mysql:5.7 "docker-entrypoint.s…" 5 weeks ago Up 2 minutes 0.0.0.0:3306->3306/tcp, :::3306->3306/tcp, 33060/tcp mysql

[root@localhost ~]# cd /mydata/

[root@localhost mydata]# ls

elasticsearch mysql redis

[root@localhost mydata]# mkdir nginx

[root@localhost mydata]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

redis latest 08502081bff6 8 weeks ago 105MB

mysql 5.7 09361feeb475 2 months ago 447MB

kibana 7.4.2 230d3ded1abc 22 months ago 1.1GB

elasticsearch 7.4.2 b1179d41a7b4 22 months ago 855MB

[root@localhost mydata]# docker run -p80:80 --name nginx -d nginx:1.10

Unable to find image 'nginx:1.10' locally

1.10: Pulling from library/nginx

6d827a3ef358: Pull complete

1e3e18a64ea9: Pull complete

556c62bb43ac: Pull complete

Digest: sha256:6202beb06ea61f44179e02ca965e8e13b961d12640101fca213efbfd145d7575

Status: Downloaded newer image for nginx:1.10

24c1454acf9f8419f762f3369b59557df57cd6209864ef64000f2f26d9f0d05b

[root@localhost mydata]# mkdir -p /mydata/nginx/html

[root@localhost mydata]# mkdir -p /mydata/nginx/logs

[root@localhost mydata]# mkdir -p /mydata/nginx/conf

[root@localhost mydata]# ls

elasticsearch mysql nginx redis

[root@localhost mydata]# cd nginx/

[root@localhost nginx]# ls

conf html logs

[root@localhost nginx]# cd ..

[root@localhost mydata]# rm -rf nginx/

[root@localhost mydata]# docker container cp nginx:/etc/nginx .

[root@localhost mydata]# ls

elasticsearch mysql nginx redis

[root@localhost mydata]# docker stop nginx

nginx

[root@localhost mydata]# docker rm nginx

nginx

[root@localhost mydata]# ls

elasticsearch mysql nginx redis

[root@localhost mydata]# cd nginx

[root@localhost nginx]# ls

conf.d fastcgi_params koi-utf koi-win mime.types modules nginx.conf scgi_params uwsgi_params win-utf

[root@localhost nginx]# cd ..

[root@localhost mydata]# mv nginx conf

[root@localhost mydata]# ls

conf elasticsearch mysql redis

[root@localhost mydata]# mkdir nginx

[root@localhost mydata]# mv conf nginx/

[root@localhost mydata]# ls

elasticsearch mysql nginx redis

[root@localhost mydata]# cd nginx/

[root@localhost nginx]# ls

conf

[root@localhost nginx]# docker run -p 80:80 --name nginx \

> -v /mydata/nginx/html:/usr/share/nginx/html \

> -v /mydata/nginx/logs:/var/log/nginx \

> -v /mydata/nginx/conf/:/etc/nginx \

> -d nginx:1.10

01bfbb6a8cd0e3f6af476793ad33fdc696740eadb125f8adad573303524adb55

[root@localhost nginx]# ls

conf html logs

[root@localhost nginx]# docker update nginx --restart=always

nginx

[root@localhost nginx]# echo '<h2>hello nginx!</h2>' >index.html

[root@localhost nginx]# ls

conf html index.html logs

[root@localhost nginx]# rm -rf index.html

[root@localhost nginx]# cd html

[root@localhost html]# echo '<h2>hello nginx!</h2>' >index.html

[root@localhost html]#

[root@localhost html]# mkdir es

[root@localhost html]# cd es

[root@localhost es]# vi fenci.text

[root@localhost es]# ls

fenci.text

[root@localhost es]# mv fenci.text fenci.txt

[root@localhost es]# cd /mydata/

[root@localhost mydata]# cd elasticsearch/

[root@localhost elasticsearch]# ls

config data plugins

[root@localhost elasticsearch]# cd plugins/

[root@localhost plugins]# ls

ik

[root@localhost plugins]# cd ik/

[root@localhost ik]# ls

commons-codec-1.9.jar config httpclient-4.5.2.jar plugin-descriptor.properties

commons-logging-1.2.jar elasticsearch-analysis-ik-7.4.2.jar httpcore-4.4.4.jar plugin-security.policy

[root@localhost ik]# cd config/

[root@localhost config]# ls

extra_main.dic extra_single_word_full.dic extra_stopword.dic main.dic quantifier.dic suffix.dic

extra_single_word.dic extra_single_word_low_freq.dic IKAnalyzer.cfg.xml preposition.dic stopword.dic surname.dic

[root@localhost config]# vi IKAnalyzer.cfg.xml

[root@localhost config]# docker restart elasticsearch

elasticsearch

[root@localhost config]# cd /mydata/nginx/

[root@localhost nginx]# ls

conf html logs

[root@localhost nginx]# cd html/es/

[root@localhost es]# ls

fenci.txt

[root@localhost es]# cat fenci.txt

���ǹ����̳�