wget https://archive.apache.org/dist/spark/spark-3.1.2/spark-3.1.2.tgz

Prerequisites for building Spark from source

Maven 3.3.9 or newer

Java 8+

Scala
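
The prerequisites above can be checked before starting a long build. The following is a minimal sketch, not part of the Spark tooling: the helper name `version_ge` is hypothetical, and it assumes a `sort` that supports `-V` (version sort, as in GNU coreutils). Tools that are not on the PATH are skipped.

```shell
#!/bin/sh
# Hypothetical helper: succeed when version $1 >= version $2.
# Assumes `sort -V` (version sort) is available.
version_ge() {
  [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# Check Maven against the 3.3.9 minimum if mvn is on PATH.
if command -v mvn >/dev/null 2>&1; then
  mvn_ver=$(mvn -version 2>/dev/null | awk '/^Apache Maven/ {print $3; exit}')
  if version_ge "$mvn_ver" 3.3.9; then
    echo "Maven $mvn_ver: OK"
  else
    echo "Maven $mvn_ver: older than 3.3.9"
  fi
fi

# Print the Java version line if java is on PATH (Java 8+ is required).
if command -v java >/dev/null 2>&1; then
  java -version 2>&1 | head -n 1
fi
```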

Edit dev/make-distribution.sh

MVN="/data/java/apache-maven-3.8.1/bin/mvn"
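
The same edit can be scripted instead of made by hand. This is a sketch under assumptions: `set_mvn_path` is a hypothetical helper, and it assumes the script contains a single line beginning with `MVN=`. It is demonstrated on a temporary file rather than on the real dev/make-distribution.sh.

```shell
#!/bin/sh
# Hypothetical helper: rewrite the MVN= assignment in a script.
# Usage: set_mvn_path <script> <path-to-mvn>
# Assumes the path contains no '|' or '&' characters (they are special to sed here).
set_mvn_path() {
  # -i.bak edits in place and keeps a backup copy next to the file.
  sed -i.bak "s|^MVN=.*|MVN=\"$2\"|" "$1"
}

# Demonstration on a throwaway file with an assumed original MVN= line.
demo=$(mktemp)
printf '%s\n' 'MVN="$SPARK_HOME/build/mvn"' > "$demo"
set_mvn_path "$demo" /data/java/apache-maven-3.8.1/bin/mvn
cat "$demo"
```

Against the real source tree, the call would be `set_mvn_path dev/make-distribution.sh /data/java/apache-maven-3.8.1/bin/mvn`.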

First, run mvn to download the required jars:

mvn -Pyarn -Phive -Phive-thriftserver -Psparkr -DskipTests clean package

Build Spark

Set -Dhadoop.version to match the Hadoop version installed on your machine:

./dev/make-distribution.sh --name custom-spark --tgz -Psparkr -Phadoop-3.2 -Dhadoop.version=3.2.0 -Phive -Phive-thriftserver -Pyarn
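
To keep the Hadoop flags in step with the installed Hadoop, they can be derived from the version string. This is a sketch, assuming the two Hadoop profiles that the Spark 3.1 build defines (`hadoop-2.7` and `hadoop-3.2`). The helper `hadoop_build_flags` is hypothetical, and mapping every 3.x release to `-Phadoop-3.2` is an assumption that may not hold for every Hadoop release.

```shell
#!/bin/sh
# Hypothetical helper: map a Hadoop version to Spark 3.1 build flags.
hadoop_build_flags() {
  case "$1" in
    3.*) echo "-Phadoop-3.2 -Dhadoop.version=$1" ;;
    2.*) echo "-Phadoop-2.7 -Dhadoop.version=$1" ;;
    *)   echo "unsupported Hadoop version: $1" >&2; return 1 ;;
  esac
}

# If the hadoop CLI is installed, print the build command for its version.
if command -v hadoop >/dev/null 2>&1; then
  installed=$(hadoop version | awk 'NR==1 {print $2}')
  if flags=$(hadoop_build_flags "$installed"); then
    echo "./dev/make-distribution.sh --name custom-spark --tgz -Psparkr $flags -Phive -Phive-thriftserver -Pyarn"
  fi
fi
```

The script only prints the command, so it can be reviewed before the build is started.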

cp /data/java/compire/spark-3.1.2/spark-3.1.2-bin-custom-spark.tgz /data/java

vim spark-env.sh

The Scala version is given by scala.version in pom.xml:

<scala.version>2.12.10</scala.version>

export JAVA_HOME=/usr/lib/jvm/jdk1.8.0_301

export SCALA_HOME=/data/scala/scala-2.12.10

export HADOOP_HOME=/data/java/hadoop-3.2.2

export HADOOP_HDFS_HOME=${HADOOP_HOME}

export HADOOP_CONF_DIR=${HADOOP_HOME}/etc/hadoop

export SPARK_HOME=/data/java/spark-3.1.2-bin-custom-spark

export SPARK_DIST_CLASSPATH=$(hadoop classpath)

export HIVE_HOME=/data/java/apache-hive-3.1.2-bin

export SPARK_MASTER_WEBUI_PORT=8079

export SPARK_LOG_DIR=/data/java/spark-3.1.2-bin-custom-spark/logs

export SPARK_LIBRARY_PATH=${SPARK_HOME}/jars

export PATH=$SCALA_HOME/bin:$SPARK_HOME/bin:$PATH
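
The version in SCALA_HOME should match the `scala.version` property in Spark's pom.xml. This sketch reads that property with `sed`. The helper `pom_scala_version` is hypothetical. It takes the first match, on the assumption that the default property appears before any profile-specific overrides. The demonstration uses a small stand-in file, not the real pom.xml.

```shell
#!/bin/sh
# Hypothetical helper: print the first <scala.version> value in a pom.xml.
pom_scala_version() {
  sed -n 's|.*<scala\.version>\(.*\)</scala\.version>.*|\1|p' "$1" | head -n 1
}

# Demonstration on a stand-in pom fragment.
demo_pom=$(mktemp)
printf '%s\n' \
  '<properties>' \
  '  <scala.version>2.12.10</scala.version>' \
  '</properties>' > "$demo_pom"
pom_scala_version "$demo_pom"   # prints 2.12.10
```

In the Spark source directory, the call would be `pom_scala_version pom.xml`.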

vim spark-defaults.conf

# Example:

# spark.master spark://master:7077

# spark.eventLog.enabled true

# spark.eventLog.dir hdfs://namenode:8021/directory

# spark.serializer org.apache.spark.serializer.KryoSerializer

# spark.driver.memory 5g

spark.executor.extraJavaOptions -XX:+PrintGCDetails -Dkey=value -Dnumbers="one two three"

spark.executor.extraClassPath /data/java/apache-hive-3.1.2-bin/lib/mysql-connector-java-8.0.26.jar

spark.driver.extraClassPath /data/java/apache-hive-3.1.2-bin/lib/mysql-connector-java-8.0.26.jar
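
A wrong path in either extraClassPath entry tends to show up only later as a missing JDBC driver, so it can help to check the entries up front. This is a sketch: `check_extra_classpath` is a hypothetical helper, and it assumes the whitespace-separated `key value` layout used above. The demonstration runs on temporary files, not the real configuration.

```shell
#!/bin/sh
# Hypothetical helper: report extraClassPath entries that do not exist on disk.
# Returns non-zero if any entry is missing.
check_extra_classpath() {
  missing=0
  while read -r key value; do
    case "$key" in
      spark.driver.extraClassPath|spark.executor.extraClassPath)
        # Entries may be colon-separated; split on ':' and restore IFS afterwards.
        old_ifs=$IFS; IFS=:
        for entry in $value; do
          [ -e "$entry" ] || { echo "missing: $key -> $entry"; missing=1; }
        done
        IFS=$old_ifs
        ;;
    esac
  done < "$1"
  return "$missing"
}

# Demonstration: one jar exists, the other does not.
demo_dir=$(mktemp -d)
touch "$demo_dir/present.jar"
cat > "$demo_dir/spark-defaults.conf" <<EOF
# spark.master spark://master:7077
spark.driver.extraClassPath $demo_dir/present.jar
spark.executor.extraClassPath $demo_dir/absent.jar
EOF
check_extra_classpath "$demo_dir/spark-defaults.conf" || echo "fix the paths listed above"
```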

vim log4j.properties

#

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

# Set everything to be logged to the console

log4j.rootCategory=WARN, console

log4j.appender.console=org.apache.log4j.ConsoleAppender

log4j.appender.console.target=System.err

log4j.appender.console.layout=org.apache.log4j.PatternLayout

log4j.appender.console.layout.ConversionPattern=%d{yy/MM/dd HH:mm:ss} %p %c{1}: %m%n

# Set the default spark-shell log level to WARN. When running the spark-shell, the

# log level for this class is used to overwrite the root logger's log level, so that

# the user can have different defaults for the shell and regular Spark apps.

log4j.logger.org.apache.spark.repl.Main=WARN

# Settings to quiet third party logs that are too verbose

log4j.logger.org.sparkproject.jetty=WARN

log4j.logger.org.sparkproject.jetty.util.component.AbstractLifeCycle=ERROR

log4j.logger.org.apache.spark.repl.SparkIMain$exprTyper=INFO

log4j.logger.org.apache.spark.repl.SparkILoop$SparkILoopInterpreter=INFO

log4j.logger.org.apache.parquet=ERROR

log4j.logger.parquet=ERROR

# SPARK-9183: Settings to avoid annoying messages when looking up nonexistent UDFs in SparkSQL with Hive support

log4j.logger.org.apache.hadoop.hive.metastore.RetryingHMSHandler=FATAL

log4j.logger.org.apache.hadoop.hive.ql.exec.FunctionRegistry=ERROR

# For deploying Spark ThriftServer

# SPARK-34128:Suppress undesirable TTransportException warnings involved in THRIFT-4805

log4j.appender.console.filter.1=org.apache.log4j.varia.StringMatchFilter

log4j.appender.console.filter.1.StringToMatch=Thrift error occurred during processing of message

log4j.appender.console.filter.1.AcceptOnMatch=false

cd /data/java/apache-hive-3.1.2-bin/conf/

cp hive-site.xml /data/java/spark-3.1.2-bin-custom-spark/conf/
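
Spark SQL reads the metastore settings from the copied hive-site.xml, so it is worth confirming which metastore the file points at. This is a rough sketch: `hive_site_property` is a hypothetical helper, and it assumes each `<name>` is followed by its `<value>` on the same or a later line. It is not a real XML parser. The property values in the demonstration are made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: print the value of one property from a hive-site.xml.
# Usage: hive_site_property <file> <property-name>
hive_site_property() {
  awk -v name="$2" '
    index($0, "<name>" name "</name>") { found = 1 }
    found && /<value>/ {
      sub(/.*<value>/, ""); sub(/<\/value>.*/, ""); print; exit
    }
  ' "$1"
}

# Demonstration on a stand-in file with illustrative values.
demo_site=$(mktemp)
cat > "$demo_site" <<'EOF'
<configuration>
  <property>
    <name>javax.jdo.option.ConnectionURL</name>
    <value>jdbc:mysql://localhost:3306/hive</value>
  </property>
  <property>
    <name>hive.metastore.uris</name>
    <value>thrift://localhost:9083</value>
  </property>
</configuration>
EOF
hive_site_property "$demo_site" hive.metastore.uris
```

Against the real file, the call would be `hive_site_property /data/java/spark-3.1.2-bin-custom-spark/conf/hive-site.xml hive.metastore.uris`.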

export SPARK_HOME=/data/java/spark-3.1.2-bin-custom-spark

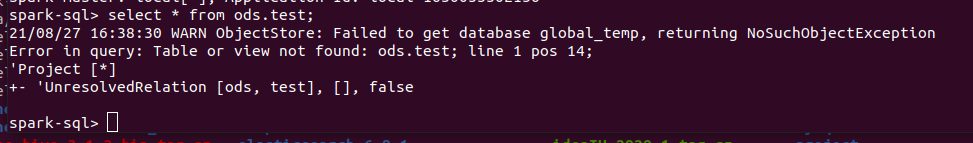

Test that the configuration works:

spark-sql

select * from ods.test;

Error in query: Table or view not found: