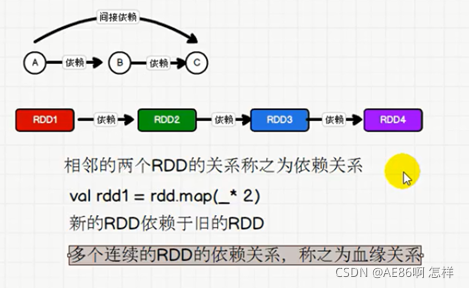

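1. RDD lineage

Dependency: the relationship between two adjacent RDDs.

Lineage: the chain of dependencies across multiple consecutive RDDs.

2. Demonstrating RDD lineage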

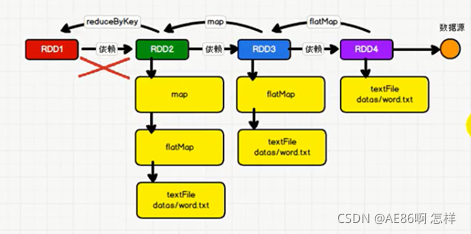

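The figure above illustrates RDD lineage:

- An RDD does not store data, but every RDD records its own lineage.

- Why lineage matters: because an RDD does not store data, a failed computation cannot resume from the output of the previous RDD (unless that RDD was cached or checkpointed) and has to be recomputed from the start. The RDD therefore needs to know where its data source is. Lineage is what lets Spark trace back to the data source, which improves fault tolerance.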
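The idea that an RDD stores its lineage rather than its data can be sketched in plain Scala, with no Spark dependency. This is a toy model under stated assumptions, not Spark's implementation: the names `LineageSketch`, `Node`, `Source`, and `Mapped` are hypothetical. Each node keeps only a reference to its parent and the function to apply, so a result is always rebuilt by walking back to the source.

```scala
// Toy model only: these types are hypothetical and are not part of Spark.
object LineageSketch {
  sealed trait Node[A] {
    def compute(): Seq[A]      // always recomputed by walking back to the source
    def lineage: List[String]  // description of this node and all its ancestors
  }

  // The data source: the only place that knows how to read the data.
  final case class Source[A](name: String, read: () => Seq[A]) extends Node[A] {
    def compute(): Seq[A] = read()
    def lineage: List[String] = List(s"Source($name)")
  }

  // A transformation: stores the parent and the function, never the data.
  final case class Mapped[A, B](parent: Node[A], label: String, f: A => B) extends Node[B] {
    def compute(): Seq[B] = parent.compute().map(f)
    def lineage: List[String] = s"Mapped($label)" :: parent.lineage
  }

  def main(args: Array[String]): Unit = {
    val src  = Source[String]("wc.txt", () => Seq("hadoop spark", "hadoop"))
    val lens = Mapped(src, "length", (s: String) => s.length)
    println(lens.lineage.mkString(" <- "))
    println(lens.compute())
  }
}
```

Calling `compute()` a second time re-reads the source, which mirrors why a lost result can be rebuilt as long as the lineage is known.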

Lineage demo

package SparkCore._04_血缘关系

import org.apache.spark.rdd.RDD

import org.apache.spark.{SparkConf, SparkContext}

/**

* yatolovefantasy

* 2021-10-08-21:12

*/

object wordcount {

def main(args: Array[String]): Unit = {

val conf: SparkConf = new SparkConf().setAppName("wc").setMaster("local[*]")

val sc = new SparkContext(conf)

val fileRDD: RDD[String] = sc.textFile("SparkCore/target/classes/wc.txt")

println(fileRDD.toDebugString)

println("***********************")

val words: RDD[String] = fileRDD.flatMap(_.split(" "))

println(words.toDebugString)

println("***********************")

val wordToOne: RDD[(String, Int)] = words.map((_, 1))

println(wordToOne.toDebugString)

println("***********************")

val wordToSum: RDD[(String, Int)] = wordToOne.reduceByKey(_ + _)

println(wordToSum.toDebugString)

println("***********************")

val tuples: Array[(String, Int)] = wordToSum.collect()

tuples.foreach(println)

// TODO 3. Close the connection

sc.stop()

}

}

Output:

(2) SparkCore/target/classes/wc.txt MapPartitionsRDD[1] at textFile at wordcount.scala:17 []

| SparkCore/target/classes/wc.txt HadoopRDD[0] at textFile at wordcount.scala:17 []

***********************

(2) MapPartitionsRDD[2] at flatMap at wordcount.scala:21 []

| SparkCore/target/classes/wc.txt MapPartitionsRDD[1] at textFile at wordcount.scala:17 []

| SparkCore/target/classes/wc.txt HadoopRDD[0] at textFile at wordcount.scala:17 []

***********************

(2) MapPartitionsRDD[3] at map at wordcount.scala:25 []

| MapPartitionsRDD[2] at flatMap at wordcount.scala:21 []

| SparkCore/target/classes/wc.txt MapPartitionsRDD[1] at textFile at wordcount.scala:17 []

| SparkCore/target/classes/wc.txt HadoopRDD[0] at textFile at wordcount.scala:17 []

***********************

(2) ShuffledRDD[4] at reduceByKey at wordcount.scala:29 []

+-(2) MapPartitionsRDD[3] at map at wordcount.scala:25 []

| MapPartitionsRDD[2] at flatMap at wordcount.scala:21 []

| SparkCore/target/classes/wc.txt MapPartitionsRDD[1] at textFile at wordcount.scala:17 []

| SparkCore/target/classes/wc.txt HadoopRDD[0] at textFile at wordcount.scala:17 []

***********************

(hive,1)

(mapreduce,1)

(flink,1)

(spark,1)

(hadoop,2)


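The output above shows the operators each RDD has passed through, and whether any of those operators involves a shuffle.

+- marks a break in the dependency chain, i.e. the RDD went through a shuffle.

(2) is the number of partitions: the number in parentheses in front of an RDD is its partition count.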
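As a small illustration of how to read these markers, the sketch below scans a debug string for them. It assumes the text has the same shape as the output printed above; the object and method names (`DebugStringSketch`, `shuffleBoundaries`, `partitionCount`) are hypothetical helpers, not part of Spark.

```scala
// Hypothetical helper for reading toDebugString-style text; not a Spark API.
object DebugStringSketch {
  // Matches a leading "(N)" such as "(2)" at the very start of the text.
  private val PartitionPrefix = """^\((\d+)\)""".r

  // Counts lines that start with the "+-" shuffle marker, ignoring indentation.
  def shuffleBoundaries(debug: String): Int =
    debug.split("\n").count(_.trim.startsWith("+-"))

  // Reads the partition count printed in front of the first RDD, if present.
  def partitionCount(debug: String): Option[Int] =
    PartitionPrefix.findFirstMatchIn(debug.trim).map(_.group(1).toInt)

  def main(args: Array[String]): Unit = {
    val sample = Seq(
      "(2) ShuffledRDD[4] at reduceByKey at wordcount.scala:29 []",
      " +-(2) MapPartitionsRDD[3] at map at wordcount.scala:25 []",
      "    |  MapPartitionsRDD[2] at flatMap at wordcount.scala:21 []"
    ).mkString("\n")
    println(shuffleBoundaries(sample))
    println(partitionCount(sample))
  }
}
```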

3. Demonstrating RDD dependencies

package SparkCore._04_血缘关系

import org.apache.spark.rdd.RDD

import org.apache.spark.{SparkConf, SparkContext}

/**

* yatolovefantasy

* 2021-10-08-21:12

*/

object wordcountdep {

def main(args: Array[String]): Unit = {

// TODO 1. Connect to the Spark framework

val conf: SparkConf = new SparkConf().setAppName("wc").setMaster("local[*]")

val sc = new SparkContext(conf)

// TODO 2. Business logic

val fileRDD: RDD[String] = sc.textFile("SparkCore/target/classes/wc.txt")

println(fileRDD.dependencies)

println("***********************")

val words: RDD[String] = fileRDD.flatMap(_.split(" "))

println(words.dependencies)

println("***********************")

val wordToOne: RDD[(String, Int)] = words.map((_, 1))

println(wordToOne.dependencies)

println("***********************")

val wordToSum: RDD[(String, Int)] = wordToOne.reduceByKey(_ + _)

println(wordToSum.dependencies)

println("***********************")

val tuples: Array[(String, Int)] = wordToSum.collect()

tuples.foreach(println)

// TODO 3. Close the connection

sc.stop()

}

}

Output:

List(org.apache.spark.OneToOneDependency@4d41ba0f)

***********************

List(org.apache.spark.OneToOneDependency@59072e9d)

***********************

List(org.apache.spark.OneToOneDependency@2924f1d8)

***********************

List(org.apache.spark.ShuffleDependency@7dffda8b)

***********************

(hive,1)

(mapreduce,1)

(flink,1)

(spark,1)

(hadoop,2)


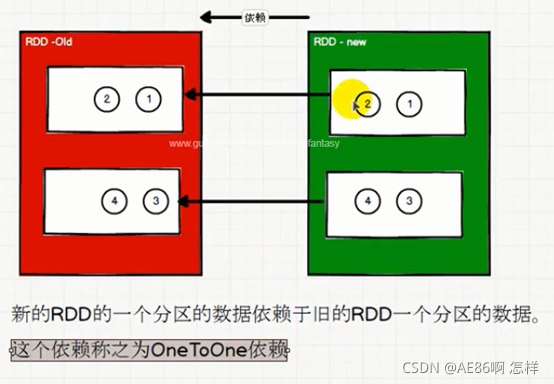

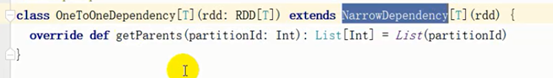

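(1) OneToOneDependency

- OneToOneDependency is a kind of narrow dependency.

- A narrow dependency means that each partition of the parent (upstream) RDD is used by at most one partition of the child (downstream) RDD. A handy analogy is an only child.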

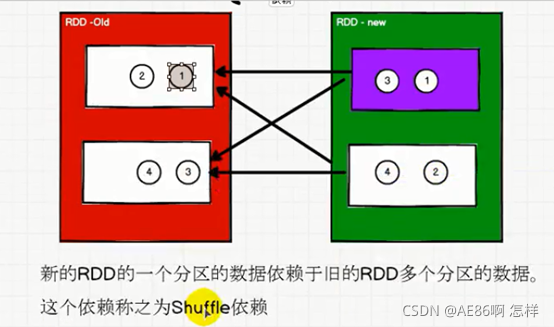

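(2) ShuffleDependency

- ShuffleDependency is also called a wide dependency.

- A wide dependency means that the same partition of the parent (upstream) RDD is depended on by multiple partitions of the child (downstream) RDD, which causes a shuffle. In short, the analogy for a wide dependency is a parent with several children.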
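The two definitions can be turned into a small check. The sketch below is plain Scala and purely illustrative: it assumes a dependency is described by listing, for each child partition, the parent partition indices it reads. The names `DependencyKindSketch` and `isNarrow` are hypothetical, and Spark itself distinguishes the two cases by the dependency's class rather than by inspecting partitions like this.

```scala
// Toy check of the narrow/wide definitions; not how Spark decides this internally.
object DependencyKindSketch {
  // childReads(i) = indices of the parent partitions that child partition i reads.
  // Narrow: every parent partition is read by at most one child partition.
  def isNarrow(childReads: Seq[Set[Int]]): Boolean = {
    val usesPerParent =
      childReads.flatMap(_.toSeq).groupBy(identity).values.map(_.size)
    usesPerParent.forall(_ <= 1)
  }

  def main(args: Array[String]): Unit = {
    // map-like: child 0 reads parent 0, child 1 reads parent 1
    println(isNarrow(Seq(Set(0), Set(1))))
    // shuffle-like: every child reads every parent
    println(isNarrow(Seq(Set(0, 1), Set(0, 1))))
  }
}
```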

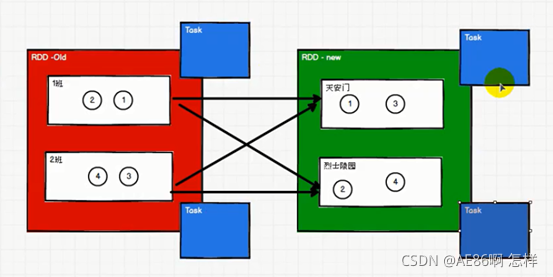

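4. Stage, partition, and task

4.1 Relationship between task count and partitions

(1) Narrow dependency

With a narrow dependency, data flows from partition to partition, so the number of tasks does not change: it is still n tasks.

(2) Wide dependency

With a wide dependency, the number of tasks can change across the shuffle: before the shuffle it equals the upstream partition count n, after the shuffle it equals the downstream partition count m, for n + m tasks in total.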
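A minimal sketch of the n + m arithmetic, in plain Scala. It models a shuffle as regrouping n upstream partitions into m downstream partitions by the hash of the key; this mimics the idea of hash partitioning but is an assumption-laden toy, and the names `ShuffleSketch`, `bucket`, `shuffle`, and `totalTasks` are hypothetical.

```scala
// Toy shuffle model; Spark's real shuffle writes and fetches blocks, this only regroups.
object ShuffleSketch {
  // Non-negative bucket index for a key, in the spirit of hash partitioning.
  def bucket(key: Any, numPartitions: Int): Int =
    Math.floorMod(key.hashCode, numPartitions)

  // Regroup n upstream partitions into m downstream partitions by key.
  def shuffle[K, V](upstream: Seq[Seq[(K, V)]], m: Int): Seq[Seq[(K, V)]] = {
    val all = upstream.flatten
    (0 until m).map(i => all.filter { case (k, _) => bucket(k, m) == i })
  }

  // One task per partition on each side of the shuffle.
  def totalTasks(n: Int, m: Int): Int = n + m

  def main(args: Array[String]): Unit = {
    val upstream   = Seq(Seq(("hadoop", 1), ("spark", 1)), Seq(("hadoop", 1)))
    val downstream = shuffle(upstream, 3)
    println(downstream.map(_.size))
    println(totalTasks(upstream.size, downstream.size))
  }
}
```

After the regrouping, every record with the same key sits in the same downstream partition, which is the property operators such as reduceByKey rely on.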

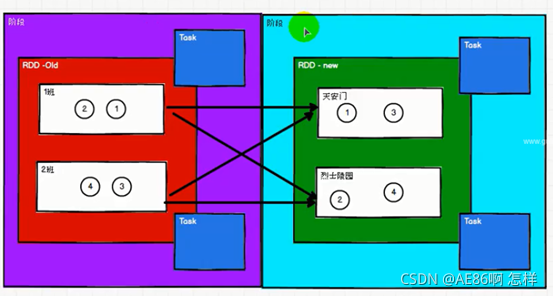

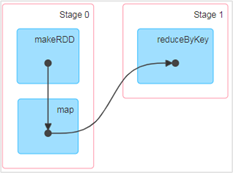

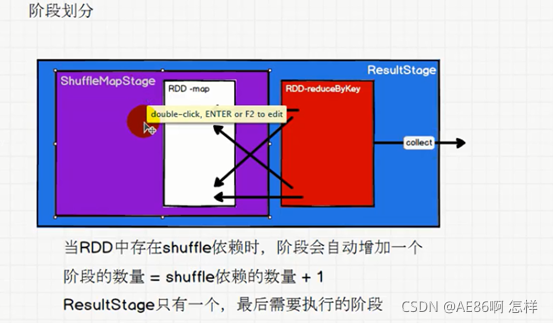

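4.2 Stage division

- With a wide dependency, the data in each downstream partition is shuffled and regrouped from every upstream partition. Every upstream partition must therefore be fully computed before the downstream RDD can start, which is why a wide dependency marks a stage boundary.

- With a narrow dependency, partitions run independently of one another (partition 1 does not wait for partition 2), so no stage boundary is needed.

A DAG (Directed Acyclic Graph) is a graph made of vertices and edges in which every edge has a direction and no path loops back on itself. In Spark, the DAG records the sequence of RDD transformations and the stages of a job.

Stages and shuffles are inherently linked.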

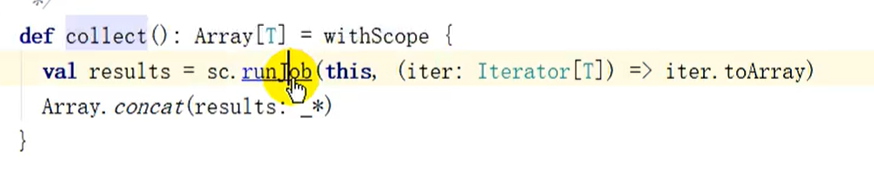

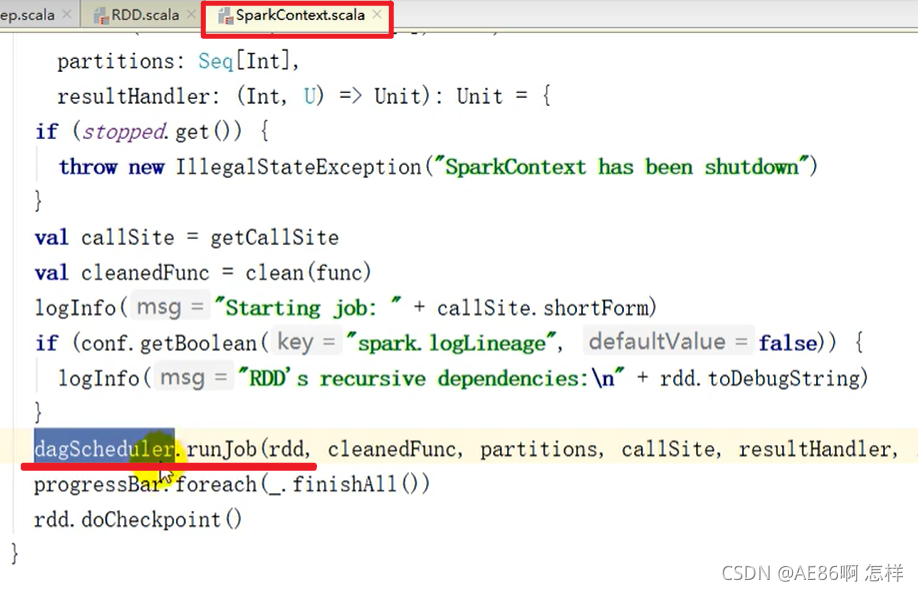

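4.3 Stage division in the source code

(1) Start from an action operator and follow the call chain down to the point where stages are created:

(1) A ResultStage is created from the RDD that triggered the action.

(2) Starting from that RDD, Spark traces back along the dependencies; whenever it meets a ShuffleDependency, it creates a ShuffleMapStage.

(3) Conclusions:

① Number of stages = number of shuffle dependencies + 1. The 1 is the ResultStage, which exists even when there is no shuffle dependency.

② A job always has exactly one ResultStage, and it is the last stage to execute.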
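The rule "stages = shuffle dependencies + 1" can be sketched by walking a dependency chain backwards from the final RDD. This is a simplified model in plain Scala, assuming a linear chain like the word count above (a shuffle dependency shared by several branches would be counted more than once here). The types `Rdd`, `Narrow`, and `Shuffle` are hypothetical stand-ins, not Spark classes.

```scala
// Toy stage counter for a linear lineage; not the DAGScheduler's actual algorithm.
object StageCountSketch {
  sealed trait Dep { def parent: Rdd }
  final case class Narrow(parent: Rdd) extends Dep
  final case class Shuffle(parent: Rdd) extends Dep
  final case class Rdd(name: String, deps: List[Dep] = Nil)

  // Count shuffle dependencies reachable by walking back from the given RDD.
  def shuffleDeps(rdd: Rdd): Int = rdd.deps.map {
    case Shuffle(p) => 1 + shuffleDeps(p)
    case Narrow(p)  => shuffleDeps(p)
  }.sum

  // The extra 1 stands for the final (result) stage.
  def stageCount(finalRdd: Rdd): Int = shuffleDeps(finalRdd) + 1

  def main(args: Array[String]): Unit = {
    val file  = Rdd("textFile")
    val words = Rdd("flatMap", List(Narrow(file)))
    val pairs = Rdd("map", List(Narrow(words)))
    val sums  = Rdd("reduceByKey", List(Shuffle(pairs)))
    println(stageCount(sums))
  }
}
```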

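4.4 RDD task division

Four concepts: Application, Job, Stage, and Task

- Application: initializing a SparkContext creates one Application.

- Job: each action operator produces one Job.

- Stage: the number of stages equals the number of wide dependencies (ShuffleDependency) plus 1.

- Task:

(1) Within a stage, the partition count of the last RDD is the number of tasks in that stage.

(2) The number of tasks in an Application is the sum of the task counts of all stages across its jobs.

Application -> Job -> Stage -> Task: each level is a one-to-n relationship.

- An Application can contain multiple action operators, and therefore multiple Jobs.

- A Job has >= 0 shuffle dependencies.

- The last RDD in a Stage has >= 1 partitions, and each partition corresponds to one task.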
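The one-to-n hierarchy and the task arithmetic can be written down as a small data model. The case classes below are a hypothetical sketch in plain Scala, not Spark's internal classes; each stage is reduced to the partition count of its last RDD.

```scala
// Toy data model of Application -> Job -> Stage -> Task; not Spark's own classes.
object HierarchySketch {
  // A stage runs one task per partition of its last RDD.
  final case class Stage(lastRddPartitions: Int) {
    def taskCount: Int = lastRddPartitions
  }
  // A job is the set of stages produced by one action.
  final case class Job(stages: List[Stage]) {
    def taskCount: Int = stages.map(_.taskCount).sum
  }
  // An application holds one job per action that was run.
  final case class Application(jobs: List[Job]) {
    def taskCount: Int = jobs.map(_.taskCount).sum
  }

  def main(args: Array[String]): Unit = {
    // Word count: one action, one shuffle, two partitions on each side.
    val wordCountJob = Job(List(Stage(2), Stage(2)))
    println(Application(List(wordCountJob)).taskCount)
  }
}
```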

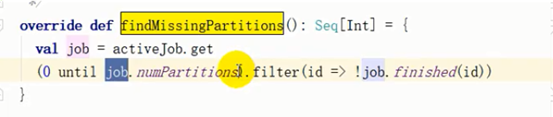

4.5 Number of tasks

When there is no stage division, where does the number of tasks come from?

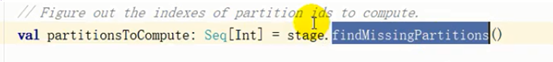

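① submitStage(finalStage)

② val missing = getMissingParentStages(stage)

③ submitMissingTasks(stage, jobId.get): submits the tasks of a stage that has no missing parent stages

④ Seq[Task]: all the tasks in the current stage

1. Where the task collection comes from: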

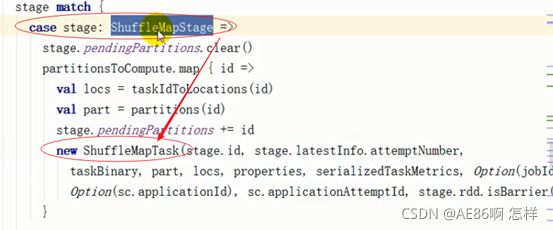

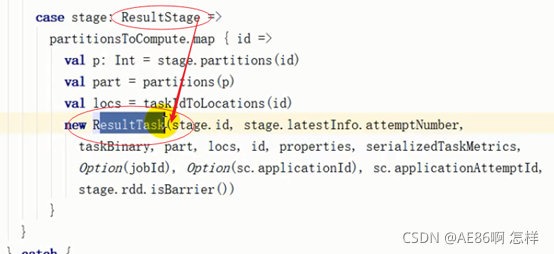

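The code pattern-matches on the type of the current stage, which shows that different kinds of stage create different kinds of task.

2. The screenshots above show that tasks are created inside a map over partitionsToCompute. Because map is a one-to-one mapping, the number of tasks is determined by partitionsToCompute.

Conclusion:

Job.numPartitions comes from the partition count of the last RDD in the stage, so the number of tasks in a stage = the partition count of the last RDD in that stage.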
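The shape of that logic can be sketched as follows. This is a simplified stand-in written in plain Scala, not the DAGScheduler source: the stage and task types below are hypothetical toy classes that only borrow Spark's naming, and real tasks carry much more state. What it shows is the pattern match on the stage type followed by a map over `partitionsToCompute`, which yields exactly one task per partition.

```scala
// Toy version of "match on stage type, then map over partitions"; not Spark code.
object TaskCreationSketch {
  sealed trait Stage { def numPartitions: Int }
  final case class ShuffleMapStage(numPartitions: Int) extends Stage
  final case class ResultStage(numPartitions: Int) extends Stage

  sealed trait Task { def partitionId: Int }
  final case class ShuffleMapTask(partitionId: Int) extends Task
  final case class ResultTask(partitionId: Int) extends Task

  // map is one-to-one, so tasks.size == partitionsToCompute.size.
  def createTasks(stage: Stage, partitionsToCompute: Seq[Int]): Seq[Task] =
    stage match {
      case _: ShuffleMapStage => partitionsToCompute.map(id => ShuffleMapTask(id))
      case _: ResultStage     => partitionsToCompute.map(id => ResultTask(id))
    }

  def main(args: Array[String]): Unit = {
    val stage = ResultStage(numPartitions = 2)
    println(createTasks(stage, 0 until stage.numPartitions))
  }
}
```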

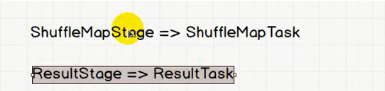

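4.6 Task types

The type of a task is tied to the type of its stage.

Stages are either ShuffleMapStage or ResultStage; a ShuffleMapStage runs ShuffleMapTask instances and a ResultStage runs ResultTask instances.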