
1: Automatic offset control

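When a Kafka consumer group starts consuming a topic for which it has no stored offset (that is, the system holds no record of which partitions this consumer has consumed), the consumer's default first-consumption policy is latest: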

auto.offset.reset=latest

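- earliest - automatically reset the offset to the earliest offset

- latest - automatically reset the offset to the latest offset

- none - throw an exception to the consumer if no previous offset is found for the consumer group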
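The three policies only come into play when the group has no committed offset for a partition, or the committed offset is no longer in the log. A simplified plain-Java model of that decision follows; it does not call the Kafka client, and `OffsetResetModel`, `logStartOffset` (earliest offset still stored) and `logEndOffset` (offset the next produced record will receive) are hypothetical names used only for illustration.

```java
import java.util.OptionalLong;

/** Simplified model of how auto.offset.reset picks a starting position (not Kafka's own code). */
class OffsetResetModel {

    enum ResetPolicy { EARLIEST, LATEST, NONE }

    /**
     * @param committed      offset committed by the group, or empty if none exists
     * @param logStartOffset earliest offset still stored in the partition
     * @param logEndOffset   offset that the next produced record will get
     */
    static long startingOffset(OptionalLong committed, long logStartOffset,
                               long logEndOffset, ResetPolicy policy) {
        // A committed offset that still lies inside the log is used as-is.
        if (committed.isPresent()) {
            long c = committed.getAsLong();
            if (c >= logStartOffset && c <= logEndOffset) {
                return c;
            }
        }
        // No usable committed offset: the reset policy decides.
        switch (policy) {
            case EARLIEST:
                return logStartOffset;
            case LATEST:
                return logEndOffset;
            default:
                throw new IllegalStateException("No valid committed offset and auto.offset.reset=none");
        }
    }
}
```

For a partition holding offsets 100 to 249, a group with no commit starts at 100 under `EARLIEST` and at 250 under `LATEST`; under `NONE` the call throws, standing in for the exception the real consumer raises.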

While consuming, a Kafka consumer by default commits its consumed offsets periodically, which guarantees that every message is consumed at least once. Two parameters control this:

// whether offsets are committed automatically

enable.auto.commit = true (default)

// interval between automatic commits

auto.commit.interval.ms = 5000 (default)
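Periodic commits give "at least once" rather than "exactly once": records processed after the last commit are delivered again if the consumer crashes. The plain-Java simulation below illustrates this without Kafka; it counts records instead of milliseconds (committing every `commitEvery` records stands in for `auto.commit.interval.ms`), and all names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

/** Plain-Java simulation of periodic offset commits and redelivery after a crash. */
class AutoCommitSimulation {

    /**
     * Processes offsets [0, total), committing every commitEvery records,
     * "crashes" after processing crashAfter records, then restarts from the last commit.
     * Returns every offset processed, in order, including duplicates.
     */
    static List<Long> run(long total, int commitEvery, int crashAfter) {
        List<Long> processed = new ArrayList<>();
        long committed = 0; // next offset to read, as stored for the group
        long position = 0;
        int sinceStart = 0;
        // First run: stops abruptly after crashAfter records.
        while (position < total && sinceStart < crashAfter) {
            processed.add(position);
            position++;
            sinceStart++;
            if (sinceStart % commitEvery == 0) {
                committed = position; // commit the NEXT offset (last processed + 1)
            }
        }
        // Restart: resume from the committed offset, so uncommitted work is redone.
        for (long offset = committed; offset < total; offset++) {
            processed.add(offset);
        }
        return processed;
    }
}
```

With `run(10, 5, 7)` the consumer commits after offset 4, crashes after offset 6, and processes offsets 5 and 6 a second time after the restart: nothing is lost, but duplicates are possible.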

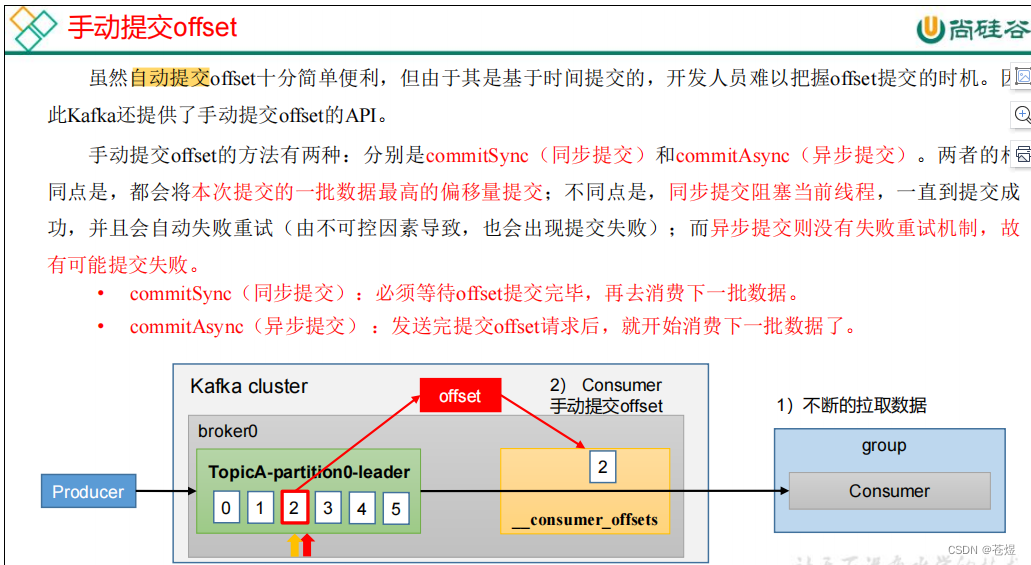

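If you need to control offset commits yourself, turn off automatic commit and commit offsets manually. Note that the offset you commit must always be the offset of the record just consumed plus 1, because the committed offset is the position from which the consumer will fetch next.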
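The "+1" rule can be made concrete with a small helper that, given the records handled in one poll, computes what to commit for each partition: the highest processed offset plus one. It is plain Java; `ProcessedRecord` is a hypothetical stand-in for `ConsumerRecord`, and partitions are keyed by a `"topic-partition"` string instead of Kafka's `TopicPartition`.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class CommitOffsets {

    /** Hypothetical stand-in for ConsumerRecord, holding only the fields needed here. */
    static final class ProcessedRecord {
        final String topic;
        final int partition;
        final long offset;

        ProcessedRecord(String topic, int partition, long offset) {
            this.topic = topic;
            this.partition = partition;
            this.offset = offset;
        }
    }

    /** Returns "topic-partition" -> next offset to fetch (highest processed offset + 1). */
    static Map<String, Long> toCommit(List<ProcessedRecord> records) {
        Map<String, Long> result = new HashMap<>();
        for (ProcessedRecord r : records) {
            String key = r.topic + "-" + r.partition;
            // Keep the largest "offset + 1" seen for each partition.
            result.merge(key, r.offset + 1, Long::max);
        }
        return result;
    }
}
```

In the real client, this value is what goes into `new OffsetAndMetadata(record.offset() + 1)`.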

1: Consumer with automatic offset commit

```java
package com.kafka.offset;

import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;
import org.apache.kafka.common.serialization.StringDeserializer;

import java.time.Duration;
import java.util.Properties;
import java.util.regex.Pattern;

public class KafkaConsumerDemo_01 {
    public static void main(String[] args) {
        // 1. Kafka connection settings
        Properties props = new Properties();
        props.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "CentOSA:9092,CentOSB:9092,CentOSC:9092");
        props.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        props.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        props.put(ConsumerConfig.GROUP_ID_CONFIG, "group01");
        // Commit offsets automatically every 10 s (the default interval is 5 s)
        props.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, true);
        props.put(ConsumerConfig.AUTO_COMMIT_INTERVAL_MS_CONFIG, 10000);

        // 2. Create the consumer
        KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);

        // 3. Subscribe to every topic whose name starts with "topic"
        consumer.subscribe(Pattern.compile("^topic.*$"));

        while (true) {
            ConsumerRecords<String, String> records = consumer.poll(Duration.ofSeconds(1));
            for (ConsumerRecord<String, String> record : records) {
                System.out.println("key:" + record.key() + ",value:" + record.value()
                        + ",partition:" + record.partition() + ",offset:" + record.offset());
            }
        }
    }
}
```

2: Manual commit

```java
package com.kafka.offset;

import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;
import org.apache.kafka.clients.consumer.OffsetAndMetadata;
import org.apache.kafka.common.TopicPartition;
import org.apache.kafka.common.serialization.StringDeserializer;

import java.time.Duration;
import java.util.Collections;
import java.util.Map;
import java.util.Properties;
import java.util.regex.Pattern;

public class KafkaConsumerDemo_02 {
    public static void main(String[] args) {
        // 1. Kafka connection settings
        Properties props = new Properties();
        props.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "CentOSA:9092,CentOSB:9092,CentOSC:9092");
        props.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        props.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        props.put(ConsumerConfig.GROUP_ID_CONFIG, "group01");
        // Turn off automatic commit; offsets are committed by hand below
        props.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, false);

        // 2. Create the consumer
        KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);

        // 3. Subscribe to every topic whose name starts with "topic"
        consumer.subscribe(Pattern.compile("^topic.*$"));

        while (true) {
            ConsumerRecords<String, String> records = consumer.poll(Duration.ofSeconds(1));
            for (ConsumerRecord<String, String> record : records) {
                long offset = record.offset();
                System.out.println("key:" + record.key() + ",value:" + record.value()
                        + ",partition:" + record.partition() + ",offset:" + offset);

                // Commit offset + 1: the committed value is the NEXT position to fetch
                Map<TopicPartition, OffsetAndMetadata> offsets = Collections.singletonMap(
                        new TopicPartition(record.topic(), record.partition()),
                        new OffsetAndMetadata(offset + 1));
                consumer.commitAsync(offsets, (committedOffsets, exception) -> {
                    if (exception != null) {
                        System.err.println("Commit after offset " + offset + " failed: " + exception);
                    } else {
                        System.out.println("Offset " + offset + " committed (next fetch position " + (offset + 1) + ")!");
                    }
                });
            }
        }
    }
}
```

3: Choosing the starting offset

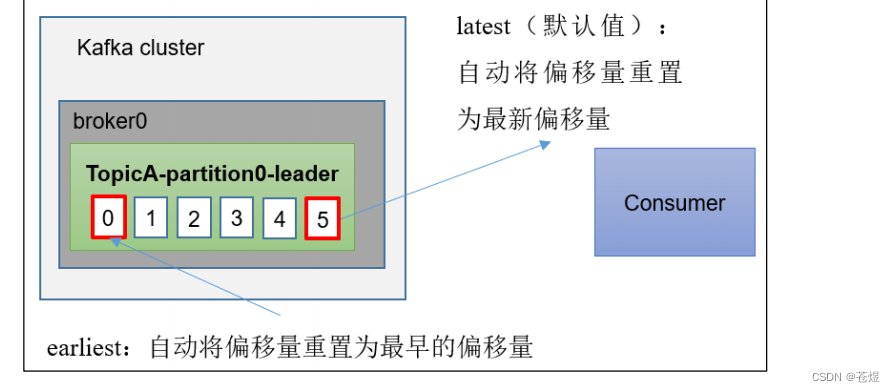

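auto.offset.reset = earliest | latest | none (default: latest).

What should the consumer do when Kafka has no initial offset for it (the consumer group's first run), or when the current offset no longer exists on the server (for example, because that data has been deleted)?

(1) earliest: automatically reset the offset to the earliest offset, equivalent to the console consumer's --from-beginning.

(2) latest (default): automatically reset the offset to the latest offset.

(3) none: throw an exception to the consumer if no previous offset is found for the consumer group.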

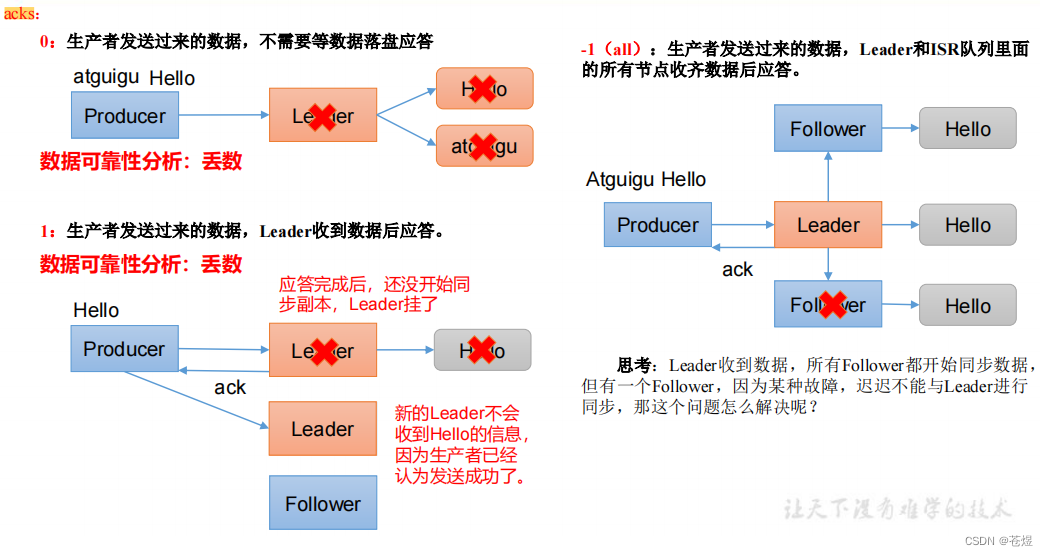

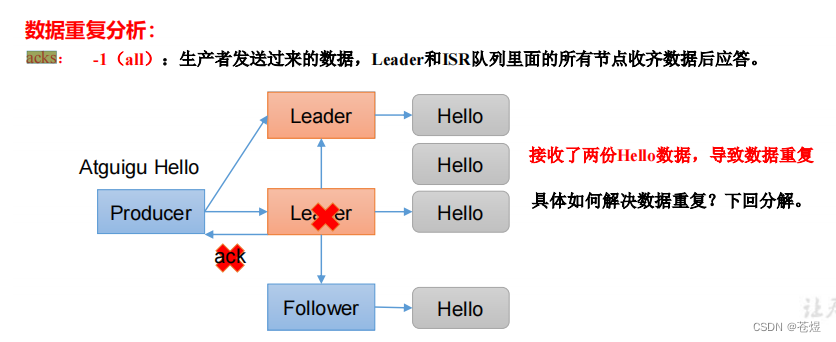

2: Acks and Retries

After a Kafka producer sends a message, it expects the broker to acknowledge (ack) it within a set time. If no ack arrives within that time, the producer tries to resend the message up to n times.

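acks=1 (default in Kafka clients before 3.0; since 3.0 the default is acks=all)

- acks=1 - The leader writes the record to its local log and responds without waiting for full acknowledgement from all followers. If the leader fails immediately after acknowledging the record but before the followers have replicated it, the record is lost.

- acks=0 - The producer does not wait for any acknowledgement from the server at all. The record is added to the socket buffer immediately and considered sent. In this case there is no guarantee that the server has received the record.

- acks=all - The leader waits for the full set of in-sync replicas to acknowledge the record. This guarantees that the record is not lost as long as at least one in-sync replica remains alive. It is the strongest available guarantee and is equivalent to acks=-1.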
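The three settings differ in two things: when the producer gets its response, and whether an acknowledged record can still be lost when the leader fails right away. The plain-Java model below encodes the descriptions above; it is a simplification (it ignores `min.insync.replicas` and unclean leader election), and `AcksModel` and its methods are hypothetical names.

```java
class AcksModel {

    enum Acks { ZERO, ONE, ALL }

    /** How many brokers must have written the record before the send counts as successful. */
    static int writesBeforeSuccess(Acks acks, int inSyncReplicas) {
        switch (acks) {
            case ZERO:
                return 0;              // fire-and-forget: no broker confirmation at all
            case ONE:
                return 1;              // only the leader's local log
            default:
                return inSyncReplicas; // leader plus every in-sync follower
        }
    }

    /**
     * Can an acknowledged record be lost if the leader dies right after acknowledging,
     * before any follower has copied it?
     */
    static boolean lostIfLeaderFailsImmediately(Acks acks, int inSyncReplicas) {
        // The record survives only if a broker other than the leader already holds it.
        return writesBeforeSuccess(acks, inSyncReplicas) <= 1;
    }
}
```

The case of acks=all with only the leader in sync behaves like acks=1, which is why acks=all is commonly paired with a `min.insync.replicas` of at least 2.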

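If the producer does not receive an ack from the Kafka leader within the allotted time, Kafka can resend the record through the retries mechanism.

request.timeout.ms = 30000 (default time to wait for a response)

retries = 2147483647 (default number of retries)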
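Retries cover lost or late acknowledgements, but they also show why idempotent writes matter: if the broker did write the record and only the ack was lost or arrived after `request.timeout.ms`, the retry writes it a second time. The plain-Java simulation below uses hypothetical names; it assumes every attempt reaches the broker, and the `ackLost` array scripts which acknowledgements never come back.

```java
import java.util.ArrayList;
import java.util.List;

class RetryDuplicateSimulation {

    /**
     * Sends one record with up to maxRetries retries.
     * ackLost[i] == true means attempt i was written by the broker,
     * but the producer never saw the acknowledgement (a timeout).
     * Returns the broker's log once the send finishes.
     */
    static List<String> send(String value, int maxRetries, boolean[] ackLost) {
        List<String> brokerLog = new ArrayList<>();
        for (int attempt = 0; attempt <= maxRetries; attempt++) {
            brokerLog.add(value); // the broker appends the record
            boolean timedOut = attempt < ackLost.length && ackLost[attempt];
            if (!timedOut) {
                return brokerLog; // ack received: stop retrying
            }
            // No ack within the timeout: the producer retries the same record.
        }
        return brokerLog; // retries exhausted
    }
}
```

`send("value0", 3, new boolean[] {true, false})` leaves `value0` in the broker log twice.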

```java
package com.kafka.acks;

import org.apache.kafka.clients.producer.KafkaProducer;
import org.apache.kafka.clients.producer.ProducerConfig;
import org.apache.kafka.clients.producer.ProducerRecord;
import org.apache.kafka.common.serialization.StringSerializer;

import java.util.Properties;

public class KafkaProducerDemo_02 {
    public static void main(String[] args) {
        // 1. Connection settings
        Properties props = new Properties();
        props.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "CentOSA:9092,CentOSB:9092,CentOSC:9092");
        props.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());
        props.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());
        // Custom interceptor (not shown in this section); remove this line if you do not have it
        props.put(ProducerConfig.INTERCEPTOR_CLASSES_CONFIG, UserDefineProducerInterceptor.class.getName());
        // Deliberately tiny 1 ms timeout so acks time out and the retry path is exercised
        props.put(ProducerConfig.REQUEST_TIMEOUT_MS_CONFIG, 1);
        // Wait for all in-sync replicas ("-1" is the same as "all")
        props.put(ProducerConfig.ACKS_CONFIG, "-1");
        // Retry a failed send at most 3 times
        props.put(ProducerConfig.RETRIES_CONFIG, 3);

        // 2. Create the producer
        KafkaProducer<String, String> producer = new KafkaProducer<>(props);

        // 3. Build and send one record
        for (int i = 0; i < 1; i++) {
            ProducerRecord<String, String> record = new ProducerRecord<>("topic01", "key" + i, "value" + i);
            producer.send(record);
        }

        producer.close();
    }
}
```

3: Idempotent writes

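HTTP/1.1 defines idempotence as follows: requesting a resource once or many times should have the same effect on the resource itself (apart from issues such as network timeouts). In other words, executing the operation any number of times affects the resource exactly as executing it once does.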
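A minimal plain-Java illustration of this definition (class and method names are hypothetical): setting a value is idempotent, adding to it is not.

```java
class IdempotenceDemo {
    private long balance;

    /** Idempotent: applying it once or many times leaves the same state. */
    void setBalance(long amount) {
        balance = amount;
    }

    /** Not idempotent: every repetition changes the state again. */
    void deposit(long amount) {
        balance += amount;
    }

    long balance() {
        return balance;
    }
}
```

A producer retry is an "append this record again" request, which behaves like `deposit` unless the broker can recognize the repeat.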

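Kafka added support for idempotence in version 0.11.0.0. Idempotence is a producer-side property: it lets the producer retry without writing the same message twice, so that, together with acks=all and retries, messages are neither lost nor duplicated. The key to idempotence is that the server can tell whether a request is a repeat and filter repeats out. Telling repeats apart requires two things: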

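A unique identifier: to tell whether a request is a repeat, the request must carry a unique identifier. In a payment request, for example, the order number is the unique identifier.

A record of the identifiers already processed: a unique identifier alone is not enough; the server must also remember which requests it has already handled. When a new request arrives, its identifier is compared with the processing records; if the same identifier is found there, the request is a duplicate and is rejected.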
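The two requirements map directly onto code: a unique ID on each request and a record of the IDs already handled. Below is a plain-Java sketch with order numbers as the unique ID; `PaymentDeduplicator` is hypothetical, and the in-memory `Set` stands in for the durable store a real service would need.

```java
import java.util.HashSet;
import java.util.Set;

class PaymentDeduplicator {
    private final Set<String> processedOrderIds = new HashSet<>();
    private long totalCharged;

    /** Returns true if the payment was applied, false if it was rejected as a duplicate. */
    boolean pay(String orderId, long amount) {
        // Set.add returns false when the ID is already present, i.e. a repeated request.
        if (!processedOrderIds.add(orderId)) {
            return false;
        }
        totalCharged += amount;
        return true;
    }

    long totalCharged() {
        return totalCharged;
    }
}
```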

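Idempotence is also described as exactly once (for writes from one producer session to one partition): to stop a message from being processed multiple times, it must be persisted to the Kafka topic only once. During initialization, Kafka assigns the producer a unique ID called the Producer ID (PID).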

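The PID and a sequence number are bundled with each message sent to the broker. Because sequence numbers start at zero and increase monotonically, the broker accepts a message only if its sequence number is exactly 1 greater than that of the last committed message for that PID/TopicPartition pair. If that is not the case, the broker concludes that the producer is resending the message.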
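The broker-side rule can be modeled as a map from (PID, partition) to the last accepted sequence number. This is a simplified plain-Java model with hypothetical names, not the broker's code: real brokers also track producer epochs and a small window of recent batches, and a gap in the sequence is reported back to the producer as an error rather than silently dropped.

```java
import java.util.HashMap;
import java.util.Map;

class SequenceDeduplicator {

    enum Result { APPENDED, DUPLICATE, OUT_OF_ORDER }

    // Key: "pid/topic-partition"; value: last sequence number appended for that key.
    private final Map<String, Integer> lastSequence = new HashMap<>();

    Result append(long pid, String topicPartition, int sequence) {
        String key = pid + "/" + topicPartition;
        int last = lastSequence.getOrDefault(key, -1); // nothing written yet: expect 0
        if (sequence == last + 1) {
            lastSequence.put(key, sequence);
            return Result.APPENDED;
        }
        if (sequence <= last) {
            return Result.DUPLICATE;    // a resend of something already written: drop it
        }
        return Result.OUT_OF_ORDER;     // a gap: an earlier message is missing
    }
}
```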

enable.idempotence = false (default in Kafka clients before 3.0; since 3.0 the default is true)

Note: to use idempotence, retries must be greater than 0 and acks must be all.
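These constraints can be checked before the producer is built. The hypothetical helper below validates a `java.util.Properties` by its plain configuration keys; besides the two rules above, it includes the limit of at most 5 for `max.in.flight.requests.per.connection` that the Kafka client also enforces for idempotent producers, and it only inspects values that are set explicitly.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

class IdempotenceConfigCheck {

    /** Returns human-readable problems; an empty list means the settings are compatible. */
    static List<String> problems(Properties props) {
        List<String> problems = new ArrayList<>();
        if (!"true".equals(String.valueOf(props.get("enable.idempotence")))) {
            return problems; // idempotence not explicitly requested: nothing to check
        }
        Object acks = props.get("acks");
        if (acks != null && !("all".equals(acks.toString()) || "-1".equals(acks.toString()))) {
            problems.add("acks must be all (or -1)");
        }
        Object retries = props.get("retries");
        if (retries != null && Integer.parseInt(retries.toString()) <= 0) {
            problems.add("retries must be greater than 0");
        }
        Object inFlight = props.get("max.in.flight.requests.per.connection");
        if (inFlight != null && Integer.parseInt(inFlight.toString()) > 5) {
            problems.add("max.in.flight.requests.per.connection must be at most 5");
        }
        return problems;
    }
}
```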

```java
package com.kafka.acks;

import org.apache.kafka.clients.producer.KafkaProducer;
import org.apache.kafka.clients.producer.ProducerConfig;
import org.apache.kafka.clients.producer.ProducerRecord;
import org.apache.kafka.common.serialization.StringSerializer;

import java.util.Properties;

// Renamed from KafkaProducerDemo_02 so it does not clash with the acks example in the same package
public class KafkaProducerDemo_03 {
    public static void main(String[] args) {
        // 1. Connection settings
        Properties props = new Properties();
        props.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "CentOSA:9092,CentOSB:9092,CentOSC:9092");
        props.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());
        props.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());
        // Custom interceptor (not shown in this section); remove this line if you do not have it
        props.put(ProducerConfig.INTERCEPTOR_CLASSES_CONFIG, UserDefineProducerInterceptor.class.getName());
        // Deliberately tiny 1 ms timeout to force retries
        props.put(ProducerConfig.REQUEST_TIMEOUT_MS_CONFIG, 1);
        // Idempotence requires acks=all (-1) and retries > 0
        props.put(ProducerConfig.ACKS_CONFIG, "-1");
        props.put(ProducerConfig.RETRIES_CONFIG, 3);
        // With idempotence on, retried sends are not written twice
        props.put(ProducerConfig.ENABLE_IDEMPOTENCE_CONFIG, true);

        // 2. Create the producer
        KafkaProducer<String, String> producer = new KafkaProducer<>(props);

        // 3. Build and send one record
        for (int i = 0; i < 1; i++) {
            ProducerRecord<String, String> record = new ProducerRecord<>("topic01", "key" + i, "value" + i);
            producer.send(record);
        }

        producer.close();
    }
}
```

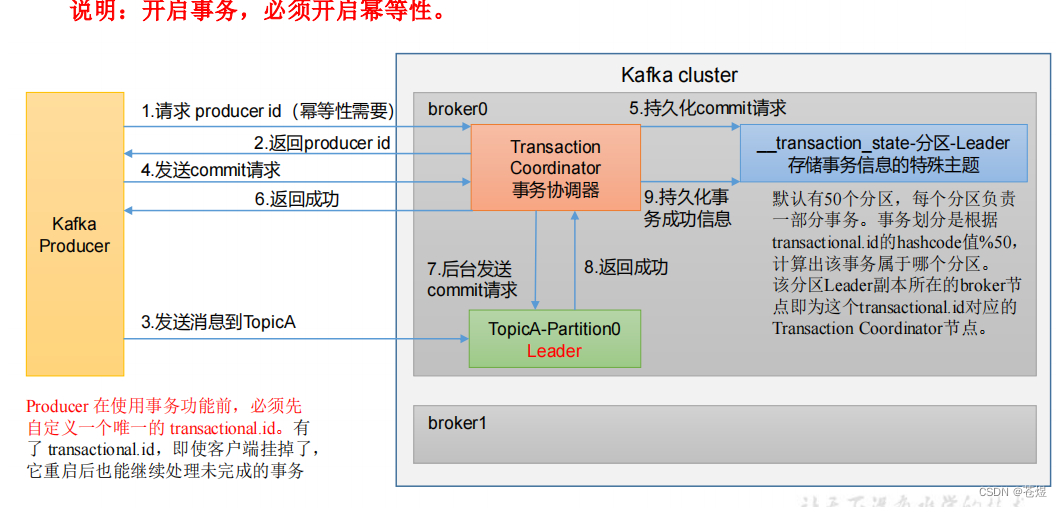

4: Producer transactions

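Besides idempotence, Kafka 0.11.0.0 also introduced transactions. Kafka transactions usually fall into two kinds: producer-only transactions, and consumer & producer transactions. By default, a consumer reads messages at the read_uncommitted level, so it may read data written by failed (aborted) transactions. Therefore, once producer transactions are enabled, the user must also set the consumer's transaction isolation level.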

isolation.level = read_uncommitted (default)

This option has two values, read_committed and read_uncommitted. If transactions are used, the consumer side must set its isolation level to read_committed.
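What the two levels return can be modeled by tagging each record in a partition with the state of the transaction that wrote it. In the simplified plain-Java sketch below (hypothetical names), read_uncommitted returns everything, while read_committed drops records of aborted transactions and stops at the first record of a transaction that is still open, since nothing past it can be delivered until that transaction is decided.

```java
import java.util.ArrayList;
import java.util.List;

class IsolationLevelModel {

    enum TxState { NON_TRANSACTIONAL, COMMITTED, ABORTED, OPEN }

    static final class StoredRecord {
        final String value;
        final TxState state;

        StoredRecord(String value, TxState state) {
            this.value = value;
            this.state = state;
        }
    }

    static List<String> read(List<StoredRecord> partitionLog, boolean readCommitted) {
        List<String> visible = new ArrayList<>();
        for (StoredRecord r : partitionLog) {
            if (readCommitted) {
                if (r.state == TxState.OPEN) {
                    break;    // nothing beyond an undecided transaction is delivered yet
                }
                if (r.state == TxState.ABORTED) {
                    continue; // aborted data is filtered out
                }
            }
            visible.add(r.value);
        }
        return visible;
    }
}
```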

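To enable producer transactions, you only need to set the transactional.id property; once transactions are enabled, the producer also has idempotence enabled by default. The value of "transactional.id" must be unique: only one producer with a given "transactional.id" may be active at a time, and any other one is shut down (fenced).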
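The one-producer-per-`transactional.id` rule is enforced by fencing: each call to `initTransactions()` registers a newer epoch for that ID, and requests carrying an older epoch are rejected (the old client sees `ProducerFencedException`). The following is a much-simplified plain-Java model of that idea; `FencingModel` and its methods are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;

class FencingModel {

    // Coordinator state: transactional.id -> current producer epoch.
    private final Map<String, Integer> epochs = new HashMap<>();

    /** Models initTransactions(): returns the epoch the new producer instance must use. */
    int initTransactions(String transactionalId) {
        // First registration gets epoch 0; each later one bumps the epoch by 1.
        return epochs.merge(transactionalId, 0, (old, unused) -> old + 1);
    }

    /** Models a transactional request: accepted only with the latest epoch for the ID. */
    boolean accept(String transactionalId, int epoch) {
        Integer current = epochs.get(transactionalId);
        return current != null && current == epoch;
    }
}
```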

The transaction API has five methods:

```java
// 1 Initialize transactions
void initTransactions();

// 2 Begin a transaction
void beginTransaction() throws ProducerFencedException;

// 3 Commit consumed offsets inside the transaction (mainly for consume-and-produce use)
//   Clients 2.5+ also offer an overload that takes ConsumerGroupMetadata instead of the group id
void sendOffsetsToTransaction(Map<TopicPartition, OffsetAndMetadata> offsets,
                              String consumerGroupId) throws ProducerFencedException;

// 4 Commit the transaction
void commitTransaction() throws ProducerFencedException;

// 5 Abort the transaction (similar to rolling it back)
void abortTransaction() throws ProducerFencedException;
```
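The five calls have to be used in a fixed order: `initTransactions` once, then repeated begin, send/`sendOffsetsToTransaction`, and commit-or-abort cycles. The hypothetical plain-Java state machine below captures that order; it is only a sketch of the rules implied by the list above, not the client's internal implementation, which has more states.

```java
class TransactionStateMachine {

    enum State { UNINITIALIZED, READY, IN_TRANSACTION }

    private State state = State.UNINITIALIZED;

    // Throws if a call is made in the wrong state.
    private void require(State expected, String call) {
        if (state != expected) {
            throw new IllegalStateException(call + " called in state " + state);
        }
    }

    void initTransactions()         { require(State.UNINITIALIZED, "initTransactions"); state = State.READY; }
    void beginTransaction()         { require(State.READY, "beginTransaction"); state = State.IN_TRANSACTION; }
    void send()                     { require(State.IN_TRANSACTION, "send"); }
    void sendOffsetsToTransaction() { require(State.IN_TRANSACTION, "sendOffsetsToTransaction"); }
    void commitTransaction()        { require(State.IN_TRANSACTION, "commitTransaction"); state = State.READY; }
    void abortTransaction()         { require(State.IN_TRANSACTION, "abortTransaction"); state = State.READY; }

    State state() {
        return state;
    }
}
```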

1: Transactional producer

```java
package com.kafka.transactions;

import org.apache.kafka.clients.producer.KafkaProducer;
import org.apache.kafka.clients.producer.ProducerConfig;
import org.apache.kafka.clients.producer.ProducerRecord;
import org.apache.kafka.common.serialization.StringSerializer;

import java.util.Properties;

public class KafkaProducerDemo01 {
    public static void main(String[] args) {
        // 1. Connection settings
        Properties props = new Properties();
        props.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "CentOSA:9092,CentOSB:9092,CentOSC:9092");
        props.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());
        props.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());
        // Setting transactional.id enables transactions (and, with them, idempotence)
        props.put(ProducerConfig.TRANSACTIONAL_ID_CONFIG, "transaction-id");
        // 10 records x 10 s exceeds the 60 s default transaction.timeout.ms, so raise it
        props.put(ProducerConfig.TRANSACTION_TIMEOUT_CONFIG, 120000);

        // 2. Create the producer
        KafkaProducer<String, String> producer = new KafkaProducer<>(props);
        producer.initTransactions(); // initialize transactions

        try {
            producer.beginTransaction(); // begin the transaction
            // 3. Send 10 records inside the transaction
            for (int i = 0; i < 10; i++) {
                // Pause 10 s per record so you can watch a read_committed consumer
                // receive nothing until the transaction commits
                Thread.sleep(10000);
                ProducerRecord<String, String> record = new ProducerRecord<>("topic01", "key" + i, "value" + i);
                producer.send(record);
            }
            producer.commitTransaction(); // commit the transaction
        } catch (Exception e) {
            producer.abortTransaction(); // abort the transaction
        } finally {
            producer.close();
        }
    }
}
```

2: Transactional consumer (read_committed)

```java
package com.kafka.transactions;

import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;
import org.apache.kafka.common.serialization.StringDeserializer;

import java.time.Duration;
import java.util.Properties;
import java.util.regex.Pattern;

public class KafkaConsumerDemo {
    public static void main(String[] args) {
        // 1. Kafka connection settings
        Properties props = new Properties();
        props.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "CentOSA:9092,CentOSB:9092,CentOSC:9092");
        props.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        props.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        props.put(ConsumerConfig.GROUP_ID_CONFIG, "group01");
        // Only deliver records from committed transactions
        props.put(ConsumerConfig.ISOLATION_LEVEL_CONFIG, "read_committed");

        // 2. Create the consumer
        KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);

        // 3. Subscribe to every topic whose name starts with "topic"
        consumer.subscribe(Pattern.compile("^topic.*$"));

        while (true) {
            ConsumerRecords<String, String> records = consumer.poll(Duration.ofSeconds(1));
            for (ConsumerRecord<String, String> record : records) {
                System.out.println("key:" + record.key() + ",value:" + record.value()
                        + ",partition:" + record.partition() + ",offset:" + record.offset());
            }
        }
    }
}
```

5: Consumer & producer transactions

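Here is an example:

During the Double 11 (November 11) shopping festival:

1: The order system sends order messages to topicA;

2: The warehouse system takes orders from topicA, processes them, and puts the successfully processed orders into topicB;

3: The notification system takes messages from topicB and sends notifications to users.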

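In this kind of flow, if the notification system fails to send, the warehouse system should also roll back, and the orders in the topic should be treated as not yet consumed, without causing duplicate consumption.

This is the case for consumer & producer transactions: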
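The key property of this consume-transform-produce pattern is that the output records and the consumer's offset commit succeed or fail together. The plain-Java simulation below (hypothetical names, no Kafka calls) stages both inside a "transaction" and publishes them only on commit, so a failed batch followed by a retry neither loses nor duplicates committed output.

```java
import java.util.ArrayList;
import java.util.List;

class ConsumeTransformProduceSimulation {
    final List<String> input;                               // e.g. orders in topicA
    final List<String> committedOutput = new ArrayList<>(); // what read_committed readers of topicB see
    int committedOffset = 0;                                // next input offset for the group

    ConsumeTransformProduceSimulation(List<String> input) {
        this.input = input;
    }

    /** Processes up to batchSize records; failAt >= 0 makes the batch fail at that index, before commit. */
    void runBatch(int batchSize, int failAt) {
        List<String> pendingOutput = new ArrayList<>(); // staged, invisible until commit
        int pendingOffset = committedOffset;
        for (int i = 0; i < batchSize && pendingOffset < input.size(); i++) {
            if (i == failAt) {
                return; // "abort": staged output and staged offset are discarded together
            }
            pendingOutput.add("processed-" + input.get(pendingOffset));
            pendingOffset++;
        }
        // "commit": output records and the new offset become visible together
        committedOutput.addAll(pendingOutput);
        committedOffset = pendingOffset;
    }
}
```

If the first batch fails after staging one record, topicB gains nothing and the group's offset stays at 0; the retried batches then publish each order exactly once.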

```java
package com.kafka.transactions;

import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;
import org.apache.kafka.clients.consumer.OffsetAndMetadata;
import org.apache.kafka.clients.producer.KafkaProducer;
import org.apache.kafka.clients.producer.ProducerConfig;
import org.apache.kafka.clients.producer.ProducerRecord;
import org.apache.kafka.common.TopicPartition;
import org.apache.kafka.common.serialization.StringDeserializer;
import org.apache.kafka.common.serialization.StringSerializer;

import java.time.Duration;
import java.util.Collections;
import java.util.HashMap;
import java.util.Map;
import java.util.Properties;

public class KafkaProducerDemo02 {
    public static void main(String[] args) {
        // 1. Create the producer and the consumer
        KafkaProducer<String, String> producer = buildKafkaProducer();
        KafkaConsumer<String, String> consumer = buildKafkaConsumer("group01");
        consumer.subscribe(Collections.singletonList("topic01"));

        producer.initTransactions(); // initialize transactions
        try {
            while (true) {
                ConsumerRecords<String, String> records = consumer.poll(Duration.ofSeconds(1));
                if (records.isEmpty()) {
                    continue; // nothing to process: skip an empty transaction
                }
                // Begin a transaction covering both the output records and the input offsets
                producer.beginTransaction();
                Map<TopicPartition, OffsetAndMetadata> offsets = new HashMap<>();
                for (ConsumerRecord<String, String> record : records) {
                    // Business logic: forward the record to topic02
                    producer.send(new ProducerRecord<>("topic02", record.key(), record.value()));
                    // Record the next offset to consume for this partition (offset + 1)
                    offsets.put(new TopicPartition(record.topic(), record.partition()),
                            new OffsetAndMetadata(record.offset() + 1));
                }
                // Commit the consumed offsets inside the transaction, then commit the transaction.
                // The groupMetadata() overload needs clients 2.5+; older clients pass "group01" instead.
                producer.sendOffsetsToTransaction(offsets, consumer.groupMetadata());
                producer.commitTransaction();
            }
        } catch (Exception e) {
            producer.abortTransaction(); // abort the transaction
        } finally {
            producer.close();
            consumer.close();
        }
    }

    public static KafkaProducer<String, String> buildKafkaProducer() {
        Properties props = new Properties();
        props.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "CentOSA:9092,CentOSB:9092,CentOSC:9092");
        props.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());
        props.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());
        props.put(ProducerConfig.TRANSACTIONAL_ID_CONFIG, "transaction-id");
        return new KafkaProducer<>(props);
    }

    public static KafkaConsumer<String, String> buildKafkaConsumer(String group) {
        Properties props = new Properties();
        props.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "CentOSA:9092,CentOSB:9092,CentOSC:9092");
        props.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        props.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        props.put(ConsumerConfig.GROUP_ID_CONFIG, group);
        // Offsets are committed through the producer's transaction, never automatically
        props.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, false);
        // Only read data from committed upstream transactions
        props.put(ConsumerConfig.ISOLATION_LEVEL_CONFIG, "read_committed");
        return new KafkaConsumer<>(props);
    }
}
```