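The previous article set up PLG (Promtail + Loki + Grafana) log monitoring. This time, let's look at how the current cluster performs.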

The machines: 4 virtual machines, one front end (nginx) and three back ends, each with 4 cores and 4 GB of RAM.

The physical host is a desktop PC with 20 cores and 32 GB of RAM.

First, load-test the original nginx configuration, which is as follows:

```nginx
user nginx;
worker_processes 1;

error_log /var/log/nginx/error.log warn;
pid /var/run/nginx.pid;

events {
    worker_connections 1024;
}

http {
    include /etc/nginx/mime.types;
    default_type application/octet-stream;

    # JSON-format access log
    log_format main '{"@timestamp": "$time_local", '
                    '"remote_addr": "$remote_addr", '
                    '"referer": "$http_referer", '
                    '"request": "$request", '
                    '"status": $status, '
                    '"bytes": $body_bytes_sent, '
                    '"agent": "$http_user_agent", '
                    '"x_forwarded": "$http_x_forwarded_for", '
                    '"up_addr": "$upstream_addr",'
                    '"up_host": "$upstream_http_host",'
                    '"up_resp_time": "$upstream_response_time",'
                    '"request_time": "$request_time"'
                    ' }';

    access_log /var/log/nginx/access.log main;

    sendfile on;
    #tcp_nopush on;

    keepalive_timeout 65;

    #gzip on;

    upstream test.miaohr.com {
        server 192.168.175.128:8070;
        server 192.168.175.129:8070;
        server 192.168.175.130:8070;
    }

    server {
        listen 80;
        server_name test.miaohr.com;
        charset utf-8;

        location / {
            root html;
            index index.html index.htm;
            proxy_pass http://test.miaohr.com;
            proxy_set_header X-Real-IP $remote_addr;
            client_max_body_size 100m;
        }
    }
}
```
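Because `log_format main` writes each access-log line as a JSON object, per-request timings can be read straight from `access.log` to cross-check the JMeter figures. The following is a minimal Python sketch; `parse_entry` and `summarize` are hypothetical helper names, not part of the setup above. It assumes each line is valid JSON, which only holds while no logged value contains a character that nginx's default escaping turns into `\xHH` (for example a `"` in a user agent), so lines that fail to parse are counted and skipped. Adding `escape=json` to the `log_format` (nginx 1.11.8 and later) would avoid that problem.

```python
import json
from statistics import mean


def parse_entry(line):
    """Parse one `log_format main` line into (status, request_time, upstream_time)."""
    entry = json.loads(line)
    # request_time and up_resp_time are quoted in the log format, so they arrive as strings
    request_time = float(entry["request_time"])
    raw = entry["up_resp_time"]
    # $upstream_response_time may be "-" when no upstream was contacted, or hold
    # several values separated by ", " or " : " when more than one server was tried
    parts = [p.strip() for p in raw.replace(":", ",").split(",")]
    upstream = [float(p) for p in parts if p not in ("", "-")]
    upstream_time = sum(upstream) if upstream else None
    return entry["status"], request_time, upstream_time


def summarize(lines):
    """Return (parsed_count, skipped_count, mean request_time in seconds or None)."""
    times, skipped = [], 0
    for line in lines:
        if not line.strip():
            continue  # ignore blank lines
        try:
            times.append(parse_entry(line)[1])
        except (KeyError, ValueError):  # json.JSONDecodeError is a ValueError
            skipped += 1
    return len(times), skipped, (mean(times) if times else None)
```

For example, `summarize(open("/var/log/nginx/access.log", encoding="utf-8"))` gives the mean `request_time` that nginx itself measured over a test run.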

The load test uses JMeter 5.0, run with the following command:

```
jmeter -n -t D:/testYc.jmx -l D:/result.txt -e -o D:/webreport
```

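Where:

D:/testYc.jmx ------> path of the test plan file

D:/result.txt ------> path where the test results file will be written

D:/webreport -------> folder where the HTML report will be generated

`-n` runs JMeter in non-GUI mode, and `-e` builds the HTML report from the results once the run finishes.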
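Since the same test is repeated for each nginx configuration, a small Python wrapper can keep the command identical between runs. This is a sketch, not part of the original setup: `build_jmeter_command` and `run_jmeter` are hypothetical names, and it assumes the JMeter launcher (`jmeter`, or `jmeter.bat` on Windows) is on PATH. It also checks the report folder up front, because JMeter will not write the HTML report into a folder that already exists and is not empty.

```python
import subprocess
from pathlib import Path


def build_jmeter_command(plan, results, report_dir, jmeter="jmeter"):
    """Assemble the non-GUI JMeter command line shown above."""
    return [
        jmeter,
        "-n",                   # non-GUI mode
        "-t", str(plan),        # test plan (.jmx)
        "-l", str(results),     # sample results file
        "-e",                   # generate the HTML report after the run
        "-o", str(report_dir),  # report output folder
    ]


def run_jmeter(plan, results, report_dir, jmeter="jmeter"):
    """Run JMeter once; raises CalledProcessError if JMeter exits non-zero."""
    report = Path(report_dir)
    # Fail early with a clear message instead of letting JMeter reject the folder
    if report.exists() and any(report.iterdir()):
        raise FileExistsError(f"report folder {report} must be empty or absent")
    subprocess.run(build_jmeter_command(plan, results, report_dir, jmeter), check=True)
```

On the Windows host, `run_jmeter("D:/testYc.jmx", "D:/result.txt", "D:/webreport", jmeter="jmeter.bat")` reproduces the command above; rename or delete `D:/webreport` between runs.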

The test is run from cmd on the physical host with 200 threads for 100 s. The report shows an RT (response time) above 180 ms and a TPS of about 980.
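These two numbers can be cross-checked with Little's law. In a closed test where each of N threads sends its next request as soon as the previous one returns, throughput X and mean response time R satisfy X = N / (R + Z), where Z is the think time. The sketch below assumes the test plan has no timers (Z = 0), which the article does not state; the function names are made up for this example.

```python
def expected_tps(threads, avg_rt_seconds, think_time_seconds=0.0):
    """Little's law for a closed workload: X = N / (R + Z)."""
    return threads / (avg_rt_seconds + think_time_seconds)


def implied_rt(threads, tps, think_time_seconds=0.0):
    """Invert Little's law to get the mean response time: R = N / X - Z."""
    return threads / tps - think_time_seconds


print(round(expected_tps(200, 0.180)))     # 1111 TPS if RT were exactly 180 ms
print(round(implied_rt(200, 980) * 1000))  # 204 ms mean RT implied by 980 TPS
```

At 200 threads, exactly 180 ms would allow about 1111 TPS, while the measured ~980 TPS implies a mean RT of about 204 ms, which agrees with "above 180 ms". JMeter's own per-sample overhead acts like a small think time and pulls measured TPS slightly below N / R.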

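Next, switch nginx to persistent (keep-alive) connections to the upstream servers. The keep-alive configuration is as follows: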

```nginx
user nginx;
worker_processes 1;

error_log /var/log/nginx/error.log warn;
pid /var/run/nginx.pid;

events {
    worker_connections 1024;
}

http {
    include /etc/nginx/mime.types;
    default_type application/octet-stream;

    # JSON-format access log
    log_format main '{"@timestamp": "$time_local", '
                    '"remote_addr": "$remote_addr", '
                    '"referer": "$http_referer", '
                    '"request": "$request", '
                    '"status": $status, '
                    '"bytes": $body_bytes_sent, '
                    '"agent": "$http_user_agent", '
                    '"x_forwarded": "$http_x_forwarded_for", '
                    '"up_addr": "$upstream_addr",'
                    '"up_host": "$upstream_http_host",'
                    '"up_resp_time": "$upstream_response_time",'
                    '"request_time": "$request_time"'
                    ' }';

    access_log /var/log/nginx/access.log main;

    sendfile on;
    #tcp_nopush on;

    keepalive_timeout 65;

    #gzip on;

    upstream test.miaohr.com {
        server 192.168.175.128:8070;
        server 192.168.175.129:8070;
        server 192.168.175.130:8070;

        keepalive 500; # max idle upstream connections kept cached per worker process
    }

    server {
        listen 80;
        server_name test.miaohr.com;
        charset utf-8;

        location / {
            root html;
            index index.html index.htm;
            proxy_pass http://test.miaohr.com;
            proxy_set_header Host $host;
            proxy_set_header X-Real-IP $remote_addr;
            proxy_set_header REMOTE-HOST $remote_addr;
            proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
            client_max_body_size 100m;

            proxy_http_version 1.1;          # upstream keep-alive needs HTTP/1.1 (nginx defaults to 1.0)
            proxy_set_header Connection "";  # clear Connection (nginx sends "close" by default) so connections are reused
        }
    }
}
```

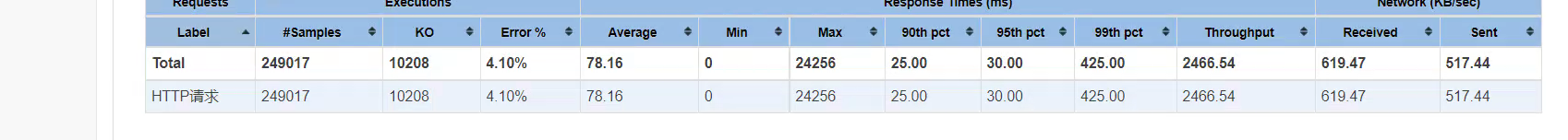
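`keepalive 500` does not open 500 connections up front; it caps how many idle upstream connections each worker process keeps cached, and the nginx documentation states that the least recently used ones are closed when the cap is exceeded. The Python sketch below is a deliberately simplified model of that cache, not nginx's implementation (`IdleConnectionCache` and `simulate` are hypothetical names). It counts how many new TCP connections to the three back ends are needed when 200 concurrent requests are spread round-robin over several rounds, with the cache (`max_idle=500`) and without it (`max_idle=0`, roughly what happens when the `keepalive` directive is absent).

```python
from collections import OrderedDict


class IdleConnectionCache:
    """Simplified model of an upstream `keepalive N` cache in one worker process."""

    def __init__(self, max_idle):
        self.max_idle = max_idle
        self.idle = OrderedDict()  # conn_id -> server, least recently used first
        self.opened = 0            # new TCP connections (handshakes) to upstreams
        self._next_id = 0

    def acquire(self, server):
        # Reuse the most recently released idle connection to this server, if any
        for conn_id in reversed(self.idle):
            if self.idle[conn_id] == server:
                del self.idle[conn_id]
                return conn_id
        # Otherwise open a new connection
        self._next_id += 1
        self.opened += 1
        return self._next_id

    def release(self, conn_id, server):
        # Return the connection to the idle cache; close the LRU one if over the cap
        self.idle[conn_id] = server
        if len(self.idle) > self.max_idle:
            self.idle.popitem(last=False)


def simulate(max_idle, rounds, concurrency, servers):
    """Send `concurrency` simultaneous requests per round, round-robin; return connections opened."""
    cache = IdleConnectionCache(max_idle)
    rr = 0
    for _ in range(rounds):
        in_flight = []
        for _ in range(concurrency):
            server = servers[rr % len(servers)]
            rr += 1
            in_flight.append((cache.acquire(server), server))
        for conn_id, server in in_flight:
            cache.release(conn_id, server)
    return cache.opened
```

With `servers = ["192.168.175.128:8070", "192.168.175.129:8070", "192.168.175.130:8070"]`, `simulate(0, 10, 200, servers)` needs a new connection for every one of the 2000 requests, while `simulate(500, 10, 200, servers)` opens only about 200 and reuses them afterwards.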

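In the second load test, RT dropped to 76 ms and TPS rose to over 2400. Compared with short-lived connections, that is roughly 2.4 times the throughput at less than half the response time. However, the error rate went up. According to the JMeter execution log, the errors are almost all of the following kind: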

```
1650551535574,485,HTTP请求,Non HTTP response code: java.net.BindException,Non HTTP response message: Address already in use: connect,线程组 1-135,text,false,,2437,0,200,200,http://192.168.175.131/getSxbm,0,0,485
```
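The line above is one row of the CSV results file (`D:/result.txt`). Rather than scanning the log by eye, the file can be tallied by response message. The sketch below assumes the default JMeter 5.0 CSV column order listed in `FIELDS`, which matches the 17 values in the row above; if the file starts with a header row, that header is used instead. `parse_results` and `error_summary` are hypothetical names.

```python
import csv
from collections import Counter

# Assumed default JMeter 5.0 CSV column order (jmeter.save.saveservice defaults)
FIELDS = ["timeStamp", "elapsed", "label", "responseCode", "responseMessage",
          "threadName", "dataType", "success", "failureMessage", "bytes",
          "sentBytes", "grpThreads", "allThreads", "URL", "Latency",
          "IdleTime", "Connect"]


def parse_results(text):
    """Parse JMeter CSV results into dicts, using the header row if one is present."""
    lines = text.splitlines()
    has_header = bool(lines) and lines[0].startswith("timeStamp")
    reader = csv.DictReader(lines, fieldnames=None if has_header else FIELDS)
    return list(reader)


def error_summary(rows):
    """Count failed samples grouped by their response message."""
    return Counter(row["responseMessage"] for row in rows if row["success"] == "false")
```

For example, `error_summary(parse_results(open("D:/result.txt", encoding="utf-8").read())).most_common(5)` lists the most frequent failure messages and their counts.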

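After some research, the cause turned out to be the load-generating machine running Windows, which cannot release TCP ports fast enough. A closed connection keeps its local port in TIME_WAIT for a while, and once the ephemeral port range is used up, new connections fail with `BindException: Address already in use`. This is unrelated to cluster performance.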
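A back-of-the-envelope model shows how quickly the client can run out of ports. It rests on assumptions the article does not state. The first is Windows' default dynamic port range of 49152 to 65535. The second is a TIME_WAIT of 240 s, the long-standing `TcpTimedWaitDelay` default; some Windows versions may use a different value. The third, which is hypothetical, is that every request opens a fresh connection. Active connections are ignored, and the function names are made up for this example.

```python
def ephemeral_port_count(low=49152, high=65535):
    """Size of the dynamic (ephemeral) port range; defaults assume Windows Vista or later."""
    return high - low + 1


def max_sustainable_rate(ports, time_wait_seconds):
    """New outbound connections per second that can be opened indefinitely."""
    return ports / time_wait_seconds


def seconds_until_exhausted(new_conns_per_sec, ports, time_wait_seconds):
    """Seconds until every local port is stuck in TIME_WAIT, or None if the rate is sustainable."""
    if new_conns_per_sec <= max_sustainable_rate(ports, time_wait_seconds):
        return None
    # Each closed connection holds its port for time_wait_seconds, so ports fill at this rate
    return ports / new_conns_per_sec
```

Under these assumptions only about 68 new connections per second can be sustained (16384 / 240), and at roughly 2400 new connections per second all ports would be in TIME_WAIT after about 7 seconds.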

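The main difference between persistent and short-lived connections is that, with persistent connections, a TCP connection does not have to be set up and torn down for every request, which saves the cost of the handshake and teardown. Persistent connections are therefore best suited to scenarios with frequent requests.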
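The difference can be observed directly with the Python standard library (3.7+). The sketch below starts a throwaway local HTTP/1.1 server, which stands in for a backend and is not part of the setup above. It sends the same number of requests either over one reused connection or over a new connection each time, and counts how many TCP connections the server accepted. `CountingServer` and `count_connections` are hypothetical names.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class Handler(BaseHTTPRequestHandler):
    # HTTP/1.1 lets the server keep the connection open between requests
    protocol_version = "HTTP/1.1"

    def do_GET(self):
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence per-request logging


class CountingServer(ThreadingHTTPServer):
    """Counts accepted TCP connections (process_request runs once per accept)."""

    def __init__(self, *args, **kwargs):
        super().__init__(*args, **kwargs)
        self.connections = 0

    def process_request(self, request, client_address):
        self.connections += 1  # only called from the serve_forever thread
        super().process_request(request, client_address)


def count_connections(n_requests, keep_alive):
    """Send n_requests GETs and return how many TCP connections the server accepted."""
    server = CountingServer(("127.0.0.1", 0), Handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    host, port = server.server_address
    conn = None
    try:
        for _ in range(n_requests):
            if conn is None or not keep_alive:
                if conn is not None:
                    conn.close()  # short connection: tear down after every request
                conn = http.client.HTTPConnection(host, port, timeout=5)
            conn.request("GET", "/")
            conn.getresponse().read()  # drain the body so the connection can be reused
    finally:
        if conn is not None:
            conn.close()
        server.shutdown()
        server.server_close()
    return server.connections
```

`count_connections(50, keep_alive=True)` reports a single connection, while `count_connections(50, keep_alive=False)` reports 50, one handshake and teardown per request. That per-request overhead is what the upstream `keepalive` setting removes between nginx and the back ends.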