目录

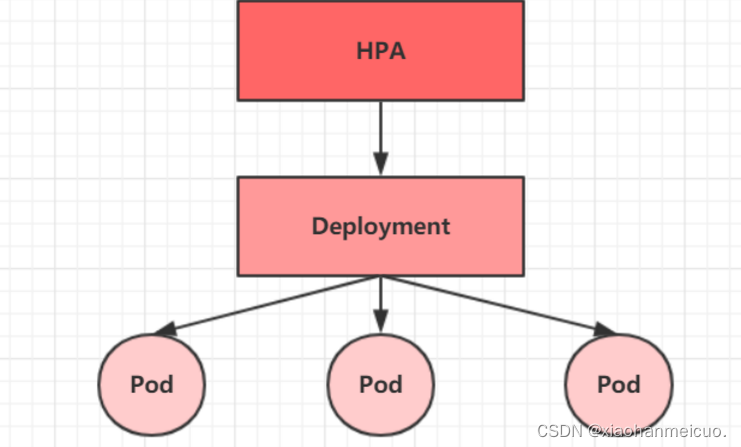

4.?Horizontal Pod Autoscaler(HPA)

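1. Introduction to Pod Controllers

A Pod is the smallest unit that Kubernetes manages. Depending on how they are created, Pods fall into two categories:

- Standalone Pods: created directly in Kubernetes. Once deleted they are gone and are not recreated.

- Controller-managed Pods: created by Kubernetes through a controller. If one is deleted, the controller automatically creates a replacement.

A Pod controller is an intermediate layer that manages Pods. With a controller you only declare how many Pods of which kind you want; the controller creates Pods that satisfy that declaration and keeps each of them in the desired state. If a Pod fails at runtime, the controller restarts or recreates it according to the configured policy.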
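The "declare the desired state, let the controller converge" idea can be illustrated with a minimal sketch. The function name `reconcile` and the action labels are hypothetical and only model the decision; a real controller watches the API server and acts on Pod objects.

```python
from typing import Tuple


def reconcile(desired: int, running: int) -> Tuple[str, int]:
    """Decide what a controller would do to reach the desired Pod count."""
    if running < desired:
        # Too few Pods: create the missing ones from the Pod template.
        return ("create", desired - running)
    if running > desired:
        # Too many Pods: delete the surplus.
        return ("delete", running - desired)
    # Actual state already matches the desired state.
    return ("noop", 0)


if __name__ == "__main__":
    print(reconcile(desired=3, running=1))  # ('create', 2)
```

The controller runs this kind of comparison repeatedly, which is why a deleted controller-managed Pod reappears.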

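Kubernetes offers many types of Pod controllers, each suited to particular scenarios. The common ones are:

- ReplicationController: the original Pod controller, now superseded by ReplicaSet

- ReplicaSet: keeps the number of replicas at the desired value and supports scaling the Pod count and changing the image version

- Deployment: controls Pods through ReplicaSets and supports rolling upgrades and rollbacks

- Horizontal Pod Autoscaler: adjusts the number of Pods horizontally and automatically according to load, smoothing out peaks and troughs

- DaemonSet: runs exactly one replica on every node (or on selected nodes) in the cluster, typically for daemon-style tasks

- Job: the Pods it creates exit as soon as their task completes and are not restarted or recreated; used for one-off tasks

- CronJob: the Pods it creates run periodic tasks and do not need to keep running in the background

- StatefulSet: manages stateful applications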

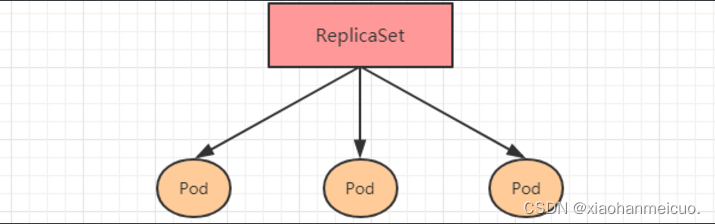

2. ReplicaSet(RS)

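The main job of a ReplicaSet is to keep a given number of Pods running. It continuously watches the state of these Pods and restarts or recreates them when they fail. It also supports scaling the number of Pods and changing the image version. Note that a change to the Pod template only applies to Pods created afterwards; existing Pods are not replaced.

ReplicaSet manifest: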

apiVersion: apps/v1 # API version
kind: ReplicaSet # resource type
metadata: # metadata
  name: # ReplicaSet name
  namespace: # namespace it belongs to
  labels: # labels
    controller: rs
spec: # specification
  replicas: 3 # number of replicas
  selector: # selector: determines which Pods this controller manages
    matchLabels: # label-matching rule
      app: nginx-pod
    matchExpressions: # expression-matching rule
      - {key: app, operator: In, values: [nginx-pod]}
  template: # Pod template, used to create Pods when there are too few replicas
    metadata:
      labels:
        app: nginx-pod
    spec:
      containers:
      - name: nginx
        image: nginx:1.17.1
        ports:
        - containerPort: 80

The configuration items that are new here are the following fields under spec:

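- replicas: the number of replicas, that is, how many Pods this ReplicaSet creates; defaults to 1

- selector: ties the controller to its Pods using the label selector mechanism. Labels are defined on the Pod template and a selector on the controller, which determines the Pods the controller manages

- template: the template the controller uses to create Pods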
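How a selector with both matchLabels and matchExpressions picks Pods can be sketched as follows. This is an illustrative model, not the Kubernetes implementation; the function name `matches` is hypothetical, and the operator semantics follow the documented label-selector rules (all requirements must hold).

```python
def matches(selector: dict, pod_labels: dict) -> bool:
    """Return True if pod_labels satisfy every requirement in the selector."""
    # matchLabels: every key/value pair must be present on the Pod.
    for key, value in selector.get("matchLabels", {}).items():
        if pod_labels.get(key) != value:
            return False
    # matchExpressions: every expression must hold as well.
    for expr in selector.get("matchExpressions", []):
        key, op = expr["key"], expr["operator"]
        values = expr.get("values", [])
        if op == "In":
            ok = key in pod_labels and pod_labels[key] in values
        elif op == "NotIn":
            ok = pod_labels.get(key) not in values
        elif op == "Exists":
            ok = key in pod_labels
        elif op == "DoesNotExist":
            ok = key not in pod_labels
        else:
            raise ValueError(f"unsupported operator: {op}")
        if not ok:
            return False
    return True


if __name__ == "__main__":
    selector = {
        "matchLabels": {"app": "nginx-pod"},
        "matchExpressions": [
            {"key": "app", "operator": "In", "values": ["nginx-pod"]}
        ],
    }
    print(matches(selector, {"app": "nginx-pod"}))  # True
    print(matches(selector, {"app": "redis"}))      # False
```

Because the Pod template carries the label app: nginx-pod, every Pod the controller creates is matched by its own selector.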

Creating a ReplicaSet

Create the file pc-replicaset.yaml with the following content:

[root@k8s-master01 ~]# vim pc-replicaset.yaml

apiVersion: apps/v1
kind: ReplicaSet
metadata:
  name: pc-replicaset
  namespace: dev
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx-pod
  template:
    metadata:
      labels:
        app: nginx-pod
    spec:
      containers:
      - name: nginx
        image: nginx:1.17.2

# Create the ReplicaSet

[root@k8s-master01 ~]# kubectl create -f pc-replicaset.yaml

replicaset.apps/pc-replicaset created

# View the ReplicaSet

# DESIRED: desired number of replicas

# CURRENT: current number of replicas

# READY: number of replicas ready to serve

[root@k8s-master01 ~]# kubectl get rs pc-replicaset -n dev -o wide

NAME DESIRED CURRENT READY AGE CONTAINERS IMAGES SELECTOR

pc-replicaset 3 3 3 24s nginx nginx:1.17.2 app=nginx-pod

# View the Pods that were created

[root@k8s-master01 ~]# kubectl get pod -n dev

NAME READY STATUS RESTARTS AGE

pc-replicaset-jgjw9 1/1 Running 0 41s

pc-replicaset-kw94c 1/1 Running 0 41s

pc-replicaset-qfs9v 1/1 Running 0 41s

Scaling

Method 1:

# Edit the ReplicaSet's replica count: set spec.replicas to 5

[root@k8s-master01 ~]# kubectl edit rs pc-replicaset -n dev

replicaset.apps/pc-replicaset edited

# View the Pods

[root@k8s-master01 ~]# kubectl get pod -n dev

NAME READY STATUS RESTARTS AGE

pc-replicaset-85jtm 1/1 Running 0 52s

pc-replicaset-jgjw9 1/1 Running 0 10m

pc-replicaset-jppr6 1/1 Running 0 52s

pc-replicaset-kw94c 1/1 Running 0 10m

pc-replicaset-qfs9v 1/1 Running 0 10m

Method 2:

# Use the scale command; --replicas=3 sets the count directly

[root@k8s-master01 ~]# kubectl scale rs pc-replicaset --replicas=3 -n dev

replicaset.apps/pc-replicaset scaled

[root@k8s-master01 ~]# kubectl get pods -n dev

NAME READY STATUS RESTARTS AGE

pc-replicaset-jgjw9 1/1 Running 0 16m

pc-replicaset-kw94c 1/1 Running 0 16m

pc-replicaset-qfs9v 1/1 Running 0 16m

Image upgrade

Method 1:

# Edit the ReplicaSet's container image: - image: nginx:1.17.1

[root@k8s-master01 ~]# kubectl edit rs pc-replicaset -n dev

replicaset.apps/pc-replicaset edited

# Check whether the image has changed

[root@k8s-master01 ~]# kubectl get rs -n dev -o wide

NAME DESIRED CURRENT READY AGE CONTAINERS IMAGES SELECTOR

pc-replicaset 3 3 3 23m nginx nginx:1.17.1 app=nginx-pod

Method 2:

# kubectl set image rs <rs-name> <container>=<image:version> -n <namespace>

[root@k8s-master01 ~]# kubectl set image rs pc-replicaset nginx=nginx:1.17.2 -n dev

replicaset.apps/pc-replicaset image updated

[root@k8s-master01 ~]# kubectl get rs -n dev -o wide

NAME DESIRED CURRENT READY AGE CONTAINERS IMAGES SELECTOR

pc-replicaset 3 3 3 35m nginx nginx:1.17.2 app=nginx-pod

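Deleting a ReplicaSet

# kubectl delete removes the ReplicaSet together with the Pods it manages

# Before deleting the ReplicaSet, Kubernetes scales its replicas to 0, waits for all Pods to be deleted, and then deletes the ReplicaSet object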

[root@k8s-master01 ~]# kubectl delete rs pc-replicaset -n dev

replicaset.apps "pc-replicaset" deleted

[root@k8s-master01 ~]# kubectl get pod -n dev -o wide

No resources found in dev namespace.

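# To delete only the ReplicaSet object and keep its Pods, add --cascade=false to kubectl delete (not recommended); newer kubectl versions replace this with --cascade=orphan, as the warning below shows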

[root@k8s-master01 ~]# kubectl delete rs pc-replicaset -n dev --cascade=false

warning: --cascade=false is deprecated (boolean value) and can be replaced with --cascade=orphan.

replicaset.apps "pc-replicaset" deleted

[root@k8s-master01 ~]# kubectl get pod -n dev

NAME READY STATUS RESTARTS AGE

pc-replicaset-7vmwn 1/1 Running 0 23s

pc-replicaset-hwnzl 1/1 Running 0 23s

pc-replicaset-wlt2r 1/1 Running 0 23s

# You can also delete directly from the YAML file (recommended)

[root@k8s-master01 ~]# kubectl delete -f pc-replicaset.yaml

replicaset.apps "pc-replicaset" deleted

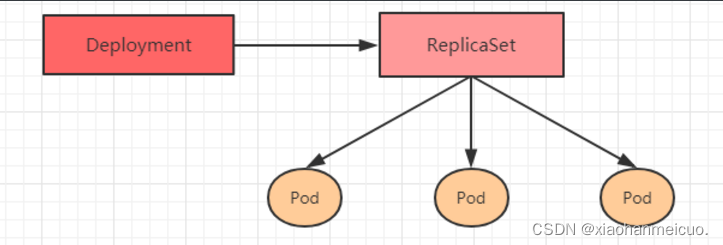

3. Deployment(Deploy)

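To better address service orchestration, Kubernetes introduced the Deployment controller in v1.2. Notably, a Deployment does not manage Pods directly; it manages them indirectly through ReplicaSets. That is, the Deployment manages ReplicaSets and the ReplicaSets manage Pods. This makes Deployment more capable than ReplicaSet.

The main features of a Deployment are:

- Supports everything a ReplicaSet supports

- Supports pausing and resuming a rollout

- Supports rolling upgrades and rollbacks

Deployment manifest: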

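apiVersion: apps/v1 # API version
kind: Deployment # resource type
metadata: # metadata
  name: # Deployment name
  namespace: # namespace it belongs to
  labels: # labels
    controller: deploy
spec: # specification
  replicas: 3 # number of replicas
  revisionHistoryLimit: 3 # number of old revisions to keep
  paused: false # pause the rollout; defaults to false
  progressDeadlineSeconds: 600 # rollout timeout in seconds; defaults to 600
  strategy: # update strategy
    type: RollingUpdate # rolling update strategy
    rollingUpdate: # rolling update parameters
      maxSurge: 30% # maximum number of Pods above the desired count; a percentage or an integer
      maxUnavailable: 30% # maximum number of unavailable Pods; a percentage or an integer
  selector: # selector: determines which Pods this controller manages
    matchLabels: # label-matching rule
      app: nginx-pod
    matchExpressions: # expression-matching rule
      - {key: app, operator: In, values: [nginx-pod]}
  template: # Pod template, used to create Pods when there are too few replicas
    metadata:
      labels:
        app: nginx-pod
    spec:
      containers:
      - name: nginx
        image: nginx:1.17.2
        ports:
        - containerPort: 80

Creating a Deployment

Create the file pc-deployment.yaml with the following content: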

[root@k8s-master01 ~]# vim pc-deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: pc-deployment
  namespace: dev
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx-pod
  template:
    metadata:
      labels:
        app: nginx-pod
    spec:
      containers:
      - name: nginx
        image: nginx:1.17.2

# Create the Deployment

[root@k8s-master01 ~]# kubectl create -f pc-deployment.yaml --record=true

Flag --record has been deprecated, --record will be removed in the future

deployment.apps/pc-deployment created

# View the Deployment

# UP-TO-DATE: number of Pods at the latest version

# AVAILABLE: number of Pods currently available

[root@k8s-master01 ~]# kubectl get deploy pc-deployment -n dev

NAME READY UP-TO-DATE AVAILABLE AGE

pc-deployment 3/3 3 3 36s

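# View the ReplicaSet

# The ReplicaSet name is the Deployment name followed by a hash of the Pod template (the pod-template-hash label, 10 characters here)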

[root@k8s-master01 ~]# kubectl get rs -n dev

NAME DESIRED CURRENT READY AGE

pc-deployment-86f4996797 3 3 3 45s

# View the Pods

[root@k8s-master01 ~]# kubectl get pods -n dev

NAME READY STATUS RESTARTS AGE

pc-deployment-86f4996797-79k99 1/1 Running 0 64s

pc-deployment-86f4996797-bhp7s 1/1 Running 0 64s

pc-deployment-86f4996797-jwf77 1/1 Running 0 64s

Scaling

Method 1:

# Change the replica count to 5

[root@k8s-master01 ~]# kubectl scale deploy pc-deployment --replicas=5 -n dev

deployment.apps/pc-deployment scaled

# View the Deployment

[root@k8s-master01 ~]# kubectl get deploy pc-deployment -n dev

NAME READY UP-TO-DATE AVAILABLE AGE

pc-deployment 5/5 5 5 41m

# View the Pods

[root@k8s-master01 ~]# kubectl get pods -n dev

NAME READY STATUS RESTARTS AGE

pc-deployment-86f4996797-79k99 1/1 Running 0 41m

pc-deployment-86f4996797-bhp7s 1/1 Running 0 41m

pc-deployment-86f4996797-gwf9z 1/1 Running 0 34s

pc-deployment-86f4996797-gwzzx 1/1 Running 0 34s

pc-deployment-86f4996797-jwf77 1/1 Running 0 41m

Method 2:

# Edit the Deployment's replica count: set spec.replicas to 3

[root@k8s-master01 ~]# kubectl edit deploy pc-deployment -n dev

deployment.apps/pc-deployment edited

# View the Pods

[root@k8s-master01 ~]# kubectl get pods -n dev

NAME READY STATUS RESTARTS AGE

pc-deployment-86f4996797-79k99 1/1 Running 0 46m

pc-deployment-86f4996797-bhp7s 1/1 Running 0 46m

pc-deployment-86f4996797-jwf77 1/1 Running 0 46m

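Image update

A Deployment supports two update strategies, recreate and rolling update, selected through the strategy field.

strategy: specifies how new Pods replace old Pods. It supports two attributes:

    type: the strategy type, one of:

        Recreate: all existing Pods are killed before new Pods are created

        RollingUpdate: Pods are replaced a few at a time, so two versions of the Pod coexist during the update

    rollingUpdate: takes effect only when type is RollingUpdate and sets its parameters. It supports two attributes:

        maxUnavailable: the maximum number of Pods that may be unavailable during the update; defaults to 25%.

        maxSurge: the maximum number of Pods that may exist above the desired count during the update; defaults to 25%.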
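To make the two rollingUpdate parameters concrete, the sketch below converts them into Pod-count bounds. It assumes the documented rounding rules (a percentage for maxSurge is rounded up, one for maxUnavailable is rounded down); the function names `resolve` and `rolling_bounds` are hypothetical and not part of any Kubernetes API.

```python
def resolve(value, replicas: int, round_up: bool) -> int:
    """Turn an integer or a percentage string such as '25%' into a Pod count."""
    if isinstance(value, str) and value.endswith("%"):
        percent = int(value[:-1])
        if round_up:
            # Integer ceiling of replicas * percent / 100.
            return -(-replicas * percent // 100)
        return replicas * percent // 100
    return int(value)


def rolling_bounds(replicas: int, max_surge="25%", max_unavailable="25%"):
    """Return (max total Pods, min available Pods) during a rolling update."""
    surge = resolve(max_surge, replicas, round_up=True)
    unavailable = resolve(max_unavailable, replicas, round_up=False)
    return replicas + surge, replicas - unavailable


if __name__ == "__main__":
    # 3 replicas with the 25% defaults: one extra Pod allowed, none may be missing.
    print(rolling_bounds(3))   # (4, 3)
    print(rolling_bounds(10))  # (13, 8)
```

With 3 replicas and the defaults, a new Pod therefore has to become ready before an old one is terminated.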

Recreate update

1) Edit pc-deployment.yaml and add the update strategy under spec

spec:
  strategy: # update strategy
    type: Recreate # recreate update

2) Create the Deployment and verify

# Change the image

[root@k8s-master01 ~]# kubectl set image deployment pc-deployment nginx=nginx:1.17.1 -n dev

deployment.apps/pc-deployment image updated

# Watch the change; the Pods are replaced in order

[root@k8s-master01 ~]# kubectl get pods -n dev -w

NAME READY STATUS RESTARTS AGE

pc-deployment-5948ccc5f-tz7kv 0/1 Terminating 0 73s

pc-deployment-6f7f65b46d-j5rll 0/1 ContainerCreating 0 1s

pc-deployment-86f4996797-79k99 1/1 Running 0 19h

pc-deployment-86f4996797-bhp7s 1/1 Running 0 19h

pc-deployment-86f4996797-jwf77 1/1 Running 0 19h

pc-deployment-5948ccc5f-tz7kv 1/1 Terminating 0 87s

pc-deployment-5948ccc5f-tz7kv 0/1 Terminating 0 88s

pc-deployment-5948ccc5f-tz7kv 0/1 Terminating 0 88s

pc-deployment-5948ccc5f-tz7kv 0/1 Terminating 0 88s

pc-deployment-6f7f65b46d-j5rll 1/1 Running 0 100s

pc-deployment-86f4996797-79k99 1/1 Terminating 0 19h

pc-deployment-6f7f65b46d-tbzqj 0/1 Pending 0 0s

pc-deployment-6f7f65b46d-tbzqj 0/1 Pending 0 0s

pc-deployment-6f7f65b46d-tbzqj 0/1 ContainerCreating 0 0s

pc-deployment-6f7f65b46d-tbzqj 1/1 Running 0 1s

pc-deployment-86f4996797-79k99 0/1 Terminating 0 19h

pc-deployment-86f4996797-jwf77 1/1 Terminating 0 19h

pc-deployment-86f4996797-79k99 0/1 Terminating 0 19h

pc-deployment-6f7f65b46d-z6mxm 0/1 Pending 0 0s

pc-deployment-86f4996797-79k99 0/1 Terminating 0 19h

pc-deployment-6f7f65b46d-z6mxm 0/1 Pending 0 0s

pc-deployment-6f7f65b46d-z6mxm 0/1 ContainerCreating 0 0s

pc-deployment-86f4996797-jwf77 0/1 Terminating 0 19h

pc-deployment-86f4996797-jwf77 0/1 Terminating 0 19h

pc-deployment-86f4996797-jwf77 0/1 Terminating 0 19h

pc-deployment-6f7f65b46d-z6mxm 1/1 Running 0 1s

pc-deployment-86f4996797-bhp7s 1/1 Terminating 0 19h

pc-deployment-86f4996797-bhp7s 0/1 Terminating 0 19h

pc-deployment-86f4996797-bhp7s 0/1 Terminating 0 19h

pc-deployment-86f4996797-bhp7s 0/1 Terminating 0 19h

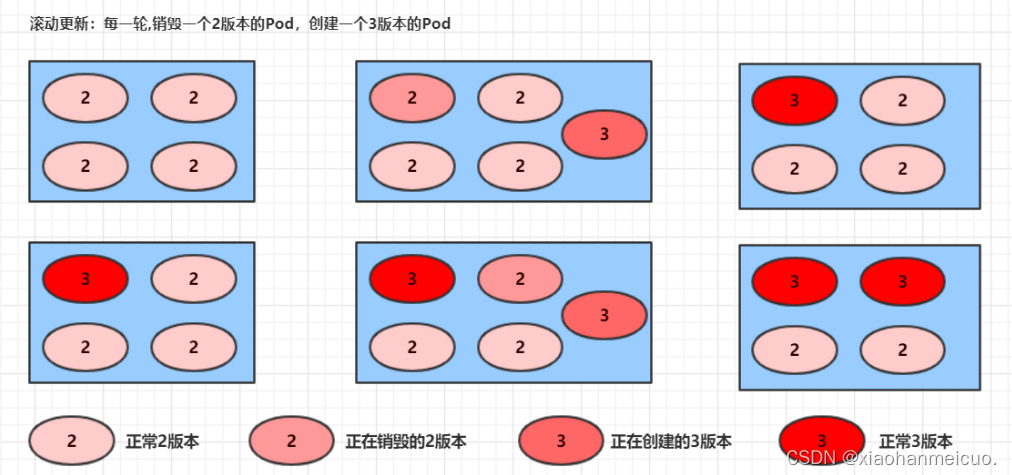

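Rolling update

1) Edit pc-deployment.yaml and add the update strategy under spec

spec:
  strategy: # update strategy
    type: RollingUpdate # rolling update strategy
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%

2) Create the Deployment and verify

# Change the image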

[root@k8s-master01 ~]# kubectl set image deployment pc-deployment nginx=nginx:1.17.3 -n dev

deployment.apps/pc-deployment image updated

# Watch the upgrade

[root@k8s-master01 ~]# kubectl get pods -n dev -w

NAME READY STATUS RESTARTS AGE

pc-deployment-6f7f65b46d-j5rll 1/1 Running 0 38m

pc-deployment-6f7f65b46d-tbzqj 1/1 Running 0 36m

pc-deployment-6f7f65b46d-z6mxm 1/1 Running 0 36m

pc-deployment-79f7d88458-s8wt6 0/1 ContainerCreating 0 10s

pc-deployment-79f7d88458-s8wt6 1/1 Running 0 62s

pc-deployment-6f7f65b46d-z6mxm 1/1 Terminating 0 37m

pc-deployment-79f7d88458-z74g8 0/1 Pending 0 0s

pc-deployment-79f7d88458-z74g8 0/1 Pending 0 0s

pc-deployment-79f7d88458-z74g8 0/1 ContainerCreating 0 0s

pc-deployment-6f7f65b46d-z6mxm 0/1 Terminating 0 37m

pc-deployment-6f7f65b46d-z6mxm 0/1 Terminating 0 37m

pc-deployment-6f7f65b46d-z6mxm 0/1 Terminating 0 37m

pc-deployment-79f7d88458-z74g8 1/1 Running 0 2s

pc-deployment-6f7f65b46d-tbzqj 1/1 Terminating 0 37m

pc-deployment-79f7d88458-rn5nr 0/1 Pending 0 0s

pc-deployment-79f7d88458-rn5nr 0/1 Pending 0 0s

pc-deployment-79f7d88458-rn5nr 0/1 ContainerCreating 0 0s

pc-deployment-79f7d88458-rn5nr 1/1 Running 0 1s

pc-deployment-6f7f65b46d-tbzqj 0/1 Terminating 0 37m

pc-deployment-6f7f65b46d-j5rll 1/1 Terminating 0 39m

pc-deployment-6f7f65b46d-tbzqj 0/1 Terminating 0 37m

pc-deployment-6f7f65b46d-tbzqj 0/1 Terminating 0 37m

pc-deployment-6f7f65b46d-j5rll 0/1 Terminating 0 39m

pc-deployment-6f7f65b46d-j5rll 0/1 Terminating 0 39m

pc-deployment-6f7f65b46d-j5rll 0/1 Terminating 0 39m

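# The update proceeds in a rolling fashion: old Pods are terminated while new ones are created

How the ReplicaSets change during an image update:

# Listing the ReplicaSets shows that the old ones still exist with their Pod count at 0, while a new ReplicaSet has been created with 3 Pods

# This is what makes Deployment rollbacks possible, as explained in detail later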

[root@k8s-master01 ~]# kubectl get rs -n dev

NAME DESIRED CURRENT READY AGE

pc-deployment-5948ccc5f 0 0 0 44m

pc-deployment-6f7f65b46d 0 0 0 42m

pc-deployment-79f7d88458 3 3 3 4m55s

pc-deployment-86f4996797 0 0 0 20h

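Rollback

A Deployment supports pausing and resuming a rollout as well as rolling back to an earlier version.

kubectl rollout: rollout-related functions. It supports the following subcommands:

- status: show the current rollout status

- history: show the rollout history

- pause: pause the rollout

- resume: resume a paused rollout

- restart: restart the rollout

- undo: roll back to the previous revision (use --to-revision to roll back to a specific revision)

# Check the status of the current rollout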

[root@k8s-master01 ~]# kubectl rollout status deploy pc-deployment -n dev

deployment "pc-deployment" successfully rolled out

# View the rollout history

[root@k8s-master01 ~]# kubectl rollout history deploy pc-deployment -n dev

deployment.apps/pc-deployment

REVISION CHANGE-CAUSE

1 kubectl create --filename=pc-deployment.yaml --record=true

2 kubectl create --filename=pc-deployment.yaml --record=true

3 kubectl create --filename=pc-deployment.yaml --record=true

4 kubectl create --filename=pc-deployment.yaml --record=true

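# There are four revision records, which means three upgrades have been completed

# Roll back

# Here --to-revision=2 rolls back to revision 2; if the option is omitted, the rollback goes to the previous revision, which would be revision 3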

[root@k8s-master01 ~]# kubectl rollout undo deployment pc-deployment --to-revision=2 -n dev

deployment.apps/pc-deployment rolled back

# Check: the nginx image is now the one from revision 2

[root@k8s-master01 ~]# kubectl get deploy -n dev -o wide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

pc-deployment 3/3 3 3 23h nginx nginx:1.12.1 app=nginx-pod

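# A Deployment can roll back because it keeps its historical ReplicaSets. To roll back to a revision, it scales the current revision's Pods down to 0 and scales the target revision's Pods up to the desired count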

[root@k8s-master01 ~]# kubectl get rs -n dev

NAME DESIRED CURRENT READY AGE

pc-deployment-5948ccc5f 3 3 3 4h17m

pc-deployment-6f7f65b46d 0 0 0 4h16m

pc-deployment-79f7d88458 0 0 0 3h38m

pc-deployment-86f4996797 0 0 0 23h
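The rollback mechanism described above can be modelled as a small sketch over ReplicaSet replica counts. This is a simplified illustration: the function name `roll_back` is hypothetical, and the real controller shifts replicas gradually according to the update strategy rather than in one step.

```python
def roll_back(replica_sets: dict, target: str, desired: int) -> dict:
    """Return the replica counts after rolling back to the target ReplicaSet.

    replica_sets maps a ReplicaSet name to its current replica count.
    """
    if target not in replica_sets:
        raise KeyError(f"no retained ReplicaSet named {target}")
    # The target revision gets the desired count; every other revision drops to 0.
    return {name: (desired if name == target else 0) for name in replica_sets}


if __name__ == "__main__":
    before = {
        "pc-deployment-5948ccc5f": 0,
        "pc-deployment-6f7f65b46d": 0,
        "pc-deployment-79f7d88458": 3,
        "pc-deployment-86f4996797": 0,
    }
    after = roll_back(before, "pc-deployment-5948ccc5f", desired=3)
    for name, count in after.items():
        print(name, count)
```

The KeyError branch corresponds to a revision whose ReplicaSet is no longer retained, which is why revisionHistoryLimit bounds how far back a rollback can go.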

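Canary release

The Deployment controller lets you control an update while it is in progress, for example by pausing or resuming it.

For example, the update can be paused right after a first batch of new Pods has been created. At that point only part of the application runs the new version while the bulk still runs the old one. A small share of user requests is then routed to the new Pods to observe whether they run stably and as expected. If everything is fine, the rolling update of the remaining Pods continues; otherwise the update is rolled back immediately.

# Update the Deployment's image and pause the rollout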

[root@k8s-master01 ~]# kubectl set image deploy pc-deployment nginx=nginx:1.17.4 -n dev && kubectl rollout pause deployment pc-deployment -n dev

deployment.apps/pc-deployment image updated

deployment.apps/pc-deployment paused

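# Observe the update status

# Watching the rollout shows that one new Pod has been added but no old Pod has been removed as would normally happen, because the rollout was paused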

[root@k8s-master01 ~]# kubectl rollout status deploy pc-deployment -n dev

Waiting for deployment "pc-deployment" rollout to finish: 1 out of 3 new replicas have been updated...

[root@k8s-master01 ~]# kubectl get rs -n dev -o wide

NAME DESIRED CURRENT READY AGE CONTAINERS IMAGES SELECTOR

pc-deployment-5948ccc5f 3 3 3 4h45m nginx nginx:1.12.1 app=nginx-pod,pod-template-hash=5948ccc5f

pc-deployment-6f7f65b46d 0 0 0 4h43m nginx nginx:1.17.1 app=nginx-pod,pod-template-hash=6f7f65b46d

pc-deployment-79f7d88458 0 0 0 4h5m nginx nginx:1.17.3 app=nginx-pod,pod-template-hash=79f7d88458

pc-deployment-86f4996797 0 0 0 24h nginx nginx:1.17.2 app=nginx-pod,pod-template-hash=86f4996797

pc-deployment-cf7c57879 1 1 1 80s nginx nginx:1.17.4 app=nginx-pod,pod-template-hash=cf7c57879

[root@k8s-master01 ~]# kubectl get pods -n dev

NAME READY STATUS RESTARTS AGE

pc-deployment-5948ccc5f-kbv9r 1/1 Running 0 28m

pc-deployment-5948ccc5f-khbm9 1/1 Running 0 28m

pc-deployment-5948ccc5f-v4ptt 1/1 Running 0 28m

pc-deployment-cf7c57879-lfrm4 1/1 Running 0 91s

# Once the updated Pod is confirmed to work, resume the update

[root@k8s-master01 ~]# kubectl rollout resume deploy pc-deployment -n dev

deployment.apps/pc-deployment resumed

# View the final state of the update

[root@k8s-master01 ~]# kubectl get rs -n dev -o wide

NAME DESIRED CURRENT READY AGE CONTAINERS IMAGES SELECTOR

pc-deployment-5948ccc5f 0 0 0 4h48m nginx nginx:1.12.1 app=nginx-pod,pod-template-hash=5948ccc5f

pc-deployment-6f7f65b46d 0 0 0 4h47m nginx nginx:1.17.1 app=nginx-pod,pod-template-hash=6f7f65b46d

pc-deployment-79f7d88458 0 0 0 4h9m nginx nginx:1.17.3 app=nginx-pod,pod-template-hash=79f7d88458

pc-deployment-86f4996797 0 0 0 24h nginx nginx:1.17.2 app=nginx-pod,pod-template-hash=86f4996797

pc-deployment-cf7c57879 3 3 3 5m7s nginx nginx:1.17.4 app=nginx-pod,pod-template-hash=cf7c57879

[root@k8s-master01 ~]# kubectl get pods -n dev

NAME READY STATUS RESTARTS AGE

pc-deployment-cf7c57879-dvgzw 1/1 Running 0 26s

pc-deployment-cf7c57879-lfrm4 1/1 Running 0 5m20s

pc-deployment-cf7c57879-vz8s2 1/1 Running 0 25s

Deleting the Deployment

# Deleting the Deployment also deletes its ReplicaSets and Pods

[root@k8s-master01 ~]# kubectl delete -f pc-deployment.yaml

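deployment.apps "pc-deployment" deleted

4. Horizontal Pod Autoscaler(HPA)

We can already scale Pods out or in by running kubectl scale manually, but this does not match the goal of Kubernetes, which is automation and intelligence. Kubernetes aims to monitor how Pods are being used and adjust their number automatically, and that is the purpose of the Horizontal Pod Autoscaler (HPA) controller.

HPA obtains the utilization of each Pod, compares it with the target defined in the HPA, computes the number of replicas needed, and then adjusts the Pod count. Like Deployment, HPA is itself a Kubernetes resource object. It tracks the load of all target Pods managed by the referenced controller and decides whether the replica count needs to change. This is how HPA works.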
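The comparison and calculation described above can be sketched with the scaling formula from the Kubernetes HPA documentation, desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric). The function name `desired_replicas` is hypothetical, and the 0.1 tolerance is assumed to be the controller's default; this is a simplified model that ignores stabilization windows and Pods that are not ready.

```python
import math


def desired_replicas(current_replicas: int, current_util: float, target_util: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Compute the replica count an HPA would aim for (simplified model)."""
    ratio = current_util / target_util
    # Within the tolerance band the HPA leaves the replica count unchanged.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * ratio)
    # The result is always clamped to the [minReplicas, maxReplicas] range.
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    # One replica at 12% CPU against a 3% target asks for 4 replicas.
    print(desired_replicas(1, current_util=12, target_util=3))  # 4
    # Under very heavy load the result is capped at maxReplicas.
    print(desired_replicas(1, current_util=60, target_util=3))  # 10
```

When load drops back to zero the formula yields 0, and the clamp brings the result back up to minReplicas.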

Experiment:

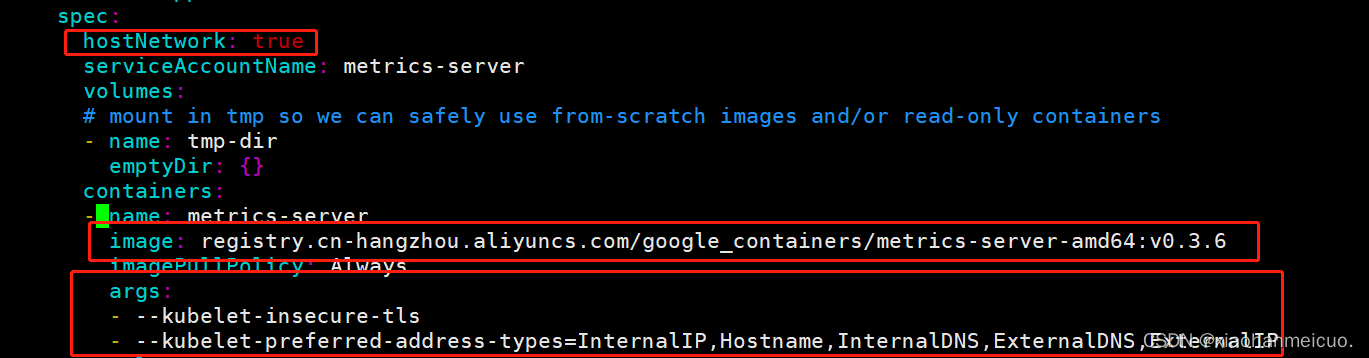

1. Install metrics-server

metrics-server collects resource usage data from the cluster

# Fetch metrics-server

[root@k8s-master01 ~]# git clone -b v0.3.6 https://github.com/kubernetes-incubator/metrics-server

正克隆到 'metrics-server'...

# Modify the deployment; the changes are the image and the startup arguments

[root@k8s-master01 ~]# cd metrics-server/deploy/1.8+/

[root@k8s-master01 1.8+]# vim metrics-server-deployment.yaml

hostNetwork: true

image: registry.cn-hangzhou.aliyuncs.com/google_containers/metrics-server-amd64:v0.3.6

args:

- --kubelet-insecure-tls

- --kubelet-preferred-address-types=InternalIP,Hostname,InternalDNS,ExternalDNS,ExternalIP

# Install metrics-server

[root@k8s-master01 1.8+]# kubectl apply -f ./

[root@k8s-master01 ~]# kubectl get pods -n kube-system

metrics-server-644778ff4f-87fgn 1/1 Running 0 93s

# Check resource usage of the nodes

[root@k8s-master01 ~]# kubectl top node

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

k8s-master01 74m 3% 1190Mi 32%

k8s-node01 11m 1% 680Mi 39%

k8s-node02 16m 1% 437Mi 25%

Note: if metrics-server reports errors at startup, the following CSDN blog post by 韩以安 describes a fix: 解决:metrics-server启动时报unable to recognize "*": no matches for kind "*" in version "*" 等错误

2. Prepare the Deployment and Service

[root@k8s-master01 ~]# vim pc-hpa-pod.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
  namespace: dev
spec:
  strategy: # update strategy
    type: RollingUpdate # rolling update strategy
  replicas: 1
  selector:
    matchLabels:
      app: nginx-pod
  template:
    metadata:
      labels:
        app: nginx-pod
    spec:
      containers:
      - name: nginx
        image: nginx:1.17.2
        resources: # resource settings
          limits: # resource limits (upper bound)
            cpu: "1" # CPU limit, in cores
          requests: # resource requests (lower bound)
            cpu: "100m" # CPU request, in millicores (100m = 0.1 core)

[root@k8s-master01 ~]# kubectl create -f pc-hpa-pod.yaml

deployment.apps/nginx created

[root@k8s-master01 ~]# kubectl expose deployment nginx --type=NodePort --port=80 -n dev

service/nginx exposed

[root@k8s-master01 ~]# kubectl get deploy,pod,svc -n dev

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/nginx 1/1 1 1 2m2s

NAME READY STATUS RESTARTS AGE

pod/nginx-7b95f6c86d-j589s 1/1 Running 0 2m2s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/nginx NodePort 10.100.76.1 <none> 80:32098/TCP 110s

3. Deploy the HPA

Create the file pc-hpa.yaml

[root@k8s-master01 ~]# vim pc-hpa.yaml

apiVersion: autoscaling/v1
kind: HorizontalPodAutoscaler
metadata:
  name: pc-hpa
  namespace: dev
spec:
  minReplicas: 1 # minimum number of Pods
  maxReplicas: 10 # maximum number of Pods
  targetCPUUtilizationPercentage: 3 # target CPU utilization, in percent
  scaleTargetRef: # the object to scale, here the nginx Deployment
    apiVersion: apps/v1
    kind: Deployment
    name: nginx

[root@k8s-master01 ~]# kubectl create -f pc-hpa.yaml

horizontalpodautoscaler.autoscaling/pc-hpa created

# View the HPA

[root@k8s-master01 ~]# kubectl get hpa -n dev

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

pc-hpa Deployment/nginx 0%/3% 1 10 1 3m41s

4. Test

Use a load-testing tool to put load on the service address 10.10.10.130:32098.

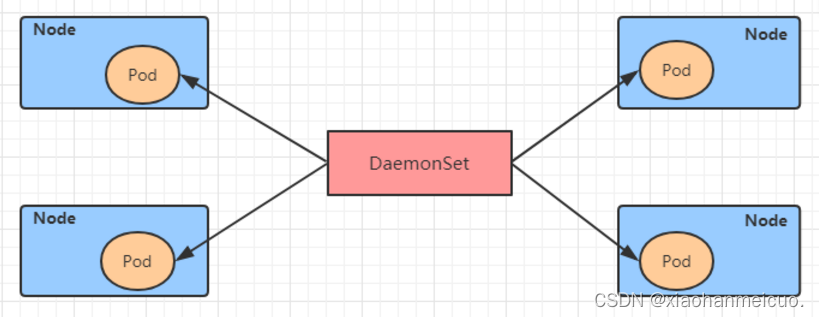

5. DaemonSet(DS)

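A DaemonSet controller ensures that one replica runs on every node (or on selected nodes) in the cluster. It is typically used for log collection, node monitoring and similar scenarios. In other words, if the function a Pod provides is node-level (every node needs exactly one), that Pod is a good fit for a DaemonSet.

Characteristics of the DaemonSet controller:

- Whenever a node is added to the cluster, the specified Pod replica is added to that node as well

- When a node is removed from the cluster, its Pod is garbage-collected

DaemonSet manifest: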

apiVersion: apps/v1 # API version
kind: DaemonSet # resource type
metadata: # metadata
  name: # DaemonSet name
  namespace: # namespace it belongs to
  labels: # labels
    controller: daemonset
spec: # specification
  revisionHistoryLimit: 3 # number of old revisions to keep
  updateStrategy: # update strategy
    type: RollingUpdate # rolling update strategy
    rollingUpdate: # rolling update parameters
      maxUnavailable: 1 # maximum number of unavailable Pods; a percentage or an integer
  selector: # selector: determines which Pods this controller manages
    matchLabels: # label-matching rule
      app: nginx-pod
    matchExpressions: # expression-matching rule
      - {key: app, operator: In, values: [nginx-pod]}
  template: # Pod template, used to create the Pod on each node
    metadata:
      labels:
        app: nginx-pod
    spec:
      containers:
      - name: nginx
        image: nginx:1.17.2
        ports:
        - containerPort: 80

Example: create pc-daemonset.yaml

[root@k8s-master01 ~]# vim pc-daemonset.yaml

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: pc-daemonset
  namespace: dev
spec:
  selector:
    matchLabels:
      app: nginx-pod
  template:
    metadata:
      labels:
        app: nginx-pod
    spec:
      containers:
      - name: nginx
        image: nginx:1.17.2

# Create the DaemonSet

[root@k8s-master01 ~]# kubectl create -f pc-daemonset.yaml

daemonset.apps/pc-daemonset created

# View the DaemonSet

[root@k8s-master01 ~]# kubectl get ds -n dev -o wide

NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE CONTAINERS IMAGES SELECTOR

pc-daemonset 2 2 2 2 2 <none> 3s nginx nginx:1.17.2 app=nginx-pod

# View the Pods; every worker node runs one Pod

[root@k8s-master01 ~]# kubectl get pod -n dev -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

pc-daemonset-fg847 1/1 Running 0 2m12s 10.244.2.50 k8s-node01 <none> <none>

pc-daemonset-k22ay 1/1 Running 0 2m12s 10.244.2.51 k8s-node02 <none> <none>

# Delete the DaemonSet

[root@k8s-master01 ~]# kubectl delete -f pc-daemonset.yaml

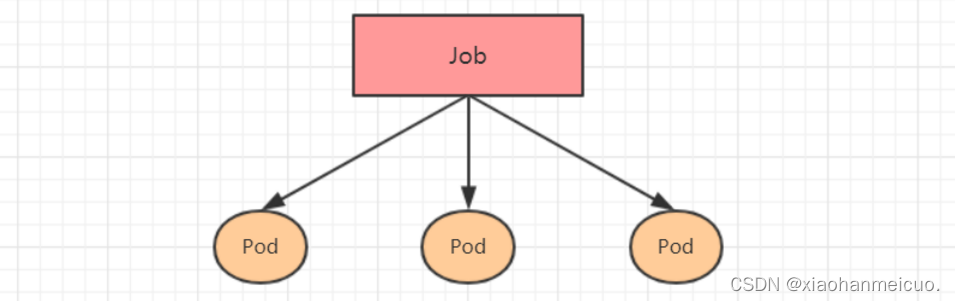

daemonset.apps "pc-daemonset" deleted6. Job

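A Job handles batch processing (a specified number of tasks processed at a time) of short-lived, one-off tasks (each task runs once and then ends). Its characteristics are:

- When a Pod created by the Job finishes successfully, the Job records the number of successfully finished Pods

- When the number of successfully finished Pods reaches the specified count, the Job is complete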
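How the required number of successful Pods and the concurrency limit interact can be sketched as below. This is a simplified model that assumes every Pod succeeds and that the Pods started together also finish together; the function name `job_waves` is hypothetical, and the two parameters stand for the Job fields completions and parallelism.

```python
def job_waves(completions: int, parallelism: int) -> list:
    """Return how many Pods run in each wave until the Job is complete."""
    succeeded = 0
    waves = []
    while succeeded < completions:
        # Never start more Pods than are still needed to reach completions.
        wave = min(parallelism, completions - succeeded)
        waves.append(wave)
        succeeded += wave
    return waves


if __name__ == "__main__":
    print(job_waves(completions=6, parallelism=3))  # [3, 3]
    print(job_waves(completions=5, parallelism=2))  # [2, 2, 1]
```

In a real cluster Pods finish at different times, so the controller starts a replacement as soon as one succeeds instead of waiting for a whole wave.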

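Job manifest:

apiVersion: batch/v1 # API version
kind: Job # resource type
metadata: # metadata
  name: # Job name
  namespace: # namespace it belongs to
  labels: # labels
    controller: job
spec: # specification
  completions: 1 # number of Pods that must finish successfully; defaults to 1
  parallelism: 1 # number of Pods the Job runs concurrently at any time; defaults to 1
  activeDeadlineSeconds: 30 # time limit for the Job; if it has not finished by then, the system tries to terminate it
  backoffLimit: 6 # number of retries after the Job fails; defaults to 6
  manualSelector: true # whether a selector may be used to choose Pods; defaults to false
  selector: # selector: determines which Pods this controller manages
    matchLabels: # label-matching rule
      app: counter-pod
    matchExpressions: # expression-matching rule
      - {key: app, operator: In, values: [counter-pod]}
  template: # Pod template, used to create the Job's Pods
    metadata:
      labels:
        app: counter-pod
    spec:
      restartPolicy: Never # restart policy; only Never or OnFailure is allowed
      containers:
      - name: counter
        image: busybox:1.30
        command: ["/bin/sh","-c","for i in 9 8 7 6 5 4 3 2 1; do echo $i;sleep 2;done"]

Notes on the restart policy:

    OnFailure: when a Pod fails, the Job restarts the container instead of creating a new Pod, and the failed count does not change

    Never: when a Pod fails, the Job creates a new Pod; the failed Pod is neither removed nor restarted, and the failed count increases by 1

    Always: the container would be restarted indefinitely, so the Job's task would run over and over again, which is wrong; therefore Always is not allowed

Example: create pc-job.yaml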

[root@k8s-master01 ~]# cat pc-job.yaml

apiVersion: batch/v1
kind: Job
metadata:
  name: pc-job
  namespace: dev
spec:
  manualSelector: true
  selector:
    matchLabels:
      app: counter-pod
  template:
    metadata:
      labels:
        app: counter-pod
    spec:
      restartPolicy: Never
      containers:
      - name: counter
        image: busybox:1.30
        command: ["/bin/sh","-c","for i in 9 8 7 6 5 4 3 2 1; do echo $i;sleep 3;done"]

[root@k8s-master01 ~]# kubectl create -f pc-job.yaml

job.batch/pc-job created

# View the Job

[root@k8s-master01 ~]# kubectl get job -n dev -o wide -w

NAME COMPLETIONS DURATION AGE CONTAINERS IMAGES SELECTOR

pc-job 0/1 8s 8s counter busybox:1.30 app=counter-pod

pc-job 0/1 28s 28s counter busybox:1.30 app=counter-pod

pc-job 1/1 28s 28s counter busybox:1.30 app=counter-pod

# Watching the Pod status shows that the Pod changes to Completed once it has finished its task

[root@k8s-master01 ~]# kubectl get pods -n dev

NAME READY STATUS RESTARTS AGE

pc-job-dxb2h 1/1 Running 0 26s

pc-job-dxb2h 0/1 Completed 0 88s

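# Next, adjust the total number of Pod runs and the parallelism by setting the following two options under spec

# completions: 6 # the Job needs 6 successful Pod runs

# parallelism: 3 # the Job runs up to 3 Pods concurrently

# Then run the Job again and observe: the Job runs up to 3 Pods at a time until 6 Pods have completed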

[root@k8s-master01 ~]# kubectl apply -f pc-job.yaml

job.batch/pc-job created

[root@k8s-master01 ~]# kubectl get pods -n dev -o wide -w

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

pc-job-9j6mr 1/1 Running 0 5s 10.244.2.3 k8s-node02 <none> <none>

pc-job-qs5kp 1/1 Running 0 5s 10.244.1.4 k8s-node01 <none> <none>

pc-job-qs5kp 0/1 Completed 0 28s 10.244.1.4 k8s-node01 <none> <none>

pc-job-rgrc7 0/1 Pending 0 0s <none> <none> <none> <none>

pc-job-rgrc7 0/1 Pending 0 0s <none> k8s-node01 <none> <none>

pc-job-qs5kp 0/1 Completed 0 28s 10.244.1.4 k8s-node01 <none> <none>

pc-job-rgrc7 0/1 ContainerCreating 0 0s <none> k8s-node01 <none> <none>

pc-job-rgrc7 1/1 Running 0 1s 10.244.1.5 k8s-node01 <none> <none>

pc-job-9j6mr 0/1 Completed 0 29s 10.244.2.3 k8s-node02 <none> <none>

pc-job-ljjbg 0/1 Pending 0 0s <none> <none> <none> <none>

pc-job-ljjbg 0/1 Pending 0 0s <none> k8s-node02 <none> <none>

pc-job-9j6mr 0/1 Completed 0 29s 10.244.2.3 k8s-node02 <none> <none>

pc-job-ljjbg 0/1 ContainerCreating 0 0s <none> k8s-node02 <none> <none>

pc-job-ljjbg 1/1 Running 0 1s 10.244.2.4 k8s-node02 <none> <none>

pc-job-rgrc7 0/1 Completed 0 28s 10.244.1.5 k8s-node01 <none> <none>

pc-job-sxprd 0/1 Pending 0 0s <none> <none> <none> <none>

pc-job-sxprd 0/1 Pending 0 0s <none> k8s-node01 <none> <none>

pc-job-rgrc7 0/1 Completed 0 28s 10.244.1.5 k8s-node01 <none> <none>

pc-job-sxprd 0/1 ContainerCreating 0 0s <none> k8s-node01 <none> <none>

pc-job-sxprd 1/1 Running 0 1s 10.244.1.6 k8s-node01 <none> <none>

pc-job-ljjbg 0/1 Completed 0 28s 10.244.2.4 k8s-node02 <none> <none>

pc-job-mb4mj 0/1 Pending 0 0s <none> <none> <none> <none>

pc-job-mb4mj 0/1 Pending 0 0s <none> k8s-node02 <none> <none>

pc-job-ljjbg 0/1 Completed 0 28s 10.244.2.4 k8s-node02 <none> <none>

pc-job-mb4mj 0/1 ContainerCreating 0 0s <none> k8s-node02 <none> <none>

pc-job-mb4mj 1/1 Running 0 1s 10.244.2.5 k8s-node02 <none> <none>

# Delete the Job

[root@k8s-master01 ~]# kubectl delete -f pc-job.yaml

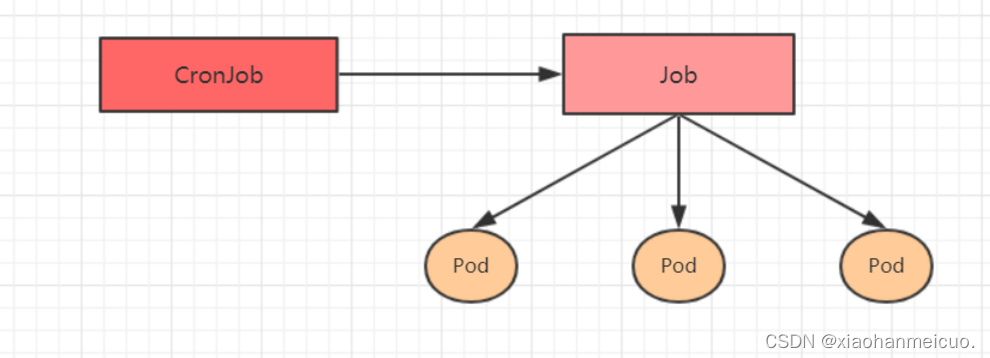

job.batch "pc-job" deleted7. CronJob(CJ)

CronJob控制器以Job控制器资源为其管控对象,并借助它管理pod资源对象,Job控制器定义的作业任务在其控制器资源创建之后便会立即执行,但CronJob可以以类似于Linux操作系统的周期性任务作业计划的方式控制其运行时间点及重复运行的方式。也就是说,CronJob可以在特定的时间点(反复的)去运行job任务。

?CronJob的资源清单文件:

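apiVersion: batch/v1beta1 # API version (deprecated in v1.21+, unavailable in v1.25+; use batch/v1 on newer clusters)
kind: CronJob # resource type
metadata: # metadata
  name: # CronJob name
  namespace: # namespace it belongs to
  labels: # labels
    controller: cronjob
spec: # specification
  schedule: # run schedule in cron format; controls when the task runs
  concurrencyPolicy: # concurrency policy: whether and how to run the next job while the previous one is still running
  failedJobsHistoryLimit: # number of failed jobs to keep in the history; defaults to 1
  successfulJobsHistoryLimit: # number of successful jobs to keep in the history; defaults to 3
  startingDeadlineSeconds: # deadline in seconds for starting a job that missed its scheduled time
  jobTemplate: # Job template from which the CronJob generates Job objects; what follows is a Job definition
    metadata:
    spec:
      completions: 1
      parallelism: 1
      activeDeadlineSeconds: 30
      backoffLimit: 6
      manualSelector: true
      selector:
        matchLabels:
          app: counter-pod
        matchExpressions: # expression-matching rule
          - {key: app, operator: In, values: [counter-pod]}
      template:
        metadata:
          labels:
            app: counter-pod
        spec:
          restartPolicy: Never
          containers:
          - name: counter
            image: busybox:1.30
            command: ["/bin/sh","-c","for i in 9 8 7 6 5 4 3 2 1; do echo $i;sleep 20;done"]

Options that deserve a closer look: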

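schedule: a cron expression that specifies when the task runs

    */1      *      *     *       *
    <minute> <hour> <day> <month> <weekday>

    minute: values from 0 to 59.

    hour: values from 0 to 23.

    day: values from 1 to 31.

    month: values from 1 to 12.

    weekday: values from 0 to 6, where 0 is Sunday

    Multiple values can be separated by commas; ranges can be given with a hyphen; * is a wildcard; / means "every ..."

concurrencyPolicy:

    Allow: allow Jobs to run concurrently (default)

    Forbid: forbid concurrent runs; if the previous run has not finished, the next run is skipped

    Replace: cancel the currently running job and replace it with a new one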
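The five schedule fields described above can be checked against a point in time with a small sketch. It is an illustrative matcher, not the parser Kubernetes uses: the function names are hypothetical, only the syntax listed above (`*`, lists, ranges, `/` steps) is handled, and all five fields must match, which simplifies the day/weekday rule of real cron.

```python
from datetime import datetime


def field_matches(expr: str, value: int, low: int) -> bool:
    """Check one cron field; low is the smallest legal value of that field."""
    for part in expr.split(","):
        rng, _, step_text = part.partition("/")
        step = int(step_text) if step_text else 1
        if rng == "*":
            if (value - low) % step == 0:
                return True
        elif "-" in rng:
            start, end = (int(x) for x in rng.split("-"))
            if start <= value <= end and (value - start) % step == 0:
                return True
        elif value == int(rng):
            return True
    return False


def schedule_matches(schedule: str, when: datetime) -> bool:
    """Return True if the datetime falls on the given five-field schedule."""
    minute, hour, day, month, weekday = schedule.split()
    # datetime.weekday() counts Monday as 0, cron counts Sunday as 0.
    cron_weekday = (when.weekday() + 1) % 7
    return (field_matches(minute, when.minute, 0)
            and field_matches(hour, when.hour, 0)
            and field_matches(day, when.day, 1)
            and field_matches(month, when.month, 1)
            and field_matches(weekday, cron_weekday, 0))


if __name__ == "__main__":
    # "*/1 * * * *" fires every minute.
    print(schedule_matches("*/1 * * * *", datetime(2022, 2, 24, 10, 17)))  # True
```

A schedule such as "*/15 9-17 * * 1-5" would match only on quarter hours between 09:00 and 17:59 from Monday to Friday.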

Example: create pc-cronjob.yaml

[root@k8s-master01 ~]# vim pc-cronjob.yaml

apiVersion: batch/v1beta1
kind: CronJob
metadata:
  name: pc-cronjob
  namespace: dev
  labels:
    controller: cronjob
spec:
  schedule: "*/1 * * * *"
  jobTemplate:
    metadata:
    spec:
      template:
        spec:
          restartPolicy: Never
          containers:
          - name: counter
            image: busybox:1.30
            command: ["/bin/sh","-c","for i in 9 8 7 6 5 4 3 2 1; do echo $i;sleep 3;done"]

# Create the CronJob

[root@k8s-master01 ~]# kubectl create -f pc-cronjob.yaml

Warning: batch/v1beta1 CronJob is deprecated in v1.21+, unavailable in v1.25+; use batch/v1 CronJob

cronjob.batch/pc-cronjob created

# View the CronJob

[root@k8s-master01 ~]# kubectl get cronjobs -n dev

NAME SCHEDULE SUSPEND ACTIVE LAST SCHEDULE AGE

pc-cronjob */1 * * * * False 1 0s 17s

# View the Jobs

[root@k8s-master01 ~]# kubectl get jobs -n dev

NAME COMPLETIONS DURATION AGE

pc-cronjob-27429579 1/1 28s 112s

pc-cronjob-27429580 1/1 28s 52s

# View the Pods

[root@k8s-master01 ~]# kubectl get pods -n dev

NAME READY STATUS RESTARTS AGE

pc-cronjob-27429579-jhsxc 0/1 Completed 0 114s

pc-cronjob-27429580-5c9xl 0/1 Completed 0 54s

# Delete the CronJob

[root@k8s-master01 ~]# kubectl delete -f pc-cronjob.yaml

Warning: batch/v1beta1 CronJob is deprecated in v1.21+, unavailable in v1.25+; use batch/v1 CronJob

cronjob.batch "pc-cronjob" deleted